TL;DR: The question practitioners are actually asking is not "how do I personalize at scale?" It is "how do I personalize at scale without sounding like I did it at scale?" The answer is not better AI tools. It is better inputs. Signal-first personalization, prompt specificity over adjectives, and an honest 80/20 split between automated and handwritten copy are what separate replies from radio silence. This guide covers the exact frameworks and prompt templates that produce genuinely relevant outreach, not just technically correct outreach.

AI-generated outreach is now table stakes. Most sales teams are running some version of it. The problem is that buyers know it too. "Sounds like AI" has replaced "sounds like a template" as the fastest way to get your email deleted without a response.

The practitioners who are actually converting are not using less AI. They are using it differently. They are feeding it better signals, writing tighter prompts, and making deliberate decisions about which parts of their outreach to automate and which parts to write by hand. The result is copy that reads like it came from someone who actually looked at the prospect's world, not someone who ran a mail merge.

This guide covers five practical frameworks and five prompt templates you can use today. No theory. Just the mechanics that work.

Why Does AI Outreach Still Sound Generic?

AI outreach sounds generic because the inputs are generic. Most teams feed AI a prospect's name, company, job title, and maybe a LinkedIn bio. That is the same information every competitor has. When everyone is personalizing from the same shallow pool of context, the output converges on the same-sounding email.

The second problem is prompt design. Most sales teams write prompts like "write a personalized cold email to [name] at [company] about [product]." That instruction tells the AI nothing about tone, specificity, or what signal it should anchor on. The AI fills in the gaps with the most statistically common cold email patterns in its training data, which is exactly what the buyer is trying to filter out.

McKinsey's 2024 research on AI in commercial functions found that buyer skepticism toward AI-generated communications has grown substantially as adoption has spread. When every vendor runs the same tool with the same shallow inputs, the outputs converge, and buyers pattern-match against that average. The problem is not AI itself. It is undifferentiated AI output deployed at scale and mislabeled as personalization.

Unify customers sending signal-triggered, prompt-engineered outreach see average reply rates of 4.2% compared to an industry baseline of 1.1% for generic AI outreach. That 3.8x gap is not explained by better targeting lists or more sends. It is explained by more relevant copy, generated from better signals and tighter prompts. The inputs are different. The output quality follows.
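
The multiple quoted above is simple arithmetic on the two reply rates. A minimal Python sketch of that calculation follows; the 1,000-send volume is an illustrative assumption, not a figure from the Unify data.

```python
# Reply rates quoted above, in percent.
signal_rate = 4.2    # signal-triggered, prompt-engineered outreach
baseline_rate = 1.1  # generic AI outreach

# Ratio between the two reply rates, rounded to one decimal place.
multiple = round(signal_rate / baseline_rate, 1)

# Hypothetical send volume, chosen only to make the gap concrete.
sends = 1000
signal_replies = round(sends * signal_rate / 100)
baseline_replies = round(sends * baseline_rate / 100)

print(f"{multiple}x: {signal_replies} vs {baseline_replies} replies per {sends} sends")
```

At that assumed volume the difference is 42 replies versus 11.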

What Is the "Human Audit" Test?

The human audit test is a single question you ask about every AI-generated message before it sends: "Would a human who had done this research actually write this?" If the answer is no, the prompt needs to be rewritten.

The test exposes a specific failure mode: AI copy that is technically accurate but behaviorally implausible. No human who actually read a prospect's latest earnings call would open with "I noticed your company is growing rapidly." A human would say "Your Q3 call mentioned the push into mid-market. That's exactly where your current sequencing setup tends to break." The first is a generic compliment. The second is a signal-anchored observation.

To run the human audit test on your own sequences, print out three AI-generated emails and read them aloud. Ask yourself: does this sound like something a great SDR who spent 10 minutes on research would actually say? Or does it sound like something produced by averaging 10,000 cold emails? The latter will always underperform because buyers are pattern-matching against exactly that average.

The human audit is not about eliminating AI from your workflow. It is about setting a quality floor that automation has to clear before anything hits a prospect's inbox.
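
Part of that quality floor can be approximated mechanically before a rep ever sees the draft. The sketch below is one possible pre-filter, not a replacement for the human read-aloud test: it flags drafts containing generic phrases this guide warns against. The phrase list and the `audit_flags` name are hypothetical, and you would extend the list with the tells you see in your own sequences.

```python
import re

# Hypothetical phrase list: generic openers and words this guide warns against.
GENERIC_TELLS = [
    r"growing rapidly",
    r"i love what .* is doing",
    r"i've been following",
    r"\bexcited\b",
]

def audit_flags(draft: str) -> list[str]:
    """Return the generic patterns found in a draft (empty list = nothing flagged)."""
    text = draft.lower()
    return [pattern for pattern in GENERIC_TELLS if re.search(pattern, text)]

generic = "I noticed your company is growing rapidly."
anchored = "Your Q3 call mentioned the push into mid-market."

print(audit_flags(generic))   # ['growing rapidly']
print(audit_flags(anchored))  # []
```

An empty result only means no known tell was found. Whether a human who did the research would actually write the message is still a judgment call for the reviewer.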

What Is Signal-First Personalization?

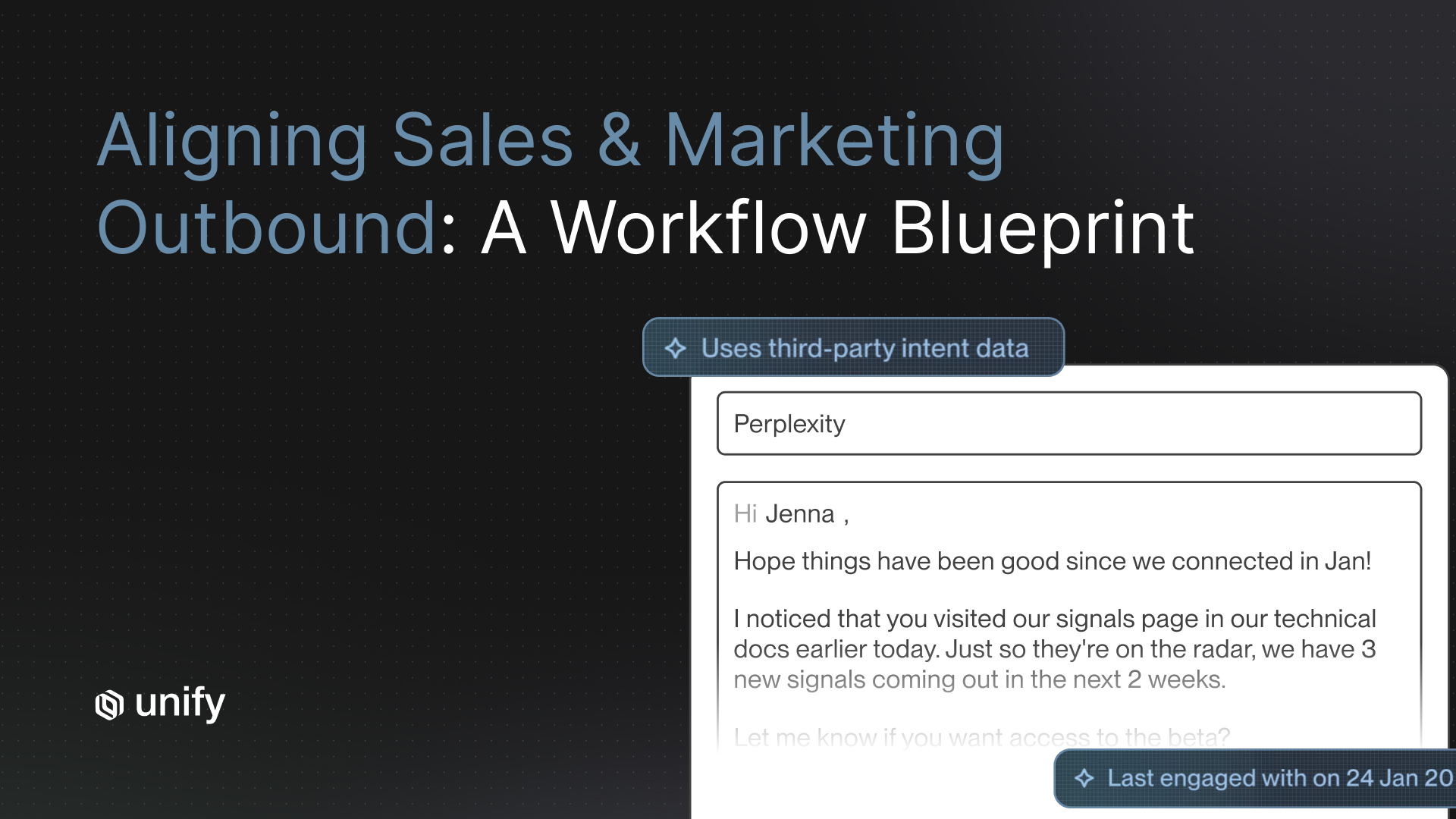

Signal-first personalization means leading every outreach message with the specific trigger or buying signal that prompted the outreach, rather than with a compliment or context-setting statement about the prospect's company. The signal is the reason the email is relevant right now. It belongs in the first sentence, not buried in paragraph two.

A buying signal is any observable change in a prospect's world that indicates they may have a problem your product solves. This includes: a new hire in a relevant role, a funding announcement, a product launch that changes their go-to-market motion, a job posting that signals a capability gap, or a public statement that reveals a strategic priority.

For a deeper breakdown of how buying signals work and which ones convert best at each funnel stage, see What Is Signal-Based Selling.

Signal-first outreach works because it demonstrates research without announcing it. When you open with "Saw you just brought on a VP of Revenue Operations last month," you are not telling the prospect you researched them. You are showing them you noticed something specific. That is a very different experience from "I've been following your company's growth."

Generic (signal-last): "Hi [Name], I love what [Company] is doing in the enterprise space. As you scale your revenue team, I imagine outbound efficiency becomes a bigger priority..."

Signal-first: "Saw the VP RevOps hire last month. That's usually the moment teams realize their outbound stack was built for a 5-person team, not a 50-person one. Worth 15 minutes to see if that's the case here?"

The signal-first version is shorter, more specific, and does not require the prospect to trust your premise. The signal is the premise. It either resonates or it does not. There is no wasted middle ground.

How Do You Write Prompts That Produce Natural-Sounding Copy?

Prompts that produce natural-sounding copy share one characteristic: they prioritize specificity over adjectives and observations over compliments. The fastest way to make AI copy sound human is to strip every adjective from your prompt instructions and replace each one with a concrete constraint.

The rule: never ask AI to write something "personalized," "relevant," "conversational," or "genuine." These are aesthetic judgments the model cannot reliably make from a prompt. Instead, specify the exact signal to reference, the exact observation to make, and the exact question to end with.

The Five Core Prompt Principles

- Anchor to one signal, not three. Emails that reference multiple signals feel like a laundry list. One well-chosen trigger, delivered with precision, outperforms three generic observations every time.

- Describe the observation, not the sentiment. Do not prompt "write something that shows you care about their business." Prompt "reference the fact that they posted two SDR job listings in the last 30 days and connect that to the problem we solve."

- Give the voice constraint explicitly. "Write this in the voice of a senior revenue leader who has 12 seconds to make a point, not a marketer pitching features." Voice instructions produce more consistent tone than adjectives like "casual" or "direct."

- Set a word count floor and ceiling. AI defaults to thorough. Sales emails should be sparse. Prompt for a range such as 60-80 words and watch the copy tighten immediately.

- Tell the AI what not to say. Negative constraints are underused. "Do not compliment their growth. Do not mention their LinkedIn profile. Do not use the word 'excited.'" These exclusions do more to differentiate output than positive instructions alone.
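
The five principles can be treated as required fields of a prompt rather than as style advice. The following is a minimal sketch under that assumption; `PromptSpec`, `build_prompt`, and the field names are hypothetical and not part of any particular tool.

```python
from dataclasses import dataclass, field

@dataclass
class PromptSpec:
    """Hypothetical container for the five principles; field names are illustrative."""
    signal: str        # exactly one signal to anchor on
    observation: str   # what to observe, not how to feel about it
    voice: str         # explicit voice constraint
    min_words: int = 60
    max_words: int = 80
    exclusions: list[str] = field(default_factory=list)  # what not to say

def build_prompt(spec: PromptSpec) -> str:
    """Assemble a constraint-based prompt string from a PromptSpec."""
    if spec.min_words > spec.max_words:
        raise ValueError("min_words must not exceed max_words")
    lines = [
        f"Write a cold email of {spec.min_words}-{spec.max_words} words.",
        f"Anchor on this single signal: {spec.signal}",
        f"Make this observation: {spec.observation}",
        f"Voice: {spec.voice}",
    ]
    # Negative constraints become explicit "Do not ..." lines.
    lines += [f"Do not {rule}." for rule in spec.exclusions]
    return "\n".join(lines)

spec = PromptSpec(
    signal="two SDR job listings posted in the last 30 days",
    observation="connect the hiring push to the problem we solve",
    voice="a senior revenue leader who has 12 seconds to make a point",
    exclusions=[
        "compliment their growth",
        "mention their LinkedIn profile",
        "use the word 'excited'",
    ],
)
prompt = build_prompt(spec)
print(prompt)
```

Because `signal` is a single string rather than a list, the structure itself discourages the laundry-list email.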

Five Prompt Templates That Produce Genuinely Relevant Copy

These five templates are designed to be dropped directly into an AI model with prospect-specific inputs filled in. Each is built around one signal type and one clear voice constraint. They have been refined through thousands of sequences run on the Unify platform.

Template 1: New Executive Hire Signal

Write a cold email (60-70 words) from a senior account executive to a [VP of Sales / CRO / CMO] who just joined [Company] 4-6 weeks ago. Reference that they are inheriting a team and a stack they did not choose. The email should acknowledge this without being presumptuous. Connect it to [specific problem your product solves]. End with a low-commitment ask: 15 minutes to share how similar transitions have played out at comparable companies. Do not use the word "excited." Do not compliment the company.

Template 2: Funding Announcement Signal

Write a cold email (60-75 words) to the Head of Revenue at a company that just raised a [Series A/B/C]. Do not congratulate them. Open with the specific operational challenge that becomes acute after a funding round at this stage: [pipeline generation pressure, headcount ramp, go-to-market efficiency]. Frame our product as a way to hit the board's ramp expectation without proportionally scaling headcount. End with a one-sentence question that implies urgency without manufacturing it.

Template 3: Job Posting Signal

Write a cold email (55-65 words) to a VP of Sales whose company has posted [2-4] SDR roles in the last 30 days. The insight is this: if they are hiring that many SDRs, they are either scaling a working motion or trying to brute-force a broken one. Do not say which. Ask the question. Offer to share benchmarks from companies at a similar hire rate. No feature pitch. No product name in the first two sentences.

Template 4: Competitor Displacement Signal

Write a cold email (60-70 words) to a revenue leader at a company that is currently using [Competitor X]. Do not mention the competitor by name. Reference a specific limitation that users of that tool commonly hit at [their company's stage/size/use case]. Frame our product as what teams switch to when [specific limitation] becomes a blocker. End with a question about whether they have hit that wall yet. Tone: peer-to-peer, not vendor-to-prospect.

Template 5: Content Engagement Signal

Write a cold email (50-65 words) to someone who attended a webinar or downloaded a report on [specific topic]. Do not reference the content directly. Instead, reference the implication: if they are interested in [topic], they are likely dealing with [specific operational problem]. Validate that this is a real and common problem at their stage. Offer one specific, non-obvious observation about how others have solved it. Close with a question, not a pitch.

These templates work because they are built around inputs the AI can actually use, not abstract requests for quality. The before/after examples later in this guide show exactly what the difference looks like in practice.
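
Since each template depends on its bracketed inputs being filled in, it helps to fail loudly when one is missing rather than send a prompt with a literal placeholder in it. A minimal sketch, assuming the bracketed slots are renamed to simple keys (the `[role_count]` slot name and the `fill_template` helper are hypothetical):

```python
import re

# Template 3 from above, shortened; the bracketed name is the slot to fill.
TEMPLATE = (
    "Write a cold email (55-65 words) to a VP of Sales whose company has "
    "posted [role_count] SDR roles in the last 30 days. No feature pitch."
)

# Matches any [slot] and captures the text between the brackets.
PLACEHOLDER = re.compile(r"\[([^\]]+)\]")

def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace each [slot] with its value; raise if any slot has no value."""
    missing = [name for name in PLACEHOLDER.findall(template) if name not in values]
    if missing:
        raise KeyError(f"unfilled placeholders: {missing}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)

prompt = fill_template(TEMPLATE, {"role_count": "3"})
print(prompt)
```

Calling `fill_template(TEMPLATE, {})` raises a `KeyError`, so an incomplete prospect record stops at the prompt stage instead of reaching the model.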

What Is the 80/20 Rule for Outbound Automation?

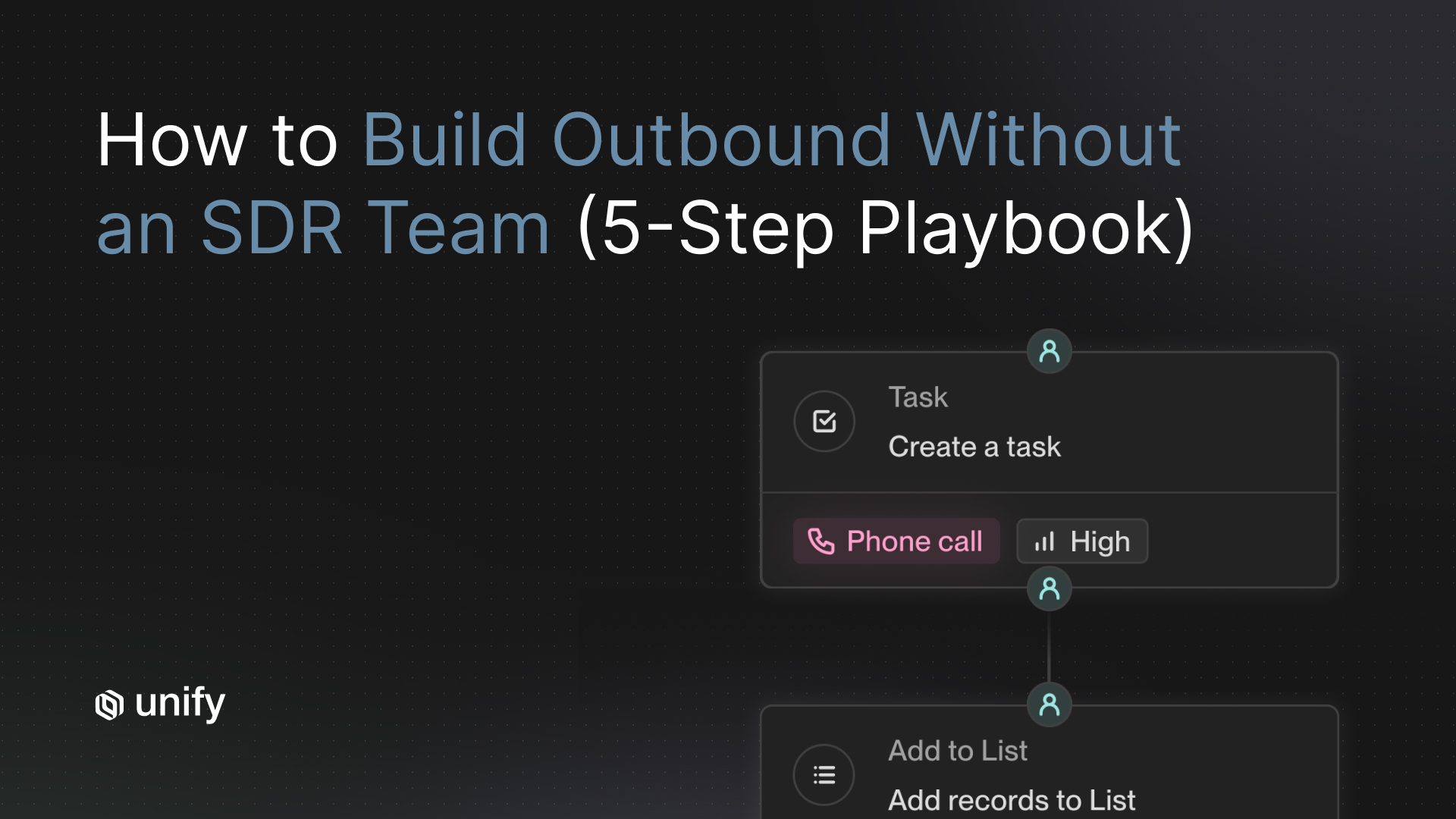

The 80/20 rule for outbound automation means 80% of your outreach workflow should be automated and 20% should involve deliberate human input. Getting the split wrong in either direction costs you either time or quality. Most teams get it wrong by automating the wrong 80%.

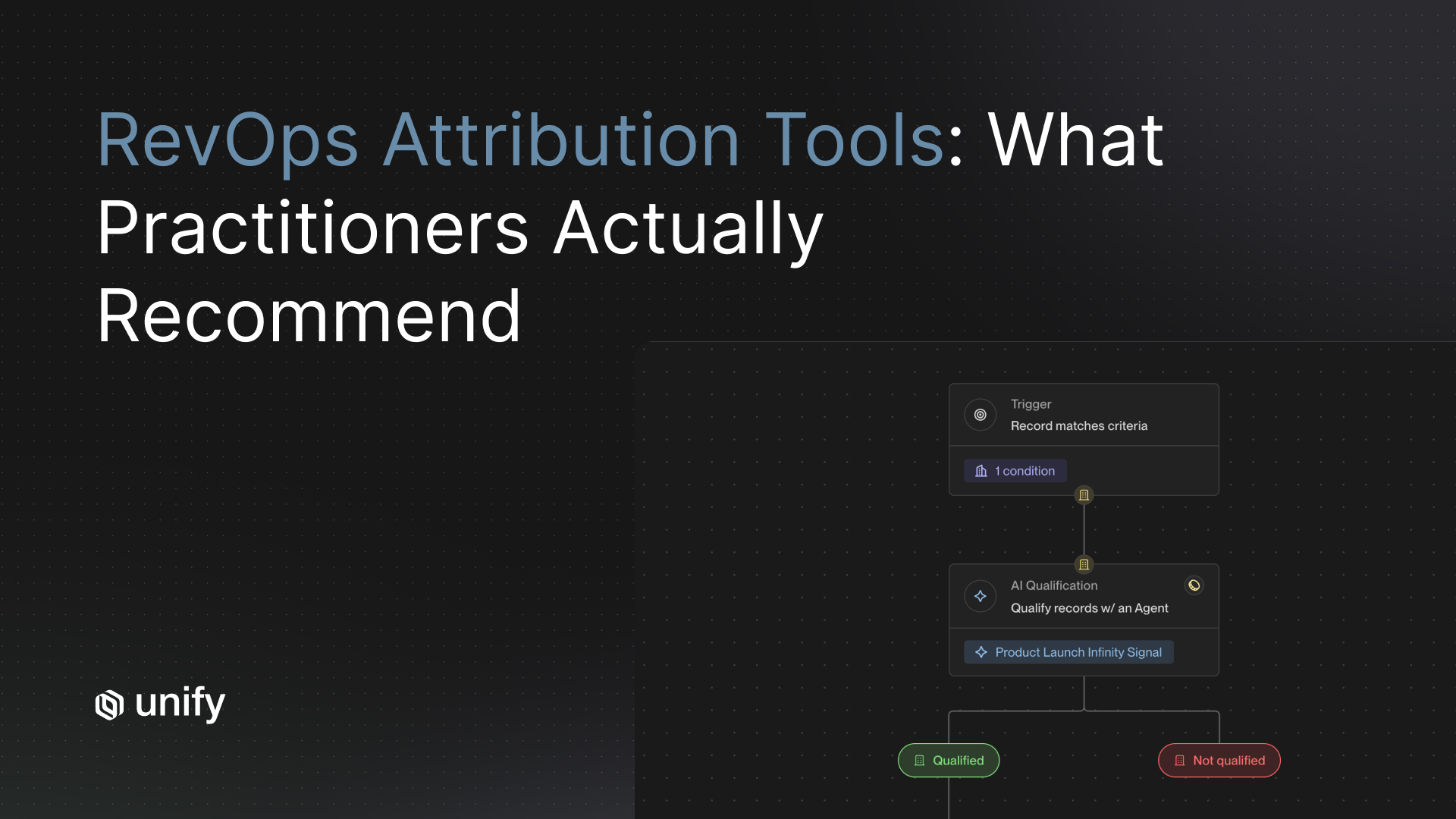

The parts that are safe to automate: prospect research and signal aggregation, first-draft copy generation from a strong prompt, personalization variable population, sequence enrollment logic, follow-up timing and delivery. These are mechanical steps where automation improves consistency without degrading quality.

The parts that require human judgment: choosing which signal to anchor on, deciding whether the timing is right for this specific prospect, editing the first draft to match the rep's actual voice, writing the P.S. line or any call-back that requires genuine company knowledge. These are judgment calls that AI gets wrong at a rate that matters.

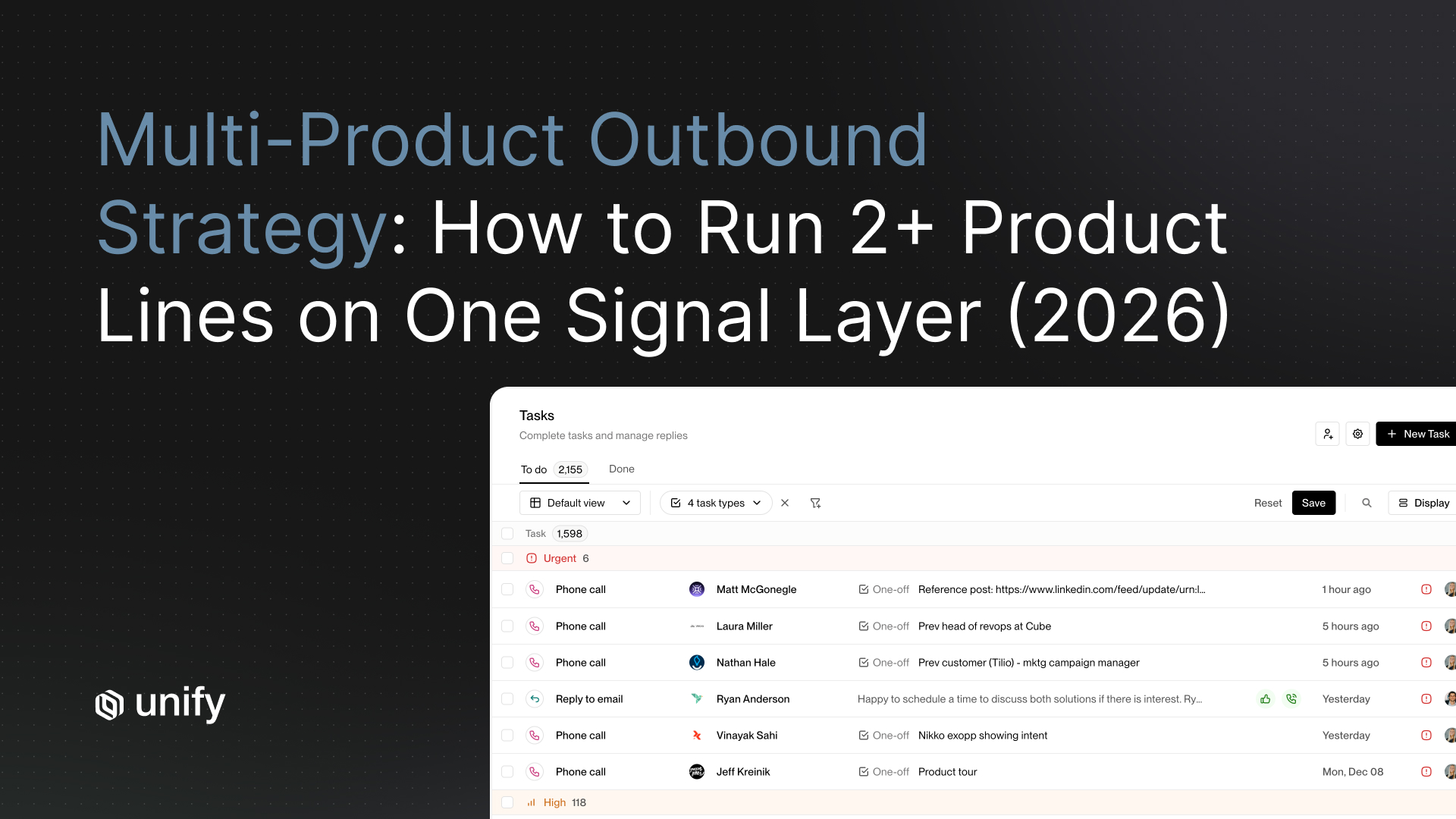

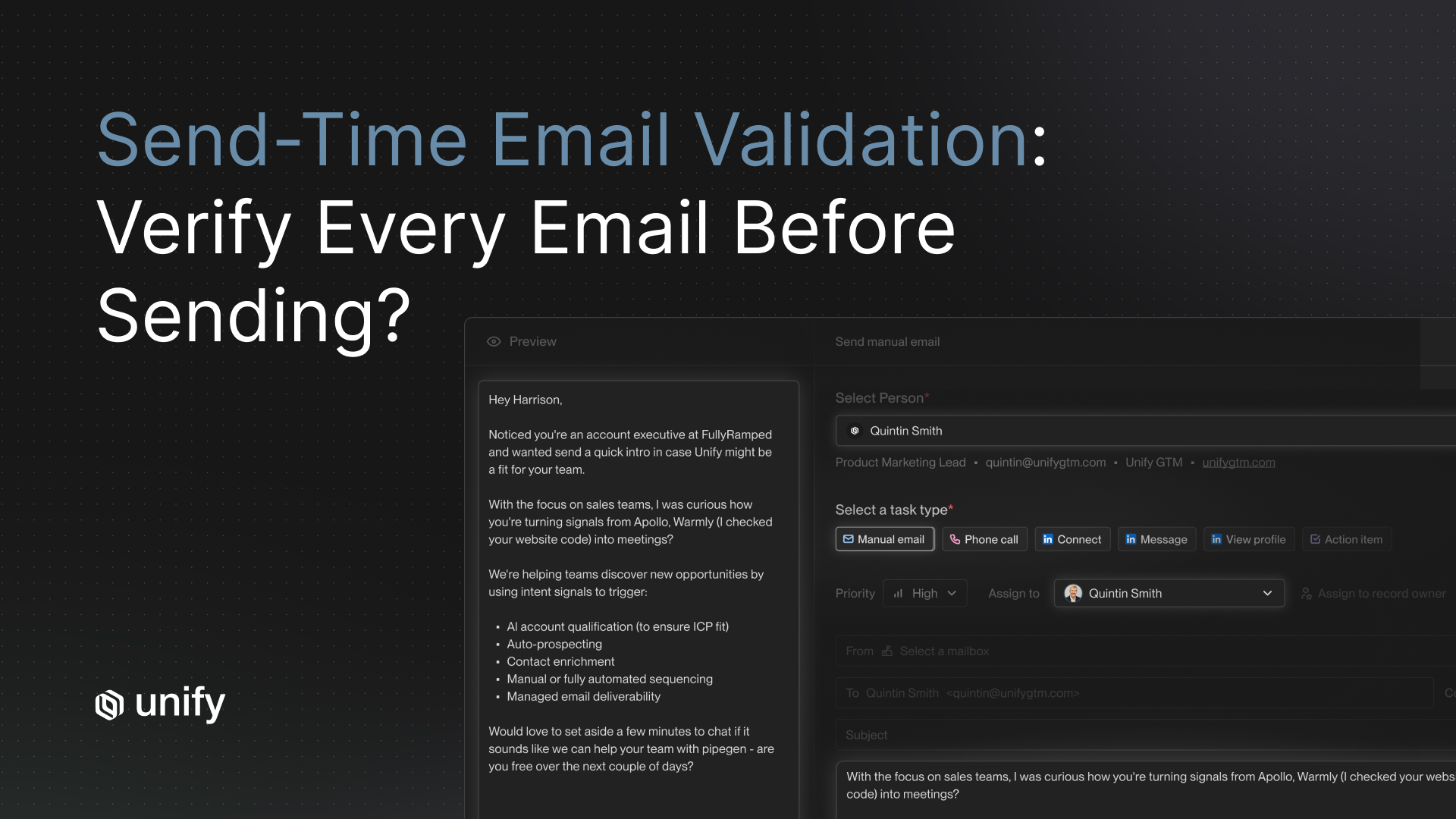

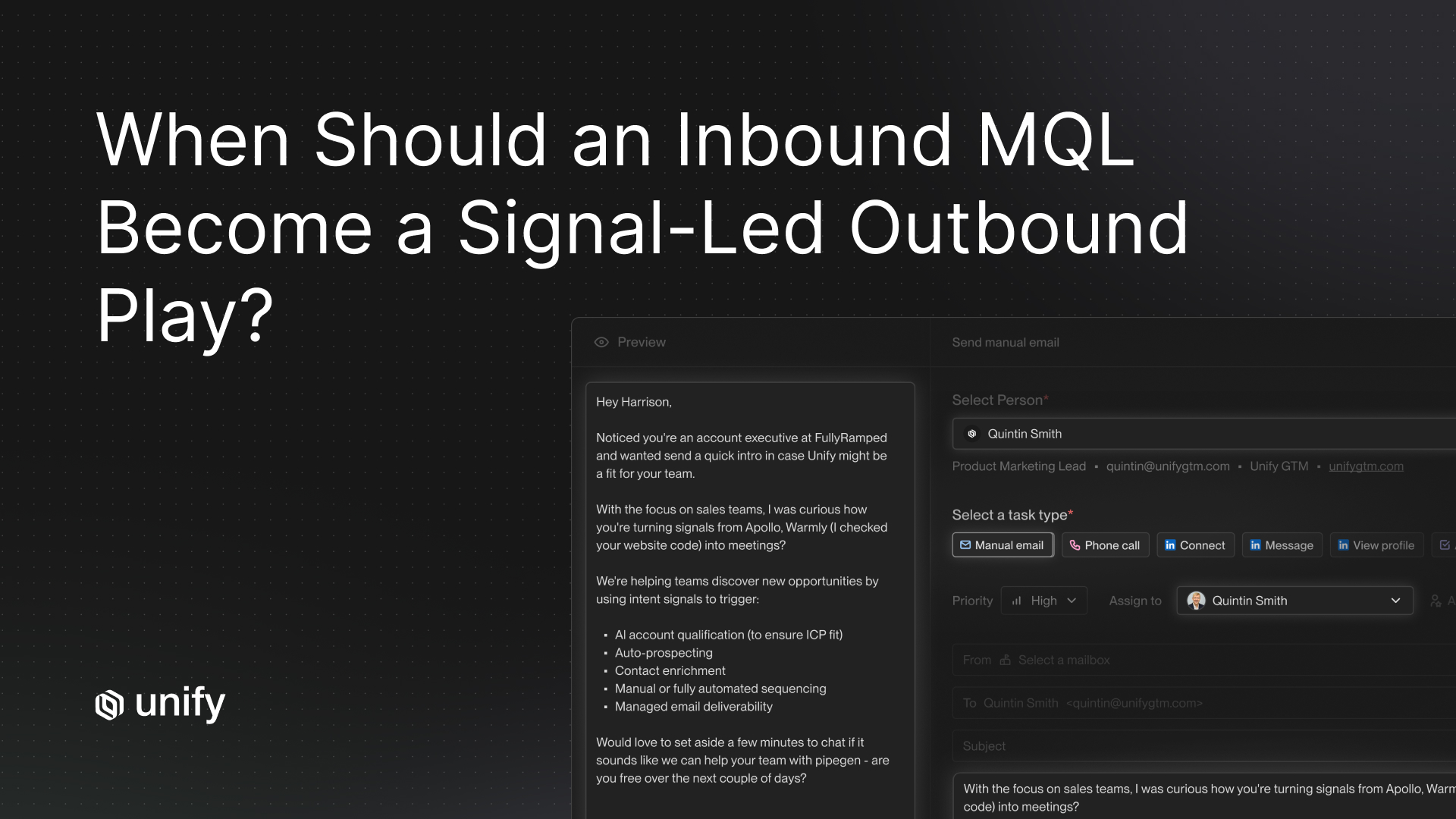

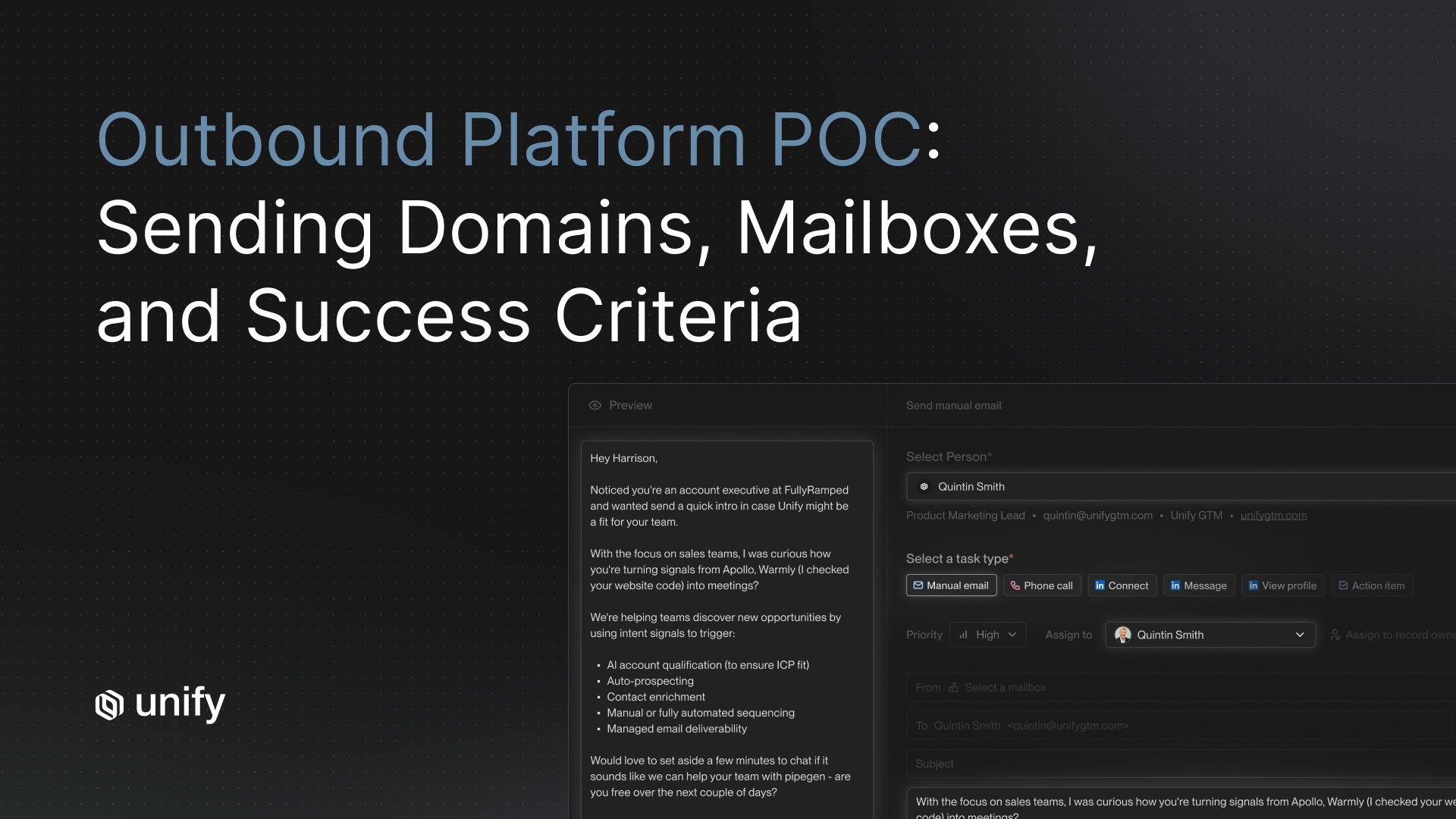

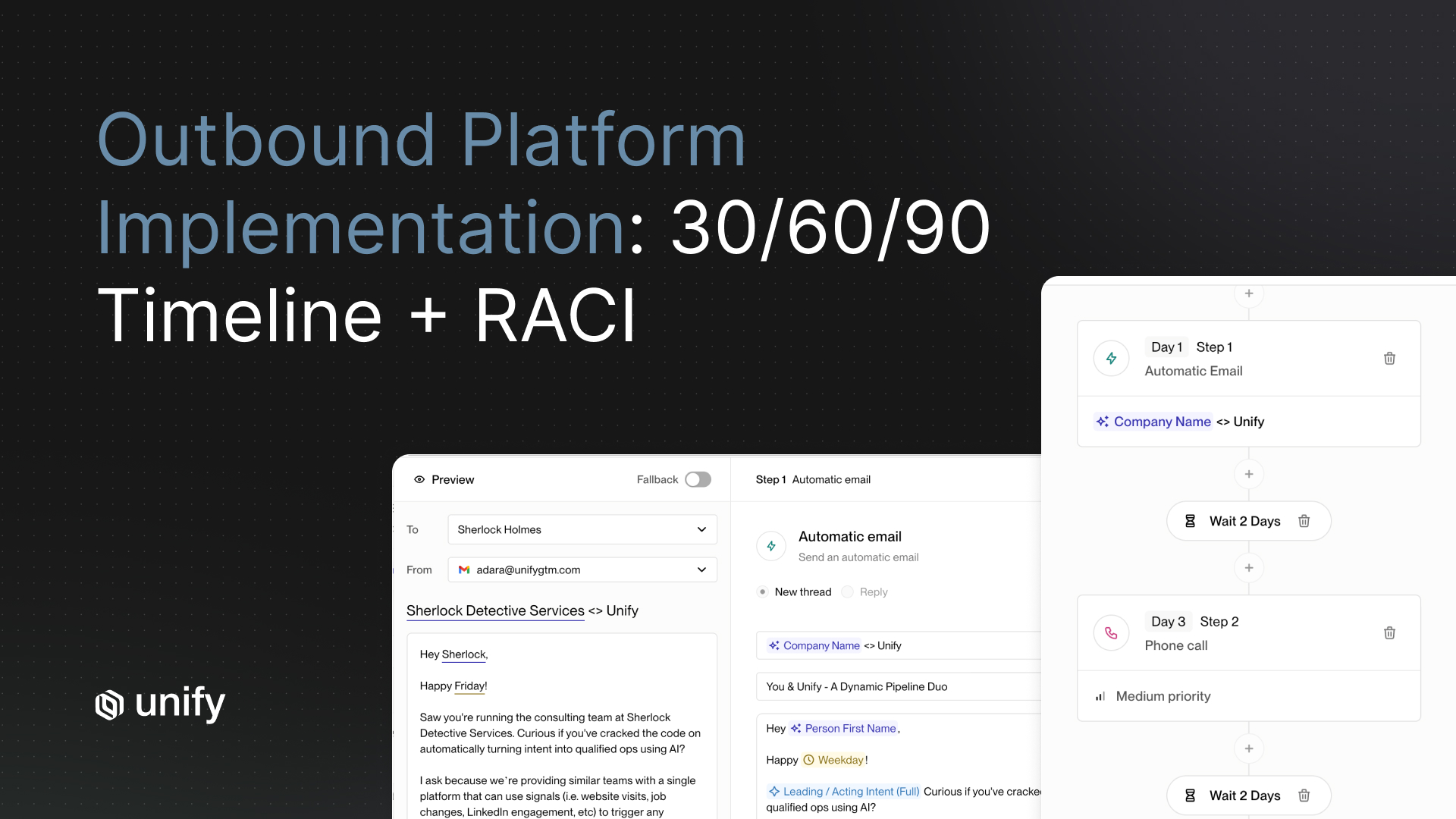

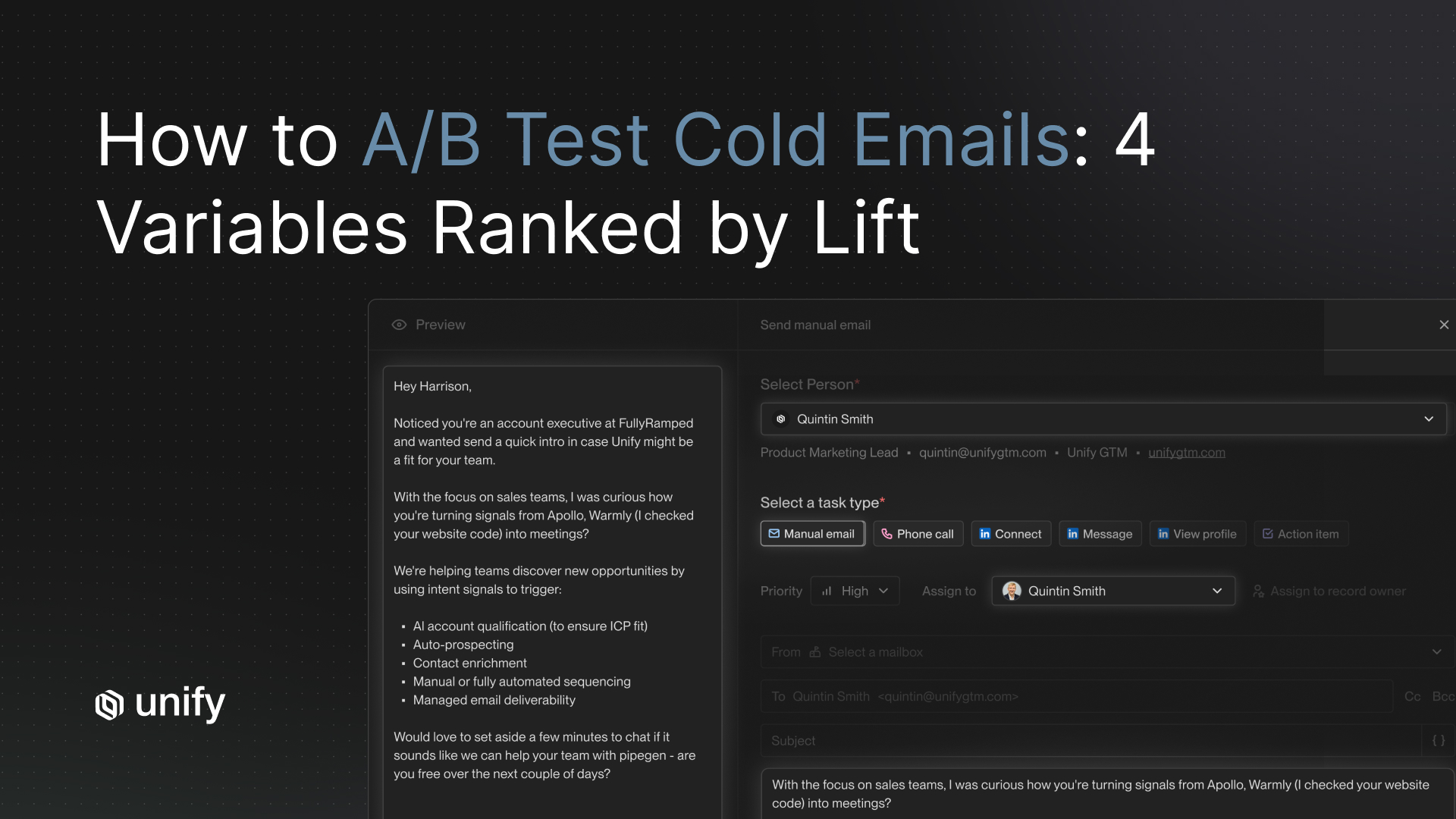

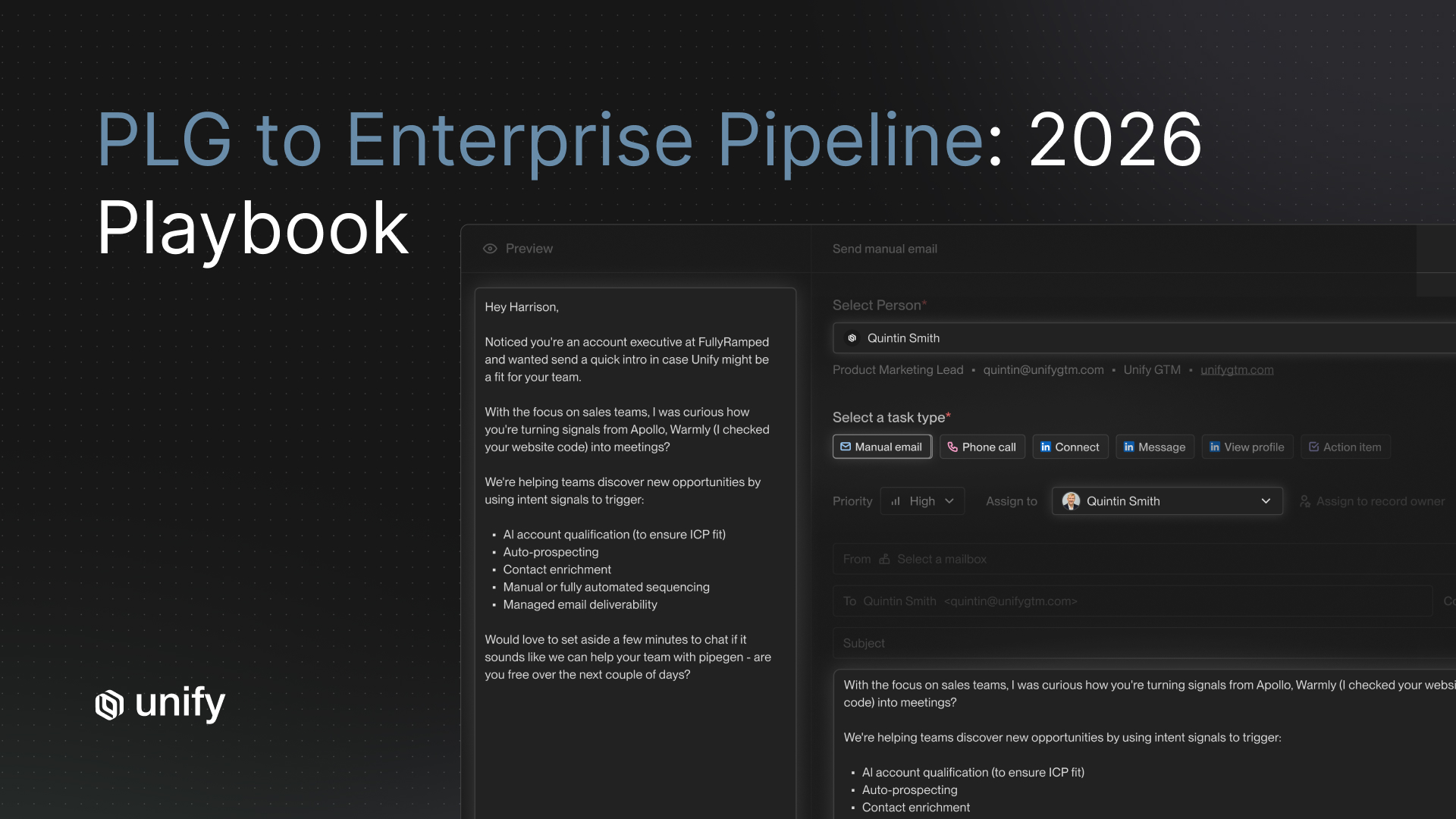

In practice, at Unify, this looks like: AI ingests buying signals and generates a draft email personalized to the top signal. The rep reviews the draft in a queue, makes minor edits or approves as-is, and the message sends. Average review time is under 90 seconds per email. The rep is not writing from scratch. They are quality-controlling a draft that is already 80% of the way there.
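
The draft, review, send flow described above can be sketched as a small queue in which nothing is sent without an explicit approval. This is an illustrative model only, not Unify's implementation; `Draft`, `generate_draft`, `review`, and `send_approved` are hypothetical names, and the drafting step is a stand-in for a real model call.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Draft:
    prospect: str
    body: str
    approved: bool = False  # drafts start unapproved

def generate_draft(prospect: str, signal: str) -> Draft:
    """Stand-in for the automated step; a real system would call a model here."""
    return Draft(prospect=prospect, body=f"Saw the {signal}. Worth 15 minutes?")

def review(draft: Draft, approve: bool, edited_body: Optional[str] = None) -> Draft:
    """The human step: a rep approves as-is, edits and approves, or rejects."""
    if edited_body is not None:
        draft.body = edited_body
    draft.approved = approve
    return draft

def send_approved(queue: list[Draft]) -> list[str]:
    """Return the prospects whose drafts were approved; unapproved drafts are skipped."""
    return [draft.prospect for draft in queue if draft.approved]

queue = [
    generate_draft("a@example.com", "VP RevOps hire last month"),
    generate_draft("b@example.com", "Series B announcement"),
]
review(queue[0], approve=True)
review(queue[1], approve=False)  # rep judges the timing is wrong for this prospect
print(send_approved(queue))
```

The design choice that matters is the default: `approved` is `False` until a rep sets it, so skipping review means nothing sends.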

Teams using this workflow on Unify see their reps send 3-4x more personalized outreach per day compared to fully manual sequences, while maintaining the same average reply rate as hand-written outreach. The leverage is in the automation. The quality floor is in the review.

Before/After: What AI-Generic vs. AI-Authentic Copy Looks Like

The fastest way to calibrate your own outreach quality is to compare examples side by side. Below are three before/after pairs. The "before" is the kind of output most AI tools produce with a standard prompt. The "after" is what comes out of a signal-anchored, constraint-heavy prompt using the templates above.

The pattern is consistent. AI-generic copy announces its intent (congratulate, establish relevance, pitch). AI-authentic copy earns the response by demonstrating that the sender has already done the work of understanding the prospect's situation. The authentic versions are also shorter. Specificity compresses copy. When you know exactly what you want to say, you need fewer words to say it.

How Does Unify Handle Personalized Outreach at Scale?

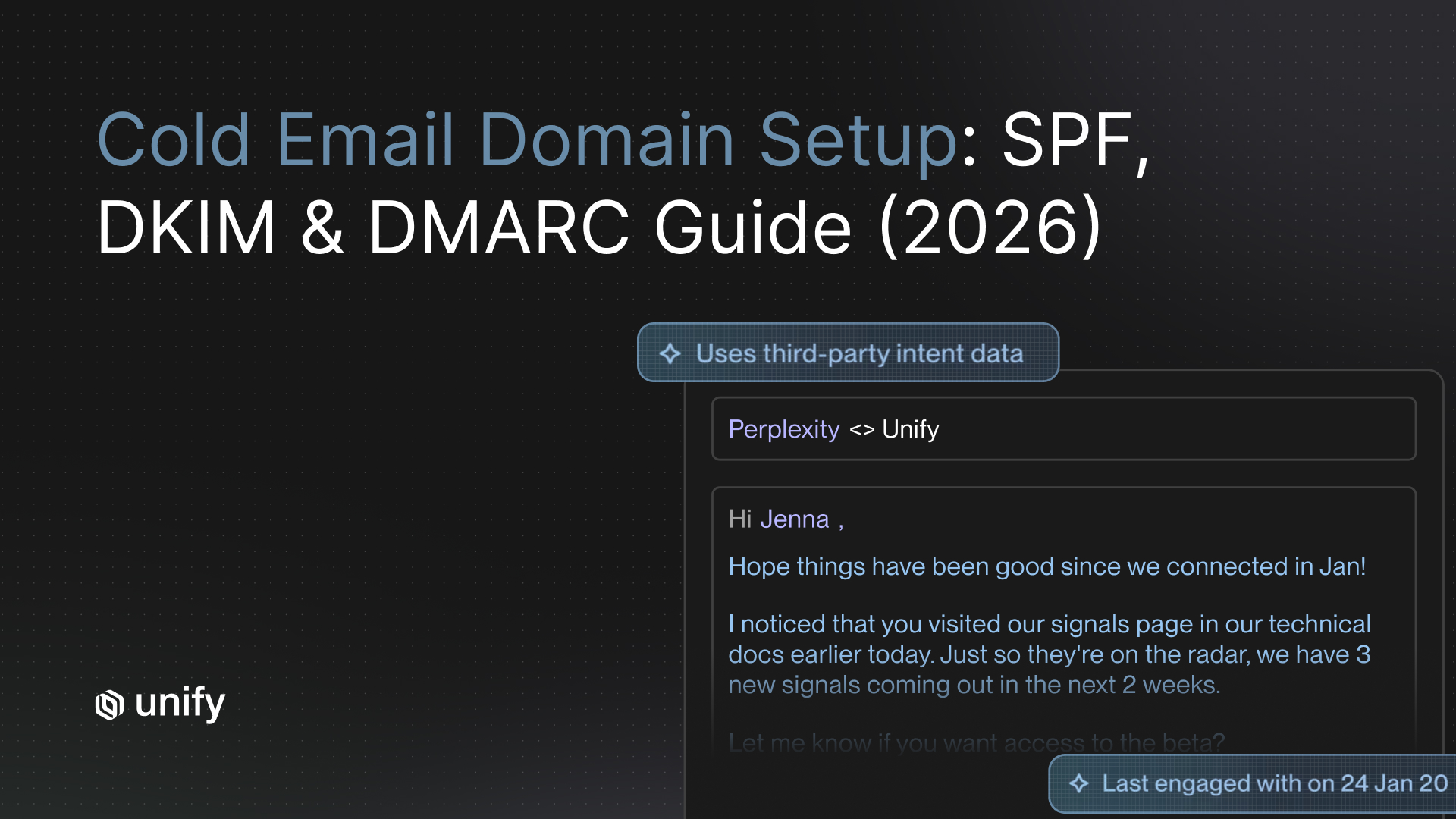

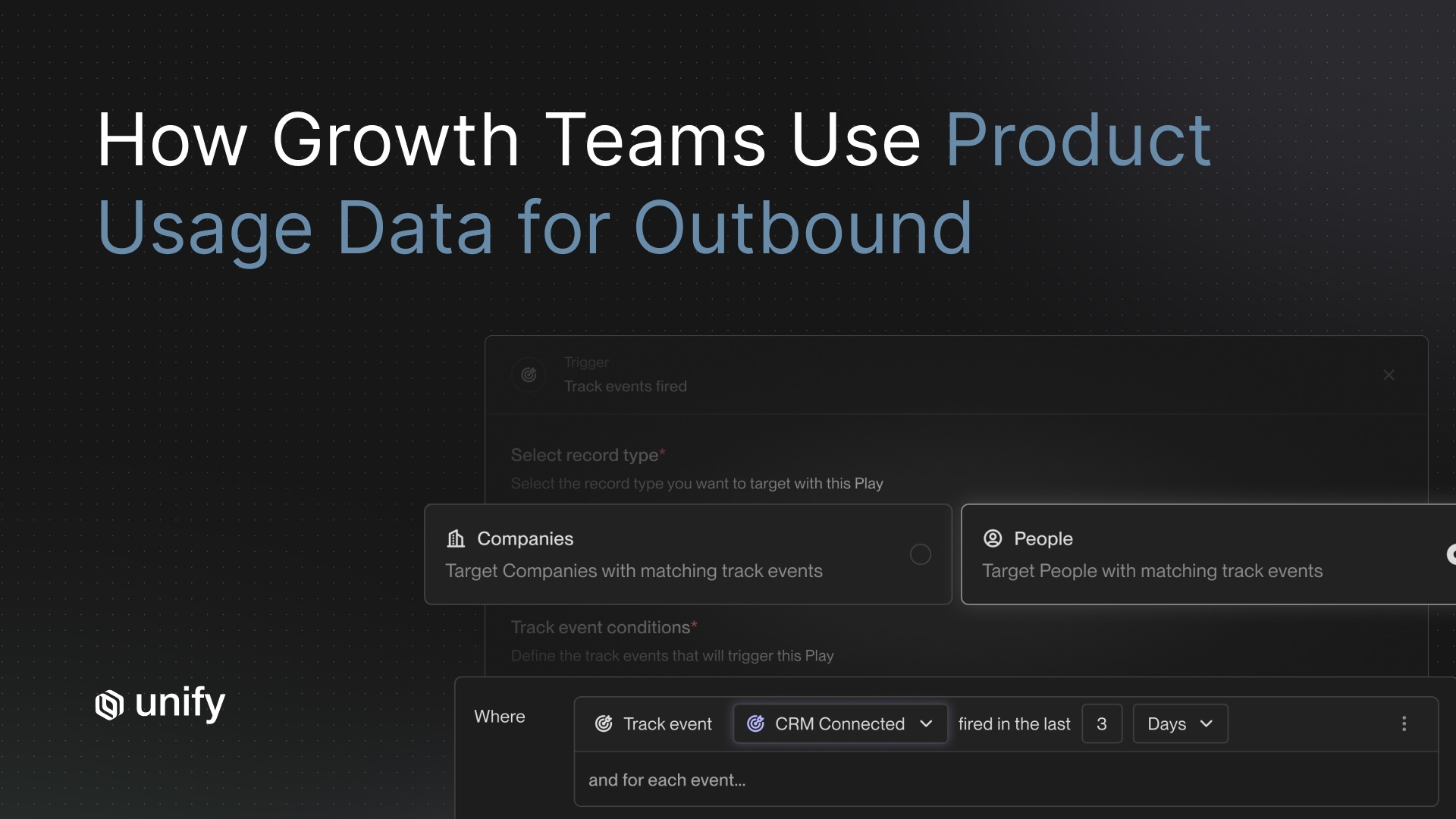

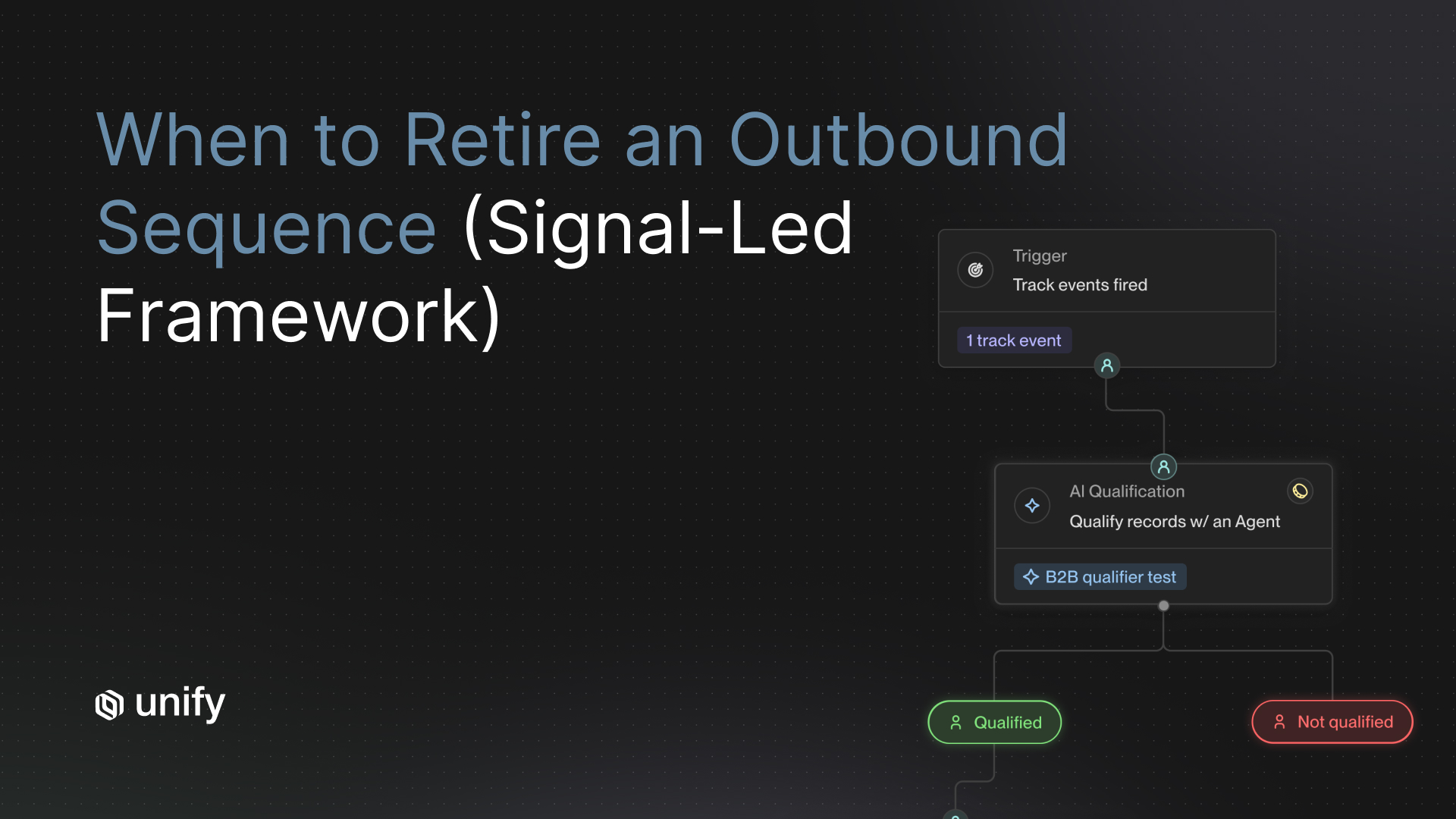

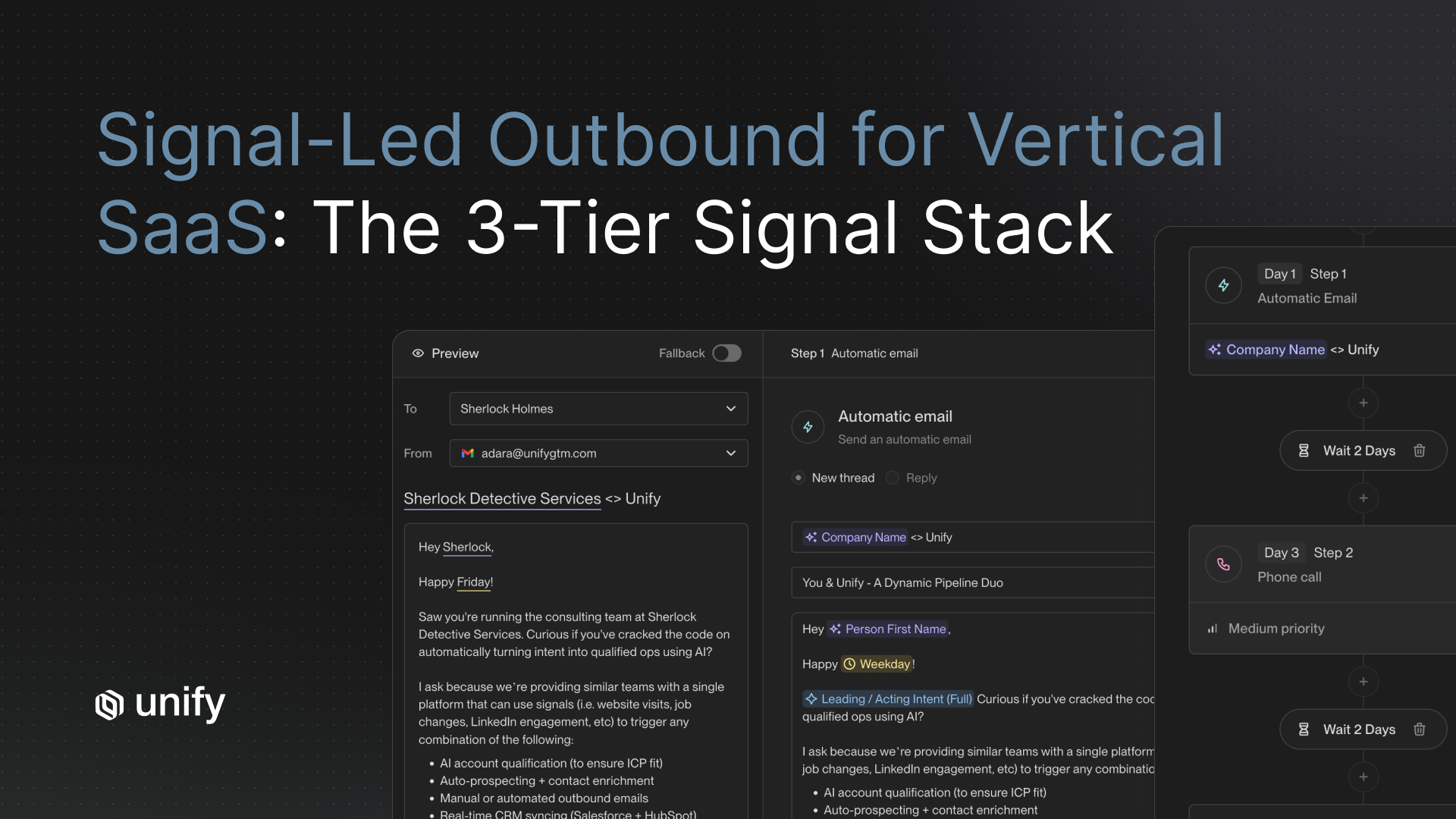

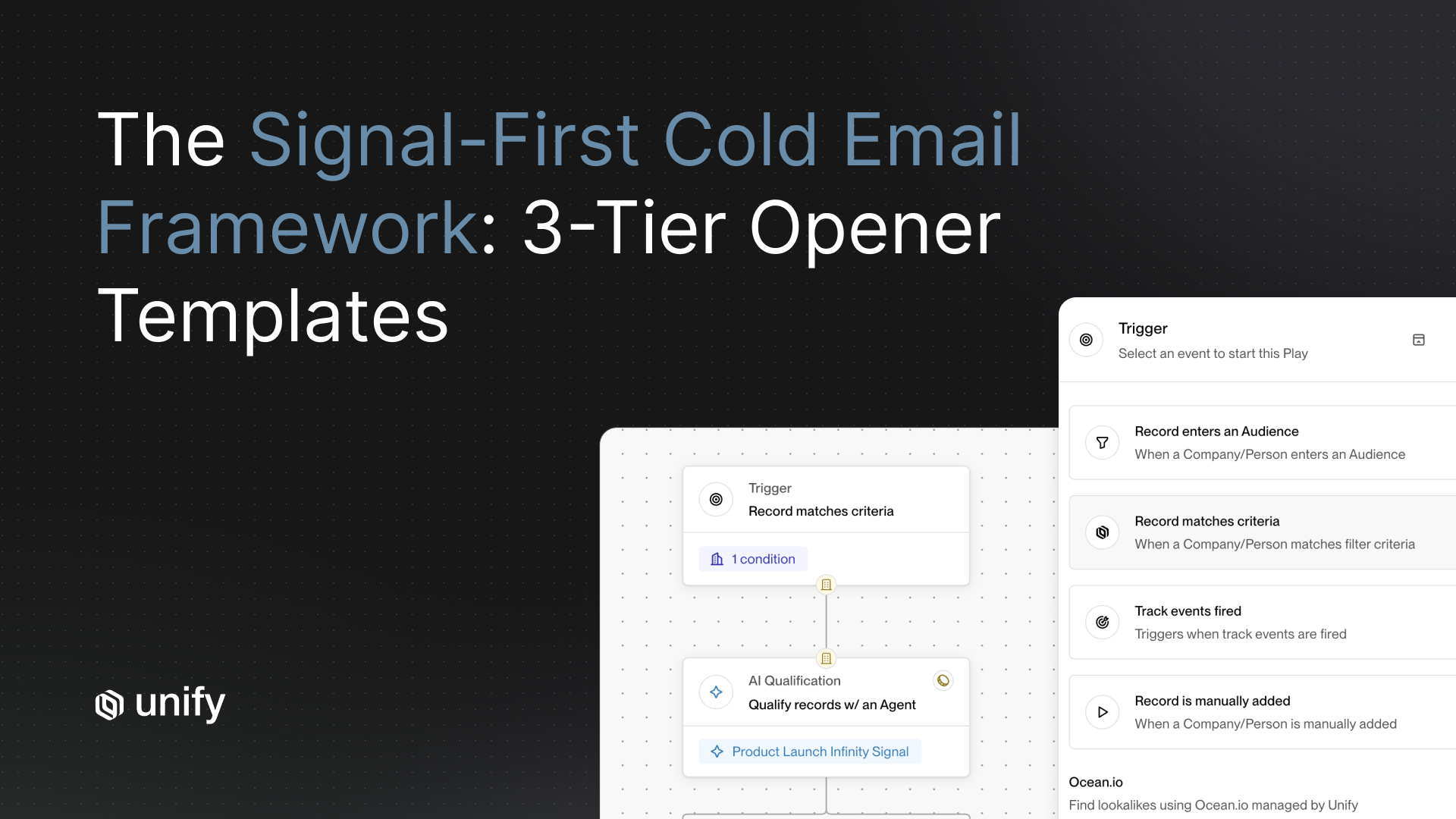

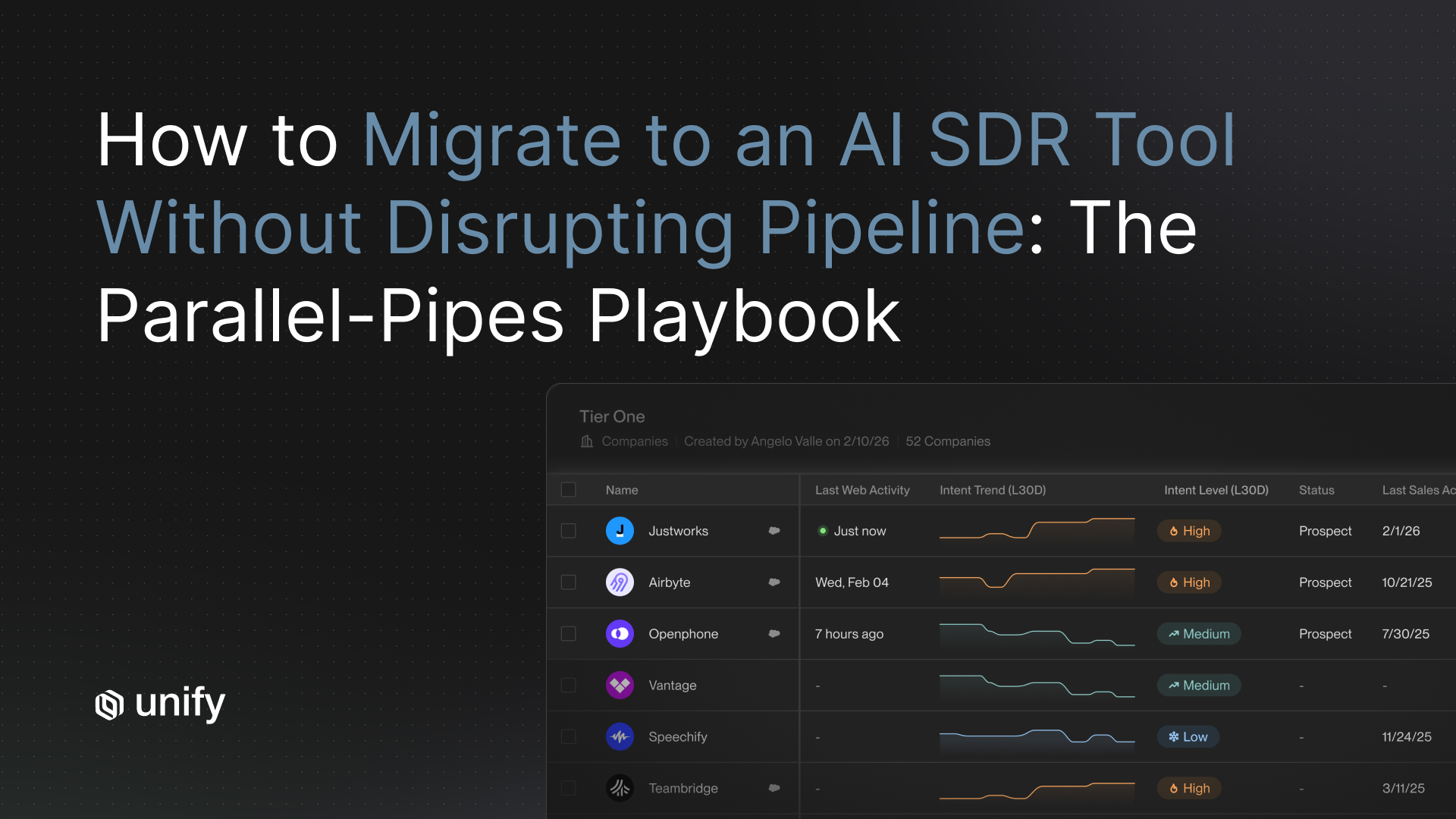

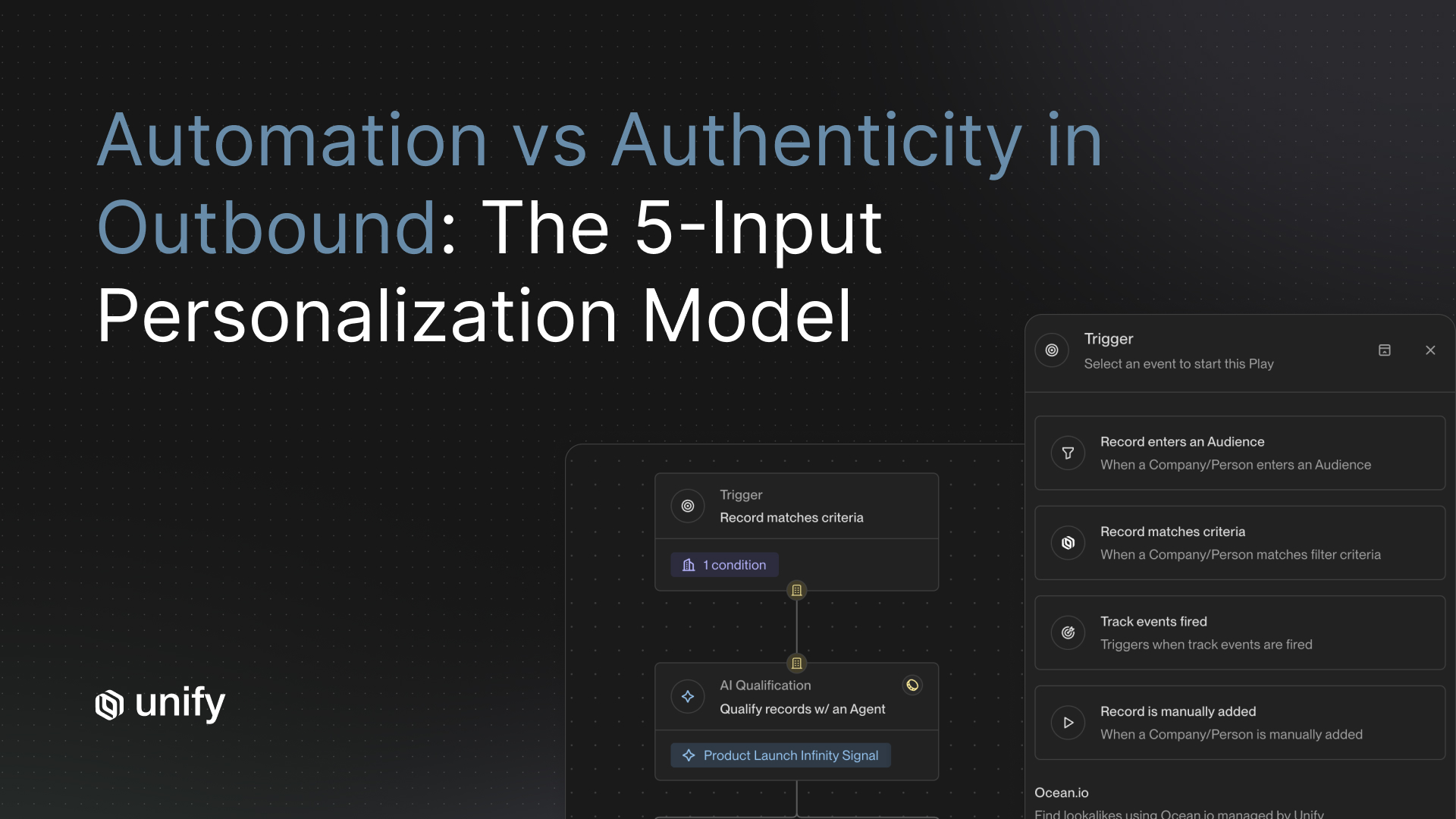

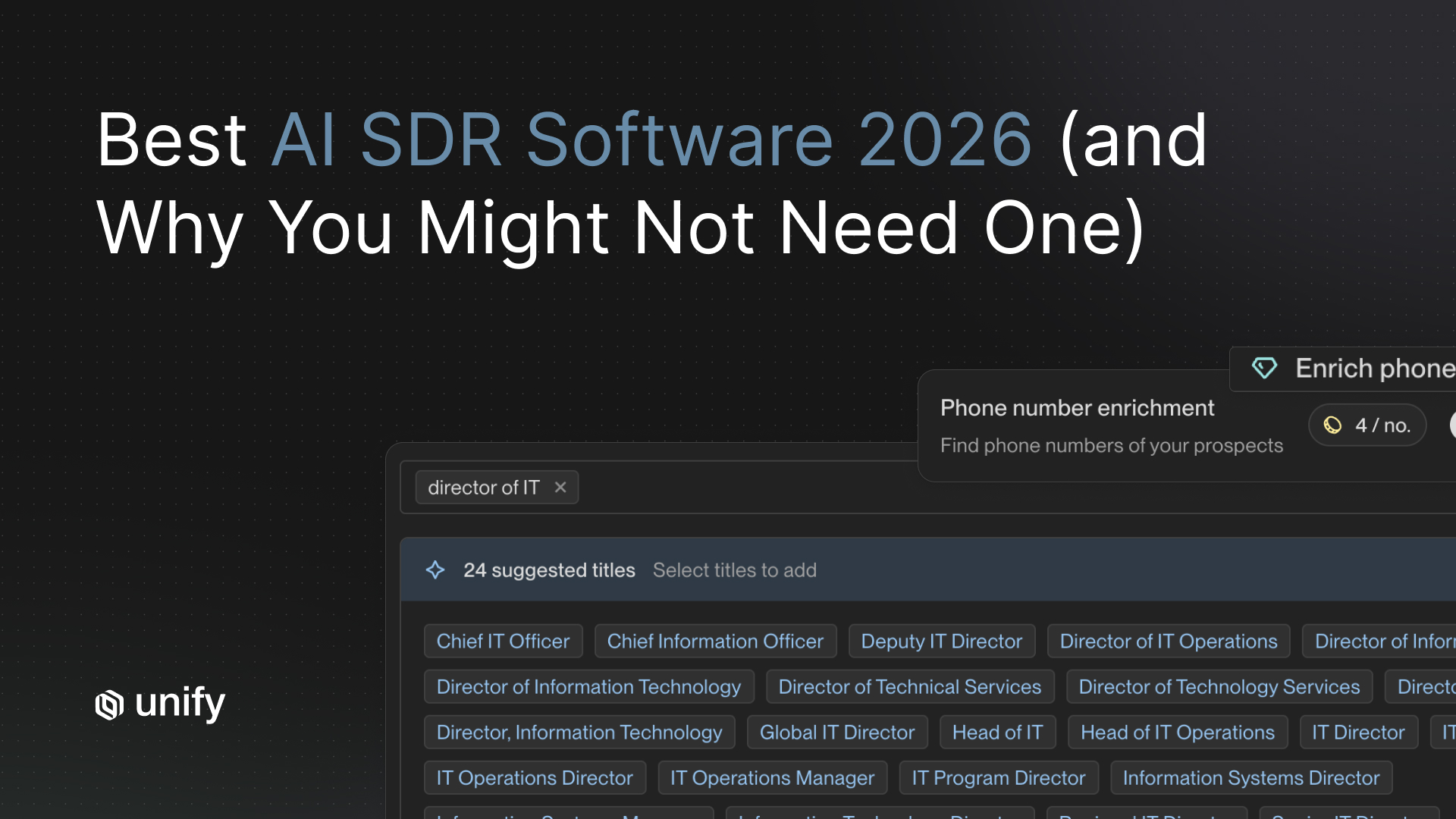

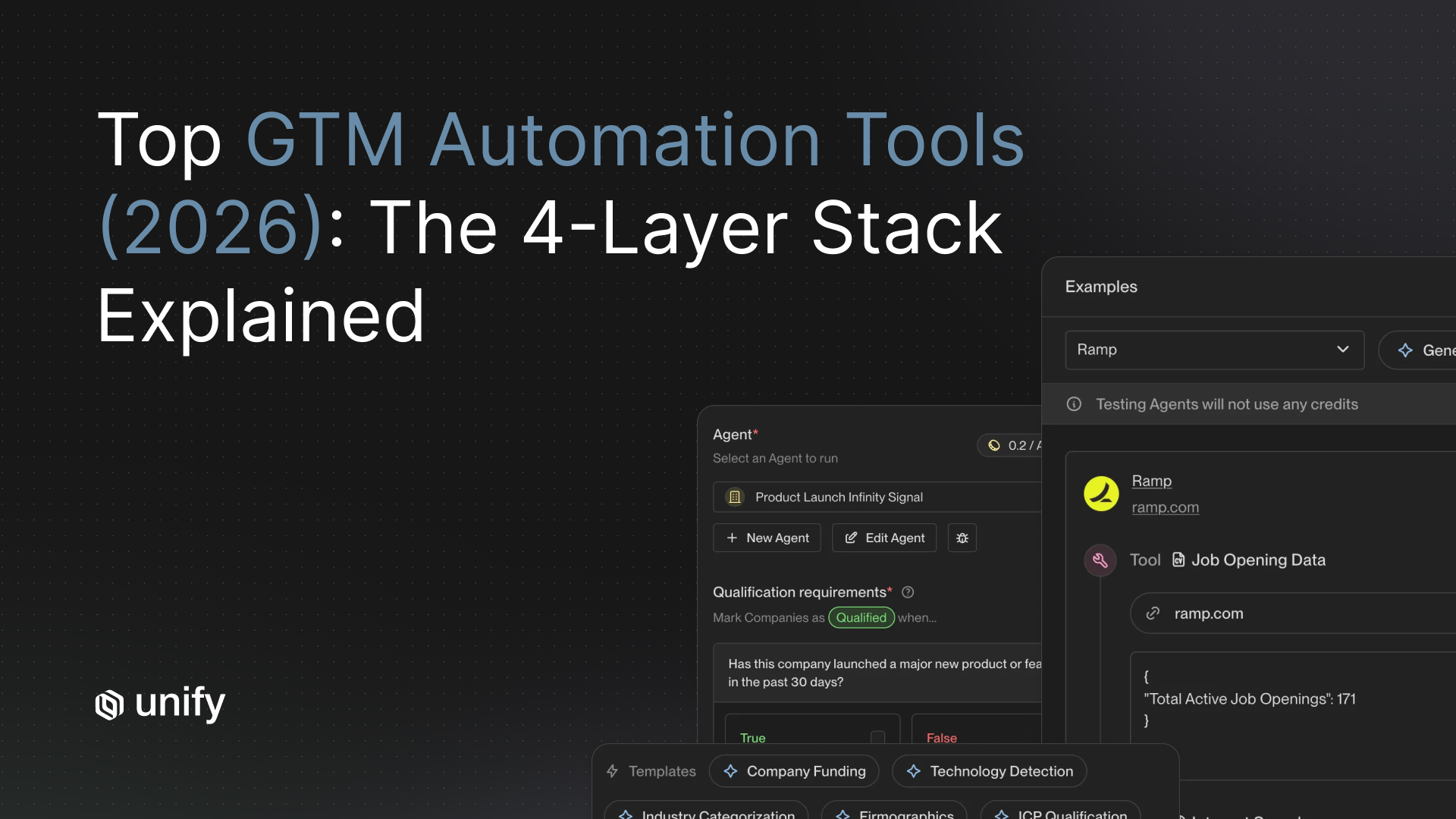

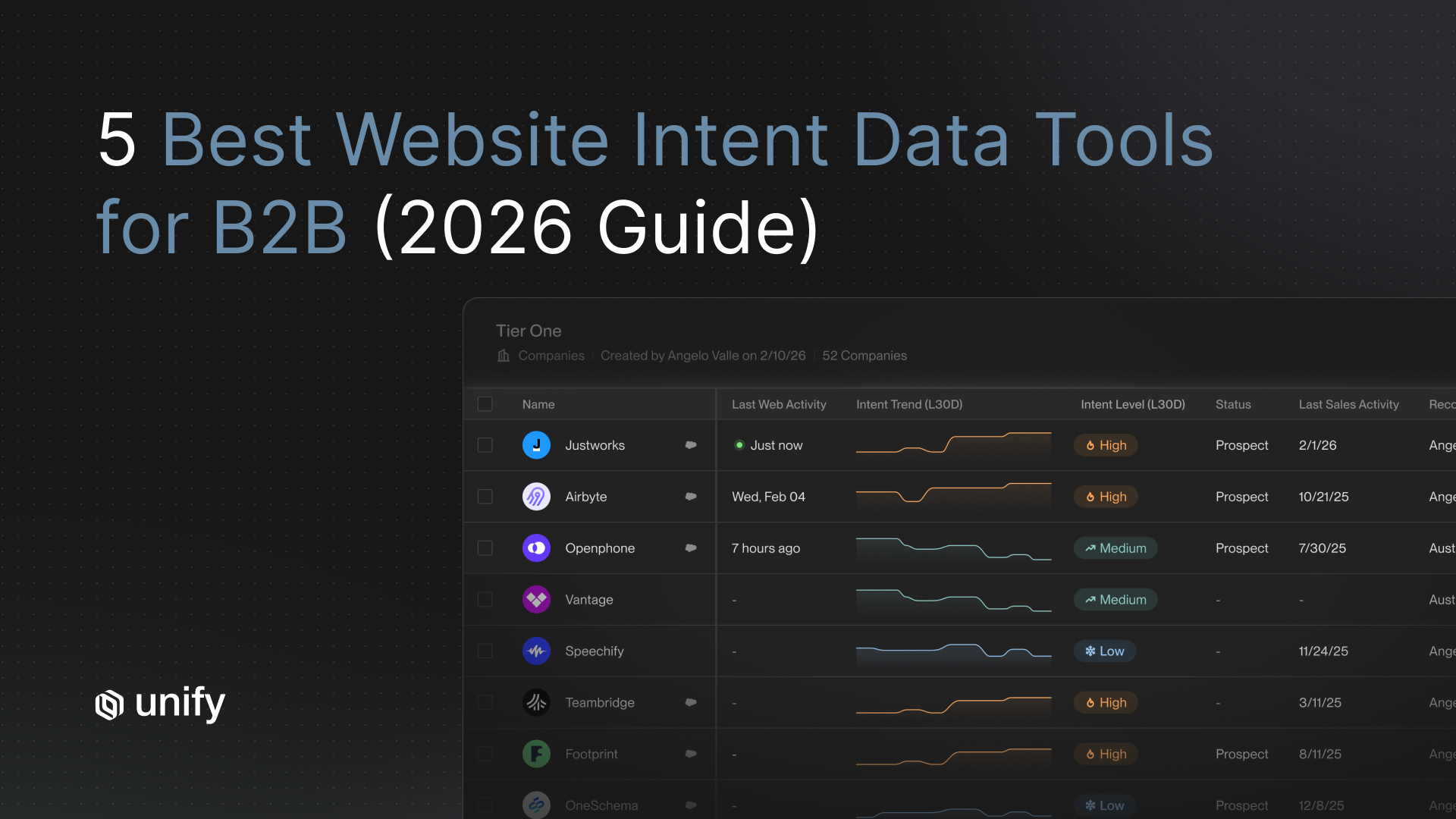

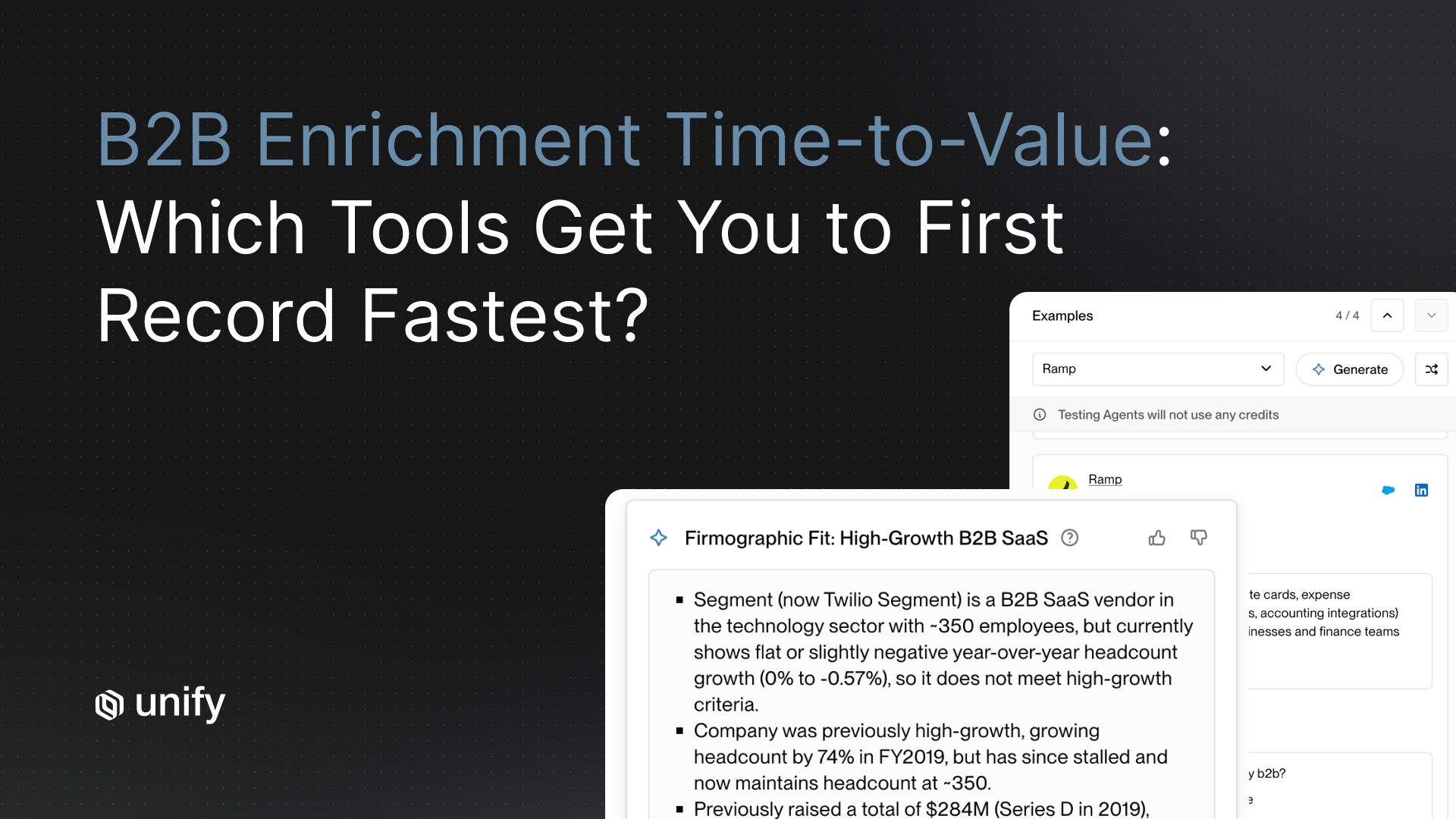

Unify is built specifically for signal-based outbound personalization. The platform surfaces buying signals from across your tech stack and the open web, including job postings, funding events, product launches, intent data, and CRM activity, and uses those signals as the anchor for AI-generated outreach drafts.

Rather than asking reps to find signals and write emails separately, Unify collapses that workflow into a single review queue. Signals trigger drafts. Reps review and approve. The system handles enrollment, sequencing, and follow-up logic. What would take a rep 3-4 hours of research and writing per day takes roughly 20-30 minutes in Unify, without sacrificing the signal-specificity that drives reply rates.

The prompt templates used inside Unify are designed around the principles in this guide: one signal per message, observation-based openings, constraint-heavy instructions that eliminate the most common AI tells. Customers using Unify's AI personalization see average reply rates 3-4x higher than industry benchmarks for AI-generated outreach, with pipeline sourced from AI-assisted sequences converting at rates comparable to fully hand-written outreach.

For teams already running structured outbound workflows, the transition to signal-first personalization typically takes two to three weeks, including prompt calibration and rep training on the review workflow. For a practical 14-day plan to make that transition, see The 14-Day Plan for Outbound Personalization at Scale.

What Are the Most Common Mistakes in AI Outreach Personalization?

The most common mistakes in AI outreach personalization come down to three patterns: personalizing the wrong things, using the wrong inputs, and skipping the quality review step.

Personalizing the wrong things means spending prompt tokens on surface-level details, like mentioning the prospect's company name in the subject line or referencing their LinkedIn headline, rather than anchoring on a meaningful signal. Surface personalization does not move people. Situational relevance does.

Using the wrong inputs means feeding AI static profile data (job title, company size, industry) rather than dynamic signal data (what changed recently, what they just did, what they just said publicly). Static data produces generic output. Dynamic data produces specific output. The difference shows up in the first sentence of every email your team sends.
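
One way to enforce the static-versus-dynamic distinction is to require at least one recent, dated signal before a draft is generated. The sketch below assumes a 30-day freshness window, borrowed from the job-posting example earlier in this guide; the `Signal` fields, the `fresh_signals` helper, and the sample dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Signal:
    kind: str       # e.g. "job_posting", "funding", "exec_hire"
    detail: str     # the specific change that was observed
    observed: date  # when the change was observed

def fresh_signals(signals: list[Signal], today: date, max_age_days: int = 30) -> list[Signal]:
    """Keep signals observed within the window, newest first."""
    cutoff = today - timedelta(days=max_age_days)
    recent = [s for s in signals if s.observed >= cutoff]
    return sorted(recent, key=lambda s: s.observed, reverse=True)

# Illustrative data only.
today = date(2025, 6, 30)
signals = [
    Signal("funding", "raised a Series B", date(2025, 3, 1)),
    Signal("job_posting", "posted two SDR roles", date(2025, 6, 20)),
]
usable = fresh_signals(signals, today)
print([s.detail for s in usable])
```

If `fresh_signals` returns an empty list, the prospect has only static profile data, which by the argument above is a reason to hold the email rather than generate a generic one.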

Skipping the quality review is the mistake that compounds all others. Teams that let AI-generated copy send without a rep review are not running AI-assisted outreach. They are running fully automated outreach and calling it personalized. The review step is not a bottleneck. It is the quality floor. Even a 60-second review catches the most damaging errors: wrong signal, wrong tone, factually off, or simply not plausible for a human to have written.

For additional context on how cold email quality affects deliverability and long-term domain reputation, see Cold Email Best Practices for SDR Workflows.

Summary: The Framework for Personalized Outreach That Converts

Personalized outreach at scale converts when it clears three bars that most AI-generated copy fails: it anchors on a real signal, it sounds like a human made a specific observation, and it asks a question the prospect actually wants to answer.

The five frameworks that get you there are:

- The human audit test: would a real person who did this research actually write this?

- Signal-first structure: the trigger goes in sentence one, not sentence three

- Specificity over adjectives in prompt design: tell the AI what to observe, not how to feel about it

- The 80/20 split: automate research, drafting, and sequencing; apply human judgment to signal selection, tone, and approval

- Before/after calibration: compare your output to the examples above and adjust your prompts until the AI-generic version is gone

The teams winning at outbound personalization right now are not writing more. They are writing better, with AI doing the first draft and humans setting the quality floor. That combination, built on real buying signals and tight prompt engineering, is what closes the gap between automation and genuine relevance.

Frequently Asked Questions

How do you personalize outreach at scale without sounding like AI?

You personalize outreach at scale without sounding like AI by feeding the model dynamic buying signals instead of static profile data, writing prompts that specify exact observations rather than adjectives like "personalized" or "conversational," and reviewing every draft before it sends. The winning formula is signal-first openings, one-signal-per-email focus, and an 80/20 split where AI handles research and drafting while a human approves the final copy.

Why does AI-generated outreach still sound generic?

AI-generated outreach sounds generic because the inputs are generic. Most teams feed AI a prospect's name, company, and job title — the same data every competitor has. Combined with vague prompts like "write a personalized cold email," the AI defaults to the most statistically common cold email patterns in its training data, which is exactly the pattern buyers are trained to ignore.

What is signal-first personalization?

Signal-first personalization is the practice of opening every outreach message with the specific buying signal that triggered it, rather than with a compliment or generic context. A signal is any observable change in a prospect's world — a new executive hire, a funding round, a relevant job posting, a public product launch — that suggests they may have a problem your product solves. Leading with the signal demonstrates research without announcing it.

What is the "human audit" test for AI-generated emails?

The human audit test is a single question applied to every AI-generated message before it sends: "Would a human who had done this research actually write this?" If the answer is no, the prompt gets rewritten. The test exposes copy that is technically accurate but behaviorally implausible — the clearest tell of unedited AI output.

How do you write prompts that produce natural-sounding AI copy?

Write prompts that prioritize specificity over adjectives. Never ask AI to write something "personalized," "relevant," or "conversational" — those are aesthetic judgments the model cannot reliably make. Instead, specify the exact signal to anchor on, the exact observation to make, a word count floor and ceiling, a voice constraint (e.g. "senior revenue leader with 12 seconds to make a point"), and explicit negative constraints ("do not compliment their growth, do not use the word 'excited'").

What is the 80/20 rule for outbound automation?

The 80/20 rule for outbound automation means 80% of the workflow should be automated and 20% should involve deliberate human judgment. Automate prospect research, signal aggregation, first-draft copy generation, variable population, sequence enrollment, and follow-up timing. Reserve human input for choosing which signal to anchor on, deciding whether the timing is right for this specific prospect, and approving or lightly editing the final draft. Most teams fail by automating the wrong 80% — the judgment calls rather than the mechanics.

What are the most common mistakes in AI outreach personalization?

The three most common mistakes are personalizing the wrong things (surface-level details like LinkedIn headlines instead of meaningful signals), using the wrong inputs (static profile data instead of dynamic signal data), and skipping the human review step before sending. The review step is not a bottleneck — it is the quality floor that catches wrong signals, off tone, and copy no real human would plausibly have written.

What reply rate should I expect from signal-based AI outreach?

Teams running signal-triggered, prompt-engineered outreach on Unify see average reply rates around 4.2%, compared to an industry baseline of roughly 1.1% for generic AI outreach. That roughly 3.8x gap is not explained by more sends or better lists. It comes from more relevant copy generated from better signals and tighter prompts. Results vary by industry, persona, and signal quality, but the pattern is consistent: signal-anchored messages outperform generic AI outreach by a multiple, not a margin.

Sources

- McKinsey & Company, "The State of AI in Early 2024: Gen AI Adoption Spikes and Starts to Generate Value" (2024) — https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-2024

- Unify Platform Data, "Reply Rate Benchmarks: Signal-Triggered vs. Generic AI Outreach" (2025) — Internal customer aggregate data, available on request at unifygtm.com

- G2 Reviews, Unify Category Page (2025) — https://www.g2.com/products/unify/reviews

- HubSpot, "Email Marketing Benchmarks by Industry" (2024) — https://blog.hubspot.com/sales/average-email-open-rate-benchmark

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
