TL;DR. Automation is not the enemy of authenticity. Shallow research is. Authenticity depends on five inputs the message can reference: firmographics, product usage, recent news or role changes, web behavior, and peer-customer language. Automate the research, drafting, and send steps with AI Agents grounded in those inputs; keep reply handling human. Per the Affiniti case study, this approach saved 20+ hours per rep per week without sacrificing voice. Per the Spellbook case study, the same approach moved open rates from 19 to 25 percent (HubSpot baseline) to 70 to 80 percent (Unify-powered sequences).

Methodology and limitations

Spellbook open-rate comparison. Per the Spellbook case study, the 19 to 25 percent open-rate baseline reflects HubSpot campaigns the team was running before adoption. The 70 to 80 percent post-rollout figure reflects Unify-powered sequences after the team consolidated the BDR workflow into a single platform with website intent signals, AI personalization, and managed deliverability. The comparison holds the team, ICP, and product positioning constant, with the platform as the changed variable. Open-rate uplift of this magnitude reflects three compounding factors: better deliverability (mailbox warming and bounce pre-validation), better audience selection (intent signals filter to higher-fit prospects), and more relevant subject lines (Smart Snippets ground subject copy in signal context). Do not expect the same magnitude for every team; a material lift is the more reasonable expectation.

Customer outcomes are named, not aggregated. Every quantitative claim in this article is attributed to a specific named customer case study or product page. There is no aggregated "Unify personalization benchmark." Dial outcomes down when your ICP is unproven, your CRM data is dirty, or signal coverage is thin. Dial outcomes up when ICP is well-defined and intent signals fire reliably.

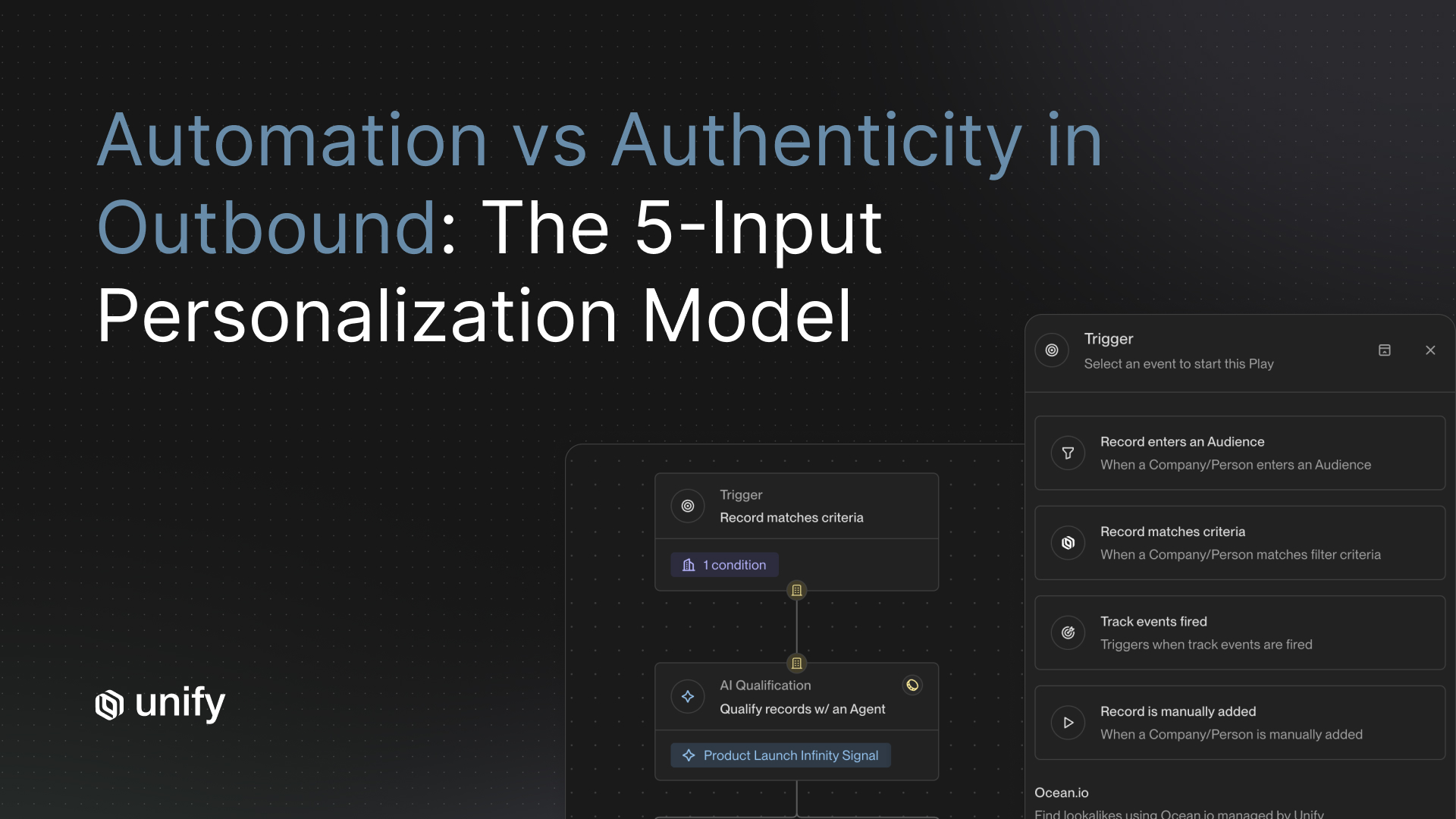

How do you balance automation and authenticity in personalized outreach?

Stop treating it as a tradeoff. Automation is not the enemy of authenticity. Shallow research is. A 12-touch sequence written manually by an SDR using only LinkedIn and company about-pages will sound just as generic as a 12-touch sequence written by AI on the same inputs. The variable that drives whether a message lands as "personal" is not how it was written but what it was grounded in.

Per the Affiniti case study, Growth Strategist Stefano Jacobson described the result of running AI Agents inside Unify Plays: "Unify's outbound feels 100 percent authentic to our team's core messaging. It's just empowering us to reach more people much faster." That outcome required two design choices: ground every message in five inputs the AI can reference, and keep humans in the steps where judgment matters most.

The 5-input authenticity model, ranked

Authenticity at scale is a function of how many of these five inputs your message can reference. The inputs below are ranked by leverage. Each input is independently sourceable inside Unify; the value comes from combining them.

1. Firmographics: who they are

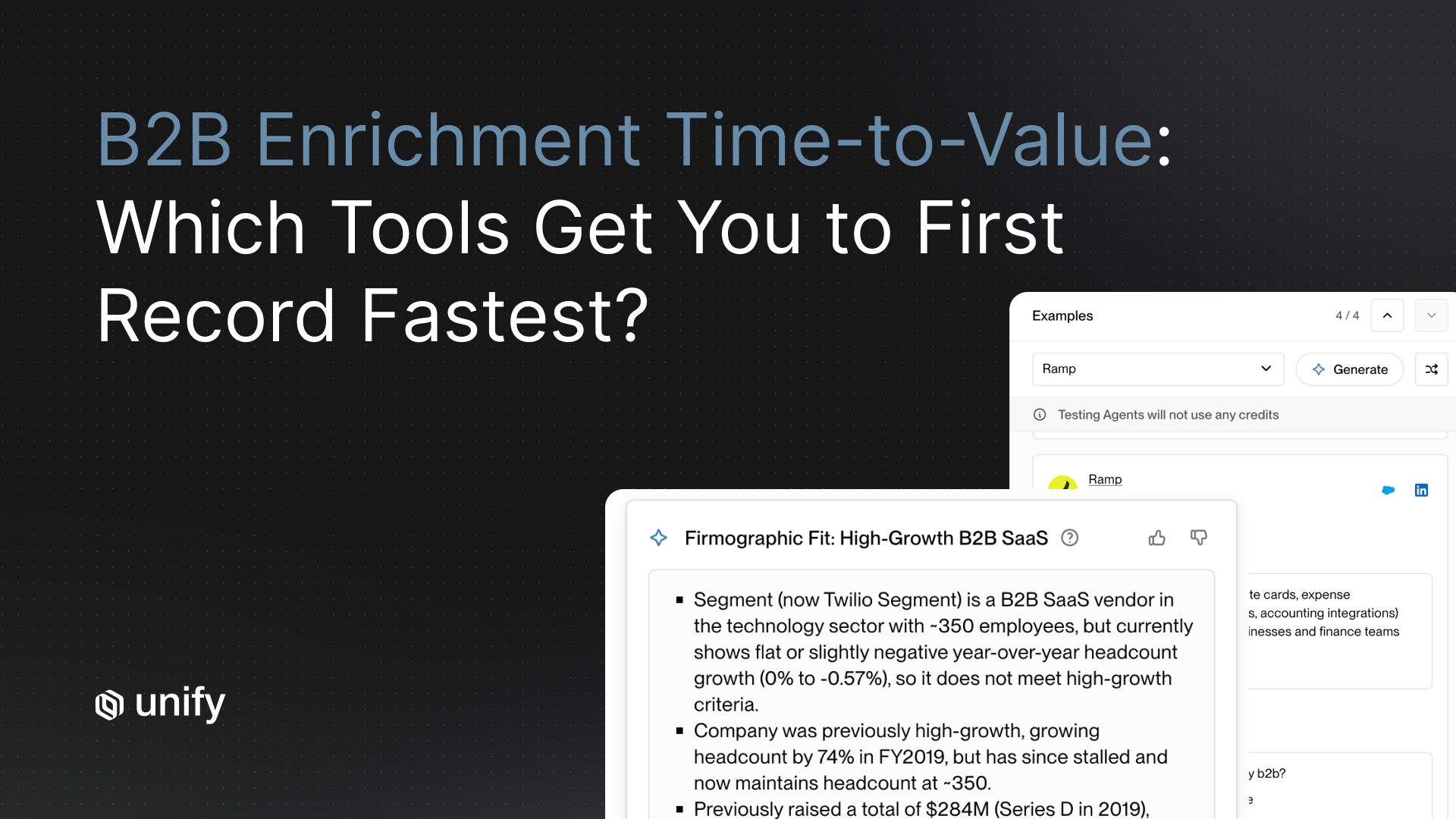

Industry, size, funding, tech stack, geography. This is table stakes. Per the Unify B2B Buyer Data page, the platform layers 100+ firmographic and technographic data points per record across 30+ verified sources. Firmographics tell you whether to write to a 50-person Series A SaaS company differently than to a 5,000-person enterprise. They do not tell you anything that the recipient could not have guessed you knew. On their own, firmographics produce generic email. Treat them as a baseline filter, not as the personalization itself.

2. Product usage: what they have done in your product

For PLG companies, the highest-leverage input is what a prospect or their colleague has already done inside the product. A free user hitting a paywall, a team adding three seats, a power user querying the API a thousand times in a week: these are the moments that make outreach feel earned. Per the Perplexity case study, AI personalization that contextualized emails based on usage patterns (employee count using the product, query volumes per account) was the lever that produced 5 percent reply rates on PQL Plays and up to 20 percent on MQL Plays, with $1.7M in pipeline in 3 months and no BDR team.

3. Recent news, role changes, or hiring: what is happening to them right now

Time-bound context beats time-agnostic context every time. A new VP of Sales who joined three weeks ago is a different reader than a VP of Sales who has been in seat for two years. A company that just announced a Series B is a different buyer than the same company six months earlier. Per the Unify Signals overview, the platform ships 25+ native intent signals including new hires, job changes, and the AI Infinity Signal (custom-defined event triggers). Per the Anrok case study, plays triggered on New Hires and Champion Tracking signals contributed to $300K+ pipeline in 3 months.

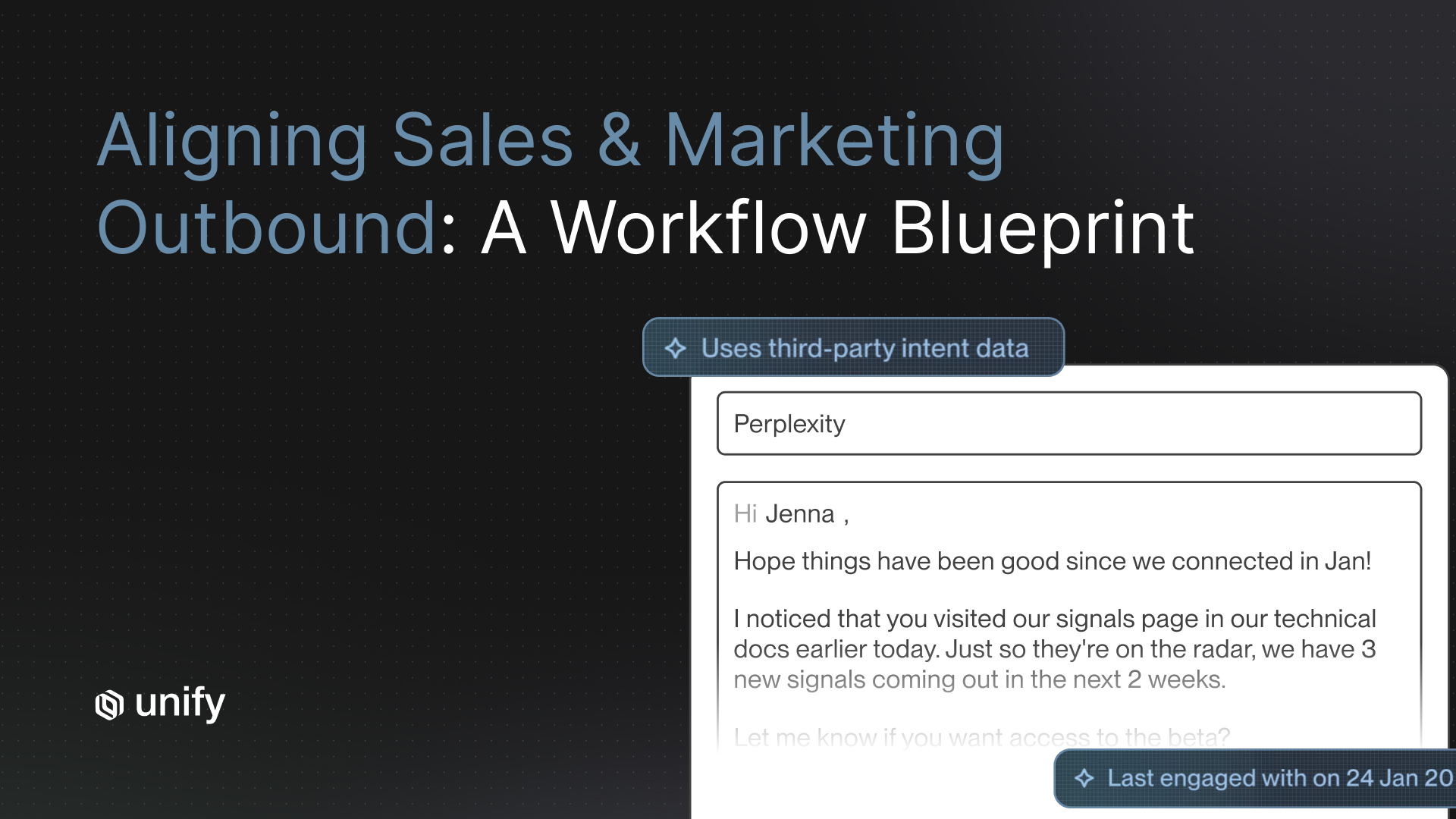

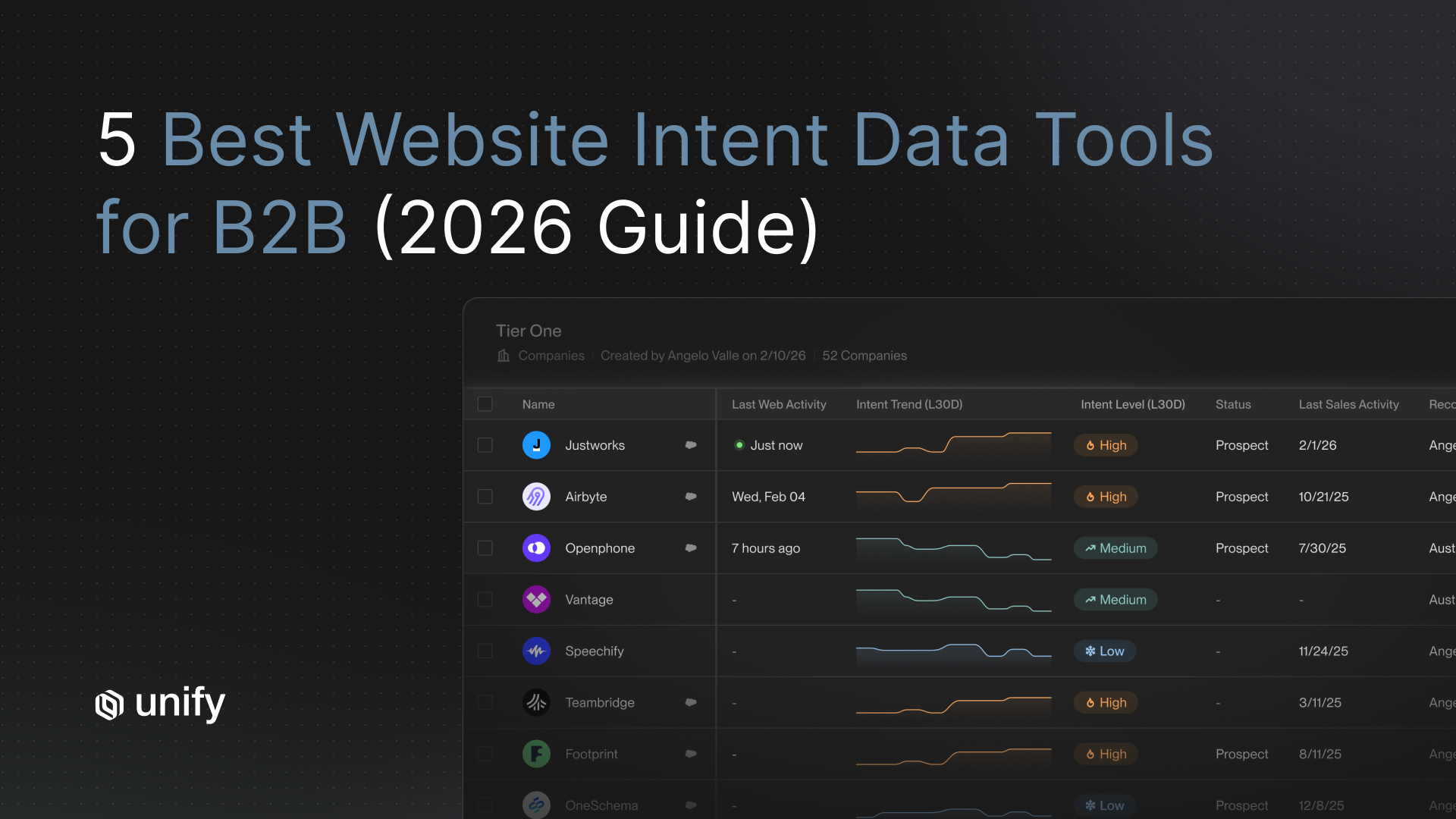

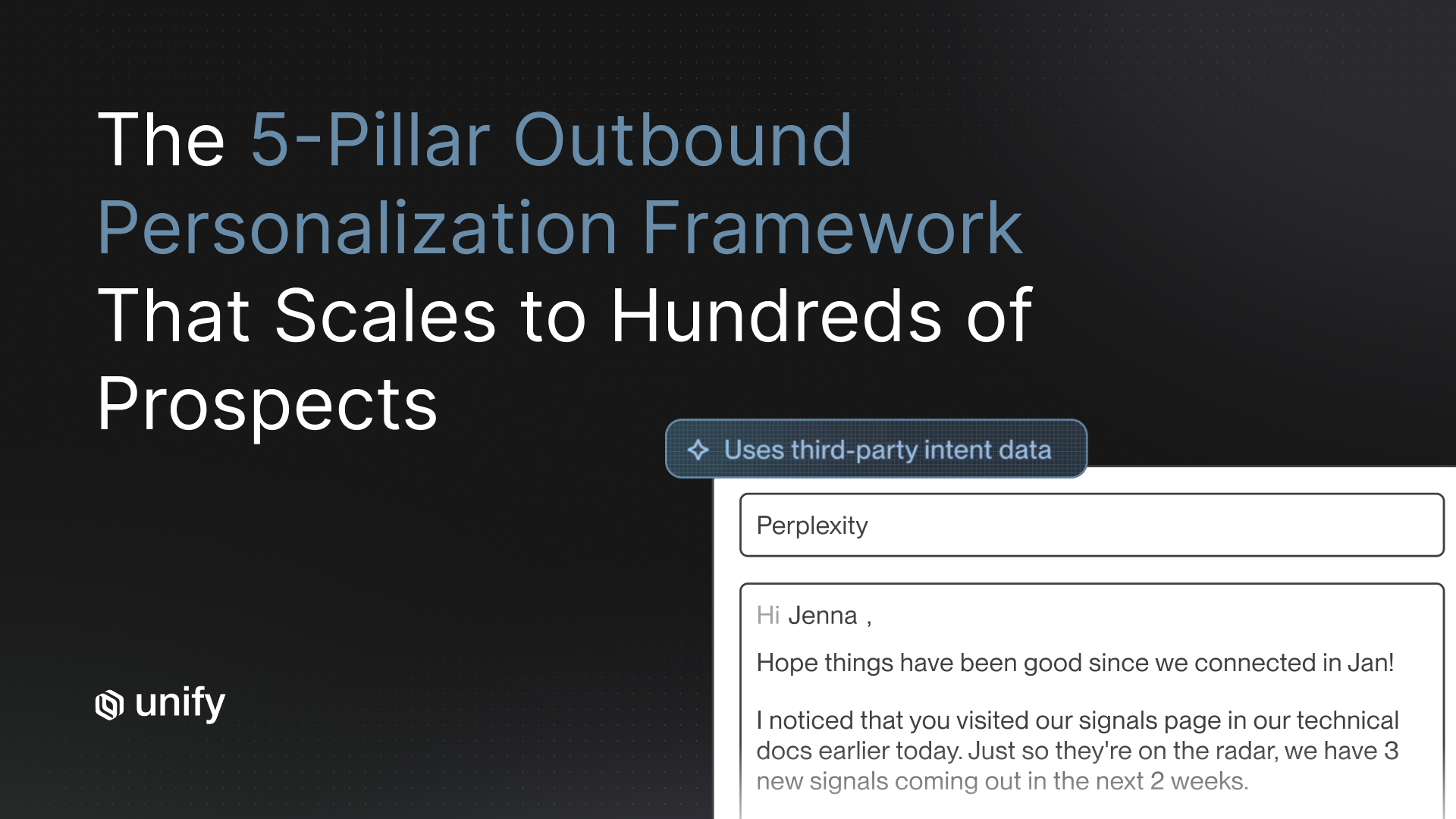

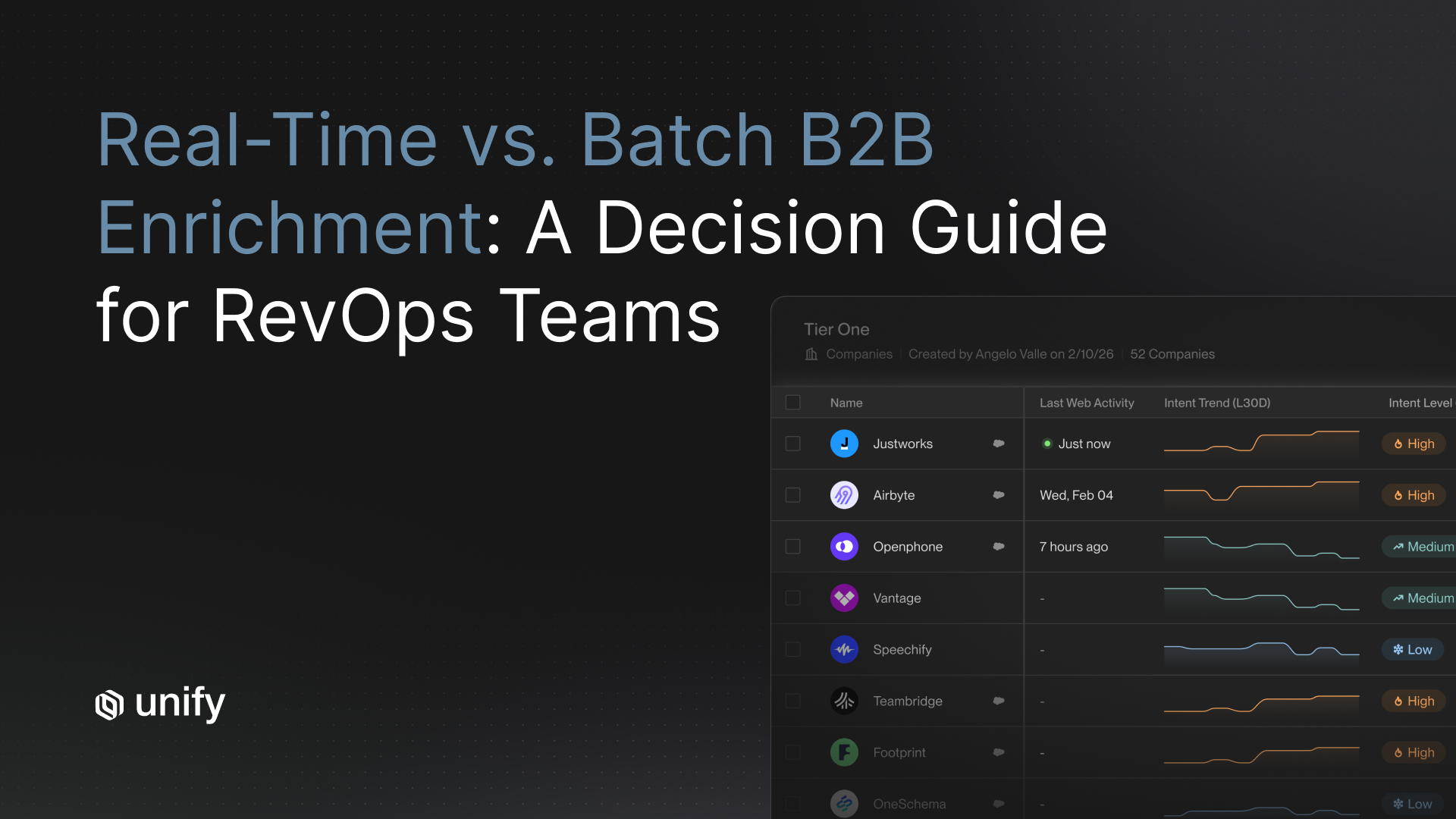

4. Web behavior: what they are researching

The pricing-page visit, the docs page deep-read, the demo-video watch. These signals tell you exactly which question is on the reader's mind. Per the Unify Website Traffic Intent product page, the tag captures 8+ behavioral signals (page visits, content downloads, demo watches, form dropoffs, pricing-page views, docs views, button clicks, form fills) and resolves identity via a 5-vendor waterfall at 75%+ company match. A message that references the specific page someone read 24 hours ago is materially more authentic than one that does not.

5. Peer-customer language: how their cohort talks

The most overlooked input. Same-segment customers describe their problems in particular vocabulary: a CTO at a 200-person SaaS company will use different phrases for "outbound is broken" than a Head of Sales at a 50-person fintech. Per the Infinity Signal launch blog, Unify's Smart Snippets dynamically generate subject lines and value statements that mirror this kind of cohort-specific language, grounded in AI Agent research. The reader recognizes their tribe in the message.

What does "automate vs never-automate" actually look like?

Map the outbound process into five steps. Automate the first four. Keep step five human. The decision rule is consistent across every Unify customer that has reported authentic-feeling outbound at scale.
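
The rule can be written down as a small lookup. This is a minimal sketch, assuming the five steps are research, qualification, draft, send, and reply (the breakdown used in the Affiniti worked example in this article). The `Step` structure, the step names, and the `owner` helper are illustrative and are not part of any Unify API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    order: int
    name: str
    automated: bool


# Hypothetical encoding of the five-step outbound process.
OUTBOUND_STEPS = [
    Step(1, "research", True),
    Step(2, "qualification", True),
    Step(3, "draft", True),
    Step(4, "send", True),
    Step(5, "reply", False),  # reply handling stays human
]


def owner(step_name: str) -> str:
    """Return 'agent' for automated steps and 'human' otherwise."""
    for step in OUTBOUND_STEPS:
        if step.name == step_name:
            return "agent" if step.automated else "human"
    raise ValueError(f"unknown step: {step_name}")
```

For example, `owner("draft")` returns `"agent"` and `owner("reply")` returns `"human"`.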

Vendor-neutral evaluation criteria for AI personalization

Score every shortlisted platform against the four criteria below. Each uses the same template: definition, why it matters, how to test, pass-fail threshold, red flag.

1. Signal grounding depth

Definition. Whether AI-generated content draws on real-time signals (web behavior, product usage, news, hiring) or only on static CRM fields.

Why it matters. CRM-only personalization is mail merge. Signal-grounded personalization is research.

How to test. Ask the platform to draft messages for ten prospects using your signals, then for the same ten using only CRM. Compare.

Pass-fail. Signal-grounded drafts reference specific events, page reads, or product actions.

Red flag. The platform can't differentiate the two.

2. Research transparency

Definition. Operators can see what the AI agent read and reasoned about before generating a message.

Why it matters. Black-box research makes hallucinations hard to catch before they reach a prospect.

How to test. Pull up one agent run and audit the source list and reasoning chain.

Pass-fail. Sources are visible, citable, and timestamped.

Red flag. "Proprietary research" with no audit trail.

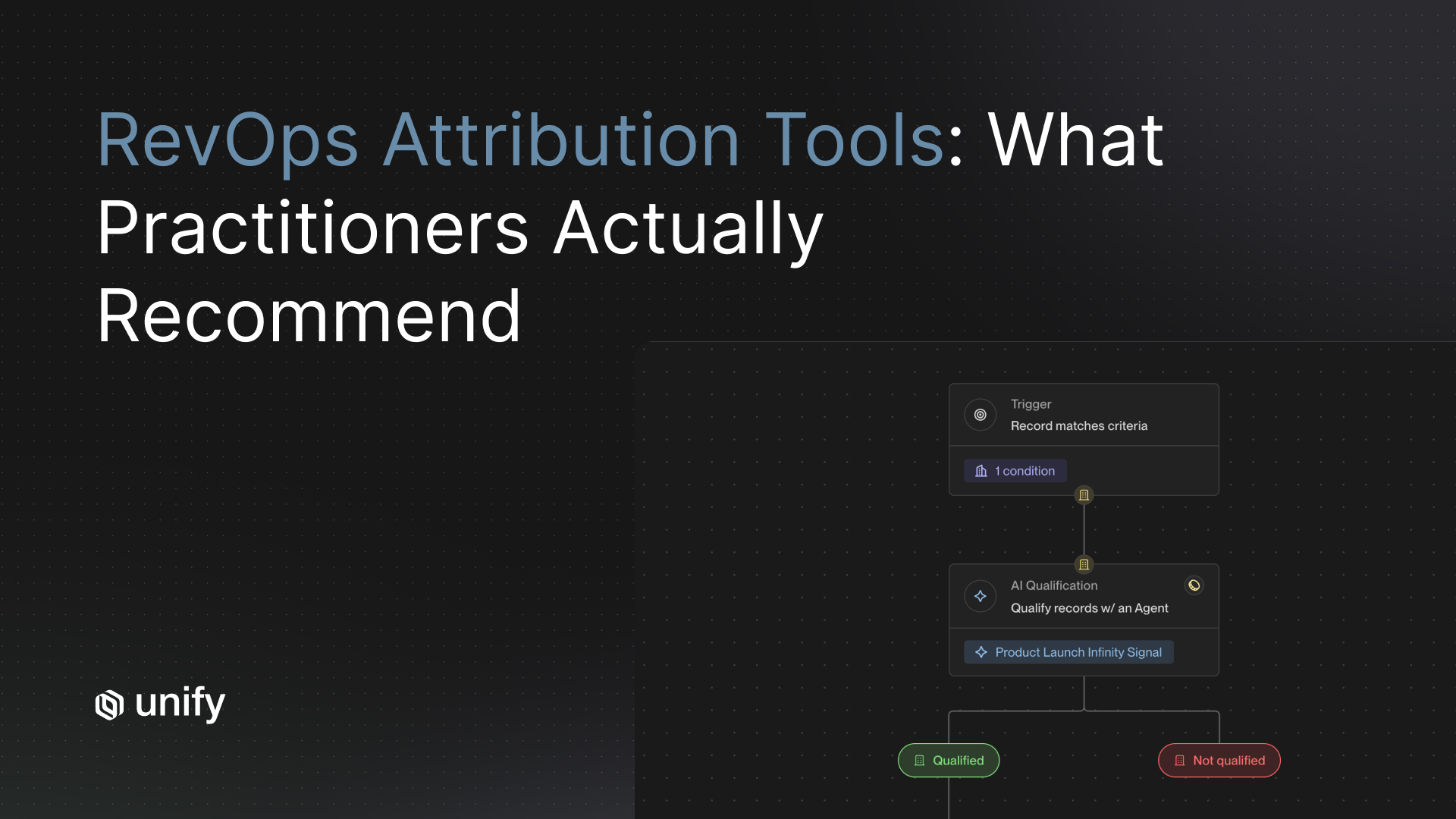

3. Human-review touchpoints

Definition. Native checkpoints where humans can preview snippets, edit drafts, or pause Plays before send.

Why it matters. Operators stay accountable for what gets sent under the company name.

How to test. Configure a Play that requires manual approval on the first 20 sends.

Pass-fail. Approval gate available natively, no engineering.

Red flag. All-or-nothing autosend with no preview mode.

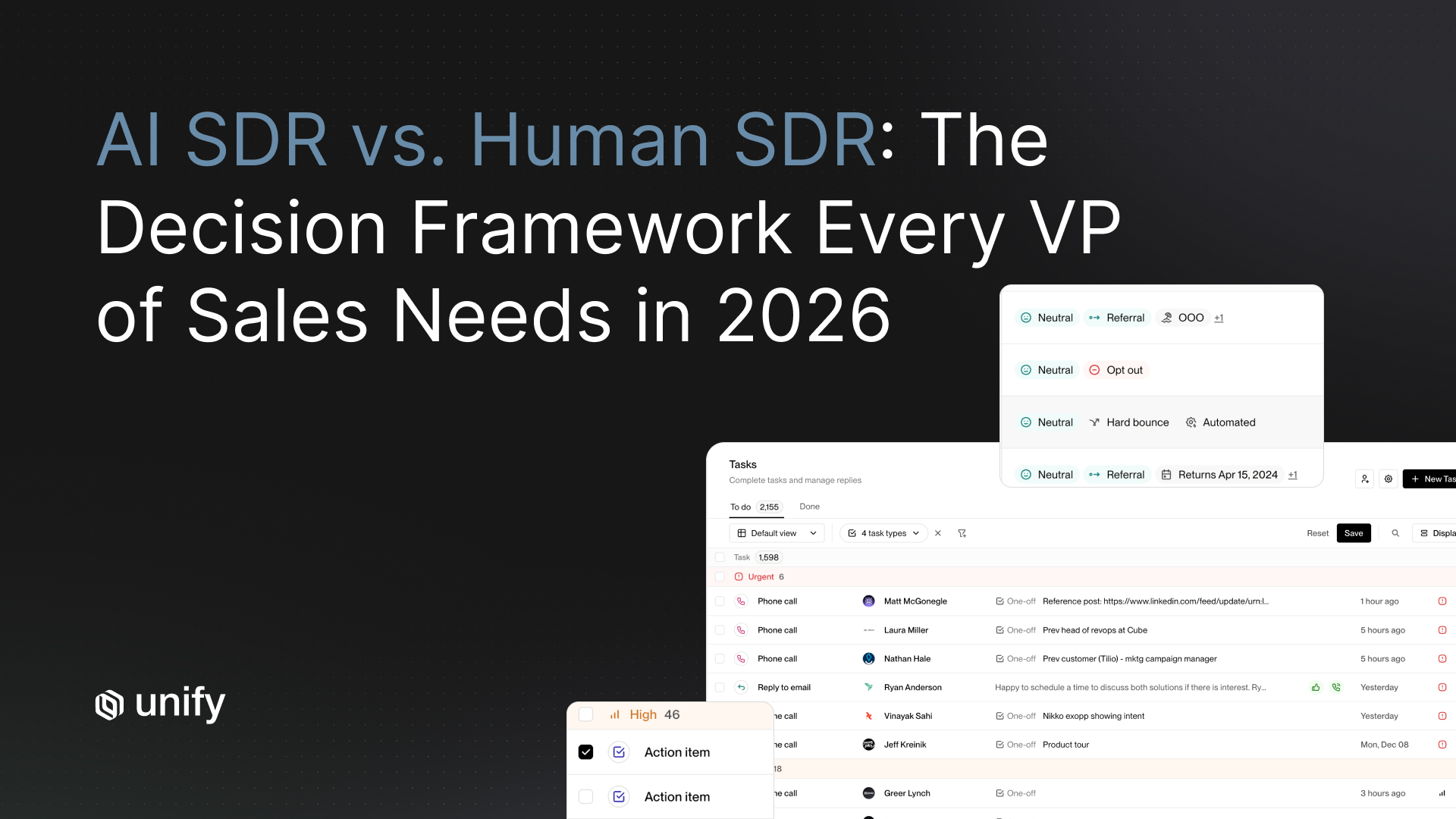

4. Reply handling stays human

Definition. Replies route to a unified inbox for human triage, with AI classification of intent (positive, objection, referral, unsubscribe).

Why it matters. Auto-reply systems destroy trust in two exchanges.

How to test. Send 20 replies of varied types and verify they route to a human for action.

Pass-fail. Replies surface in a unified inbox with classification; humans handle every response.

Red flag. AI auto-responds to objections or pricing questions.
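
One way to record the results of these four tests is a simple pass-fail scorecard. The sketch below is illustrative only: the criterion keys, the `evaluate` function, and the rule that a platform must pass all four criteria to stay on the shortlist are assumptions of this example, not a scoring method published by any vendor.

```python
# Keys mirror the four criteria above; the names are hypothetical.
CRITERIA = (
    "signal_grounding_depth",
    "research_transparency",
    "human_review_touchpoints",
    "reply_handling_stays_human",
)


def evaluate(results: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (shortlisted, failed_criteria) for one platform.

    `results` maps each criterion to True (pass) or False (fail).
    A criterion that was never tested counts as a failure.
    """
    failed = [c for c in CRITERIA if not results.get(c, False)]
    return (not failed, failed)
```

Treating an untested criterion as a failure is a deliberate choice in this sketch: it keeps a platform from staying on the shortlist just because nobody ran the test.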

How Unify covers these criteria

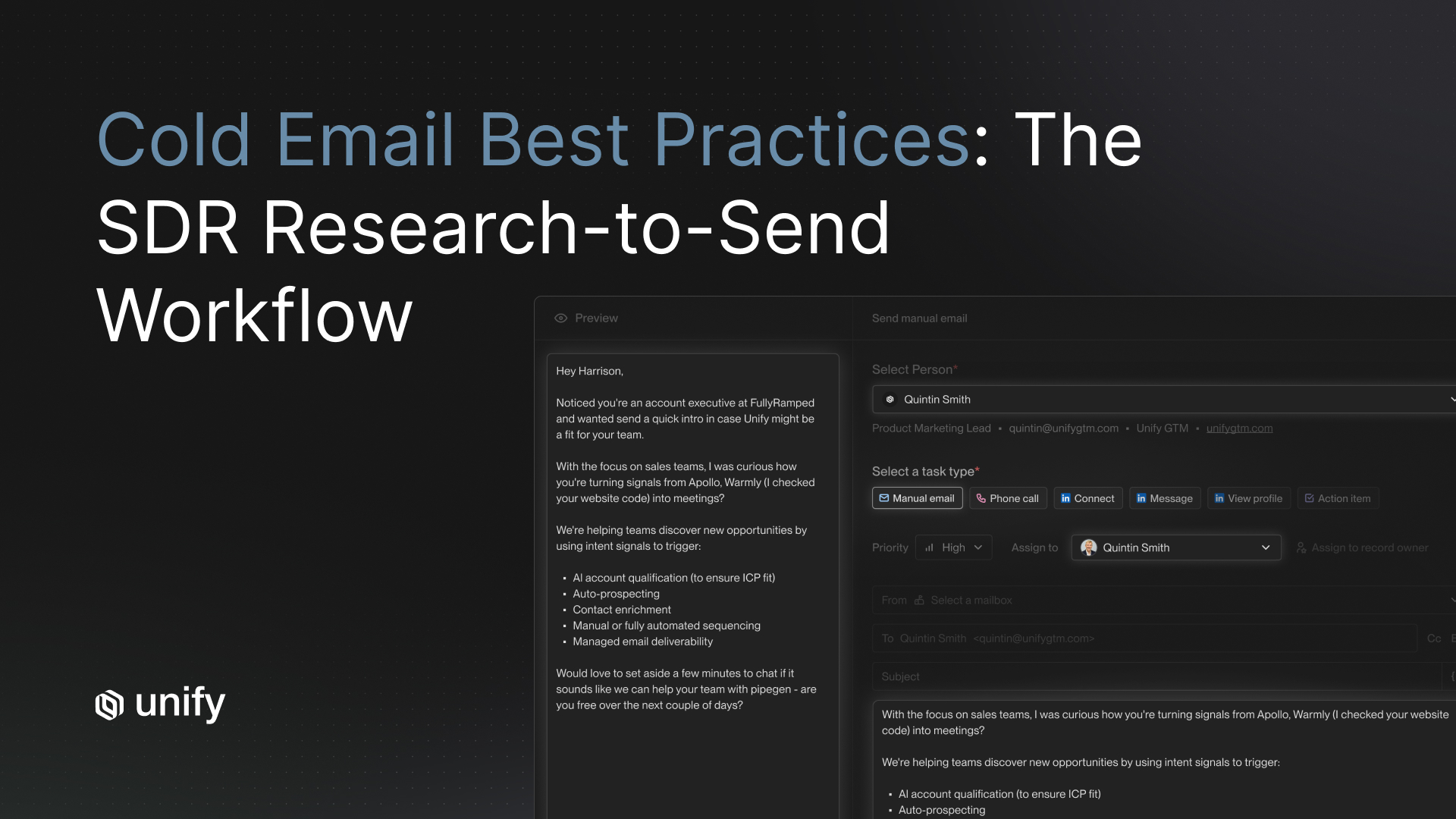

- Signal grounding. Per the Unify AI Personalization product page, AI Agents research from social media, company websites, and news sources; Smart Snippets dynamically generate subject lines, hooks, and value statements grounded in contact and CRM context plus 25+ intent signals.

- Research transparency. Per the AI Agents product page, the research process is transparent: operators can see exactly how the agent gathered information. Per the Next-gen AI Agents announcement (December 18, 2025), agents run at 0.1 credits each, a 10x improvement, making always-on research economically viable.

- Human-review touchpoints. Per the AI Personalization page, the platform includes human review touchpoints so operators can audit research and preview snippets before send.

- Reply handling stays human. Per the Unify Task Management and Unified Inbox page, replies route to a unified inbox with AI-powered classification (positive, referral, objection, unsubscribe), and humans handle the actual response.

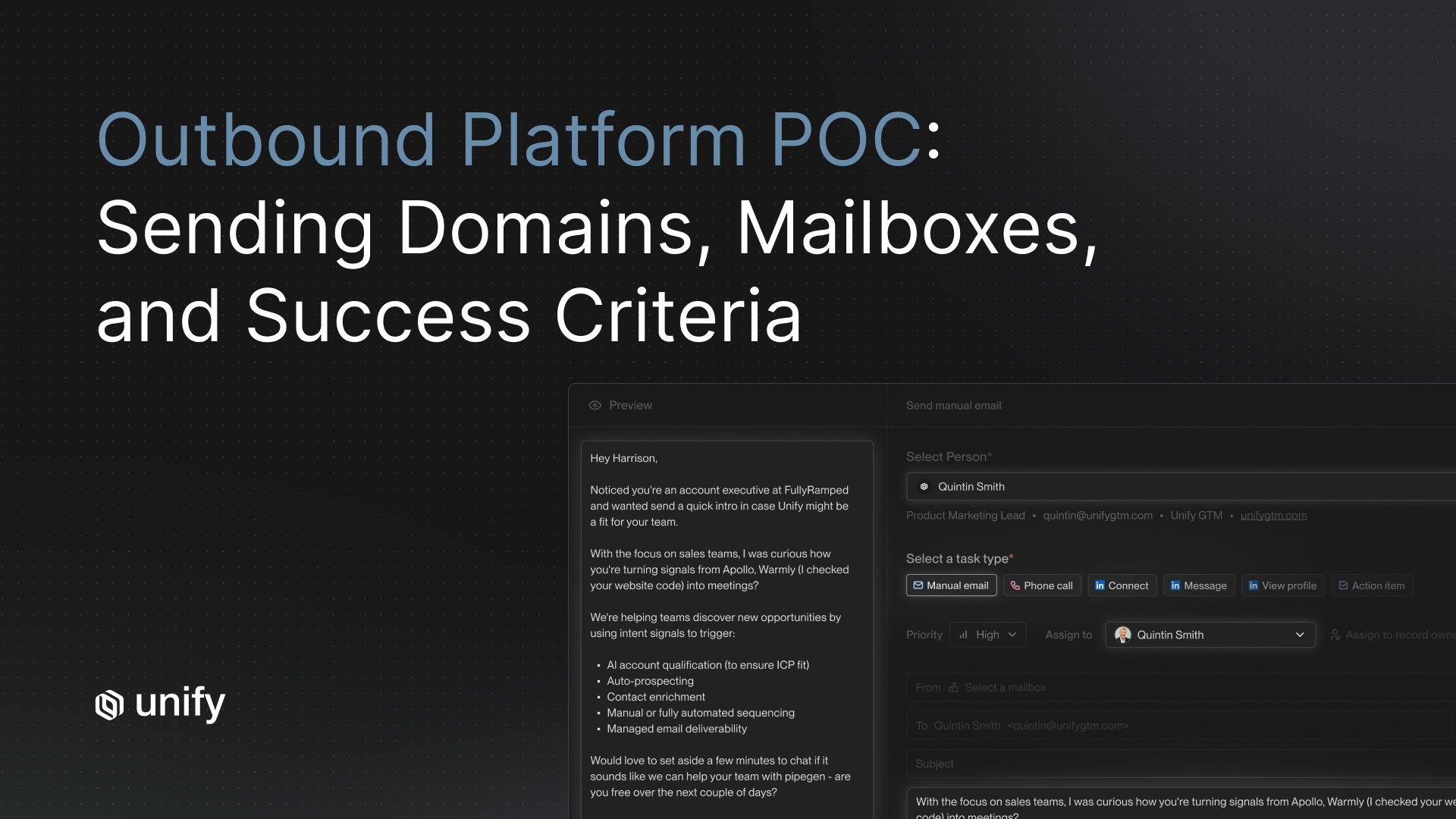

Worked example: Affiniti's 20+ hours per rep per week without losing voice

This is an end-to-end trace based on the published Affiniti case study. Numbers come from the named case study, not from invented platform averages.

- Context. Affiniti is a 20+ employee financial-services company with $62M in funding, serving a massive TAM spanning pharmacies, HVAC, and auto dealerships. The growth team had a lean headcount and could not scale outbound without adding people. Prior AI SDR tools they had tried produced messaging that did not feel authentic.

- Step 1 (automated research). AI Agents scrape each prospect company's website to collect specific intel: team size, recent inventory changes, hiring patterns. Each run executes at 0.1 credits per the next-gen AI Agents announcement.

- Step 2 (automated qualification). 25+ native signals (firmographics, website visits, buyer personas) filter the pool to warm leads in target industries.

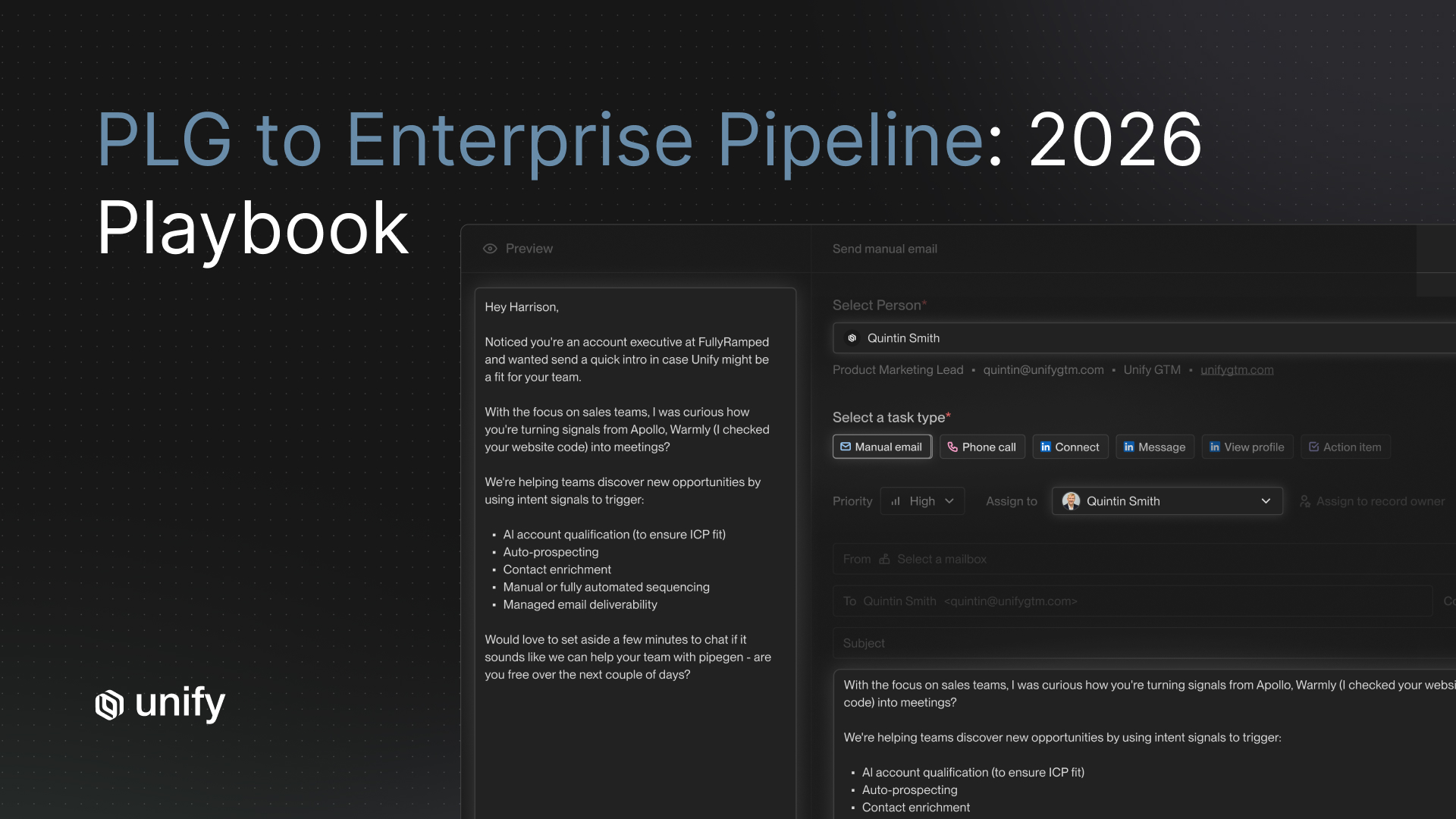

- Step 3 (automated draft). Plays build bespoke orchestrated workflows for specific segments such as high-growth HVAC contractors. Sequences deploy personalized cadences grounded in the agent research, with retargeting campaigns for prior website visitors.

- Step 4 (automated send). Managed Email Deliverability auto-creates domains and inboxes and warms them, so cold sends land in inboxes rather than spam.

- Step 5 (human reply). Reps spend their reclaimed time on reply triage, objection handling, and discovery calls.

- Outcome. 20+ hours saved per rep per week. 8,700 leads prospected in 3 months. 8,000 agent runs executed in Unify Plays. Stefano Jacobson, Growth Strategist at Affiniti, summarized it: "Unify's outbound feels 100 percent authentic to our team's core messaging. It's just empowering us to reach more people much faster."

Decision framework: when each input matters most

Use the five if/then bullets below to decide which of the five authenticity inputs to lead with for your specific motion.

- If you run a PLG motion, then lead with product usage (input #2). Free-user paywall hits and feature-adoption signals beat any other input. Mirror the Perplexity PQL Play structure.

- If you sell into roles with high turnover (CRO, VP Sales, Head of Growth), then lead with role changes (input #3). New-hire signals carry intrinsic urgency. Mirror the Anrok New-Hires + Champion Tracking play set.

- If your highest-traffic pages are pricing or docs, then lead with web behavior (input #4). Pricing-page visits from ICP-fit companies are among the strongest buying signals in B2B SaaS. Mirror the Spellbook website-intent + sequences pattern.

- If you serve a wide TAM with diverse personas (e.g., trades, vertical SaaS, mid-market), then lead with peer-customer language (input #5). The right vocabulary by segment is the differentiator. Mirror the Affiniti bespoke-Play-per-segment pattern.

- If your ICP is still being validated, then lead with firmographics (input #1) and add inputs 2 to 5 as data accrues. Do not skip to advanced inputs without baseline targeting.
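
The five if/then rules can be expressed as one function. This is a sketch under stated assumptions: the `Motion` fields are hypothetical flags, and the order in which the rules are checked (unvalidated ICP first, then the order of the bullets) is a choice made for this example, since the bullets do not say what to do when more than one applies.

```python
from dataclasses import dataclass


@dataclass
class Motion:
    # Hypothetical flags describing a go-to-market motion.
    icp_validated: bool = True
    plg: bool = False
    high_turnover_roles: bool = False
    top_pages_pricing_or_docs: bool = False
    wide_tam_diverse_personas: bool = False


def lead_input(m: Motion) -> str:
    """Pick the authenticity input to lead with."""
    if not m.icp_validated:
        return "firmographics"  # input #1: baseline targeting first
    if m.plg:
        return "product usage"  # input #2
    if m.high_turnover_roles:
        return "role changes"  # input #3
    if m.top_pages_pricing_or_docs:
        return "web behavior"  # input #4
    if m.wide_tam_diverse_personas:
        return "peer-customer language"  # input #5
    return "firmographics"  # no rule matched: fall back to the baseline
```

For example, `lead_input(Motion(plg=True))` returns `"product usage"`, while `lead_input(Motion(icp_validated=False, plg=True))` returns `"firmographics"`.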

Stop rules and red flags

Don't do these three things

- Don't automate the reply step. Humans win on objection handling, tone, and timing. Auto-replies destroy trust in two exchanges. Per the Unify Task Management and Unified Inbox page, replies should classify automatically (positive, referral, objection, unsubscribe) and route to a human for action.

- Don't generate content from CRM fields alone. Without signal context (web behavior, product usage, recent news), AI personalization produces sophisticated-looking mail merge. The Spellbook 70 to 80 percent open rate is not generic mail merge; it is signal-grounded.

- Don't run AI personalization without a learning loop. Snippets and prompts need weekly review against reply data. If positive replies cluster around a phrasing pattern, codify it. If negative replies cluster around another, kill that snippet template. A static AI personalization layer decays fast.
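
The weekly learning loop can be sketched as a small review function. The input format (pairs of snippet template ID and reply label), the `min_replies` cutoff, and the 60 percent `threshold` are assumptions made for illustration; tune them against your own reply volume.

```python
from collections import Counter


def weekly_review(replies, min_replies=5, threshold=0.6):
    """Decide what to do with each snippet template.

    `replies` is an iterable of (template_id, label) pairs, where label
    is 'positive' or 'negative'. Returns {template_id: decision}.
    """
    positive, total = Counter(), Counter()
    for template_id, label in replies:
        total[template_id] += 1
        if label == "positive":
            positive[template_id] += 1

    decisions = {}
    for template_id, n in total.items():
        if n < min_replies:
            decisions[template_id] = "keep testing"  # too few replies to judge
            continue
        share = positive[template_id] / n
        if share >= threshold:
            decisions[template_id] = "codify"
        elif share <= 1 - threshold:
            decisions[template_id] = "kill"
        else:
            decisions[template_id] = "keep testing"
    return decisions
```

The minimum-reply cutoff keeps a single angry or enthusiastic reply from deciding a template's fate.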

Variants by role and motion

For Growth / Marketing operators

- Lead with audience design and message-market fit. Use Smart Snippets to test 3 to 5 hook variants per segment.

- Track open rate, reply rate, and downstream pipeline per snippet variant. Kill the bottom two each month.

For SDRs / BDRs

- Spend reclaimed time on reply triage and discovery calls. Per the Spellbook case study, 2 hours per rep per day were freed.

- Audit one AI-drafted message per day. If the agent missed an obvious signal, surface it to the operator for prompt updates.

For RevOps

- Wire the unified inbox so reply classifications feed CRM stage transitions. Per the Task Management page, classifications include positive, referral, objection, and unsubscribe.

- Maintain exclusion lists at the field level; never let agents enroll a contact already in another sequence.
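
A minimal sketch of both RevOps rules follows. The four classification labels come from the Task Management page as cited above; the CRM stage names, the contact field names (`do_not_contact`, `active_sequence_id`), and both functions are hypothetical and would need to match your own CRM schema. The stage update is bookkeeping only; a human still writes the response.

```python
# Hypothetical mapping from reply classification to CRM stage.
STAGE_BY_CLASSIFICATION = {
    "positive": "Meeting Requested",
    "referral": "Referred",
    "objection": "Needs Follow-up",
    "unsubscribe": "Do Not Contact",
}


def next_stage(classification: str) -> str:
    """Return the CRM stage for a classified reply."""
    if classification not in STAGE_BY_CLASSIFICATION:
        raise ValueError(f"unknown classification: {classification}")
    return STAGE_BY_CLASSIFICATION[classification]


def can_enroll(contact: dict) -> bool:
    """Block contacts that opted out or are already in another sequence."""
    if contact.get("do_not_contact", False):
        return False
    return not contact.get("active_sequence_id")
```

For example, `can_enroll({"active_sequence_id": "seq-1"})` returns `False`, so an agent cannot double-enroll that contact.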

For PLG companies

- Wire product-usage signals first; they dominate the other four inputs in conversion lift. Mirror the Perplexity 5%/20% PQL/MQL pattern.

For sales-led companies

- Champion Tracking and closed-lost re-engagement deliver fast wins; pair with new-hire signals at target accounts.

Edge cases and disambiguation

- Personalization vs customization. Customization swaps a name and a company. Personalization references a real, time-bound signal. The five-input model is a personalization model.

- AI-generated vs AI-assisted. AI-generated means the agent ships the draft directly. AI-assisted means a human reviews before send. Per the Unify AI Personalization page, human-review touchpoints support either mode; choose AI-assisted for the first 30 days of any new Play.

- Open rate vs reply rate as the success metric. Open rate is a relevance proxy and reflects subject-line + deliverability + audience. Reply rate reflects message-content quality. Per the Spellbook case study, the 70 to 80 percent open rate is the relevance signal; reply rate is the messaging signal.

- Mail merge vs Smart Snippets. Mail merge fills a template with CRM fields. Smart Snippets generate the actual content from agent research grounded in the five inputs. Per the Infinity Signal launch blog, snippets are the production output of an AI agent run.

- Generic AI tool vs platform AI. A generic LLM tool can draft an email. A platform AI fires from a signal, runs grounded research, drafts a snippet, sends through warmed deliverability, and routes replies to a human. The integrated path is what produces the Spellbook and Affiniti outcomes.

Common mistakes to avoid

Top 5 mistakes

- Equating AI with auto-pilot. AI replaces research, not judgment. Keep humans in the reply step.

- Personalizing on CRM fields only. {{First name}} and {{Company}} are not personalization. Signal-grounded content is.

- Optimizing the wrong metric. Emails sent is a vanity metric. Track reply rate, qualified opps, and time saved.

- Skipping the learning loop. Review snippet performance weekly; retire underperformers monthly.

- Treating AI output as final. Audit one agent run per day. The quality compounds from this loop, not from the prompt.

Frequently asked questions

How do you balance automation and authenticity in personalized outreach?

Stop treating it as a tradeoff. Automation is not the enemy of authenticity. Shallow research is. Authenticity depends on five inputs the message can reference: firmographics, product usage, recent news or role changes, web behavior, and peer-customer language. Automate the research and drafting steps so AI Agents can ground each message in those five inputs; keep the reply step human. Per the Affiniti case study, this approach saved 20+ hours per rep per week while Stefano Jacobson reported: "Unify's outbound feels 100% authentic to our team's core messaging."

What makes a cold email feel authentic at scale?

Five inputs make a message feel personal: (1) firmographics: who they are; (2) product usage: what they've done in your product; (3) recent news, role changes, or hiring: what's happening to them right now; (4) web behavior: what they're researching; (5) peer-customer language: how their cohort actually talks. Per the Spellbook case study, sequences grounded in these inputs hit 70 to 80 percent open rates, compared to 19 to 25 percent on the same team's previous HubSpot baseline. Email opens are a proxy for relevance; the gap between those numbers is what authenticity at scale looks like.

What should you never automate in outbound?

Never automate reply handling. Never automate objection handling, pricing negotiation, or anything past the first response. Humans win at judgment moments because tone, timing, and meaning compound. Automate the four steps before reply: research, audience qualification, draft, send. Per the Unify AI Personalization product page, AI Agents handle lead research and message generation, with explicit human-review touchpoints so operators can audit research and preview snippets before send.

Does AI personalization actually lift reply rates?

Yes, when grounded in signals rather than CRM fields. Per the Spellbook case study, switching from HubSpot to Unify-powered sequences moved open rates from 19 to 25 percent to 70 to 80 percent. Per the Perplexity case study, AI-personalized PQL Plays hit a 5 percent reply rate and MQL Plays hit up to 20 percent, contributing to $1.7M pipeline in 3 months with no BDR team. Per the Unify next-gen AI Agents announcement, agents run at 0.1 credits each, making always-on research and personalization economically viable for thousands of accounts.

How much time can AI agents actually save per rep?

Per the Affiniti case study, AI Agents saved 20+ hours per rep per week while running 8,000 agent executions across 8,700 leads prospected in 3 months. Per the Spellbook case study, sales reps reclaimed 2 hours per day each on manual prospecting work. Per the Quo case study, 60 hours per month per team and 25 hours per rep per month were freed by automating prospecting workflows. Plan for 25+ hours per rep per month freed within 60 days of rollout.
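
The three case-study figures use different units. A rough conversion to hours per rep per month shows that the 25-hour planning number is the most conservative of the three. The sketch assumes 4 working weeks and 20 working days per month; those two constants are assumptions of this example, not figures from the case studies.

```python
WEEKS_PER_MONTH = 4      # assumption for this sketch
WORKDAYS_PER_MONTH = 20  # assumption for this sketch

# Lower bounds from the cited case studies, in hours per rep per month.
hours_per_rep_per_month = {
    "Affiniti": 20 * WEEKS_PER_MONTH,      # 20+ hours per rep per week
    "Spellbook": 2 * WORKDAYS_PER_MONTH,   # 2 hours per rep per day
    "Quo": 25,                             # 25 hours per rep per month
}

# The smallest figure is the safest one to plan around.
planning_floor = min(hours_per_rep_per_month.values())
print(planning_floor)  # prints 25
```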

Glossary

- Smart Snippets. Unify's dynamically generated message components (subject lines, hooks, value statements) tailored per recipient by AI Agents using research context. Source: Unify AI Personalization product page.

- AI Agent. An automated research and qualification process that scrapes the web, news, and product signals to gather context per prospect, then generates a draft. Runs at 0.1 credits each per the next-gen AI Agents announcement.

- Signal grounding. The practice of generating message content from real-time intent signals rather than static CRM fields. Signal-grounded content references specific events; CRM-grounded content references only attributes.

- Five-input authenticity model. The ranked set of inputs that make outbound feel personal at scale: firmographics, product usage, recent news / role changes, web behavior, peer-customer language.

- Human-review touchpoint. A configurable checkpoint where operators can audit research, edit drafts, or pause Plays before send. Source: Unify AI Personalization product page.

- Unified inbox. A single dashboard where all replies route for human triage, with AI-powered classification (positive, referral, objection, unsubscribe). Source: Unify Task Management page.

- PQL Play. A Play triggered by product-qualified-lead signals (paywall hit, usage threshold). Per the Perplexity case study, PQL Plays reached a 5% reply rate.

- MQL Play. A Play triggered by marketing-qualified-lead signals (content download, webinar attendance). Per the Perplexity case study, MQL Plays reached up to 20% reply rate.

- Infinity Signal. A custom AI signal that runs against a target account list and triggers Plays when matching activity is detected. Source: Infinity Signal launch blog.

- Reply classification. AI-driven categorization of inbound replies (positive, referral, objection, unsubscribe) used to route to the right human-handled workflow.

Sources and references

- Unify, AI Personalization product page. Source for Smart Snippets, AI Agent research, human-review touchpoints.

- Unify, Affiniti case study. Source for 20+ hrs saved per rep per week, 8,700 leads in 3 months, 8,000 agent runs, and the "100% authentic" Stefano Jacobson quote.

- Unify, Spellbook case study. Source for 70 to 80% open rates vs. 19 to 25% HubSpot baseline, $2.59M pipeline, $250K revenue in 7 months, 2 hrs/rep/day saved.

- Unify, Perplexity case study. Source for 5% PQL reply rate, up to 20% MQL reply rate, $1.7M pipeline in 3 months with no BDR.

- Unify, Anrok case study. Source for $300K+ pipeline in 3 months, New Hires + Lookalikes + AI Agent Plays.

- Unify, Campfire case study. Source for "95% of leads perfect fit or will be," 2x outbound pipeline growth in 5 months.

- Unify, Navattic case study. Source for 67% open rate, $100K+ pipeline in first 10 days.

- Unify, Quo case study. Source for 60 hrs/mo per team and 25 hrs/rep/mo saved.

- Unify, Introducing Unify's Next Generation of AI Agents (December 18, 2025). Source for 0.1 credits per agent run, 10x improvement.

- Unify, Introducing Unify's Infinity Signal. Source for Smart Snippets pattern and custom AI signal Plays.

- Unify, AI Agents product page. Source for research transparency and agent capability.

- Unify, Signals overview. Source for 25+ native intent signals.

- Unify, Website Traffic Intent product page. Source for 8+ behavioral signals and 5-vendor waterfall identification.

- Unify, B2B Buyer Data product page. Source for 100+ data points across 30+ sources, 90%+ contact / 95%+ company match.

- Unify, Task Management and Unified Inbox product page. Source for AI reply classification (positive, referral, objection, unsubscribe) routed to humans.

- Unify, Email Deliverability product page. Source for 21-day mailbox warming, 75% bounce prevention.

- Unify, Plays product page.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
