TL;DR. Measure marketing-run outbound pipeline with the Play as the attribution unit, not the channel. Stamp a Play-source field on every opportunity at creation and report on four metrics, ranked by board-room weight: pipeline by Play (headline), opps created by Play (volume normalizer), win-rate by Play (quality normalizer), and time-to-close by signal type (efficiency normalizer). Per the Justworks case study, this approach delivered 6.8X ROI in 5 months. Per the Anrok case study, shared Plays produced $300K+ pipeline in 3 months. Per the Affiniti case study, 8,700 leads were attributed to AI Agent Plays in 3 months.

Methodology and limitations

How the Justworks 6.8X ROI is measured. Per the Justworks case study, 6.8X ROI is the ratio of attributable revenue contribution to Unify spend (subscription plus credit consumption) over the first 5 months of deployment. The denominator is platform spend, not blended marketing-program spend. The numerator is pipeline contribution from website-intent Plays (UTM-filtered against paid-traffic visitors), 6sense intent integration, G2-triggered competitor Plays, and AI-personalized sequences. This is a marketing-run program: the Plays were owned by Senior Manager of Growth Marketing Peter Nguyen, with sales taking the qualified reply hand-off.
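The ratio described above can be written as a small function. This is a minimal sketch, not the case study's own calculation: the function name `platform_roi` and the dollar figures are hypothetical and chosen only to show which costs belong in the denominator.

```python
def platform_roi(attributable_revenue: float, subscription: float, credits: float) -> float:
    """Attributable revenue divided by platform spend (subscription + credits).

    Blended marketing-program spend is deliberately left out of the denominator,
    matching the definition in the text.
    """
    spend = subscription + credits
    if spend <= 0:
        raise ValueError("platform spend must be positive")
    return attributable_revenue / spend


# Hypothetical figures for illustration only; not taken from any case study.
roi = platform_roi(attributable_revenue=120_000, subscription=20_000, credits=10_000)
print(f"{roi:.1f}X")  # 120,000 / 30,000 -> prints "4.0X"
```

Keeping the denominator explicit in code makes it harder to quietly swap in a different cost base from one quarter to the next.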

Customer outcomes are named, not aggregated. Every quantitative claim in this article is attributed to a specific named customer case study, blog post, or Unify product page. There is no aggregated "Unify marketing-run benchmark" dataset. Sourced and influenced are different measures: sourced reflects first-touch attribution, influenced reflects multi-touch. The Guru $3.17M figure is influenced; the Anrok $300K+ figure is direct contribution. Label each report explicitly when presenting to a board or executive committee.

How do you measure pipeline contribution from a marketing-run automated outbound program?

Use the Play as the attribution unit, not the channel. Stamp a Play-source field on every opportunity at creation. Report on four metrics, ranked by board-room weight. The Play-source field is the join key between marketing's program data and sales' opportunity data — without it, attribution decays inside three months and the marketing-run program loses board defensibility.

The reframe matters because the standard alternatives misfire. Channel-level attribution ("outbound email contributed X to pipeline") is too broad to act on. UTM-only attribution misses non-link interactions (cold-email replies, signal-triggered enrollments). Multi-touch attribution spreads credit across touchpoints (equally, in linear models) and obscures which marketing action actually mattered. The Play is the right unit because a Play already binds a signal, an audience, a sequence, and an owner, which are exactly the variables a demand-gen leader needs to defend the program.

The ranked 4-metric measurement stack

Order matters. Each metric serves a different stakeholder; reporting them in this order pre-empts the most common board-room objections.

1. Pipeline by Play (headline number, board-room weight: highest)

Dollars of pipeline created where the Play was the source. This is the headline the CFO will ask for. Calculate as the sum of opportunity-amount fields on opportunities stamped with each Play's source ID. Per the Unify Analytics product page, the platform supports per-Play pipeline attribution with drill-down on opportunities created. Per the Anrok case study, $300K+ pipeline was attributed in the first 3 months across signal-segmented shared Plays.
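The calculation above is a grouped sum. The sketch below assumes a hypothetical CRM export where each opportunity is a dict with `play_source` and `amount` keys; those field names and the sample rows are illustrative, not Unify's or any CRM's actual schema.

```python
from collections import defaultdict

# Hypothetical opportunity export; field names and values are assumptions.
opportunities = [
    {"id": "opp-1", "play_source": "website-intent", "amount": 40_000},
    {"id": "opp-2", "play_source": "website-intent", "amount": 25_000},
    {"id": "opp-3", "play_source": "new-hire", "amount": 60_000},
    {"id": "opp-4", "play_source": None, "amount": 15_000},  # never stamped
]


def pipeline_by_play(opps):
    """Sum opportunity amounts per Play-source ID."""
    totals = defaultdict(float)
    for opp in opps:
        # Keep unstamped opportunities visible instead of silently dropping them.
        key = opp["play_source"] or "(unattributed)"
        totals[key] += opp["amount"]
    return dict(totals)


print(pipeline_by_play(opportunities))
```

Reporting the unattributed bucket alongside the Plays shows how much pipeline is missing a stamp, which is an early signal of field decay.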

2. Opportunities created by Play (volume normalizer)

Count of opportunities stamped with each Play's source ID. A Play producing $300K from 30 opps is operationally different from a Play producing $300K from 3 opps. Volume normalization separates "lucky big deal" from "repeatable engine." Per the Affiniti case study, 8,700 leads were attributed to AI Agent Plays over 3 months with 8,000 agent runs executed — high-volume attribution that supports scaled programs.
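One way to make the "lucky big deal" check concrete is to report, for each Play, the opportunity count next to the share of pipeline carried by its single largest deal. This is a sketch under assumptions: the `play_source` and `amount` field names and the two sample Plays are hypothetical, built to mirror the $300K-from-3 versus $300K-from-30 contrast above.

```python
def volume_by_play(opps):
    """Return {play: (opp_count, total_amount, largest_deal_share)}."""
    grouped = {}
    for opp in opps:
        grouped.setdefault(opp["play_source"], []).append(opp["amount"])

    result = {}
    for play, amounts in grouped.items():
        total = sum(amounts)
        # Share of the Play's pipeline that sits in its biggest opportunity.
        largest_share = max(amounts) / total if total else 0.0
        result[play] = (len(amounts), total, largest_share)
    return result


# Hypothetical data: two Plays with identical pipeline but different volume.
opportunities = (
    [{"play_source": "play-a", "amount": 100_000}] * 3
    + [{"play_source": "play-b", "amount": 10_000}] * 30
)

for play, (count, total, share) in volume_by_play(opportunities).items():
    print(f"{play}: {count} opps, ${total:,} pipeline, largest deal = {share:.0%}")
```

A high largest-deal share on a low count suggests the headline number depends on one deal rather than a repeatable engine.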

3. Win-rate by Play (quality normalizer)

Percentage of Play-sourced opportunities that reach closed-won. The same Play can produce high pipeline and a low close rate (it sourced poorly qualified accounts) or low pipeline and a high close rate (small but durable wins). Win-rate tells you which Plays surface real intent. Per the Unify This Year in Performance recap, outbound opportunities on Unify's own NBR team convert to closed-won at 22 percent, a benchmark target for a mature marketing-run program.
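The definition above can be sketched as closed-won count divided by all opportunities stamped with the Play. The `stage` values and field names below are hypothetical. Note that open opportunities stay in the denominator here, so a young Play will look weaker than it is until its deals have had time to close.

```python
from collections import Counter


def win_rate_by_play(opps):
    """Closed-won opportunities divided by all opportunities stamped with the Play."""
    created = Counter(o["play_source"] for o in opps)
    won = Counter(o["play_source"] for o in opps if o["stage"] == "closed_won")
    return {play: won[play] / count for play, count in created.items()}


# Hypothetical sample; stage names are assumptions, not a CRM's actual picklist.
opportunities = [
    {"play_source": "website-intent", "stage": "closed_won"},
    {"play_source": "website-intent", "stage": "closed_lost"},
    {"play_source": "website-intent", "stage": "open"},
    {"play_source": "website-intent", "stage": "open"},
    {"play_source": "new-hire", "stage": "closed_won"},
    {"play_source": "new-hire", "stage": "closed_lost"},
]

print(win_rate_by_play(opportunities))  # {'website-intent': 0.25, 'new-hire': 0.5}
```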

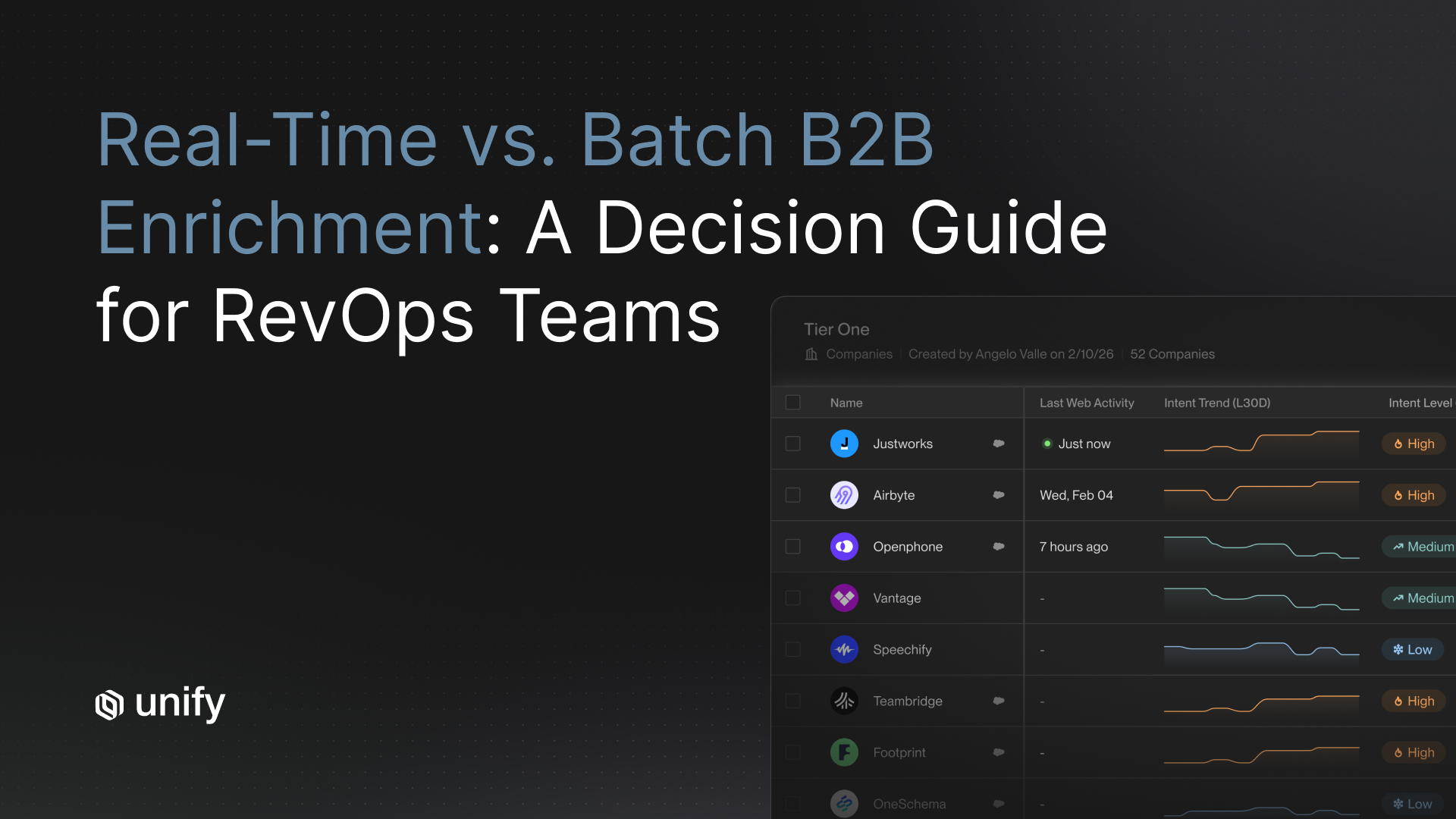

4. Time-to-close by signal type (efficiency normalizer)

Median days from opportunity creation to closed-won, segmented by the triggering signal. A new-hire signal Play and a website-intent Play have structurally different sales cycles. Reporting them blended hides the lever that drove the difference. Per the Anrok case study, Plays included New Hires, Champion Tracking, Website Visitors, Lookalikes, and AI Agent Plays, each with a distinct cycle profile that should be reported separately.
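A minimal sketch of the segmented median, assuming each opportunity record carries a `signal_type`, a `created_on` date, and a `closed_won_on` date that is `None` until the deal is won. All field names and dates are hypothetical.

```python
from datetime import date
from statistics import median


def median_days_to_close(opps):
    """Median days from creation to closed-won, grouped by triggering signal."""
    days_by_signal = {}
    for opp in opps:
        if opp["closed_won_on"] is None:
            continue  # open or lost deals have no time-to-close
        elapsed = (opp["closed_won_on"] - opp["created_on"]).days
        days_by_signal.setdefault(opp["signal_type"], []).append(elapsed)
    return {signal: median(days) for signal, days in days_by_signal.items()}


# Hypothetical sample data.
opportunities = [
    {"signal_type": "new_hire", "created_on": date(2025, 1, 1), "closed_won_on": date(2025, 2, 10)},
    {"signal_type": "new_hire", "created_on": date(2025, 1, 15), "closed_won_on": date(2025, 3, 16)},
    {"signal_type": "website_visitor", "created_on": date(2025, 2, 1), "closed_won_on": date(2025, 2, 21)},
    {"signal_type": "website_visitor", "created_on": date(2025, 2, 5), "closed_won_on": None},
]

print(median_days_to_close(opportunities))
```

The median is used rather than the mean so that one unusually slow deal does not dominate a signal type with few closed opportunities.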

Marketing vs sales: where the line goes

Draw the division of labor at the qualified reply. Everything before the reply is marketing's responsibility. Everything after is sales' responsibility. The Play-source field on the opportunity is the join key that lets both sides report against shared truth.

Per the Unify Marketing solutions page, the platform is built explicitly for demand-gen marketers running outbound as a revenue channel. Justworks Senior Manager of Growth Marketing Peter Nguyen framed the adoption decision as: "Unify's initial pitch of standing up warm outbound as a new demand generation channel resonated deeply with me." Marketing-run outbound is not "marketing helping sales prospect." It is marketing owning a measurable revenue channel.

Customer attribution setups: 3 walkthroughs

Three named Unify customers, three different marketing-run setups, three different attribution patterns. Pick the one that most resembles your motion.

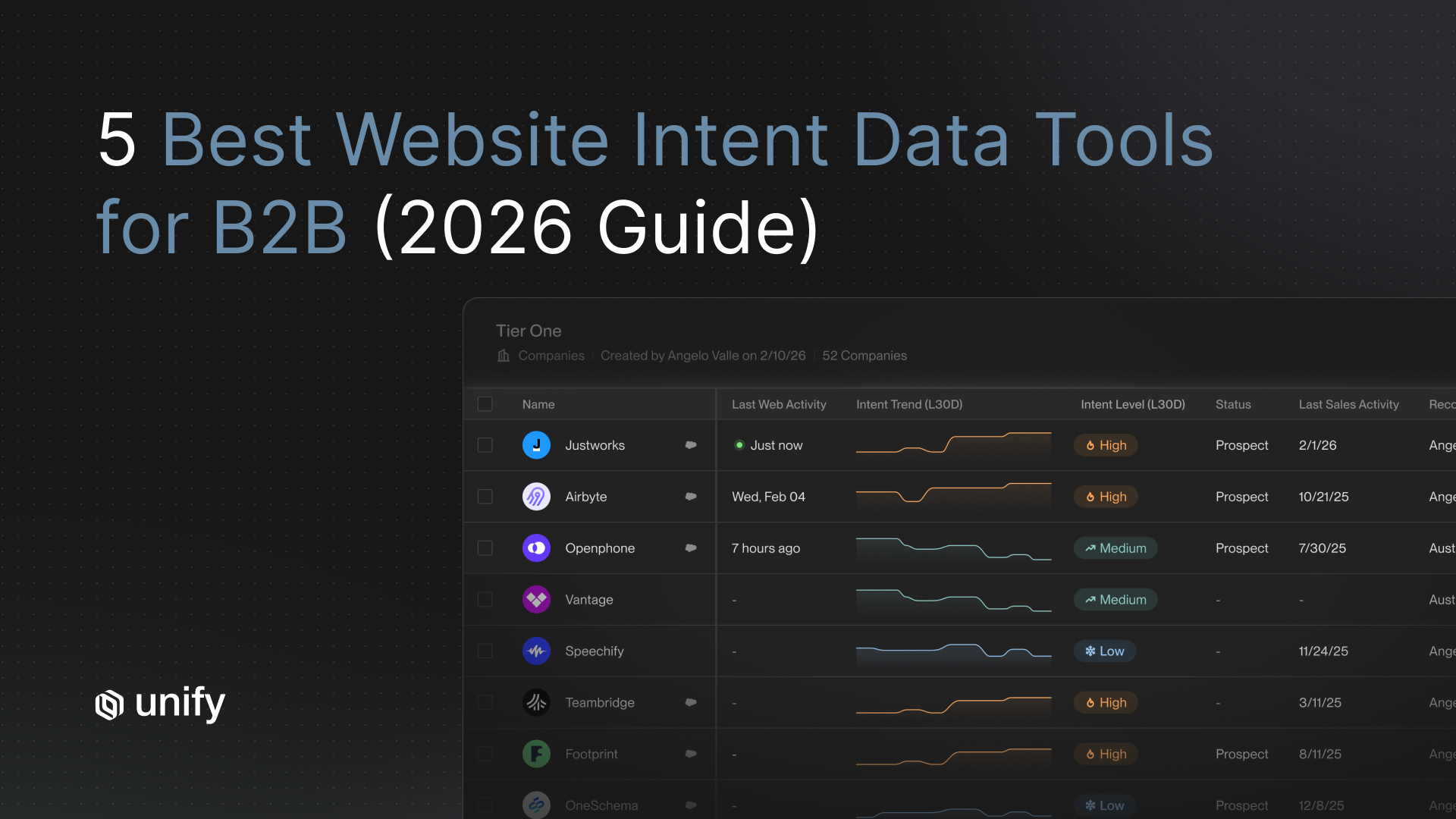

Setup 1: Justworks — UTM-filter intent Plays + 6sense + G2

- Owner. Peter Nguyen, Senior Manager of Growth Marketing.

- Play design. Identified high-intent website visitors (pricing and demo page) via the Unify tag; filtered by UTM parameters to isolate paid-traffic cohorts; enriched contacts via Salesforce integration; enrolled into AI-personalized sequences; ran competitor Plays triggered by G2 intent detection.

- Attribution mechanic. Each Play stamps a distinct Play-source field on the opportunity at creation via 15-minute bidirectional Salesforce sync per the Salesforce integration page.

- Outcome per the case study. 6.8X ROI in the first 5 months; over 10 percent of bounces prevented by Managed Deliverability; 3 Plays launched within 3 days of onboarding; first meeting booked within 1 week.

- Denominator. Unify spend (subscription plus credit consumption).

- Time window. 5 months.

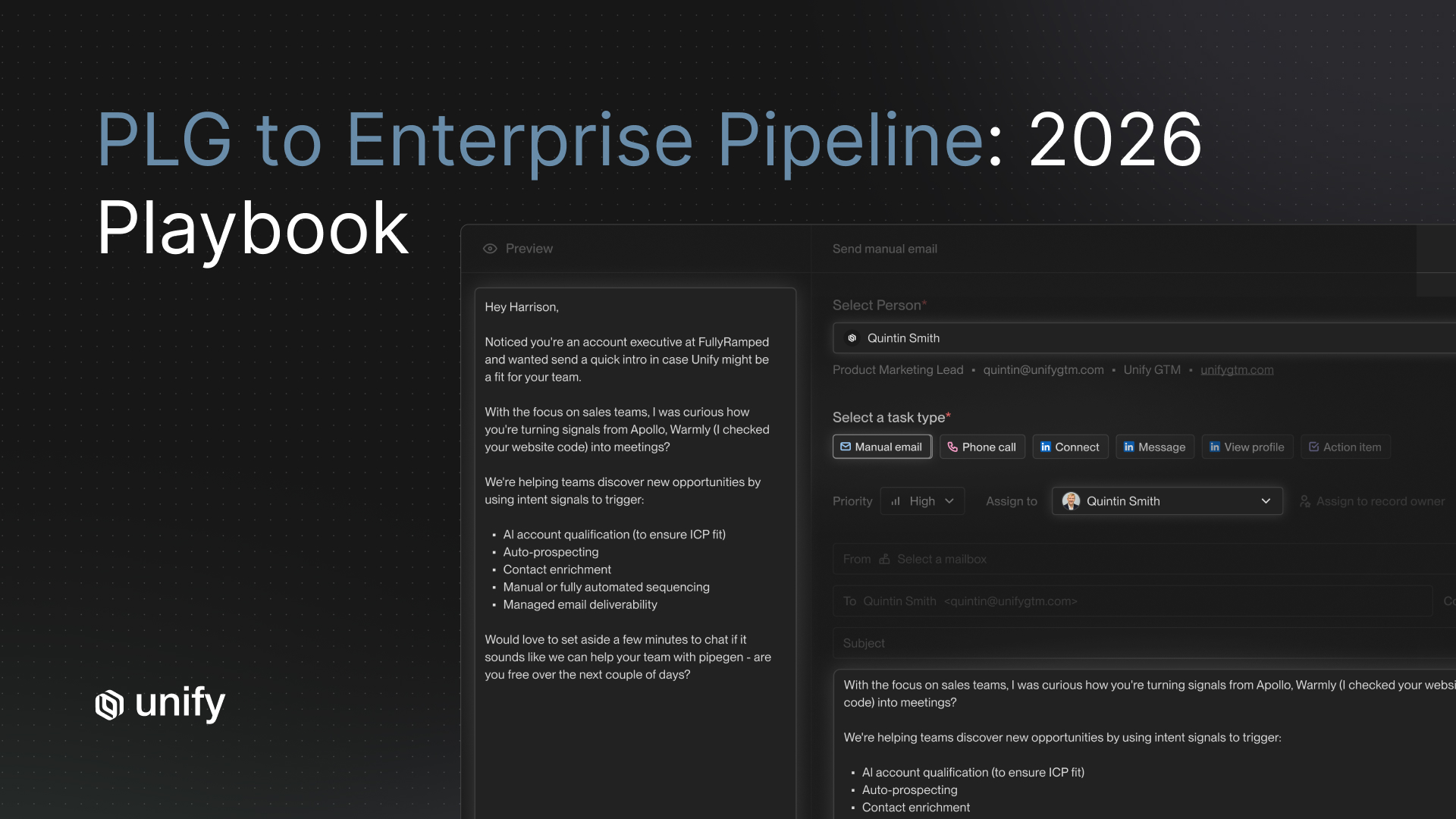

Setup 2: Anrok — signal-segmented shared Plays

- Owner. Kathleen Kong, Growth Marketing Lead.

- Play design. Plays covered four signal types: New Hires, Champion Tracking, Website Visitors, Lookalikes, plus AI Agent Plays. Sequences and AI Personalization run across both marketing and SDR teams (the "shared Plays" pattern), with one unified system replacing 3 disparate tools.

- Attribution mechanic. Per-Play source stamp on opportunity creation; segment-level reporting by signal type so each Play's win-rate and time-to-close report independently.

- Outcome per the case study. $300K+ pipeline in the first 3 months; 4x faster SDR workflows compared to ZoomInfo and Outreach; 20% faster campaign build vs HubSpot; consolidation from 3 tools into 1.

- Denominator. Direct pipeline attribution (sourced).

- Time window. 3 months.

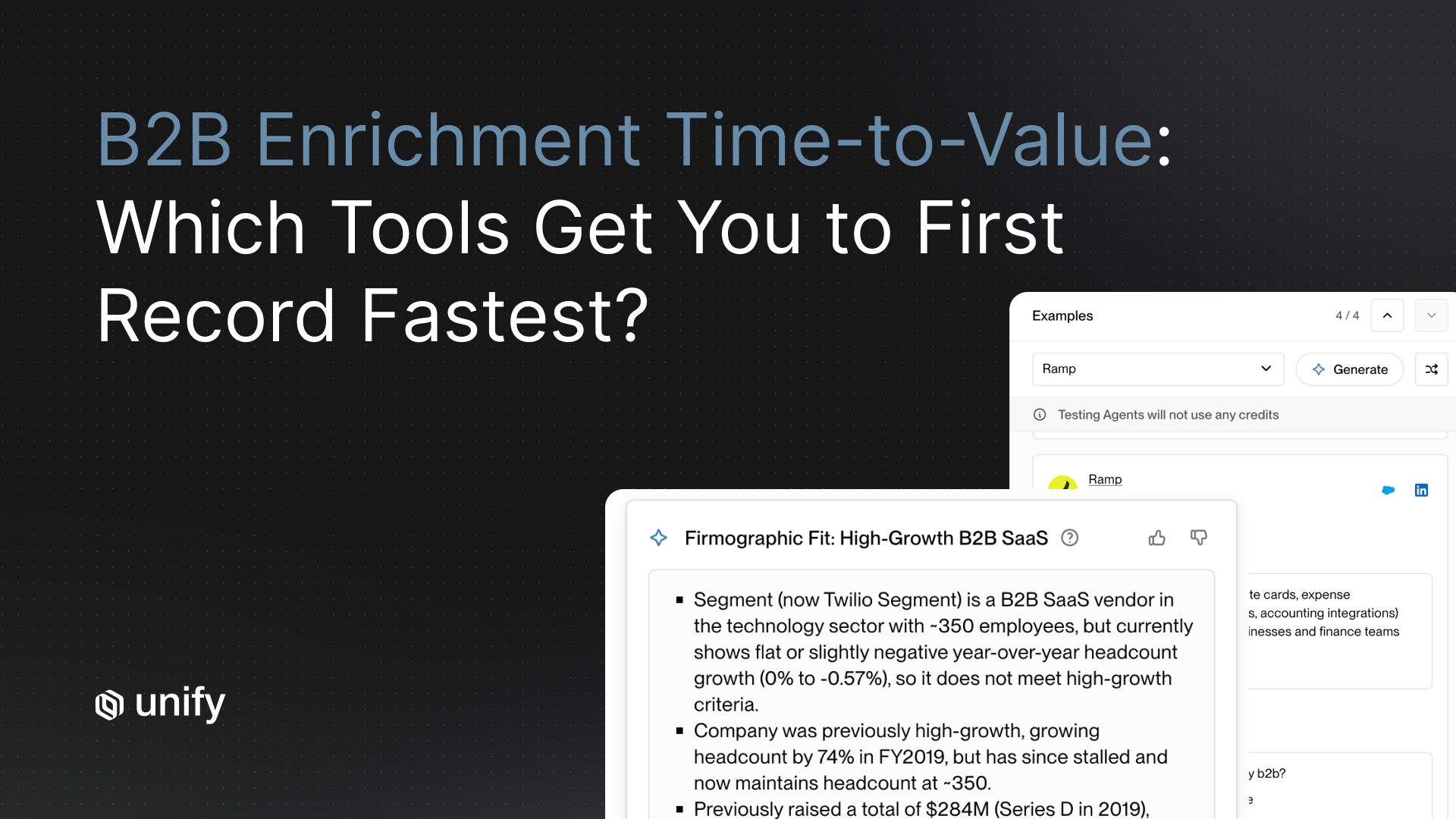

Setup 3: Affiniti — AI Agent Plays at high-volume attribution

- Owner. Stefano Jacobson, Growth Strategist.

- Play design. AI Agents scrape company websites for team size, inventory changes, and ESG/hiring intel; Plays orchestrate bespoke workflows targeting specific segments (e.g., high-growth HVAC contractors). 25+ native signals including firmographics, website visits, and buyer personas.

- Attribution mechanic. Lead-level attribution at high volume; each lead carries the Play source ID from agent enrollment through CRM update.

- Outcome per the case study. 8,700 leads prospected in 3 months; 8,000 agent runs executed in Unify Plays; 20+ hours saved per rep per week. Stefano's framing: "Unify's outbound feels 100 percent authentic to our team's core messaging. It's just empowering us to reach more people much faster."

- Denominator. Lead-level attribution (volume-anchored), suitable for top-of-funnel coverage analysis.

- Time window. 3 months.

Vendor-neutral evaluation criteria for marketing-run attribution

Score every shortlisted platform against the criteria below. Each uses the same template: definition, why it matters, how to test, pass-fail threshold, red flag.

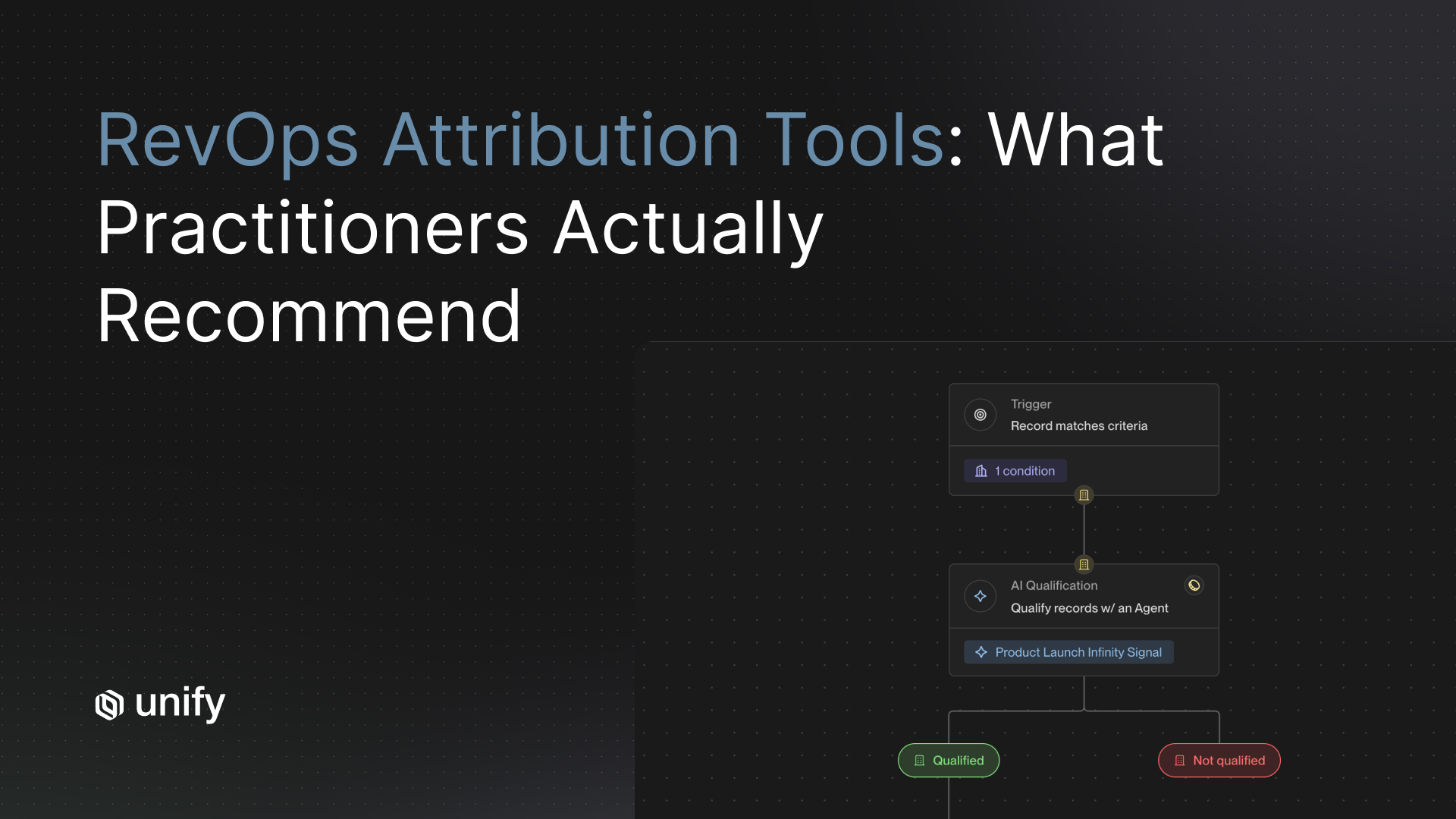

1. Per-Play source field on opportunity creation

- Definition. The platform writes a distinct Play-source identifier to the CRM opportunity record at creation, auto-stamped from the enrollment record.

- Why it matters. Without it, marketing cannot prove pipeline contribution.

- How to test. Trigger an enrollment, advance to opportunity, and check the source field on the CRM record.

- Pass-fail. Field populates automatically; visible in CRM reports; read-only after creation.

- Red flag. Source field requires manual entry or UTM-only attribution.

2. Sourced vs influenced attribution support

- Definition. The platform supports both first-touch (sourced) and multi-touch (influenced) attribution as named, separately reportable metrics.

- Why it matters. Different stakeholders ask for different measures.

- How to test. Pull two reports on the same opportunity set: sourced and influenced.

- Pass-fail. Both reports run natively, with clear labels.

- Red flag. Only one definition supported; the other requires custom CRM reports.
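The two reports named in this criterion can be sketched from the same touch log. The example assumes each opportunity carries a list of Play touches with an ISO-format date; the field names (`touches`, `play`, `at`) and the data are hypothetical. Sourced credits only the earliest touch; influenced credits every Play that touched the opportunity at least once.

```python
def sourced_and_influenced(opps):
    """Return (sourced_totals, influenced_totals) keyed by Play."""
    sourced, influenced = {}, {}
    for opp in opps:
        # ISO dates (YYYY-MM-DD) sort correctly as plain strings.
        touches = sorted(opp["touches"], key=lambda t: t["at"])
        if not touches:
            continue
        first_play = touches[0]["play"]
        sourced[first_play] = sourced.get(first_play, 0) + opp["amount"]
        # Each Play is credited once per opportunity, however many touches it had.
        for play in {t["play"] for t in touches}:
            influenced[play] = influenced.get(play, 0) + opp["amount"]
    return sourced, influenced


# Hypothetical sample data.
opportunities = [
    {"amount": 50_000, "touches": [
        {"play": "new-hire", "at": "2025-03-01"},
        {"play": "website-intent", "at": "2025-03-10"},
    ]},
    {"amount": 30_000, "touches": [
        {"play": "website-intent", "at": "2025-04-02"},
    ]},
]

sourced, influenced = sourced_and_influenced(opportunities)
print("sourced:   ", sourced)
print("influenced:", influenced)
```

Influenced totals summed across Plays can exceed total pipeline because one opportunity is counted under every Play that touched it, which is one reason the two reports need distinct labels.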

3. Field-level CRM sync with custom fields

- Definition. Bidirectional sync supports custom fields including the Play-source field, with under 60-minute latency.

- Why it matters. The Play-source field needs to write to CRM on enrollment and stay durable through opportunity progression.

- How to test. Configure a custom field write and verify it persists.

- Pass-fail. Bidirectional 15-minute sync, custom field support, field-level exclusion controls.

- Red flag. Custom fields require engineering or a paid integration tier.

4. Held-out audience reporting

- Definition. Control cohort treated as a first-class object in reporting alongside treated cohorts.

- Why it matters. Marketing-run programs are challenged on incrementality; without a control, "would they have closed anyway" is unanswerable.

- How to test. Build a Play with a 10 percent exclusion segment and verify it surfaces in reporting.

- Pass-fail. Control cohort reported alongside treated.

- Red flag. Exclusion segments tracked only in spreadsheets.
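A held-out test has two parts: assigning accounts to the control cohort, and comparing conversion between cohorts. The sketch below shows one possible approach, not how any platform implements exclusion segments. It assigns accounts by hashing a hypothetical account ID so the same account always lands in the same cohort, then computes the difference in opportunity rate. The function names and counts are illustrative.

```python
import hashlib


def in_holdout(account_id: str, holdout_pct: int = 10) -> bool:
    """Deterministically place roughly holdout_pct percent of accounts in the control cohort."""
    digest = hashlib.sha256(account_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < holdout_pct


def incremental_lift(treated_accounts, treated_opps, control_accounts, control_opps):
    """Return (treated_rate, control_rate, lift) as opportunity rates per account."""
    treated_rate = treated_opps / treated_accounts
    control_rate = control_opps / control_accounts
    return treated_rate, control_rate, treated_rate - control_rate


# Hypothetical counts for illustration only.
treated_rate, control_rate, lift = incremental_lift(
    treated_accounts=900, treated_opps=45, control_accounts=100, control_opps=2
)
print(f"treated {treated_rate:.1%}, control {control_rate:.1%}, lift {lift:.1%}")
```

Hash-based assignment avoids storing a separate exclusion list and keeps an account in the same cohort across re-runs. With a small control cohort, the lift estimate is noisy and should be read as directional.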

5. Multi-Play comparison views

- Definition. Dashboard supports the same metric set across multiple Plays for side-by-side comparison.

- Why it matters. Without it, marketing cannot allocate budget across Plays.

- How to test. Open 3 Plays in one dashboard view; compare pipeline, opps, win-rate, time-to-close.

- Pass-fail. Native multi-Play view with shared time window.

- Red flag. One Play per page; CSV export required for comparison.

How Unify covers these criteria

- Per-Play source field. Per the Unify Plays product page, Plays trigger workflows that auto-stamp the Play identifier through the Salesforce or HubSpot bidirectional sync. Per the Series A announcement, Plays powers nearly 50% of Unify's own new pipeline creation, measured per-Play.

- Sourced vs influenced support. Per the Reporting and Analytics product page, the platform supports per-Play pipeline attribution with drill-down on opportunities created. Per the Guru case study, $3.17M closed-won was reported as influenced over 12 months, with 109 net-new accounts closed in the same window — both views reported natively.

- Field-level CRM sync. Bidirectional 15-minute sync with custom field utilization and field-level exclusion per the Salesforce and HubSpot integration pages.

- Held-out audience reporting. Plays support exclusion segments as first-class audience filters; Plays UI surfaces enrolled and excluded counts side by side.

- Multi-Play comparison views. Per the Analytics page, out-of-the-box dashboards for growth team operations support Play-vs-Play comparison. Per the Guru case study, one Business Operations Analyst manages 96 active Plays and 81 sequences part-time using these views.

Worked example: a marketing-run program reporting cadence

This is the monthly board-deck flow that one Growth Marketing lead can produce in 2 hours per month, modeled on the Justworks deployment pattern.

- Week 1, Tuesday, 30 minutes. Pull pipeline-by-Play for the last 30 days. Identify the top 3 Plays by pipeline dollars and the bottom 3, starting with any Play that created zero opportunities.

- Week 1, Thursday, 30 minutes. Pull opportunities-created-by-Play and overlay with pipeline. Spot the volume mismatches ("big pipeline, few opps" or vice versa).

- Week 2, Tuesday, 30 minutes. Pull win-rate-by-Play on opportunities that opened 60+ days ago, so that enough of them have had time to close for the rate to be meaningful. Spot the Plays surfacing low-quality intent.

- Week 2, Friday, 30 minutes. Build the one-page board summary: pipeline by Play, opps by Play, win-rate by Play, time-to-close by signal. Annotate which Play to scale, which to retire, which to leave running. The Justworks case study reports 6.8X ROI within 5 months for the deployment this cadence is modeled on.
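The 60-day filter in the Week 2 win-rate pull can be sketched as a cohort cut on the opportunity creation date. The `created_on` field name, the `matured` helper, and the sample dates are hypothetical.

```python
from datetime import date, timedelta


def matured(opps, as_of, min_age_days=60):
    """Keep only opportunities created at least min_age_days before as_of."""
    cutoff = as_of - timedelta(days=min_age_days)
    return [opp for opp in opps if opp["created_on"] <= cutoff]


# Hypothetical sample data.
opportunities = [
    {"id": "opp-1", "created_on": date(2025, 4, 15)},
    {"id": "opp-2", "created_on": date(2025, 5, 1)},
    {"id": "opp-3", "created_on": date(2025, 6, 1)},  # too recent to judge
]

cohort = matured(opportunities, as_of=date(2025, 6, 30))
print([opp["id"] for opp in cohort])  # ['opp-1', 'opp-2']
```

Computing win-rate on this cohort only keeps recently opened deals from dragging down the rate of a Play that has simply not had time to close.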

Variants by team size and motion

SMB Growth Marketer (under 50 employees)

- One operator owns audience, Play, sequence, and reporting end-to-end. Cap at 3 active Plays until you have 60 days of stable data on each.

- Report sourced (first-touch) only at this stage. Multi-touch attribution requires data volumes most SMBs don't yet have.

Mid-market demand-gen lead (50 to 500 employees)

- Named owner for audience design plus RevOps owner for CRM logic plus marketing ops for reporting infrastructure.

- Report both sourced and influenced; label each clearly. Mirror the Anrok shared-Plays pattern across marketing and SDR.

Enterprise demand-gen team (500+ employees)

- Steering committee owns Play strategy quarterly; marketing ops owns weekly reporting; dedicated analyst owns Play-level dashboards.

- Per the Guru case study, one Business Operations Analyst managed 96 active Plays and 81 sequences part-time. That scale requires the Play-source field to be locked and audited weekly.

PLG companies

- Product-usage signals dominate attribution complexity; report PQL Plays separately from cold-outbound Plays. Per the Perplexity case study, PQL Plays saw a 5% reply rate and MQL Plays up to 20%, a gap wide enough that a blended number would hide how each Play performs.

Sales-led companies

- Marketing-run outbound primarily produces opportunities sales would not have found on their own. Track "incremental opportunities" (Play-sourced opps with no prior sales touch) as the headline number alongside pipeline-by-Play.
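A sketch of the "incremental opportunities" filter, under one stated assumption: "no prior sales touch" is read as "no sales touch dated before the Play enrollment". A sales touch after enrollment is treated as the normal hand-off. The field names (`play_enrolled_on`, `first_sales_touch_on`) are hypothetical.

```python
from datetime import date


def is_incremental(opp) -> bool:
    """True if the opportunity is Play-sourced and sales had not touched it first."""
    if not opp["play_source"]:
        return False
    touch = opp["first_sales_touch_on"]
    return touch is None or touch >= opp["play_enrolled_on"]


# Hypothetical sample data.
opportunities = [
    {"id": "opp-1", "play_source": "new-hire",
     "play_enrolled_on": date(2025, 3, 1), "first_sales_touch_on": None},
    {"id": "opp-2", "play_source": "new-hire",
     "play_enrolled_on": date(2025, 3, 1), "first_sales_touch_on": date(2025, 2, 10)},
    {"id": "opp-3", "play_source": "website-intent",
     "play_enrolled_on": date(2025, 3, 5), "first_sales_touch_on": date(2025, 3, 20)},
    {"id": "opp-4", "play_source": None,
     "play_enrolled_on": None, "first_sales_touch_on": date(2025, 1, 5)},
]

incremental = [opp["id"] for opp in opportunities if is_incremental(opp)]
print(incremental)  # ['opp-1', 'opp-3']
```

Whichever definition of "prior" a team picks, writing it down as a rule like this keeps the headline number reproducible.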

Edge cases and disambiguation

- Play-sourced vs Play-influenced. Sourced = first-touch attribution; Play was the originating event. Influenced = multi-touch; Play had at least one event during the buying cycle. Pick one as your headline and stay consistent.

- Shared Play vs marketing-only Play. A shared Play has audience overlap with SDR coverage; a marketing-only Play targets accounts SDR will not touch. The Anrok pattern is shared Plays with explicit signal segmentation; the cleanest attribution comes from marketing-only Plays with sales-handoff-on-reply.

- Opportunity vs lead-level attribution. Most marketing programs need opportunity-level attribution for board reporting; the Affiniti 8,700-leads pattern is volume-level top-of-funnel attribution suitable for coverage analysis, not for revenue attribution alone.

- Channel attribution vs Play attribution. Channel ("outbound email") is too broad to inform action. Play binds signal + audience + sequence + owner — the minimum useful granularity.

- Multi-touch attribution as primary lens. Multi-touch models spread credit across every touchpoint (equally, in linear models), which is mathematically clean but operationally useless for kill-or-scale decisions. Use multi-touch as a secondary validation lens; lead with sourced-by-Play.
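Whether a Play is shared or marketing-only can be checked before launch by intersecting its audience with SDR coverage. This is a minimal sketch using plain sets; the account domains and the `audience_overlap` helper are hypothetical.

```python
def audience_overlap(play_accounts, sdr_accounts):
    """Return (overlapping accounts, share of the Play audience that overlaps)."""
    play_set, sdr_set = set(play_accounts), set(sdr_accounts)
    overlap = play_set & sdr_set
    share = len(overlap) / len(play_set) if play_set else 0.0
    return overlap, share


# Hypothetical audiences.
play_audience = {"acme.com", "globex.com", "initech.com", "umbrella.com"}
sdr_coverage = {"globex.com", "umbrella.com", "hooli.com"}

overlap, share = audience_overlap(play_audience, sdr_coverage)
print(sorted(overlap), f"{share:.0%}")  # ['globex.com', 'umbrella.com'] 50%
```

A zero overlap means the Play is marketing-only. Any non-zero overlap means it is a shared Play and needs explicit segmentation rules for the overlapping accounts.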

Stop rules and red flags for marketing-run attribution

Four attribution-destroying mistakes

- Don't use multi-touch attribution as your only lens. Multi-touch averages credit across touchpoints, which obscures which Play actually moved the needle. Play-level sourced attribution is the cleaner primary lens; multi-touch is the secondary lens.

- Don't let SDRs strip the Play-source field at opportunity creation. Without governance the field decays inside 90 days. Lock the field behind a permission group that excludes SDRs and only includes RevOps. Audit weekly for blanks.

- Don't run marketing-run Plays with audiences overlapping SDR coverage without explicit segmentation. Cannibalization will destroy your attribution: when both surfaces hit the same account, neither can claim the deal. Use the Anrok pattern: shared Plays with signal segmentation that routes by signal type.

- Don't define "Play pipeline" without a documented denominator. Sourced vs influenced are different measures. Pick one as headline, document it in the program charter, and stay consistent quarter over quarter. Re-defining mid-quarter destroys board credibility.
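The weekly audit for blank or overwritten Play-source values can be sketched as a comparison between the CRM opportunity export and the enrollment record. Both data shapes are assumptions: `opps` is a list of opportunity dicts, and `enrollments` maps an opportunity ID to the Play ID stamped at enrollment.

```python
def audit_play_source(opps, enrollments):
    """Return (blank_ids, overwritten_ids) for opportunities that should carry a Play stamp."""
    blanks, overwritten = [], []
    for opp in opps:
        expected = enrollments.get(opp["id"])
        if expected is None:
            continue  # opportunity did not originate from a Play enrollment
        actual = opp.get("play_source")
        if not actual:
            blanks.append(opp["id"])
        elif actual != expected:
            overwritten.append(opp["id"])
    return blanks, overwritten


# Hypothetical sample data.
enrollments = {"opp-1": "new-hire", "opp-2": "website-intent", "opp-3": "new-hire"}
opportunities = [
    {"id": "opp-1", "play_source": "new-hire"},    # intact
    {"id": "opp-2", "play_source": ""},            # stripped
    {"id": "opp-3", "play_source": "sdr-manual"},  # overwritten
    {"id": "opp-4", "play_source": None},          # not Play-sourced, ignored
]

blanks, overwritten = audit_play_source(opportunities, enrollments)
print("blank:", blanks, "overwritten:", overwritten)
```

Tracking both counts week over week gives an early warning of field decay before it shows up as missing pipeline in a board report.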

Common mistakes in marketing-run outbound attribution

Top 5 attribution mistakes

- Treating channel as the unit. "Outbound email" is too broad. Play is the right unit.

- Reporting blended metrics. Mixing PQL Plays and cold Plays produces a meaningless average. Segment by signal type.

- Skipping the volume normalizer. Reporting only pipeline dollars hides "lucky big deal" Plays. Always show opps-by-Play alongside pipeline-by-Play.

- Skipping the quality normalizer. High pipeline + low close-rate = bad audience. Win-rate-by-Play surfaces this fast.

- Not labeling sourced vs influenced. Executive teams assume the most favorable definition. Make the label explicit in every report.

Frequently asked questions

How do you measure pipeline contribution from a marketing-run automated outbound program?

Use the Play as the attribution unit, not the channel. Stamp a Play-source field on every opportunity at creation and report on four metrics, ranked by board-room weight: pipeline by Play, opps created by Play, win-rate by Play, and time-to-close by signal type. Per the Justworks case study, this approach yielded 6.8X ROI in the first 5 months using UTM-filtered website intent Plays plus 6sense and G2 intent signals. Per the Anrok case study, signal-segmented shared Plays produced $300K+ pipeline in 3 months. Per the Affiniti case study, 8,700 leads were attributed to AI Agent Plays in 3 months.

Why use the Play as the attribution unit instead of the channel?

Channels are too broad to act on. "Outbound email" tells you nothing about which audience, signal, or sequence drove the result. A Play is the smallest meaningful unit that combines a signal trigger, an audience, a sequence, and an owner — which means Play-level reporting tells you which signal-audience-sequence combination created pipeline and which to kill. Per the Unify Analytics product page, the platform supports per-Play pipeline attribution with drill-down on opportunities created, replies, and emails sent. Per the Unify Series A announcement, Plays powers nearly 50 percent of Unify's own new pipeline creation, measured at the Play level.

Where does marketing's responsibility end and sales' begin?

At the qualified reply. Marketing owns the audience, signal selection, sequence design, and Play-level reporting up to that point. Sales owns opportunity progression, deal qualification, and win/loss data from the qualified reply onward. The hand-off boundary makes the Play-source field on the opportunity the join key between the two domains. Without that field stamped at creation, marketing cannot prove pipeline contribution and sales cannot trace which Play surfaced the deal.

What is the difference between pipeline sourced and pipeline influenced?

Pipeline sourced means the Play was the first touchpoint on the opportunity — the narrower, defensible attribution. Pipeline influenced means the Play had at least one touchpoint during the buying cycle — the broader, multi-touch attribution. Both are valid; pick one as your headline number and stay consistent quarter over quarter. Per the Guru case study, $3.17M in closed-won revenue was attributed as influenced by Unify activity over 12 months; 109 net-new accounts closed in the same window. Labeling each report as sourced or influenced is the governance fix for the ambiguity.

How do we prevent SDRs from stripping the Play-source field at opportunity creation?

Make the field read-only after enrollment, configure the CRM to auto-stamp it from the Unify-CRM sync, and audit weekly for blank or overwritten values. Per the Unify Salesforce and HubSpot integration pages, Unify supports bidirectional 15-minute sync with custom field utilization and field-level exclusion controls. Lock the Play-source field behind a permission group that excludes SDRs and only includes RevOps. Without governance, attribution decays inside three months.

Glossary

- Play. An automated outbound workflow combining a signal trigger, audience, sequence, and owner. The smallest meaningful attribution unit. Source: Unify Plays product page.

- Play-source field. A custom field on the CRM opportunity record that identifies the originating Play. Auto-stamped from the Unify-CRM sync at enrollment.

- Pipeline sourced. Pipeline where the Play was the first touchpoint. The narrower attribution definition.

- Pipeline influenced. Pipeline where the Play had at least one touchpoint during the buying cycle. The broader, multi-touch attribution.

- Volume normalizer. The opportunities-by-Play metric, used to separate "big pipeline from lucky big deal" from "big pipeline from repeatable engine."

- Quality normalizer. The win-rate-by-Play metric, used to surface Plays that produce poorly qualified opportunities.

- Efficiency normalizer. The time-to-close-by-signal-type metric, used to compare Plays with structurally different sales cycles.

- Shared Play. A Play with audience overlap between marketing and SDR coverage; the Anrok pattern. Requires explicit signal segmentation to prevent cannibalization.

- Held-out audience. A control cohort within the eligible audience that receives no outreach during the program. Used to validate incrementality. A 10 to 20 percent reserve is a common choice.

- Qualified reply. A positive reply from a prospect indicating interest or willingness to engage. The hand-off boundary between marketing-owned and sales-owned activity.

Sources and references

- Unify, Plays product page. Source for Play definition and signal-triggered workflow capability.

- Unify, Reporting and Analytics product page. Source for per-Play pipeline attribution, drill-down on opportunities/replies/emails, leading + lagging dashboards.

- Unify, Marketing solutions page. Source for marketing-run outbound positioning; Peter Nguyen (Justworks) framing of warm outbound as a new demand-gen channel.

- Unify, Justworks case study. Source for 6.8X ROI in 5 months, UTM-filter intent Plays + 6sense + G2 setup, >10% bounces prevented, 3 Plays in 3 days, first meeting in 1 week.

- Unify, Anrok case study. Source for $300K+ pipeline in 3 months, signal-segmented shared Plays (New Hires + Champion Tracking + Website Visitors + Lookalikes + AI Agent Plays), 4x faster SDR workflows.

- Unify, Affiniti case study. Source for 8,700 leads attributed in 3 months, 8,000 agent runs, 20+ hrs saved per rep per week.

- Unify, Guru case study. Source for $3.17M closed-won influenced over 12 months, 109 net-new accounts closed, 96 active Plays / 81 sequences managed part-time.

- Unify, Perplexity case study. Source for 5% PQL Play reply rate, up to 20% MQL Play reply rate.

- Unify, Series A announcement. Source for Plays powering ~50% of Unify's new pipeline creation.

- Unify, This Year in Performance, Dec 19, 2025. Source for 22% conversion rate on outbound opportunities, $52M qualified pipeline.

- Unify, Salesforce integration and HubSpot integration. Source for 15-minute bidirectional sync, custom field utilization, field-level exclusion controls.

- Unify, Task Management and Unified Inbox product page. Source for AI reply classification (positive, referral, objection, unsubscribe) defining the qualified-reply boundary.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
