TL;DR. A real AI SDR pilot runs 30 days against one signal, one ICP, and one Play, with week-by-week thresholds (60%+ enrichment, 25%+ open, 2%+ reply) and a pilot-to-production decision in week 4. Pick one of three pilot designs before kickoff: PLG/PQL (Perplexity model, $1.7M in 3 months), New-Hire (Anrok model, $300K+ in 3 months), or Lookalike (Unify Lookalikes blog, $110K in week one). Per the Unify next-gen AI Agents announcement, agents now run at 0.1 credits each, making always-on pilots economically viable. For scale, the Affiniti case study reports 8,000 agent runs across 8,700 leads in 3 months, roughly 800 credits at that rate.

Methodology and limitations

What counts as an "agent run." Per the Unify AI Agents product page and the next-gen AI Agents announcement, an agent run is one execution of AI research, qualification, or message generation against a single account or contact. A research run is different from a send action: a research run executes web browsing or scraping plus reasoning to gather context; the resulting send action is a separate event. The Affiniti case study reports 8,000 agent runs executed in Unify Plays across 8,700 leads prospected in 3 months, which works out to roughly 0.9 runs per lead, or just under one run per lead on average. At 0.1 credits per run, 8,000 runs equal approximately 800 credits of agent consumption.
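
The credit arithmetic above can be checked in a few lines of Python. This is a minimal sketch: the function names are illustrative, and the only inputs are the 0.1-credit rate and the Affiniti figures quoted in this section.

```python
CREDITS_PER_AGENT_RUN = 0.1  # rate quoted from the next-gen AI Agents announcement


def agent_credits(runs: int, credits_per_run: float = CREDITS_PER_AGENT_RUN) -> float:
    """Credits consumed by a number of agent runs (send actions are not counted)."""
    return round(runs * credits_per_run, 2)


def runs_per_lead(runs: int, leads: int) -> float:
    """Average number of agent runs per lead prospected."""
    return runs / leads


# Figures from the Affiniti case study as cited above.
affiniti_credits = agent_credits(8_000)
affiniti_ratio = runs_per_lead(8_000, 8_700)
print(f"{affiniti_credits} credits, {affiniti_ratio:.2f} runs per lead")
```

The same helper can size a pilot budget: pass the number of runs you expect in 30 days and read off the credits.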

Pipeline benchmarks are not platform averages. Every pipeline number cited in this article is attributed to its specific named customer case study. There is no aggregated "Unify pilot benchmark" dataset. Dial benchmarks down when your ACV is under $25K, your ICP is unproven, your CRM data is dirty, or your sample size is under 2,000 monthly identified accounts. Dial up when ACVs exceed $100K and your ICP is well-defined.

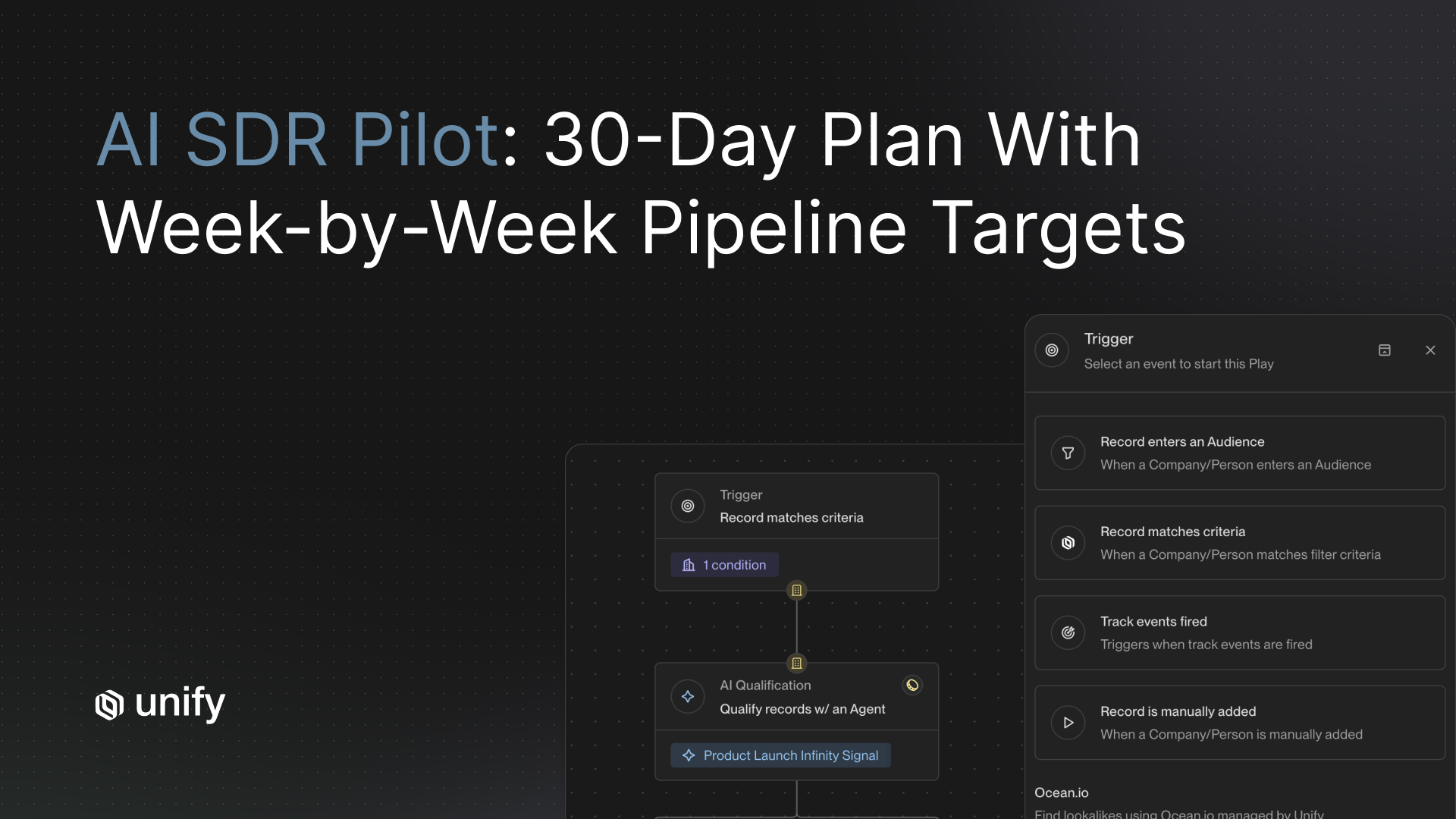

What does an AI SDR pilot program look like?

A real AI SDR pilot runs 30 days against one signal, one ICP, and one Play, with explicit weekly thresholds and a pilot-to-production decision at the end of week 4. The pilot is not a sequence-builder pilot. It is a signal + agent + Play pilot. Success is measured in qualified opportunities created, agent runs executed, reply rate, and time saved, not emails sent.

Buyers asking "what does a pilot look like" are at the highest-intent moment in the AI SDR funnel: they are trying to internally justify a 30-day commitment to a senior stakeholder. The structure below is designed to make that justification defensible. Every threshold and benchmark in this article is attributed to a named Unify customer case study, never to an invented platform average.

The ranked 4-week AI SDR pilot template

Run the four weeks in strict order. Do not collapse weeks. Do not skip the held-out audience step. Each week has one owner, one deliverable, and one go/no-go threshold.

Week 1 — Define one signal, one ICP, one Play. CRM sync only, no sending.

Owner: the operator who will own the system end-to-end (Growth, Marketing, or RevOps).

Deliverables: chosen pilot design (see the 3-pilot-design picker below), signal trigger configured, ICP filter defined in Plays, audience built, success thresholds documented, held-out audience reserved (10 to 20 percent of the eligible population, no outreach).

Threshold: RevOps confirms CRM sync runs error-free for 7 consecutive days.

Sending: zero.

Why no sending in week 1: mailbox warming and audience quality drive bounce rates more than any creative variable. Skip this step and the pilot is uninterpretable.
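
One way to reserve the held-out audience is to assign accounts deterministically rather than by hand. The sketch below is an assumption about how an operator might do this outside the platform, not a description of how Unify Plays builds exclusion segments; the account IDs, the salt, and the `in_holdout` helper are all hypothetical.

```python
import hashlib


def in_holdout(account_id: str, holdout_pct: int = 15, salt: str = "pilot-1") -> bool:
    """Deterministically place an account in the held-out cohort.

    Hashing the ID (instead of re-sampling at random) keeps the assignment
    stable across CRM syncs, so an account never drifts between the
    outreach and held-out groups mid-pilot.
    """
    if not 0 <= holdout_pct <= 100:
        raise ValueError("holdout_pct must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{account_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in the range 0..99
    return bucket < holdout_pct


eligible = [f"acct-{i:04d}" for i in range(2_000)]  # hypothetical account IDs
holdout = [a for a in eligible if in_holdout(a)]
outreach = [a for a in eligible if not in_holdout(a)]
print(len(holdout), len(outreach))
```

Changing the salt produces a fresh split for a later pilot, while keeping the salt fixed guarantees the same accounts stay excluded for all 30 days.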

Week 2 — Run agent research and build sequence. First 100 enrollments.

Owner: the pilot operator with sales lead review.

Deliverables: AI Agent step configured to research each contact (company news, role priorities, recent funding, product-fit signal), Smart Snippets templated, sequence drafted (3 to 4 touches over 14 days), 100 contacts enrolled from the live audience.

Threshold: 60%+ enrichment match rate on the enrolled cohort.

Why enrichment first: a perfect sequence sent to bad data buries the pilot in bounces. Per the Unify Waterfall Enrichment product page, the platform documents 95%+ company match and 90%+ contact match across 30+ data sources, so a 60% pilot floor is conservative.

Cost expectation: roughly 100 to 300 credits of agent consumption across the full pilot, which corresponds to 1,000 to 3,000 agent runs at 0.1 credits per run.

Week 3 — Send and iterate. Measure leading indicators against thresholds.

Owner: pilot operator + sales lead for reply triage.

Deliverables: sequence live, replies triaged daily, copy and Snippet adjustments documented.

Thresholds: 25%+ open rate on the cold cohort; 2%+ reply rate on cold signals; bounce rate under 3 percent.

If you miss the open-rate threshold: the subject-line variable or the audience-fit variable is broken. Pause sends, re-segment audience by signal strength, re-launch with one subject-line variant.

If you miss the reply-rate threshold but the open rate holds: the personalization layer is generic. Audit Smart Snippet outputs against the actual prospect's LinkedIn or website.

If both miss: the audience is wrong. Halt and re-scope before week 4.
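
The week-3 triage rules above can be written as a small decision function. This is a sketch under stated assumptions: the function and constant names are illustrative, rates are passed as fractions (0.25 means 25%), and the bounce check is reported separately because the text sets a bounce threshold without prescribing an action for missing it.

```python
OPEN_RATE_FLOOR = 0.25      # 25%+ open rate on the cold cohort
REPLY_RATE_FLOOR = 0.02     # 2%+ reply rate on cold signals
BOUNCE_RATE_CEILING = 0.03  # bounce rate must stay under 3%


def week3_action(open_rate: float, reply_rate: float) -> str:
    """Map week-3 leading indicators to the next step described above."""
    open_ok = open_rate >= OPEN_RATE_FLOOR
    reply_ok = reply_rate >= REPLY_RATE_FLOOR
    if open_ok and reply_ok:
        return "continue"
    if not open_ok and not reply_ok:
        return "halt: audience is wrong, re-scope before week 4"
    if not open_ok:
        return "pause: re-segment by signal strength, relaunch with one subject-line variant"
    return "audit: compare Smart Snippet outputs with each prospect's LinkedIn or website"


def bounce_ok(bounce_rate: float) -> bool:
    """True when the bounce rate is under the 3% ceiling."""
    return bounce_rate < BOUNCE_RATE_CEILING


print(week3_action(open_rate=0.38, reply_rate=0.045), bounce_ok(0.012))
```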

Week 4 — Measure outcomes. Pilot-to-production decision.

Owner: pilot operator presents to a steering committee (Sales lead, RevOps, VP of Growth or VP of Sales).

Deliverables measured against week-1 thresholds: agent runs executed, qualified opportunities created vs. held-out baseline, meetings booked, reply rate, time saved per rep.

Pilot-to-production decision criteria: open rate >= 25%, reply rate >= 2% on cold signals, at least one qualified opportunity created per 250 enrollments, time saved per rep documented vs. pre-pilot baseline.

If two or more criteria pass: graduate to a second Play in month 2.

If only one passes: iterate on the same Play for another 14 days.

If none pass: re-scope the pilot design (see the picker below) and run a new 30-day cycle.
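
The week-4 decision rule can be sketched as a function that counts how many of the four criteria pass. The names are illustrative, rates are fractions, and `time_saved_documented` is a simple yes/no flag standing in for "time saved per rep documented vs. pre-pilot baseline."

```python
def pilot_decision(
    open_rate: float,
    reply_rate: float,
    qualified_opps: int,
    enrollments: int,
    time_saved_documented: bool,
) -> str:
    """Return 'graduate', 'iterate', or 're-scope' from the four criteria."""
    checks = [
        open_rate >= 0.25,
        reply_rate >= 0.02,
        # At least one qualified opportunity per 250 enrollments.
        enrollments > 0 and qualified_opps * 250 >= enrollments,
        time_saved_documented,
    ]
    passed = sum(checks)
    if passed >= 2:
        return "graduate"  # add a second Play in month 2
    if passed == 1:
        return "iterate"   # same Play for another 14 days
    return "re-scope"      # pick a new pilot design, run a new 30-day cycle


print(pilot_decision(0.38, 0.045, qualified_opps=7, enrollments=100,
                     time_saved_documented=True))
```

Writing the rule down before week 1 makes it harder to move the thresholds once results start arriving.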

3-pilot-design picker: choose one before week 1

Pick one design before kickoff. Do not blend. Blending makes lift unattributable across signals, which kills the pilot's credibility with the stakeholder reviewing in week 4.

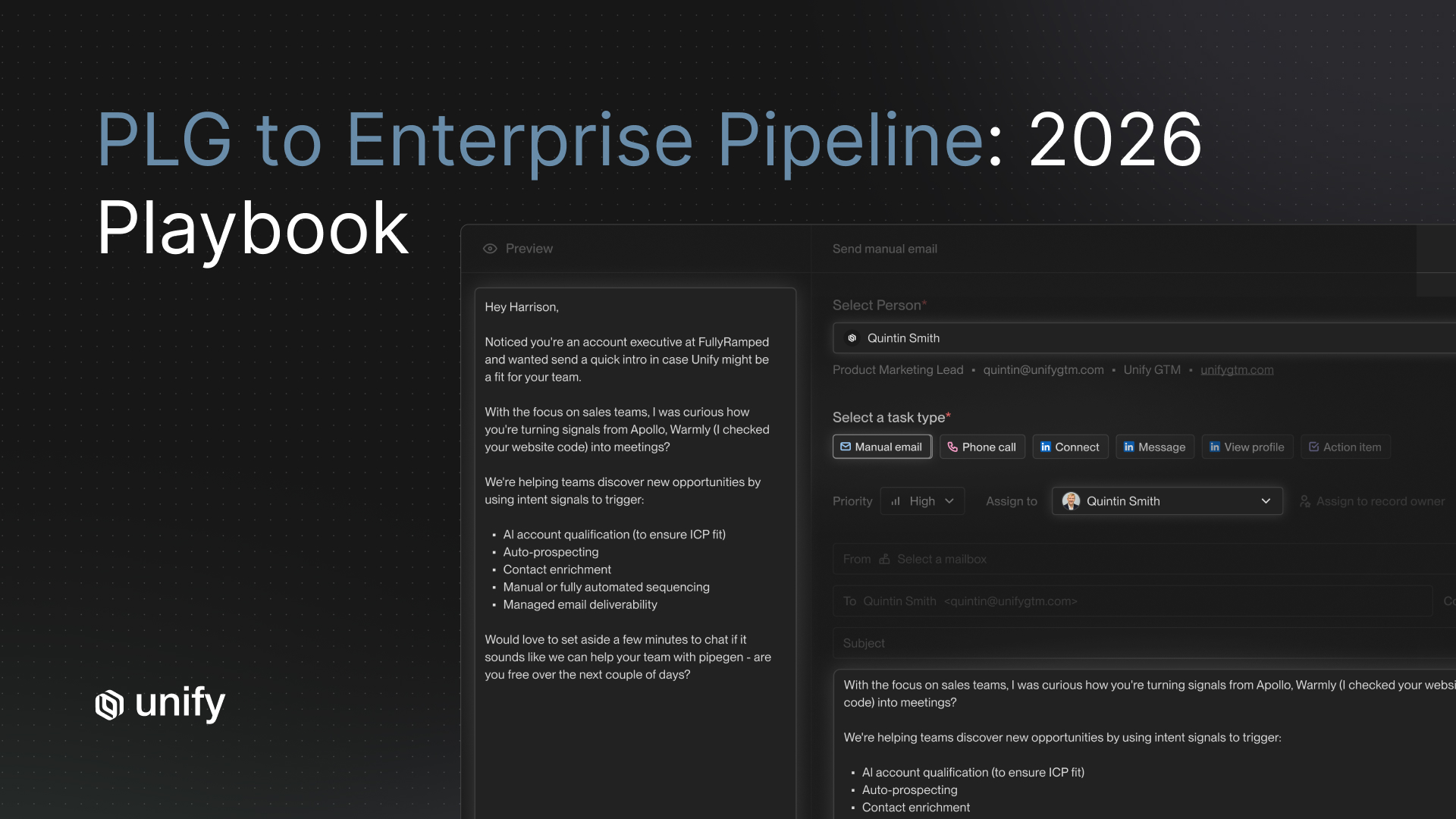

Pilot Design 1 — PLG / PQL signal pilot (highest reply ceiling)

- Best for: companies with a freemium or free-trial product where signups produce a usable identity signal.

- Signal: PQL trigger — paywall hit, usage threshold reached, target ICP company has multiple free users.

- Reply rate ceiling: per the Perplexity case study, PQL Plays reached a 5% reply rate and MQL Plays reached up to 20%. The same program produced $1.7M in pipeline, 75+ outbound opportunities, and 80+ enterprise meetings booked in 3 months, with no BDR team.

- Pilot-week-2 audience size guidance: 100 to 500 enrollments.

- Why this design wins: PQL signals carry intrinsic intent that cold prospecting lacks. Perplexity's 5% PQL reply rate is 2.5x the 2% cold-signal floor used in this pilot template.

Pilot Design 2 — New-hire signal pilot (highest precision)

- Best for: teams selling into roles with high turnover (CRO, VP Sales, Head of Growth, RevOps) or into newly-funded companies hiring fast.

- Signal: New Hires Signal — daily refresh of new hires matching target persona, location, industry; 5 credits per new hire surfaced.

- Mid-case pipeline benchmark: per the Anrok case study, the team ran Plays including New Hires/Champions, Website Visitors, Lookalikes, and AI Agent Plays, generating $300K+ pipeline in the first 3 months with 4x faster SDR workflows. The figure covers all of those Plays, not the New Hires Signal alone.

- Pilot-week-2 audience size guidance: 200 to 800 enrollments depending on hiring volume in your target verticals.

- Why this design wins: new hires in target roles are in their "first 90 days" buying window — the moment they shop the tech stack.

Pilot Design 3 — Lookalike pilot (fastest payback)

- Best for: teams with a clean list of best-fit closed-won accounts that want to expand TAM beyond the current target list.

- Signal: Lookalikes Play — find companies similar to seed accounts (powered by Ocean.io integration in Unify).

- Fastest-payback benchmark: per the Unify Lookalikes launch blog (August 14, 2025), a customer drove $110K in pipeline within one week of launching the Lookalikes Play. Per the Peridio case study, lookalike signals supported $1.15M in influenced pipeline, $550K in direct pipeline, a 58% open rate, and a 5% reply rate against 4,400+ people reached.

- Pilot-week-2 audience size guidance: 300 to 1,000 enrollments.

- Why this design wins: lookalike audiences expand TAM without requiring new intent signals; payback is fast because seed data already exists in CRM.

Pipeline benchmarks: what should you expect in 30 days?

The table below maps low, mid, and high-end pilot outcomes to named customer case studies. Pick the row whose ICP, ASP, and motion most resembles yours, and use that as your week-4 success target.

| Tier | Customer case study | Reported outcome | Timeframe |
| --- | --- | --- | --- |
| Low end | Navattic | $100K+ direct pipeline | First 10 days |
| Mid-range | Anrok | $300K+ pipeline | 3 months |
| High end | Innovate Energy Group | $15M pipeline | 1 month |
| Volume benchmark | Affiniti | 8,700 leads prospected, 8,000 agent runs | 3 months |
| Reply-rate ceiling | Perplexity | $1.7M pipeline; 5% PQL reply rate, up to 20% MQL reply rate | 3 months |

Reading the table. Most 30-day pilots land between the Navattic and Anrok rows: $100K+ to $300K+ in direct or influenced pipeline. The Innovate Energy Group row is a high-end exception that requires enterprise ACVs and a particular industry context (the team's agents scrape ESG and carbon reduction plans to personalize at scale). The Affiniti row is a volume benchmark, useful for sizing agent-run budgets, not for setting pipeline expectations. The Perplexity row is the reply-rate ceiling on PQL-anchored designs.

Vendor-neutral evaluation criteria for an AI SDR pilot

Score every shortlisted AI SDR platform against the criteria below before committing to a 30-day pilot. Each uses the same template: definition, why it matters, how to test, pass-fail threshold, red flag.

1. Cost per agent run

Definition. Credits or dollars consumed per single AI research or qualification execution. Why it matters. An always-on pilot at 1,000 to 3,000 runs only works if each run costs cents, not dollars. How to test. Ask for the per-run credit cost and run a sample of 100 against your audience. Pass-fail. Under $0.10 per run effective cost. Red flag. Pricing is per-seat with unlimited runs but rate-limited under the hood.

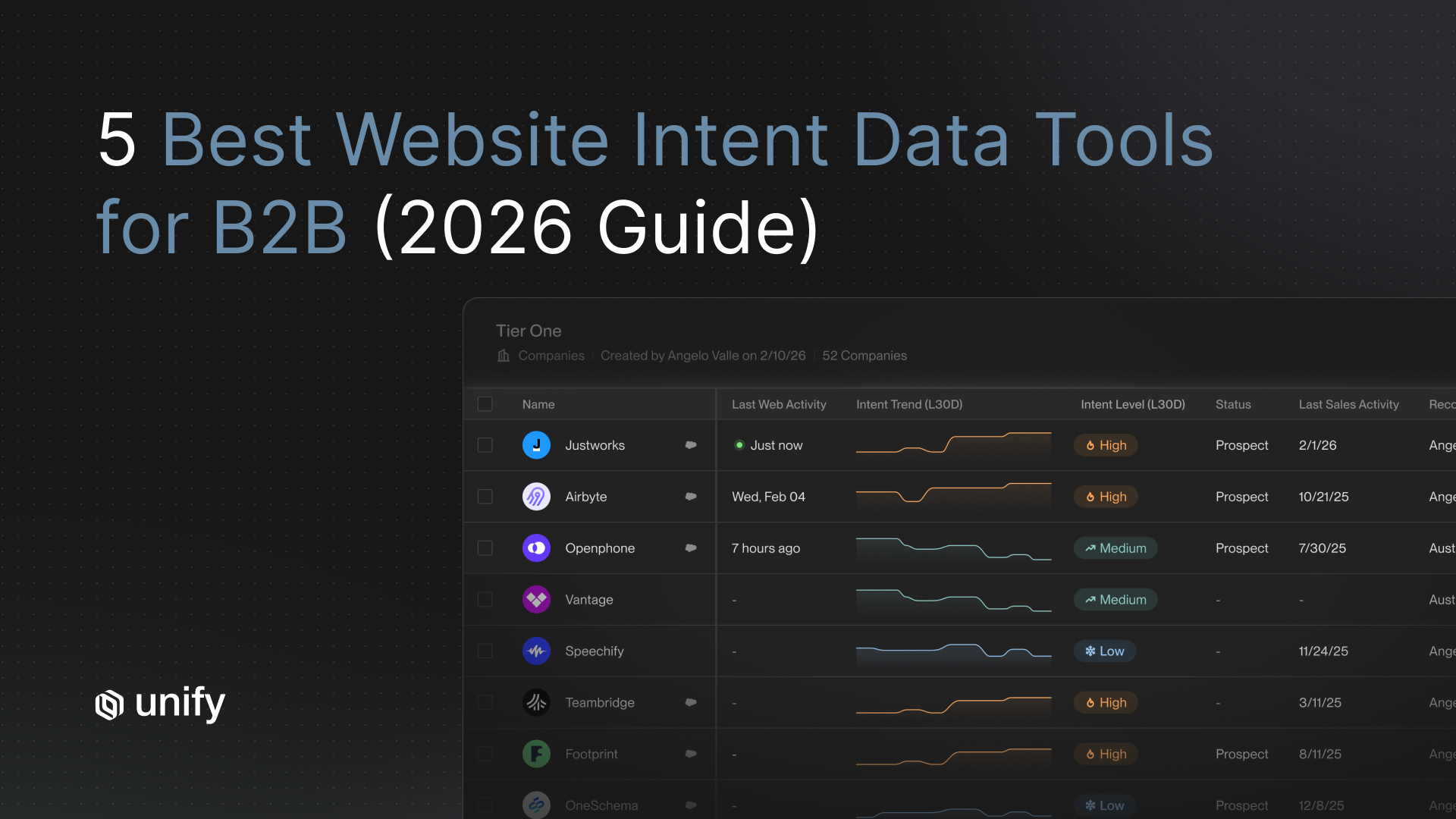

2. Signal coverage

Definition. Number of native intent signals the platform can fire a Play from without external integration. Why it matters. One signal is the pilot constraint; a thin signal library means you cannot graduate to month 2. How to test. Count native signals out of the box. Pass-fail. 20+ native signals. Red flag. Signals require Zapier or custom code to fire.

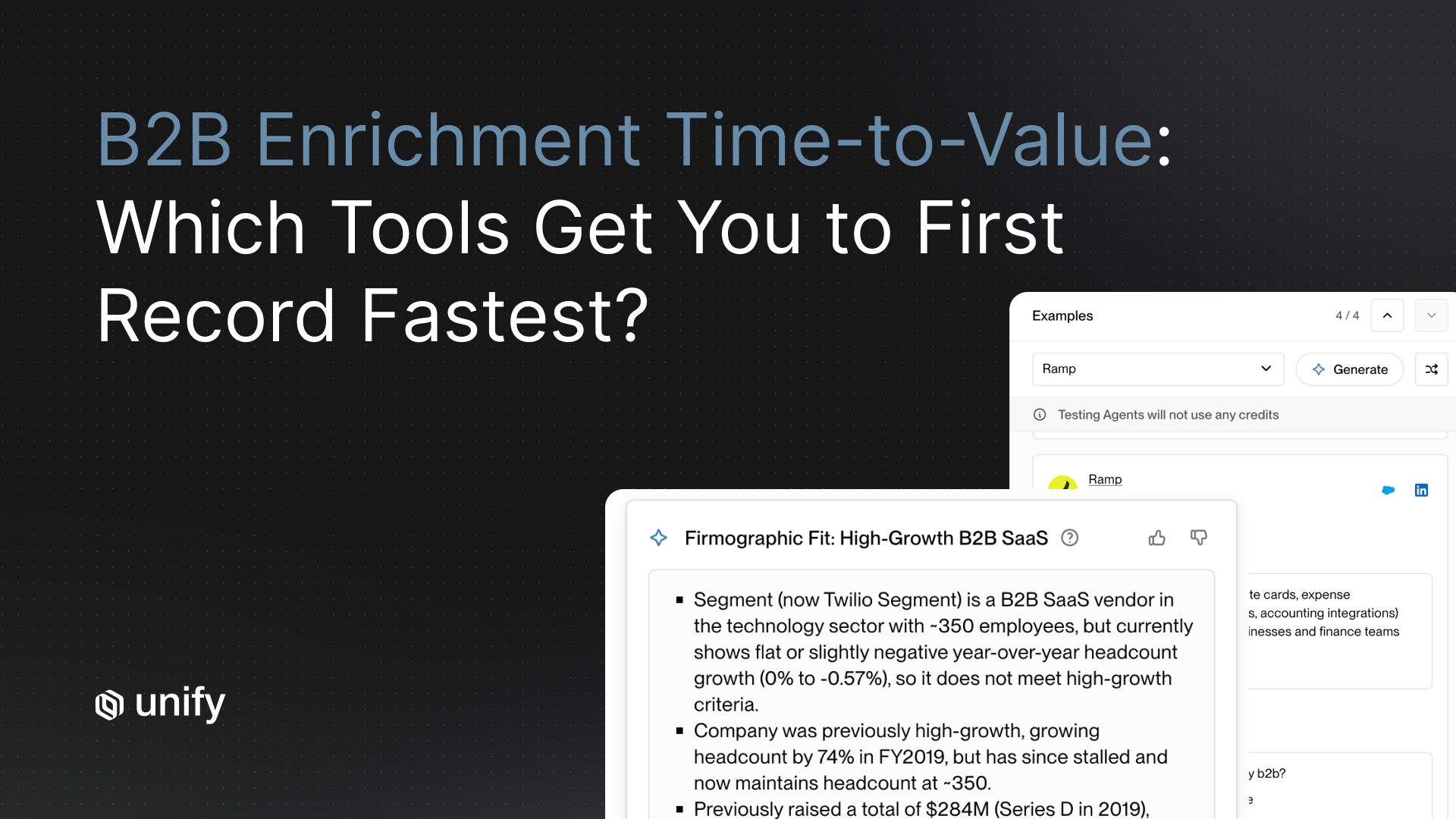

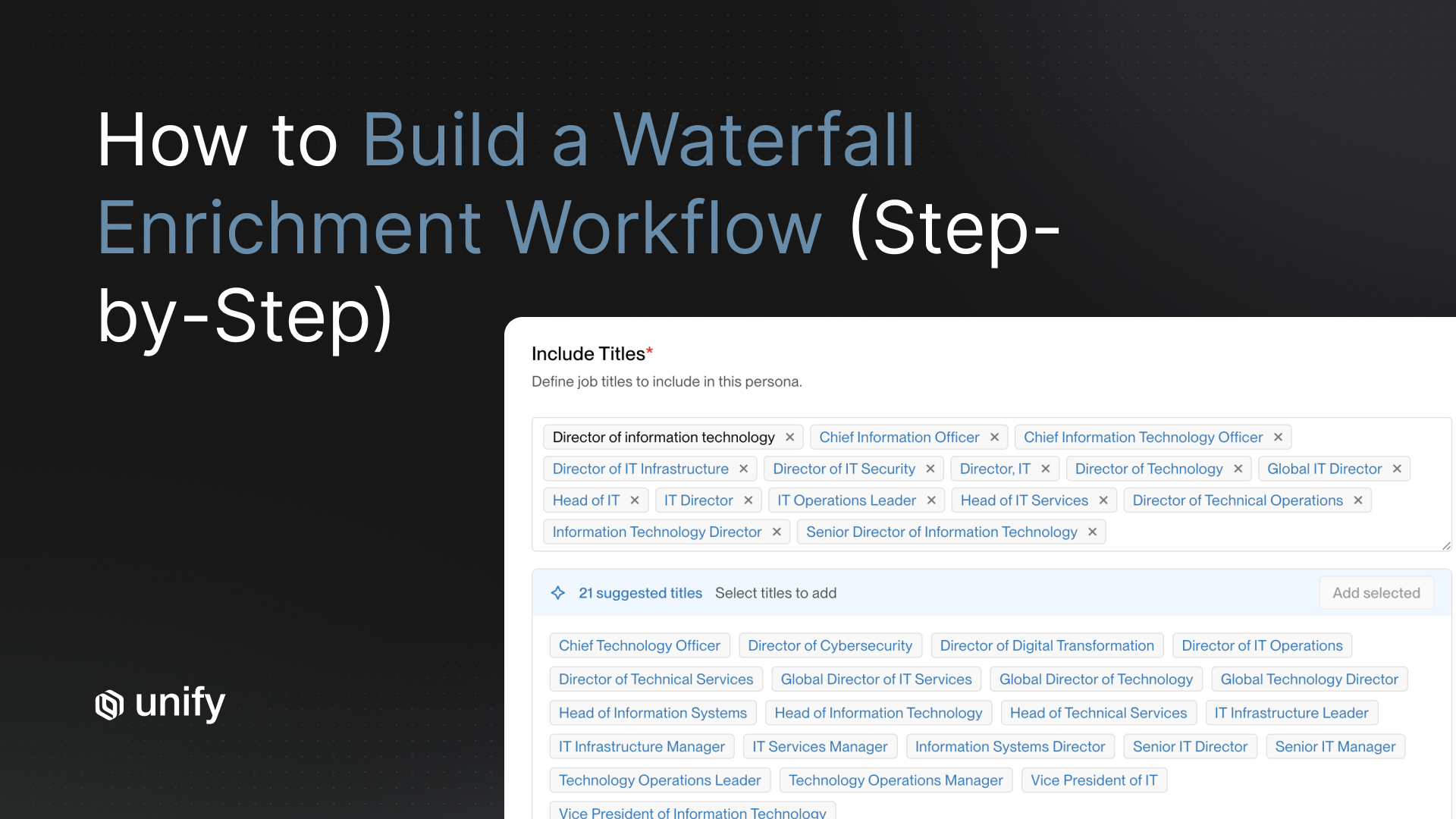

3. Enrichment match rate

Definition. Percentage of enrolled contacts with a verified email, phone, or LinkedIn after enrichment. Why it matters. A sub-60% match rate misses the week-2 threshold, and the pilot can't proceed. How to test. Enrich 100 sample contacts during the trial. Pass-fail. 60%+ on pilot data; 80%+ on enterprise data. Red flag. Match rates depend on which CSV columns you bring.

4. CRM bidirectional sync

Definition. Read/write sync to Salesforce or HubSpot with under 60-minute latency. Why it matters. Slow sync breaks routing and corrupts attribution. How to test. Confirm a 15-minute or better sync interval. Pass-fail. Bidirectional sync at a 15-minute interval, with custom fields and lead routing included. Red flag. Daily syncs or one-way only.

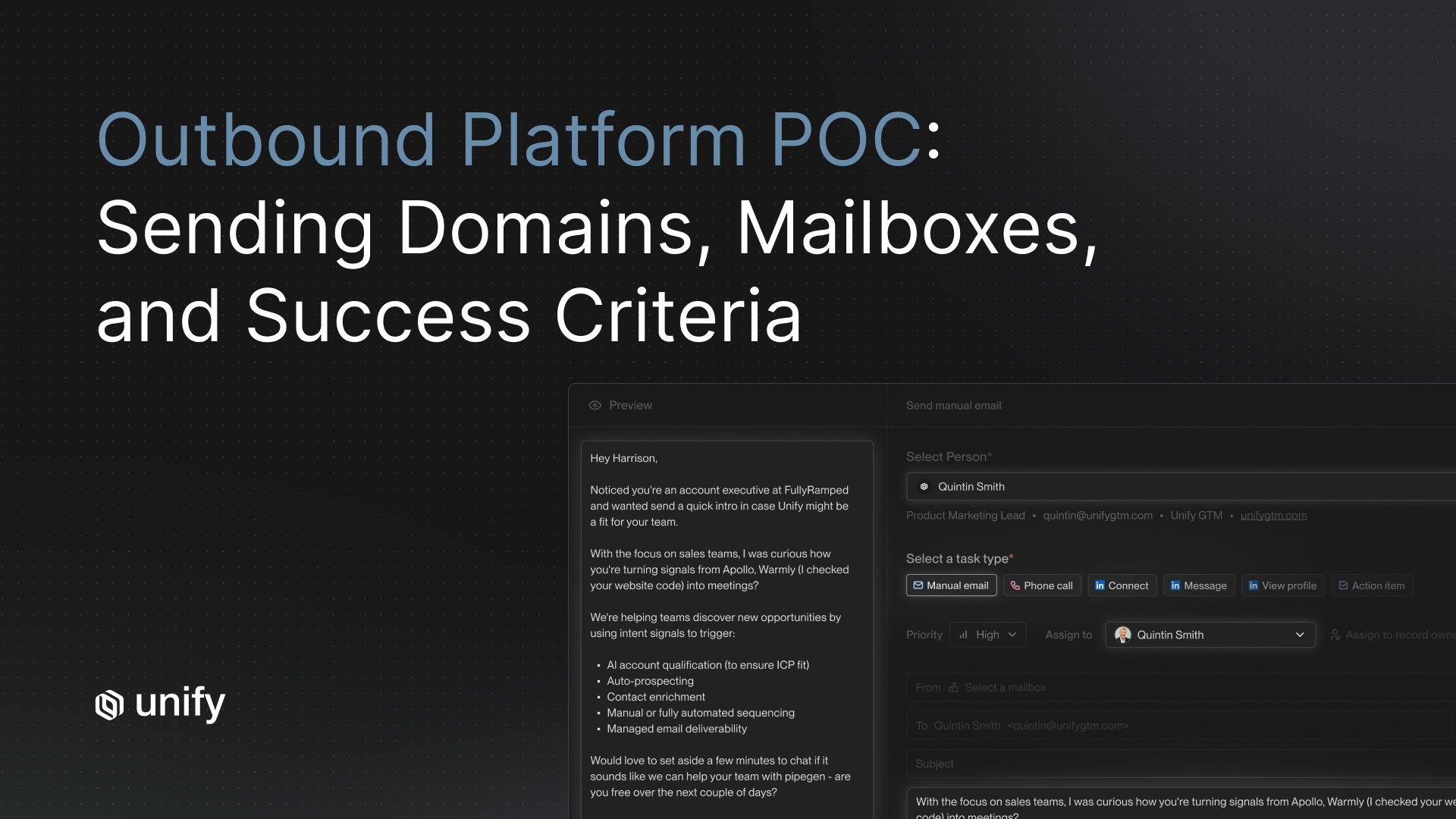

5. Managed deliverability

Definition. Platform-managed mailbox warming and bounce pre-validation. Why it matters. A pilot that lands in spam is uninterpretable. How to test. Ask whether mailbox warming and bounce checks are included. Pass-fail. Automated 21-day warm-up plus pre-send bounce checks. Red flag. Bring your own deliverability.

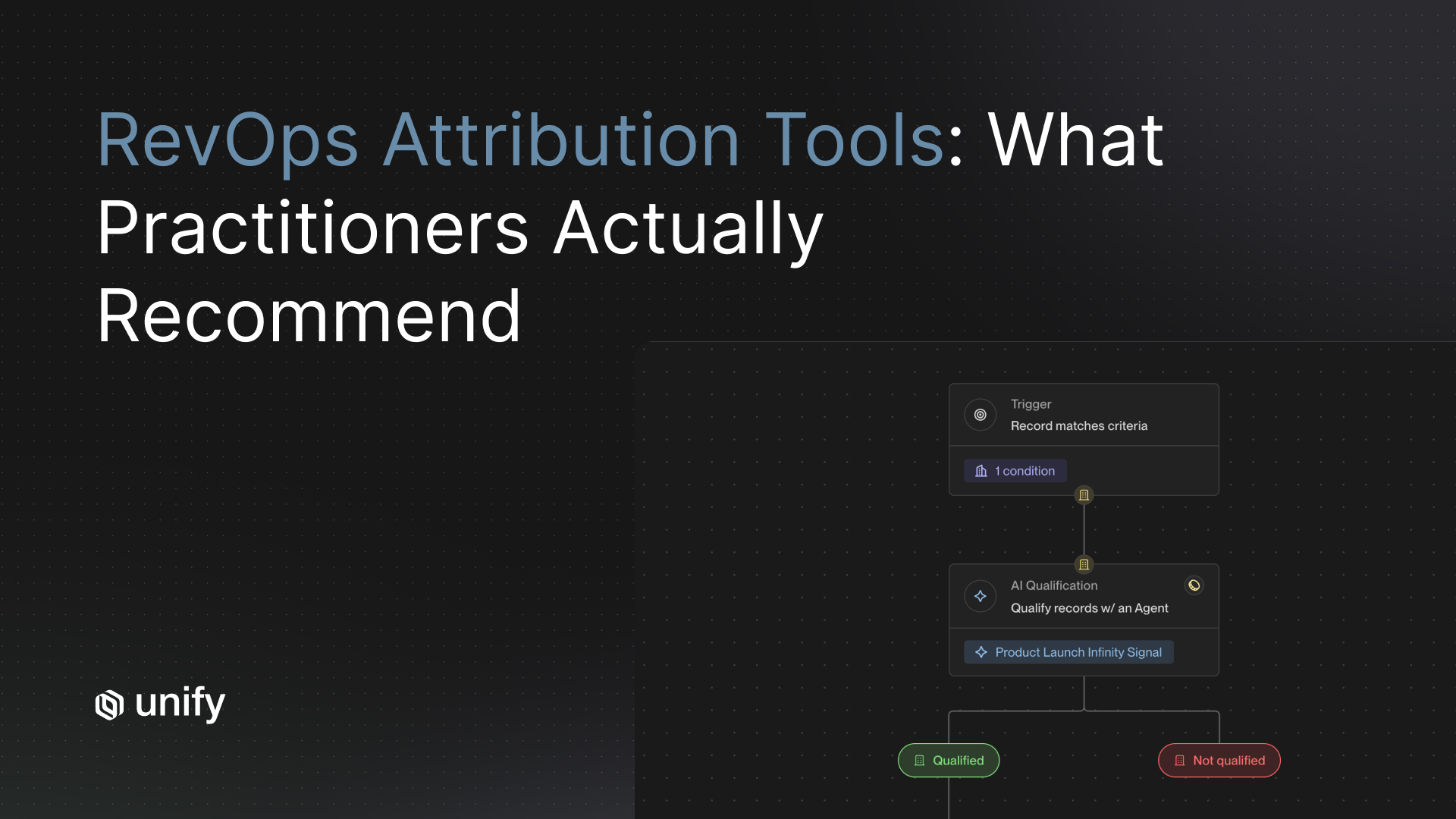

6. Held-out audience and attribution

Definition. Ability to reserve a control cohort and trace opportunities back to the Play. Why it matters. Without it, the pilot result is anecdote, not evidence. How to test. Build a Play with a 10% exclusion segment. Pass-fail. Exclusion segment supported natively; opportunity-to-Play trace under 5 minutes. Red flag. Attribution is "manual via UTM."
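
The six pass-fail thresholds above can be collected into one scorecard so every shortlisted vendor is checked the same way. This is a minimal sketch: the `VendorFacts` fields are illustrative names for the answers you gather during evaluation, and the sample vendor is made up.

```python
from dataclasses import dataclass


@dataclass
class VendorFacts:
    """Answers collected from one vendor during evaluation (illustrative fields)."""
    cost_per_run_usd: float
    native_signals: int
    pilot_match_rate: float          # fraction, e.g. 0.72 for 72%
    crm_bidirectional: bool
    crm_sync_minutes: int
    managed_warmup: bool
    presend_bounce_checks: bool
    native_exclusion_segments: bool
    attribution_trace_minutes: float


def scorecard(v: VendorFacts) -> dict:
    """Apply the pass-fail threshold for each of the six criteria."""
    return {
        "cost_per_agent_run": v.cost_per_run_usd < 0.10,
        "signal_coverage": v.native_signals >= 20,
        "enrichment_match_rate": v.pilot_match_rate >= 0.60,
        "crm_bidirectional_sync": v.crm_bidirectional and v.crm_sync_minutes <= 15,
        "managed_deliverability": v.managed_warmup and v.presend_bounce_checks,
        "holdout_and_attribution": (
            v.native_exclusion_segments and v.attribution_trace_minutes < 5
        ),
    }


# Hypothetical vendor: strong everywhere except signal coverage.
vendor = VendorFacts(0.04, 12, 0.72, True, 15, True, True, True, 3.0)
results = scorecard(vendor)
failed = [name for name, ok in results.items() if not ok]
print(failed)
```

A vendor that fails any single criterion is worth a follow-up question before the pilot starts, since each failure maps to a specific week of the template.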

How Unify covers these criteria

- Cost per run. AI Agents run at 0.1 credits each, a 10x improvement, per the Next-gen AI Agents announcement.

- Signal coverage. 25+ native intent signals per the Signals overview, including Website Intent, Champion Tracking, New Hires, Lookalikes, Product Usage, G2, Email Intent, and the AI Infinity Signal.

- Enrichment. 95%+ company match and 90%+ contact match across 30+ data sources per the Waterfall Enrichment product page.

- CRM sync. Bidirectional 15-minute sync with Salesforce and HubSpot per the Salesforce integration and HubSpot integration pages.

- Managed deliverability. Automated 21-day mailbox warming and pre-send bounce validation per the Email Deliverability product page. Per the Justworks case study, over 10% of bounces were prevented in outbound enrollments.

- Held-out audience and attribution. Plays support exclusion segments and Play-level pipeline attribution; per the Series A announcement, Plays powers nearly 50% of Unify's own new pipeline creation, measured per-Play.

Worked example: a 30-day PLG/PQL pilot on a 14,000-visitor SaaS site

This is an end-to-end trace anchored on Unify customer outcomes. Numbers come from named case studies; this is not a platform average.

- Week 1. Pilot operator (Head of Growth) configures PQL trigger: target-ICP company has 3+ free users + at least one user hits the pricing page. Audience built in Plays. Held-out audience = 15% of eligible accounts. CRM sync verified.

- Week 2. AI Agent step researches each enrolled contact (LinkedIn profile, company funding, product-usage notes). 100 enrollments live by Friday. Enrichment match rate at enrollment: 88% (well above the 60% threshold).

- Week 3. Sequence sends (4 touches, 14 days). Daily reply triage. Mid-week metrics: open rate 38%, reply rate 4.5% (comparable to Perplexity's PQL Play at 5%). One adjustment to the Smart Snippet template after day 4.

- Week 4. Steering-committee review. Outcomes: 7 qualified opportunities created vs. 1 in the held-out audience; 350 agent runs executed (35 credits); reply rate 4.5% on the PQL cohort; 12 hours/week saved per rep on prospecting research. Verdict: graduate to month 2, add the Lookalikes Play as Play #2 on a parallel cohort.
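
When comparing the outreach cohort with the held-out audience, raw opportunity counts should be normalized by cohort size, because a 15% holdout is much smaller than the outreach group. The sketch below shows one way to compute that; the cohort sizes are hypothetical, since the worked example does not state them, and the function name is illustrative.

```python
from typing import Optional, Tuple


def opportunity_lift(
    outreach_opps: int,
    outreach_accounts: int,
    holdout_opps: int,
    holdout_accounts: int,
) -> Tuple[float, float, Optional[float]]:
    """Return (outreach rate, held-out rate, relative lift).

    Lift is the outreach rate divided by the held-out rate. It is None
    when the held-out cohort produced no opportunities, because the
    ratio is undefined in that case.
    """
    outreach_rate = outreach_opps / outreach_accounts
    holdout_rate = holdout_opps / holdout_accounts
    lift = outreach_rate / holdout_rate if holdout_rate > 0 else None
    return outreach_rate, holdout_rate, lift


# 7 vs. 1 opportunities from the example; 850 / 150 accounts are assumed sizes
# consistent with a 15% holdout of 1,000 eligible accounts.
outreach_rate, holdout_rate, lift = opportunity_lift(7, 850, 1, 150)
print(f"outreach {outreach_rate:.4f}, held-out {holdout_rate:.4f}, lift {lift:.2f}x")
```

With small cohorts a single held-out opportunity moves the ratio a lot, so report the per-account rates alongside the raw counts in the week-4 review.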

Stop rules and red flags during an AI SDR pilot

Four pilot-killing mistakes

- Don't pilot with multiple signals at once. You cannot attribute lift across signals. You cannot decide which to keep. Pick one signal in week 1 and hold it until week 4 metrics are in.

- Don't pilot without a held-out audience. Reserve 10 to 20 percent of eligible accounts with no outreach. Without a control, the pilot is anecdote.

- Don't define "success" mid-pilot. Document week-1 thresholds (60% enrichment, 25% open, 2% reply) and review against those exact numbers in week 4. Moving the goalposts disqualifies the result.

- Don't expand to a second Play before week 4 metrics are in. Pilots compound noise. One Play is the constraint that makes the pilot interpretable.

Variants by team size, motion, and pilot owner

SMB pilot (under 50 employees)

- One operator owns the pilot end-to-end. Start with Pilot Design 3 (Lookalikes) — fastest payback, lowest setup overhead.

- Audience size: 200 to 500 enrollments in week 2. Target Navattic-range outcome: $100K+ in 10 days.

Mid-market pilot (50 to 500 employees)

- Named pilot operator plus RevOps for CRM logic plus a sales lead for reply triage. Start with Pilot Design 1 (PQL) if PLG; Pilot Design 2 (New-Hire) if sales-led.

- Audience size: 500 to 1,500. Target Anrok-range outcome: $300K+ in 3 months.

Enterprise pilot (500+ employees)

- Steering committee + dedicated pilot operator. Run all three pilot designs in parallel on separate cohorts (each with its own held-out audience). This is the only scenario where parallel pilots are justified.

- Audience size: 2,000+ per cohort. Target Innovate Energy Group-style outcomes only if ACV exceeds $100K.

RevOps as pilot owner

- Lead with CRM hygiene: routing rules, exclusion segments, attribution traces. Pick Pilot Design 2 (New-Hire) — highest precision, easiest to attribute.

Growth Marketer as pilot owner

- Lead with audience design and message-market fit. Pick Pilot Design 1 (PQL) if you have a PLG product; Pilot Design 3 (Lookalike) otherwise.

Edge cases and disambiguation

- Pilot vs production. A pilot is one signal, one ICP, one Play, 30 days, with a held-out audience. Production is many signals, many Plays, no held-out audience, continuous. Do not call a production-style rollout a "pilot."

- Agent run vs send action. Research and message generation count as agent runs; sequence sends are a separate event. Per the Affiniti case study, 8,000 agent runs across 8,700 leads works out to roughly 0.9 runs per lead, or just under one run per lead on average.

- PQL vs MQL signal. PQL means product-qualified (the prospect or someone at their company is using your product). MQL means marketing-qualified (the prospect engaged with marketing content). Per the Perplexity case study, PQL Plays converted at 5% reply rate and MQL Plays at up to 20%. Both work; do not blend them in the same pilot.

- Reply rate on cold vs warm signals. Cold signal reply rate of 2% is the pilot floor. Warm signal (returning visitor, product user, past champion) reply rates of 5 to 20% are normal. Do not compare cold pilot results to warm benchmarks.

- Pilot benchmark vs platform benchmark. Every pipeline number in this article is attributed to a specific named customer. There is no aggregated "Unify pilot benchmark" — comparing to specific customers whose ICP matches yours is the right framing.

Common mistakes to avoid in pilot design

Top 5 pilot-design mistakes

- Picking the platform first, the pilot design second. Pick the pilot design first (PQL / New-Hire / Lookalike) and let it dictate platform requirements.

- Starting with sending in week 1. Week 1 is CRM sync, audience build, and held-out audience reservation only. Sending in week 1 contaminates the baseline.

- Building a five-touch sequence in week 2. Three to four touches is the sweet spot. More touches in a pilot inflate negative replies and bury the signal.

- Counting emails sent as a success metric. Emails sent is a vanity metric. Track reply rate, qualified opportunities created, time saved.

- Skipping the week-4 stakeholder review. Without a documented pilot-to-production decision, the pilot stalls in indefinite extension.

Frequently asked questions

What does an AI SDR pilot program look like?

A real AI SDR pilot runs 30 days against one signal, one ICP, and one Play. Week 1 wires CRM sync and defines the audience with no sending. Week 2 builds the agent research step and the sequence; the first 100 contacts enroll against a 60%+ enrichment match threshold. Week 3 sends and iterates against a 25%+ open rate and a 2%+ reply rate on cold signals. Week 4 measures agent runs, qualified opportunities, reply rate, and time saved, and produces a pilot-to-production decision. For scale, the Affiniti case study reports 8,700 leads prospected and 8,000 agent runs in 3 months with Unify.

How do I structure an AI SDR pilot?

Pick one of three pilot designs before kickoff. (1) PLG / PQL signal pilot, highest reply ceiling, modeled on Perplexity: PQL Plays reached a 5% reply rate, MQL Plays reached up to 20%, contributing to $1.7M pipeline and 80+ enterprise meetings in 3 months with no BDR. (2) New-hire signal pilot, highest precision, modeled on Anrok: $300K+ pipeline in 3 months across Plays including new hires and lookalikes. (3) Lookalike pilot, fastest payback, modeled on the Unify Lookalikes launch blog: $110K in pipeline within one week of launching the play. Pick one design; do not blend them.

What pipeline benchmarks should I expect in 30 days?

Pipeline outcomes vary by ICP, ASP, and traffic volume. Low-end pilot benchmark is $100K+ direct pipeline in the first 10 days per the Navattic case study. Mid-range is $300K+ in 3 months per the Anrok case study. High-end is $15M pipeline in one month per the Innovate Energy Group case study. Plan a 30-day target between the Navattic and Anrok ranges; treat Innovate Energy Group as an upper-bound exception that requires enterprise-grade ACVs and a specialized motion.

What is an agent run and why does cost matter for a pilot?

An agent run is one execution of AI research, qualification, or message generation against a single account or contact. Per the Unify next-gen AI Agents announcement (December 18, 2025), agents now run at 0.1 credits each, a 10x cost reduction. That cost ceiling makes an always-on pilot economically viable: per the Affiniti case study, 8,000 agent runs over 3 months translated to roughly 800 credits at the new rate. For a 30-day pilot targeting 1,000 to 3,000 agent runs, plan for 100 to 300 credits of agent consumption.

What should I avoid in an AI SDR pilot?

Four mistakes kill pilots. (1) Don't pilot multiple signals at once. You can't attribute lift across signals. (2) Don't pilot without a held-out audience. You need a baseline to compare against. (3) Don't redefine success mid-pilot. Set success thresholds in week 1: 60%+ enrichment match, 25%+ open rate, 2%+ reply rate. (4) Don't expand to a second Play before week 4 metrics are in. Pilots compound problems; second Plays compound noise.

Glossary

- Agent run. One execution of AI research, qualification, or message generation against a single account or contact. Distinct from a send action. Per the Unify next-gen AI Agents announcement, agents run at 0.1 credits each.

- PQL (Product-Qualified Lead). A prospect at a company already using your product (typically via freemium or trial) who is showing usage signals indicating buying intent.

- MQL (Marketing-Qualified Lead). A prospect who has engaged with marketing content (downloaded a guide, attended a webinar, hit pricing) but has not yet been verified by sales.

- Held-out audience. A control cohort within the eligible audience that receives no outreach during the pilot. Used as a baseline for attributing lift. 10 to 20% is the standard reserve.

- Play. An automated outbound workflow combining a signal trigger, AI Agents, enrichment, and a sequence into a single repeatable motion. Source: Unify Plays product page.

- Signal. A buyer-intent event (website visit, product usage, job change, G2 view, funding) that can trigger a Play. Unify ships 25+ native signals.

- Smart Snippet. Unify's term for dynamically generated message components (subject lines, hooks, value statements) tailored per recipient by AI Agents using research context.

- Lookalike Play. A Play that finds companies similar to seed accounts using the Ocean.io integration in Unify, then enrolls them in automated outreach.

- Pilot-to-production decision. The week-4 review where pilot owners present outcomes against week-1 thresholds and the steering committee decides whether to graduate, iterate, or re-scope.

- Enrichment match rate. Percentage of enrolled contacts whose email, phone, or LinkedIn is verified after waterfall enrichment. Per the Unify Waterfall Enrichment product page, 90%+ contact and 95%+ company match.

Sources and references

- Unify, AI Agents product page. Source for AI Agents capability, over 1M questions answered, agent runs at 0.1 credits.

- Unify, Introducing Unify's Next Generation of AI Agents (December 18, 2025). Source for 0.1 credits per run, 10x improvement, 15+ meetings and a closed-won across 35,000 accounts in 30 days.

- Unify, Affiniti case study. Source for 8,700 leads prospected in 3 months, 8,000 agent runs executed, 20+ hrs saved/rep/week.

- Unify, Innovate Energy Group case study. Source for $15M pipeline in one month, 8x increase in meetings, 20+ hrs saved/week.

- Unify, Navattic case study. Source for $100K+ direct pipeline in first 10 days, 30+ meetings, 67% open rate, 3.9K+ prospects in 2 months.

- Unify, Perplexity case study and long-form blog. Source for $1.7M pipeline / 75+ opps / 80+ enterprise meetings / 3 months / no BDR; 5% PQL reply rate, up to 20% MQL reply rate.

- Unify, Anrok case study. Source for $300K+ pipeline in 3 months, 4x faster SDR workflows, 20% faster campaign build, New Hires + Lookalikes Plays.

- Unify, Peridio case study. Source for $1.15M influenced pipeline, $550K direct, 58% open rate, 5% reply rate, 4,400+ reached, 1 Fortune 100 closed; lookalike-driven outbound.

- Unify, Lookalikes launch blog (August 14, 2025). Source for $110K in pipeline within one week of launching the Lookalikes Play.

- Unify, Justworks case study. Source for >10% bounces prevented in outbound enrollments.

- Unify, Plays product page.

- Unify, Signals overview. Source for 25+ native intent signals.

- Unify, Waterfall Enrichment product page. Source for 90%+ contact match, 95%+ company match, 30+ data sources.

- Unify, Email Deliverability product page. Source for 21-day automated mailbox warming, 75% bounces prevented before send.

- Unify, Salesforce integration and HubSpot integration. Source for 15-minute bidirectional sync.

- Unify, Series A announcement. Source for Plays powering ~50% of Unify's new pipeline.

- Unify, The Outbound Sweet Spot guide. Source for Outbound Quarterback (OBQB) pilot-owner role.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
