TL;DR: Signal-driven AI SDR platforms deliver 15-25% reply rates at $80-$180 per qualified meeting. Autonomous agents average 1-3% reply rates at $250-$400 per qualified meeting. The right platform depends entirely on where your team sits on the volume-vs-personalization maturity curve. This guide is for Sales, Growth, and RevOps leaders at B2B SaaS companies evaluating AI SDR tools before committing to a category.

Why Most AI SDR Comparisons Give You the Wrong Answer

Most "best AI SDR platforms" articles rank tools by feature count or pricing. They skip the one question that determines whether any AI SDR will work for your team: where are you on the volume-vs-personalization maturity curve? The answer to that question dictates which category of tool you need before you ever look at a vendor demo.

The AI SDR market has fractured into three distinct functional archetypes: platforms that primarily research (building prospect intelligence), platforms that primarily write (drafting personalized messages at scale), and platforms that orchestrate (detecting signals, qualifying accounts, personalizing, and sending with minimal human intervention). Between 50% and 70% of AI SDR contracts churn before the first renewal, and buying the wrong archetype is the leading reason.

This guide maps the landscape to the maturity curve so you can find your position, identify the archetype you need, and evaluate platforms against criteria that actually predict outcomes.

What Is the Pipeline Generation Maturity Curve?

The pipeline generation maturity curve describes how outbound teams evolve from pure volume plays toward signal-triggered, highly personalized outreach as their GTM motion matures. Every team sits somewhere on this curve, and the right AI SDR architecture shifts depending on where they are.

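The curve has four stages:

- Stage 1: Pure volume plays

- Stage 2: Segmented lists

- Stage 3: Signal-driven plays

- Stage 4: Fully orchestrated, AI-driven outbound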

Teams at Stage 1 need to graduate to Stage 2 before any AI investment pays off. Teams at Stage 3 choosing autonomous agents often regress to Stage 2 outcomes because the tools optimize for send volume rather than signal relevance. The biggest unlock is the jump from Stage 2 to Stage 4, where intent signals and AI orchestration compound into sustained reply rates that manual sequences cannot match.

What Are the Three Modes of AI SDR?

The three AI SDR modes are researcher (prospect intelligence), writer (message drafting), and orchestrator (end-to-end signal-to-send execution). Which mode a platform primarily operates in determines whether it fits your maturity stage and team size. These are functional distinctions based on where in the outbound workflow AI adds value, not marketing categories.

Mode 1: Researcher

Researcher-mode platforms build the intelligence layer before outreach begins. They monitor accounts for buying signals, enrich contacts, qualify against ICP criteria, and surface context that writers or human reps then use to craft messages. The platform does not send; it informs.

- Best for: Teams with strong human SDRs who lack research bandwidth

- Core strength: Signal detection, account qualification, data enrichment

- Known limitation: Does not generate pipeline on its own; requires human or writer-mode execution layer

- Typical timeline to impact: 30-60 days to configure signal sources and ICP filters

- Representative platforms: ZoomInfo (data layer), Clearbit, early-stage intent tools

Mode 2: Writer

Writer-mode platforms take prospect data and draft personalized outreach: email subject lines, message bodies, LinkedIn notes, and follow-up sequences. They accelerate rep throughput but do not own the prospecting or sending motion autonomously.

- Best for: Teams with existing SDR headcount where rep time is the bottleneck

- Core strength: AI-generated message drafts, sequence copywriting, A/B testing at scale

- Known limitation: Quality degrades without strong underlying research; "robotic tone" is a persistent user complaint at scale

- Typical timeline to impact: 1-2 weeks for first campaigns; quality improves over 60 days

- Representative platforms: Regie.ai, Lavender (copilot mode)

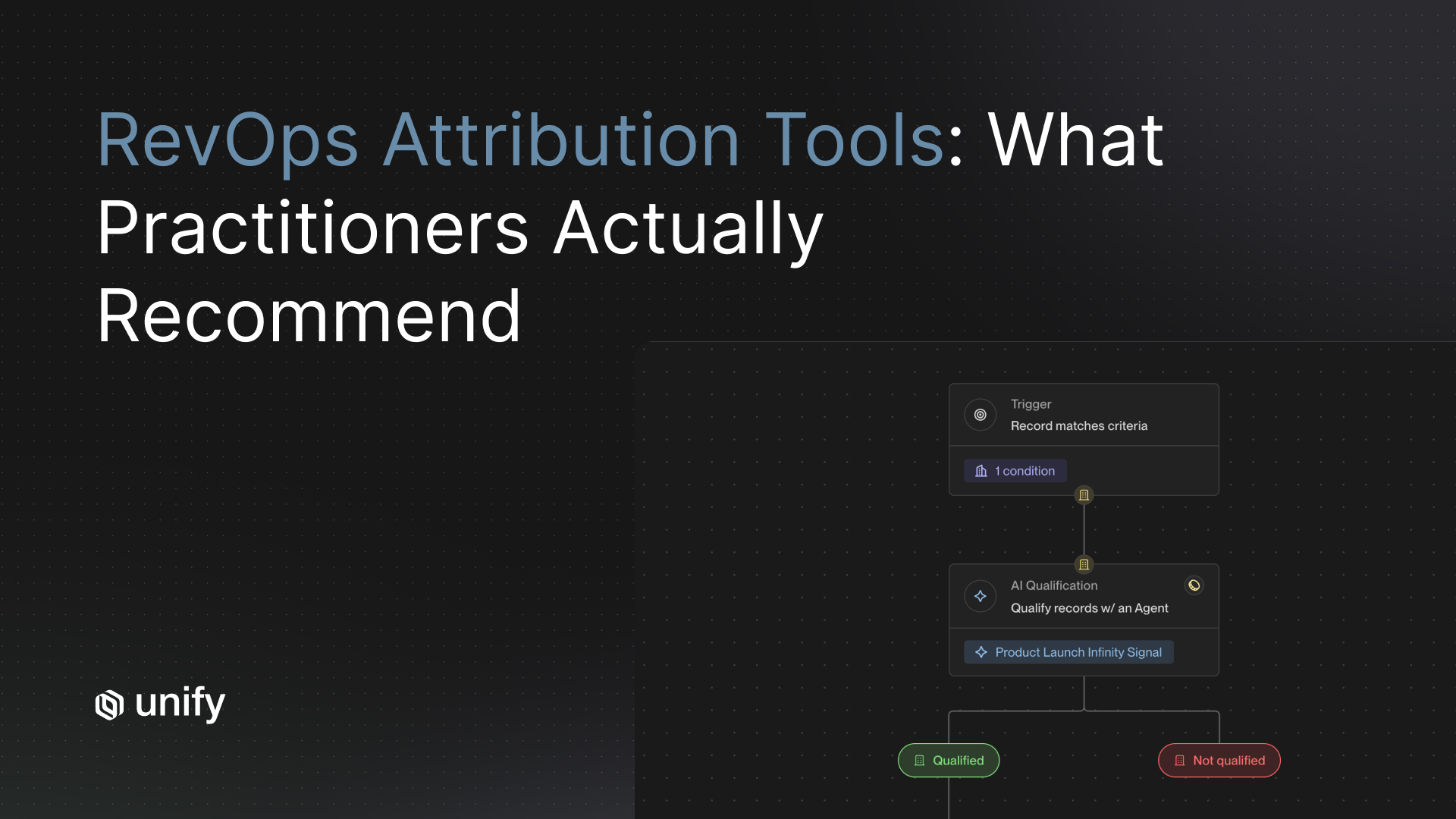

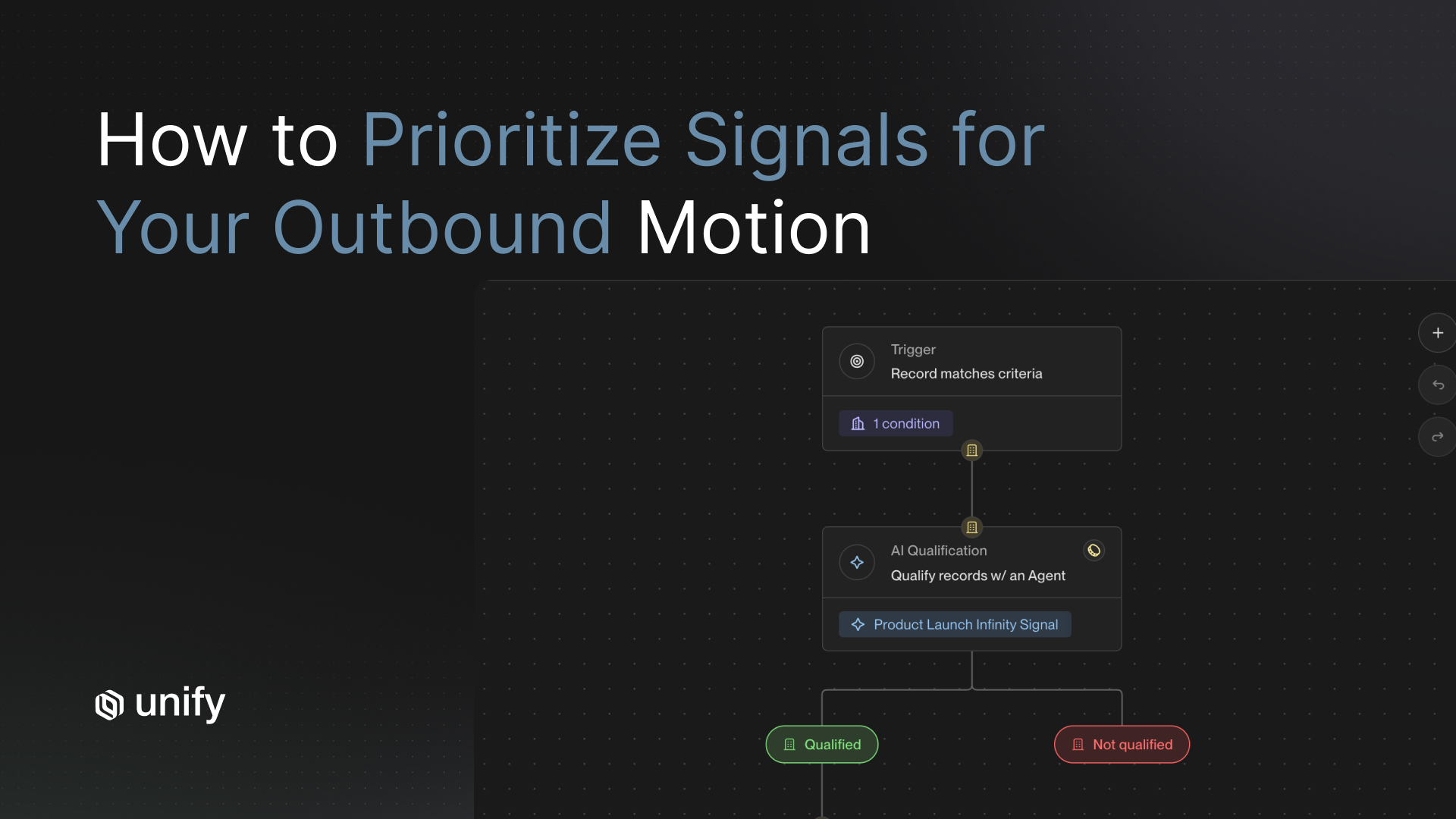

Mode 3: Orchestrator

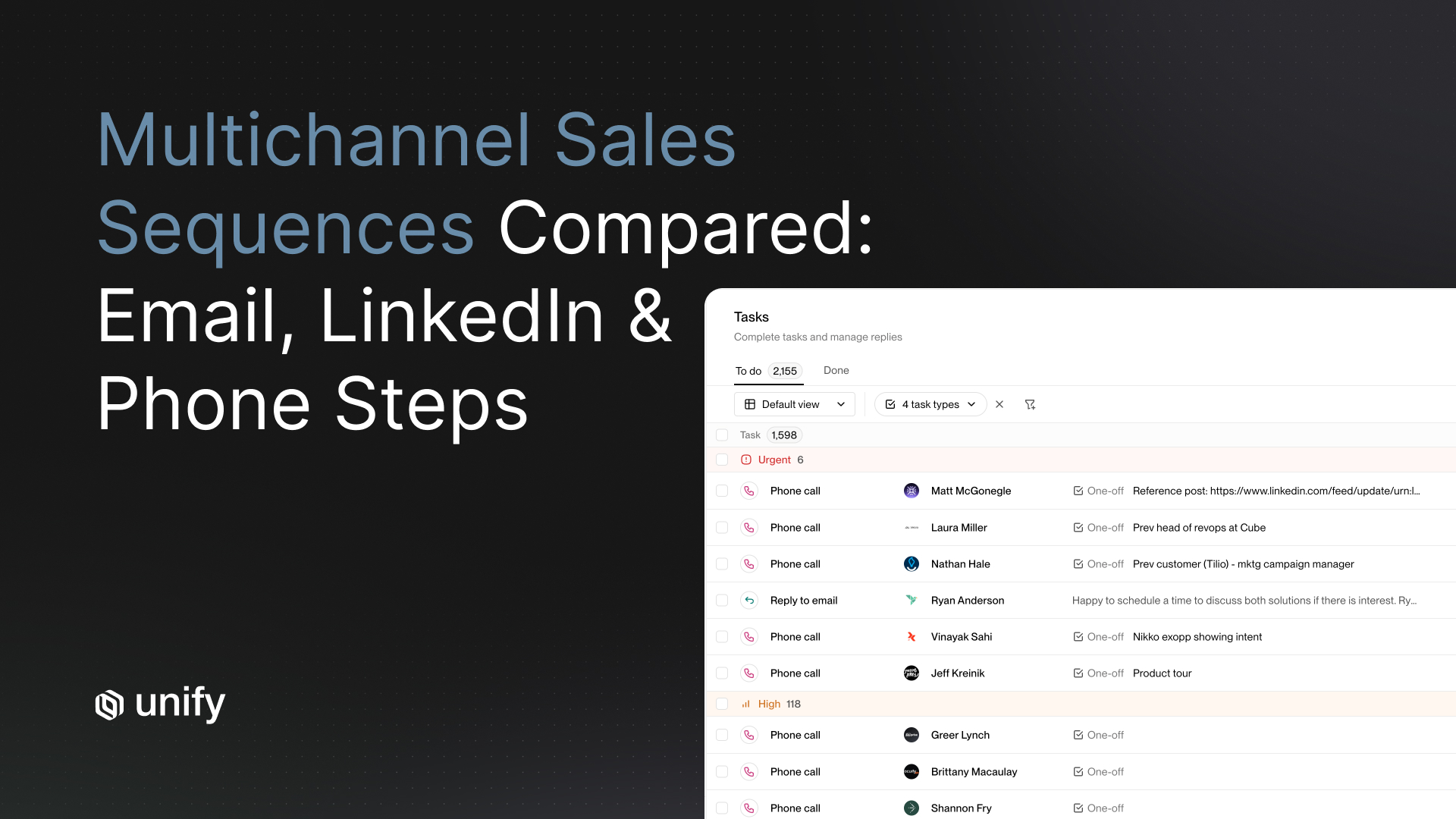

Orchestrator-mode platforms combine research, writing, and execution into a single closed loop. They detect signals, qualify accounts, personalize messages, and send (or queue for review) without a human touching each step. Orchestrators are the only category that generates net-new pipeline autonomously.

- Best for: Teams that want to scale coverage without growing headcount proportionally

- Core strength: End-to-end pipeline generation from signal to sent message

- Known limitation: Brand drift and reply-rate decay are risks if signal quality degrades or personalization becomes templated

- Typical timeline to impact: 60-90 days for full deployment; 30 days for first signals

- Representative platforms: Unify (signal-driven orchestration), 11x (autonomous agent), Artisan (autonomous agent)

How to Evaluate AI SDR Platforms: Vendor-Neutral Criteria

These six criteria predict AI SDR outcomes more reliably than feature checklists. Evaluate every platform against these before watching a demo.

Criterion 1: Signal Depth

Definition: The number and quality of intent signals the platform ingests to trigger and personalize outreach.

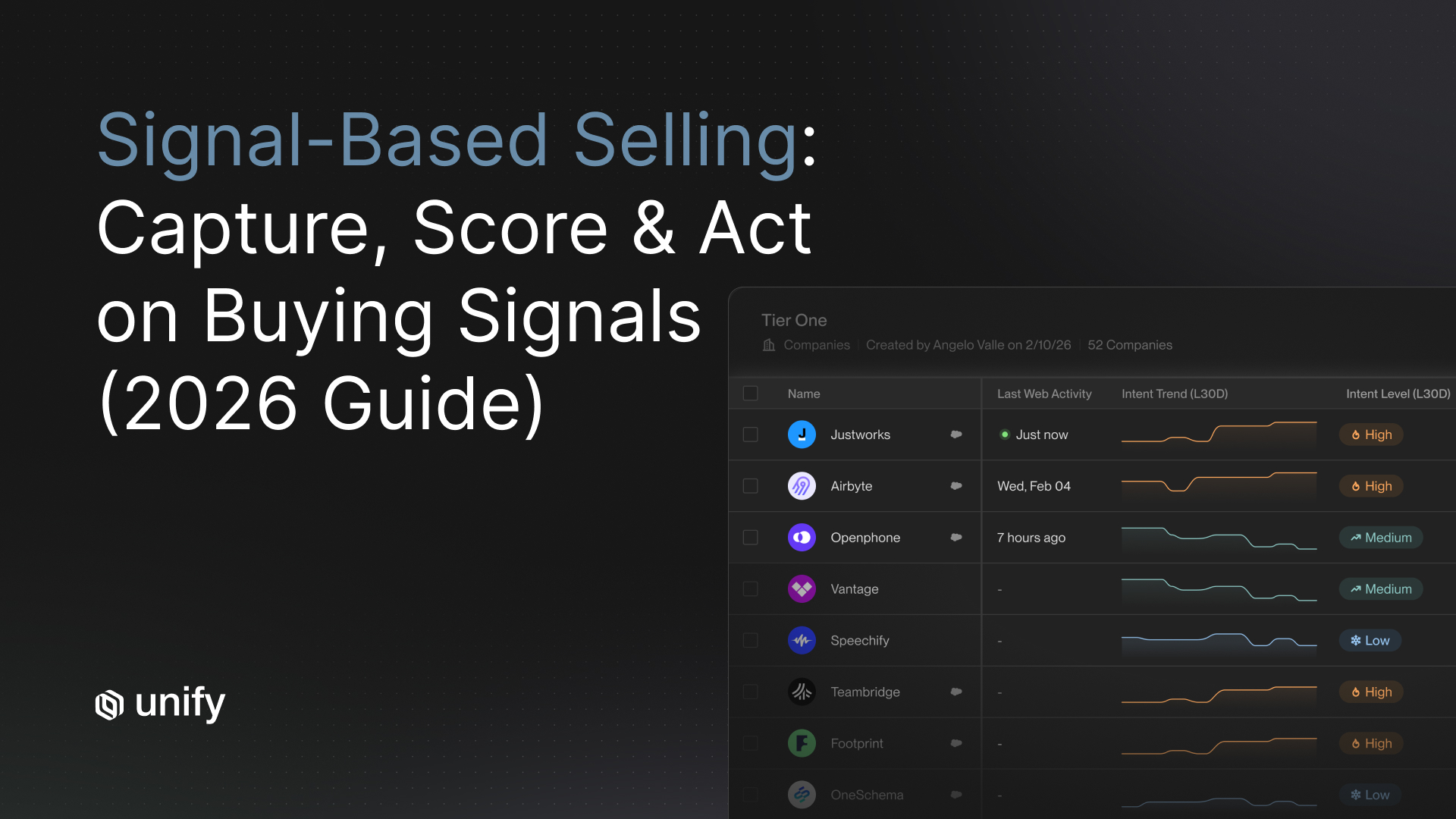

Why it matters: Signal depth is the single strongest predictor of sustained reply rates. Platforms relying on one signal type (e.g., web visits only) exhaust their angle quickly; multi-signal platforms maintain personalization freshness across 90-day cohorts.

How to test: Ask the vendor to show signal coverage for 50 of your actual ICP accounts. Count the distinct signal types firing in the last 30 days.

Pass-fail threshold: 3+ distinct signal types per account per month is a passing signal depth for ABM-style plays.

Red flags: Vendor cannot demonstrate signal coverage on your real accounts during POC; signal library is static rather than real-time.
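The signal-depth test above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: the `(account_id, signal_type, event_date)` tuple format, the function names, and the sample accounts are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import date, timedelta

def signal_depth(events, as_of, window_days=30):
    """Count distinct signal types per account inside the lookback window.

    `events` is an iterable of (account_id, signal_type, event_date) tuples
    (a hypothetical export format).
    """
    cutoff = as_of - timedelta(days=window_days)
    types_by_account = defaultdict(set)
    for account_id, signal_type, event_date in events:
        if cutoff < event_date <= as_of:
            types_by_account[account_id].add(signal_type)
    return {account: len(types) for account, types in types_by_account.items()}

def share_passing(depth_by_account, sampled_accounts, min_types=3):
    """Share of sampled accounts that meet the 3+ signal-type bar."""
    passing = sum(1 for a in sampled_accounts if depth_by_account.get(a, 0) >= min_types)
    return passing / len(sampled_accounts)

# Hypothetical sample data
events = [
    ("acme", "web_visit", date(2026, 3, 20)),
    ("acme", "job_change", date(2026, 3, 22)),
    ("acme", "funding_round", date(2026, 3, 25)),
    ("acme", "web_visit", date(2026, 3, 28)),    # repeat type, counted once
    ("globex", "web_visit", date(2026, 3, 27)),
    ("globex", "job_change", date(2026, 1, 5)),  # outside the 30-day window
]
depth = signal_depth(events, as_of=date(2026, 3, 31))
share = share_passing(depth, ["acme", "globex", "initech"])
```

Accounts with no events in the window (like `initech` here) count as zero signal types, so gaps in coverage lower the passing share rather than disappearing from it.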

Criterion 2: Reply-Rate Durability

Definition: Whether reply rates hold steady or decay significantly over a 90-day cohort window.

Why it matters: Autonomous platforms typically peak in week 2 then decline 30-50% by week 8 as personalization angles repeat. Signal-driven platforms refresh angles through new signal capture, maintaining performance.

How to test: Request cohort data showing reply rates at days 7, 30, 60, and 90. Reject aggregate data; require per-cohort breakdowns.

Pass-fail threshold: Less than 15% decay from the week-2 peak to week 8 is acceptable.

Red flags: Vendor only shares week-one or first-campaign data; no cohort-level reporting available.
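As a rough sketch of the arithmetic behind this threshold, decay can be computed as the relative drop from the week-2 reply rate to the week-8 reply rate within a single cohort. The function names and the cohort figures below are hypothetical.

```python
def reply_rate(replies, sends):
    """Replies divided by sends for one cohort at one checkpoint."""
    return replies / sends if sends else 0.0

def decay_from_peak(peak_rate, later_rate):
    """Relative decline from the peak rate, expressed as a fraction of the peak."""
    if peak_rate == 0:
        raise ValueError("peak rate must be non-zero")
    return (peak_rate - later_rate) / peak_rate

# Hypothetical cohort: 200 sends, measured at week 2 and again at week 8
week2 = reply_rate(replies=36, sends=200)   # 18% reply rate
week8 = reply_rate(replies=32, sends=200)   # 16% reply rate
decay = decay_from_peak(week2, week8)       # about 11% relative decay
passes = decay < 0.15                       # threshold from this criterion
```

Note that the decay is relative to the peak, not a difference in percentage points: a drop from 18% to 16% is a 2-point decline but roughly an 11% decay.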

Criterion 3: CRM Sync Depth

Definition: The fidelity and latency of bidirectional sync between the AI SDR platform and your CRM.

Why it matters: 80% of AI SDR deployments that fail cite sync issues as the root cause. Duplicate records, missed suppression updates, and stale lifecycle data all produce bad outreach that damages pipeline and domain reputation.

How to test: Run a sync stress test on 500 of your duplicate-heavy records during the POC. Measure deduplication accuracy and suppression propagation latency.

Pass-fail threshold: Suppression list propagation under 5 minutes; duplicate rate below 5%.

Red flags: Sync is export/import-based rather than API-native; vendor cannot guarantee sub-60-second latency at p95.
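One way to score the stress test is to log how long each suppression update takes to propagate, then check the 95th-percentile latency and the duplicate rate against the thresholds above. The sketch below assumes latencies are recorded in seconds and that records are keyed by email address. Both are illustrative choices, and the nearest-rank percentile is one of several common definitions.

```python
import math

def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def duplicate_rate(record_keys):
    """Share of records whose normalized key has already appeared."""
    seen, dupes = set(), 0
    for key in record_keys:
        normalized = key.strip().lower()
        if normalized in seen:
            dupes += 1
        else:
            seen.add(normalized)
    return dupes / len(record_keys)

# Hypothetical suppression propagation latencies, in seconds
latencies = [12, 240, 15, 18, 20, 22, 25, 30, 31, 35,
             40, 41, 44, 48, 52, 55, 58, 60, 75, 90]
p95 = percentile_nearest_rank(latencies, 95)

# Hypothetical post-sync contact keys: 40 records, one case/whitespace duplicate
keys = [f"contact-{i}@example.com" for i in range(39)] + ["Contact-0@Example.com "]
dup_rate = duplicate_rate(keys)

passes = p95 < 300 and dup_rate < 0.05   # under 5 minutes, below 5% duplicates
```

Checking a high percentile rather than the mean matters here because a handful of slow suppression updates is exactly what lets an opted-out contact receive another message.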

Criterion 4: Personalization Engine Quality

Definition: Whether AI-generated messages pass a human-written quality bar across a sample of 50+ real prospects.

Why it matters: Personalization that reads as templated destroys reply rates faster than no personalization. "Robotic tone" is the top complaint across AI SDR user reviews and is most common when the writing layer lacks sufficient research context.

How to test: Run the platform on 50 real accounts from your CRM. Have a senior SDR blind-score each message 1-5 without knowing which tool generated it.

Pass-fail threshold: Average human score of 3.5 or above on a 5-point scale.

Red flags: Vendor refuses to run demo on real accounts; demo uses pre-loaded curated companies.
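A blind-scoring run can be organized with a short script that shuffles messages, hides their source from the reviewer, and re-attaches the source only after scoring. The following is a sketch under assumed field names (`source`, `body`, `review_id`); the sample messages and scores are placeholders.

```python
import random
from statistics import mean

def blind_sample(messages, seed=0):
    """Shuffle messages and split them into a reviewer sheet and a hidden answer key."""
    shuffled = list(messages)
    random.Random(seed).shuffle(shuffled)
    sheet = [{"review_id": i, "body": m["body"]} for i, m in enumerate(shuffled)]
    key = {i: m["source"] for i, m in enumerate(shuffled)}
    return sheet, key

def average_by_source(scores, key):
    """Average the 1-5 scores per source once the key is revealed."""
    by_source = {}
    for review_id, score in scores.items():
        by_source.setdefault(key[review_id], []).append(score)
    return {source: mean(vals) for source, vals in by_source.items()}

# Hypothetical messages from the platform under test and from a human rep
messages = [
    {"source": "platform_under_test", "body": "Message A"},
    {"source": "platform_under_test", "body": "Message B"},
    {"source": "human_rep", "body": "Message C"},
    {"source": "human_rep", "body": "Message D"},
]
sheet, key = blind_sample(messages)

# Stand-in for the senior SDR's scores, looked up by body for this demo only
reviewer_scores = {"Message A": 4, "Message B": 3, "Message C": 5, "Message D": 4}
scores = {row["review_id"]: reviewer_scores[row["body"]] for row in sheet}

averages = average_by_source(scores, key)
passes = averages["platform_under_test"] >= 3.5
```

Including a few human-written messages in the same sheet, as this example does, gives the reviewer's scores a baseline to compare against.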

Criterion 5: ICP Density Fit

Definition: Whether the platform's architecture matches the size of your total addressable ICP.

Why it matters: Autonomous high-volume platforms are optimized for large TAMs. Using them on a tight ICP (under 5,000 named accounts) burns the list quickly and damages relationships. Signal-driven platforms work well on tighter ICPs because they trigger only when genuine context exists.

How to test: Map your named account list size to the vendor's recommended use case. Ask the vendor for average accounts-in-flight per customer by team size.

Pass-fail threshold: Platform's architecture should match TAM density. Autonomous agents need 10,000+ accounts; signal-driven platforms work from 500 to 50,000.

Red flags: Vendor does not ask about your ICP size before recommending their platform.
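The account-count ranges in this criterion can be written down as a simple check. The function name is hypothetical, and the boundaries are taken directly from the pass-fail threshold above.

```python
def viable_architectures(named_accounts):
    """Return the architectures whose stated account range covers this ICP size."""
    viable = []
    if 500 <= named_accounts <= 50_000:
        viable.append("signal-driven")
    if named_accounts >= 10_000:
        viable.append("autonomous")
    return viable

tight_icp = viable_architectures(2_000)
large_tam = viable_architectures(20_000)
```

Between 10,000 and 50,000 accounts both architectures fall within range, so the other five criteria decide the choice.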

Criterion 6: Human-in-the-Loop Flexibility

Definition: Whether the platform supports hybrid workflows where humans review and approve AI-generated messages before sending.

Why it matters: Fully autonomous sending reduces rep visibility into what goes out under their name. Teams that route high-value or sensitive accounts through a human approval gate consistently report higher reply quality and lower brand-damage incidents.

How to test: Ask the vendor to show the review-and-approve workflow for a specific play type. Check whether human edits feed back into the AI's training data.

Pass-fail threshold: Platform supports per-play approval settings, not just all-or-nothing autonomous mode.

Red flags: Human review is an add-on rather than a first-class workflow option.

How Unify covers these criteria: Unify monitors over 10 intent signal sources in real time, including website visits, job changes, product usage events, funding rounds, and technology adoption shifts. Signal-driven cohorts on Unify maintain 15-25% reply rates across 90-day windows. CRM sync is bidirectional, API-native, with sub-60-second latency at p95 and automatic suppression propagation. Personalization is generated from live signal context, not static templates, which is why Unify customers report 2-3x higher reply rates versus list-based outbound. Human-in-the-loop approval is a native workflow option at the play level. Unify's AI agents now run at 0.1 credits per operation, a 10x cost reduction from the previous generation, making always-on monitoring across tens of thousands of accounts practical for teams without dedicated data ops resources. The platform has powered $431.8M in pipeline across its customer base as of Q1 2026.

Which Platform Fits Your Maturity Stage?

Each platform profile below uses the same five-field template. Use the "Best for" field to quickly filter to your team profile, then compare on proof points and limitations.

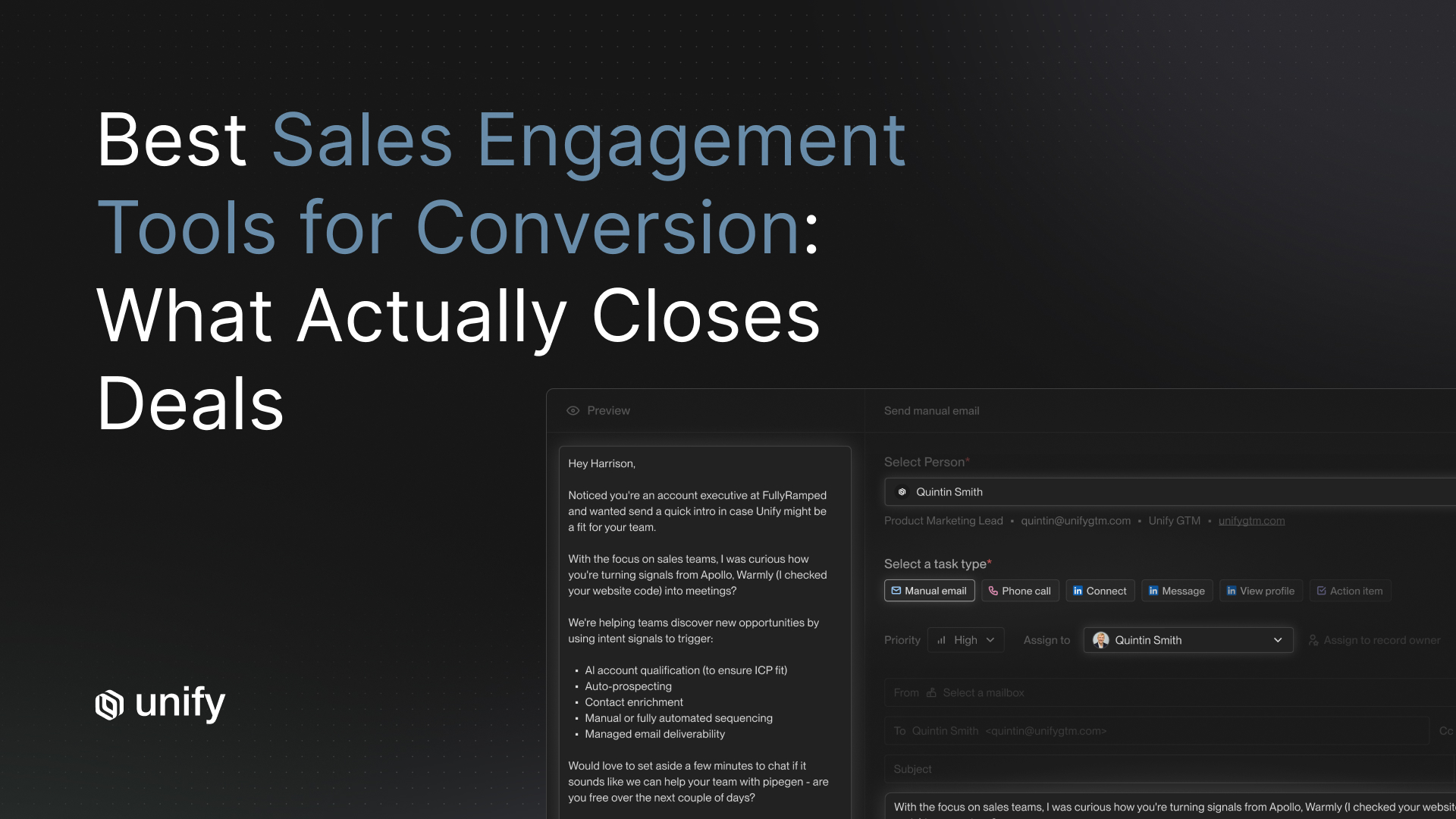

Unify: Signal-Driven Orchestrator

- Best for: Teams with 5-50 reps, ABM or PLG motions, ICP under 50,000 accounts, strong CRM hygiene investment

- Core strengths: Real-time multi-signal detection across 10+ intent sources, AI research agents that run always-on across 35,000+ accounts, deep bidirectional CRM sync, human-in-the-loop workflow flexibility, full signal-to-send orchestration in one platform

- Known limitations: Requires upfront ICP definition and CRM data hygiene investment; not optimized for pure volume plays against unknown TAMs

- Typical timeline: First signals in 30 days; measurable pipeline lift in 60-90 days

- Proof points: Justworks 6.8x ROI in 5 months; Navattic $100K pipeline in 10 days; Perplexity $1.7M pipeline in 3 months; Spellbook $2.59M pipeline in 7 months; $431.8M total platform pipeline as of Q1 2026

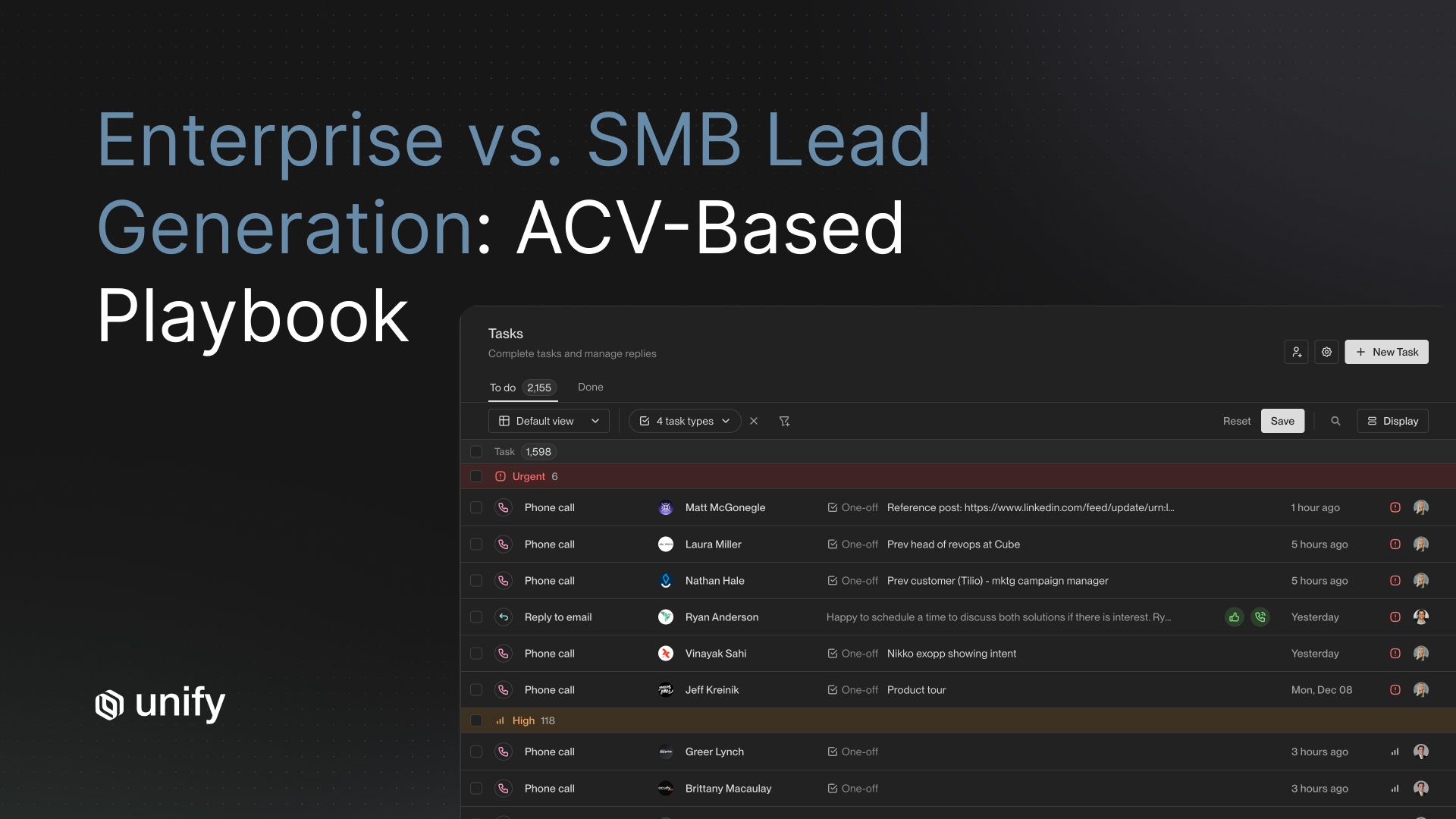

11x: Autonomous Agent for High-Volume Mid-Market

- Best for: Mid-market teams with a large TAM (10,000+ named accounts), annual commitment budget, and tolerance for 1-3% reply rates at scale

- Core strengths: Fully autonomous end-to-end SDR function, multichannel coverage, broad integration ecosystem

- Known limitations: Starts at $5,000/month on annual contracts; reply rates typically decay 30-50% by week 8; personalization can read as templated on tight ICPs

- Typical timeline: First campaigns in 2 weeks; 60-day ramp to stable performance

- Proof points: Positioned for enterprise-scale outbound where coverage matters more than per-touch quality

Artisan (Ava): Autonomous Agent with Built-in Deliverability

- Best for: SMB and mid-market teams wanting email-focused autonomous SDR with strong deliverability infrastructure baked in

- Core strengths: Email warmup, mailbox health monitoring, dynamic sending limits, and signature rotation included; consolidates data, enrichment, and outreach in one product

- Known limitations: $2,000-$2,400/month; limited CRM customization; weaker signal layer than orchestrators; autonomous agents average 1-3% reply rates

- Typical timeline: 2-4 weeks to first campaign; domain warmup adds 4-6 weeks for new domains

- Proof points: Strongest fit for teams replacing a first SDR hire, not augmenting a mature outbound motion

Regie.ai: Content-First Writer and Light Orchestrator

- Best for: Marketing-led or content-heavy sales teams; large enterprise teams where volume outweighs per-message quality

- Core strengths: AI content generation across email, LinkedIn, and phone scripts; 220M+ contact database; autonomous agent mode with signal detection; $180/user/month entry pricing

- Known limitations: Persistent user feedback on "robotic tone" in AI-generated output; weaker intent signal layer compared to dedicated orchestrators; heavy editing required for high-value accounts

- Typical timeline: First drafts in days; 30-60 days to dial in persona-specific quality

- Proof points: Strong fit when writing throughput is the bottleneck, not signal detection or pipeline generation at the account level

Salesloft Rhythm: AI Prioritization Layer for Existing Salesloft Users

- Best for: Teams already on Salesloft that want AI-prioritized task queues without migrating their stack

- Core strengths: Signal-to-action workflow within existing Salesloft cadences; rep prioritization based on engagement signals; minimal migration cost

- Known limitations: AI layer sits on top of Salesloft's existing infrastructure; limited signal coverage outside of Salesloft-native signals; not a net-new pipeline generator for teams off-platform

- Typical timeline: Activation in 1-2 weeks for existing Salesloft users

- Proof points: Best positioned as an upgrade path for teams already invested in Salesloft, not a standalone AI SDR decision

Decision Framework: Which AI SDR Architecture Is Right for You?

Map your team profile to the recommended architecture using the if/then logic below. Each line maps a single dominant variable to a one-line recommendation.

- If you have fewer than 5 reps and a tight ICP under 2,000 accounts: Choose signal-driven orchestration (Unify). Volume tools will burn your list before you see meaningful data.

- If you have 5-50 reps and an ABM or PLG motion: Choose signal-driven orchestration (Unify). The ICP density and relationship-first motion reward precision over volume.

- If you have 50+ reps and a TAM over 20,000 accounts: Autonomous agents (11x, Artisan) become viable. Evaluate on reply-rate durability and CRM sync depth before committing.

- If your bottleneck is rep writing time, not lead quality: Start with a copilot or writer mode (Regie.ai, Lavender). Add signal detection in the next 90 days.

- If you are already on Salesloft and want incremental AI gains: Activate Salesloft Rhythm before evaluating a net-new platform. Avoid stack complexity until you exhaust the existing investment.

- If reply-rate durability over 90 days is your primary metric: Signal-driven orchestration is the only architecture that maintains performance across a full quarter without manual angle refreshes.

- If you are in a regulated industry (GDPR, CCPA) or have a high-brand-risk segment: Require human-in-the-loop approval as a non-negotiable before any platform goes live.
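The if/then rules above can be expressed as a small function for teams that want to run them against several team profiles at once. This is one possible encoding, not a definitive one: the `TeamProfile` fields are hypothetical, and because the source rules can overlap, the order in which they are checked is an assumption.

```python
from dataclasses import dataclass

@dataclass
class TeamProfile:
    reps: int
    named_accounts: int
    motion: str = "sales-led"             # "abm", "plg", or "sales-led"
    bottleneck: str = "lead_quality"      # or "writing_time"
    on_salesloft: bool = False
    regulated_or_high_brand_risk: bool = False

def recommend(profile):
    """Return (recommendation, notes); rule order is an assumed tie-breaker."""
    notes = []
    if profile.regulated_or_high_brand_risk:
        notes.append("require human-in-the-loop approval before go-live")
    if profile.on_salesloft:
        return "activate Salesloft Rhythm first", notes
    if profile.bottleneck == "writing_time":
        return "copilot or writer mode", notes
    if profile.reps < 5 and profile.named_accounts < 2_000:
        return "signal-driven orchestration", notes
    if 5 <= profile.reps <= 50 and profile.motion in {"abm", "plg"}:
        return "signal-driven orchestration", notes
    if profile.reps >= 50 and profile.named_accounts > 20_000:
        return "autonomous agents viable", notes
    return "no single rule applies; weigh the six criteria", notes

small_team = recommend(TeamProfile(reps=3, named_accounts=1_500))
plg_team = recommend(TeamProfile(reps=10, named_accounts=4_000, motion="plg",
                                 regulated_or_high_brand_risk=True))
large_team = recommend(TeamProfile(reps=80, named_accounts=30_000))
```

Profiles that match none of the rules fall through to the final line on purpose; the framework covers dominant variables, not every combination.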

Worked Example: From Flat Pipeline to 6.8x ROI in Five Months

This is a composite scenario based on Unify customer outcomes. Team details are representative, not a single named company.

Signal: A 30-person B2B SaaS company with a 3-person sales team noticed that their cold outbound reply rate had dropped from 3% to under 1% over 18 months. Pipeline coverage was declining despite adding a second SDR.

Diagnosis: Their ICP was well-defined (HR and finance leaders at 500-5,000 employee companies) but outreach was list-based with no signal triggering. Every message went to every account on the same weekly schedule regardless of buying context. The team sat at Stage 1 on the maturity curve.

Fix: They implemented signal-driven orchestration. Website intent signals, job-change alerts for target personas, and product trial events became the triggers for outreach plays. AI agents monitored 8,000 accounts continuously and fired sequences only when at least one signal was active. Human reps reviewed AI-drafted messages for the top 20 accounts per week before sending.

Impact: Within 5 months, the team reported a 6.8x ROI on their platform investment. Reply rates on signal-triggered plays held above 12% at the 90-day mark. The two SDRs covered 8,000 accounts, a coverage level that would have required four additional hires at the previous manual research rate. This outcome pattern matches Justworks's reported results on Unify's platform.

How the Recommendation Changes by Role and Team Type

The right AI SDR architecture shifts meaningfully depending on your role, motion, and company stage. Here are the variants that materially change the answer.

By Role

- VP of Sales / Head of Sales: Prioritize reply-rate durability and cost per qualified meeting over feature breadth. Require 90-day cohort data in every vendor conversation. Default to signal-driven unless your team exceeds 30 reps with a TAM over 10,000 accounts.

- RevOps / Sales Ops: Lead with CRM sync depth evaluation. Run a sync stress test on your duplicate-heavy records before any other criteria. Sync issues are cited as the root cause in 80% of failed AI SDR deployments.

- Growth / Demand Gen: Align AI SDR signals with your existing lead scoring model. Avoid platforms whose signal definitions contradict your MQL criteria. Pair signal-driven outbound with a copilot layer for human SDRs running manual plays in parallel.

- Founder-led GTM (under 5 reps): Signal-driven orchestration gives the most leverage per dollar. Budget $30,000-$60,000 per year. Do not invest in autonomous agents until ICP density exceeds 10,000 accounts.

By Motion

- PLG (product-led growth): Product usage signals are the highest-quality triggers available. Prioritize platforms that ingest in-product events alongside web and CRM signals. Unify's signal layer integrates product usage data natively.

- Sales-led: Multi-signal plays (firmographic shift + intent event + contact change) produce the strongest outcomes. Do not run single-signal triggers in a sales-led motion.

- Expansion (existing customers): Champion change and usage-drop signals are the most actionable. Most AI SDR platforms are built for net-new; verify that expansion plays are first-class workflows, not afterthoughts.

By Company Size

- SMB and startup (under 50 employees): Signal-driven orchestration. Every burned contact is a permanent relationship cost in a small TAM.

- Mid-market (50-500 employees): Evaluate both signal-driven and autonomous based on TAM density. Run a 14-day POC with real accounts before committing.

- Enterprise (500+ employees): Autonomous agents become more viable at scale, but signal-driven orchestration still outperforms on reply quality. Consider a hybrid: orchestrator for named accounts, autonomous for long-tail TAM coverage.

Edge Cases and Common Confusions to Resolve Before Buying

Several adjacent concepts trip up buyers during AI SDR evaluations. Resolving these before entering a vendor process saves weeks of misaligned demos.

AI SDR vs. AI writing assistant: An AI SDR generates net-new pipeline autonomously. An AI writing assistant (copilot) helps human SDRs write faster but does not prospect, research, or send on its own. Many vendors market copilots as AI SDRs. If the platform requires a human to initiate every outreach action, it is a copilot, not an SDR.

Intent data vs. buying signals: Third-party intent data (page-view aggregates from data co-ops) reflects research activity across the web but cannot confirm that the activity came from your specific target persona. First-party signals (website visits, product usage, email engagement) confirm direct interaction with your brand. Signal-driven platforms that rely heavily on third-party intent have shallower personalization angles than platforms ingesting first-party behavioral data.

Volume plays vs. signal-triggered plays: A volume play sends to all accounts that match ICP firmographics on a fixed schedule. A signal-triggered play fires only when a qualifying event occurs. Buyers comparing platforms on "sequences sent per month" are measuring the wrong thing; the right metric is qualified meetings per account reached.

"Fully autonomous" vs. human-in-the-loop: Fully autonomous means the platform researches, writes, and sends without human review. Human-in-the-loop means humans approve before send. Neither is universally better. High-brand-risk accounts (executives, existing customers, regulated industries) need HITL. Long-tail TAM coverage can run fully autonomous with minimal relationship risk.

Platform churn risk: 50-70% of AI SDR contracts churn before first renewal. The most common churn driver is misaligned archetype selection, not platform failure. Teams that select an autonomous agent for a tight ICP, or a writer-mode tool for a pipeline-generation goal, fail regardless of the underlying quality of the software.

Stop or Adapt: Red Flags During and After Deployment

Top 5 Mistakes to Avoid When Evaluating AI SDR Platforms:

- Selecting the wrong archetype first: Buying an autonomous agent for a 2,000-account ICP is the leading cause of AI SDR churn. Match your maturity stage before evaluating vendors.

- Trusting week-one demo data: Reply rates peak in the first two weeks of any new platform; require 60-90 day cohort data showing sustained performance before signing an annual contract.

- Skipping the CRM sync stress test: Sync issues are cited as the root cause in 80% of failed deployments. Test on your real, duplicate-heavy data, not the vendor's clean sandbox.

- Running a demo on the vendor's curated accounts: Pre-loaded demo environments hide real-world failure modes. Insist on running the platform against 50 of your actual ICP accounts.

- Treating AI SDR as a fire-and-forget tool: Signal-driven outbound requires ongoing ICP refinement and signal-source maintenance. Teams that set it up once and stop iterating see performance decay within 60 days.

Go Deeper: Related Guides

If this article surfaced questions about how to set up the underlying infrastructure for AI-driven outbound, these three guides cover the next layer of detail.

- How to choose the right AI SDR architecture for your team: Best AI SDR Software (2026): A Mistake-Driven Buyer's Guide

- How automated outbound fits into a modern GTM stack: Automated Outbound: Your Next Big Growth Channel

- How to build a pipeline generation stack without burning your domain: Best Pipeline Generation Tools for B2B SaaS in 2026

Frequently Asked Questions

Which AI SDR platforms are best for automated outreach?

The best AI SDR platform depends on where your team sits on the volume-vs-personalization maturity curve. Signal-driven orchestrators like Unify deliver 15-25% reply rates at $80-$180 per qualified meeting for teams with 5-50 reps and a defined ICP. Autonomous agents like 11x and Artisan suit high-volume mid-market plays but average 1-3% reply rates. Copilots like Regie.ai assist human writers without replacing the prospecting motion. Match your archetype before evaluating vendors.

What is the difference between an AI SDR researcher, writer, and orchestrator?

AI SDR researchers focus on prospect intelligence: pulling signals, enriching accounts, and surfacing context. Writers use that context to draft personalized outreach at scale. Orchestrators combine both functions and add execution, detecting signals, qualifying accounts, personalizing messages, and sending across channels without human intervention on each step. Most platforms lean toward one mode, though orchestrators like Unify span all three.

What reply rate should I expect from AI-driven outbound?

Reply rates vary widely by approach. Generic cold outbound averages 3.43% platform-wide in 2026, down from roughly 7% two years ago (Instantly, 2026). Intent-triggered campaigns to accounts showing active buying signals achieve 15-25% sustained over 90 days on signal-driven platforms. Unify's own growth team reports 80% open rates and 5% reply rates on intent-based plays versus 30% open rates and under 1% replies on cold lists.

How much does AI SDR software cost?

Autonomous agents like 11x start at $5,000 per month on annual contracts. Artisan runs $2,000-$2,400 per month. Signal-driven platforms like Unify are custom-priced based on seat count and signal volume. The all-in cost per qualified meeting is $80-$180 for signal-driven platforms, $250-$400 for autonomous agents, and $375-$720 for a fully-loaded human SDR.
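To compare quotes on the same footing, cost per qualified meeting can be computed from the components this guide's glossary lists: platform fees, rep time at fully-loaded compensation, and sequence execution overhead. The sketch below uses made-up monthly figures purely to show the arithmetic; they are not vendor data.

```python
def cost_per_qualified_meeting(platform_fees, rep_time_cost, execution_overhead,
                               qualified_meetings):
    """All-in cost for the period divided by qualified meetings booked in it."""
    if qualified_meetings <= 0:
        raise ValueError("need at least one qualified meeting")
    return (platform_fees + rep_time_cost + execution_overhead) / qualified_meetings

# Hypothetical month: all inputs are illustrative
cpqm = cost_per_qualified_meeting(
    platform_fees=4_000,      # platform subscription for the month
    rep_time_cost=2_000,      # rep hours spent on review, at loaded cost
    execution_overhead=500,   # mailboxes, data credits, and similar
    qualified_meetings=50,
)
```

Use the same period for costs and meetings, and the same definition of "qualified" across every vendor being compared.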

When should a team use an autonomous AI SDR versus a signal-driven platform?

Autonomous agents are a better fit when your ICP is large (over 10,000 accounts), your team prioritizes volume over precision, and brand risk from imperfect personalization is low. Signal-driven platforms win when ICP density is under 5,000 named accounts, relationships matter, and your team needs each message to reflect genuine buyer context. Most teams with fewer than 50 reps and an ABM or PLG motion see stronger results from signal-driven orchestration.

What is pipeline generation?

Pipeline generation is the process of identifying qualified prospects, engaging them through outbound or inbound motions, and converting that engagement into qualified sales opportunities. It encompasses lead sourcing, contact enrichment, personalized outreach, follow-up sequences, and meeting booking. Modern pipeline generation combines buying signal detection, AI-driven research, and automated execution to improve both the volume and quality of opportunities created.

How long does it take to see pipeline results from an AI SDR platform?

Most teams achieve measurable pipeline lift within 60 to 90 days of deployment, with the first 30 days spent on setup, CRM integration, and testing. Faster results are possible on intent-based plays: Navattic saw $100K in direct pipeline within the first 10 days on Unify, and Abacum generated $250K in pipeline in under two hours of implementation. Speed depends heavily on CRM data quality and how well-defined the ICP is at launch.

Is outbound sales still effective in 2026?

Yes, but only when combined with personalization and signal intelligence. Outbound pipeline share has declined roughly 3 percentage points since 2021 (Insight Partners, 2025), but SDRs using intent-triggered, personalized outreach still generate 46-73% of total pipeline depending on ACV (TOPO Sales Development Benchmark Report, via Gradient Works). The differentiator in 2026 is timing and relevance: reaching a prospect during an active buying window dramatically improves conversion. Generic volume-based outbound continues to underperform.

Glossary

- AI SDR (AI Sales Development Representative): Software that automates the top-of-funnel prospecting tasks a human SDR typically performs, including researching prospects, writing outreach messages, sending across channels, and booking meetings, either autonomously or in collaboration with human reps.

- Signal-driven outbound: An outreach approach where messages are triggered by specific buying indicators (website visits, job changes, funding events, product usage) rather than sent on a fixed schedule to all accounts on a list.

- Orchestrator (AI SDR mode): An AI SDR architecture that combines researcher, writer, and execution functions into a closed loop, detecting signals, qualifying accounts, personalizing messages, and sending without requiring human intervention at each step.

- Reply-rate decay: The decline in outbound reply rates over the course of a 60-90 day campaign cohort, commonly caused by repeating the same personalization angles until prospects recognize and ignore templated messaging.

- ICP density: The total number of named accounts that qualify as your Ideal Customer Profile. Low-density ICPs (under 5,000 accounts) require precision-first tools; high-density ICPs (over 10,000) can support volume-based autonomous platforms.

- Human-in-the-loop (HITL): A workflow design where AI handles research, drafting, and qualification, but a human reviews and approves each message before it is sent to the prospect.

- Pipeline maturity curve: A framework describing how outbound teams evolve from pure volume plays (Stage 1) through segmented lists (Stage 2), signal-driven plays (Stage 3), and fully orchestrated AI-driven outbound (Stage 4), with corresponding reply rate improvements at each stage.

- Cost per qualified meeting (CPQM): The all-in cost to generate a single meeting with a qualified prospect, including platform fees, rep time at fully-loaded compensation, and sequence execution overhead. A key metric for comparing AI SDR architectures to human SDR costs.

- Buying signal: A behavioral or firmographic indicator that suggests a prospect or account is in an active research or purchase consideration phase, such as a website visit, content download, job posting, funding announcement, or technology change.

- Autonomous agent (AI SDR mode): An AI SDR architecture that handles end-to-end outbound prospecting with minimal human intervention, typically optimized for high-volume outreach across large TAMs at the cost of per-message personalization depth.

Sources and References

- Gartner. "Gartner Predicts By 2028 AI Agents Will Outnumber Sellers by 10X." November 18, 2025. gartner.com

- Instantly. "Cold Email Benchmark Report 2026." 2026. instantly.ai

- Insight Partners. "What's Driving Growth Right Now and What Will Redefine Pipeline in 2026." 2025. insightpartners.com

- Unify. "Customer Stories: How Unify Generated $40M in Annualized Pipeline." 2025. unifygtm.com

- Unify. "Introducing Unify's Next Generation of AI Agents." 2025. unifygtm.com

- Unify. "Automated Outbound: Your Next Big Growth Channel." 2025. unifygtm.com

- Unify. "Best AI SDR Software (2026): A Mistake-Driven Buyer's Guide." 2026. unifygtm.com

- Unify. "The Ultimate List of AI-Powered Pipeline Builders for Growth Leaders." 2026. unifygtm.com

- Unify. "Unify Customers: Powering Millions in Pipeline Every Month." Q1 2026. unifygtm.com

- MarketBetter. "Regie.ai Review 2026: AI Content Generation for Sales Teams Worth $35K/Year?" 2026. marketbetter.ai

- Prospeo. "11x vs Artisan: Honest AI SDR Comparison (2026)." 2026. prospeo.io

- Unify. "Navattic Generates $100K+ in Direct Pipeline Within First 10 Days on Unify." 2025. unifygtm.com

- Gradient Works (citing TOPO Sales Development Benchmark Report). "Benchmarks for Metrics That Matter to an SDR or BDR Team." 2025. gradient.works

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
