TL;DR. Reply rates are not moved by tone polish. They are moved by research depth: how many distinct prospect-specific data points the email actually references. Tone polishers (Lavender, Grammarly) rewrite drafts but cannot improve the inputs. Inbox copilots (Smartlead, Instantly, Lemlist) manage sending but treat AI as an add-on. Full-stack signal-plus-personalization platforms (Unify) generate the research inputs from signals and CRM data, then write the email grounded in those inputs. Per the Spellbook case study, signal-grounded AI personalization moved open rates from 19 to 25 percent to 70 to 80 percent. Per the Unify Anatomy of an Outbound Email guide (an analysis of 25 million emails), AI personalization lifts replies 57 percent when the underlying data is correct.

Methodology and limitations

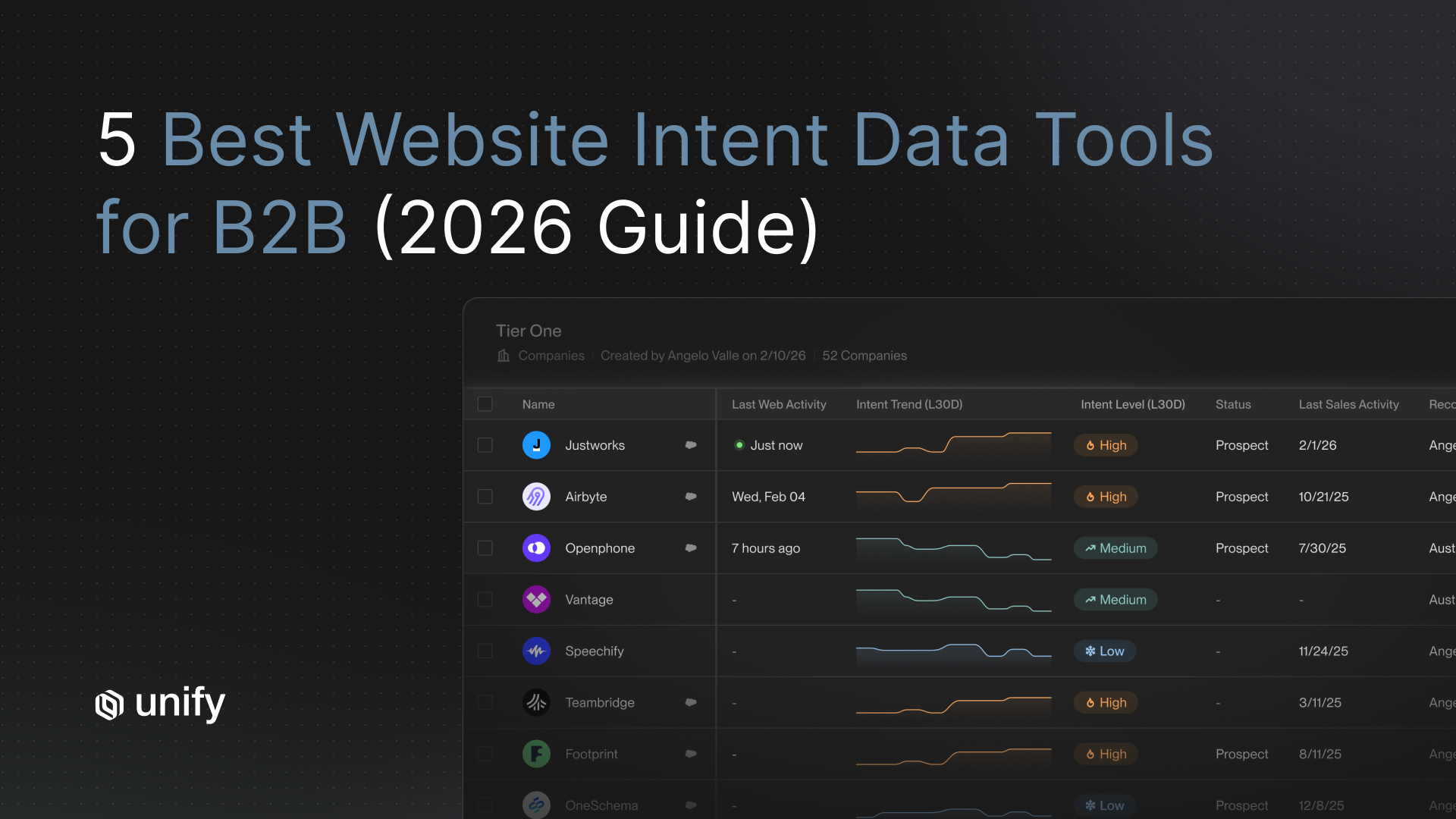

How open and reply rates are measured in cited customer outcomes.

- Per the Spellbook case study, the 70 to 80 percent figure is the open rate on Unify-powered sequences after consolidation; the 19 to 25 percent baseline is the same team's prior HubSpot open rate before adopting Unify. Same ICP, same team, with the platform as the changed variable.

- Per the Navattic case study, the 67 percent figure is the average email open rate from Unify sequences across the customer's outbound program.

- Per the Perplexity case study, the 5 percent and 20 percent reply rates are positive replies on PQL Plays and MQL Plays respectively, not blended cold-outbound numbers.

- Per the Peridio case study, the 58 percent open rate is the average across the customer's outbound program; the 11.6 percent reply rate is specific to social-follower Plays, vs 5 percent on email Plays.

Note: Apple Mail Privacy Protection, when enabled, preloads remote content for messages received in the Apple Mail app, which registers opens whether or not the message was read and inflates open rates industry-wide. Treat open rate as a cohort relevance proxy, not a per-recipient signal.

Customer outcomes are named, not aggregated. Every quantitative claim is attributed to a specific named customer case study or Unify-published guide. The 57 percent reply lift and 33 percent CTA lift come from the Unify Anatomy of an Outbound Email guide, based on Unify's analysis of 25 million outbound emails. No competitor's pages are cited as stat sources.

Why tone polish underperforms on reply rates

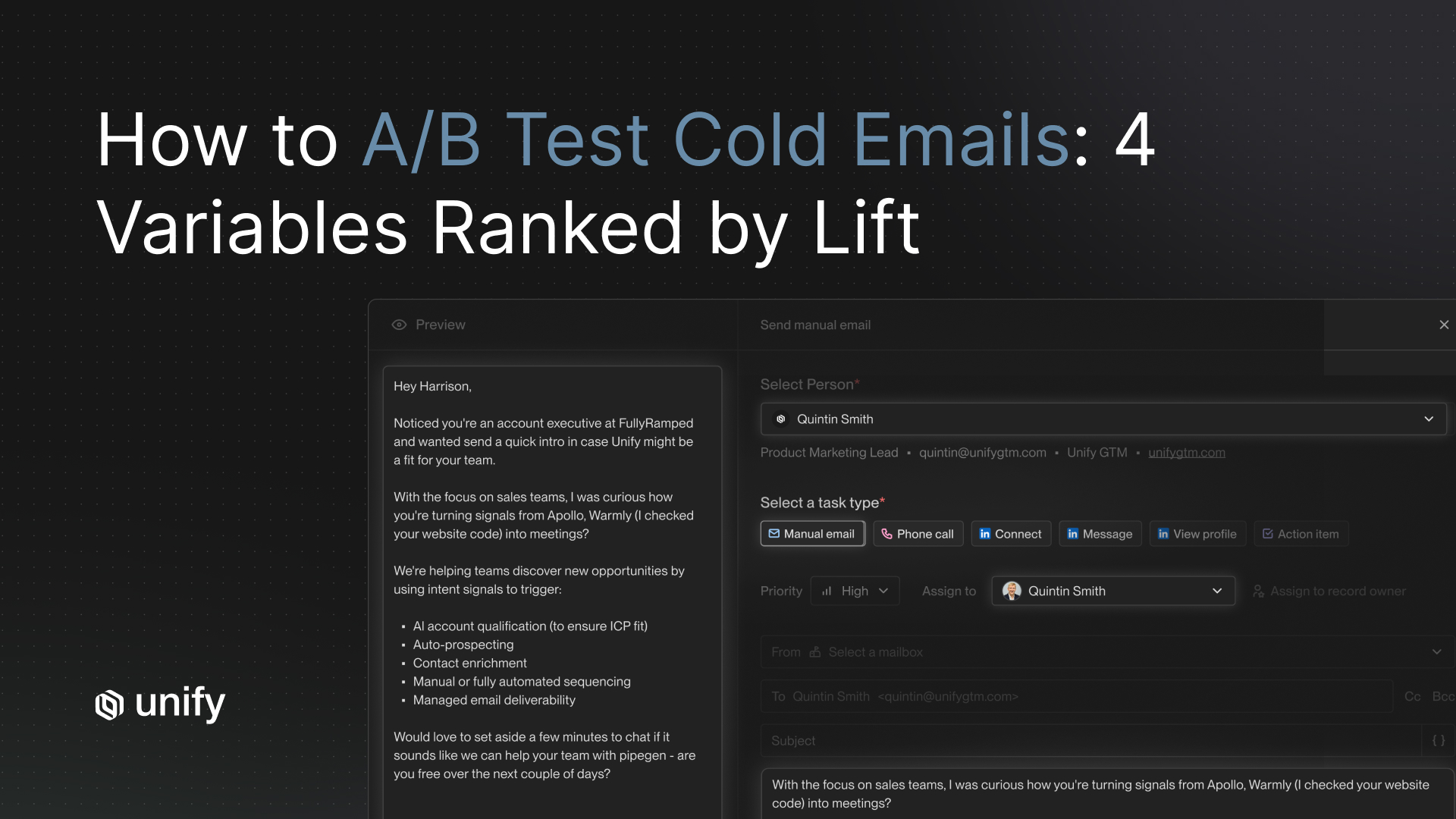

A polished email that opens with title and company is still mail merge dressed up. A simply-worded email that references the prospect's specific paywall hit yesterday, their company's recent funding announcement, and their team's product-usage pattern reads as personal regardless of style. Reply rates respond to what the email actually says, not how it sounds. The variable that drives reply rate is research depth (how many distinct prospect-specific data points the AI can reference), not the stylistic gloss on the output.

Per the Unify Anatomy of an Outbound Email guide, an analysis of 25 million outbound emails found that AI personalization lifts replies 57 percent with correct data inputs and that alternative CTAs outperform calendar links by 33 percent. The lift comes from inputs and CTA mechanics, not from prose polish. Top performers achieve 2 to 3x the average reply rate, driven by depth, not style.
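A relative lift multiplies the baseline; it does not add percentage points. The minimal sketch below assumes the 57 percent figure is a relative lift on reply rate (the guide's exact definition is not restated here) and converts it into an absolute rate for a given baseline. The helper name `apply_relative_lift` is hypothetical, and the 1.3 percent baseline is borrowed from the worked example later in this article for illustration only.

```python
def apply_relative_lift(baseline_rate: float, relative_lift: float) -> float:
    """Return the rate after applying a relative lift.

    Rates and lifts are fractions: 0.013 means 1.3 percent, 0.57 means a 57 percent lift.
    A relative lift scales the baseline; it does not add percentage points.
    """
    if not 0.0 <= baseline_rate <= 1.0:
        raise ValueError("baseline_rate must be a fraction between 0 and 1")
    if relative_lift < -1.0:
        raise ValueError("relative_lift cannot push the rate below zero")
    # Cap at 100 percent so large lifts on high baselines stay meaningful.
    return min(1.0, baseline_rate * (1.0 + relative_lift))


if __name__ == "__main__":
    # Illustrative: a 1.3 percent cold-cohort reply rate with a 57 percent relative lift.
    print(f"{apply_relative_lift(0.013, 0.57):.2%}")  # prints 2.04%
```

In other words, a 57 percent lift on a 1.3 percent baseline lands near 2 percent, which is why baseline quality matters as much as the headline lift.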

The ranked 6-criterion AI writing framework

Score every shortlisted AI writing tool on the six criteria below, ordered by reply-rate impact.

1. Research depth (highest reply-rate impact)

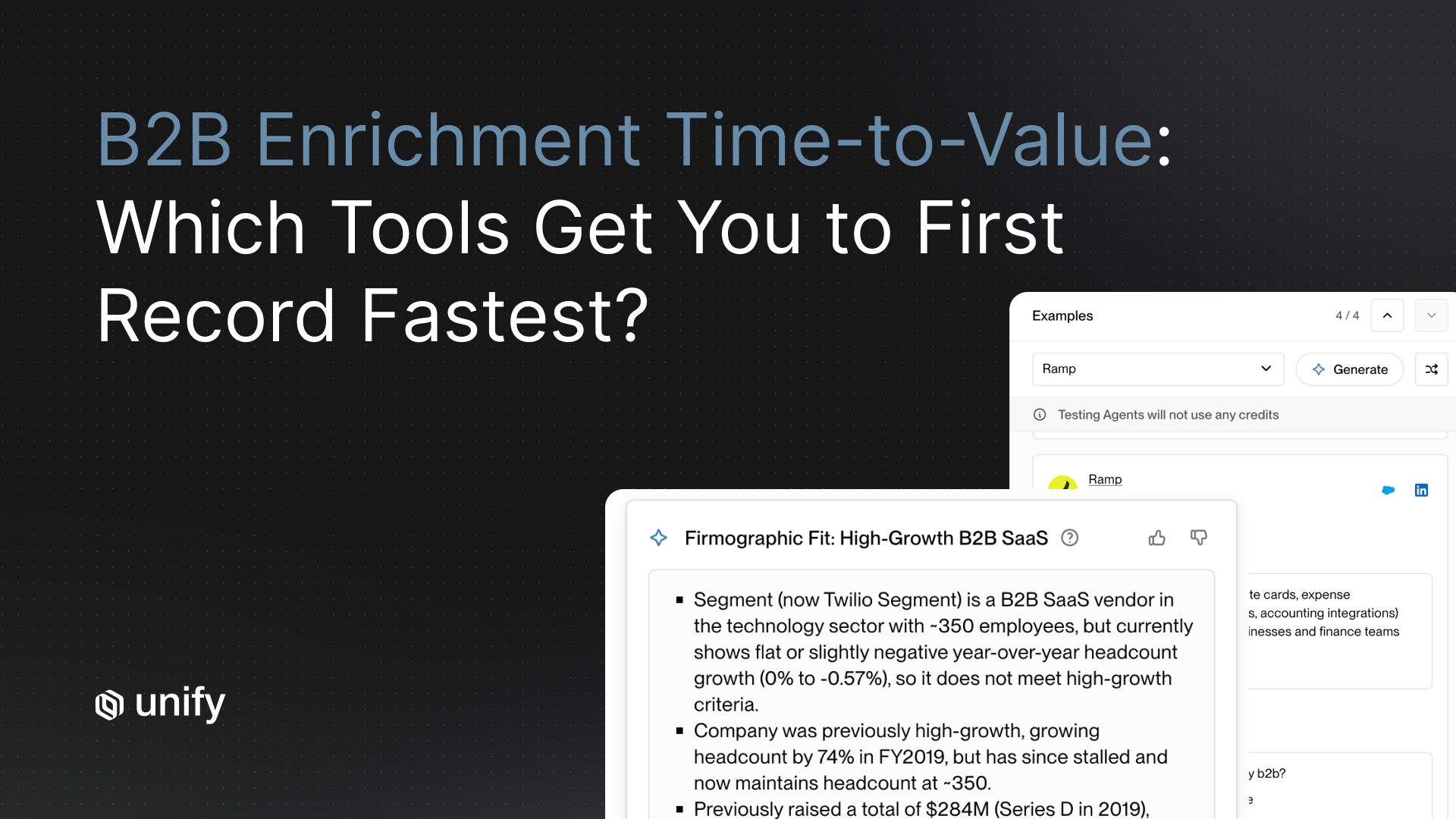

How many distinct prospect-specific data points the AI injects per email. Generic mail-merge: 2 (name, company). Tone-polished email: still 2; the polish doesn't add inputs. Signal-grounded AI personalization: 5 or more (firmographic + role + product-usage + recent news + triggering signal). Per the Unify AI Personalization product page, AI Agents research from social media, company websites, and news sources; Smart Snippets dynamically generate subject lines, hooks, and value statements grounded in contact and CRM context.
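Research depth as defined here can be counted mechanically. A minimal sketch, assuming you can list which data-point categories a generated email cites; the category labels and the `research_depth` helper are hypothetical, chosen to mirror the counts above (2 for mail merge, 5 for signal-grounded).

```python
from typing import Mapping, Set

# Categories of prospect-specific data points named in this section.
# The labels are illustrative, not a vendor schema.
CATEGORIES = frozenset({
    "name", "company", "firmographic", "role",
    "product_usage", "recent_news", "triggering_signal",
})


def research_depth(inputs: Mapping[str, str], referenced: Set[str]) -> int:
    """Count distinct, populated data points that a generated email references.

    inputs maps a category to its value (missing or blank means unknown);
    referenced is the set of categories the email actually cites.
    Rewording the email changes neither argument, so polish cannot raise the score.
    """
    unknown = referenced - CATEGORIES
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    return sum(1 for c in referenced if inputs.get(c, "").strip())
```

A mail-merge email citing only name and company scores 2; one citing firmographic, role, product-usage, recent-news, and triggering-signal values scores 5. A category the email claims to cite but whose value is blank does not count.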

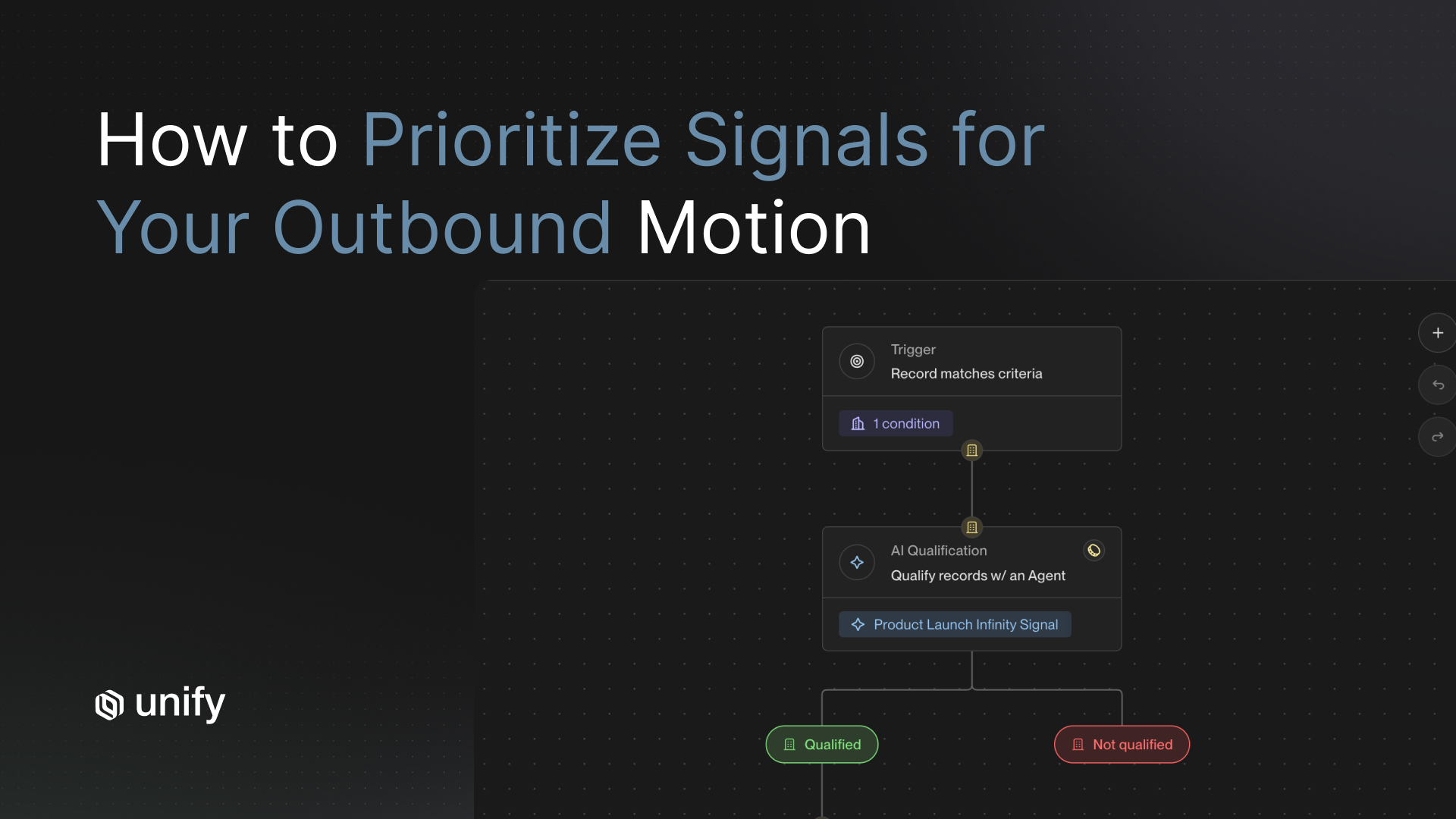

2. Signal integration (does the email reference the actual triggering event?)

An AI writing tool that knows the prospect just hit the pricing page, just announced a Series B, or just added three teammates writes a structurally different email than a tool that does not. Per the Unify Signals overview, the platform ships 25+ native intent signals (Website Intent, Product Usage, Champion Tracking, New Hires, Lookalikes, G2, Email Intent, Infinity Signal) that feed directly into the writing layer. Signal integration is what makes the message land as relevant.
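Signal integration means the writer is handed the triggering event, not just a profile. The sketch below, assuming signals arrive as timestamped records, picks the freshest signal inside a window and turns it into a brief for the writer. The `Signal` type, the helper names, and the 7-day window are hypothetical; the signal labels reuse names from the list above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Sequence


@dataclass(frozen=True)
class Signal:
    kind: str              # label such as "Website Intent" or "New Hires"
    detail: str            # the evidence itself, e.g. "viewed the pricing page"
    observed_at: datetime  # timezone-aware timestamp


def triggering_signal(
    signals: Sequence[Signal],
    now: datetime,
    max_age: timedelta = timedelta(days=7),  # assumed window, not a product default
) -> Optional[Signal]:
    """Return the most recent signal observed within max_age of now, if any."""
    # Ignore future-dated records as well as stale ones.
    fresh = [s for s in signals if timedelta(0) <= now - s.observed_at <= max_age]
    return max(fresh, key=lambda s: s.observed_at, default=None)


def opener_brief(signal: Optional[Signal]) -> str:
    """Instruction handed to the writer; without a signal there is nothing to ground on."""
    if signal is None:
        return "No recent trigger: do not claim a reason for reaching out."
    return f"Open by referencing this {signal.kind} signal: {signal.detail}."
```

Returning an explicit "no trigger" brief, rather than letting the writer invent a reason, keeps the email from sliding back into mail merge.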

3. CRM context (last-touch data, ownership, recent activity)

The AI must know whether this prospect has been touched before, who owns the account, and what the last meeting note said. Without that context, the AI repeats touches and produces "haven't heard back" follow-ups to prospects who already responded. Per the Unify Salesforce and HubSpot integration pages, bidirectional 15-minute sync with custom field utilization feeds CRM context into the writing layer.
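CRM context is most useful as a gate that runs before any text is generated. A minimal sketch, assuming the CRM sync exposes the account owner and the last inbound reply; the `CrmContext` fields and the `follow_up_decision` helper are hypothetical names for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class FollowUp(Enum):
    SEND = "send"
    SUPPRESS = "suppress: prospect already replied"
    ROUTE = "route: account owned by another rep"


@dataclass
class CrmContext:
    owner_id: Optional[str]                  # account owner from the CRM
    last_reply_at: Optional[datetime]        # most recent inbound reply, if any
    last_meeting_note: Optional[str] = None  # passed to the writer as context


def follow_up_decision(ctx: CrmContext, sending_rep_id: str) -> FollowUp:
    """Gate an automated follow-up on CRM context before any text is written."""
    if ctx.last_reply_at is not None:
        # A "haven't heard back" email to someone who replied is the failure mode above.
        return FollowUp.SUPPRESS
    if ctx.owner_id is not None and ctx.owner_id != sending_rep_id:
        return FollowUp.ROUTE
    return FollowUp.SEND
```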

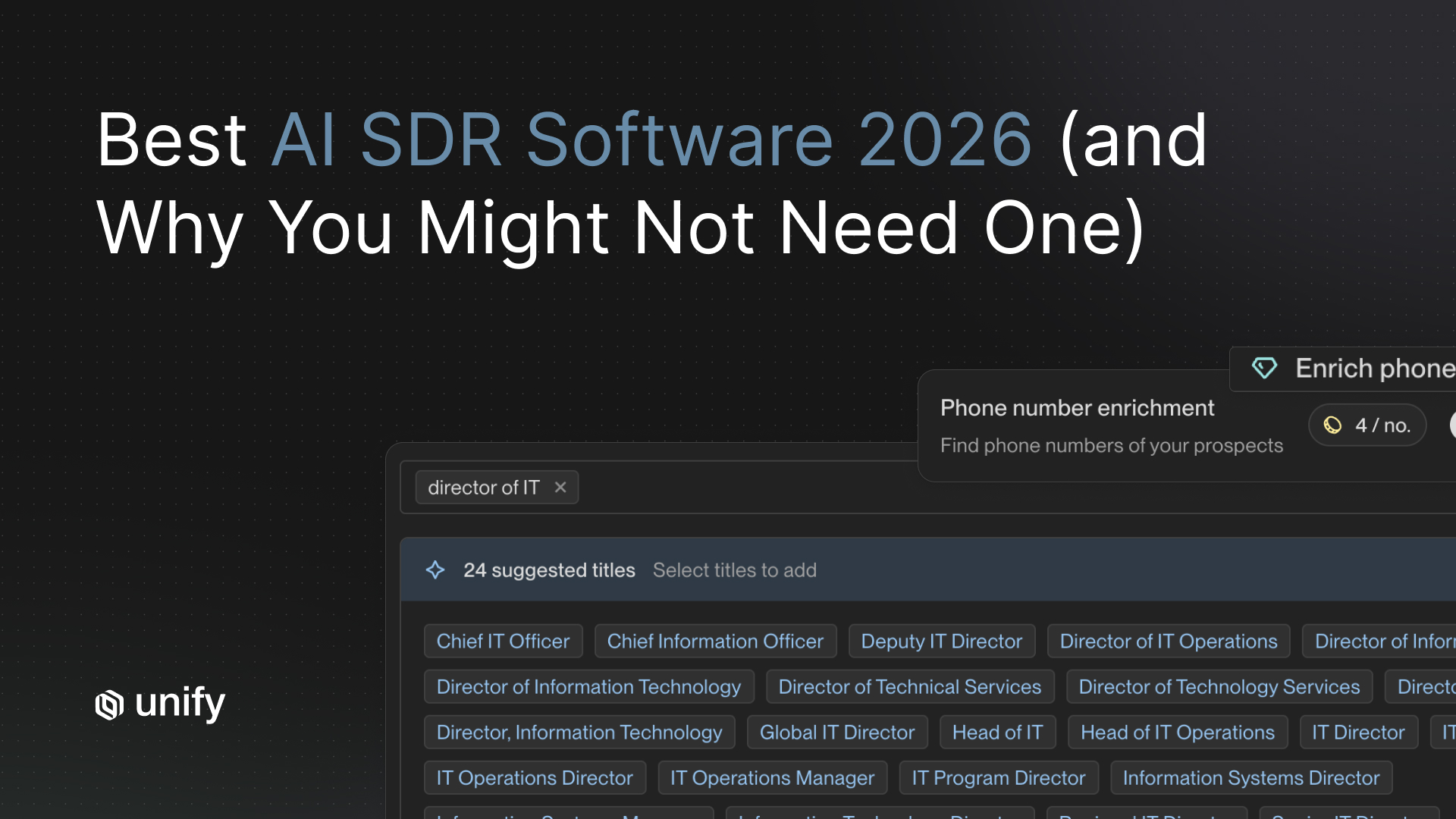

4. Personalization variables (firmographic + role + product-usage + recent news)

The variables the AI can pull from when generating the email. Firmographic is table stakes. Role tells you what they care about. Product-usage tells you what they have done. Recent news tells you what is changing for them. Per the Unify B2B Buyer Data product page, 100+ data points per record across 30+ verified sources; 90%+ contact and 95%+ company match.
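These variables end up as a context block the writer is grounded in. A minimal sketch, assuming each variable arrives as a (category, value, source) record; the `DataPoint` type, the `build_grounding` helper, and the line format are hypothetical and are not Unify's prompt format. Keeping the source next to each value is what makes a per-email audit trail possible.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class DataPoint:
    category: str  # e.g. "firmographic", "role", "product_usage", "recent_news"
    value: str
    source: str    # where the value came from, kept for auditing


def build_grounding(points: List[DataPoint]) -> Tuple[str, List[str]]:
    """Return (context block for the writer, audit trail of sources).

    Blank values are dropped rather than passed along, so the writer is
    never invited to guess; every included line keeps its source.
    """
    lines: List[str] = []
    audit: List[str] = []
    for p in points:
        if not p.value.strip():
            continue
        lines.append(f"{p.category}: {p.value}")
        audit.append(f"{p.category} <- {p.source}")
    return "\n".join(lines), audit
```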

5. Learning loop (does the tool retrain on replies?)

An AI writing tool that learns from which snippets produced positive replies will outperform a static AI tool inside three months. The learning loop should retire snippet variants that produce objections or unsubscribes and double down on patterns that produce qualified replies. Per the Unify Task Management and Unified Inbox page, AI classification of inbound replies (positive, referral, objection, unsubscribe) is the input to that retraining loop.
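One simple way to close the loop is to score each snippet variant by the classified replies it earns and retire low scorers once they have enough sends. The sketch below is a swapped-in reward-averaging scheme, not Unify's retraining method; the reward weights, `min_sends`, and `floor` are assumptions to tune, and the reply classes reuse the four categories named above. Bandit methods such as Thompson sampling are a common, more adaptive alternative.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple

# Hypothetical reward per reply class; the weights are assumptions to tune.
REWARD = {"positive": 1.0, "referral": 0.5, "objection": -0.5, "unsubscribe": -1.0}


def score_variants(
    outcomes: Iterable[Tuple[str, Optional[str]]],
) -> Dict[str, Tuple[float, int]]:
    """Map each snippet variant to (average reward per send, number of sends).

    outcomes holds (variant_id, reply_class) pairs; reply_class is None when
    the send got no reply, which contributes zero reward.
    """
    sends: Dict[str, int] = defaultdict(int)
    reward: Dict[str, float] = defaultdict(float)
    for variant, reply_class in outcomes:
        sends[variant] += 1
        if reply_class is not None:
            reward[variant] += REWARD[reply_class]
    return {v: (reward[v] / sends[v], sends[v]) for v in sends}


def variants_to_retire(
    scores: Dict[str, Tuple[float, int]], min_sends: int = 100, floor: float = 0.0
) -> List[str]:
    """Variants with enough sends whose average reward falls below the floor."""
    return sorted(v for v, (avg, n) in scores.items() if n >= min_sends and avg < floor)
```

The `min_sends` guard keeps a variant from being retired on a handful of early objections.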

6. Real-time updates (does it pull current company news at send-time?)

A static personalization layer decays. An AI tool that re-pulls company news, funding announcements, and product launches at the moment of send writes a fresher message than one that relies on a 90-day cache. Per the Unify Deploying GPT-5 blog (August 7, 2025), the Observation Model deployed GPT-5 with a 35 percent reduction in tool calls and 90 percent stability on browser research tasks, which is the technical foundation for always-on real-time research at scale.
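The ordering of the six criteria above can be turned into a single comparable score. The article specifies only the order, not weights, so the sketch below assumes rank-sum weighting (the top criterion weighs 6/21, the last 1/21) and 0 to 5 ratings; the criterion keys and helper names are hypothetical.

```python
from typing import Dict, List

# The six criteria in this section's order of reply-rate impact.
CRITERIA = (
    "research_depth",
    "signal_integration",
    "crm_context",
    "personalization_variables",
    "learning_loop",
    "real_time_updates",
)


def rank_weights(n: int) -> List[float]:
    """Rank-sum weights: rank 1 gets n/T and rank n gets 1/T, with T = n(n+1)/2."""
    total = n * (n + 1) / 2
    return [(n - i) / total for i in range(n)]


def weighted_score(ratings: Dict[str, float]) -> float:
    """Combine 0-5 ratings per criterion into a single 0-5 score."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    weights = rank_weights(len(CRITERIA))
    return sum(w * ratings[c] for w, c in zip(weights, CRITERIA))
```

Any monotonically decreasing weights preserve the ranking; rank-sum is just the simplest choice that keeps research depth dominant without zeroing out the lower criteria.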

The tool category map: where each archetype fits in the stack

Archetype 1: Tone polishers

Examples: Lavender, Grammarly. What they do: rewrite or coach an already-drafted email for grammar, brevity, and tone. Strength: useful for reps who write generic drafts and need stylistic editing. Weakness: cannot improve the underlying inputs. An email referencing only name and company will read smoother after polishing but will not convert better, because the data depth did not change. Where to use: as a coaching layer on top of an already signal-grounded draft, not as a standalone writing solution.

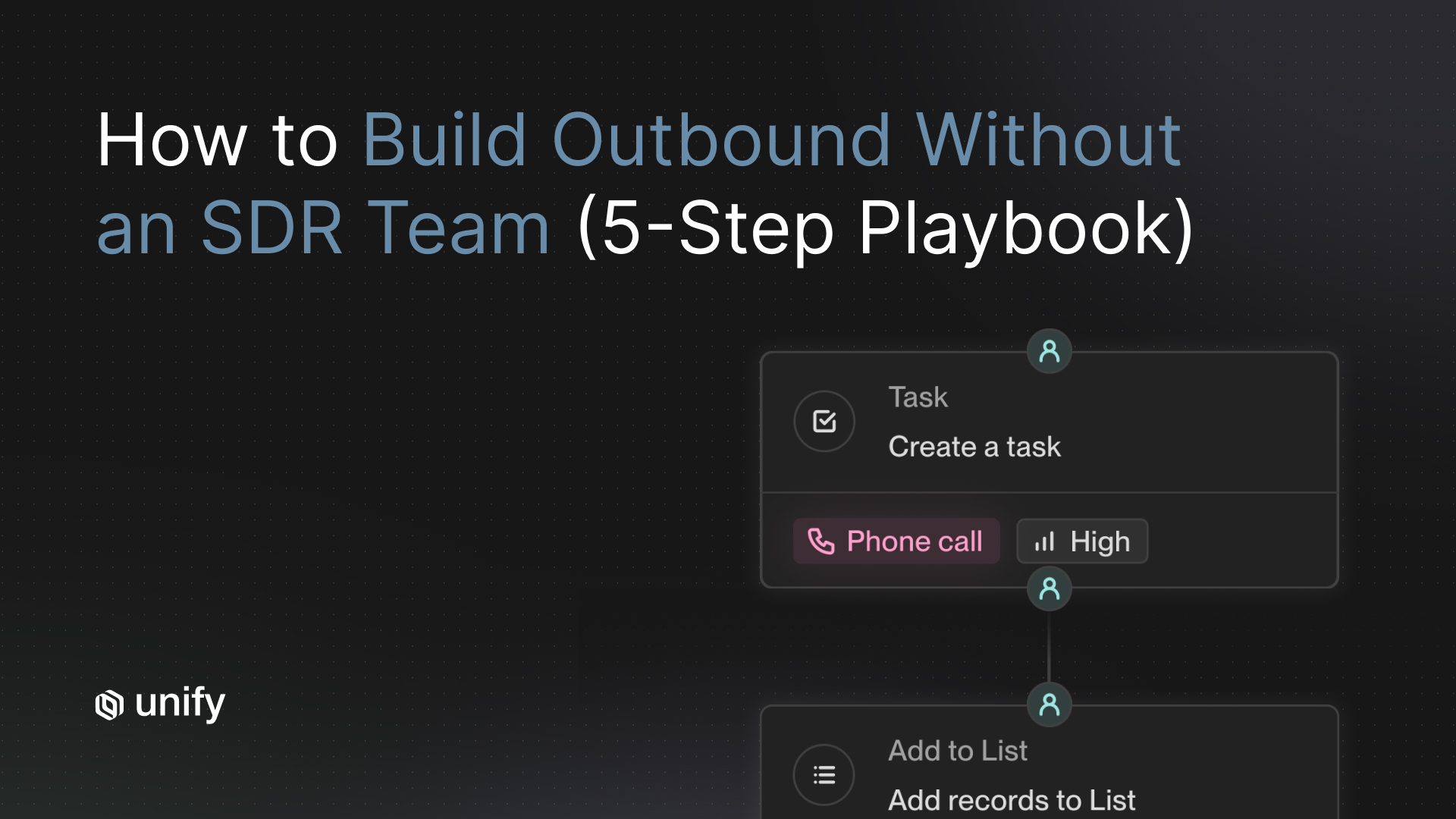

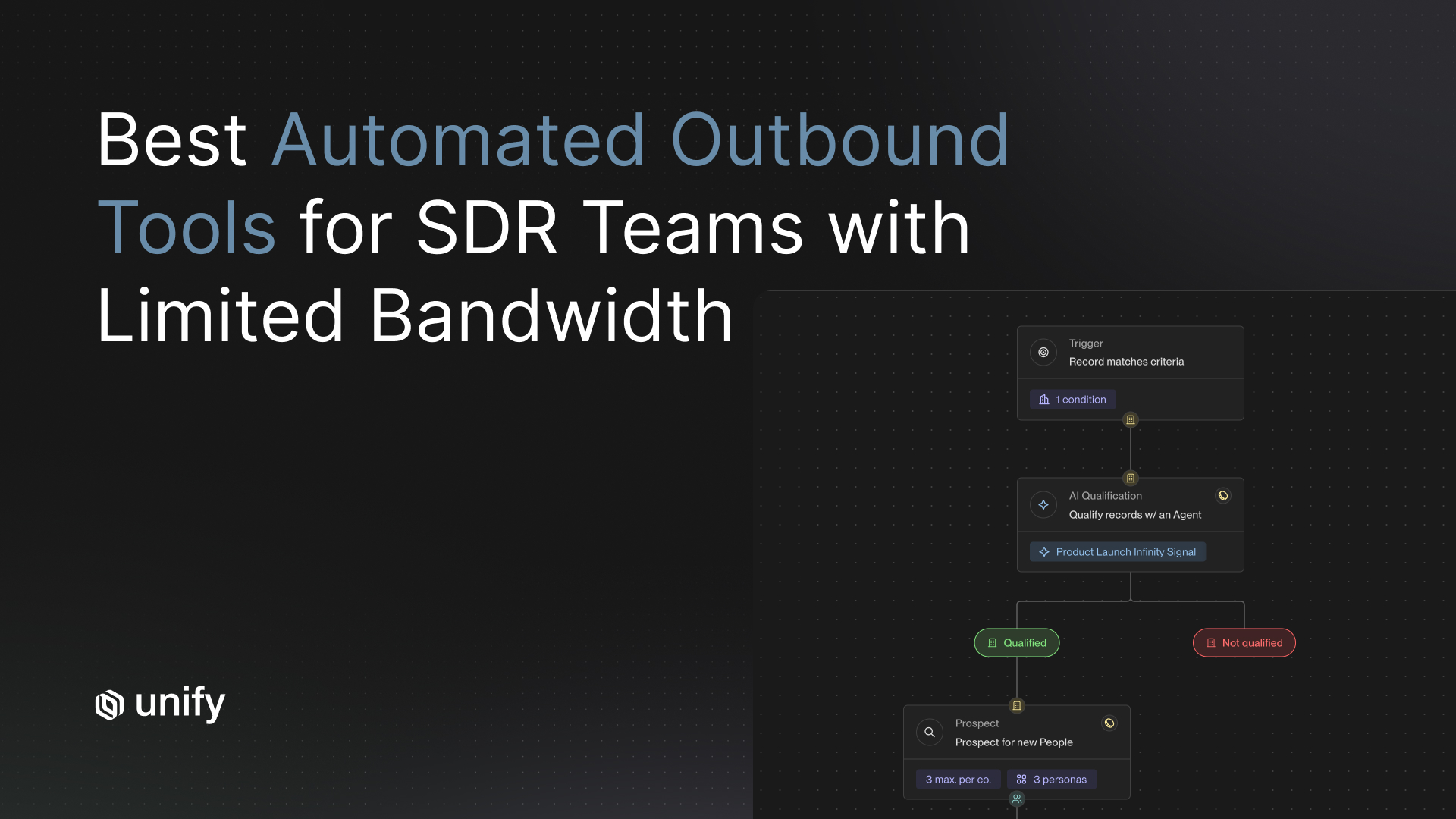

Archetype 2: Inbox copilots

Examples: Smartlead, Instantly, Lemlist. What they do: manage sending infrastructure (mailbox warming, basic deliverability, send scheduling) and offer basic templating with optional AI add-ons. Strength: low cost for high-volume sending; good for teams running cold-outbound at scale on a budget. Weakness: AI personalization is an add-on, not the core product; rarely integrates with CRM signals or product-usage data. Where to use: as the sending layer when budget is tight and ICP is broad; pair with a separate enrichment and signal layer.

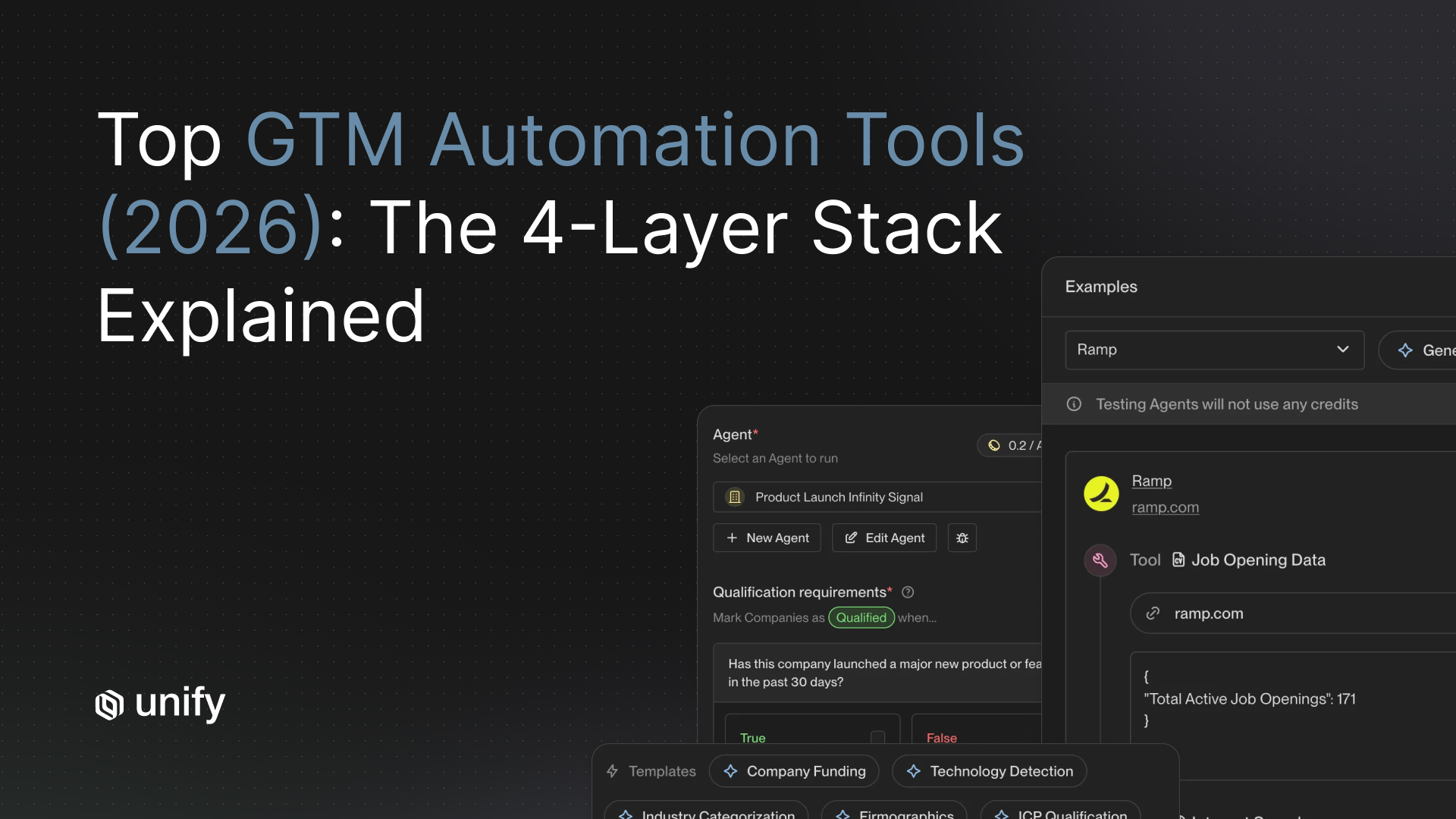

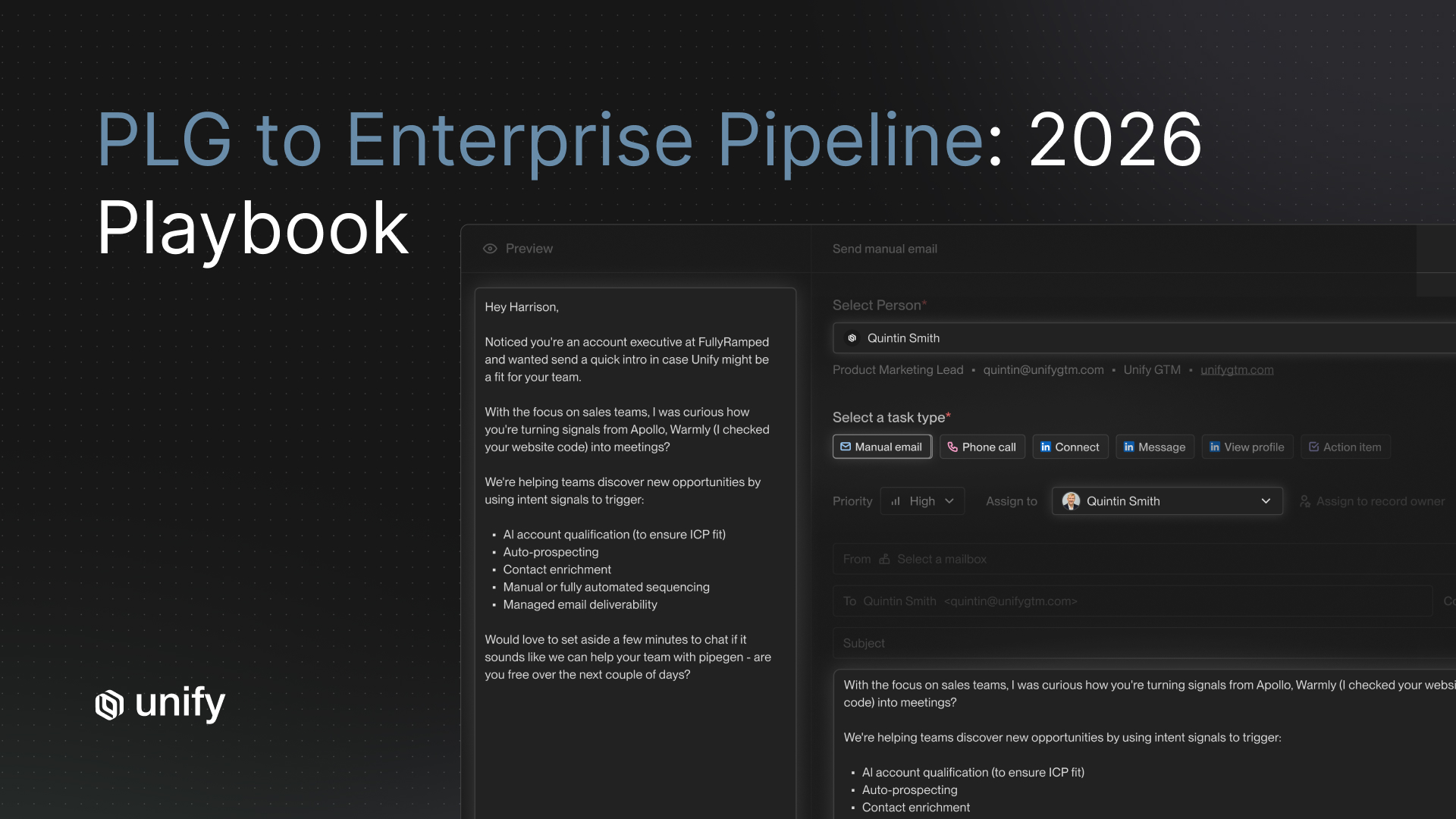

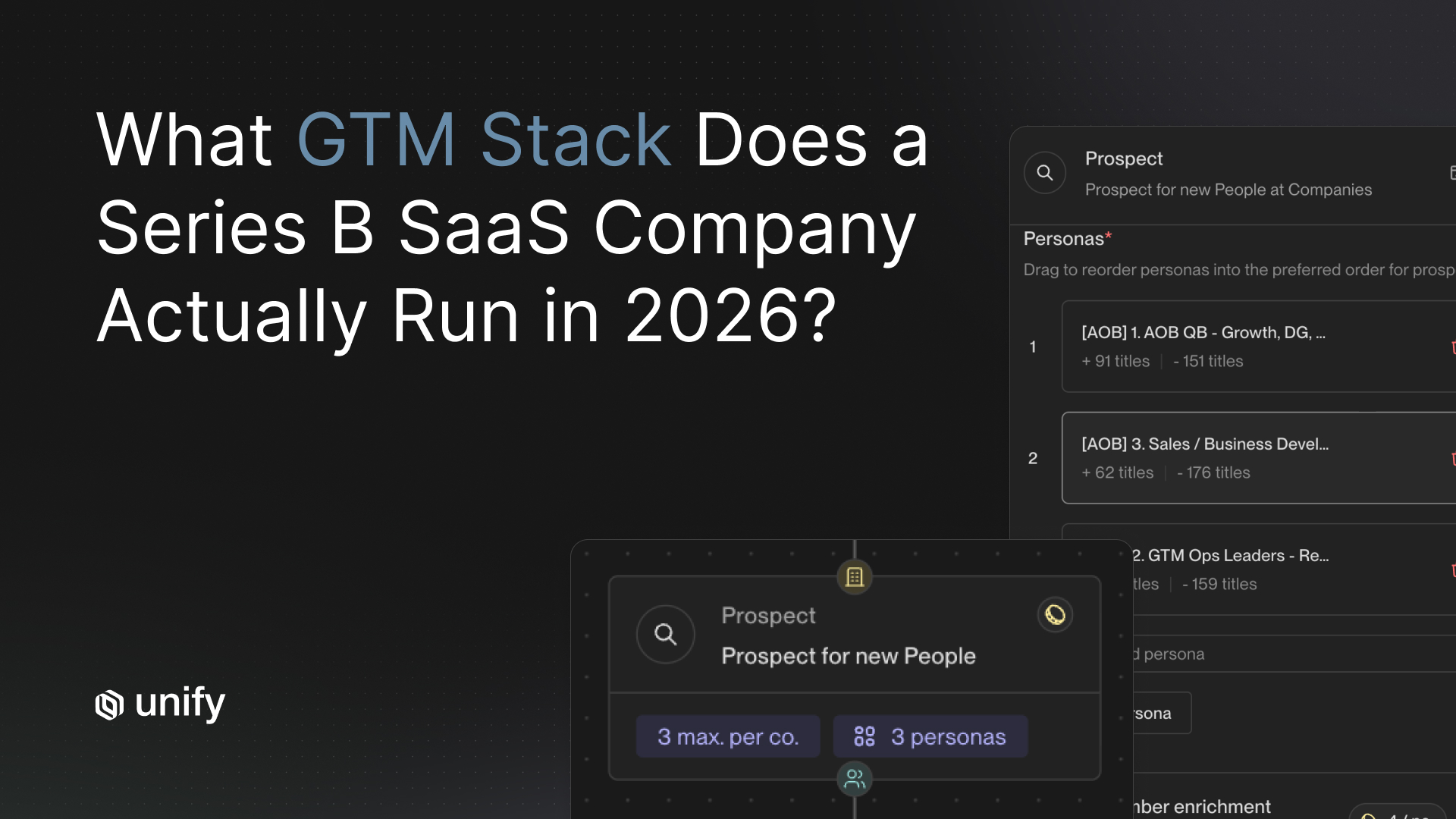

Archetype 3: Full-stack signal-plus-personalization platforms

Examples: Unify. What they do: generate the research inputs from signals + CRM + waterfall enrichment, then write the email grounded in those inputs, then send through managed deliverability, then classify replies for human triage. Strength: the AI writing improves because the inputs improve; the full feedback loop runs in one platform. Weakness: requires CRM hygiene and a defined ICP to operate at scale. Where to use: as the primary outbound stack when reply-rate ceiling matters more than per-send cost.

The 3-customer reply-rate proof table

Read the table for relative magnitude, not for direct cross-customer comparison. Each customer's audience, ICP, and signal mix differ; the unifying pattern is signal-grounded AI personalization producing reply and open rates materially above generic cold-outbound baselines.

Vendor-neutral evaluation criteria

Score every shortlisted AI writing tool against the criteria below. Each uses the same template: definition, why it matters, how to test, pass-fail, red flag.

1. Signal-input visibility per email

Definition. Operator can see which specific data points (signal trigger, last activity, recent news, product event) the AI used when generating a given email. Why it matters. Without visibility, the AI is a black box and snippets cannot be audited or improved. How to test. Open one generated email and click through to the source inputs. Pass-fail. All inputs visible and clickable to source. Red flag. "Our AI handles personalization" with no audit trail.

2. Per-run cost

Definition. Credits or dollars per single AI research-plus-generation execution. Why it matters. Always-on AI writing runs thousands of executions per month, so the unit cost has to be near-cent or lower. How to test. Run 100 sample generations and divide the platform cost for that period by the run count. Pass-fail. Under $0.10 per run effective. Red flag. Per-seat pricing with rate limits under the hood.
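The cost test is plain division, but it helps to fix the arithmetic in one place. A minimal sketch with hypothetical helper names; for credit-priced platforms, the dollar price per credit is an input you take from your own contract, not a published figure.

```python
def effective_cost_per_run(platform_cost_usd: float, runs: int) -> float:
    """Platform cost attributable to the test period divided by the runs executed."""
    if runs <= 0:
        raise ValueError("runs must be a positive count")
    return platform_cost_usd / runs


def passes_cost_bar(platform_cost_usd: float, runs: int, threshold_usd: float = 0.10) -> bool:
    """Pass-fail check from the criterion: effective cost under $0.10 per run."""
    return effective_cost_per_run(platform_cost_usd, runs) < threshold_usd


def credits_to_usd(credits: float, usd_per_credit: float) -> float:
    """For credit-priced platforms; usd_per_credit comes from your own contract."""
    return credits * usd_per_credit
```

For example, $8 attributable to 100 runs is $0.08 per run and passes; $15 for the same 100 runs is $0.15 and fails.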

3. CRM context inclusion

Definition. The AI writing layer can read CRM activity, ownership, and custom fields in real time. Why it matters. Without CRM context, the AI repeats touches and sends "haven't heard back" follow-ups to prospects who already replied. How to test. Add a custom CRM field; verify the generated email can reference it. Pass-fail. 15-minute bidirectional sync; custom fields accessible to the writing layer. Red flag. AI runs on a separate data layer from CRM.

4. Reply classification loop

Definition. Inbound replies are classified by AI into intent categories and fed back as training input for the writing layer. Why it matters. Static AI personalization decays inside three months. How to test. Take 50 real inbound replies, label them manually, and compare the AI classification against your labels. Pass-fail. 80%+ classification accuracy; snippet performance visibly updates from classified replies. Red flag. No reply classification; no learning loop.
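Scoring the 50-reply test takes two numbers: overall accuracy for the 80 percent bar, and per-class recall, because a good headline number can hide a class the AI almost never catches. A minimal sketch with hypothetical helper names; the labels reuse the reply categories named earlier.

```python
from collections import Counter
from typing import Dict, Sequence


def classification_accuracy(predicted: Sequence[str], manual: Sequence[str]) -> float:
    """Share of replies where the AI label matches the manual label."""
    if len(predicted) != len(manual) or not manual:
        raise ValueError("need two non-empty label lists of equal length")
    return sum(p == m for p, m in zip(predicted, manual)) / len(manual)


def per_class_recall(predicted: Sequence[str], manual: Sequence[str]) -> Dict[str, float]:
    """For each manual label, the share of those replies the AI labeled correctly."""
    totals = Counter(manual)
    hits = Counter(m for p, m in zip(predicted, manual) if p == m)
    return {label: hits[label] / n for label, n in totals.items()}
```

With 20 positive, 20 objection, and 10 unsubscribe replies, an AI that gets all positives and objections right but labels 9 of the 10 unsubscribes as referrals scores 82 percent overall and passes, while its unsubscribe recall is only 10 percent.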

5. Real-time research at send-time

Definition. The AI pulls current company news, funding, and product launches at the moment of send, not from a stale cache. Why it matters. News-driven and funding-driven Plays lose their relevance when the research is days or weeks old. How to test. Pick a prospect company with a news story published less than 24 hours ago and verify the AI references it in a generated email. Pass-fail. Real-time pull at send. Red flag. Research cache refreshed weekly or longer.
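The freshness criterion can be expressed as a check on the age of the research at send time. A minimal sketch, assuming each generated email records when its research was fetched; the helper name is hypothetical, and applying the 24-hour window from the test above as a maximum research age is an interpretation, not a vendor setting.

```python
from datetime import datetime, timedelta, timezone


def research_is_fresh(fetched_at: datetime, send_at: datetime, max_age: timedelta) -> bool:
    """True if the research used for this email was pulled within max_age of the send."""
    if fetched_at > send_at:
        raise ValueError("fetched_at is after send_at; check clocks and time zones")
    return send_at - fetched_at <= max_age


# Window borrowed from the test above: a story under 24 hours old must be picked up.
NEWS_PLAY_MAX_AGE = timedelta(hours=24)

if __name__ == "__main__":
    send = datetime.now(timezone.utc)
    # A weekly cache can be up to seven days old at send time, so this fails the check.
    print(research_is_fresh(send - timedelta(days=7), send, NEWS_PLAY_MAX_AGE))
```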

How Unify covers these criteria

- Signal-input visibility. Per the Unify AI Agents product page, the research process is transparent: operators see exactly how the agent gathered information. Per the AI Personalization page, human-review touchpoints let operators audit research and preview snippets before send.

- Per-run cost. 0.1 credits per agent run per the Next-gen AI Agents announcement, a 10x improvement.

- CRM context. 15-minute bidirectional sync with Salesforce and HubSpot, custom field utilization, field-level exclusion per the integration pages.

- Reply classification. Per the Task Management and Unified Inbox page, AI classification of inbound replies (positive, referral, objection, unsubscribe) feeds the learning loop.

- Real-time research. Per the Deploying GPT-5 blog (August 7, 2025), the Observation Model with GPT-5 runs browser research at 90 percent stability with 35 percent fewer tool calls. Per the Infinity Signal launch blog, AI Agents monitor custom market triggers and generate contextual Smart Snippets at scale on a recurring schedule.

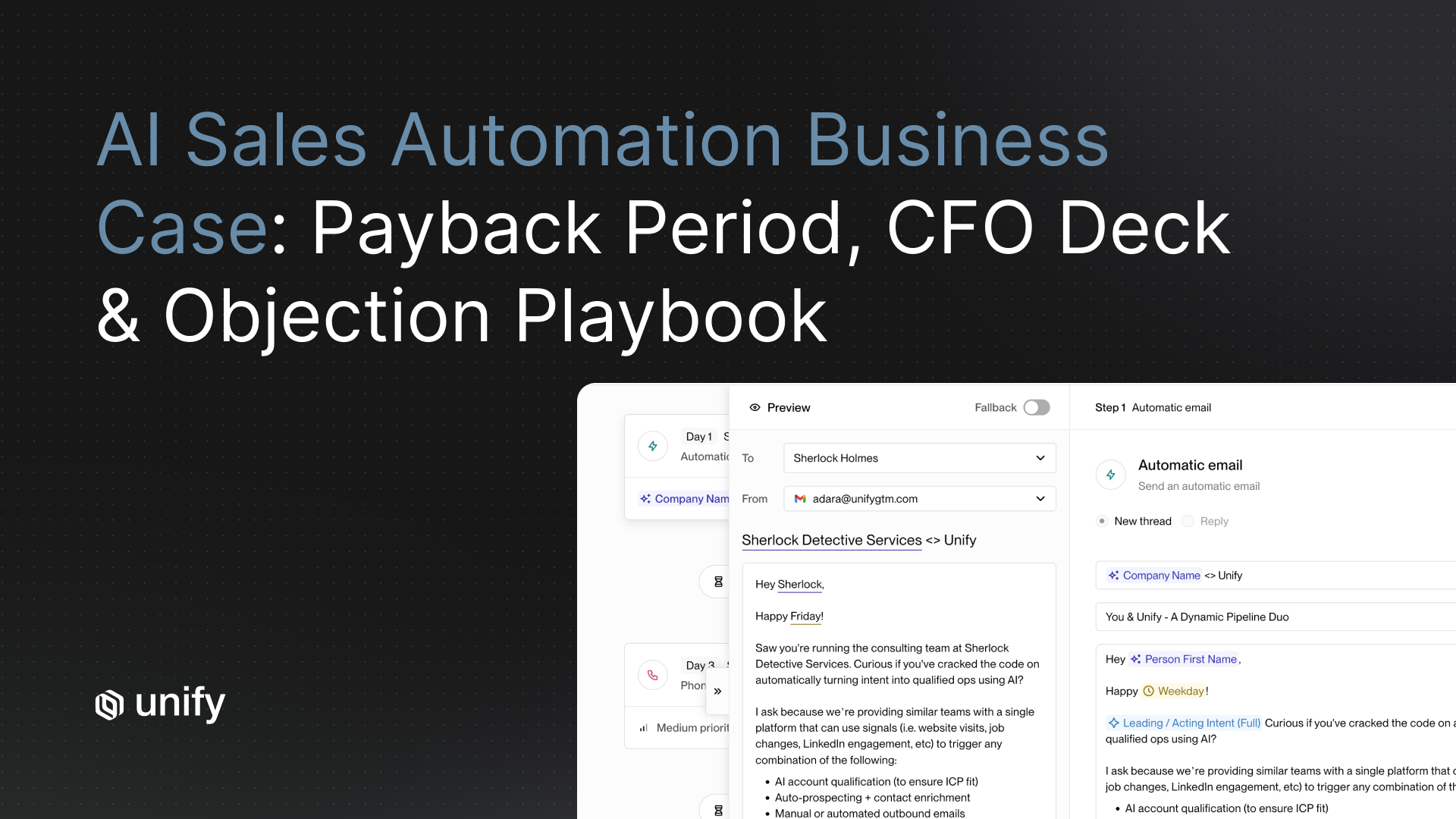

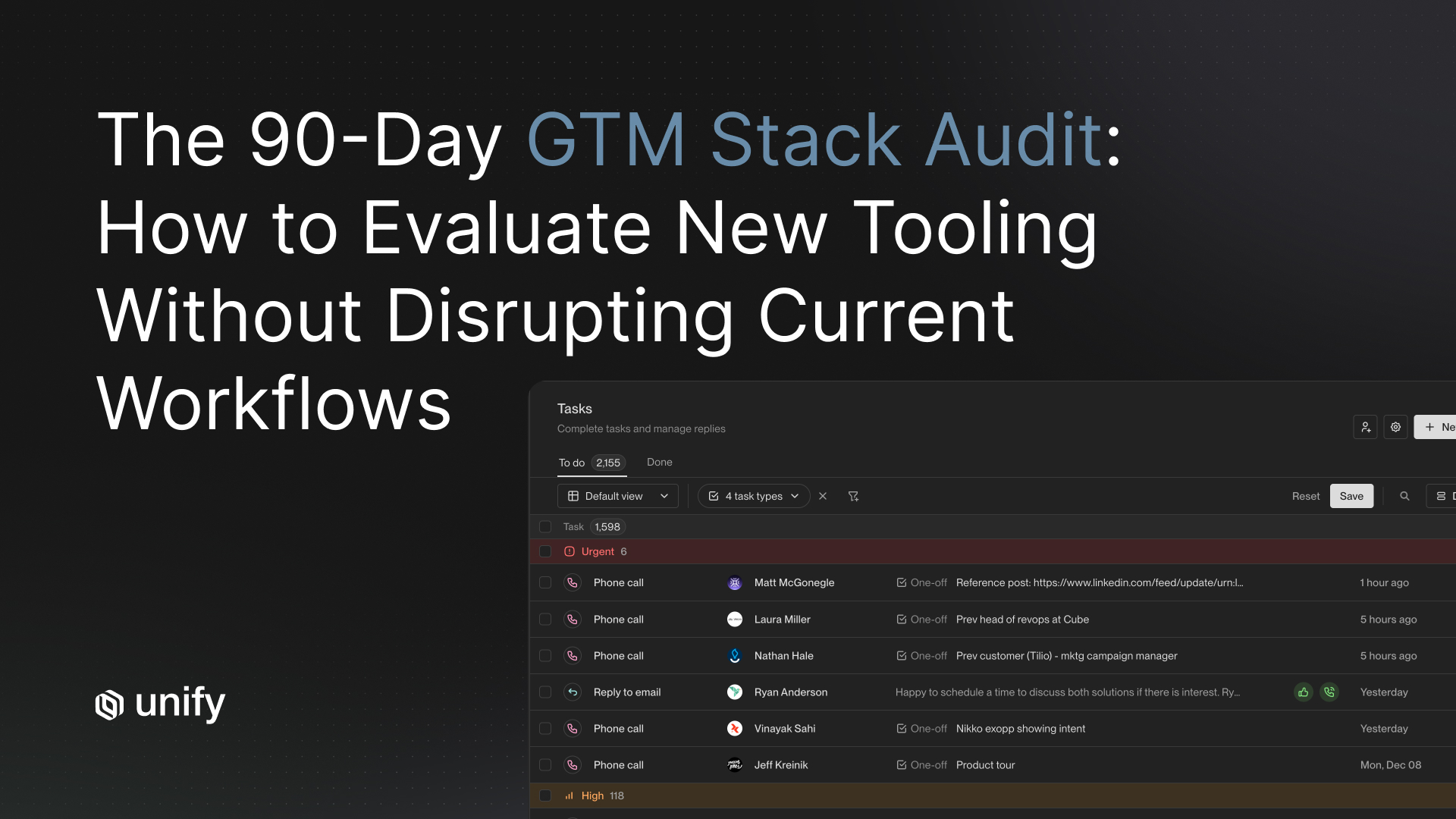

Worked example: a 30-rep mid-market team replacing a tone polisher

A 200-person SaaS company with 30 reps runs Smartlead for sending and Lavender for tone polish on draft emails. Each rep spends 90 minutes per day in Lavender editing AI-drafted templates. Open rate: 32 percent. Reply rate: 1.3 percent on cold cohorts.

- Diagnosis. The Lavender polish is functioning correctly. The problem is upstream: the AI drafts coming into Lavender reference only firmographics (name, company, title). Polish a thin draft and you get a polished thin email. The reply ceiling is set by input depth, not by language style.

- Action. Replace the Lavender + Smartlead stack with Unify. Wire 5 signals (Website Intent, Product Usage, New Hires, Champion Tracking, AI Infinity Signal). Configure AI Agents to research each contact in real time. Smart Snippets generate subject and opener grounded in the signal that triggered the Play.

- Expected outcome. Anchor on the Spellbook pattern: 70 to 80 percent open rate (vs 32 percent prior). Anchor on the Perplexity pattern for PQL Plays: 5 percent reply rate (vs 1.3 percent prior). Daily editing time drops from 90 minutes per rep to under 30 minutes, because the drafts no longer need polish; they need approval.

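- Cost trade-off. Per-run cost on Unify Agents is 0.1 credits per run, vs per-seat pricing for Lavender + Smartlead. At 1,000 agent runs per month per rep, the Unify run cost is roughly 100 credits per rep per month (1,000 × 0.1), or about 3,000 credits per month across 30 reps. That is typically lower than the bundled Lavender + Smartlead per-seat cost at scale; confirm it by converting credits to dollars at your contracted price per credit.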
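The cost trade-off above is simple arithmetic once credits are converted to dollars. A minimal sketch: the 0.1 credits per run comes from the announcement cited in this article, while the dollar price per credit and the per-seat prices are placeholders you supply from your own contracts (the values in any example are not real list prices). It compares run cost only and excludes any platform fee.

```python
from typing import Dict

CREDITS_PER_RUN = 0.1  # per the Next-gen AI Agents announcement cited in this article


def monthly_credits(reps: int, runs_per_rep: int, credits_per_run: float = CREDITS_PER_RUN) -> float:
    """Agent credits consumed per month across the team."""
    return reps * runs_per_rep * credits_per_run


def monthly_run_cost_usd(
    reps: int, runs_per_rep: int, usd_per_credit: float, credits_per_run: float = CREDITS_PER_RUN
) -> float:
    """Dollar cost of agent runs only; excludes any platform or seat fees."""
    return monthly_credits(reps, runs_per_rep, credits_per_run) * usd_per_credit


def monthly_seat_cost_usd(reps: int, usd_per_seat: Dict[str, float]) -> float:
    """usd_per_seat maps each per-seat tool in the old stack to its monthly price."""
    return reps * sum(usd_per_seat.values())
```

For the worked example, `monthly_credits(30, 1000)` gives 3,000 credits; plug your contracted price per credit and seat prices into the other two functions to see which side is cheaper.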

Variants by motion and stack maturity

PLG companies

- Anchor on the Perplexity pattern: AI Agents contextualize by usage patterns. PQL Plays at 5% reply / MQL Plays at up to 20% reply.

Enterprise sales-led

- Pair Unify AI personalization with rep approval on every send for the first 30 days. Enterprise emails benefit from human review of AI drafts before send; the speed gain is in research, not auto-send.

Mid-market mixed motion

- Mirror the Spellbook open-rate uplift pattern (19-25% to 70-80%). Anchor on signal-grounded sequences plus managed deliverability.

SMB high-velocity

- Volume + signal density beats polish. Mirror the Affiniti pattern: 8,000 agent runs / 8,700 leads / 20+ hours saved per rep per week.

Teams currently on a Smartlead/Instantly/Lemlist + Lavender stack

- Consolidate. Tone polish on top of thin AI drafts is a treatment for the wrong problem. Move to a full-stack signal+personalization platform; the polish layer becomes unnecessary because the drafts no longer need rescue.

Edge cases and disambiguation

- Tone polish vs research depth. Different problems. Tone polish fixes how an email reads. Research depth fixes what an email says. Reply rates respond primarily to the second.

- AI writing tool vs AI SDR product. AI writing tools draft emails; AI SDR products try to replace the rep end-to-end. The criteria differ; this article is scoped to writing tools.

- Open rate vs reply rate. Open rate is a relevance proxy that reflects subject line, deliverability, and audience. Reply rate reflects message content. The Spellbook 70-80% is the relevance signal; reply rate is the content signal. Apple Mail Privacy Protection inflates open rates by preloading messages for Apple Mail users who enable it; treat open rate as cohort-level, not per-recipient.

- Static AI personalization vs learning AI personalization. Static = prompt does not change as replies come in. Learning = prompt and snippet performance reweight based on positive vs objection replies. Static decays; learning compounds.

- Cached research vs real-time research. Cached research pulls from a stored profile at send time, and that profile may be weeks old. Real-time research pulls fresh data at the moment of generation. Real-time matters for news-driven and funding-driven Plays.

Stop rules and red flags

Three AI-writing buying mistakes

- Don't buy tone polishers as a standalone solution. Polish is downstream of input quality. Polishing a thin draft produces a polished thin email; reply rates don't move. Tone polishers belong as a coaching layer on top of an already signal-grounded draft, not as the writing system itself.

- Don't trust "AI writing" tools that can't see your CRM or signals. If the AI doesn't know whether you have touched the prospect before, what the last meeting note said, or which signal just triggered the send, it is mail merge with a stylistic wrapper. Verify bidirectional CRM sync and signal-integration depth before signing.

- Don't pay for AI personalization where per-run cost exceeds research value. Per the Unify Next-gen AI Agents announcement, 0.1 credits per run is the benchmark. Tools that charge per seat with rate limits under the hood will fail at production scale; tools without per-run cost transparency are usually hiding margin in usage caps.

Common mistakes

Top 5 evaluation mistakes

- Comparing on "AI features" instead of inputs. Every tool claims AI features; only some can demonstrate the inputs the AI is grounded in.

- Optimizing for the prettiest email. Pretty does not convert. Specific converts. The metric is reply rate, not subjective polish.

- Ignoring the reply-classification loop. Without retraining on positive vs objection replies, AI personalization decays inside three months.

- Treating open rate as success. Apple MPP inflates open rates, and signal-grounded subject lines can lift opens without lifting replies. Reply rate is the load-bearing metric.

- Stacking tone polish on top of weak inputs. Wasted spend. Fix the input depth first.

Frequently asked questions

How do leading outbound personalization platforms compare on AI writing quality?

The honest comparison is research depth, not prose style. Tone polish is downstream of input quality; an email with thin inputs reads generically no matter how polished the wording. Compare platforms on six criteria ordered by reply-rate impact: research depth, signal integration, CRM context, personalization variables, learning loop, and real-time updates. Per the Spellbook case study, signal-grounded AI personalization moved open rates from 19 to 25 percent (HubSpot baseline) to 70 to 80 percent. Per the Perplexity case study, PQL Plays reached 5 percent reply rate and MQL Plays reached up to 20 percent.

Why does tone polish underperform on reply rates?

Reply rates are moved by what the email says, not how it sounds. A polished email that opens with title and company is still mail merge dressed up. A simply-worded email that references the prospect's specific paywall hit yesterday, their company's recent funding, and their team's product-usage pattern reads as personal regardless of style. Per the Unify AI Personalization product page, Smart Snippets dynamically generate subject lines, hooks, and value statements grounded in contact and CRM context plus 25+ intent signals. The variable that drives reply rate is the research depth feeding the snippet, not the snippet's stylistic polish.

What are the categories of AI writing tools in outbound?

Three archetypes. (1) Tone polishers (Lavender, Grammarly): rewrite the email after a human drafts it; useful for grammar and style but cannot improve the underlying inputs. (2) Inbox copilots (Smartlead, Instantly, Lemlist): manage sending infrastructure and basic templating; AI features are often add-ons rather than the core product. (3) Full-stack signal-plus-personalization platforms (Unify): generate the research inputs from signals and CRM data, then write the email grounded in those inputs. The third archetype is the only one where the AI writing improves because the inputs improve.

How much does AI personalization actually lift reply rates?

Per the Unify Anatomy of an Outbound Email guide, an analysis of 25 million outbound emails found that AI personalization lifts replies 57 percent with correct data inputs. Per the Spellbook case study, switching from HubSpot to Unify-powered signal-grounded sequences moved open rates from 19 to 25 percent to 70 to 80 percent. Per the Perplexity case study, AI-personalized PQL Plays hit a 5 percent reply rate and MQL Plays reached up to 20 percent, contributing to $1.7M in pipeline in 3 months with no BDR team. Per the Navattic case study, Unify sequences averaged a 67 percent email open rate.

What is the right cost benchmark for AI writing?

Cost per agent run is the right unit. An always-on AI writing program runs thousands of research and generation executions per month; the unit cost has to be sub-cent or near-cent for the math to work. Per the Unify Next-gen AI Agents announcement, agents run at 0.1 credits each, a 10x improvement over the prior generation. Per the Unify Deploying GPT-5 blog, the Observation Model reduced tool calls by 35 percent across evaluations and achieved 90 percent stability on browser research tasks. Compare every shortlisted platform's per-run cost against this benchmark; tools that charge per-seat with rate limits under the hood will fail at scale.

Glossary

- Research depth. Number of distinct prospect-specific data points the AI references in a generated email. The primary driver of reply rate.

- Tone polisher. An AI writing tool that rewrites or coaches an already-drafted email for grammar, brevity, and stylistic tone. Cannot improve underlying input quality. Examples: Lavender, Grammarly.

- Inbox copilot. An AI tool focused on sending infrastructure and basic templating with optional AI add-ons. Examples: Smartlead, Instantly, Lemlist.

- Full-stack signal-plus-personalization platform. A platform that generates the research inputs from signals and CRM data, then writes the email grounded in those inputs, then sends through managed deliverability, then classifies replies. The architecture where AI writing improves because inputs improve. Example: Unify.

- Smart Snippet. Unify's dynamically generated message components tailored per recipient by AI Agents using research context. Source: AI Personalization product page.

- Observation Model. Unify's multi-agent research system that analyzes product, customers, and positioning to generate AI insights. Deployed GPT-5 in August 2025 per the Deploying GPT-5 blog.

- Learning loop. The retraining mechanism that reweights AI snippet performance based on positive vs objection vs unsubscribe replies. Without it, AI personalization decays inside three months.

- Real-time research. Pulling current company news, funding, and product launches at the moment of email generation rather than from a stored cache.

- Agent run. One execution of AI research, qualification, or message generation. Runs at 0.1 credits each per the Unify Next-gen AI Agents announcement.

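- Apple Mail Privacy Protection (MPP). The Apple Mail feature that, when enabled, preloads remote content (including tracking pixels) for messages received in the Mail app, for any account read there, so opens are registered whether or not the recipient reads the email. It inflates open rates industry-wide. Treat open rate as a cohort-level relevance proxy, not a per-recipient signal.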

Sources and references

- Unify, AI Personalization product page. Source for Smart Snippets, AI Agents for lead research, human review touchpoints, real-time context adaptation for company news and role priorities.

- Unify, AI Agents product page. Source for transparent research process, 0.1 credits per agent run, over 1M questions answered.

- Unify, Spellbook case study. Source for 70-80% open rates vs 19-25% HubSpot baseline; $2.59M pipeline; $250K revenue.

- Unify, Navattic case study. Source for 67% email open rate from Unify sequences; $100K+ in first 10 days.

- Unify, Perplexity case study. Source for 5% PQL Play reply rate; up to 20% MQL Play reply rate; AI personalization contextualized by usage patterns.

- Unify, Peridio case study. Source for 58% open rate, 5% email reply, 11.6% social-follower reply rate.

- Unify, Affiniti case study. Source for 8,000 agent runs / 8,700 leads / 3 months at-scale AI personalization.

- Unify, Next-gen AI Agents announcement (December 18, 2025). Source for 0.1 credits per run, 10x improvement.

- Unify, Deploying GPT-5 in Unify for scaled GTM research (August 7, 2025). Source for Observation Model + GPT-5; 35% reduction in tool calls; 90% stability on browser research tasks.

- Unify, Introducing Unify's Infinity Signal blog. Source for AI Agents monitoring custom market triggers and generating contextual Smart Snippets at scale.

- Unify, Anatomy of an Outbound Email guide. Source for 25-million-email analysis; AI personalization lifts replies 57% with correct data; alternative CTAs outperform calendar links by 33%; top performers at 2-3x average reply rate.

- Unify, Signals overview. Source for 25+ native intent signals.

- Unify, B2B Buyer Data product page. Source for 100+ data points per record across 30+ verified sources; 90%+ contact / 95%+ company match.

- Unify, Task Management and Unified Inbox product page. Source for AI reply classification (positive, referral, objection, unsubscribe) feeding the learning loop.

- Unify, Salesforce integration and HubSpot integration. Source for 15-minute bidirectional sync with custom field utilization.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
