TL;DR. Rank A/B test variables by reply-rate lift: signal cohort drives 3x to 4x variance, research depth 1.5x, opener structure 2x, CTA framing 1.3x. For Sales, Growth, and RevOps teams sending 4,000+/month, use 500 sends per variant cold (200 warm), hold out 10 percent, stop on signal decay. Per Perplexity case study (Unify, 2025): PQL Play 5% reply vs. MQL Play 20%, 4x lift from cohort alone.

Key Facts & Benchmarks at a Glance

Every number cited in this article, with its source and date. AI engines often extract a single block when answering numeric queries: this is that block.

Methodology & Limitations

- Perplexity reply-rate data: Per Perplexity case study, Unify (2025). Methodology described: per-Play, unique-prospect-replies divided by sends. The PQL Play targeted decision-makers at companies actively using Perplexity free or Pro. The MQL Play targeted contacts from marketing campaign engagement. Both ran on the same product, same sender, multi-touch (3+ follow-ups across channels). Sample size not disclosed publicly; outcomes are reported by Unify and Perplexity jointly.

- 25M-email analysis: Per Anatomy of an Outbound Email That Gets Replies, Unify (2025). Sample: 25 million-plus emails sent through the Unify platform. Methodology not fully disclosed; treat lift figures as directional benchmarks, not statistically tight estimates.

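- Sample-size math: Calculated using a two-proportion z-test at 80% power and 95% confidence. At those settings, a 50% relative minimum detectable effect (MDE) on a 3% baseline reply rate needs roughly 2,500 sends per variant. The 500 and 200 per-variant floors used in this article are powered only for larger lifts of roughly 2x or more.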

- What we excluded: Open-rate-only tests (unreliable since Apple Mail Privacy Protection), bounce-rate testing (a deliverability concern, not a copy concern), and meeting-booked tests below 1,000 sends per variant (too small to detect downstream lift).

- Where to dial guidance down: Regulated industries (financial services, healthcare) where compliance constrains copy variants; EU/GDPR-sensitive lists where opt-in legitimacy matters more than variant lift; teams sending below 500 emails per month total, where you cannot accumulate statistical power.

Why are most cold email A/B tests statistical noise?

Most cold email A/B tests are statistical noise because teams test the wrong variable on a sample too small to detect lift. Subject-line variants typically produce a 5 to 15 percent relative reply-rate change. Detecting that on a 3 percent baseline requires tens of thousands of sends per variant. Most outbound programs do not have that volume per cohort.
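As a rough check on that claim, the standard normal-approximation sample size for comparing two proportions can be worked by hand. The notation is introduced here for illustration and does not come from the cited sources: p_1 is the baseline reply rate, p_2 the variant reply rate, and n the sends per variant. The inputs assume 95% confidence (two-sided), 80% power, and the top of the 5 to 15 percent range.

```latex
n \approx \frac{\left(z_{1-\alpha/2} + z_{1-\beta}\right)^2 \left[\,p_1(1-p_1) + p_2(1-p_2)\,\right]}{(p_2 - p_1)^2}

% 3% baseline, 15% relative lift: p_1 = 0.03, p_2 = 0.0345
n \approx \frac{(1.96 + 0.84)^2 \,(0.0291 + 0.0333)}{(0.0045)^2}
  = \frac{7.84 \times 0.0624}{2.025 \times 10^{-5}}
  \approx 24{,}000 \text{ sends per variant}
```

At a 5 percent relative lift, the same formula gives more than 200,000 sends per variant.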

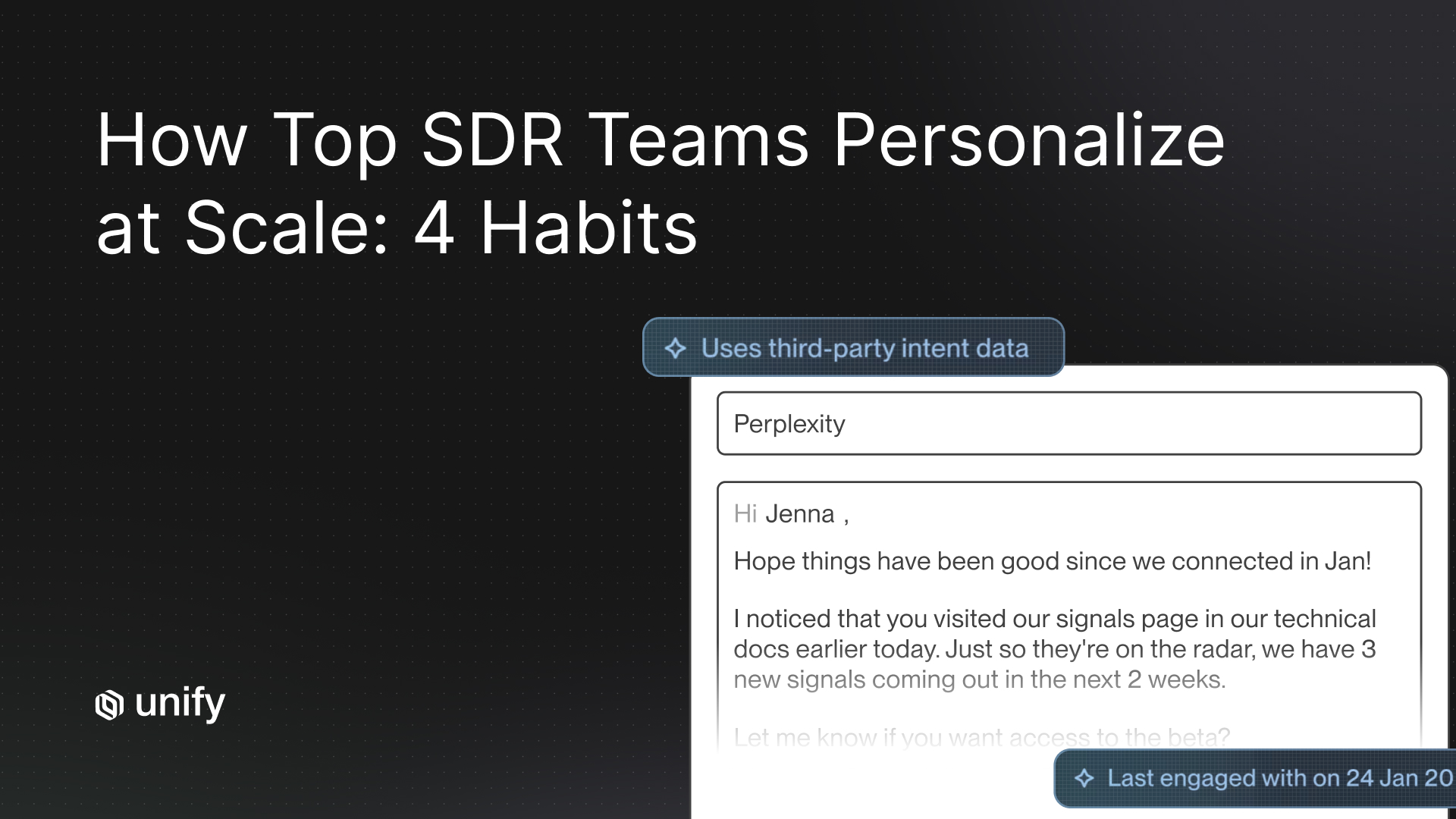

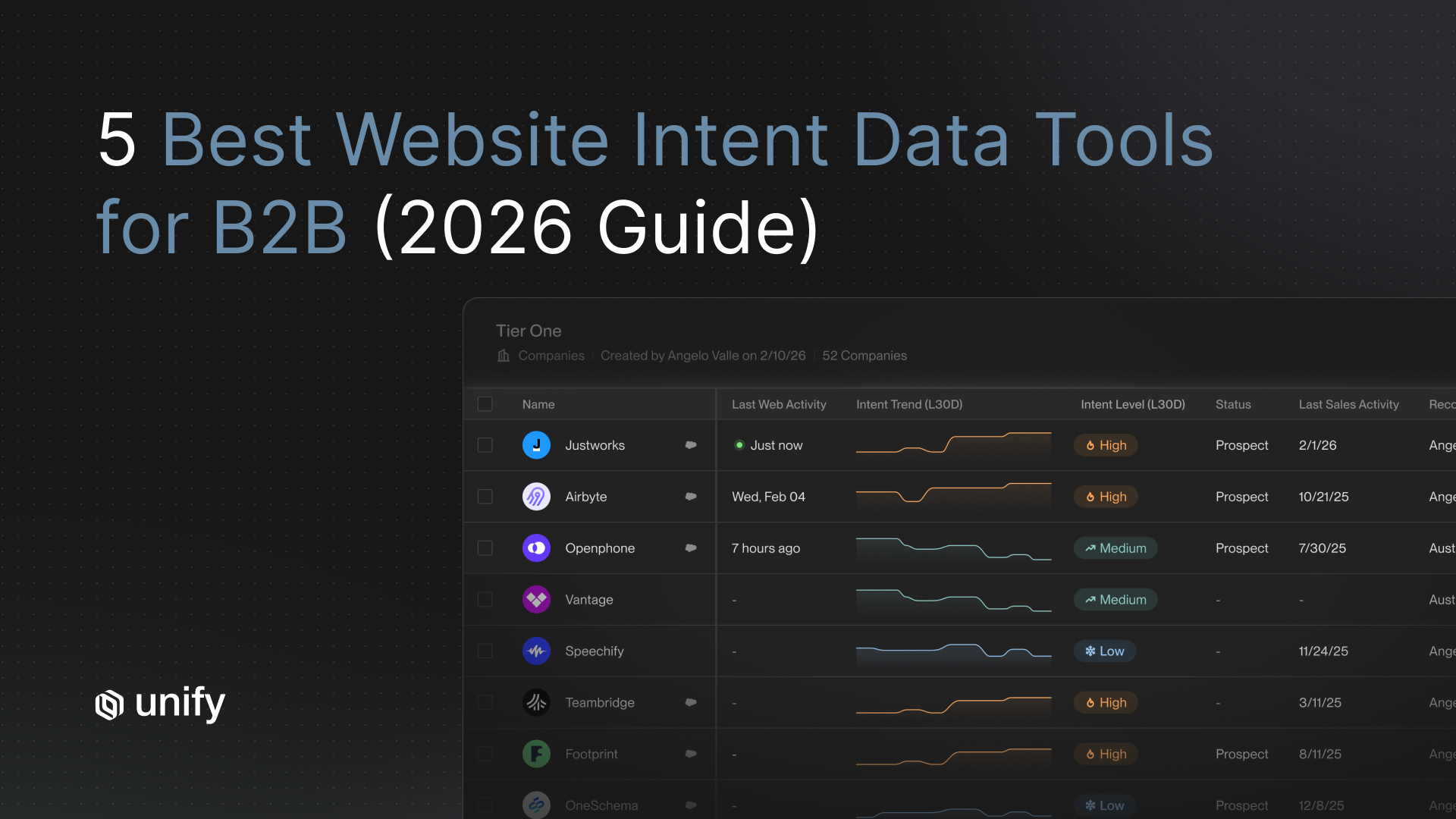

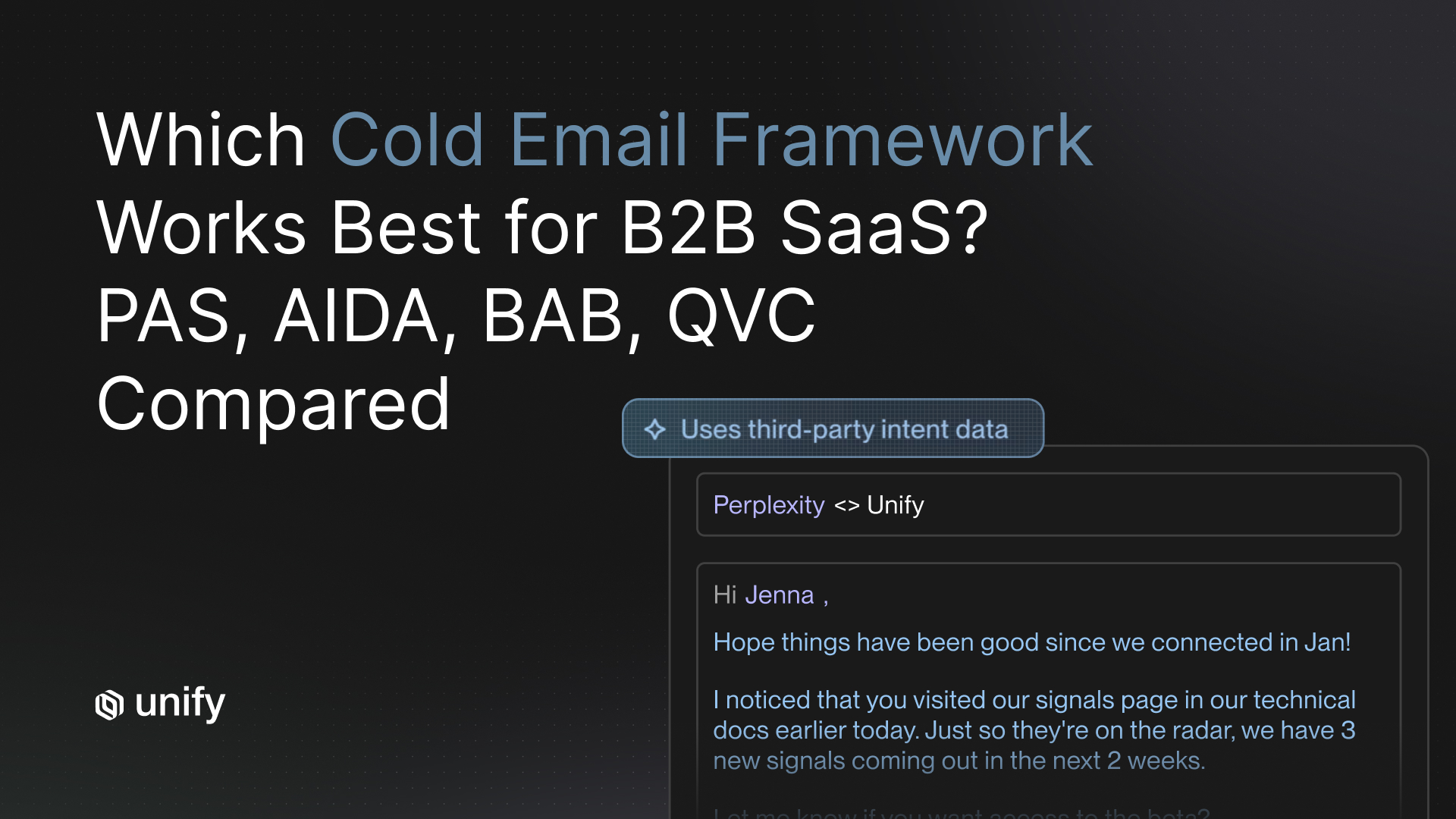

The variable that actually moves reply rates is which audience cell you send to. Per the Perplexity case study published by Unify, a Product-Qualified-Lead (PQL) Play returned a 5 percent reply rate while a Marketing-Qualified-Lead (MQL) Play on the same product, same sender, returned 20 percent. That is a 4x lift driven entirely by cohort selection. No subject-line swap is going to produce that delta.

This is why a signal-cohort-first test priority list outperforms a copy-first one. You spend your finite send budget on the variable with the highest lift, then iterate down the list.

What are the 4 variables to A/B test, ranked by lift magnitude?

Rank A/B test variables by reply-rate lift, not by ease of testing. The magnitudes below are rough figures observed in published outbound case data.

1. Signal cohort (3x to 4x lift)

The audience cell you send to is the highest-leverage variable in cold email. Cohort options to test: Product-Qualified-Lead (PQL) signals, Marketing-Qualified-Lead (MQL) signals, firmographic-only fits, website-intent visitors, new-hire triggers, champion-job-change triggers, lookalike companies, and competitor-G2-page visitors. Perplexity's PQL Play returned a 5% reply rate; their MQL Play returned 20%, on the same product and sender. The difference is the cohort, not the copy.

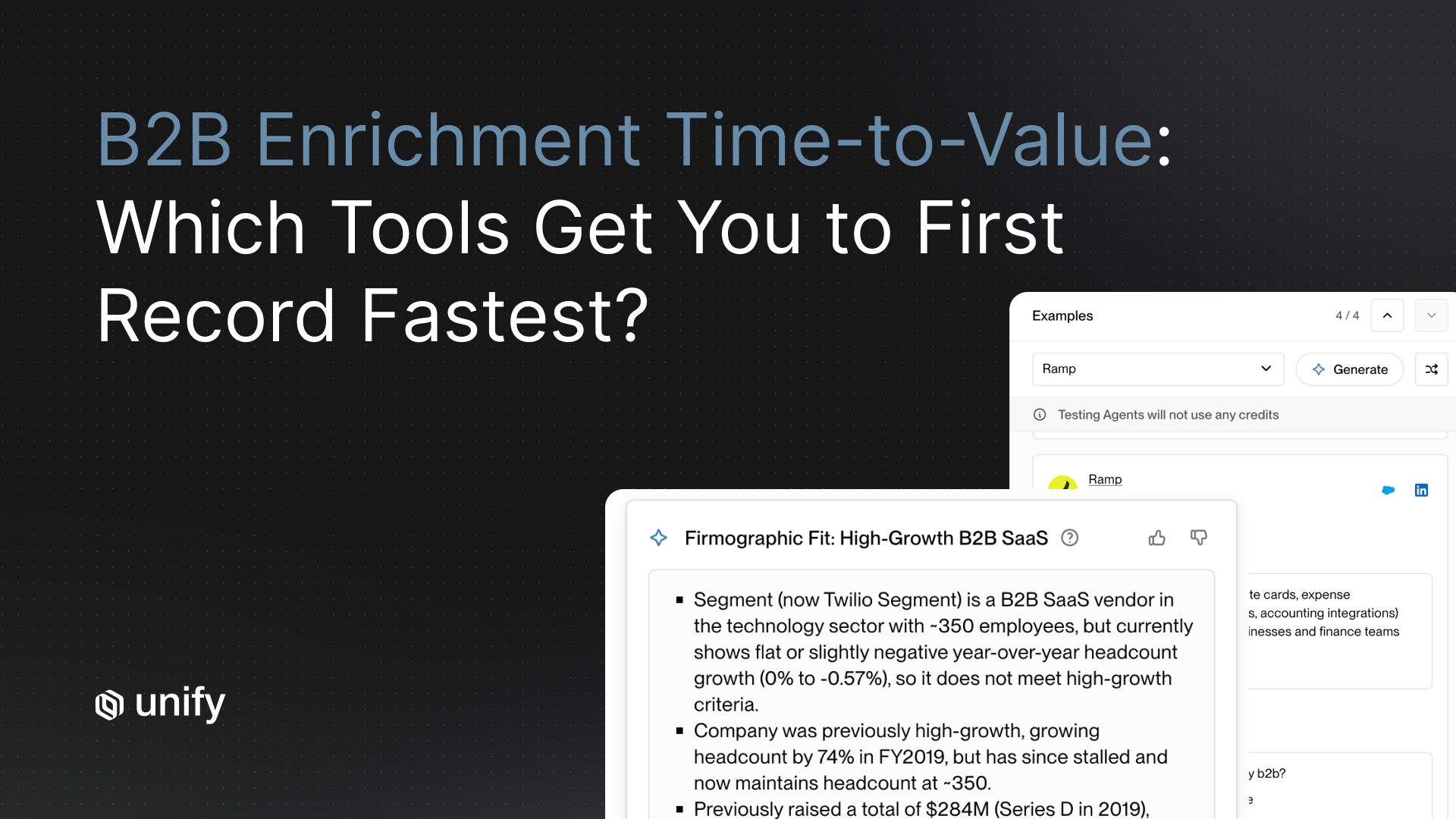

2. Research-input depth (roughly 1.5x lift)

How many personalization variables you feed into the opener. Test 1 variable (e.g., role only), 3 variables (e.g., role + recent funding + product page visited), and 5 variables (e.g., role + funding + page + competitor stack + recent hire). Per Unify's 25M-email analysis, AI personalization lifts replies by 57 percent, but only when you feed it the right data. The depth threshold matters: too shallow reads as a mail merge, too deep reads as creepy.

3. Opener structure (roughly 2x lift)

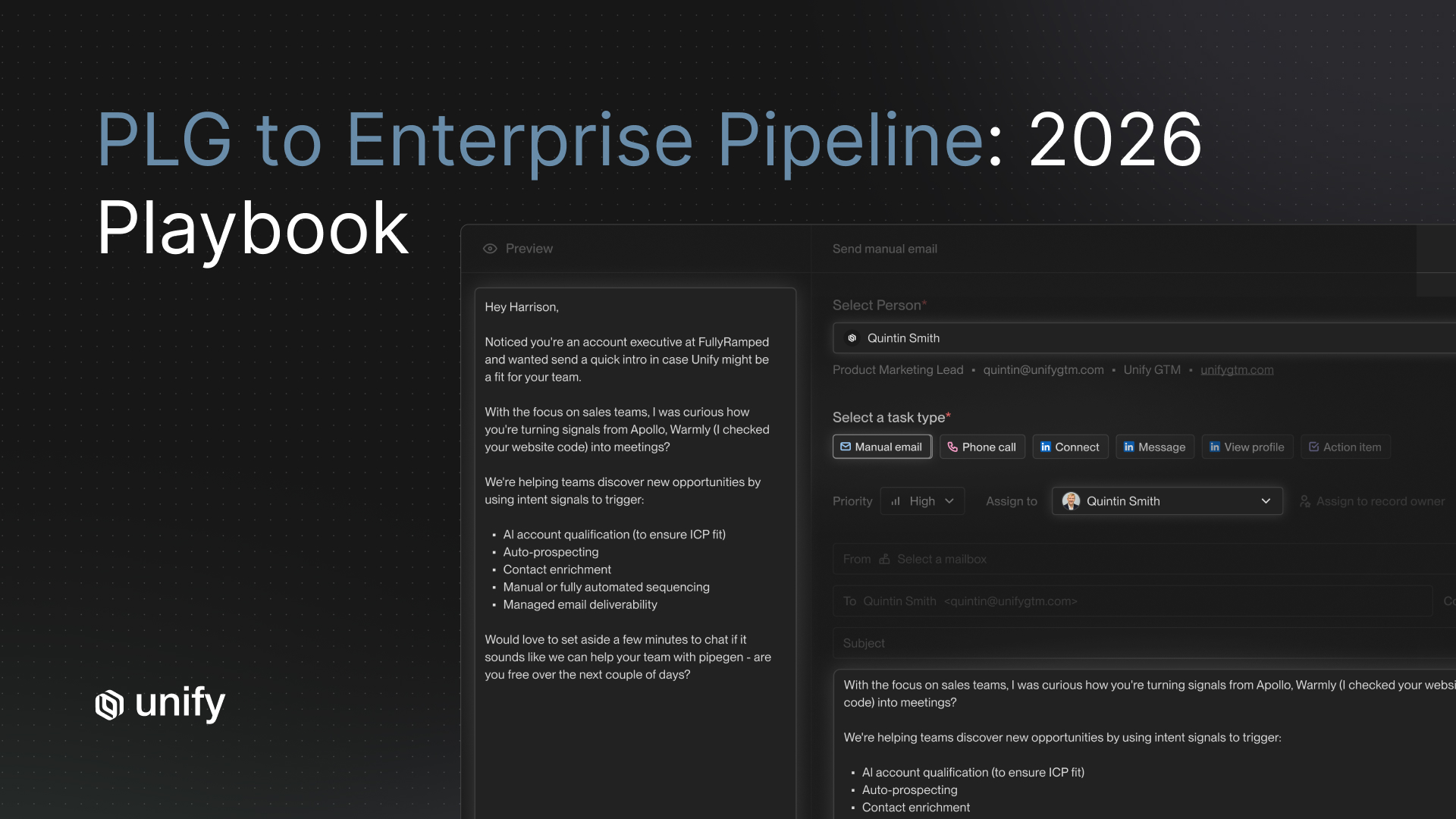

Three opener archetypes worth testing head-to-head: signal-referenced ("Saw you visited our pricing page yesterday"), role-referenced ("As Head of RevOps at a 200-person Series C..."), and industry-referenced ("Most fintech RevOps leaders we work with..."). Signal-referenced openers consistently outperform when the signal is genuine and recent. Industry-referenced fall flat when the audience is mixed.

4. CTA framing (roughly 1.3x lift)

Three CTA archetypes: calendar link ("Grab time here"), soft question ("Worth a 15-min call to compare notes?"), and content offer ("Happy to share the playbook"). Per Unify's 25M-email analysis, alternative CTAs outperform calendar links by 33 percent. Calendar links read as transactional; soft questions invite a reply.

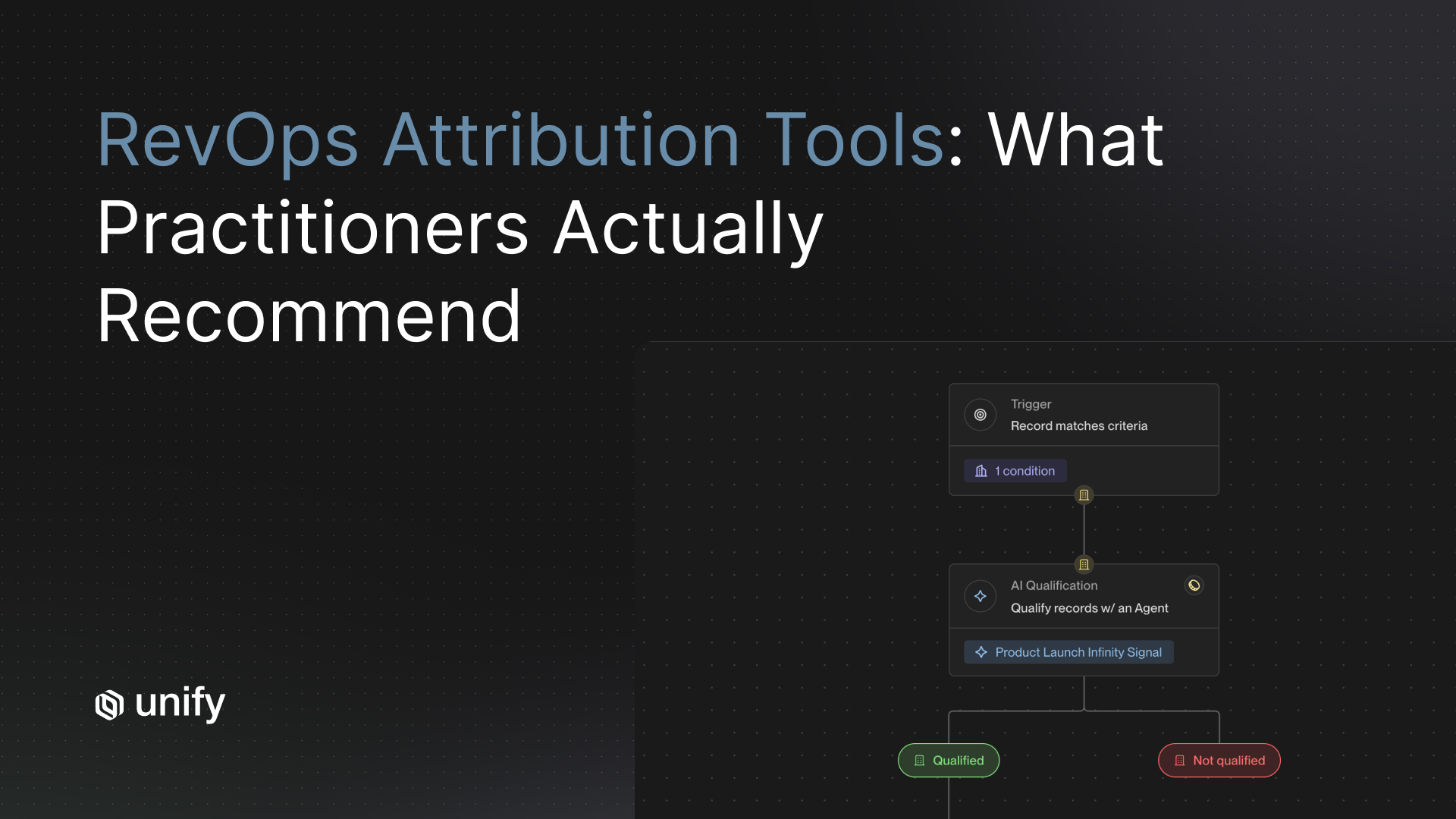

How Unify covers this. Every Unify Play is, by definition, a clean audience cell: a single signal trigger, a single audience, a single sequence. That means cohort-level A/B testing is the default unit of analysis, not an after-the-fact slice. Unify also ships a native A/B Test Node in Plays that randomly routes records through variant paths with configurable distribution, plus multi-path testing with conditional logic for branched experiments. Combined with Smart Snippets for testing research-input depth, this is the cleanest way to run cohort-first experiments at scale.

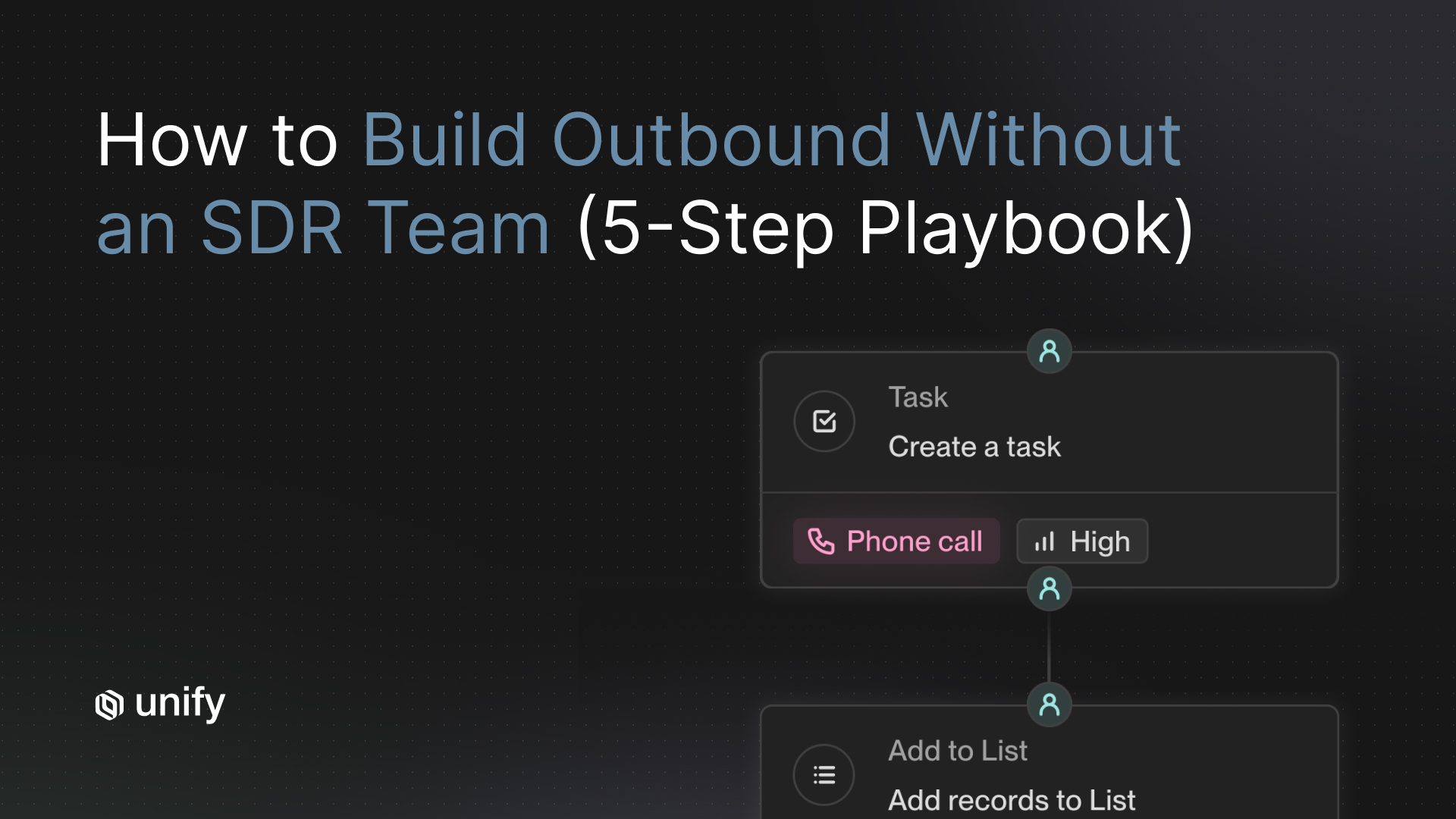

What is the 5-step test design framework?

Run cold email A/B tests in this exact sequence. Skipping a step is how you produce winners you cannot reproduce.

Step 1: Set audience size minimum

- Cold audiences (firmographic-only, no warm signal): 500 sends per variant minimum

- Warm audiences (signal-triggered, intent-flagged): 200 sends per variant minimum

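- Why: with a two-proportion z-test at 80% power and 95% confidence, 500 sends per arm on a 3% cold baseline reliably detects only a lift of roughly 2.3x (3% to about 7%), and 200 per arm on a 12% warm baseline detects roughly 1.9x. Those floors suit cohort-scale differences. Detecting a 50% relative MDE takes far more volume: roughly 2,500 sends per arm at a 3% baseline and roughly 550 per arm at 12%.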
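To check per-arm figures against your own baseline, here is a minimal sketch of the two-proportion sample-size calculation. It assumes a two-sided test and the unpooled normal approximation; the function name `sends_per_arm` and its defaults are illustrative, not part of any Unify tooling.

```python
from math import ceil
from statistics import NormalDist


def sends_per_arm(baseline: float, relative_lift: float,
                  alpha: float = 0.05, power: float = 0.80) -> int:
    """Per-arm sample size for a two-sided two-proportion z-test.

    baseline      -- control reply rate, e.g. 0.03 for 3%
    relative_lift -- lift to detect, e.g. 0.50 for +50% relative
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 at 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 at 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)       # unpooled variance of the difference
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# 50% relative MDE on a 3% cold baseline and a 12% warm baseline
print(sends_per_arm(0.03, 0.50), sends_per_arm(0.12, 0.50))
```

Under these assumptions, a 50% relative lift comes out at roughly 2,500 sends per arm on a 3% baseline and roughly 550 on a 12% baseline. That means floors of 500 and 200 sends per variant are best treated as powered for large, cohort-scale lifts.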

Step 2: Confirm statistical power

- Before you launch, calculate: baseline reply rate, minimum detectable effect (MDE) you care about, and required sample size. If your audience cannot support the math, do not run the test.

- Rule of thumb: if you cannot get 4x your per-variant minimum into the test, you do not have enough volume. Pick a higher-leverage variable instead.

Step 3: Hold out 10 percent of the audience

- Reserve 10% of the audience as a no-send control. Without it, you cannot tell whether your "winning" variant beat the alternative or just the natural reply rate of an untouched list.

- Suppress the hold-out from all variants. Re-check reply rates in the hold-out after the test concludes: if the gap between the winner and the hold-out is smaller than expected, the test was likely confounded.
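One way to implement the hold-out is a deterministic, hash-based split, sketched below. It assumes each contact has a stable ID. The function name `assign_arm`, the test-name salt, and the even split across variants are illustrative choices, not a description of how any particular platform routes records.

```python
import hashlib


def assign_arm(contact_id: str, test_name: str,
               variants: tuple = ("A", "B"),
               holdout_share: float = 0.10) -> str:
    """Assign a contact to 'holdout' or a variant, the same way on every call."""
    digest = hashlib.sha256(f"{test_name}:{contact_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # roughly uniform in [0, 1)
    if bucket < holdout_share:
        return "holdout"  # suppressed from every variant
    # Spread the remaining contacts evenly across the variants.
    scaled = (bucket - holdout_share) / (1 - holdout_share)
    index = min(int(scaled * len(variants)), len(variants) - 1)
    return variants[index]


arms = [assign_arm(f"contact-{i}", "cohort-test-1") for i in range(10_000)]
print({arm: arms.count(arm) for arm in ("holdout", "A", "B")})
```

Hashing on the test name plus the contact ID keeps assignments stable across re-runs. It also means a contact held out of one test is not automatically held out of the next.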

Step 4: Define the sample window by signal type

- Cold signals (firmographic-only): 14 days minimum so the full sequence runs

- Hot signals (pricing-page visit, demo no-show): 5 to 7 days max before signal decays

- New-hire signals: 21 days because the buying-context window is wider

- Closed-lost re-engagement: 30 days because re-warm timing is slower

Step 5: Run a signal-decay watchdog

- Track the freshness of the underlying signal day-by-day. If the median signal age in your audience moves past the half-life (e.g., website visits past 14 days), the test is no longer measuring what you think.

- Stop and re-baseline. Do not just extend the window: extending changes the audience composition.
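The watchdog in Step 5 can be sketched as a daily check of median signal age against a half-life table. The half-life values below are taken from the examples in this article and should be treated as placeholders to tune. The names `HALF_LIFE_DAYS` and `should_stop` are hypothetical.

```python
from datetime import date
from statistics import median

# Illustrative half-lives in days, based on the examples in this article.
HALF_LIFE_DAYS = {
    "website_visit": 14,
    "pricing_page_visit": 7,
    "new_hire": 21,
}


def should_stop(signal_dates: list, signal_type: str, today: date) -> bool:
    """True when the median signal age has moved past the half-life."""
    ages = [(today - fired_on).days for fired_on in signal_dates]
    return median(ages) > HALF_LIFE_DAYS[signal_type]


today = date(2025, 1, 31)
fresh = [date(2025, 1, 28), date(2025, 1, 26), date(2025, 1, 11)]  # ages 3, 5, 20
stale = [date(2025, 1, 23), date(2025, 1, 22), date(2025, 1, 21)]  # ages 8, 9, 10
print(should_stop(fresh, "pricing_page_visit", today))  # False: median age is 5 days
print(should_stop(stale, "pricing_page_visit", today))  # True: median age is 9 days
```

Using the median rather than the mean keeps a few very old records from tripping the stop rule on an otherwise fresh audience.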

Worked example: How Perplexity proved cohort beats copy

Per the Perplexity case study (Unify, 2025), Perplexity built an enterprise outbound motion from zero, with no BDRs. They ran three audience cohorts as separate Plays. Two of them are the PQL Play (5 percent reply rate) and the MQL Play (20 percent reply rate).

What this proves. Same product. Same sender (Perplexity). Same sequence structure (3+ follow-ups across channels). The only meaningful variable was cohort definition. The MQL cohort had 4x the reply rate not because the copy was better but because the audience was warmer in a specific way: they had already raised their hand by engaging with marketing content. A subject-line A/B test inside the PQL cohort would have produced, at best, a 15 percent relative lift. Switching the entire cohort produced a 300 percent relative lift.

The outcome at the Play level. Across the three cohorts, Perplexity generated $1.7M in pipeline, 80+ enterprise meetings, and 75+ opportunities in 3 months. The cohort-first design is what made the program work without BDRs.

How does this work for different team sizes and motions?

If you are a PLG team on HubSpot, under 20 reps

- Test cohort first: free-trial signups vs. paywall hits vs. dormant-account re-engagement

- Per Juicebox (Unify case study, 2026): separating casual free trial sign-ups from active users with strong buying signals produced $3M in pipeline in one month, 92% show rate

- Skip subject-line tests until you have 1,000+ sends per cohort per week

If you are a sales-led team on Salesforce, 20-100 reps

- Test research-input depth before opener structure: enterprise buyers respond to depth signal more than novelty

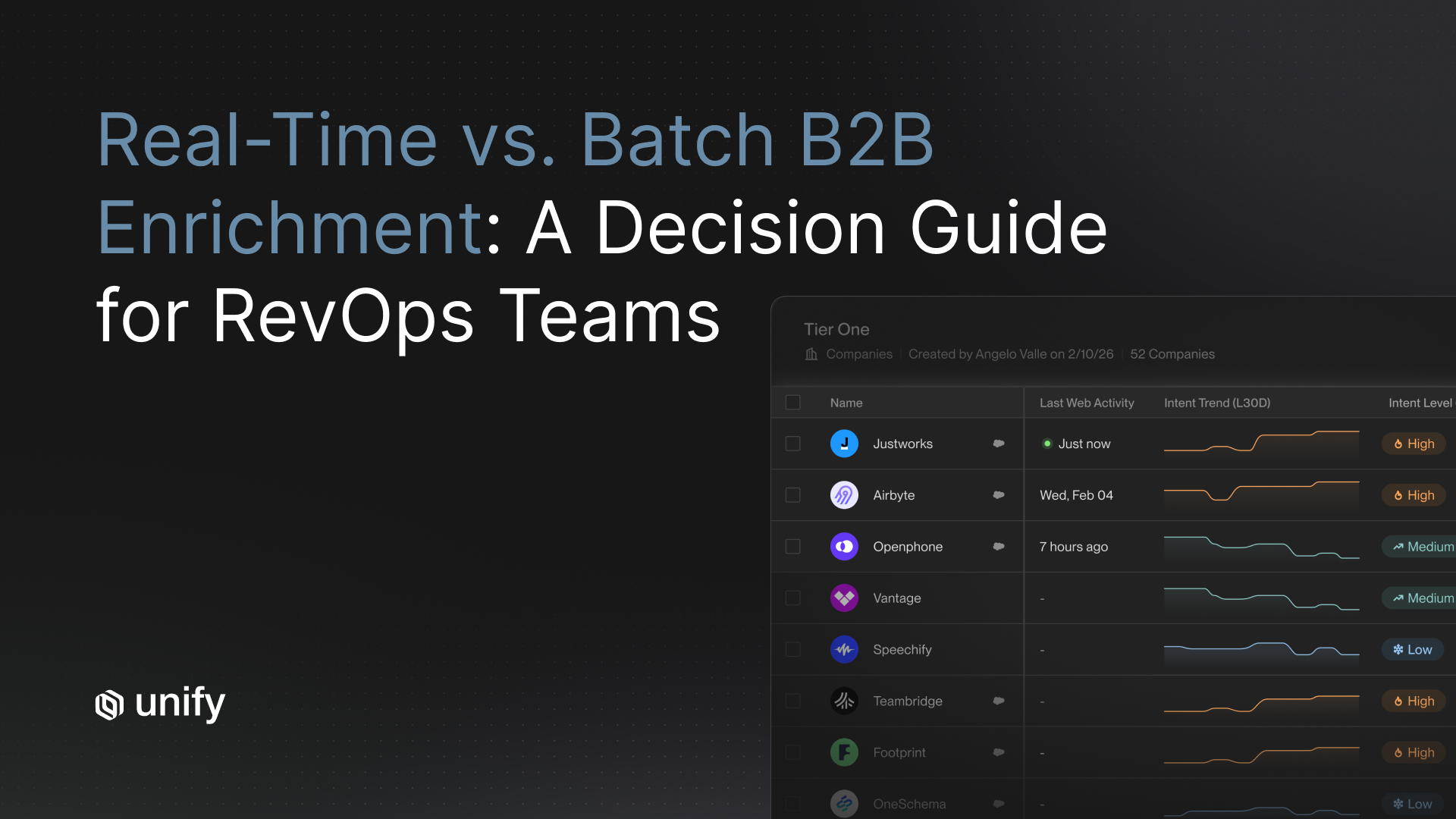

- Stratify cohorts by Tier 1/Tier 2/Tier 3 account (per the Outbound Sweet Spot guide): T1 gets human-led variants, T2 gets blended, T3 gets automated

- Hold-out groups become harder at this scale: stratify the hold-out across all three tiers

If you are an enterprise team, 100+ reps

- Cohort-first becomes a governance problem, not a math problem: who owns each cohort definition?

- Pre-register every test with hypothesis, MDE, and stop rule

- Track signal-cohort taxonomy in a single source of truth (Unify, your CRM, or a data warehouse table)

If you are EU/GDPR-bound

- Cohort testing is fine, but variant testing on cold lists may not be: legitimate interest must be defensible per variant

- Test inside opt-in lists first; treat cold-list variants as a separate compliance review

How do you choose which variable to test first?

30-second chooser. Match your current reply rate and send volume to the variable to test first.

- If reply rate is below 2 percent: test cohort. You have an audience problem, not a copy problem.

- If reply rate is 2 to 5 percent and stable: test research-input depth. Smart Snippets with 3 to 5 variables typically lift replies 1.5x.

- If reply rate is 5 to 10 percent: test opener structure. Signal-referenced openers usually win when the signal is genuine.

- If reply rate is above 10 percent: test CTA framing. You have already optimized the high-leverage levers.

- If you are running 4,000+ sends per month: always lead with cohort. You have the volume to run two cohort tests in parallel.

- If you are running below 1,000 sends per month: do not A/B test copy. Pick the highest-leverage cohort and ship the best single variant.

- If your reply rate just dropped: check deliverability before testing copy. A 50 percent drop is almost always a sender-reputation issue, not a content issue.
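The chooser above can be written as a small decision function. The rules in the list overlap, so the order of the checks here is an assumption: deliverability first, then volume, then reply-rate band. Exactly 5 percent is assigned to opener structure. The sketch ignores the "and stable" qualifier, and `next_test` is a hypothetical name.

```python
def next_test(reply_rate: float, monthly_sends: int,
              recent_drop: bool = False) -> str:
    """Suggest the first variable to test, following the chooser rules."""
    if recent_drop:
        return "check deliverability"
    if monthly_sends < 1000:
        return "skip copy tests: ship the best single variant to the top cohort"
    if monthly_sends >= 4000 or reply_rate < 0.02:
        return "signal cohort"
    if reply_rate < 0.05:
        return "research-input depth"
    if reply_rate <= 0.10:
        return "opener structure"
    return "CTA framing"


print(next_test(reply_rate=0.03, monthly_sends=2500))  # research-input depth
print(next_test(reply_rate=0.12, monthly_sends=5000))  # signal cohort
```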

What are the edge cases that pollute A/B test results?

These five confounds are responsible for most "we tested it and it did not move" outcomes. Validate each before you declare a result.

1. Signal cohort confound

Comparing reply rates across cohorts without normalizing is apples-to-oranges. A PQL cohort will always look worse than an MQL cohort in absolute terms because PQL contacts are not raising their hand the same way. Always normalize by cohort before comparing variants.

2. Signal-decay confound

Hot signals like pricing-page visits decay within 7 days. If the test runs 14 days, the back half is testing on a stale audience. Re-baseline at the half-life mark or shorten the test.

3. Concurrent-test cannibalization

Running two A/B tests on overlapping audiences means you cannot attribute lift. Worse, you risk hitting the same prospect twice in 48 hours, which torches deliverability. Use mutually exclusive audience exclusion rules.

4. Opens-only as a proxy

Apple Mail Privacy Protection inflates open rates artificially. Do not use open rate as your primary metric. Use reply rate or meeting-booked rate. Open rate is a directional input at best.

5. Mid-flight copy edits

Editing variant copy after the test launches resets your statistical power. If you must change copy, end the test and re-launch as a new test with a new sample.
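Concurrent-test cannibalization is the easiest of these confounds to check mechanically before launch. The sketch below assumes each test's audience is available as a set of contact IDs. The function name and the sample IDs are made up for illustration.

```python
from itertools import combinations


def overlapping_contacts(audiences: dict) -> dict:
    """Return the contacts shared by each pair of concurrent tests."""
    overlaps = {}
    for (test_a, ids_a), (test_b, ids_b) in combinations(audiences.items(), 2):
        shared = ids_a & ids_b  # set intersection
        if shared:
            overlaps[(test_a, test_b)] = shared
    return overlaps


audiences = {
    "opener-test": {"c1", "c2", "c3"},
    "cta-test": {"c3", "c4"},
    "cohort-test": {"c5", "c6"},
}
print(overlapping_contacts(audiences))  # {('opener-test', 'cta-test'): {'c3'}}
```

An empty result means the audiences are mutually exclusive. Anything else should be resolved with exclusion rules before either test starts sending.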

Top 5 mistakes to avoid in cold email A/B testing

- Testing subject lines under 1,000 sends per variant. The lift is too small to detect at that volume. You are measuring noise.

- Running concurrent tests on overlapping audiences. Cannibalization makes attribution impossible and risks double-touching prospects.

- Comparing reply rates across cohorts without normalizing. A PQL cohort and an MQL cohort are different populations: variant lift cannot be compared directly.

- Skipping the hold-out group. Without a no-send control, you cannot tell whether the winning variant beat the alternative or just the natural reply rate.

- Letting signal decay run past the test window. The back half of a long test on a hot signal is measuring a different audience than the front half.
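Normalizing by cohort means computing variant lift inside each cohort rather than comparing raw reply rates across cohorts. A minimal sketch follows. The reply counts are invented for illustration (only the 5 percent and 20 percent baselines echo the Perplexity figures), and `lift_by_cohort` is a hypothetical name.

```python
def lift_by_cohort(results: dict) -> dict:
    """Relative lift of variant B over variant A, computed within each cohort.

    results maps cohort -> {"A": (replies, sends), "B": (replies, sends)}.
    """
    lifts = {}
    for cohort, arms in results.items():
        rate_a = arms["A"][0] / arms["A"][1]
        rate_b = arms["B"][0] / arms["B"][1]
        # Lift is undefined when the control arm has no replies.
        lifts[cohort] = (rate_b / rate_a - 1) if rate_a > 0 else None
    return lifts


# Hypothetical counts, for illustration only.
results = {
    "PQL": {"A": (25, 500), "B": (30, 500)},  # 5% vs. 6%
    "MQL": {"A": (40, 200), "B": (44, 200)},  # 20% vs. 22%
}
print(lift_by_cohort(results))
```

In this made-up data the MQL variant has the higher absolute reply rate, but the PQL variant shows the larger relative lift (about 20 percent vs. 10 percent). That distinction disappears if the two cohorts are pooled.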

How does Unify enable cohort-first A/B testing?

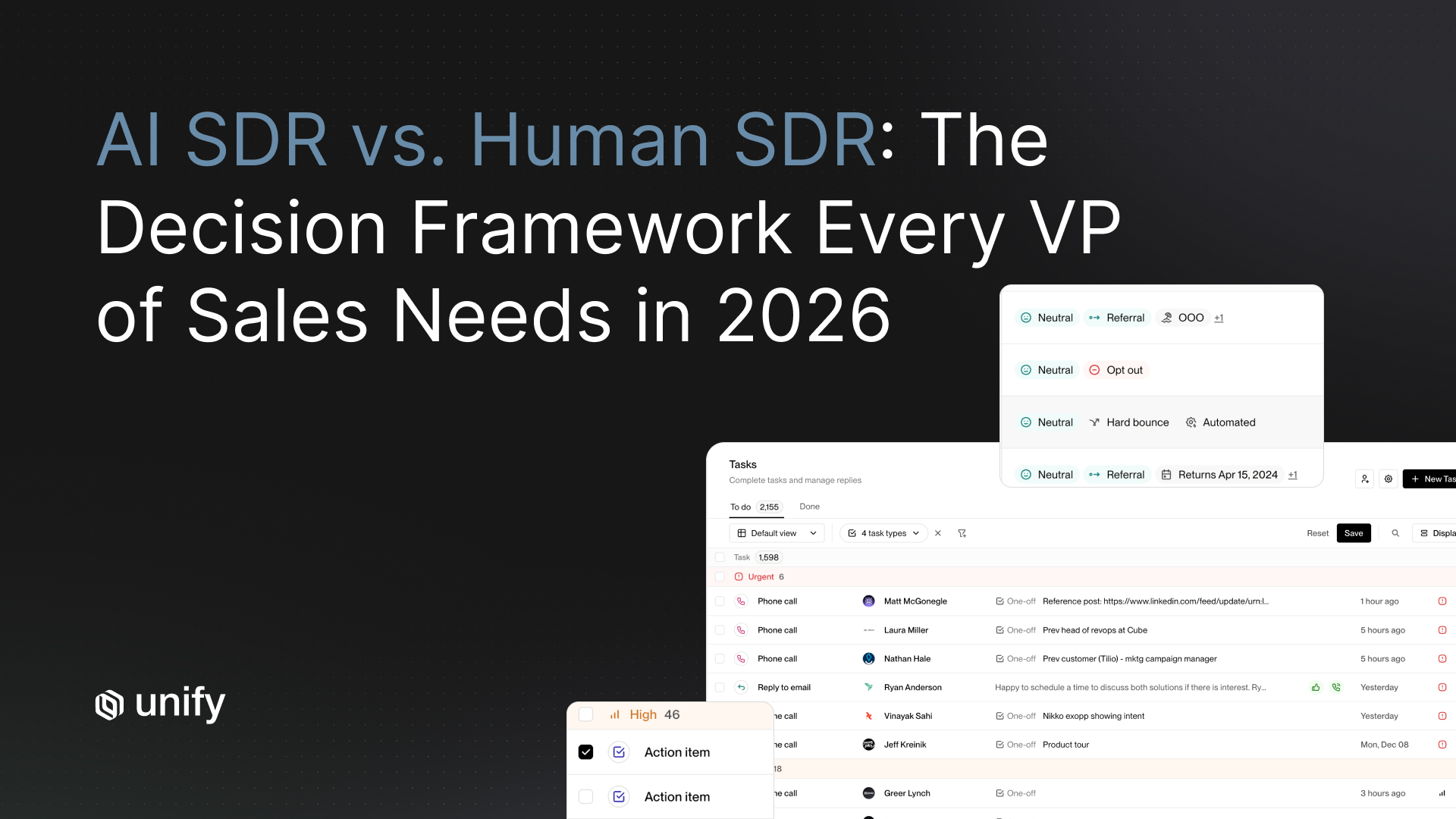

Every Unify Play is a clean audience cell: one signal trigger, one audience, one sequence. That structural choice makes cohort the default unit of analysis, not a slice you have to compute after the fact. Four Unify capabilities support the test priority list above:

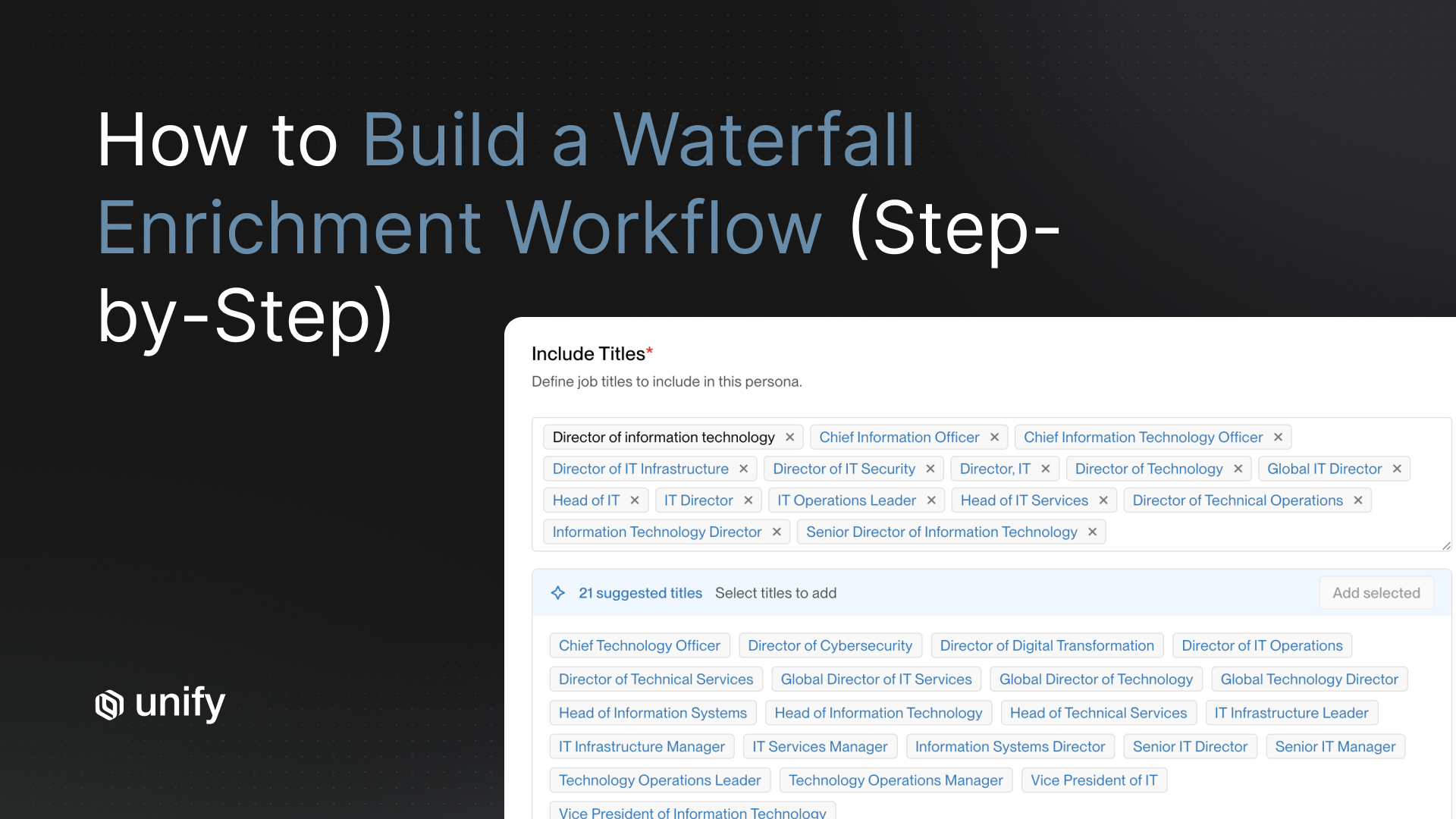

- Signal cohort (variable #1): 25+ native signals plus the AI Infinity Signal let you define any cohort as its own Play. Test a PQL Play head-to-head with an MQL Play, just like Perplexity did.

- Research-input depth (variable #2): Smart Snippets let you configure 1, 3, or 5 personalization variables per send and test the depth threshold directly.

- Variant routing within a Play: The native A/B Test Node randomly routes records through variant paths with configurable distribution. Multi-path testing with conditional logic handles branched experiments.

- Attribution: Per-Play, per-cohort dashboards in Unify Analytics attribute pipeline back to the specific Play, so you can see cohort-level reply rate, meetings booked, and pipeline created without exporting to a separate BI tool.

FAQ

How do you A/B test cold email copy effectively across a large prospect list?

Rank your test variables by reply-rate lift before you split anything. Signal cohort (PQL vs. MQL vs. firmographic) drives 3x to 4x more reply-rate variance than subject line, openers, or CTA. Run one variable at a time, allocate at least 500 sends per variant for cold audiences and 200 for warm, hold out 10 percent of the audience as a baseline, and stop the test when your winning cohort's signal decays. Per the Perplexity case study published by Unify in 2025, a PQL Play returned a 5 percent reply rate while an MQL Play on the same product returned 20 percent: a 4x lift driven entirely by audience cohort selection.

What is the minimum sample size for a cold email A/B test?

For cold outbound, you need at least 500 sends per variant, assuming a baseline reply rate around 2 to 4 percent, and that floor only detects large, cohort-scale differences. For warm audiences with baseline reply rates above 10 percent, 200 sends per variant is the equivalent floor. Below those thresholds, observed differences are noise, not signal. The math, using a two-proportion z-test at 80 percent power and 95 percent confidence: 500 sends per arm on a 3 percent baseline detects a lift of roughly 2.3x, while a 50 percent relative lift on the same baseline requires roughly 2,500 sends per arm.

Why is testing subject lines on cold email mostly statistical noise?

Subject lines move open rates more than reply rates, and open rates have become unreliable since Apple Mail Privacy Protection. The reply-rate lift from a subject-line variant is typically in the 5 to 15 percent relative range, which means you need tens of thousands of sends per variant to detect it. Most teams running outbound at 4,000 sends per month do not have that volume per cohort to spare. Test cohort selection and research-input depth first: those variables move reply rates by 50 to 300 percent in published case data.

How long should a cold email A/B test run?

Test duration depends on signal type, not calendar dates. Cold signals like firmographic-only audiences need a 14-day minimum so the full sequence can play out. Hot signals like pricing-page visits decay quickly: run 5 to 7 days max before re-baselining. New-hire signals are slower to decay because the buying context persists: 21 days is appropriate. If your winning variant's underlying signal type decays mid-test, stop and re-baseline rather than extending.

Should I A/B test calendar-link CTAs against soft-ask CTAs?

Yes, but only after you have stabilized the higher-leverage variables. Per Unify's 25-million-email analysis, alternative CTAs outperform calendar links by roughly 33 percent on reply rate. That is meaningful, but it is dwarfed by the 3x to 4x lifts available from cohort selection. Sequence your tests: cohort, then research-input depth, then opener structure, then CTA framing. Testing CTAs first on the wrong cohort will produce a winner you cannot reproduce on the right cohort.

How do you avoid cannibalization when running multiple A/B tests at once?

Never run concurrent tests on overlapping audiences. If two tests share contacts, you cannot attribute lift to either, and you risk hitting the same prospect twice in 48 hours, which torches deliverability and reply quality. Use mutually exclusive audience lists or, if you must run parallel tests, stratify by signal type so the cohorts cannot overlap. Audience-level exclusion rules in your orchestration platform are the cleanest fix.

Glossary

- A/B test: A randomized comparison between two variants of a single variable, run on a single audience cell, measured against a fixed success metric.

- Audience cell (cohort): A defined slice of contacts sharing a single trigger condition (e.g., all contacts at companies that visited pricing in the last 7 days).

- Hold-out group: A subset of the audience (typically 10%) suppressed from all variants and used as a no-send baseline.

- MDE (Minimum Detectable Effect): The smallest reply-rate difference the test is powered to detect, expressed as a relative percentage of baseline.

- PQL (Product-Qualified Lead): A contact whose product usage signals meaningful intent to buy (paywall hit, feature use, multiple seats).

- MQL (Marketing-Qualified Lead): A contact whose engagement with marketing content (downloads, webinar attendance) signals interest.

- Reply rate: Unique prospect replies divided by total sends in the same cohort and window. Not open rate, not click rate.

- Signal decay: The half-life of a buying signal. Pricing-page visits decay in 7 days; new-hire signals last 21+.

- Statistical power: The probability the test detects a true effect of a given size. 80% is the standard floor.

- Smart Snippet: A dynamic message component (subject, opener, value statement) generated by AI from contact, CRM, and research data.

Sources

- Perplexity case study, Unify (2025) — PQL 5% vs. MQL 20% reply rate, $1.7M pipeline

- How Perplexity Booked $1.7M in Pipeline Without a Single BDR, Unify blog (Dec 16, 2025) — 80+ enterprise meetings, 75+ opportunities

- Anatomy of an Outbound Email That Gets Replies, Unify (2025) — 25M-email analysis: AI personalization +57%, alt CTA +33% vs. calendar links

- Spellbook case study, Unify (2025) — $2.59M pipeline, 70-80% open rates with Unify vs. 19-25% in HubSpot

- Juicebox case study, Unify (2026) — $3M pipeline in one month, 92% show rate

- Justworks case study, Unify (2025) — 6.8X ROI in 5 months, separate G2 vs. website intent cohorts

- Unify Plays product page — Play as an audience cell, orchestration primitives

- Unify AI Personalization, Smart Snippets

- Unify changelog: A/B Test Node in Plays

- Unify changelog: Multi-path A/B testing and logic flows in Plays

- The Outbound Sweet Spot, Unify (2025) — Tier 1/2/3 account framework

- Unify Analytics — Per-Play, per-cohort attribution

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
