TL;DR. Stop framing it as a dichotomy. The right answer is almost always the hybrid model: AI handles research, qualification, first-touch drafting, and signal monitoring; humans handle second-touch, objection handling, and complex deals. The two extremes are real but rare. Perplexity ran the max-AI playbook to $1.7M in pipeline and 80+ enterprise meetings in 3 months with zero BDRs. Unify's own NBR team runs the hybrid playbook with a 1-week ramp and reps on track to earn 1.6x industry-standard compensation. Decide on six questions: cost-per-opp, bottleneck location, signal density, ramp tolerance, deal complexity, and hire-freeze status.

Methodology and limitations

What "1.6x industry standard" means in the Unify NBR figure. Per the Unify blog "Our New Business Reps are on track to make 1.6x industry standard," the figure describes what Unify NBRs are on track to earn relative to a general industry benchmark for SDR/BDR compensation. The blog does not link to a specific third-party rep-comp dataset; treat the 1.6x as Unify's published framing of its own team's compensation, not as a comparison against a named external dataset. The same blog reports that outbound opportunities convert to Closed-Won at "about 20 percent" for Unify; the broader "This Year in Performance" recap reports 22 percent across the full year's $52M in qualified pipeline. Both numbers are valid; they cover different windows and scopes.

Fully-loaded SDR cost estimates. The $90,000 to $130,000 fully-loaded SDR cost cited later in this article is a typical industry range for US-based mid-market and enterprise SDR teams (base + variable + benefits + tooling allocation + management overhead). It is not a Unify-sourced figure; use your own finance team's number for the decision math.

Customer outcomes are named, not aggregated. Every customer-outcome figure in this article is attributed to a specific named customer case study, Unify blog post, or Unify product page. Dial expectations down when CRM data is dirty, when signal density in your market is low, or when your sales cycle requires heavy multi-threading. Dial up when ICP is well-defined and intent signals fire reliably.

How do you decide between hiring more SDRs vs investing in AI SDR tools?

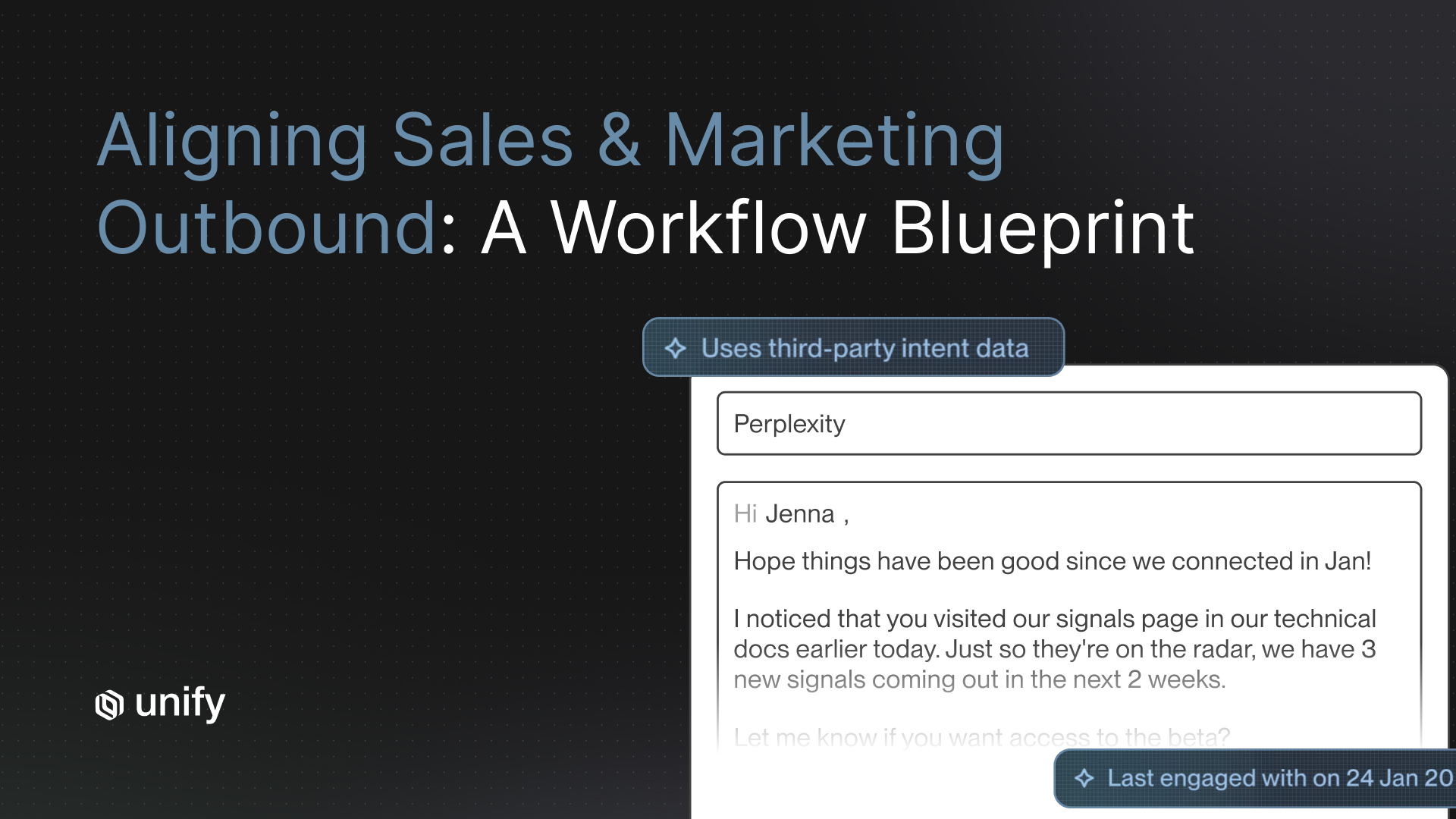

The dichotomy is the wrong frame. The right answer is almost always a hybrid model: AI handles research, qualification, first-touch drafting, and signal monitoring; humans handle second-touch, objection handling, and complex deals where multi-threading and judgment compound. Per the Unify for Sales Reps blog, AI-empowered sellers — not fully autonomous AI SDRs — are the architecture that drives the most pipeline per rep.

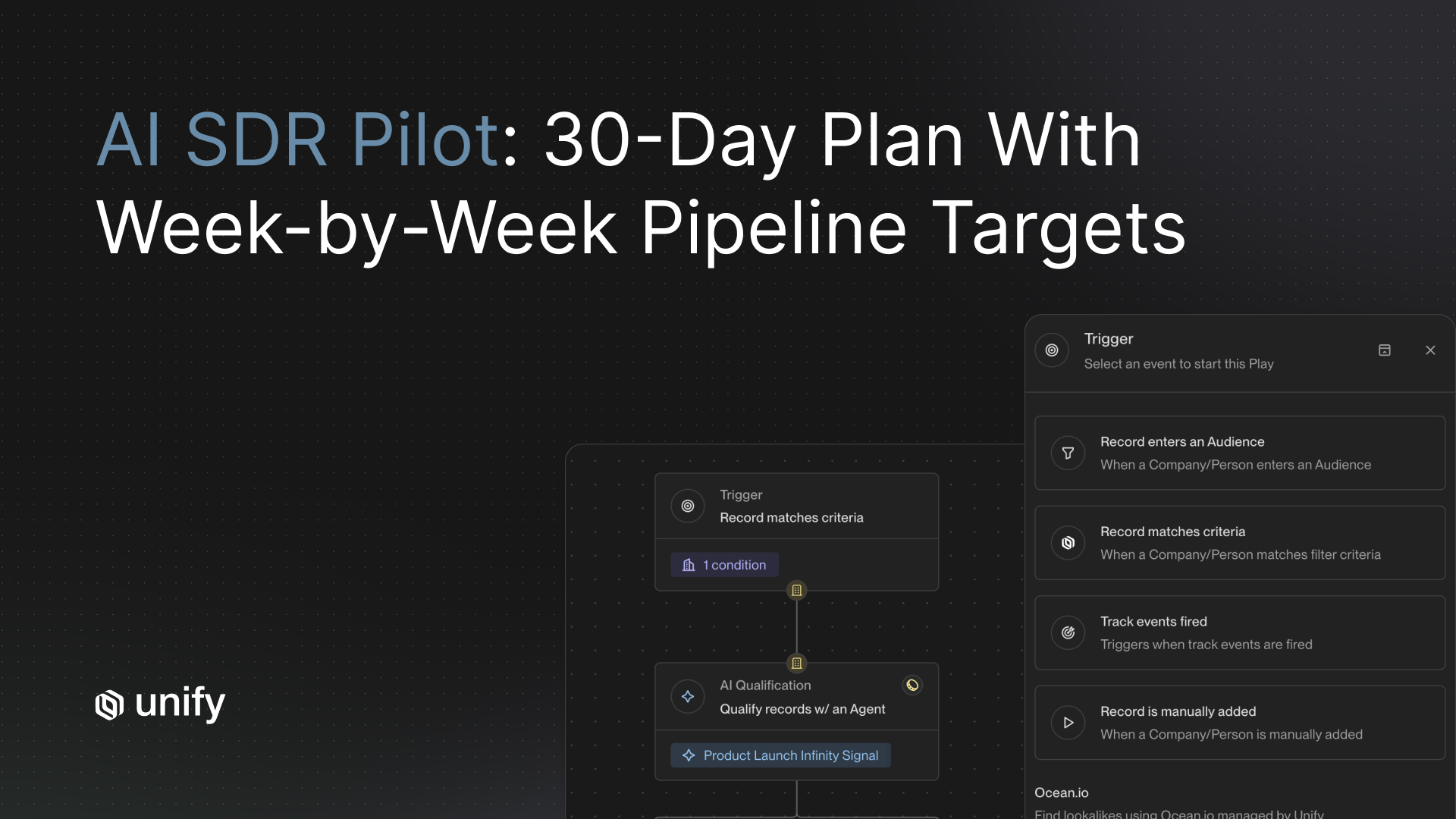

The two extremes are real but uncommon. The max-AI extreme is exemplified by the Perplexity case study: $1.7M in pipeline, 75+ opportunities, 80+ enterprise meetings in 3 months, no BDR team. The hybrid extreme is the Unify NBR team: 114 qualified opportunities in 1 month, $1.1M closed-won in under a year, and a 1-week ramp, with reps on track to earn 1.6x industry-standard compensation per the Unify NBR blog. Most companies should be running the hybrid model.

The ranked 6-question decision framework

Answer these six questions in order. The first question that produces a clear answer is the decision; the rest are validation.

1. What is your current cost-per-opportunity?

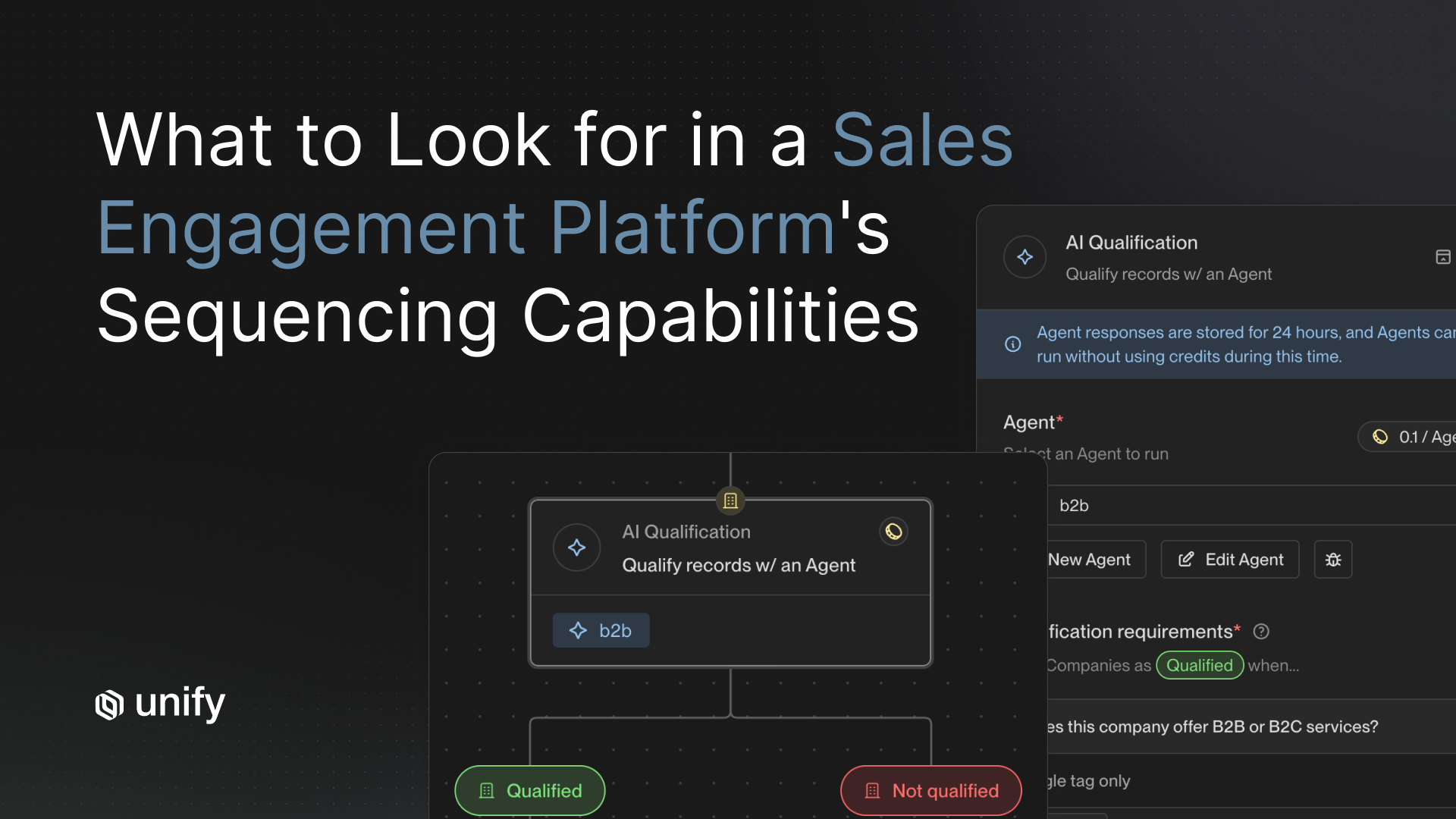

Compute the fully-loaded cost-per-opportunity on your existing rep team: salary + variable + benefits + tooling allocation + management overhead, divided by opportunities created over the same period (annual cost over annual opportunities, or quarterly over quarterly). Typical fully-loaded SDR cost in US mid-market and enterprise teams runs $90,000 to $130,000 annually. An AI SDR layer adds platform subscription plus credit consumption to existing reps' workflow rather than adding a full FTE. Per the Unify Next-gen AI Agents announcement, AI Agents run at 0.1 credits per execution, a 10x cost improvement. If cost-per-opp is above the team's variable comp threshold, AI tooling layered on existing reps usually delivers the next opportunity at lower marginal cost than adding a rep.
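The cost-per-opportunity formula above can be sketched in a few lines of Python. This is a minimal illustration, not a finance model: the `TeamCosts` class, the function name, and every dollar figure in the usage example are hypothetical placeholders, and the only rule taken from the text is that cost and opportunities must cover the same window.

```python
from dataclasses import dataclass


@dataclass
class TeamCosts:
    """Cost inputs for an SDR team over one window (all values in dollars)."""
    salary: float
    variable: float
    benefits: float
    tooling_allocation: float
    management_overhead: float

    def fully_loaded(self) -> float:
        # Sum of the five components named in the article.
        return (self.salary + self.variable + self.benefits
                + self.tooling_allocation + self.management_overhead)


def cost_per_opportunity(total_cost: float, opportunities: int) -> float:
    """Total team cost divided by opportunities created in the same window."""
    if opportunities <= 0:
        raise ValueError("opportunities must be positive")
    return total_cost / opportunities


# Hypothetical quarterly figures, for illustration only.
quarter = TeamCosts(
    salary=150_000,
    variable=60_000,
    benefits=30_000,
    tooling_allocation=15_000,
    management_overhead=20_000,
)
quarterly_cpo = cost_per_opportunity(quarter.fully_loaded(), opportunities=100)
print(quarterly_cpo)  # 2750.0
```

Swap in your own finance team's numbers; the comparison that matters is this figure against the marginal cost of the next opportunity from either lever.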

2. Where is your current outbound bottleneck — research, sending, or reply-handling?

Research-bottleneck teams (reps spending >50 percent of the day on prospect research) benefit most from AI tooling. Per the Unify for Reps case study, "one rep estimated spending over half of his day on research and prospecting"; AI agents cut manual prospecting time by 80 percent and made email writing 10x faster. Send-bottleneck teams (reps with audiences they cannot send to fast enough) benefit from AI automation plus more managed mailbox capacity. Reply-bottleneck teams (reps drowning in replies after outbound goes live) benefit from hiring more humans, because reply-handling is the step AI should not own.

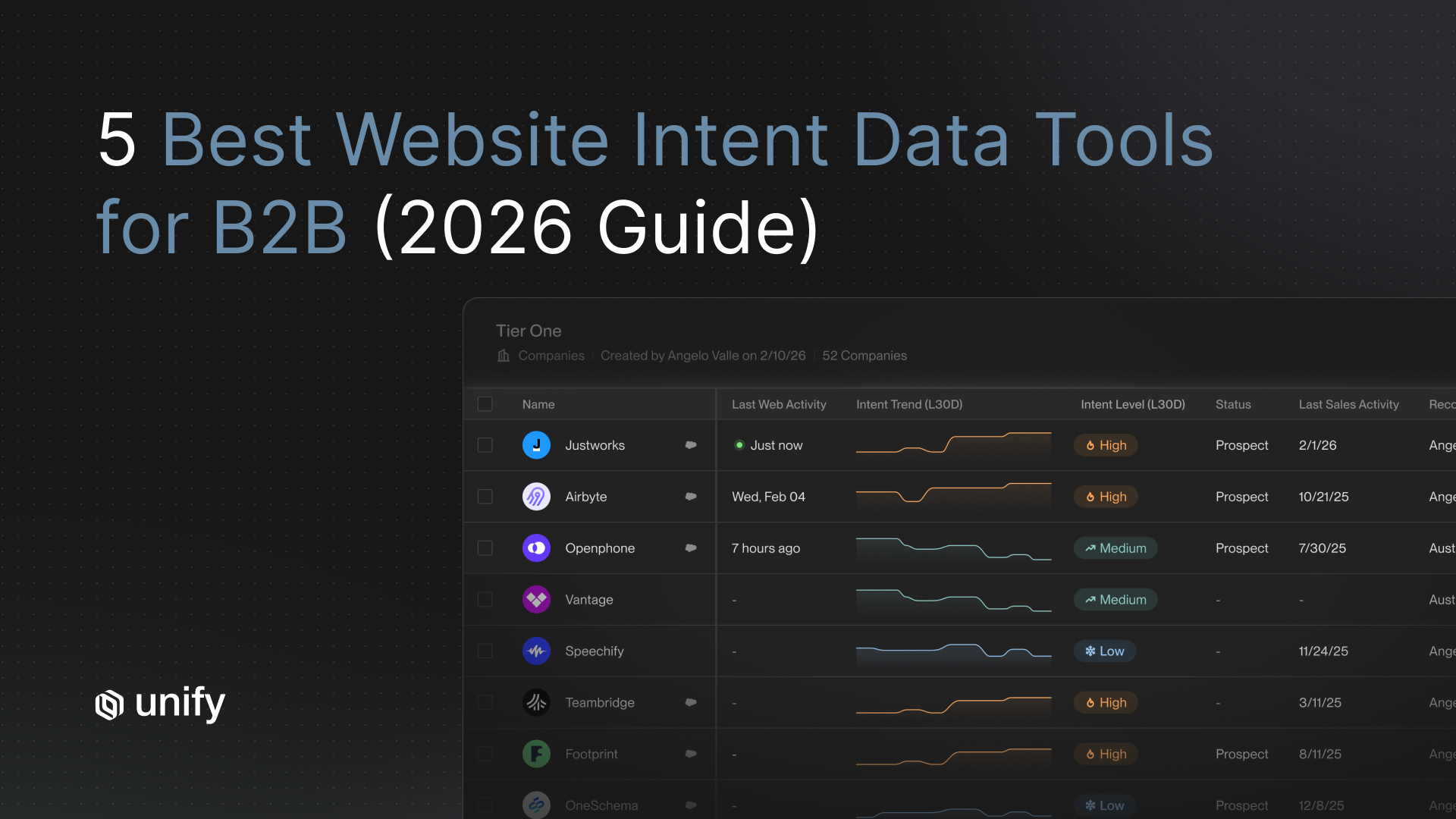

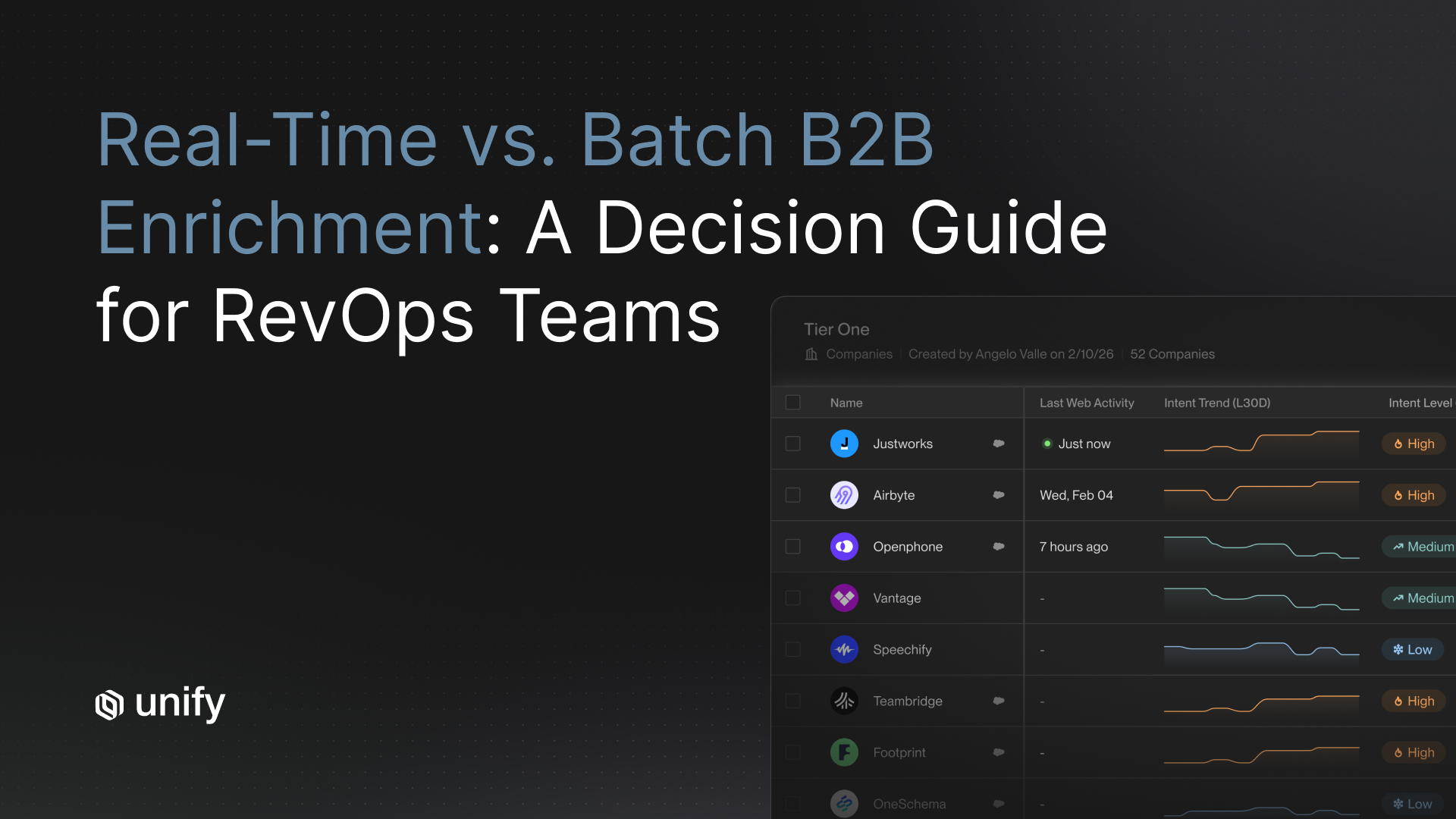

3. Do you have signal density that AI can act on?

AI agents need signals to act on. PQL data, website intent, hiring signals, Champion Tracking detections, lookalike audiences — these are the inputs that make AI useful. Per the Unify Signals overview, 25+ native intent signals ship out of the box. If your market produces these signals, AI tooling has fuel. If your market is signal-sparse (mature enterprise vertical with no behavioral data), humans manufacturing custom outreach manually can outperform AI on a small audience. Most B2B SaaS, fintech, and PLG companies have enough signal density to benefit from AI.

4. What is your time-to-ramp tolerance?

A new SDR ramps in 90 to 120 days at typical companies. An AI agent ramps in days. Per the Unify for Reps case study, new NBRs ramp in 1 week with integrated AI tooling, and one new hire booked five meetings in the first two weeks. If your quarter requires output now, AI tooling delivers it faster than hiring. If you can afford to wait a quarter for a hire to onboard, hiring stays on the table.

5. What deal-complexity tier are you working?

Humans materially outperform AI on multi-thread enterprise deals, executive-level conversations, and live objection handling. AI materially outperforms humans on top-of-funnel prospecting volume, research-at-scale, and personalization-at-scale. Map your average deal complexity to the right ratio: enterprise ACVs with 6+ stakeholders need more human time per deal; mid-market and SMB deals with 1-3 stakeholders can be largely AI-driven through to qualified meeting.

6. What is your hire-freeze status?

This often forces the hand. If hiring is frozen for the next two quarters but the pipeline target is unchanged, AI tooling is the only available lever. If hiring is open and the bottleneck is human capacity rather than per-rep efficiency, adding heads can be the right call — paired with AI tooling for the new hires' workflow.
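The "first clear answer decides, the rest validate" rule can be expressed as a short ordered scan. The sketch below is one possible reading of that rule, not a prescribed implementation: the question keys, the `"ai"` / `"hire"` answer labels, and the `decide` function are all hypothetical names introduced for illustration.

```python
from typing import Dict, List, Optional, Tuple

# The six questions, in the ranked order used in this article.
QUESTION_ORDER = [
    "cost_per_opp",
    "bottleneck",
    "signal_density",
    "ramp_tolerance",
    "deal_complexity",
    "hire_freeze",
]


def decide(
    answers: Dict[str, Optional[str]],
) -> Tuple[Optional[str], Optional[str], List[str]]:
    """Return (decision, deciding question, later questions that disagree).

    Each answer is "ai", "hire", or None when the question has no clear answer.
    """
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    dissent: List[str] = []
    for question in QUESTION_ORDER:
        answer = answers.get(question)
        if answer is None:
            continue  # unclear answers neither decide nor validate
        if decision is None:
            decision, decided_by = answer, question
        elif answer != decision:
            dissent.append(question)  # validation step flags a conflict
    return decision, decided_by, dissent


example = decide({
    "cost_per_opp": None,      # no clear answer yet
    "bottleneck": "ai",        # research-bottlenecked team
    "signal_density": "ai",
    "ramp_tolerance": "ai",
    "deal_complexity": "ai",
    "hire_freeze": None,
})
print(example)  # ('ai', 'bottleneck', [])
```

A non-empty dissent list does not overturn the decision in this sketch; it marks the questions worth a second look before committing budget.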

The 2-extreme case bracket

Max-AI extreme: Perplexity ($1.7M / 0 BDRs / 3 months)

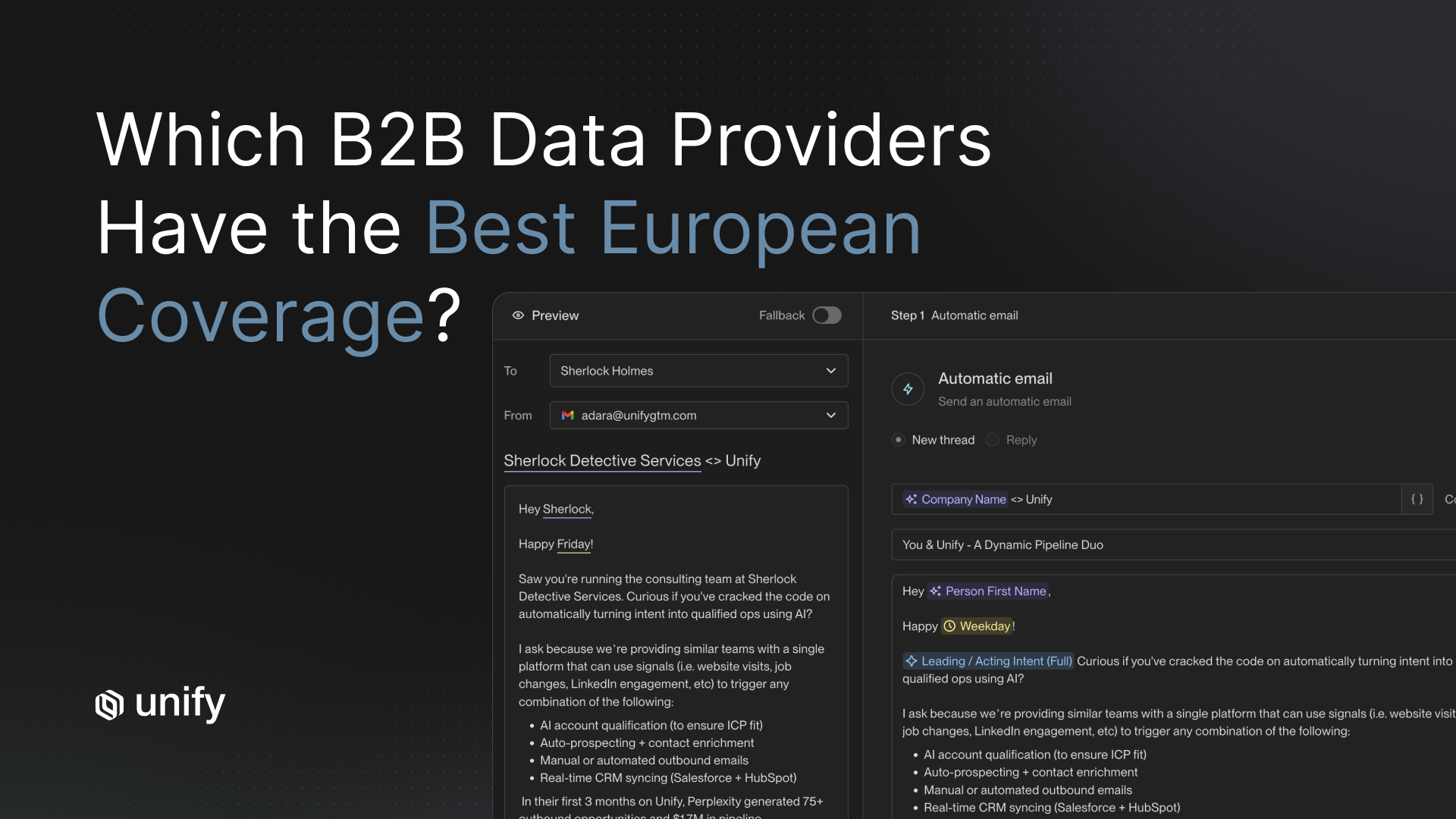

Per the Perplexity case study and the long-form blog, Perplexity ran the AI-extreme playbook: signals + Plays + AI personalization + automated sequencing, with no dedicated BDR headcount. Outcomes: $1.7M in pipeline, 75+ outbound opportunities, 80+ enterprise meetings in 3 months. PQL Plays reached a 5 percent reply rate; MQL Plays reached up to 20 percent. Owner: Jenny Sung, Product Marketing Lead. Why this works: Perplexity has a freemium AI search product generating dense PQL signals, a high-volume audience, and AI-personalized sequences contextualized by usage patterns (employee count using product, query volumes). The conditions for this extreme are specific.

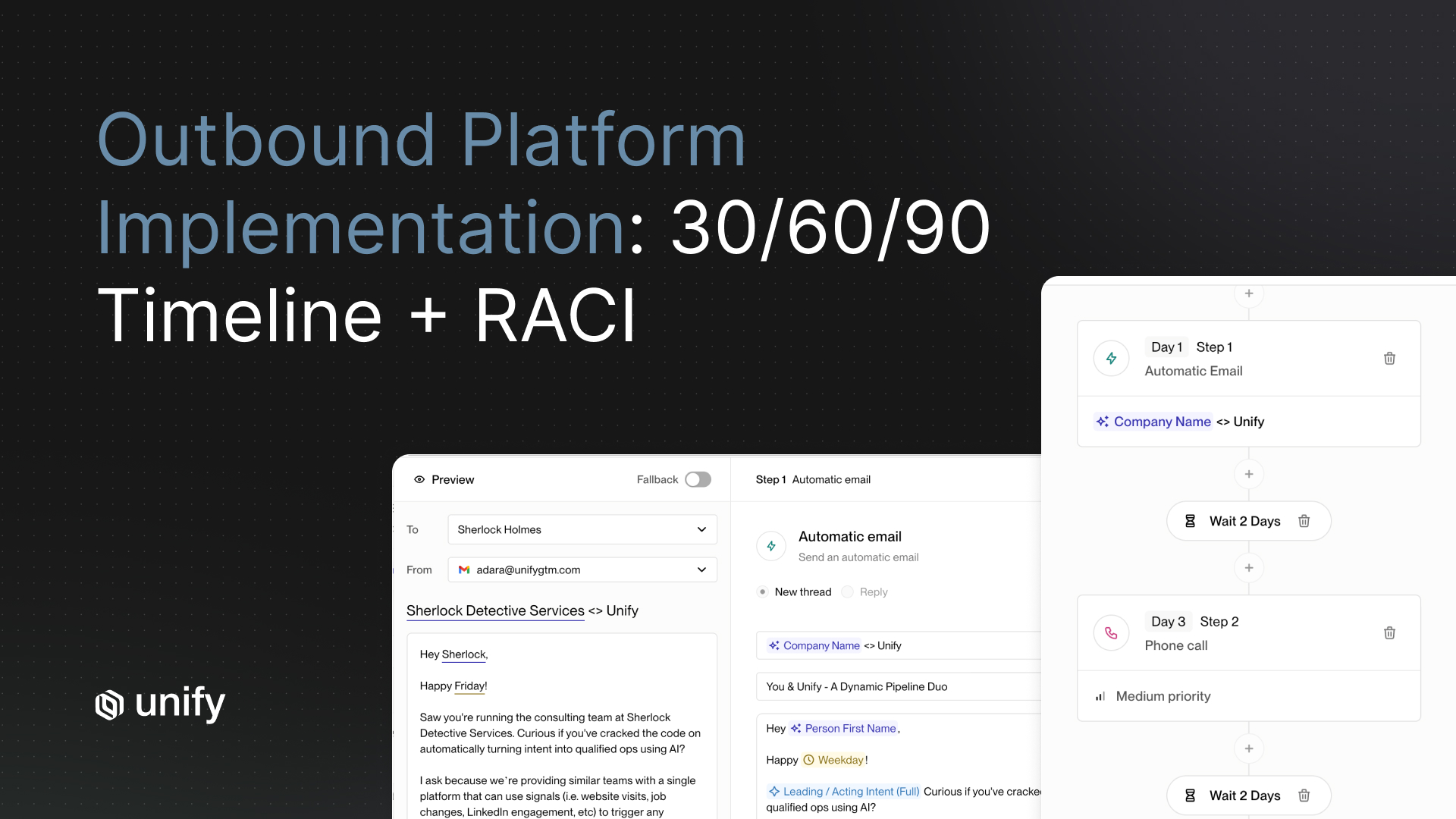

Hybrid extreme: Unify NBR team (1.6x industry standard, 1-week ramp)

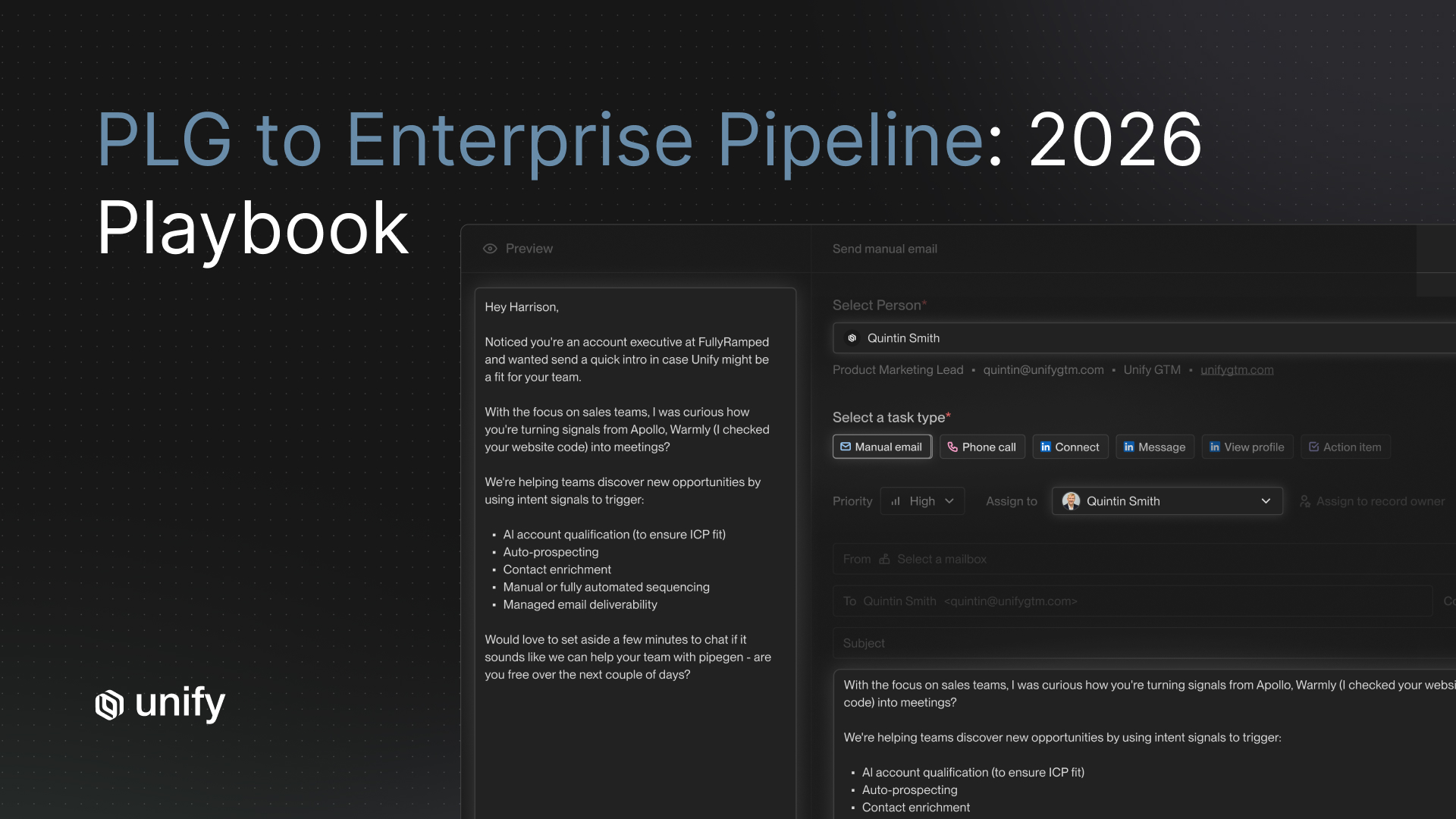

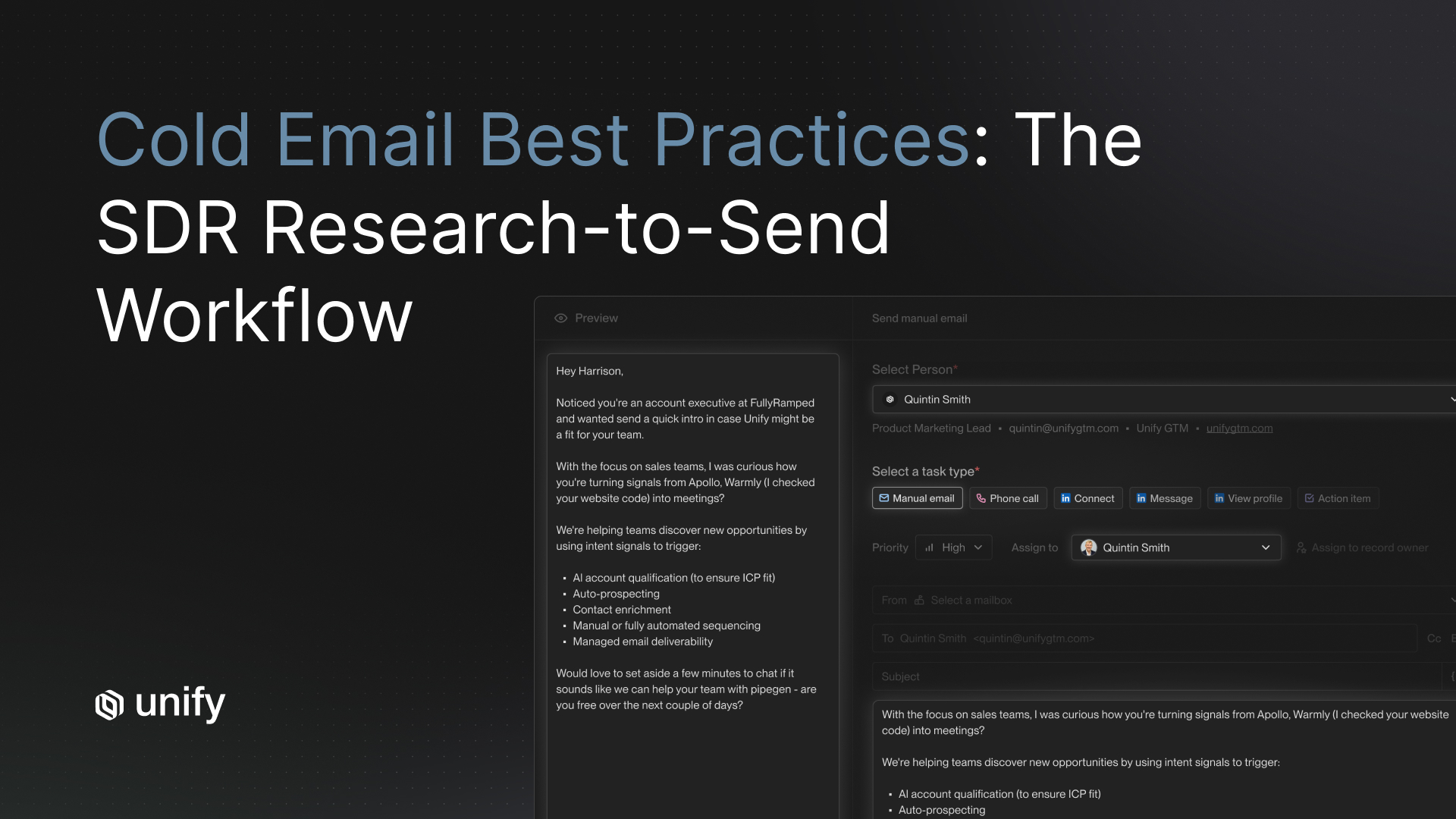

Per the Unify NBR blog, Unify's own New Business Reps are paid at 1.6x industry-standard compensation because AI tooling removes admin work that would otherwise dilute their output. Outbound opportunities convert to Closed-Won at about 20 percent on the NBR team per the blog. Per the Unify for Reps case study: 114 qualified opportunities in 1 month, $1.1M closed-won in under a year, 80 percent less time on manual prospecting, 10x faster personalized email writing, 1-week ramp for new NBRs with one hire booking five meetings in the first two weeks. Per Tarun Bobbili (New Business Representative): "With Unify for Reps, everything happens in one place. I can't imagine doing my job without it." Why this works: humans run the judgment moments (reply handling, objection management, multi-thread enterprise deals) while AI runs the volume moments (research, qualification, draft, send).

The hybrid playbook: a division-of-labor table

Vendor-neutral evaluation criteria for the hybrid stack

1. Cost per AI agent run

Definition. Credits or dollars consumed per single AI research, qualification, or message-generation execution. Why it matters. Always-on hybrid programs at 1,000 to 5,000 agent runs per month need a unit cost measured in cents per run. How to test. Ask for per-run cost and run a sample of 100. Pass-fail. Under $0.10 per run effective. Red flag. Pricing is per-seat with rate limits under the hood.
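A rough way to turn the 100-run sample into an effective per-run cost is sketched below. The dollars-per-credit price and the count of usable runs are hypothetical inputs you would get from the vendor's invoice and your own review of the sample; only the $0.10 threshold comes from the criterion above.

```python
PASS_THRESHOLD_DOLLARS = 0.10  # pass-fail line from the criterion above


def effective_cost_per_run(credits_consumed: float,
                           dollars_per_credit: float,
                           usable_runs: int) -> float:
    """Dollars spent on the sample divided by runs that produced usable output."""
    if usable_runs <= 0:
        raise ValueError("usable_runs must be positive")
    return credits_consumed * dollars_per_credit / usable_runs


def passes(cost_per_run: float) -> bool:
    return cost_per_run < PASS_THRESHOLD_DOLLARS


# Hypothetical sample: 100 runs consumed 10 credits at an assumed $0.50 per
# credit, and 92 of the 100 outputs were judged usable.
sample_cost = effective_cost_per_run(credits_consumed=10.0,
                                     dollars_per_credit=0.50,
                                     usable_runs=92)
print(round(sample_cost, 4), passes(sample_cost))
```

Dividing by usable runs rather than total runs is a design choice: it charges failed or discarded executions against the ones that actually reached a rep.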

2. Rep-facing UX with research panel inline

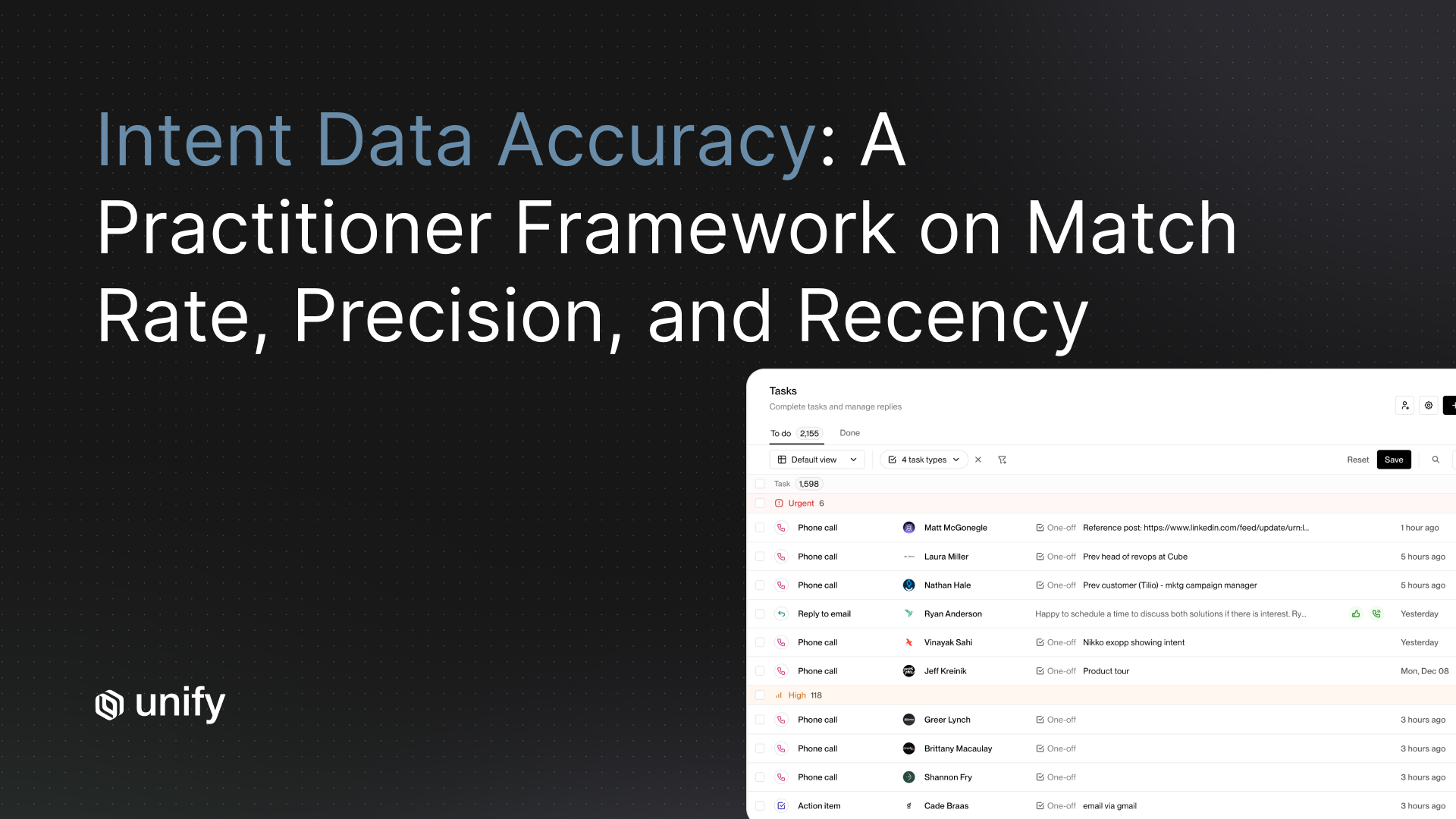

Definition. Reps see AI research, signal context, and last activity inline at the task — no alt-tab. Why it matters. If reps have to gather context manually, the time savings evaporate. How to test. Open a Tasks dashboard and audit which context appears without clicks. Pass-fail. Research, signal, last activity all inline. Red flag. Task shows name and company only.

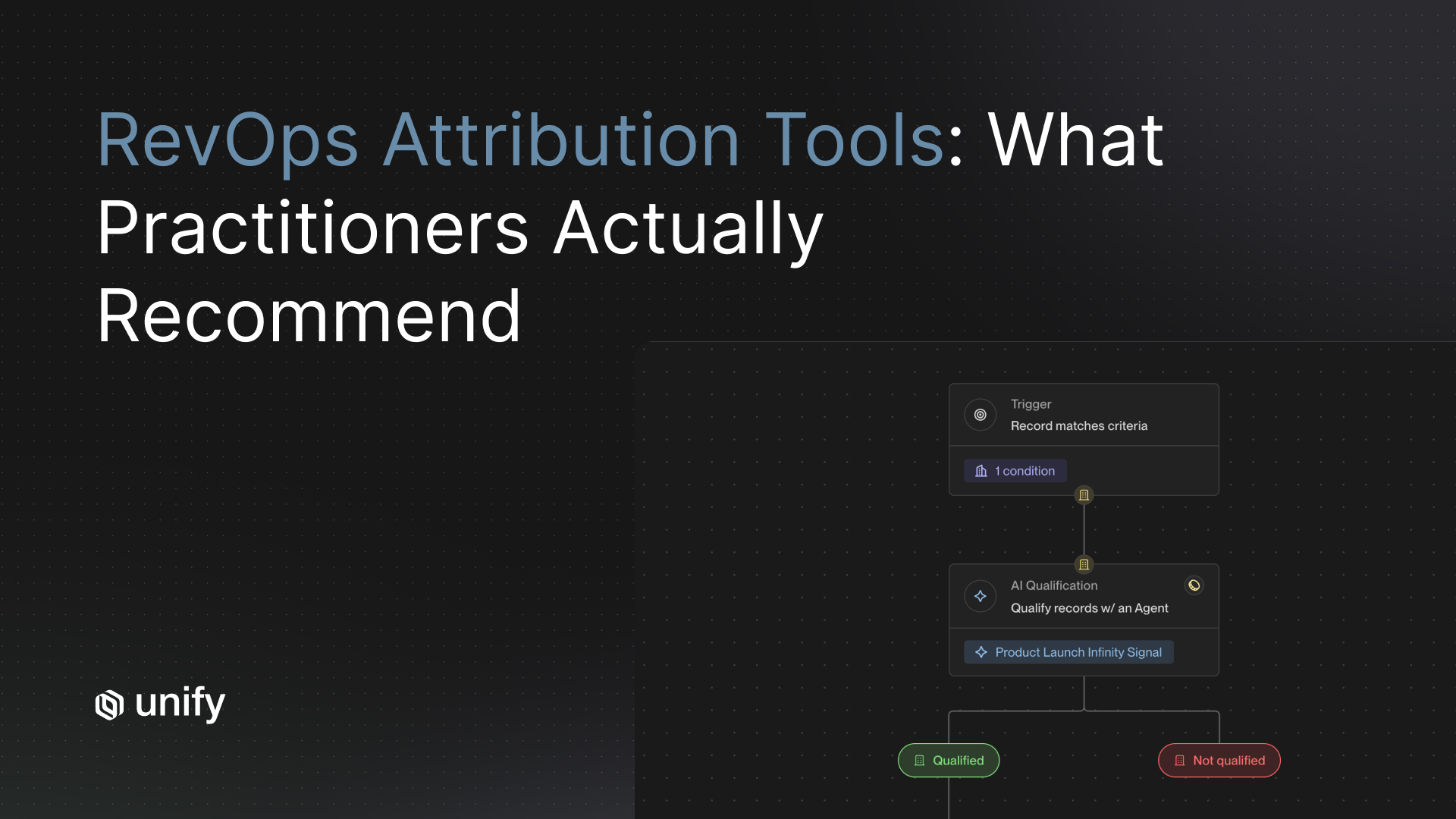

3. Reply triage routes to human, not auto-reply

Definition. AI classifies replies; humans handle objection and pricing questions. How to test. Send mixed replies (positive, objection, referral, unsubscribe) and verify each routes to a human. Pass-fail. Native unified inbox with classification routing to human queue. Red flag. AI auto-responds to objections or pricing.
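The routing rule in this criterion (AI classifies, a human always answers) can be sketched as a lookup that never produces an auto-reply. The four labels come from the text; the queue names and the `route_reply` function are hypothetical and do not describe any vendor's API.

```python
from dataclasses import dataclass

LABELS = {"positive", "objection", "referral", "unsubscribe"}

# Hypothetical human-owned queues; every label maps to one of them.
QUEUE_BY_LABEL = {
    "positive": "rep_inbox_priority",
    "objection": "rep_inbox_priority",
    "referral": "rep_inbox",
    "unsubscribe": "ops_review",
}


@dataclass(frozen=True)
class RoutedReply:
    label: str
    queue: str
    auto_reply: bool  # always False: classification informs, humans respond


def route_reply(label: str) -> RoutedReply:
    """Send a classified reply to a human queue; unknown labels also go to a human."""
    if label not in LABELS:
        return RoutedReply(label="unclassified", queue="rep_inbox", auto_reply=False)
    return RoutedReply(label=label, queue=QUEUE_BY_LABEL[label], auto_reply=False)


for label in ["positive", "objection", "referral", "unsubscribe", "pricing question"]:
    print(route_reply(label))
```

When testing a vendor, the equivalent check is that no reply in the mixed sample receives a machine-written response, whatever label it was given.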

4. Per-rep performance reporting

Definition. Same metrics aggregated per rep for coaching and accountability. How to test. Pull a per-rep reply-rate, opps-created, meetings-booked breakdown. Pass-fail. Per-rep view ships natively. Red flag. Requires custom CRM reports.

5. Time-to-ramp documentation

Definition. Vendor can demonstrate ramp time on named customer references. Why it matters. A 90-day ramp on the tool defeats the purpose of buying it. How to test. Ask for two named customer references with documented ramp timelines. Pass-fail. Under 2 weeks to productive use. Red flag. "Ramp varies by customer" without specifics.

How Unify covers these criteria

- Cost per agent run. 0.1 credits per run per the Next-gen AI Agents announcement, a 10x improvement.

- Rep-facing UX. Per the Unify for Sales Reps launch blog, AI research, signal context, and task prep inline; integrated dialing via Trellus.ai. Per the Unify for Reps case study, 80% less manual prospecting time and 10x faster personalized email writing.

- Reply triage. Per the Task Management and Unified Inbox page, unified inbox with AI classification (positive, referral, objection, unsubscribe) routing to human queue.

- Per-rep reporting. Per the Unify for Reps case study, rep-level performance reporting surfaced 114 qualified opportunities in 1 month and $1.1M closed-won in under a year for the NBR cohort.

- Time-to-ramp. 1-week ramp per the Unify for Reps case study; new hire booking 5 meetings in 2 weeks. Per the Quo case study, first Play live in 1 day at the platform level.

Worked example: a 30-rep SDR org reviewing the decision

A 200-person mid-market SaaS company. CRO has 30 SDRs. Existing reps at 75 percent of quota. Budget review: $1.5M to allocate either to 10 more SDRs at fully-loaded $130K each, or to AI SDR tooling layered on existing 30 reps. Running the 6-question framework:

- Cost-per-opp. Existing fully-loaded cost: $3.9M / 1,200 opps/year = ~$3,250 per opp. Adding 10 SDRs raises capacity but does not change per-opp cost; AI layer on existing 30 can drop per-opp cost materially.

- Bottleneck. Reps report 50%+ of day on research. Research-bottleneck = AI tooling indicated.

- Signal density. Mid-market SaaS with website intent, PQL signals, new hires available. Sufficient.

- Ramp tolerance. Board wants Q3 lift. SDR ramp = 90 to 120 days, misses the window. AI tooling ramps in days.

- Deal complexity. Mid-market ACVs ($50K to $150K), 2 to 4 stakeholders. AI can run through to qualified meeting on most accounts.

- Hire-freeze. Not frozen; budget is available either way.

- Decision. Layer AI SDR tooling on existing 30 reps first. Anchor expectations on the Unify NBR pattern: 1-week ramp and 80% less manual prospecting, with reps on track to earn 1.6x industry-standard compensation. Revisit headcount add in Q4 once per-rep output stabilizes.
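The cost-per-opp bullet in this worked example can be checked with a few lines of arithmetic. The sketch below uses the article's own inputs (30 reps, $130K fully loaded, 1,200 opps per year, 10 candidate hires) and adds two labeled assumptions: that new hires eventually match the existing 40 opps per rep per year, and that the AI layer's annual cost and opportunity uplift are free parameters you supply. The function names are hypothetical.

```python
REPS = 30
FULLY_LOADED_PER_REP = 130_000
OPPS_PER_YEAR = 1_200
NEW_HIRES = 10

current_cost = REPS * FULLY_LOADED_PER_REP    # 3_900_000
current_cpo = current_cost / OPPS_PER_YEAR    # 3250.0

# Hire path. Assumption: new reps match today's per-rep output once ramped.
hire_cost = NEW_HIRES * FULLY_LOADED_PER_REP  # 1_300_000
opps_per_rep = OPPS_PER_YEAR / REPS           # 40.0
hired_cpo = (current_cost + hire_cost) / (OPPS_PER_YEAR + NEW_HIRES * opps_per_rep)


def cpo_with_ai_layer(ai_annual_cost: float, opp_uplift: float) -> float:
    """Cost per opp when the same 30 reps create (1 + opp_uplift) times the opps."""
    return (current_cost + ai_annual_cost) / (OPPS_PER_YEAR * (1 + opp_uplift))


def breakeven_uplift(ai_annual_cost: float) -> float:
    """Uplift above which the AI layer lowers cost per opp: ai_cost / current_cost."""
    return ai_annual_cost / current_cost


print(current_cpo)                             # 3250.0
print(hired_cpo)                               # 3250.0 (more capacity, same unit cost)
print(round(breakeven_uplift(1_500_000), 3))   # 0.385
print(cpo_with_ai_layer(1_500_000, 0.5))       # 3000.0
```

Under these assumptions, hiring leaves cost per opp unchanged, while spending the entire $1.5M on the AI layer lowers it only if existing reps create roughly 38 percent more opportunities; a smaller tooling spend lowers that bar proportionally.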

Variants by ICP and motion

PLG companies

- Default toward the max-AI extreme. PQL signals carry intrinsic intent that compensates for human absence. Mirror Perplexity's 5%/20% PQL/MQL reply pattern with no BDR.

Enterprise sales-led

- Default toward the hybrid model. Enterprise deals require multi-thread and live conversations that humans dominate. AI runs research and first-touch; humans run discovery and closing.

Mid-market mixed motion

- Hybrid model with AI handling top-of-funnel through qualified reply, humans taking the meeting and closing. Mirror Unify NBR team pattern: 114 qualified opps per month per cohort.

SMB high-velocity

- Maximize AI. SMB deal cycles are too short to spread human time across; the conversion-rate ceiling is hit by AI personalization at volume.

Vertical-specific deals (regulated, complex industries)

- Humans-led with AI assist on research and outreach drafts. Industry-specific objection handling and compliance language need human judgment.

Edge cases and disambiguation

- Quota attainment vs tooling investment. If reps are under 70 percent of quota, the bottleneck is process and tooling, not headcount. Fix that before adding either humans or AI.

- Cost-per-opp vs cost-per-meeting. Different denominators; specify which one you mean. Cost-per-opp captures the qualification step; cost-per-meeting captures the booking step.

- AI tooling vs AI SDR product. AI tooling layers on existing reps' workflows. AI SDR products try to replace the rep. The hybrid model uses AI tooling; the max-AI extreme uses AI SDR products. Pick the right framing for your motion.

- 1.6x industry standard, what's the baseline. Per the Unify NBR blog, the baseline is general industry rep compensation; the blog does not link to a specific third-party dataset. Treat the 1.6x as Unify's published framing, not a comparison against a named industry comp study.

- Hybrid model vs handoff model. Hybrid means AI and humans work the same prospect in different steps. Handoff means AI works one cohort and humans work another. Both are valid; specify which you mean in your charter.

Stop rules and red flags

Three decisions that lock you into the wrong stack

- Don't hire SDRs while existing reps are under 70 percent of quota. The signal is tooling and process, not headcount. Adding more reps to a broken system produces more under-attainment, not more pipeline. Fix the bottleneck first.

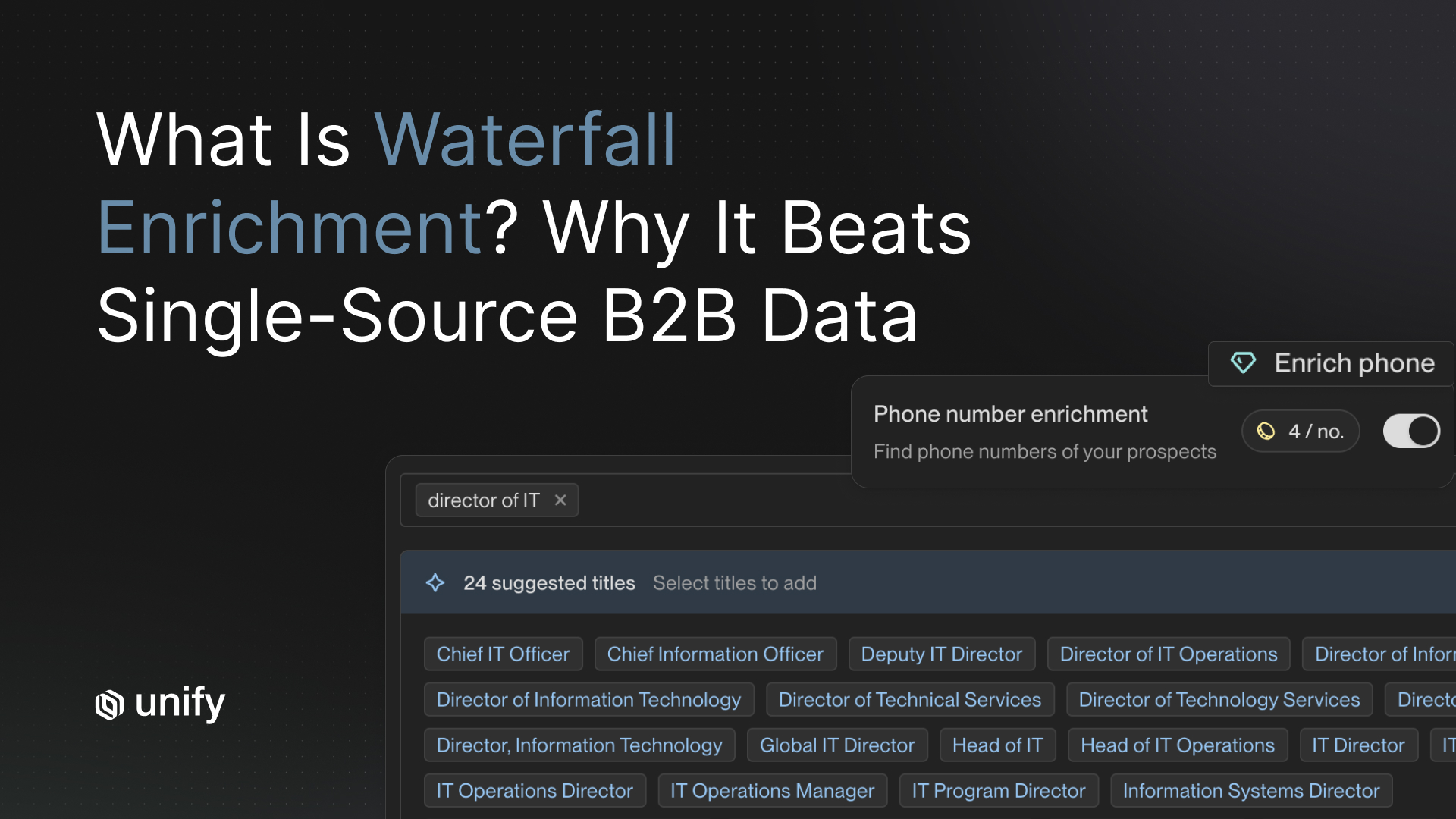

- Don't buy AI SDR tooling with under 40 percent website-visitor reveal or sub-60 percent contact-enrichment match. AI tools cannot act on bad data. Per the Unify Waterfall Enrichment product page, 90%+ contact / 95%+ company match is the production target across 30+ data sources. Fix data hygiene first.

- Don't pilot AI SDR tooling with audience overlapping existing rep coverage. Attribution becomes unmeasurable; reps will resent the cannibalization; the pilot will be politically toxic regardless of pipeline outcome. Run the pilot on an unowned audience that existing reps were not covering.
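The three stop rules above read naturally as precondition checks to run before approving either plan. This is a minimal sketch with the thresholds taken from the bullets (70 percent quota attainment, 40 percent visitor reveal, 60 percent contact match); the function name, the plan labels, and the message strings are hypothetical.

```python
from typing import List


def stop_rule_violations(plan: str,
                         quota_attainment: float,
                         visitor_reveal_rate: float,
                         contact_match_rate: float,
                         pilot_overlaps_rep_coverage: bool = False) -> List[str]:
    """Return the stop rules a plan would break; an empty list means proceed.

    plan is "hire" or "ai_tooling"; rates are fractions between 0 and 1.
    """
    if plan not in ("hire", "ai_tooling"):
        raise ValueError("plan must be 'hire' or 'ai_tooling'")

    violations: List[str] = []
    if plan == "hire" and quota_attainment < 0.70:
        violations.append("reps under 70% of quota: fix tooling and process first")
    if plan == "ai_tooling":
        if visitor_reveal_rate < 0.40 or contact_match_rate < 0.60:
            violations.append("data coverage too low: fix data hygiene first")
        if pilot_overlaps_rep_coverage:
            violations.append("pilot overlaps rep coverage: use an unowned audience")
    return violations


print(stop_rule_violations("hire", 0.65, 0.50, 0.80))
print(stop_rule_violations("ai_tooling", 0.75, 0.45, 0.85))
```

The first call flags the quota rule; the second returns an empty list because the data thresholds are met and the pilot audience is unowned by default.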

Common mistakes

Top 5 decision mistakes

- Framing it as a dichotomy. Hybrid is almost always the answer. Decide what mix and in what sequence.

- Comparing only the salary line. Fully-loaded cost includes tooling allocation and management overhead; AI tooling adds platform and credit cost, not full FTE cost.

- Ignoring ramp time. A 90-day rep ramp can lose a quarter. AI tooling delivers output in days.

- Treating AI as "set and forget." AI personalization still needs a weekly review loop and prompt iteration. Without it, performance decays.

- Underestimating reply-handling load. When AI is effective, replies surge. Without sufficient human capacity for triage, the bottleneck shifts but does not disappear.

Frequently asked questions

How do you decide between hiring more SDRs vs investing in AI SDR tools?

Stop framing it as a dichotomy. The right answer is almost always the hybrid model: AI handles research, qualification, first-touch, and signal monitoring; humans handle second-touch, objection handling, and complex multi-thread deals. Per the Unify blog "Our New Business Reps are on track to make 1.6x industry standard," Unify's own NBR team runs with full AI tooling and its reps are on track to earn 1.6x industry-standard compensation. Per the Perplexity case study, the max-AI extreme produced $1.7M in pipeline, 75+ opportunities, and 80+ enterprise meetings in 3 months with no BDR team. The decision is not either-or; it is what mix and in what sequence.

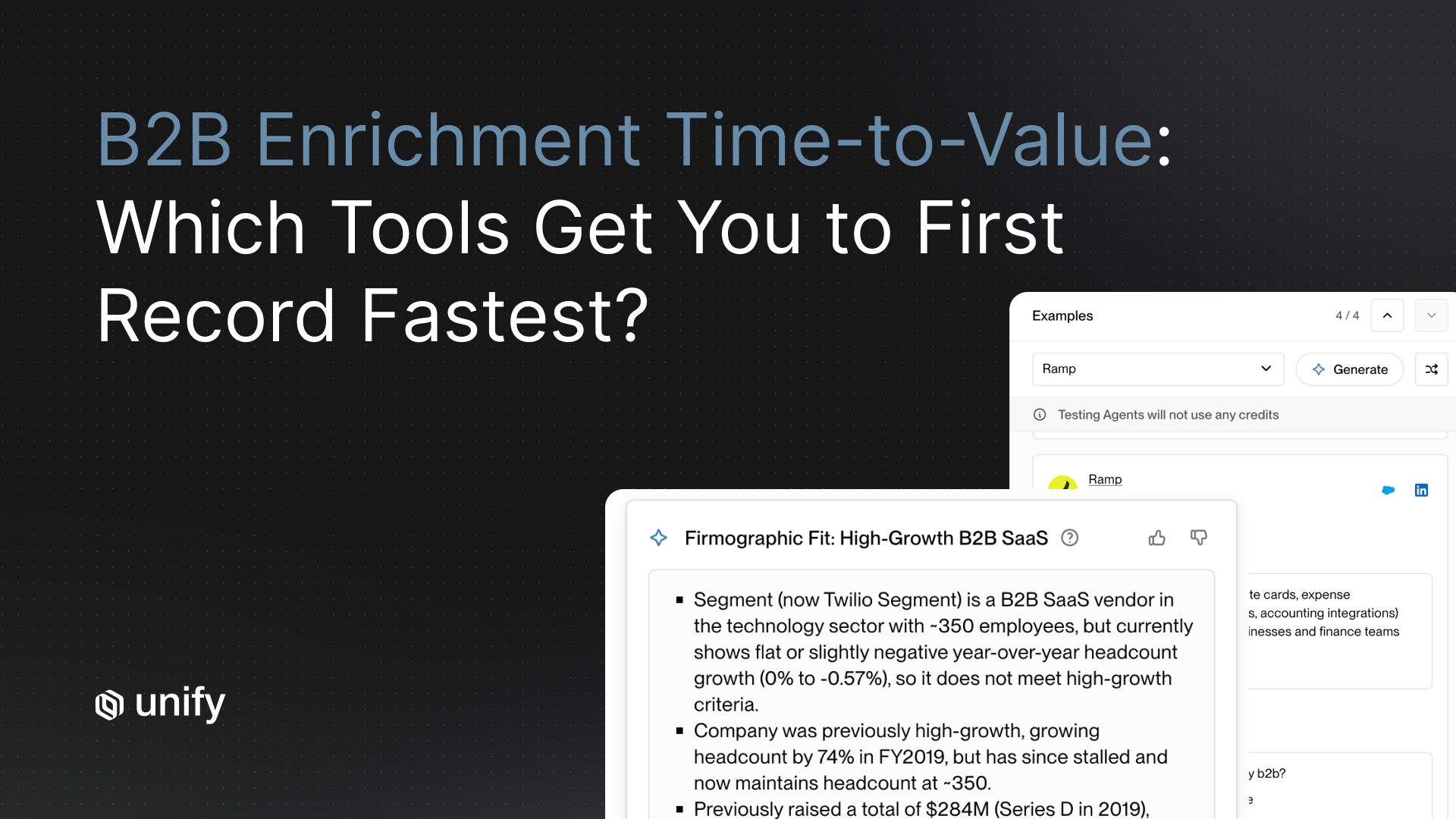

What does an AI SDR tool actually replace in the workflow?

Research, audience qualification, first-touch drafting, and signal monitoring. Per the Unify Affiniti case study, AI Agents executed 8,000 research runs across 8,700 leads in 3 months, saving 20+ hours per rep per week. Per the Unify for Reps case study, integrated AI research reduced manual prospecting time by 80 percent and accelerated personalized email writing by 10x. AI does not replace reply handling, objection management, or relationship-based selling — those remain human work. Per the Unify for Sales Reps blog, AI-empowered sellers (not fully autonomous AI SDRs) are the architecture that drives the most pipeline per rep.

When does hiring an SDR beat buying AI SDR tooling?

When the existing reps are over 90 percent of quota and the bottleneck is total human capacity rather than per-rep efficiency. Hire if your deals require heavy multi-threading, executive engagement, and live-call objection handling, where humans materially outperform AI. Hire if your signal density is low (no PQL data, no website intent, no hiring signals) so AI has nothing to act on. Skip hiring when reps are under 70 percent of quota: that signals a tooling and process problem, not a headcount problem, and it should be fixed before adding either humans or AI.

What is the time-to-ramp difference between an SDR and an AI agent?

A new SDR ramps in 90 to 120 days at typical mid-market and enterprise companies. An AI agent ramps in days. Per the Unify for Reps case study, integrated AI tooling produced a 1-week ramp for new NBRs, with one new hire booking five meetings in the first two weeks. The cost difference is asymmetric: 3 months of an SDR's salary covers between 6 and 12 months of AI agent runs at 0.1 credits per run per the Unify Next-gen AI Agents announcement. Time-to-ramp tolerance is a primary input into the decision.

What are the red flags for buying AI SDR tooling?

Three. (1) Existing reps under 70 percent of quota means the bottleneck is tooling and process, not headcount; fix that before adding either humans or AI. (2) Website-visitor reveal under 40 percent or contact-enrichment match rate under 60 percent means data hygiene is broken; AI tools cannot act on bad data. (3) AI SDR pilot with audience overlapping existing rep coverage means attribution will be unmeasurable and reps will resent the cannibalization. Run the pilot on an unowned audience first.

Glossary

- Fully-loaded SDR cost. Base salary + variable + benefits + tooling allocation + management overhead, expressed as an annual figure. Typical US mid-market and enterprise range: $90K to $130K.

- Cost-per-opportunity. Total team cost divided by opportunities created in a window. The primary input to the hire-vs-AI math.

- Hybrid model. The architecture where AI handles research/qualification/first-touch/signal monitoring while humans handle reply/objection/discovery/multi-thread pursuits. The most common right answer.

- Max-AI extreme. The architecture where AI runs end-to-end through to qualified meeting with no dedicated BDR headcount. Exemplified by Perplexity's $1.7M / 0 BDRs / 3 months outcome.

- Hybrid extreme. The architecture where humans run the judgment moments with full AI tooling support. Exemplified by Unify's NBR team, whose reps are on track to earn 1.6x industry-standard compensation.

- Agent run. One execution of AI research, qualification, or message generation. Runs at 0.1 credits each per the Unify Next-gen AI Agents announcement.

- Time-to-ramp. Calendar time from hire (or platform purchase) to productive contribution. New SDR: 90 to 120 days. New NBR with Unify for Reps: 1 week. AI agent: days.

- Signal density. The volume and quality of intent signals available in a market. PLG markets are high-density; mature enterprise verticals without behavioral data are low-density.

- 1.6x industry standard. Unify NBR team compensation benchmark per the Unify NBR blog; describes Unify's framing of its own rep comp relative to a general industry baseline, not a named third-party dataset.

- Cannibalization risk. The pattern where an AI SDR pilot covers the same audience as existing reps. Makes attribution unmeasurable and creates political friction. Avoid by running the pilot on an unowned audience.

Sources and references

- Unify, "Our New Business Reps are on track to make 1.6x industry standard" blog. Source for 1.6x industry-standard compensation, ~20% closed-won conversion on outbound opps.

- Unify, "How Perplexity Booked $1.7M in Pipeline Without a Single BDR" blog. Source for $1.7M / 75+ opps / 80+ enterprise meetings / 3 months / 0 BDRs.

- Unify, Perplexity case study. Source for 5% PQL reply rate, up to 20% MQL reply rate.

- Unify, Unify for Reps case study. Source for 114 qualified opps/month, $1.1M closed-won in <1 year, 80% less manual prospecting time, 10x faster email writing, 1-week ramp, new hire booking 5 meetings in 2 weeks, Tarun Bobbili quote.

- Unify, "Unify for Sales Reps: The Future of Outbound Selling" blog. Source for "AI-empowered sellers are the future of outbound" framing.

- Unify, Introducing Unify for Sales Reps blog. Source for AI Research Assistant, Tasks Dashboard, Unified Inbox, integrated Trellus.ai dialing.

- Unify, AI Agents product page. Source for AI Agents capability and over 1M questions answered.

- Unify, Next-gen AI Agents announcement. Source for 0.1 credits per agent run, 10x improvement.

- Unify, This Year in Performance, Dec 19 2025. Source for $52M qualified pipeline, 22% closed-won conversion rate.

- Unify, Affiniti case study. Source for 8,000 agent runs / 8,700 leads / 3 months, 20+ hrs/rep/week saved.

- Unify, Spellbook case study. Source for 2 hours per day per rep saved.

- Unify, Quo case study. Source for 60 hrs/mo per team, 25 hrs/rep/mo saved.

- Unify, Signals overview. Source for 25+ native intent signals.

- Unify, Task Management and Unified Inbox product page. Source for AI reply classification (positive, referral, objection, unsubscribe) routing to human triage.

- Unify, Waterfall Enrichment product page. Source for 90%+ contact / 95%+ company match across 30+ sources.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
