TL;DR. Build a lookalike account list in five passes: extract clean closed-won seeds, score on firmographic, technographic, and behavioral layers, scrub false positives, size the list to 1,000-5,000 accounts, and activate it as an outbound Play. For B2B SaaS GTM, RevOps, and Growth teams, expect $100K to $1.7M in influenced pipeline per quarter when activation is tight.

Key Facts at a Glance

Methodology & Limitations. This article synthesizes four named Unify customer case studies (Peridio, Anrok, Guru, and Juicebox) and the Unify Lookalikes launch post, all published between 2025 and 2026, plus operator guidance from Unify's published guides and product pages. Each Unify outcome below is attributed to its specific case study. We do not present a single aggregated "Unify benchmark" across customers because no such unified dataset exists. Outcomes will vary based on TAM size, motion (PLG, sales-led, expansion), data quality, and activation quality. Heavily regulated industries (financial services, healthcare) should dial down behavioral-signal weighting and add compliance overlays. EU and GDPR-sensitive teams should require an opt-in or contractual basis before applying behavioral signals.

What is a lookalike account list?

Direct answer: A lookalike account list is a ranked list of B2B companies that resemble your best closed-won customers across firmographic, technographic, and behavioral signals, scored against a seed list of your strongest accounts. It is built from CRM data, not from a paid-ads audience pixel.

Unlike a paid-media lookalike audience (which Meta or Google builds from anonymous user behavior), a B2B account lookalike list is a deterministic list of company entities. You can ship it to your CRM, hand it to a BDR, or enroll it in an outbound sequence. The unit is the company, not the user.

Three things separate a useful lookalike list from a noisy one:

- A clean seed. Your closed-won subset must be filtered to remove one-off deals, churn-soon accounts, and outliers.

- Layered scoring. Firmographic plus technographic plus behavioral together beats any single layer.

- A defined refresh cadence. Stale lookalike lists silently degrade pipeline quality.

What data should you use to seed a lookalike account list?

Direct answer: Seed your lookalike list from closed-won accounts in your CRM that meet four conditions: deal size at or above your median ACV, tenure over 90 days, healthy product adoption, and no active churn risk. Optionally weight each seed by billing tier and product-usage depth.

Closed-won is the seed, not the model

Most ICP guides start at a hypothetical buyer profile. That is the wrong starting point when you already have signed contracts in Salesforce or HubSpot. Closed-won is empirical evidence of fit.

Your seed list should be the deduplicated set of account records where Stage equals Closed Won, with these exclusion rules:

- Exclude one-off deals. Strip one-shot consulting work, partner-driven referrals, and any deal with a discount above 40%.

- Exclude churn-soon accounts. Drop any account with a flagged churn risk in CS data or a downgrade event in the trailing 90 days.

- Exclude accounts under 90 days tenure. You do not yet know whether the deal is good.
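
The exclusion rules above can be sketched as a single filter pass. This is a minimal illustration, not a Salesforce or HubSpot API call: the `Account` fields and the `extract_seeds` helper are hypothetical names, and it assumes tenure is measured from the close date and that duplicates share a domain.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass
class Account:
    # Hypothetical field names; map them to your own CRM export.
    domain: str
    stage: str
    close_date: date
    discount_pct: float        # 0-100
    is_one_off: bool           # consulting work or partner-driven referral
    churn_risk: bool           # flagged in CS data
    downgraded_last_90d: bool  # downgrade event in the trailing 90 days


def extract_seeds(accounts: List[Account], today: date) -> List[Account]:
    """Return deduplicated closed-won accounts that survive the exclusion rules."""
    seeds: Dict[str, Account] = {}
    for a in accounts:
        if a.stage != "Closed Won":
            continue
        if a.is_one_off or a.discount_pct > 40:
            continue  # one-off deals and heavy discounts
        if a.churn_risk or a.downgraded_last_90d:
            continue  # churn-soon accounts
        if (today - a.close_date).days < 90:
            continue  # under 90 days tenure: too early to judge the deal
        seeds.setdefault(a.domain.lower(), a)  # keep the first record per domain
    return list(seeds.values())
```

Keeping each rule as its own `continue` branch makes it easy to log how many accounts each rule removes, which helps when the seed comes out smaller than expected.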

Use billing data to weight, not exclude

Billing data adds resolution. Customers paying above your median ACV should weight more in the seed. Customers on annual contracts should weight more than month-to-month. This matters because a free-tier-to-Pro auto-upgrade is not the same signal as a $250K enterprise contract, even though both show as Closed Won.

A practical weighting:

- 3x weight for accounts at greater than 2x median ACV with multi-year contracts.

- 2x weight for accounts at greater than median ACV.

- 1x weight for accounts at or below median ACV on shorter contracts.
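
As a rough sketch, the three weighting bands reduce to one small function. The name `seed_weight` and its parameters are illustrative. The bands above do not say what to do with an account at or below median ACV on a multi-year contract; this sketch assumes it stays at 1x.

```python
def seed_weight(acv: float, median_acv: float, multi_year: bool) -> int:
    """Map an account's ACV and contract length to a 1x, 2x, or 3x seed weight."""
    if acv > 2 * median_acv and multi_year:
        return 3  # more than 2x median ACV on a multi-year contract
    if acv > median_acv:
        return 2  # above median ACV
    return 1      # at or below median ACV (assumed 1x regardless of contract length)


# Example with an assumed median ACV of $100K:
# seed_weight(250_000, 100_000, multi_year=True)   -> 3
# seed_weight(250_000, 100_000, multi_year=False)  -> 2
# seed_weight(100_000, 100_000, multi_year=True)   -> 1
```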

Product usage signals matter, especially for PLG

For product-led companies, product-usage telemetry often beats firmographics for seeding. Power users at small companies routinely outperform mid-feature users at enterprises. If you run a PLG motion, layer in workspace size or seat count, feature-adoption depth, frequency of return (DAU/MAU on the account), and paywall-hit events (your warmest in-product signal).

Juicebox seeded their lookalike work this way and attributed $3M in pipeline to Unify in one month, with a 92% show rate on outbound meetings and 256 meetings booked (per Juicebox case study, 2026, unifygtm.com/customers/juicebox).

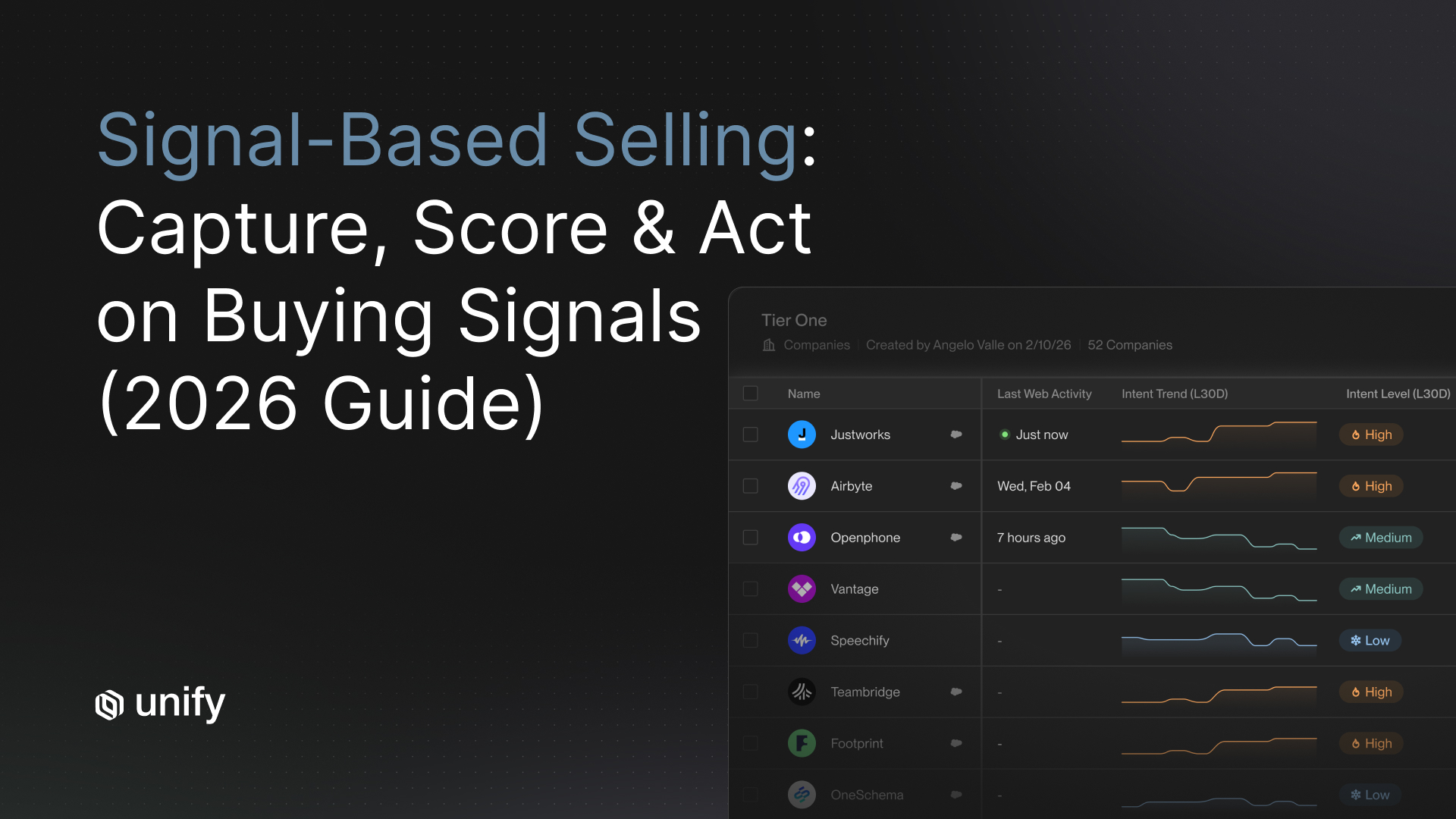

How do firmographic, technographic, and behavioral signals differ?

Direct answer: Firmographic signals describe what a company is (industry, headcount, revenue, geography). Technographic signals describe what a company uses (tech stack, integrations, vendors). Behavioral signals describe what a company is doing right now (website visits, hiring, funding, product usage). The three layers answer different questions and should be scored independently before they are combined.

Score each layer separately

Operators get burned when they collapse all three layers into a single composite fit score that hides which layer drove the match. Score them independently and tier the output:

- Tier A = strong on all three layers.

- Tier B = strong on firmographic plus technographic, mid behavioral.

- Tier C = strong on firmographic, weak on technographic and behavioral.
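
One way to keep the layers separate is to band each score first and only then combine the bands into a tier. The sketch below assumes each layer is scored from 0 to 1 and uses hypothetical cutoffs (0.7 for "strong", 0.4 for "mid"); neither the scale nor the cutoffs come from the tiers above, so tune them to your own data.

```python
from typing import Optional

STRONG_CUTOFF = 0.7  # assumed threshold, not a published value
MID_CUTOFF = 0.4     # assumed threshold, not a published value


def band(score: float) -> str:
    """Classify a single layer score as strong, mid, or weak."""
    if score >= STRONG_CUTOFF:
        return "strong"
    if score >= MID_CUTOFF:
        return "mid"
    return "weak"


def assign_tier(firmographic: float, technographic: float, behavioral: float) -> Optional[str]:
    """Return "A", "B", or "C", or None for combinations the tiers do not define."""
    f, t, b = band(firmographic), band(technographic), band(behavioral)
    if f == t == b == "strong":
        return "A"
    if f == "strong" and t == "strong" and b == "mid":
        return "B"
    if f == "strong" and t == "weak" and b == "weak":
        return "C"
    return None  # leave undefined combinations for manual review
```

Returning `None` rather than forcing every account into a tier keeps the per-layer bands visible, so a reviewer can see which layer drove or blocked a match.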

Anrok used a layered model in its play rotation (including a Lookalikes Play) and built $300K of pipeline in three months while running 4x faster SDR workflows than its previous ZoomInfo plus Outreach stack (per Anrok case study, 2025, unifygtm.com/customers/anrok).

How do you scrub false positives from a lookalike list?

Direct answer: Scrub false positives by adding negative-keyword filters, source-quality checks, and a manual sample review before activation. Three high-frequency false positives kill lookalike list quality: job-seeker traffic mistaken for buyer intent, irrelevant funding events, and content-syndication noise.

Run this scrub pass before any list goes live:

- Negative-keyword filter on titles and pages. Strip out career-page visits, "careers" or "jobs" URL paths, and visits where the only known person is a recruiter.

- Source-quality filter on funding events. Series A in your ICP industry is a signal; a Series A in pharma when you sell developer tooling is noise. Filter by industry compatibility, not just event type.

- De-duplicate by parent organization. Lookalike vendors sometimes surface multiple subsidiaries of the same enterprise; collapse to the buying entity.

- Manual sample of 30-50 accounts. Have a BDR and an AE review the sample and flag anything that looks wrong. If more than 15% of the sample is flagged, retune the model before scaling.
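
Two of these checks are simple enough to automate. The sketch below strips visits whose URL path contains a "careers" or "jobs" segment and applies the 15% threshold to a manual sample. The function names are illustrative, and matching on whole path segments is an assumption about how your visit URLs are structured.

```python
from typing import List
from urllib.parse import urlparse

CAREER_SEGMENTS = {"careers", "jobs"}


def is_career_visit(url: str) -> bool:
    """True when any path segment of the URL is exactly "careers" or "jobs"."""
    path = urlparse(url).path.lower()
    return any(segment in CAREER_SEGMENTS for segment in path.split("/"))


def buyer_visits(urls: List[str]) -> List[str]:
    """Drop career-path visits before they count as behavioral signals."""
    return [u for u in urls if not is_career_visit(u)]


def sample_passes(flagged: int, sample_size: int, max_bad_rate: float = 0.15) -> bool:
    """Pass the manual review unless more than 15% of the sample was flagged."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return flagged / sample_size <= max_bad_rate
```

Matching whole segments avoids flagging a page such as `/blog/jobs-to-be-done`, which contains the word "jobs" but is not a careers page.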

How often should you refresh a lookalike list?

Direct answer: Refresh on a cadence that matches each signal layer. Firmographic data refreshes quarterly. Technographic data refreshes monthly. Behavioral data refreshes daily or in real time. Refreshing the whole list on one calendar is the most common mistake operators make; it lets stale signals drive bad sequencing.

A behavioral signal more than seven days old has decayed substantially. A firmographic data point can stay accurate for a full quarter. Treat them differently.
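
A per-layer schedule can be sketched as a lookup table plus a staleness check. This sketch approximates "quarterly" as 90 days and "monthly" as 30 days, which is an assumption; the names `REFRESH_EVERY`, `layers_due`, and `is_fresh_behavioral` are illustrative.

```python
from datetime import date, timedelta
from typing import Dict, List

# Assumed day counts for the quarterly / monthly / daily cadences.
REFRESH_EVERY: Dict[str, timedelta] = {
    "firmographic": timedelta(days=90),
    "technographic": timedelta(days=30),
    "behavioral": timedelta(days=1),
}


def layers_due(last_refreshed: Dict[str, date], today: date) -> List[str]:
    """Return the layers whose last refresh is at least one cadence old."""
    return [
        layer
        for layer, every in REFRESH_EVERY.items()
        if today - last_refreshed[layer] >= every
    ]


def is_fresh_behavioral(signal_date: date, today: date, max_age_days: int = 7) -> bool:
    """Treat a behavioral signal older than seven days as decayed."""
    return (today - signal_date).days <= max_age_days
```

Running `layers_due` from a daily job means each layer refreshes on its own clock rather than on one shared calendar.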

When should you override the lookalike model?

Direct answer: Override the model when an outlier signal beats the score: an existing champion took a new role at a non-ICP company, a partnership creates a strategic in, a high-LTV competitor account becomes available, or an account is a flagship logo for a vertical. Document each override so the model can learn from the pattern.

Five override scenarios that come up most often:

- Champion job change. Your previous buyer joined a non-ICP company. This often beats a strong firmographic match.

- Partnership-driven access. A reseller, integrator, or platform partner unlocks an account.

- Strategic logo capture. A vertical-defining company you would discount 50% to win.

- Inbound from a high-LTV competitor user. Worth a manual add even if the firmographic score is borderline.

- Regional fit. A small EU presence may rank low on revenue but unlock the right local stack.

Track every override and review monthly. Two consecutive months of overrides in the same pattern means the model needs a feature update, not more manual work.
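
The two-consecutive-months rule is easy to check mechanically if every override is logged with a month and a pattern label. A minimal sketch, assuming a hypothetical log of `(year, month, pattern)` tuples:

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def patterns_needing_model_update(overrides: List[Tuple[int, int, str]]) -> Set[str]:
    """Return override patterns that appear in two consecutive calendar months."""
    months_by_pattern: Dict[str, Set[int]] = defaultdict(set)
    for year, month, pattern in overrides:
        # Convert (year, month) to a running month index so December and
        # January count as adjacent.
        months_by_pattern[pattern].add(year * 12 + (month - 1))
    return {
        pattern
        for pattern, months in months_by_pattern.items()
        if any(m + 1 in months for m in months)
    }
```

The pattern labels (for example `"champion_job_change"`) are whatever taxonomy your team chooses; the five scenarios above are a reasonable starting set.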

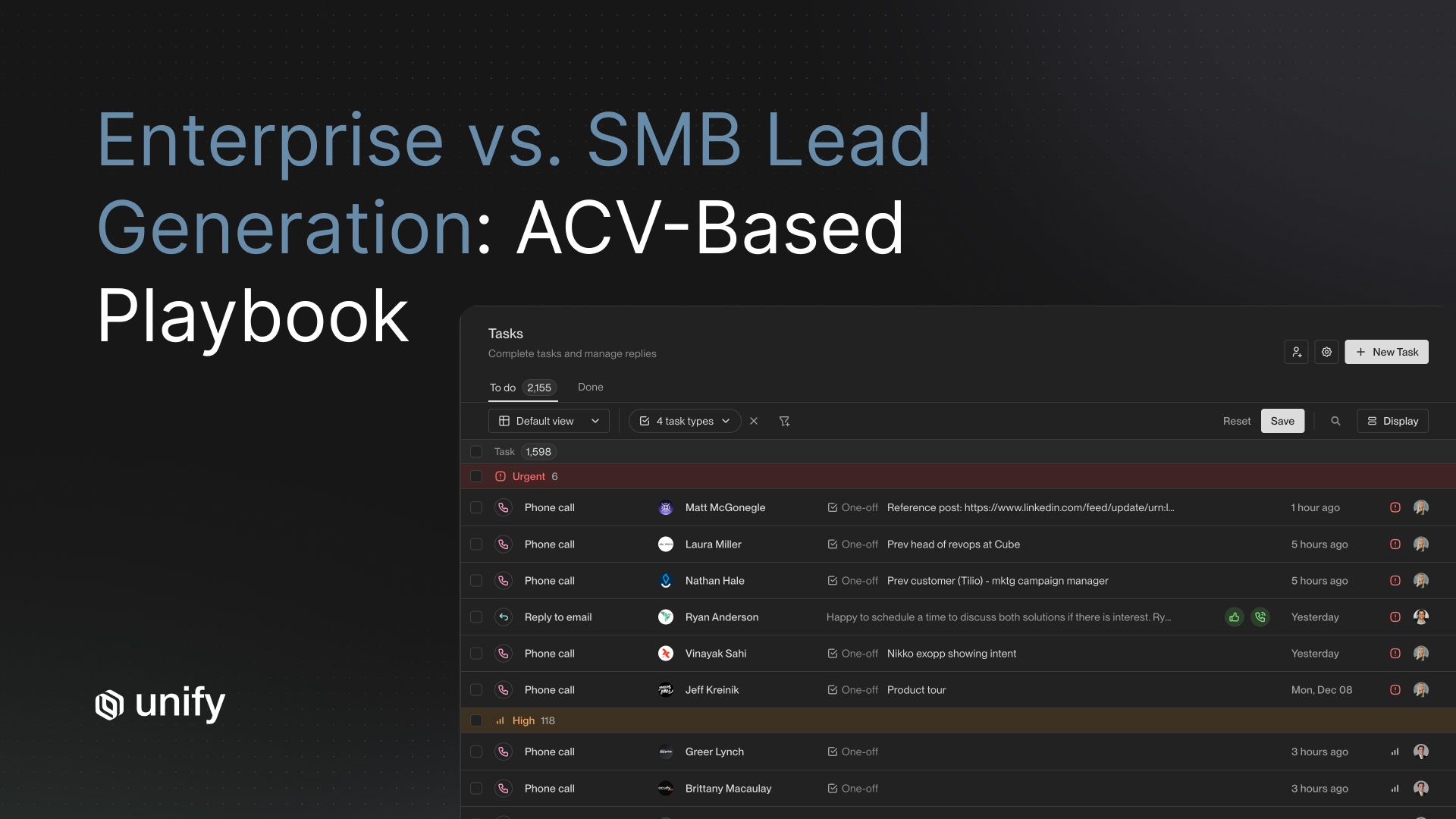

How big should a lookalike account list be?

Direct answer: Size a lookalike account list between 1,000 and 5,000 accounts for active net-new outbound. Below 1,000 you typically starve the funnel; above 5,000 you start damaging deliverability and personalization quality, and your tooling cost climbs with no marginal lift. Sales-led and expansion motions are the exception and run smaller, as the bands below show.

The sweet spot varies by motion:

- PLG companies: 2,000-5,000 accounts (broader top-of-funnel, depth on product-usage signals).

- Sales-led companies: 800-1,500 accounts (named accounts, higher rep involvement).

- Expansion motions: 200-800 accounts (existing customer base, narrower targeting, deeper personalization).

This is operator guidance synthesized from how Unify runs lookalike plays for high-growth B2B SaaS customers (see Unify's Lookalikes launch post and the Unify /explore library for related plays). It is not a published external benchmark. Smaller teams can run at the bottom of these bands and still get strong results.
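
The bands above can be encoded as a simple guardrail that runs before a list is activated. The motion keys and the `check_list_size` helper are illustrative names, and the bands are the operator guidance from this section rather than an external benchmark.

```python
from typing import Dict, Tuple

# (minimum, maximum) accounts per motion, taken from the bands above.
SIZE_BANDS: Dict[str, Tuple[int, int]] = {
    "plg": (2000, 5000),
    "sales_led": (800, 1500),
    "expansion": (200, 800),
}


def check_list_size(motion: str, account_count: int) -> str:
    """Return "too_small", "ok", or "too_large" for the given motion."""
    low, high = SIZE_BANDS[motion]
    if account_count < low:
        return "too_small"
    if account_count > high:
        return "too_large"
    return "ok"
```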

Decision Framework: which lookalike list build should you start with?

30-second chooser. Match your motion to the build pattern.

- If you have fewer than 50 closed-won accounts → start with firmographic-only ICP expansion. You do not yet have enough seed data to support technographic or behavioral layers.

- If you run a PLG motion on HubSpot with fewer than 50 AEs → prioritize product-usage seeding plus website-intent overlay. Speed of action and signal breadth beat firmographic depth.

- If you run sales-led on Salesforce with more than 50 AEs → prioritize three-layer scoring with weekly manual-override review. Governance matters more than coverage at this scale.

- If you are running an expansion motion → seed only from customers at First Results or Tangible ROI (per Unify's Customer Value Journey framework) and run a smaller list with deeper personalization.

- If you sell into a regulated vertical → dial down behavioral signals and add compliance overlays before activation.

- If you are EU-targeted or GDPR-sensitive → behavioral signals require opt-in or contractual basis; consider firmographic plus technographic only.

How do you turn a lookalike list into pipeline?

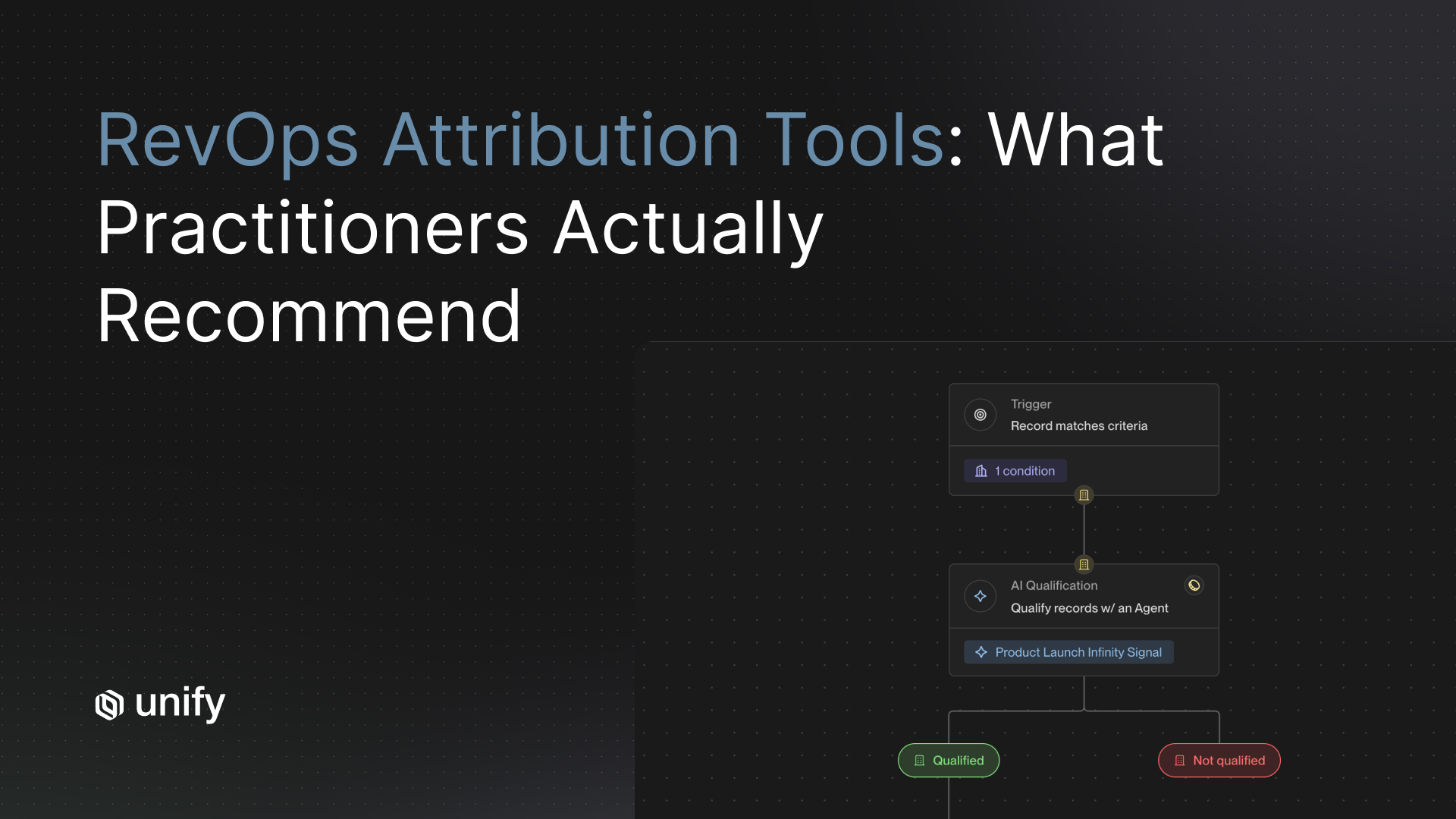

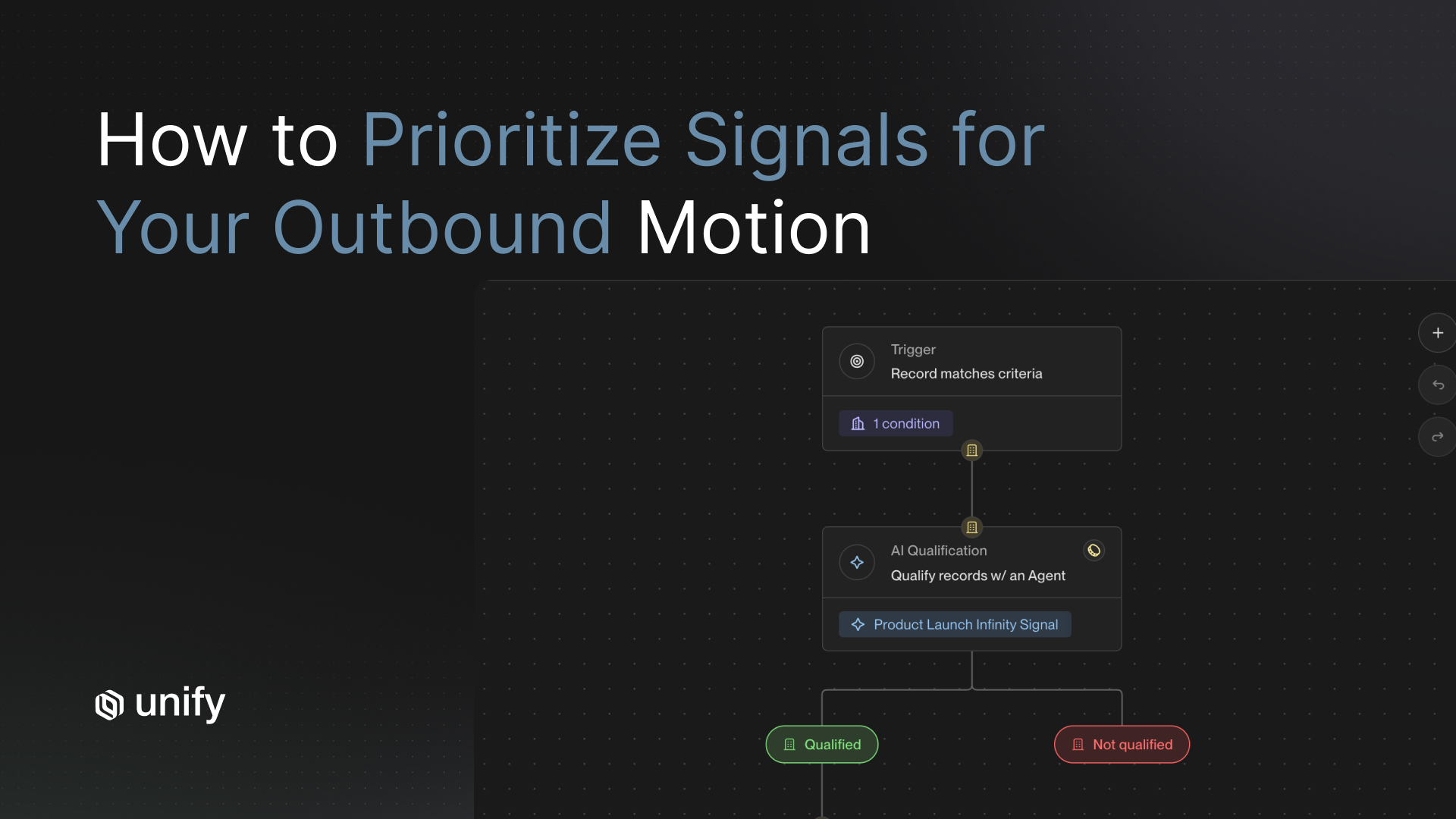

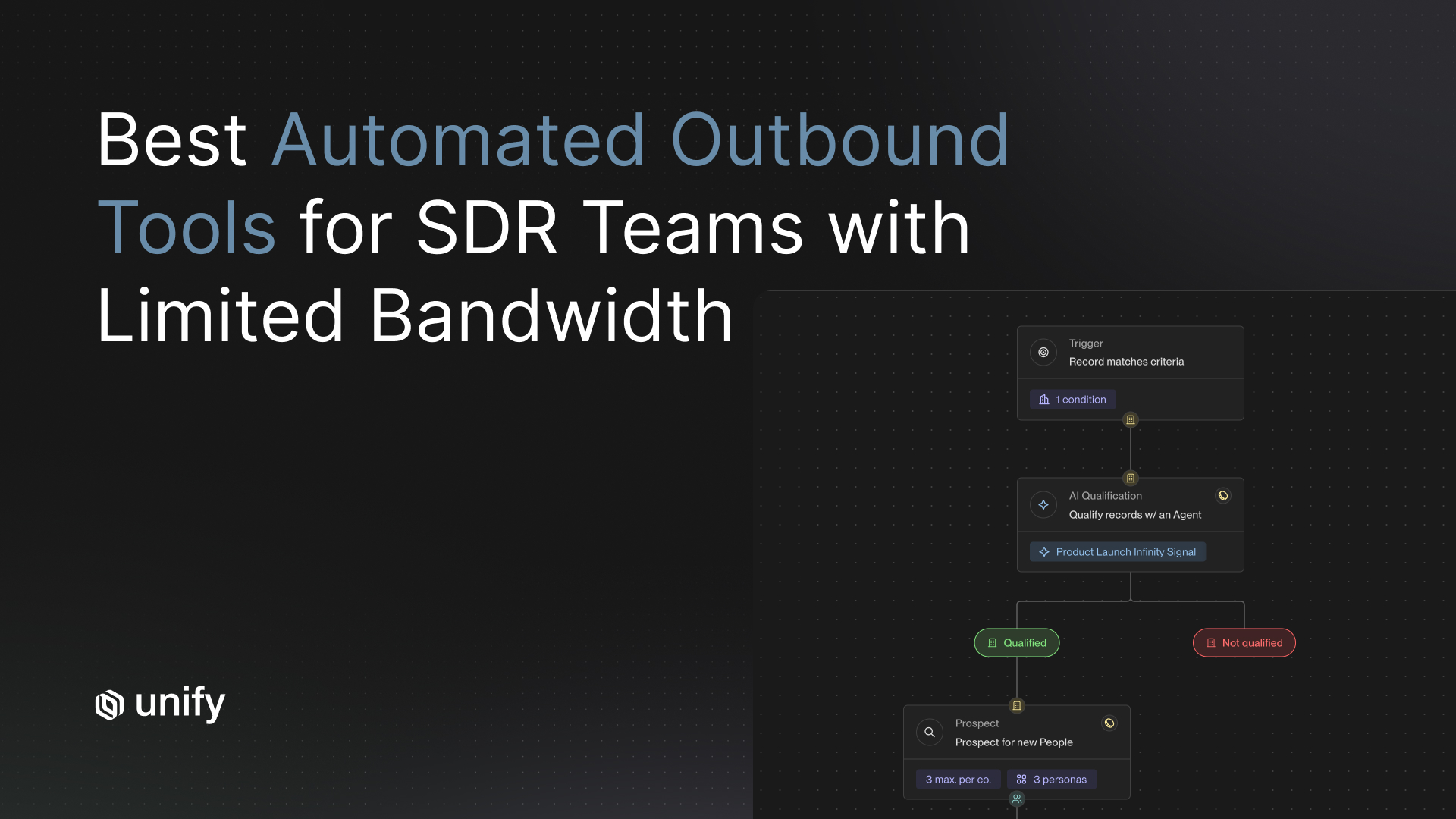

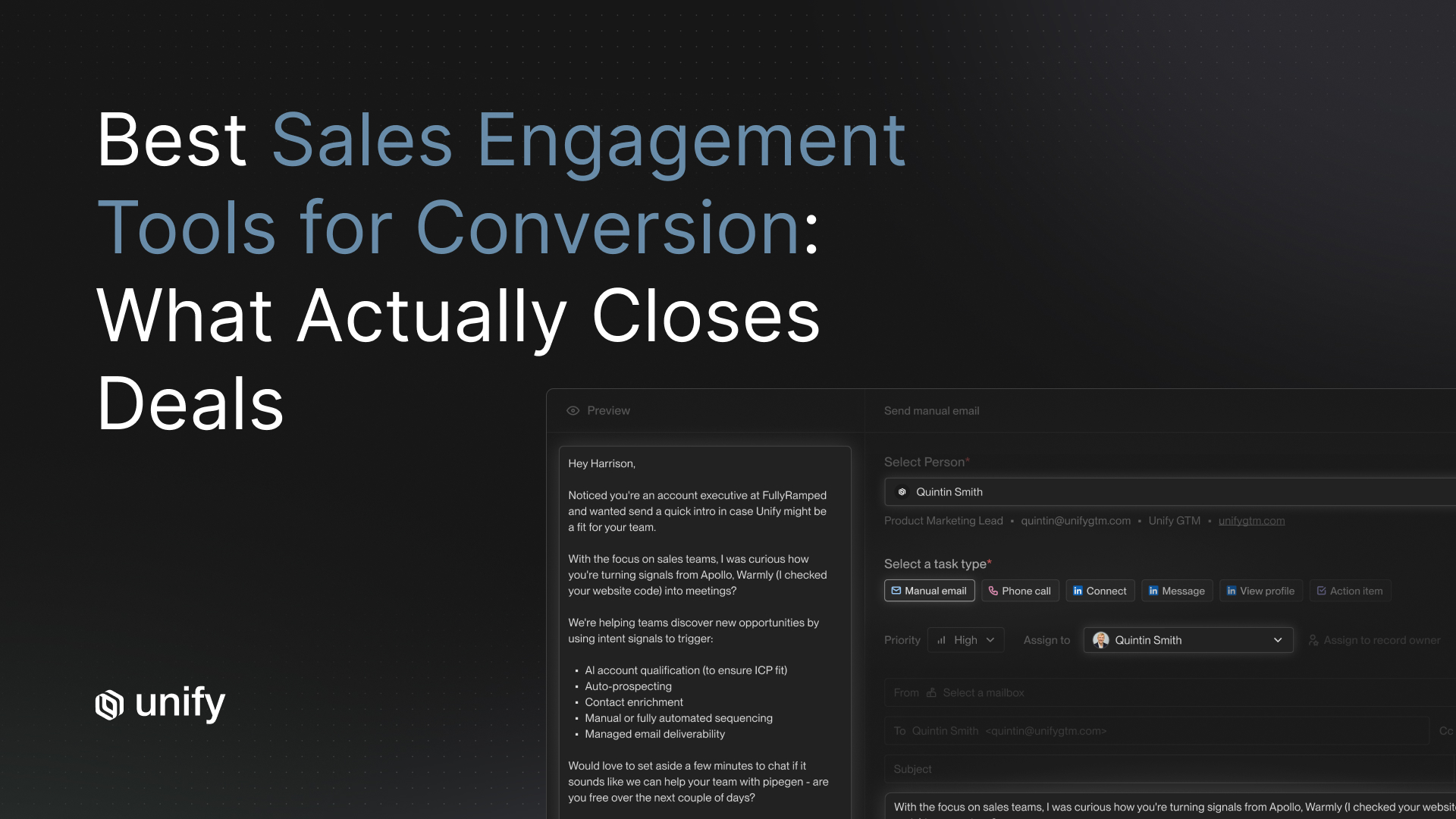

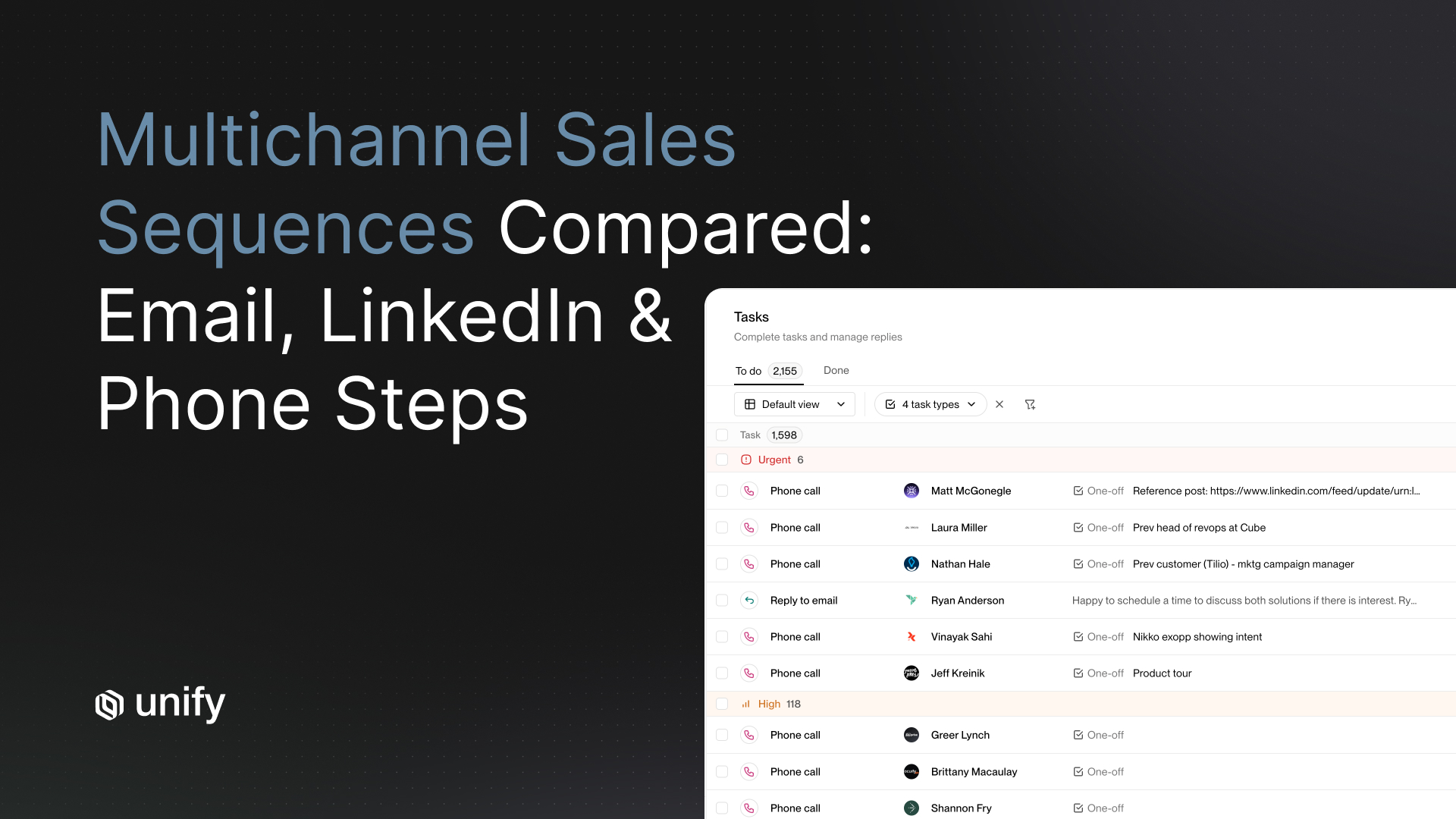

Direct answer: Activate the lookalike list as an outbound Play that triggers on either entry into the list or a high-intent behavioral signal on a listed account. The list is worthless without an activation motion. The Play should enroll matched companies into a multi-touch sequence and route real-time alerts on top-tier signals to the owning rep.

A working activation pattern:

- The matched list enters a Play as the trigger audience.

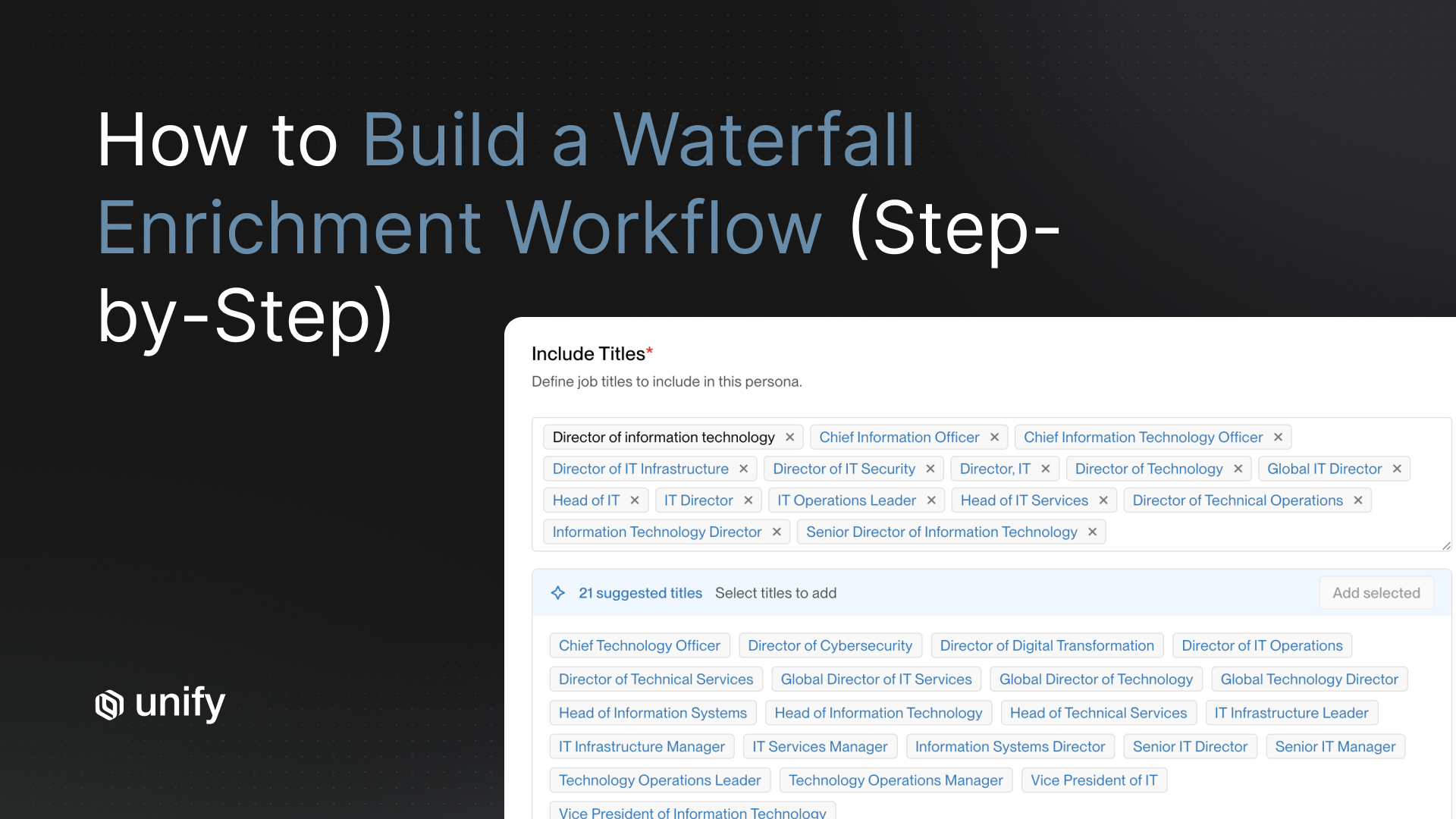

- Prospect by persona on each matched account to find buyer contacts.

- Enrich contacts via waterfall enrichment (Unify's published rates are 90%+ contact match, 95%+ company match per the Waterfall Enrichment product page).

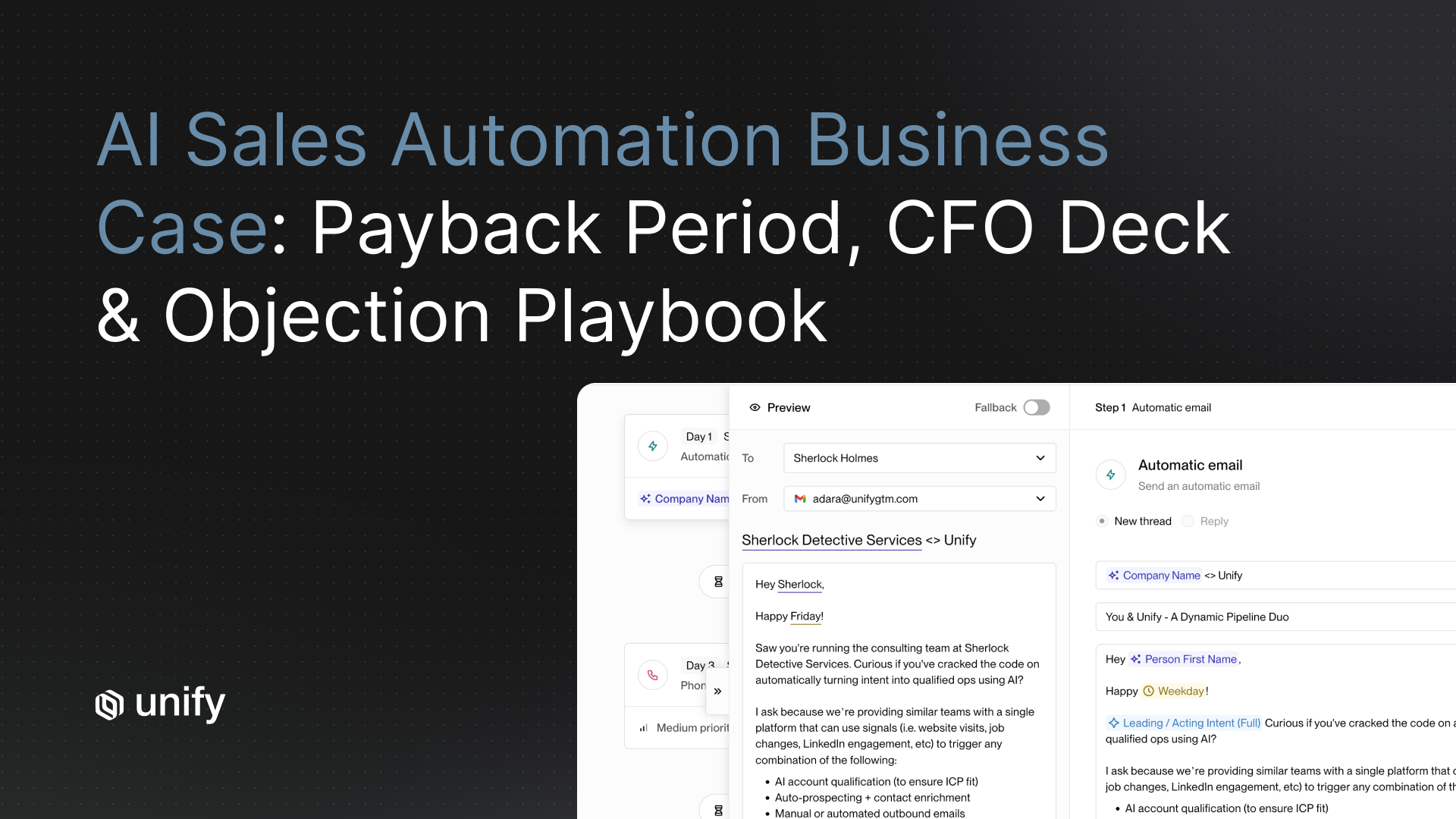

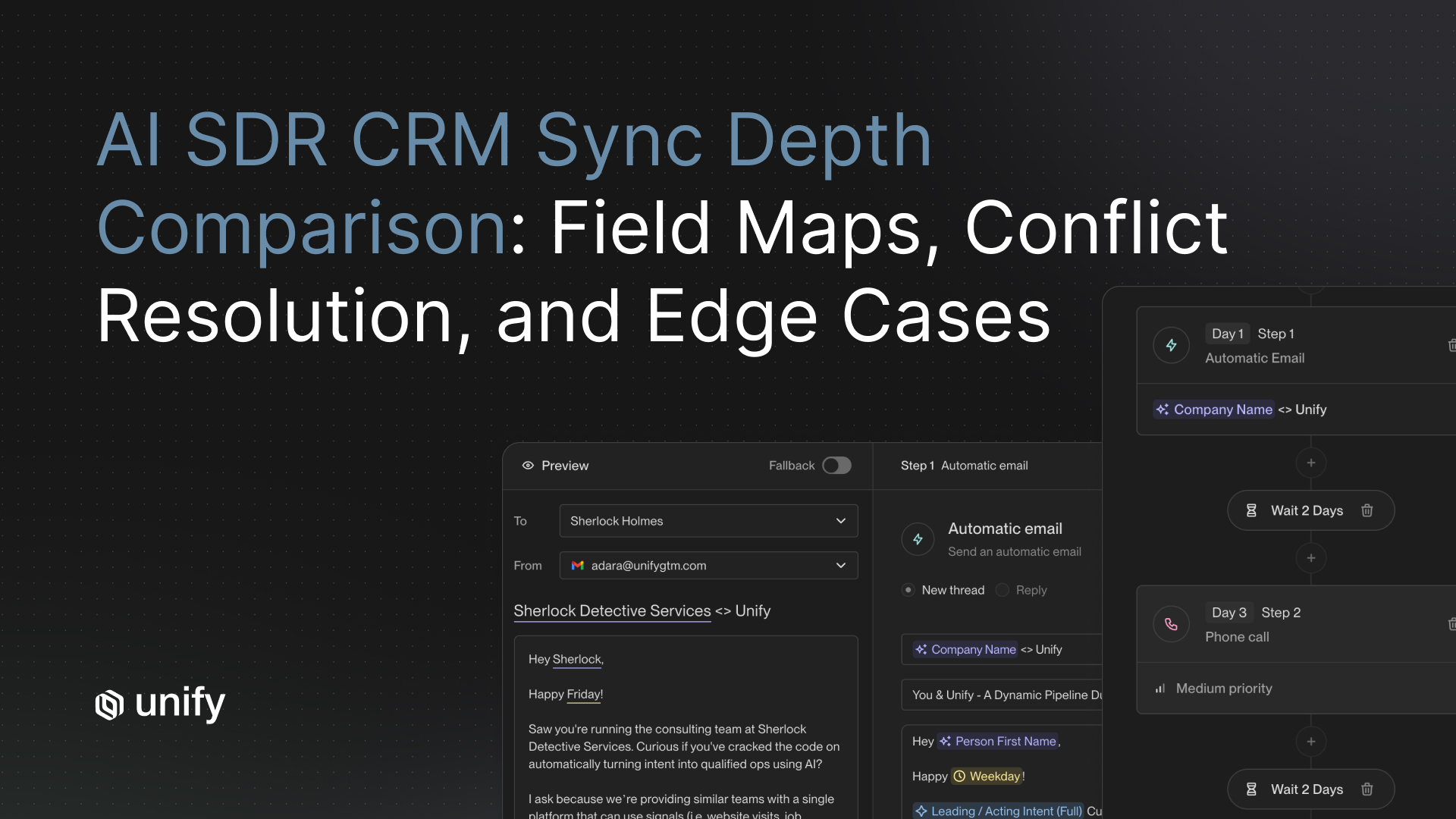

- Enroll matched contacts in a multi-channel sequence with AI-personalized first touch.

- Route real-time alerts on top-tier signals to the owning AE or BDR.

Peridio used lookalike signals exactly this way and produced $1.15M in influenced pipeline, $550K in direct pipeline, one Fortune 100 closed, 4,400+ people reached across 1,400+ companies, at a 58% average open rate (per Peridio case study, 2025, unifygtm.com/customers/peridio).

Guru ran industry-specific lookalike plays after each sales win, influenced $3.17M in closed-won revenue, and closed 109 net-new accounts (per Guru case study, 2025, unifygtm.com/customers/guru).

The Unify Lookalikes launch post reported one customer driving $110K in pipeline within the first week of launching their first lookalike play: "After launching this play one week ago, we have already driven $110k in pipeline" (per Unify Lookalikes launch post, Aug 2025).

Worked Example: From CRM to Live Play in Seven Days

Day 1 — Pull the seed. Filter Salesforce: Stage = Closed Won, Close Date over 90 days ago, ACV at or above median, no active churn flag, discount under 40%. Output: 78 accounts.

Day 2 — Apply weighting. Multiply each seed by 1x, 2x, or 3x based on ACV band and contract length. Output: 78 weighted seeds.

Day 3 — Generate the lookalike list. Feed seeds into a lookalike provider (Ocean.io powers Unify's Lookalikes signal per unifygtm.com/signals/lookalikes). Set filters: industry match, employee count band 50-2,000, geo = US plus CA. Output: 2,300 candidate accounts.

Day 4 — Layer technographic. Filter for accounts using one of three required integrations (e.g., HubSpot, Salesforce, Stripe). Output drops to 1,580 accounts.

Day 5 — Score behavioral. Run a 30-day backward window on website visits, executive job changes, and funding events. Tag any account with one or more behavioral signals as Tier A. Output: 220 Tier A, 540 Tier B, 820 Tier C.

Day 6 — Manual scrub. Sample 50 random accounts. BDR and AE flag mismatches. Find 3 (6%) bad matches. Pass.

Day 7 — Activate as a Play. Build the Play: trigger on entry to Tier A audience, prospect 2-3 personas per account, enroll in a 4-step sequence with AI personalization on the first touch, route Slack alert to AE on reply. By end of week 4, expect 30-60 booked meetings if seed quality and message-market fit are tight.
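
The arithmetic in this worked example can be checked in a few lines. The figures are the illustrative ones from Days 3 to 6; the variable names are only for this sketch.

```python
# Figures from the worked example (Days 3-6).
candidates = 2300            # Day 3 output
after_technographic = 1580   # Day 4 output
tiers = {"A": 220, "B": 540, "C": 820}  # Day 5 output
sample_size, bad_matches = 50, 3         # Day 6 manual scrub

tier_total = sum(tiers.values())
technographic_retention = after_technographic / candidates
bad_match_rate = bad_matches / sample_size
scrub_passes = bad_match_rate <= 0.15  # retune only if more than 15% are flagged

print(tier_total)                          # 1580, matches the Day 4 output
print(round(technographic_retention, 3))   # 0.687
print(bad_match_rate, scrub_passes)        # 0.06 True
```

The tiers sum back to the 1,580 accounts that survived the technographic filter, and the 6% bad-match rate sits well under the 15% retune threshold.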

When should you stop sending to an account?

Direct answer: Stop or pause when one of five signals fires: an opt-out, an out-of-office (OOO) reply lasting over 30 days, three touches with opens but no reply, an explicit "wrong fit" reply, or a closed-lost flag in CRM. Each has a different next action.
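
A minimal sketch of the five stop signals as a single check follows. The `AccountState` fields are hypothetical, and the order in which the signals are evaluated is an assumption; the next action for each signal is left to your own process.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AccountState:
    # Hypothetical fields; populate them from your sequencer and CRM.
    opted_out: bool = False
    ooo_days: int = 0            # length of the out-of-office period
    touches_sent: int = 0
    opens: int = 0
    replied: bool = False
    wrong_fit_reply: bool = False
    closed_lost: bool = False


def stop_reason(state: AccountState) -> Optional[str]:
    """Return the first stop signal that fires, or None to keep sending."""
    if state.opted_out:
        return "opt_out"
    if state.closed_lost:
        return "closed_lost"
    if state.wrong_fit_reply:
        return "wrong_fit"
    if state.ooo_days > 30:
        return "ooo_over_30_days"
    if state.touches_sent >= 3 and state.opens > 0 and not state.replied:
        return "three_touches_no_reply"
    return None
```

Returning a named reason instead of a boolean lets the caller route each signal to a different follow-up.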

Role and Segment Variants

Sales-led (more than 50 AEs, Salesforce)

- Seed list weighted to current-quarter pipeline patterns.

- Manual override review every Friday.

- 800-1,500 account list size, named-account tags.

- Real-time Slack alerts to owning AE on Tier A signals.

PLG (HubSpot, freemium product)

- Seed list weighted on product-usage depth, not ACV.

- Pull in paywall-hit and DAU signals as primary behavioral inputs.

- 2,000-5,000 account list size, automated enrollment.

- Alerts to growth team on workspace-level expansion signals.

Expansion (existing customers)

- Seed only from customers at First Results or Tangible ROI on the Unify Customer Value Journey (see the Unify Expansion Playbook).

- Smaller list (200-800 accounts) with deeper personalization.

- Owned by AM or CSM, not BDR.

- Activation messaging references prior usage milestones, not generic value props.

Edge Cases and Disambiguation

- Lookalike audience vs lookalike account list. Paid-media audiences are anonymous user clusters in Meta or Google. A lookalike account list is a deterministic set of company records with names, domains, and enrichable contacts. They are not interchangeable.

- ICP vs lookalike list. ICP is the firmographic envelope you defined. A lookalike list is the operational set of accounts you actually reach out to that fall inside (or near) that envelope.

- Job-seeker traffic vs buyer interest. A spike in visits to your /careers page is recruiting interest, not buying interest. Strip career-path visits from behavioral signals.

- Funding noise. A Series B funding event matters when the company sells into your category. A Series B in a wholly unrelated industry is noise.

- Content syndication vs intent. Gated-content downloads on a third-party site are interest data, not buyer intent. Treat as lower-priority signal.

Top 5 Common Mistakes

- Seeding from all closed-won — including one-off deals and churn-soon accounts that produce noisy lookalikes.

- Refreshing every signal layer on the same calendar — stale behavioral data silently drives bad sequencing.

- Skipping the manual sample review — a 30-50 account eyeball check catches issues no automated scrub will.

- Building a 20,000-account list — nukes deliverability and dilutes personalization with no marginal lift.

- Stopping at the list — never wiring it to a Play, sequence, or alert routing means zero pipeline.

How Unify covers this

The evaluation criteria above are vendor-neutral. If you are evaluating tooling, here is how Unify's stack maps onto each step:

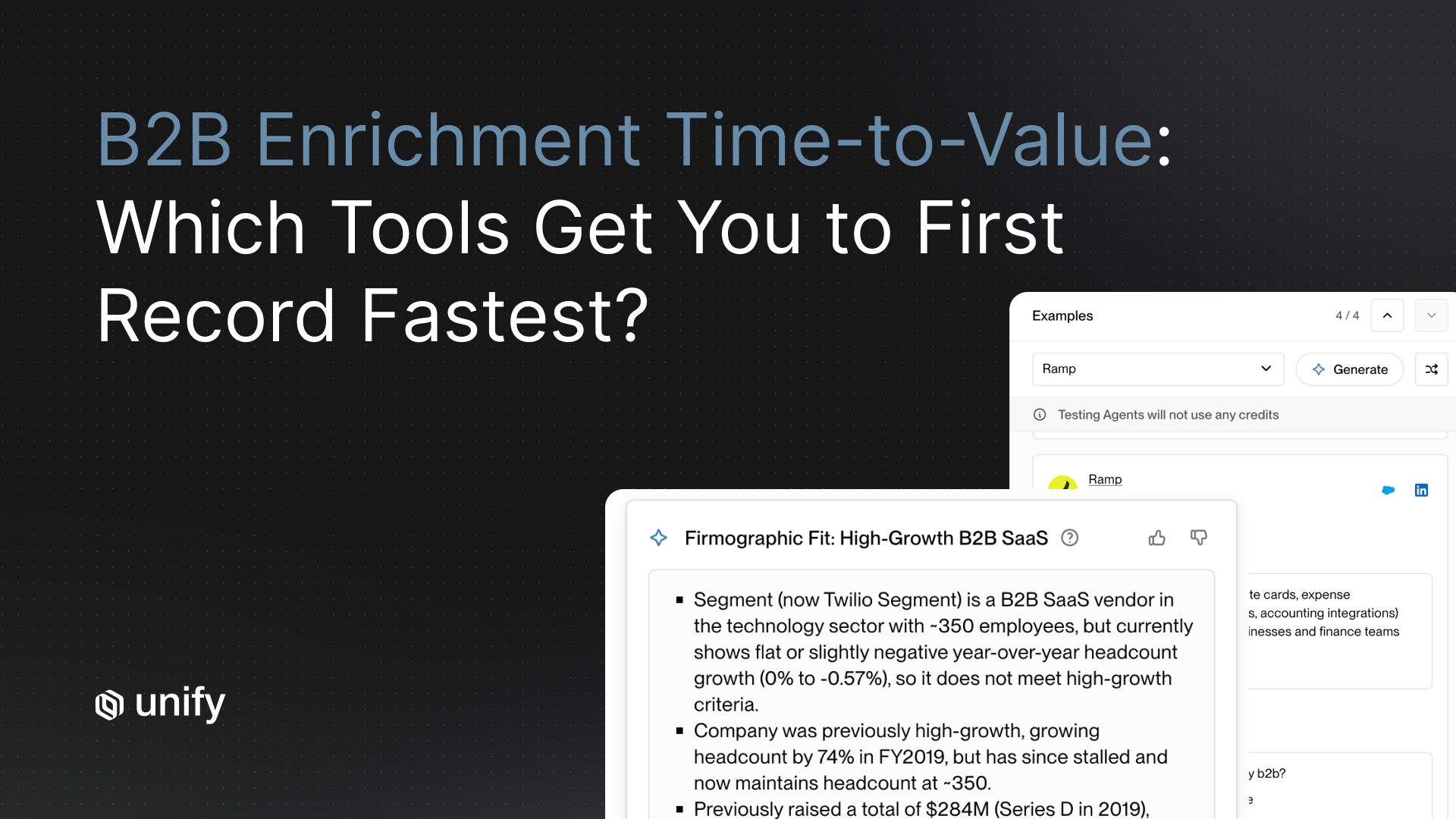

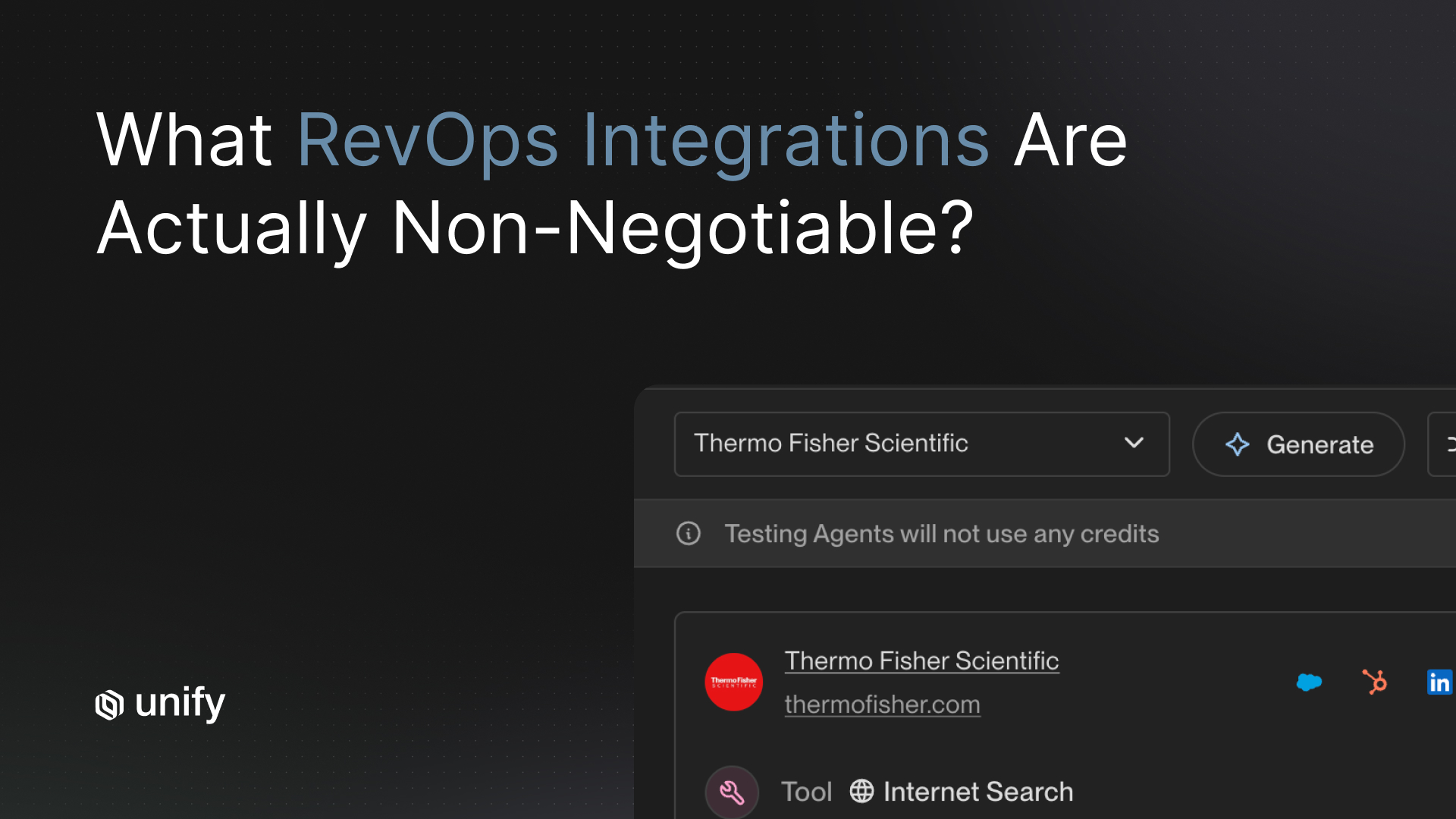

- Seed list extraction. Native Salesforce and HubSpot integrations with 15-minute bi-directional sync surface closed-won audiences without manual exports.

- Lookalike generation. The Lookalikes signal (powered by Ocean.io) generates similar-company lists with filters on employee count, industry, and geography.

- Technographic layer. Unify's signals library includes 25+ intent signals; Infinity Signal supports natural-language custom signals for niche technographic queries.

- Behavioral layer. Website intent, product usage signals, G2 signals, and new hires plug in natively.

- Enrichment. Waterfall enrichment reports 90%+ contact and 95%+ company match rates per the published product page.

- Activation. Plays trigger on signal match and enroll matched companies into Sequences. Plays power nearly 50% of Unify's own new pipeline creation (per Unify Series A announcement, Dec 2025).

FAQ

What is a lookalike account list?

A lookalike account list is a ranked list of B2B companies that resemble your best closed-won customers across firmographic, technographic, and behavioral signals. It is built from CRM data, not from a paid-ads audience pixel.

How is a lookalike account list different from a paid-ads lookalike audience?

A paid-ads lookalike audience is an anonymous user cluster built by Meta or Google from pixel data. A lookalike account list is a deterministic set of named company records that you can enrich, enroll, and route through outbound. The unit is the company, not the user.

How many accounts should be on a lookalike list?

Size most B2B lookalike account lists between 1,000 and 5,000 accounts. PLG motions can run larger lists (2,000 to 5,000), sales-led motions run tighter (800 to 1,500), and expansion motions run smaller (200 to 800). For net-new outbound, below 1,000 you starve the funnel; above 5,000 you damage deliverability and dilute personalization.

How often should you refresh a lookalike list?

Refresh on a cadence that matches each signal layer. Firmographic data refreshes quarterly. Technographic data refreshes monthly. Behavioral data refreshes daily or in real time. Treating the whole list with one refresh calendar is the most common mistake operators make.

What data should you use to seed a lookalike list?

Seed from closed-won accounts in your CRM that meet four conditions: deal size at or above your median ACV, tenure over 90 days, healthy product adoption, and no active churn risk. Weight by billing tier (3x for accounts above 2x median ACV with multi-year contracts) and, if you run a PLG motion, by product-usage depth.

When should you override the lookalike model?

Override in five scenarios: a champion job change brings a previous buyer to a non-ICP company, a partnership unlocks an account, a flagship vertical logo becomes available, a high-LTV competitor user inbounds, or a regional-fit account ranks low on revenue but matches the right local stack. Document every override and review monthly so the model can learn.

What is the difference between firmographic, technographic, and behavioral signals?

Firmographic signals describe what a company is (industry, headcount, revenue, geography). Technographic signals describe what a company uses (tech stack, integrations, vendors). Behavioral signals describe what a company is doing right now (website visits, hiring, funding, product usage). The three layers answer different questions and should be scored independently.

What is the most common mistake when building a lookalike account list?

Stopping at the list. The list itself is worthless without an activation motion. The second most common mistake is refreshing every signal layer on the same calendar, which lets stale behavioral data drive bad sequencing decisions.

Glossary

- Closed-won seed: The deduplicated subset of CRM Closed Won accounts that pass quality filters and feed the lookalike model.

- Firmographic signal: A descriptive attribute of a company (industry, headcount, revenue, geography).

- Technographic signal: Evidence of the technologies a company uses (CRM, BI tool, language framework, vendor list).

- Behavioral signal: A real-time indicator of activity (website visits, hiring, funding events, product usage).

- Lookalike model: An algorithm that scores candidate accounts against a seed by similarity across multiple feature dimensions.

- False positive: An account that scores high but does not match the buyer profile (e.g., job-seeker traffic, irrelevant funding).

- Refresh cadence: How frequently a data layer is re-pulled to keep the list current.

- Override: A manual addition or removal of an account regardless of model score.

- Play: An automated workflow that triggers actions (enrich, prospect, sequence, alert) on a signal.

- Touch: One outreach attempt across any channel; touches are counted across a sequence.

Sources

- Unify Expansion Playbook for the Signals Era (operator guide; gated download). unifygtm.com/resources/the-expansion-playbook-for-the-signals-era

- Unify First 90 Days of Plays guide (operator playbook covering lookalike plays, website intent, champion tracking).

- Unify Lookalikes launch post, Aug 2025. unifygtm.com/blog/lookalikes

- Unify Lookalikes signal page. unifygtm.com/signals/lookalikes

- Peridio case study, 2025. unifygtm.com/customers/peridio

- Anrok case study, 2025. unifygtm.com/customers/anrok

- Guru case study, 2025. unifygtm.com/customers/guru

- Juicebox case study, 2026. unifygtm.com/customers/juicebox

- Unify Waterfall Enrichment product page, 2026. unifygtm.com/product/enrichment

- Unify Series A announcement, Dec 2025. unifygtm.com/blog/series-a

- Unify Plays product page. unifygtm.com/plays

- Unify Sequences product page. unifygtm.com/sequences

About the author. Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
