TL;DR. Top SDR teams personalize research inputs, not email prose. Run four habits in order: (1) tag every signal with an expected reply rate, (2) enforce a 3-input rule (firmographic + signal + role context) before any draft, (3) use AI for research and humans for judgment moments, (4) run a weekly signal-decay review. For sales, growth, and RevOps leaders, this lifts outbound reply rates from the category-average 1 to 3 percent toward the 5 to 20 percent range reported for PQL and MQL Plays in the Perplexity case study. Unify's NBR team, which runs these habits, is on track to earn about 1.6x the industry-standard BDR compensation and converts about 20 percent of outbound opportunities to closed-won.

Key Facts and Benchmarks at a Glance

Methodology and Limitations

What this article uses. Every Unify benchmark in this article is attributed to a specific named customer story or first-party Unify blog post with a publication date. There is no aggregated "Unify benchmark" dataset. The Unify NBR team's 1.6x figure is a compensation comparison: total comp ($80K base plus $40K in on-target variable pay, with most reps pacing at 120 percent for roughly $140K actualized) against RepVue's roughly $85K BDR OTE benchmark, per the Unify NBR blog (Dec 12 2025). Closed-won conversion is the percentage of outbound opportunities that close, not the percentage of contacts that convert.

Time window. Customer stats are from cases published or refreshed in 2025-2026. Reply rates by signal type (PQL 5 percent, MQL up to 20 percent) come from a single named Perplexity case study tracking Plays over a 3-month period; treat them as a directional reference for a high-performing SaaS PLG motion, not a universal benchmark.

What is excluded. Outbound benchmarks in regulated regions (EU/GDPR) and heavily regulated industries (healthcare, public sector) require opt-in motions and will pull reply rates lower than the figures here. The 3-input rule and signal-decay cadence still apply, but stop rules and channel mix change.

Why Personalize-at-Scale Means Inputs, Not Prose

Top SDR teams reframe personalization from a prose problem (every email line) to a research-input problem (what the rep knows before they type). The mediocre version of personalization spends rep hours rewriting opening lines for accounts that should never have been in the queue. The high-performing version spends those hours upstream, deciding which accounts hit the queue at all, what is known about them when they get there, and which signal put them there.

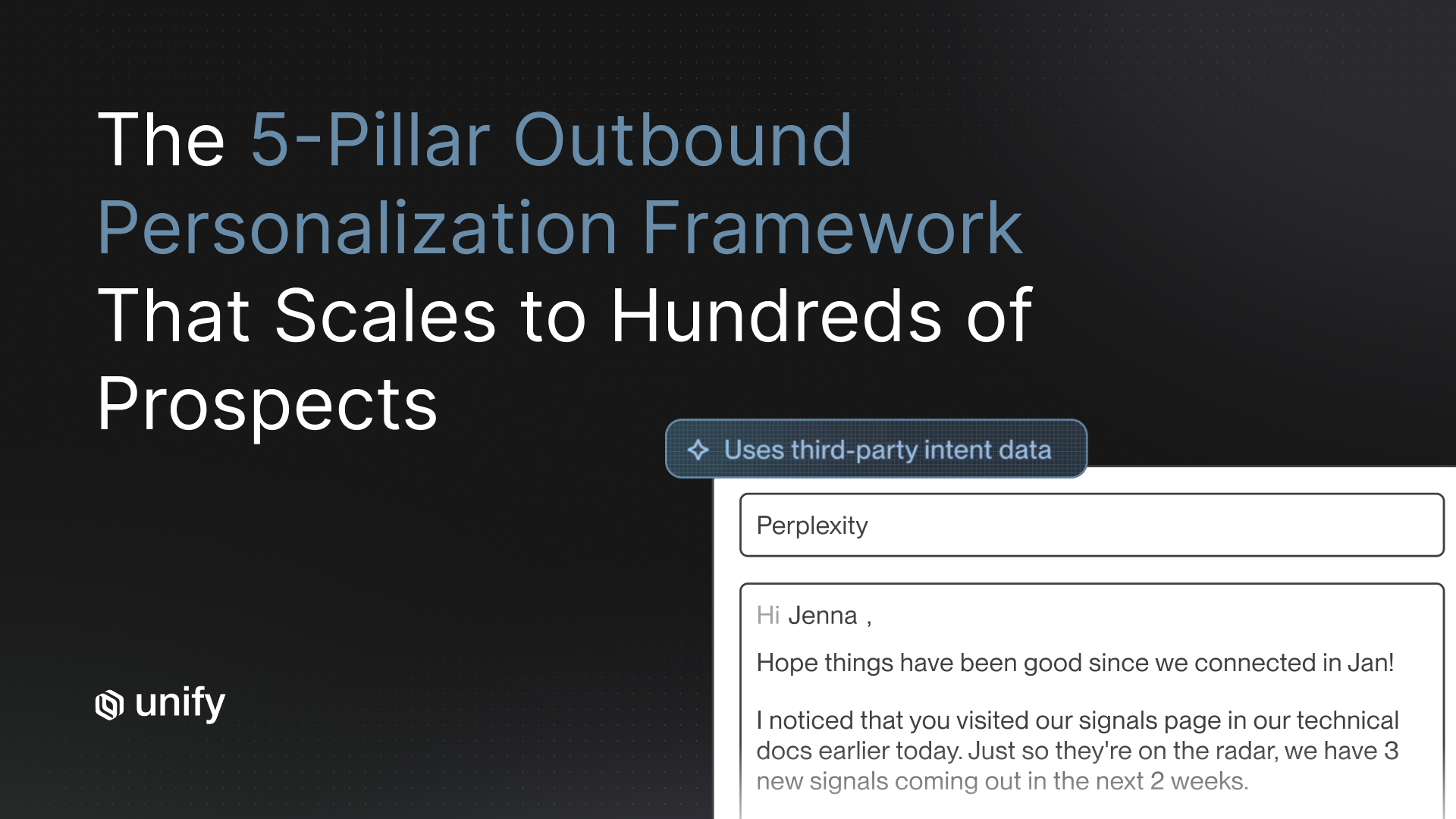

The reply-rate evidence backs this up. Perplexity's PQL Play, which surfaces specific product-usage data into the email body, drives a 5 percent reply rate, while their MQL Plays, which touch marketing-engaged leads with firmographic and behavioral context, reach up to 20 percent, per the Perplexity case study (2026). Spellbook saw the same effect on opens: the same copy that earned 19 to 25 percent open rates in HubSpot earned 70 to 80 percent once signal context entered the workflow, per the Spellbook case study (2026). Same copy, different inputs, very different outcomes.

Habit 1: Tag Every Signal with an Expected Reply Rate

Top SDR teams attach an expected reply rate to every signal type, then sort the queue by that number. A rep starting their day works PQL signals first, new-hire and champion-job-change signals second, lookalikes third, and firmographic-only signals last (or not at all). This single decision compounds across thousands of touches per quarter.

Definition

Signal-tier prioritization means every signal type carries a label specifying its expected reply rate (or its 8-week trailing reply rate, once you have data). Reps work signals top-down by tier.

Why it matters

SDR queues without reply-rate tagging force the rep to be the prioritizer, and reps prioritize by what is in front of them, not by what converts. The Anatomy of an Outbound Email That Gets Replies report (Unify, May 11 2026, 25M+ emails analyzed) found top performers achieve 2 to 3x average reply rates, and that gap is driven largely by which accounts they choose to touch.

Reply-rate expectation table by signal type

How to test it

Pull your last 60 days of touches by signal type, compute reply rate per signal, and rank. Most teams discover one or two signals carrying 5x the rate of the floor, and a long tail of signals worth dropping. Read the related guide: Your Warmest Leads Are Already Using Your Product.
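The ranking exercise above is a few lines of code once touches are exported. Here is a minimal sketch, assuming a flat touch log of (signal type, got a reply) pairs. The signal names and counts are made-up illustration data, not benchmarks from any case study, and `reply_rates` and `rank_signals` are hypothetical helper names.

```python
from collections import defaultdict

# Hypothetical 60-day touch log: (signal_type, got_reply).
# Counts are invented for illustration; swap in your own export.
touches = (
    [("pql", True)] * 2 + [("pql", False)] * 8
    + [("new_hire", True)] * 1 + [("new_hire", False)] * 9
    + [("firmographic_only", True)] * 1 + [("firmographic_only", False)] * 49
)

def reply_rates(touches):
    """Return {signal_type: replies / sends} for every signal in the log."""
    sent = defaultdict(int)
    replied = defaultdict(int)
    for signal, got_reply in touches:
        sent[signal] += 1
        if got_reply:
            replied[signal] += 1
    return {signal: replied[signal] / sent[signal] for signal in sent}

def rank_signals(touches):
    """Signal types sorted by observed reply rate, highest first."""
    rates = reply_rates(touches)
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)

for signal, rate in rank_signals(touches):
    print(f"{signal}: {rate:.1%}")
```

The sorted output is the working order for the queue. With small send counts per signal the rates are noisy, so treat a ranking built on a handful of touches as a starting prior rather than a verdict.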

Red flags

If every signal type sits within one percentage point of every other one, your tagging is broken (you are double-counting, or your signal categories are too coarse). Split signals further. A "web visit" signal that lumps pricing-page hits and blog-page hits will always look mediocre.
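The red flag above can be checked mechanically. This is a sketch under one assumption: "within one percentage point" is read as the gap between the best and worst signal being at most 1.0 points. The function name and sample rates are hypothetical.

```python
def tagging_looks_broken(rates, max_spread_pp=1.0):
    """True when all signals' reply rates sit within max_spread_pp
    percentage points of each other (rates are fractions, e.g. 0.05)."""
    if len(rates) < 2:
        return False  # nothing to compare against
    values_pp = [rate * 100 for rate in rates.values()]
    return max(values_pp) - min(values_pp) <= max_spread_pp

# Illustrative inputs only.
flat = {"web_visit": 0.020, "new_hire": 0.025, "lookalike": 0.028}
tiered = {"pql": 0.20, "firmographic_only": 0.02}
print(tagging_looks_broken(flat), tagging_looks_broken(tiered))
```

If the check fires, the fix is the one described above: split coarse categories (pricing-page visits vs. blog visits) and re-run the ranking.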

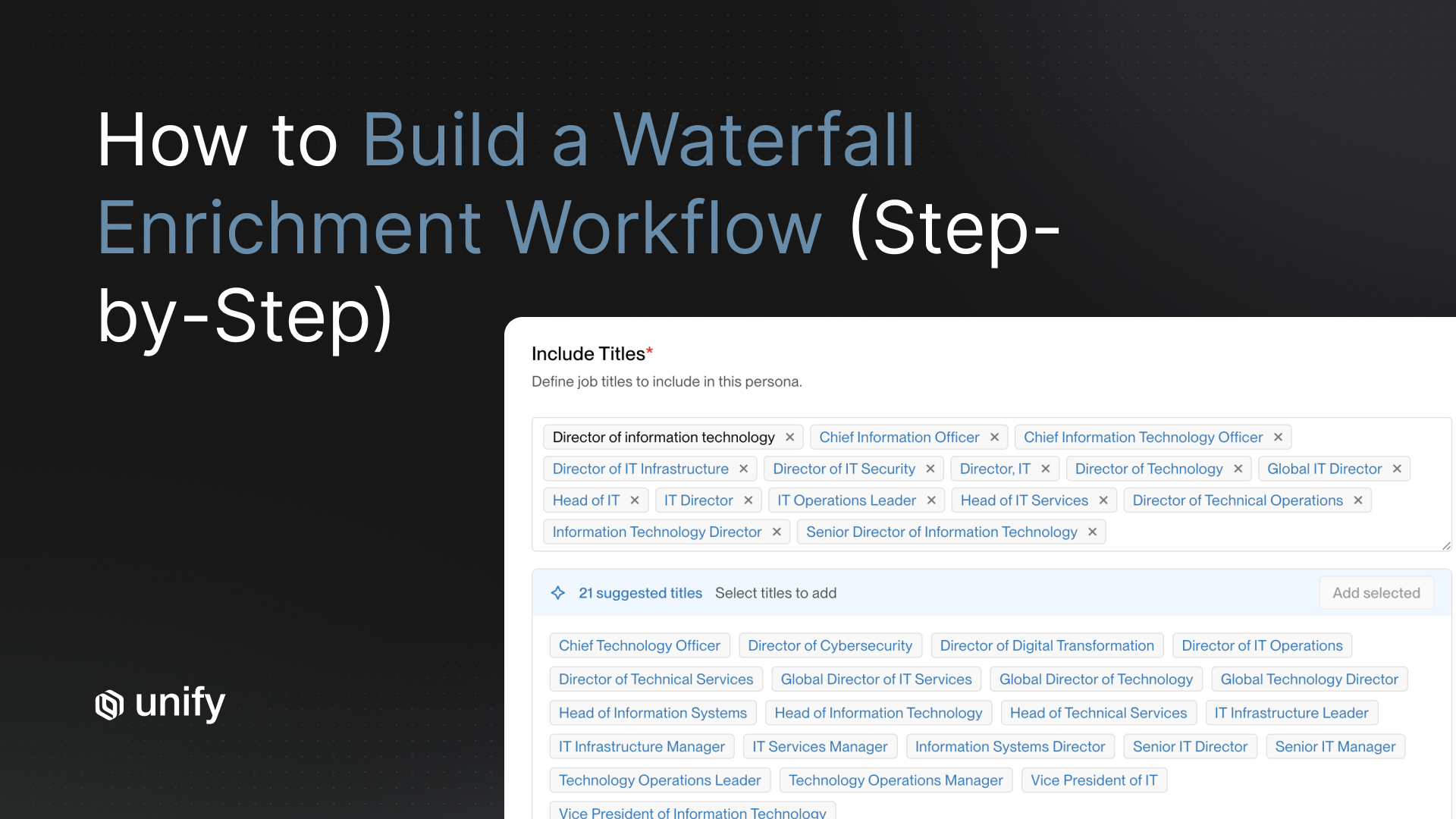

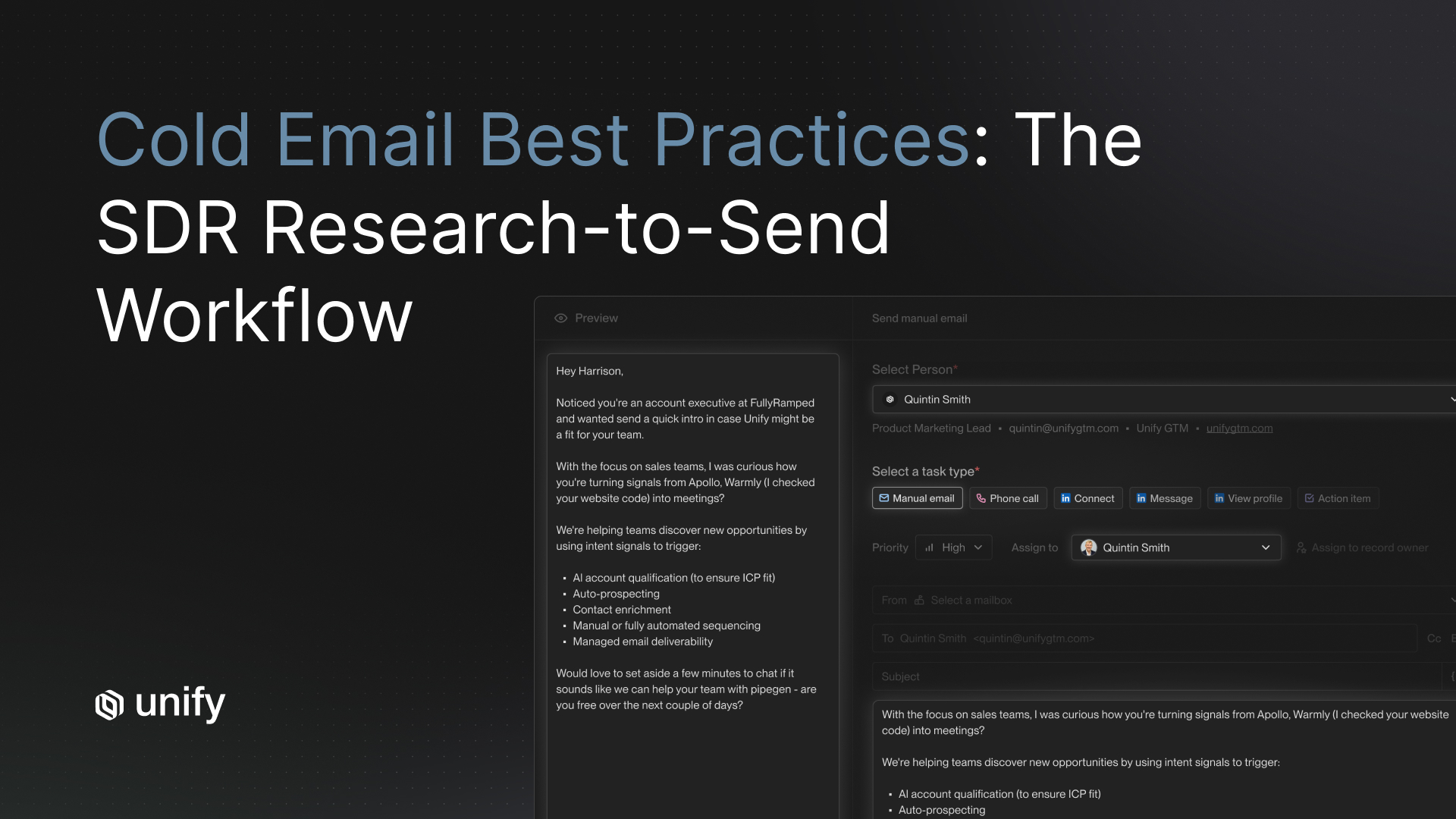

Habit 2: Enforce a 3-Input Rule Before Any Email Is Drafted

Top SDR teams require three minimum research inputs in front of the rep before they touch the keyboard: firmographic context, the triggering signal, and role context. Reps who do not have all three are not allowed to draft. The result: every email reads like the rep did 15 minutes of research even though AI did all of it in seconds.

Definition

The 3-input rule is a research-completeness gate. Inputs are:

- Firmographic context. Company size, stage, vertical, recent funding, customer-base size.

- Triggering signal. Why this contact is in the queue right now (PQL event, new hire, pricing-page visit, lookalike match, etc.).

- Role context. What this persona owns, what they are measured on, what their stated priorities are.
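One way to make the gate enforceable rather than aspirational is to model the three inputs as labeled fields and refuse to draft when any is empty. The sketch below is illustrative only: `ResearchInputs` and `can_draft` are hypothetical names, not part of any Unify API.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class ResearchInputs:
    """The three labeled fields a rep must see before drafting."""
    firmographic: Optional[str] = None
    signal: Optional[str] = None
    role_context: Optional[str] = None

    def missing(self) -> List[str]:
        """Names of inputs that are absent or blank, in field order."""
        return [
            name for name, value in vars(self).items()
            if not (value and value.strip())
        ]

def can_draft(inputs: ResearchInputs) -> bool:
    """Research-completeness gate: all three inputs must be present."""
    return not inputs.missing()

contact = ResearchInputs(
    firmographic="Series B fintech, 200 employees",
    signal="pricing-page visit",
)
print(can_draft(contact), contact.missing())
```

Keeping three separate fields, instead of one free-text research blob, is the design choice that matters: a missing input shows up as a named gap instead of hiding inside a paragraph.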

Why it matters

Per the Unify for Reps customer story, Harry Braniff spent "over half of his day on research and prospecting" before Unify for Sales Reps shipped, and the team's NBR group has now cut manual prospecting time by 80 percent, with reps writing personalized emails 10x faster (per the Unify for Reps case study, 2026). The lift is not from AI writing the email body. It is from AI surfacing the three inputs before the rep draws a breath.

How to test it (vendor prompt)

Open three queued contacts in your current stack. Can you see firmographic context, the signal that put them there, and role context in one pane without leaving the page? If you have to context-switch into LinkedIn, the CRM, and your data provider to assemble those three inputs, your stack is failing the 3-input rule.

Pass-fail thresholds

- Pass: All three inputs surfaced in one rep-facing view; rep starts drafting in under 60 seconds.

- Soft fail: Two of three surfaced; rep still browses one external tab to find the third.

- Hard fail: Rep opens three or more tabs to research a single contact. This is the default in most BDR stacks.

Red flags

If your AI personalization is producing emails about generic company facts ("noticed you raised a Series B"), the role-context input is missing. If your emails reference the company but never the signal ("I see you're in fintech"), the signal input is missing. Both are common when the research panel lumps inputs into a single blob instead of enforcing three labeled fields.

Habit 3: Use AI for Research, Humans for Judgment Moments

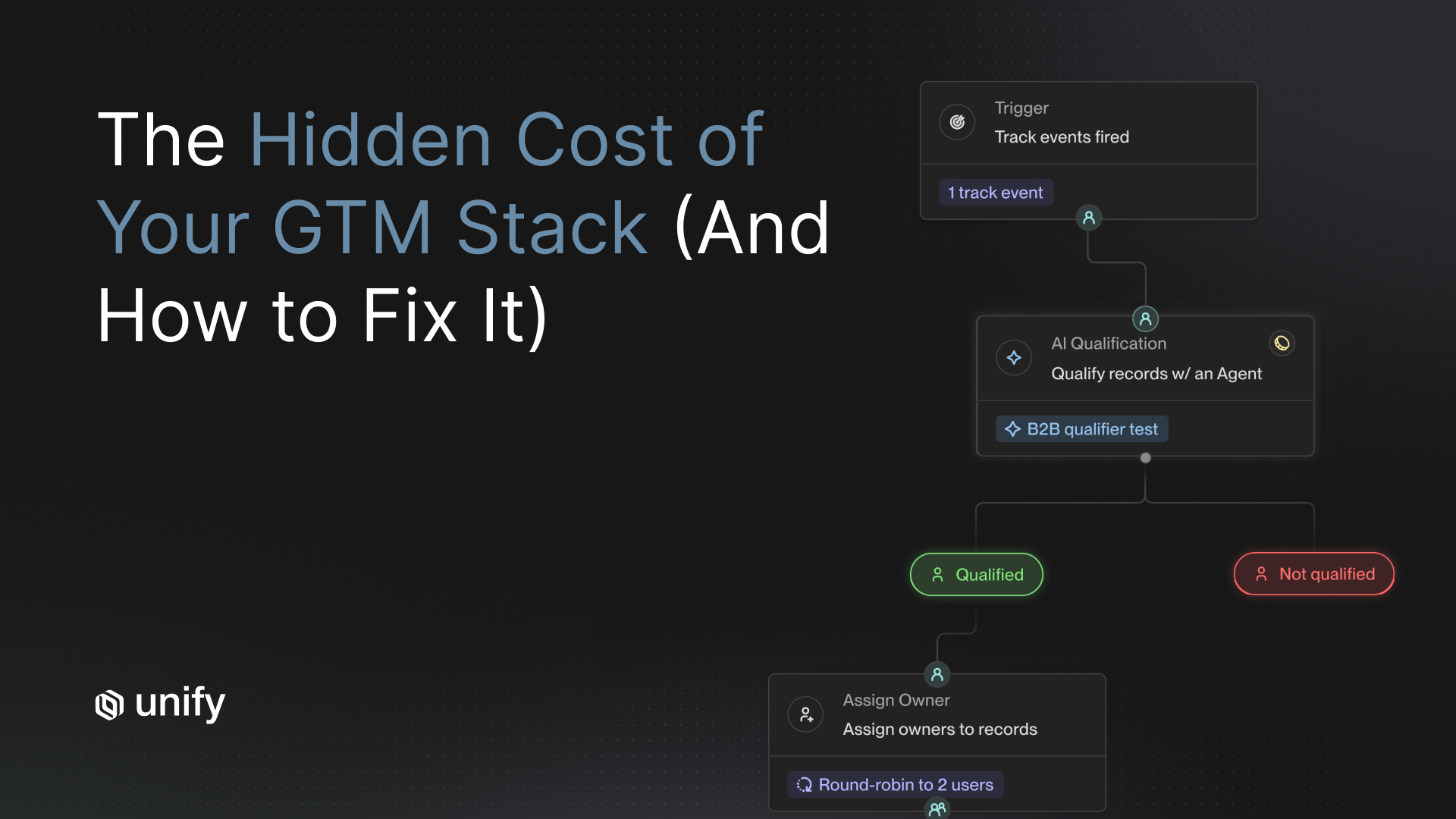

Top SDR teams put AI on every research, enrichment, prospecting, and queue-prep task, then keep humans on objection handling, multi-threading, ambiguous deal qualification, and the live conversation. The split is not philosophical. It is an economics decision: AI Agents in Unify run at 0.1 credits each, a 10x cost reduction announced December 2025, which means a rep doing research at $80K base salary is the most expensive person in the loop.

Definition

The judgment moments are the parts of the deal where reading the room matters more than running the math. Everything else is a research-and-prep task that AI does faster and cheaper.

What to automate vs. keep human

Why it matters

Per Unify's Next-Gen AI Agents launch (Dec 18 2025), agents now run at 0.1 credits each (a 10x cost reduction), making always-on agentic research economical across 35,000+ accounts; one customer running agents at that scale surfaced 15+ meetings plus a closed-won deal in 30 days. The math: at $80K base, a rep-hour costs roughly $40 on base salary alone ($80,000 over about 2,080 working hours a year is about $38), before benefits and variable comp. A 0.1-credit agent run costs cents. Any minute a rep spends researching is a minute that should have been an agent run.
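The rep-side half of that math is easy to reproduce. This is a rough sketch assuming a 2,080-hour working year (52 weeks of 40 hours) and base salary only; the dollar price of a credit is not stated in the source, so the agent side is deliberately left out.

```python
WORK_HOURS_PER_YEAR = 2080  # assumption: 52 weeks x 40 hours

def hourly_cost(annual_salary, hours_per_year=WORK_HOURS_PER_YEAR):
    """Base-salary cost of one rep-hour (excludes benefits and variable comp)."""
    return annual_salary / hours_per_year

def research_cost(minutes, annual_salary=80_000):
    """Base-salary cost of a rep spending `minutes` on manual research."""
    return hourly_cost(annual_salary) * minutes / 60

print(f"Rep-hour at $80K base: ${hourly_cost(80_000):.2f}")
print(f"15 minutes of manual research: ${research_cost(15):.2f}")
```

To finish the comparison, plug in your own contract's per-credit price and multiply by 0.1 for a single agent run.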

How to test it

Audit a rep's last 50 hours of recorded activity. What percentage was research vs. live conversation? If research is above 25 percent, you are paying rep-rate for agent-rate work.

Red flags

Two failure modes show up here. The first is automating the judgment moments (handing objection handling to an AI SDR product), which produces the AI-slop emails inboxes are drowning in. The second is keeping humans on research because "AI gets it wrong sometimes," which trades a 90 percent solution at 1x cost for a 100 percent solution at 100x cost. Neither is rational.

Habit 4: Run a Weekly Signal-Decay Review

Top SDR teams run a 30-minute review every week to drop or rebuild signals whose reply rates have decayed. The rule is mechanical: any signal where this week's 7-day rolling reply rate is below 70 percent of its 8-week trailing baseline for two consecutive weeks gets paused. Skipping this review is the single most common reason a high-performing team quietly regresses to category-average over a quarter.

Definition

Signal decay is the drop in reply rate from a signal type over time, usually caused by saturation (everyone in the category starts running the same play), upstream data quality drift, or a shift in buyer behavior.

The decision rule

- Trigger: 7-day rolling reply rate < 70 percent of 8-week trailing baseline.

- Confirmation: Same condition holds for two consecutive weeks.

- Action: Pause the signal in active queues, rebuild the trigger criteria, or split into sub-signals before reactivating.
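Written as code, the rule above fits in two small functions. This is a minimal sketch, assuming you already have one (7-day rolling rate, 8-week baseline) pair per weekly review, oldest first. The function names are hypothetical.

```python
def is_decayed(rolling_7d, baseline_8w, threshold=0.70):
    """Trigger: rolling rate is below `threshold` x the trailing baseline."""
    return baseline_8w > 0 and rolling_7d < threshold * baseline_8w

def should_pause(weekly_checks, consecutive=2):
    """Confirmation: the trigger held for the last `consecutive` reviews.

    weekly_checks: list of (rolling_7d, baseline_8w) tuples, oldest first.
    """
    recent = weekly_checks[-consecutive:]
    return len(recent) == consecutive and all(
        is_decayed(rolling, baseline) for rolling, baseline in recent
    )

# Illustrative history: healthy week, then two weak weeks in a row.
history = [(0.080, 0.080), (0.050, 0.080), (0.040, 0.075)]
print(should_pause(history))
```

A signal with a zero baseline is never flagged here, since there is nothing to decay from. New signals need a starting prior before this rule applies.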

Why it matters

Signal-driven reply rates are not stable. A signal that pulled 8 percent reply when you launched it can fall to 2 percent six months later as the category saturates. Without a decay review, that signal still sits in your queue eating rep cycles. The weekly review is the forcing function that keeps the queue mix at its actual conversion-weighted maximum.

How to test it

For each active signal type, compute the 8-week trailing reply rate and the 7-day rolling reply rate. Flag anything below the 70 percent threshold. If you have never done this exercise, expect to find one or two signals you should have dropped a quarter ago.
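The two rates can be computed from the same daily send and reply counts. The sketch below makes one assumption loudly: the 8-week baseline window includes the most recent week. If you prefer a baseline that excludes the week under review, shift the window accordingly. Data and function names are illustrative.

```python
from datetime import date, timedelta

def window_rate(daily, end, days):
    """Reply rate over the `days` days ending on `end`, inclusive.

    daily: {date: (sent, replies)} for one signal type.
    Returns None when nothing was sent in the window.
    """
    sent = replies = 0
    for offset in range(days):
        day_sent, day_replies = daily.get(end - timedelta(days=offset), (0, 0))
        sent += day_sent
        replies += day_replies
    return replies / sent if sent else None

def decay_ratio(daily, end):
    """7-day rolling rate divided by the 8-week (56-day) trailing rate."""
    rolling = window_rate(daily, end, 7)
    baseline = window_rate(daily, end, 56)
    if rolling is None or not baseline:
        return None
    return rolling / baseline

# Invented data: 49 days at 8 percent reply, then 7 days at 2 percent.
end = date(2026, 3, 1)
daily = {end - timedelta(days=i): (100, 2 if i < 7 else 8) for i in range(56)}
print(f"decay ratio: {decay_ratio(daily, end):.2f}")
```

Any signal whose ratio prints below 0.70 meets the trigger condition for that week.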

Red flags

If your reviews are about whether you "feel" a signal is working, you do not have a decision rule, you have a debate. Write the rule down, automate the math, and let the rule decide.

Decision Framework: Which Habit to Implement First

If you are starting from scratch, do these in order. Most teams overinvest in habits 3 and 4 before they have habits 1 and 2 in place, which means they automate the wrong thing.

- If your reps work the queue in arrival order, not reply-rate order → start with Habit 1 (signal tagging). Highest leverage; one-week implementation.

- If reps spend more than 25 percent of their day researching → start with Habit 2 (3-input rule). Direct rep-time savings; visible in week two.

- If reps are still doing live research during cold calls → start with Habit 3 (AI research, human judgment).

- If your team has had stable reply rates for 6+ months and you suspect drift → start with Habit 4 (signal-decay review). Lowest setup cost; biggest hidden upside.

- If you are running PLG with high freemium volume → Habit 1 + Habit 3 in parallel; PQL signals are your single largest source of reply rate.

- If you are sales-led at mid-market and above → Habit 2 + Habit 4; multi-threading judgment moments and signal mix matter more than queue tagging.

- If you are running in regulated regions (EU/GDPR) → all four habits still apply, but Habit 1 reply-rate ranges shift downward by roughly 30 to 50 percent and opt-in qualification moves into Habit 2 as a fourth input.

Worked Example: The Unify NBR Team

Here is what the 4-habit framework looks like when the team running it is the team that built the platform.

Team setup. A six-person New Business Rep (NBR) team led by Skyler Mickunas. Reps include Tarun Bobbili, Harry Braniff, Charlie, Lucca, and new hire Will Taffe. Comp is $80K base plus $40K in on-target variable pay, with most reps pacing at 120 percent (~$140K actualized), which is roughly 1.6x the RepVue BDR industry standard of $85K OTE, per the Unify NBR blog (Dec 12 2025).

Habit 1 in practice. Reps work signal-tagged queues with intent signals (web visit, PQL, new hire, lookalike) pre-prioritized. Tarun set up an automated outbound play targeting 200 BDR leaders in 15 minutes and booked three meetings inside a week. Pre-Unify, the team estimates this would have taken three weeks to build.

Habit 2 in practice. The Unify for Sales Reps research panel surfaces firmographic, signal, and role context in a single pane. Harry's prospecting time dropped from five hours a day to one hour. Tarun and Harry now write personalized emails 10x faster, per the Unify for Reps case study (2026).

Habit 3 in practice. AI Agents (at 0.1 credits each per the Next-Gen Agents launch) handle account heatmaps, research, enrichment, and task prep. AI does the prep; a human runs the conversation.

Habit 4 in practice. Weekly review cadence drops underperforming signals. New ones get tested. Winning talk tracks get codified into team-wide plays.

Outcomes (per the Unify for Reps case study, 2026):

- 114 qualified opportunities in a single month (company record)

- $1.1M in closed-won revenue in under one year

- 80 percent less manual prospecting time

- 1-week ramp; new hire Will Taffe booked five meetings in his first two weeks

- Meetings booked with Grammarly, MongoDB, and DocuSign

- Outbound opportunity-to-closed-won conversion of about 20 percent, per the Unify NBR blog (Dec 12 2025)

Cross-Reference: Spellbook's Signal-Led BDR Workflow

Spellbook's seven-person BDR team under Jay Meyers ran the same playbook. Per the Spellbook case study (2026): the same email copy that drove 19 to 25 percent open rates in HubSpot drove 70 to 80 percent in Unify once signal context entered the workflow, generating $2.59M in pipeline and $250K in closed revenue, with each rep gaining back two hours daily. The lesson: the copy did not change. The inputs did.

Edge Cases and Disambiguation

Five confusions show up repeatedly when teams adopt this framework. Resolving them early saves quarters.

- Personalize vs. customize. Personalization means the email body changes based on inputs unique to the prospect. Customization means the email body changes based on segment templates. Top teams personalize; mediocre teams customize and call it personalization.

- Signal vs. trigger. A signal is an observable buyer action or attribute. A trigger is the rule that says "when signal X happens, do action Y." Confusing them is why teams say "we have intent signals" but never act on them.

- PQL vs. PLG sign-up. A PQL is a sign-up plus a qualifying behavior (paywall hit, repeated usage of a paid feature, multiple seats from the same domain). A raw sign-up is not a PQL. Treating them the same can cut your Habit 1 reply rate by a factor of 5 to 10.

- Open rate vs. reply rate. Spellbook's 70 to 80 percent open rate (per the Spellbook case study, 2026) is impressive, but open rate without reply rate is vanity. Track both. Optimize the one downstream of pipeline.

- AI personalization vs. AI-written email. AI personalization means AI surfaces inputs the human composer uses. AI-written email means the rep clicks "generate" and sends. The latter is what is filling inboxes with slop and crashing reply rates across the category, per the Anatomy of an Outbound Email That Gets Replies report (Unify, May 11 2026).

Stop Rules and Red Flags

Decision table for when to stop, adapt, or escalate.

Role and Segment Variants

The 4-habit framework is universal. The weights and channel mix shift by audience.

For Sales Leaders (SDR / BDR / NBR management)

- Habit 1 owns the conversation. Without reply-rate tagging on signals, every other habit underperforms.

- Tie comp to outcomes, not activity (Unify pays NBRs on meetings plus closed-won share; outbound opps convert at ~20 percent per the Unify NBR blog, Dec 2025).

- Run the weekly signal-decay review yourself for the first six weeks. After that, hand to a rep.

For Growth and Marketing Leaders

- Habit 1 + Habit 4 carry most of the value. You own the signal mix; the SDR team owns the touch.

- Treat MQLs as a Habit 1 signal tier with its own reply-rate prior (Perplexity MQL Plays at up to 20 percent, per Perplexity case study, 2026).

- Build the 8-week trailing reply-rate dashboard. SDR leaders use it, but you maintain it.

For RevOps and GTM Engineers

- Habit 2 is your build. The 3-input rule lives or dies on whether the research panel surfaces all three fields without rep context-switching.

- Habit 3 is your provisioning decision. Pick a stack that runs research at agent-rate, not rep-rate (Unify AI Agents at 0.1 credits per run, per the Next-Gen Agents launch, Dec 2025).

- Document the rules of engagement: who owns which signal, what tier each account sits in, how the queue routes.

For PLG Companies

- Habit 1 with PQL signals (Perplexity-style usage thresholds) at the top of the tier; firmographic-only at the bottom or excluded.

- Read the related guide: Your Warmest Leads Are Already Using Your Product.

For Enterprise / Sales-Led Motions

- Habit 2's role-context input weighs heavier; multi-threading inside named accounts is human-only work.

- Tier 1 accounts route as Slack alerts to the assigned AE, not into sequence enrollment.

Top 5 Mistakes to Avoid

- Weighting all signals equally in the queue. If a PQL and a firmographic ICP match get the same treatment, you are spending equal rep time on contacts whose reply rates can differ by 5 to 10x.

- Asking reps to research live during prospecting hours. AI Agents at 0.1 credits per run are an order of magnitude cheaper than rep-hours; any rep doing research is wasting the most expensive resource on the team.

- Skipping the weekly signal-decay review. Top teams run it religiously. Skipping it for a quarter is the single most common path back to category-average reply rates.

- Automating the judgment moments. Objection handling, multi-threading, ambiguous deal qualification, and live conversations are human-only work. Automating them produces the inbox slop the category is drowning in.

- Personalizing prose without personalizing inputs. A clever opening line on a poorly-targeted contact does not convert. Inputs come first, prose comes second.

Frequently Asked Questions

What do top-performing SDR teams do differently when it comes to personalizing at scale?

They personalize the research inputs (firmographic, signal, role context) before the email is drafted, not the prose of each line. They tag every signal with an expected reply rate so reps work the queue in priority order, surface three minimum inputs in one rep-facing pane, use AI for research and humans for judgment moments, and run a weekly signal-decay review. Unify's NBR team is on track to earn about 1.6x the industry-standard BDR compensation, with about 20 percent outbound opportunity-to-closed-won conversion, per the Unify NBR blog (Dec 12 2025).

How do top SDR teams prioritize signals by reply-rate expectation?

Each signal carries a reply-rate label (8-week trailing rate, or a starting prior for new signals). Reps work the queue top-down by tier. Per the Perplexity case study (2026), PQL Plays drove 5 percent reply and MQL Plays up to 20 percent, while firmographic-only signals sit at the category-average 1 to 3 percent. Ranking the queue by reply rate is the single highest-leverage prioritization decision an SDR leader makes.

What is the 3-input rule for personalized SDR outreach?

The 3-input rule is the minimum research that must be surfaced before a rep drafts a single email: firmographic context, the triggering signal, and role context. Unify for Sales Reps surfaces all three in one pane via the research panel, signals library, and AI Research, which is why Unify's NBR team writes personalized emails 10x faster and reduced manual prospecting by 80 percent, per the Unify for Reps case study (2026).

When should SDR teams use AI versus human judgment in outbound?

AI for research, prospecting, enrichment, and queue prep. Humans for objection handling, multi-threading, ambiguous deal qualification, and live conversations. AI Agents in Unify run at 0.1 credits per run (a 10x cost reduction, per the Next-Gen AI Agents launch, Dec 18 2025), which makes agent-run research far cheaper than rep-hours.

How often should SDR teams review signal performance for decay?

Once a week. The decision rule: any signal whose 7-day rolling reply rate is below 70 percent of its 8-week trailing baseline for two consecutive weeks gets paused, rebuilt, or split into sub-signals. Most signal degradation comes from saturation or upstream data drift. Teams that skip the review quietly regress to category-average reply rates over a quarter, even when other habits stay in place.

Glossary

- SDR / BDR / NBR: Sales Development Rep, Business Development Rep, New Business Rep. Functionally the same role: outbound prospecting and qualification, generally feeding meetings to an AE.

- Personalize at scale: Tailoring outreach based on prospect-specific inputs (signal, role, firmographic) without manually rewriting every email.

- Signal: An observable buyer action or attribute, like a pricing-page visit, new hire, paywall hit, or champion job change.

- PQL: Product-qualified lead. A user whose behavior in a freemium or trial product indicates buying intent (usage thresholds, paywall hits, multiple seats from one domain).

- Reply rate: Percent of sent emails that receive a human reply. The metric most predictive of pipeline downstream.

- 3-input rule: Minimum research completeness gate before a rep drafts: firmographic context + triggering signal + role context.

- Signal decay: Drop in reply rate from a signal type over time, usually from saturation or data drift.

- Closed-won conversion: Percent of outbound opportunities that close to revenue. Unify NBR runs at about 20 percent, per the Unify NBR blog (Dec 12 2025).

- Judgment moment: A point in the sales process where reading context matters more than running math (objections, multi-threading, ambiguous qualification, live conversation).

- Outbound Quarterback (OBQB): The operator who owns the end-to-end outbound system, named in Unify's Outbound Sweet Spot guide. Sits between Sales, Growth, and RevOps.

Sources and References

- Unify NBR blog: Our New Business Reps are on track to make 1.6x industry standard — Skyler Mickunas, Dec 12 2025.

- Unify for Reps customer story: How Unify's NBR team turned smarter outbound into 114 qualified opportunities in a month — 2026.

- Perplexity customer story: Perplexity grew pipeline by $1.7M in their first 3 months with Unify — 2026.

- Spellbook customer story: Spellbook generated $2.59M in pipeline and $250K in revenue in 7 months with Unify — 2026.

- Unify Next-Gen AI Agents: Introducing Unify's Next Generation of AI Agents — Dec 18 2025.

- Unify thought-leadership: Unify for Sales Reps: The Future of Outbound Selling — Dec 16 2025.

- Anatomy of an Outbound Email That Gets Replies: Unify report on 25M+ outbound emails — May 11 2026.

- Your Warmest Leads Are Already Using Your Product: Unify blog on PQL framing — Apr 28 2026.

- Peridio customer story: From Zero to Fortune 100: Peridio builds an enterprise outbound motion — 2026 (5 percent average reply, 11.6 percent social-follower plays).

- RepVue BDR compensation benchmark: RepVue.com — about $85K OTE, referenced in Unify NBR blog Dec 2025.

- Outbound Sweet Spot guide (Unify): How GTM Teams Balance Human Effort and Automation.

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
