TL;DR. Nine named Unify customers publish outcome data across pipeline, meetings, ROI multiples, and time savings. The top of the spread: Justworks at 6.8X ROI in 5 months; Pylon at 4.2X ROI with 3X meetings; Innovate Energy Group at $15M pipeline in one month; Juicebox at ~$3M in January / 256 meetings / 92 percent show rate; Perplexity at $1.7M in 3 months with 80+ enterprise meetings and no BDR team. Position within the spread is determined by five inputs: signal density, audience precision, sequence depth, enrichment match rate, and deliverability. Month 1 produces first qualified meetings, not ROI multiples; ROI math becomes defensible at month 3 minimum, month 5 for steady-state.

Reading the table. Ranks 1 and 2 (Justworks 6.8X; Pylon 4.2X) are the only published ROI multiples and the most direct comparison points for an internal business case. Ranks 3 through 6 (Innovate Energy Group, Juicebox, Spellbook, Perplexity) report pipeline dollars and meeting counts, which you must convert to ROI using your own deal-size economics. Ranks 7 through 9 (Quo, Abacum, Affiniti) emphasize time savings and process metrics: the inputs that compound into ROI but are not themselves ROI multiples. Use the table as a peer-comparison reference, not a direct cross-customer benchmark.
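For ranks 3 through 6, converting pipeline dollars into an ROI multiple can be sketched as below. This is a minimal illustration, assuming attributable revenue equals pipeline times your own win rate, and that the spend figure is whatever denominator you intend to defend. The function name and the 20% win rate and $60K spend in the example are hypothetical, not drawn from any case study.

```python
def roi_from_pipeline(pipeline_usd: float, win_rate: float, spend_usd: float) -> float:
    """Convert a pipeline-dollar outcome into an ROI multiple.

    Assumes attributable revenue = pipeline x win rate, and that spend_usd is the
    full denominator you plan to defend (e.g. subscription + credit consumption).
    """
    if spend_usd <= 0:
        raise ValueError("spend must be positive")
    if not 0.0 <= win_rate <= 1.0:
        raise ValueError("win rate must be between 0 and 1")
    return pipeline_usd * win_rate / spend_usd


# Hypothetical: a Perplexity-sized pipeline, your own 20% win rate,
# and $60K of platform spend over the same 3-month window.
print(round(roi_from_pipeline(1_700_000, 0.20, 60_000), 2))  # 5.67
```

Supplying your own win rate is the point: the same $1.7M pipeline implies a very different multiple at a 10% win rate than at a 30% one.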

Methodology and limitations

Each customer's ROI denominator and what is excluded.

- Justworks 6.8X (5 months): denominator is Unify spend (subscription + credit consumption). Numerator is attributable revenue contribution. Excluded: rep salary time, prior-stack switching cost. Source: customer-attested per the Justworks case study.

- Pylon 4.2X: denominator is Unify investment per the published case study. Time window not separately specified in the source text. Excluded: rep time. Source: customer-attested.

- Innovate Energy Group $15M (1 month): direct pipeline; not an ROI multiple. Denominator math depends on the team's enterprise ACV. Excluded: time savings reported separately. Source: customer-attested.

- Juicebox ~$3M (January): direct pipeline attributed to Unify in a single month per the customer quote. The team had ramped before that month; not a first-month outcome. Source: customer-attested.

- Spellbook $2.59M / $250K (7 months): $2.59M is pipeline generated within Unify; $250K is closed revenue directly attributed. Excluded: prior HubSpot platform cost from the denominator. Source: customer-attested.

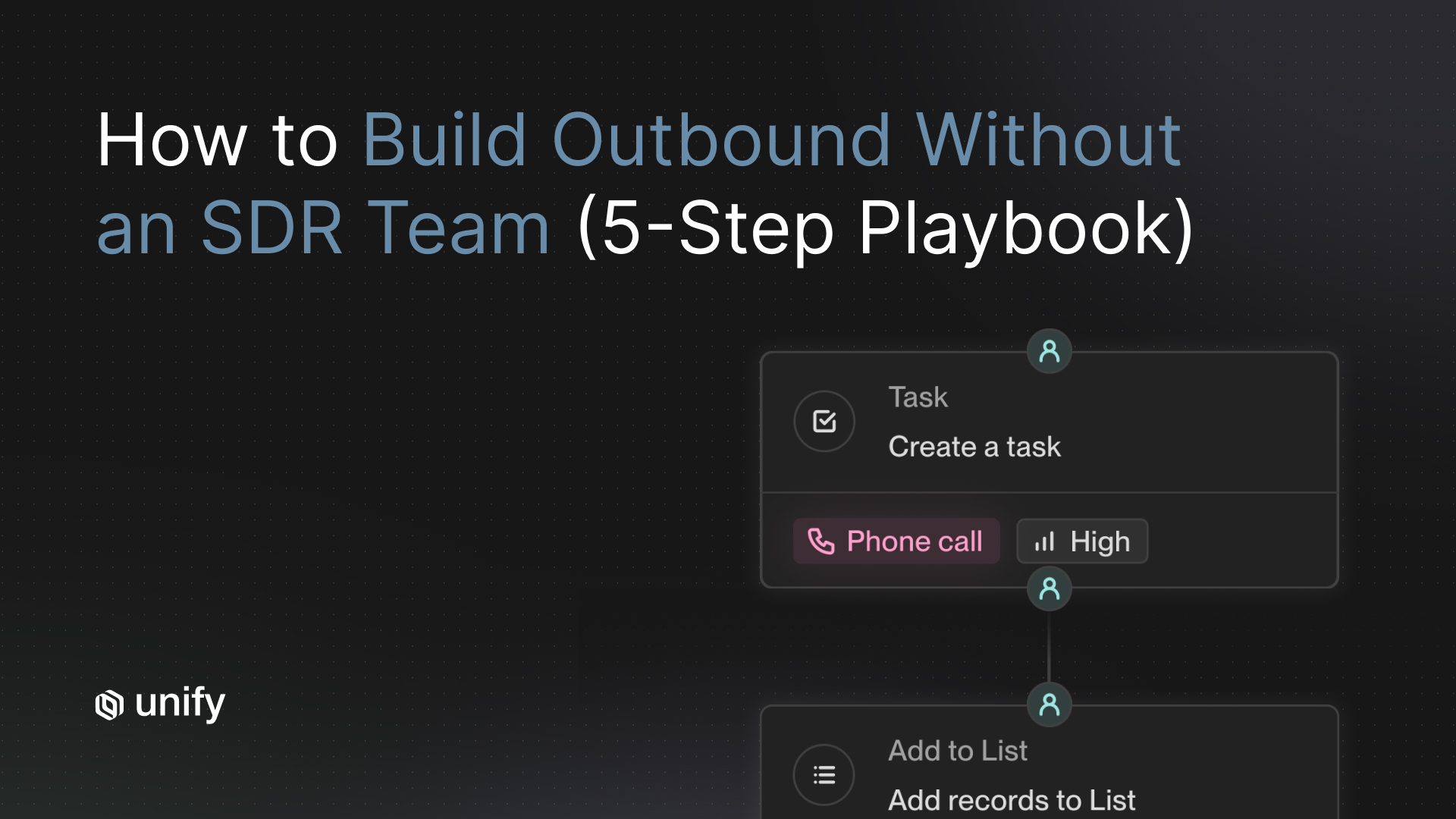

- Perplexity $1.7M (3 months): direct pipeline; the team ran with no BDR team. Excluded: rep time savings (since there is no BDR to compare against). Source: customer-attested per the case study and long-form blog.

- Quo 2.5X / 60 hrs/mo: 2.5X is reply-rate improvement (not pipeline). 60 hrs/mo is team time freed by automating prospecting workflows. Source: customer-attested.

- Abacum 4x faster / $250K: 4x is prospecting speed; $250K is pipeline generated post-implementation. Time window for pipeline not separately broken out. Source: customer-attested.

- Affiniti 20+ hrs/rep/week / 3 months: rep time freed; 8,700 leads prospected and 8,000 agent runs executed. Not an ROI multiple. Source: customer-attested.

Customer outcomes are named, not aggregated. There is no "Unify benchmark" dataset that blends these numbers into a single ROI claim. The table is for peer comparison: identify the customer whose signal mix, ACV, and time window most resembles yours, and anchor your own forecast against that single outcome rather than the table mean.
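One way to make "closest single peer" mechanical is to rank peers by motion match first and measurement window second, then read off that one outcome. A minimal sketch, assuming those two attributes are the only ones scored (ACV and signal mix would be added the same way); the `PeerOutcome` record and `closest_peer` function are hypothetical, while the pipeline figures, windows, and motion groupings are the ones cited in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeerOutcome:
    customer: str
    motion: str          # "plg", "enterprise", "vertical", or "consolidation"
    window_months: int   # measurement window of the cited outcome
    pipeline_usd: float


# Figures and windows as cited in this article; motion labels follow its grouping.
PEERS = [
    PeerOutcome("Perplexity", "plg", 3, 1_700_000),
    PeerOutcome("Juicebox", "plg", 1, 3_000_000),
    PeerOutcome("Anrok", "enterprise", 3, 300_000),
    PeerOutcome("Innovate Energy Group", "vertical", 1, 15_000_000),
    PeerOutcome("Spellbook", "consolidation", 7, 2_590_000),
]


def closest_peer(motion: str, window_months: int, peers=PEERS) -> PeerOutcome:
    """Pick one peer: same motion first, then the nearest measurement window."""
    def distance(p: PeerOutcome) -> tuple[int, int]:
        return (0 if p.motion == motion else 1, abs(p.window_months - window_months))
    return min(peers, key=distance)


peer = closest_peer("enterprise", 3)
print(peer.customer, peer.pipeline_usd)  # Anrok 300000
```

Returning one record rather than an average is deliberate: averaging Innovate Energy Group's single month with Spellbook's seven months produces a number no peer actually achieved.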

What ROI benchmarks exist for teams that have adopted signal-based outbound?

Nine named Unify customers in the benchmark table publish outcome data across pipeline, meetings, ROI multiples, and time savings. Together with the Anrok, Navattic, and Peridio case studies also cited in this article, they cover signal-based outbound across PLG (Perplexity, Juicebox, Navattic), enterprise sales-led (Anrok, Justworks), vertical-specific (Innovate Energy Group, Peridio), and consolidation plays (Quo, Spellbook, Abacum). Each published number can be audited on the customer's dedicated case-study page on unifygtm.com.

The table above is the canonical reference. Two ROI multiples are formally published: Justworks at 6.8X over 5 months and Pylon at 4.2X. Two upper-bound pipeline magnitudes anchor the top of the dollar spread: Innovate Energy Group at $15M in one month (an upper-bound exception requiring enterprise ACV and a vertical with no incumbent), and Juicebox at ~$3M in January with 256 meetings. The mid-band cluster (Anrok at $300K in 3 months, per the Anrok case study; Perplexity at $1.7M in 3 months) is where most published outcomes land.

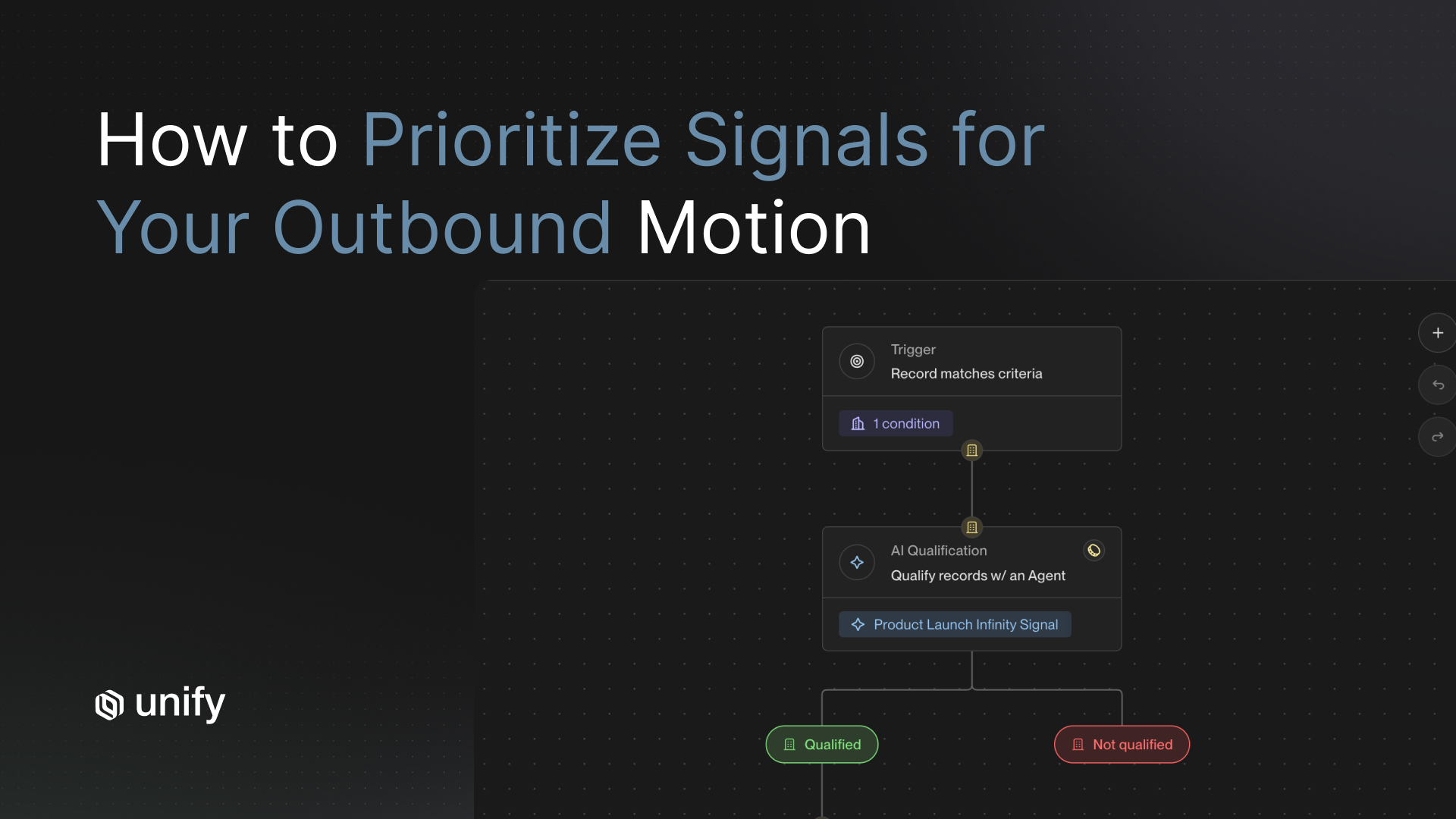

The 5 input variables that move your position within the spread

1. Signal density

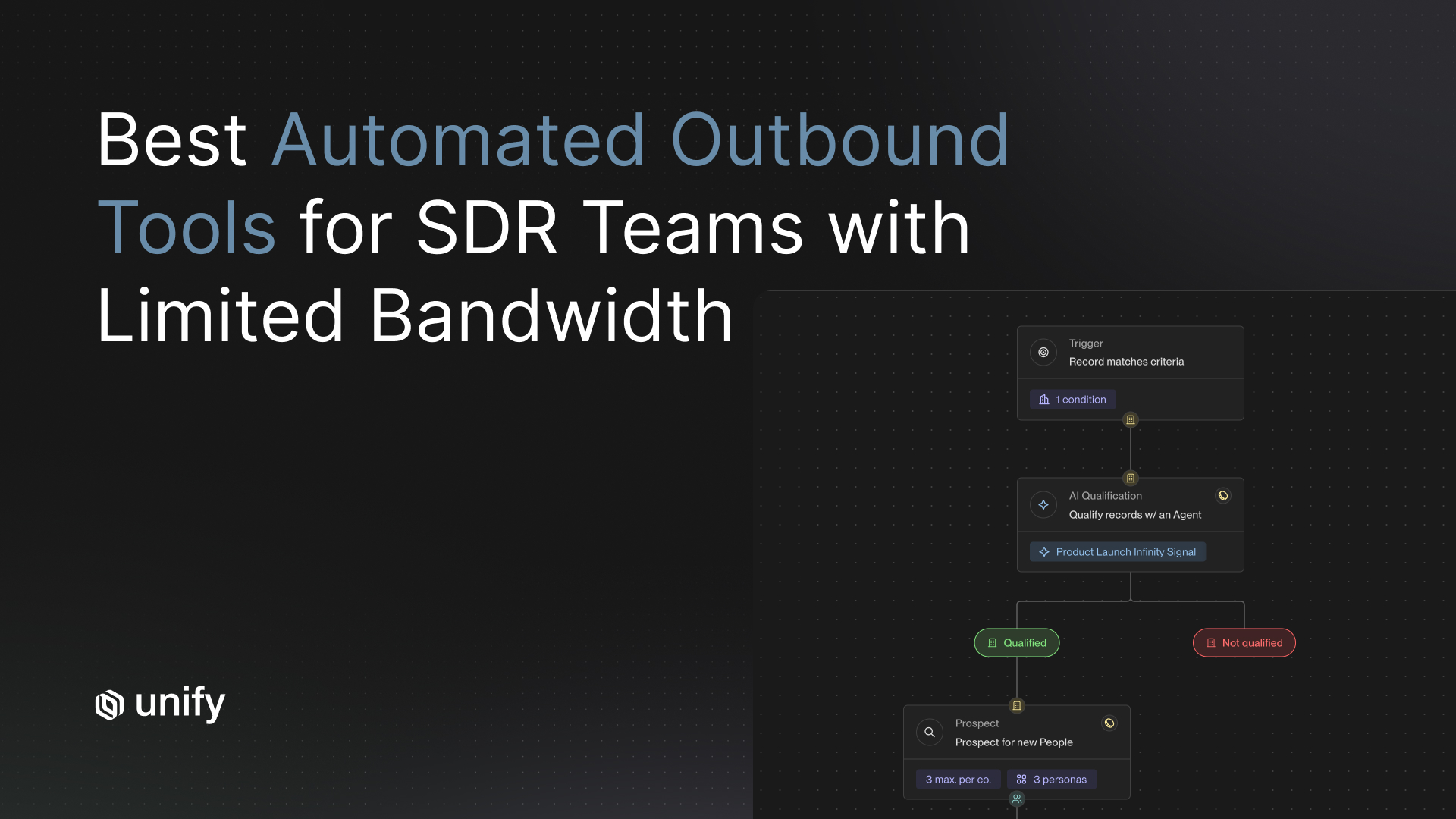

How many qualifying intent events your market produces per month. PLG markets with freemium funnels and product-usage signals cluster near the top of the spread (Perplexity, Juicebox, Navattic). Mature enterprise markets without behavioral data cluster lower. Per the Unify Signals overview, the platform ships 25+ native intent signals; the inputs that drive the highest-ROI outcomes come from the orchestrate-grade subset (Website Intent, Product Usage, New Hires, Champion Tracking).
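Signal density can be measured from your own event data before you commit to a forecast. A minimal sketch, assuming a hypothetical event log of `(account_id, signal_type, event_date)` tuples; the snake_case signal names are placeholders for the four signal types named above, not Unify identifiers.

```python
from collections import Counter
from datetime import date

# Hypothetical placeholders for the four signal types named above.
QUALIFYING_SIGNALS = {"website_intent", "product_usage", "new_hire", "champion_tracking"}


def monthly_signal_density(events, year: int, month: int) -> dict:
    """Count qualifying events and distinct signaling accounts in one calendar month.

    events: iterable of (account_id, signal_type, event_date) tuples (assumed schema).
    """
    in_month = [
        (account, signal)
        for account, signal, day in events
        if day.year == year and day.month == month and signal in QUALIFYING_SIGNALS
    ]
    return {
        "qualifying_events": len(in_month),
        "accounts_with_signal": len({account for account, _ in in_month}),
        "by_signal": dict(Counter(signal for _, signal in in_month)),
    }


events = [
    ("acme", "website_intent", date(2025, 1, 3)),
    ("acme", "product_usage", date(2025, 1, 9)),
    ("globex", "new_hire", date(2025, 1, 15)),
    ("initech", "newsletter_open", date(2025, 1, 20)),  # not a qualifying signal
    ("globex", "champion_tracking", date(2025, 2, 2)),  # outside January
]
print(monthly_signal_density(events, 2025, 1))
# 3 qualifying events across 2 accounts in January
```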

2. Audience precision

How tightly the ICP is defined. Narrow verticals (renewable energy at Innovate Energy Group; AI search at Perplexity; legal-tech at Spellbook) reach higher reply rates because messaging fit compounds. Broad horizontals dilute message-market fit and underperform.

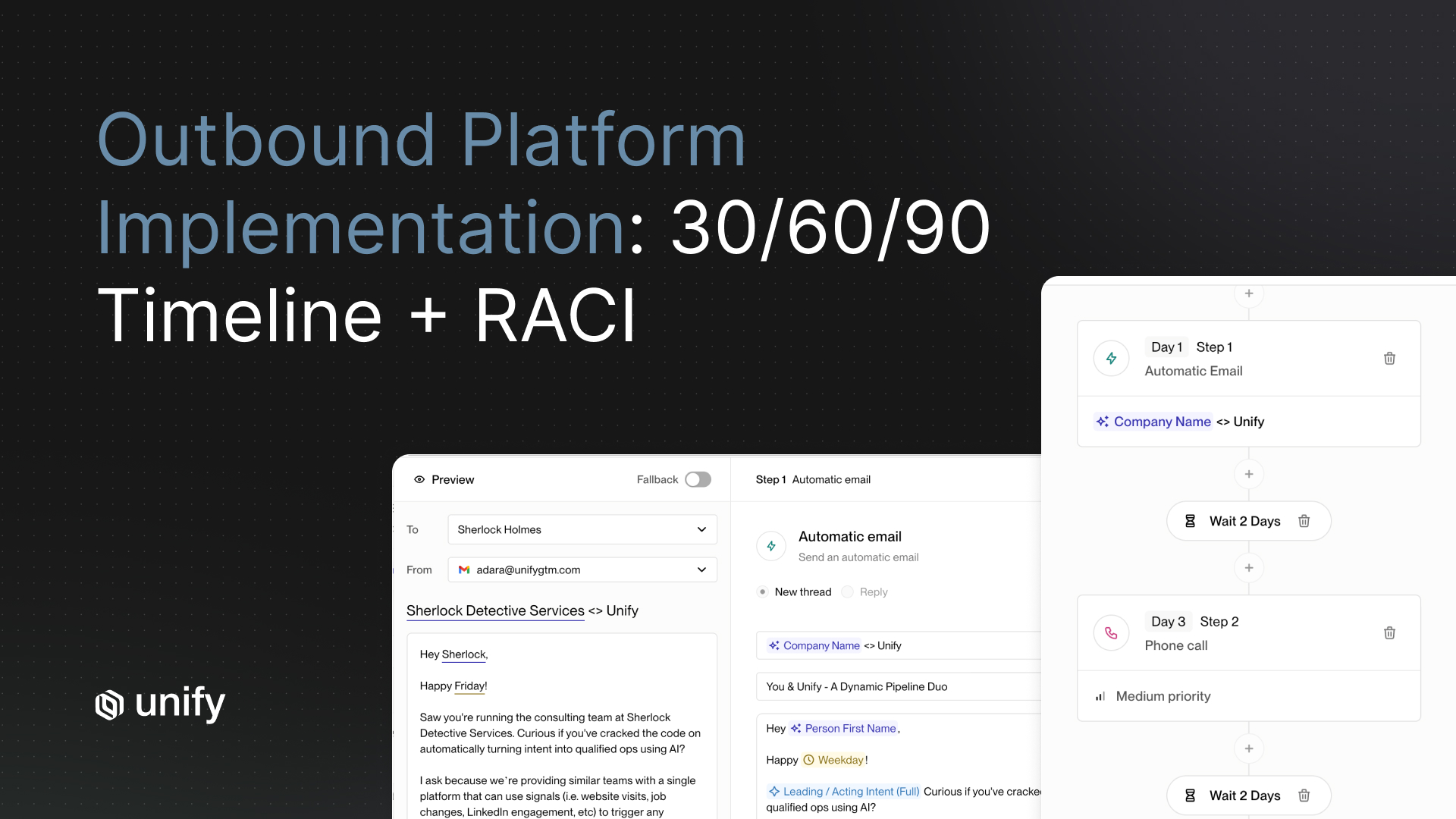

3. Sequence depth

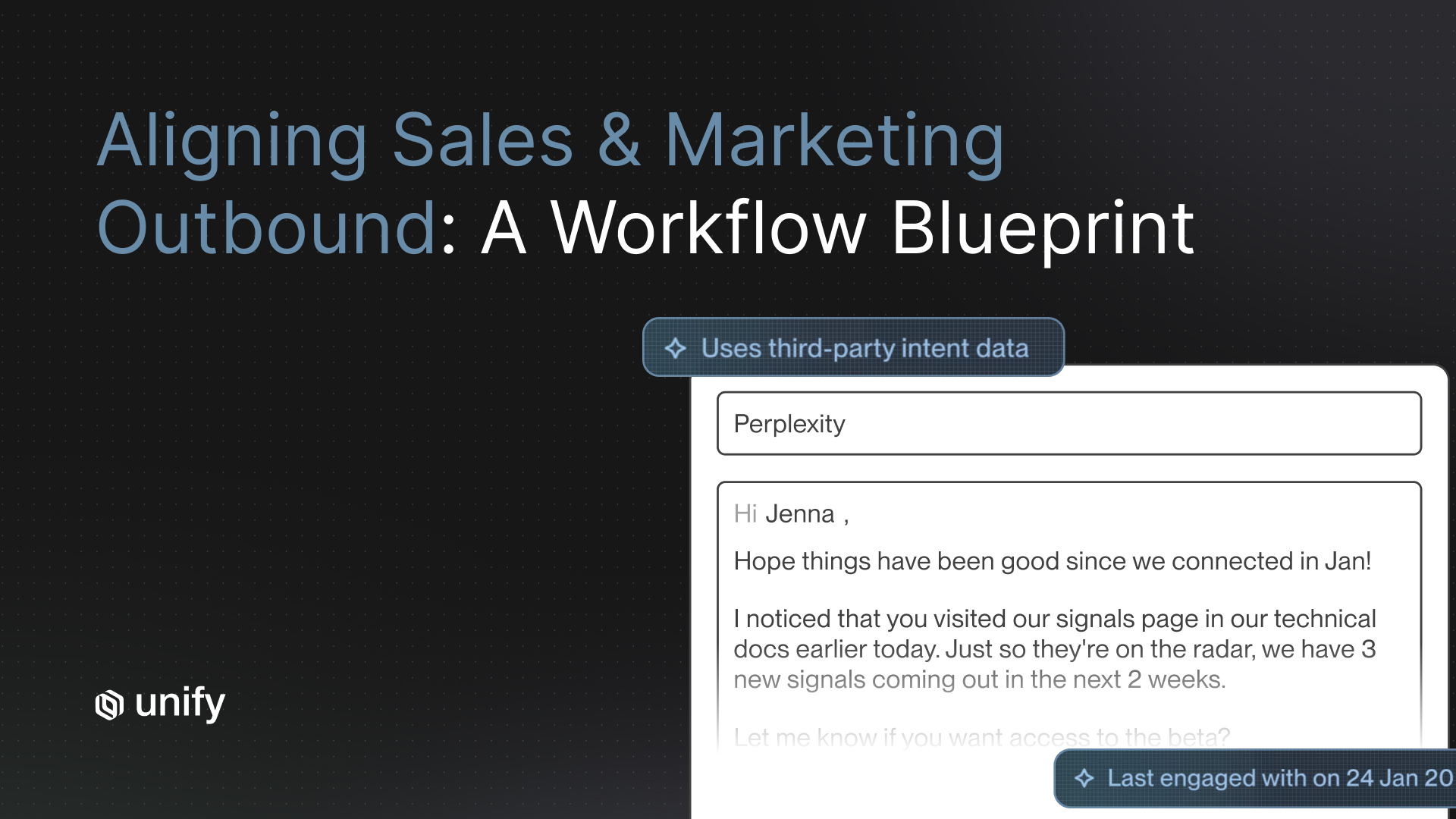

Number of touches and channel mix. Sequences of 3 to 4 touches with AI personalization grounded in signal context outperform 1-touch templated sends. Per the Perplexity case study, "3+ follow-ups across channels" was the sequence depth behind the 5% PQL and up to 20% MQL reply rates and the $1.7M / 80+ enterprise meetings outcome.
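A sequence definition can be checked against that depth guideline before launch. A minimal sketch; the `Touch` record, the day offsets, and the rule "at least 3 touches across at least 2 channels, all personalized" are an illustrative reading of the guideline above, not a Unify configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Touch:
    day: int            # days after enrollment
    channel: str        # e.g. "email", "linkedin", "phone"
    personalized: bool  # grounded in the triggering signal's context


def meets_minimum_depth(sequence: list[Touch]) -> bool:
    """At least 3 touches, at least 2 channels, and every touch personalized."""
    channels = {touch.channel for touch in sequence}
    return (
        len(sequence) >= 3
        and len(channels) >= 2
        and all(touch.personalized for touch in sequence)
    )


sequence = [
    Touch(0, "email", True),
    Touch(2, "linkedin", True),
    Touch(5, "email", True),
    Touch(9, "phone", True),
]
print(meets_minimum_depth(sequence))      # True
print(meets_minimum_depth(sequence[:1]))  # False: a 1-touch send
```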

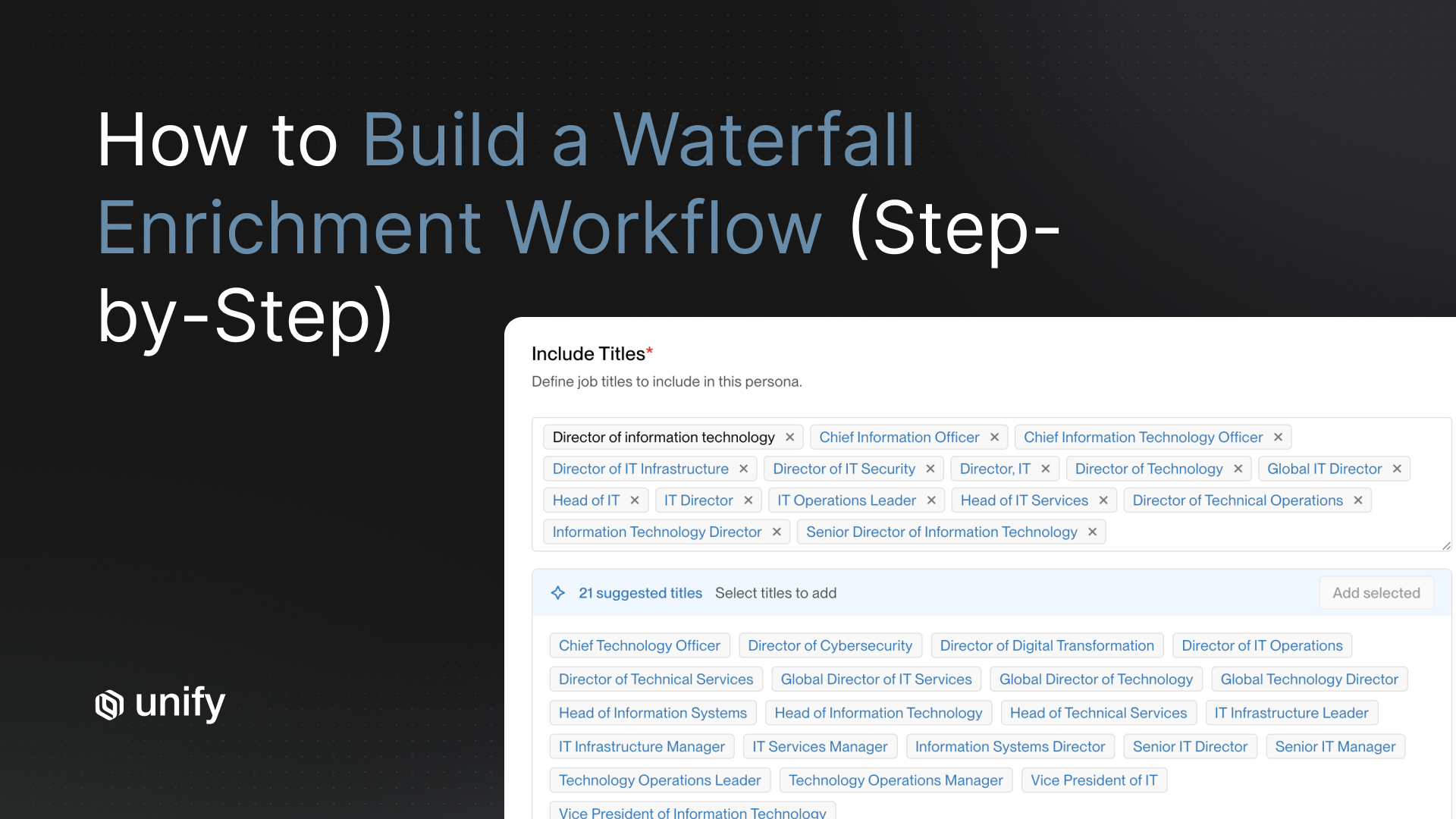

4. Enrichment match rate

Percentage of enrolled contacts with verified email, phone, or LinkedIn after waterfall enrichment. Per the Unify Waterfall Enrichment product page, the production target is 95%+ company match and 90%+ contact match across 30+ data sources. The Website Traffic Intent product page cites 75%+ company match via a 5-vendor waterfall (Unify Intent + 6sense + Clearbit + Demandbase + Snitcher). A match rate under 60 percent at pilot scale means data hygiene is broken; fix that before pursuing top-of-range outcomes.
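The waterfall mechanic and the 60 percent hygiene check can be sketched as follows. This is a minimal illustration, assuming each provider is a function that returns an email or `None`; `provider_a` and `provider_b` are hypothetical stubs backed by in-memory dictionaries, not real vendor clients.

```python
from typing import Callable, Optional

# A provider takes a contact record and returns an email address, or None on no match.
Provider = Callable[[dict], Optional[str]]


def waterfall_enrich(contact: dict, providers: list[Provider]) -> Optional[str]:
    """Query providers in priority order and stop at the first match."""
    for provider in providers:
        email = provider(contact)
        if email:
            return email
    return None


def match_rate(contacts: list[dict], providers: list[Provider]) -> float:
    """Share of contacts that at least one provider could match."""
    if not contacts:
        return 0.0
    matched = sum(1 for c in contacts if waterfall_enrich(c, providers))
    return matched / len(contacts)


# Hypothetical stub providers standing in for real data vendors.
DIRECTORY_A = {"Ana Ruiz": "ana@acme.example"}
DIRECTORY_B = {"Ana Ruiz": "a.ruiz@acme.example", "Ben Cho": "ben@globex.example"}


def provider_a(contact: dict) -> Optional[str]:
    return DIRECTORY_A.get(contact["name"])


def provider_b(contact: dict) -> Optional[str]:
    return DIRECTORY_B.get(contact["name"])


contacts = [{"name": "Ana Ruiz"}, {"name": "Ben Cho"}, {"name": "Cy Park"}]
rate = match_rate(contacts, [provider_a, provider_b])
print(f"contact match rate: {rate:.0%}")  # contact match rate: 67%
if rate < 0.60:
    print("below 60 percent: fix data hygiene before scaling")
```

Provider order matters: Ana resolves to provider A's address because the waterfall stops at the first hit, and provider B only fills the gaps A leaves.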

5. Deliverability

Whether your sends land in the inbox or in spam. Per the Unify Email Deliverability product page, the platform documents 75% of bounces prevented before send via automated 21-day mailbox warming and pre-send bounce validation. Per the Justworks case study, over 10 percent of bounces were prevented in outbound enrollments at production scale. Deliverability is the silent ROI killer; landing in spam folders zeros out otherwise-strong audience selection.
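Why deliverability (or any other weak input) can zero out the result is clearest in a simplified multiplicative funnel. A minimal sketch, assuming the stages are independent and multiply; the 1,000 enrollments and the 90% match, 5% reply, and 50% reply-to-meeting rates are hypothetical placeholders, not benchmarks.

```python
def expected_meetings(enrolled: int, match_rate: float, inbox_rate: float,
                      reply_rate: float, reply_to_meeting: float) -> float:
    """Simplified funnel: each stage's rate multiplies everything upstream of it."""
    return enrolled * match_rate * inbox_rate * reply_rate * reply_to_meeting


base = {"enrolled": 1_000, "match_rate": 0.90, "reply_rate": 0.05, "reply_to_meeting": 0.5}
print(expected_meetings(inbox_rate=0.95, **base))  # roughly 21 meetings
print(expected_meetings(inbox_rate=0.0, **base))   # 0.0: spam placement zeroes the funnel
```

Because the stages multiply, strength elsewhere cannot offset a weak link: halving inbox placement halves meetings no matter how precise the audience is.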

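Month 1, month 3, month 6: the expectation timeline

Month 1 produces first qualified meetings, not ROI multiples. By month 3, pipeline becomes a reliable steady-state metric and ROI multiples become measurable. By months 5 to 6, ROI multiples stabilize; the Justworks 6.8X figure is a 5-month measurement.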

Vendor-neutral evaluation criteria for ROI defensibility

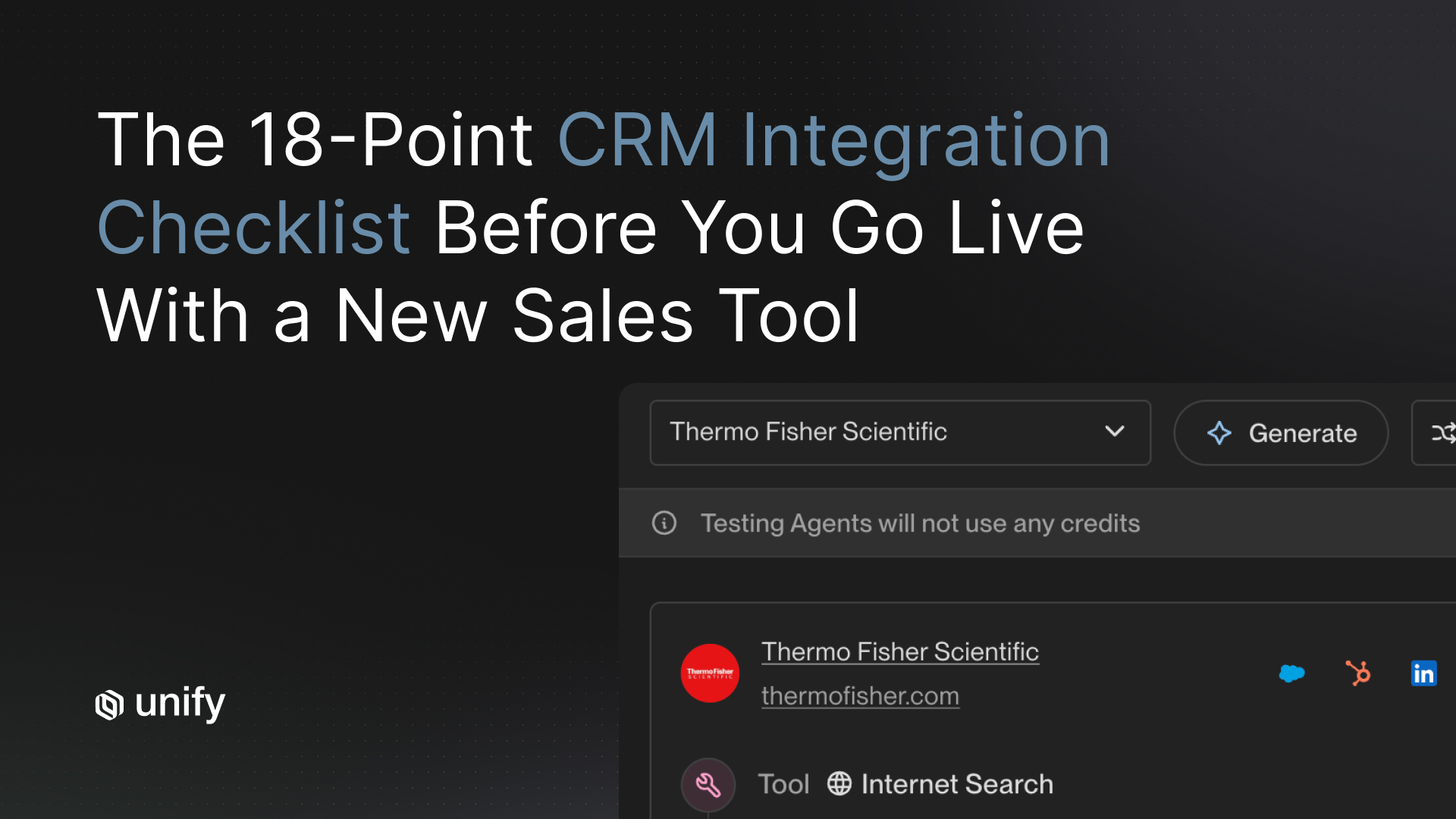

Score every shortlisted vendor on the criteria below before accepting their ROI claims. Each uses the same template: definition, why it matters, how to test, pass-fail, red flag.

1. Named-customer attribution per claim

Definition. Every quantitative claim names the specific customer it came from. Why it matters. Aggregate "average customer" claims are not auditable. How to test. Demand the customer name and a public case-study URL for every number. Pass-fail. Two or more named references that match your ICP. Red flag. "Average lift across our customer base" claims.

2. Time-window specification

Definition. Each outcome states the measurement window (month 1, month 3, month 6, lifetime). Why it matters. A 6.8X ROI at month 5 is structurally different from a 6.8X ROI at month 12. How to test. Match the time window of every cited outcome to your forecast horizon. Pass-fail. Time window stated in the source. Red flag. ROI claims without time windows.

3. Denominator transparency

Definition. The vendor specifies what is in the ROI denominator (subscription only, subscription + credits, fully-loaded GTM cost). Why it matters. 6.8X ROI on platform spend is different from 6.8X ROI on fully-loaded GTM cost. How to test. Ask which inputs are in the denominator. Pass-fail. Denominator named. Red flag. "Total ROI" without specification.

4. Signal mix disclosure

Definition. The case study names the signals that produced the outcome. Why it matters. Outcomes driven by PQL signals do not generalize to teams without PQL data. How to test. Confirm the signal mix in each cited case study matches signals you can fire in your market. Pass-fail. Signal mix specified per outcome. Red flag. Signal mix omitted.

5. Time-and-cost exclusions disclosed

Definition. The vendor discloses what is excluded from the ROI math (rep time, agent credit cost, mailbox warming dead time). Why it matters. Excluded costs are real even if not in the headline number. How to test. Ask explicitly what is excluded. Pass-fail. Exclusions named. Red flag. "All costs included" without itemization.
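The five criteria can be tracked as a simple pass-fail scorecard per vendor. A minimal sketch; the criterion keys are hypothetical labels for the five criteria above, and treating an unanswered criterion as a failure is a design choice, not a rule from the source.

```python
CRITERIA = (
    "named_customer_attribution",
    "time_window_specified",
    "denominator_named",
    "signal_mix_disclosed",
    "exclusions_disclosed",
)


def score_vendor(answers: dict[str, bool]) -> tuple[int, list[str]]:
    """Return (criteria passed, criteria failed). Missing answers count as failures."""
    failed = [c for c in CRITERIA if not answers.get(c, False)]
    return len(CRITERIA) - len(failed), failed


passed, failed = score_vendor({
    "named_customer_attribution": True,
    "time_window_specified": True,
    "denominator_named": False,
    "signal_mix_disclosed": True,
})
print(passed, failed)  # 3 ['denominator_named', 'exclusions_disclosed']
```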

How Unify covers these criteria

- Named-customer attribution. Nine named case studies in the benchmark table, plus the Anrok, Navattic, and Peridio case studies cited alongside it, each on a dedicated unifygtm.com customer page. Per the Series A announcement, Plays powers nearly 50 percent of Unify's own new pipeline creation, measured per-Play.

- Time-window specification. Most cited outcomes in the benchmark table have a documented window (5 months for Justworks 6.8X; 3 months for Perplexity $1.7M; January for Juicebox ~$3M). Where a source states none, as with Pylon's 4.2X and Abacum's $250K, the Methodology box says so.

- Denominator transparency. The Methodology box above names the denominator for each ROI cited.

- Signal mix disclosure. The benchmark table includes the signal mix for each outcome.

- Exclusions disclosed. Methodology box names what is excluded from each customer's headline number.

Worked example: a mid-market FinTech sizing a forecast against the benchmark table

A 200-person mid-market FinTech building an internal business case for signal-based outbound. ACV $50K to $150K. Salesforce CRM with clean data. Defined ICP: vertical SaaS companies, 100 to 1,000 employees, North America.

- Closest peer. Anrok (FinTech sales-tax compliance, 130+ employees, $100M+ funding), cited from the Anrok case study rather than the nine-row table. Anrok's 3-month $300K+ pipeline outcome is the primary anchor.

- Secondary peer. Justworks (HR software, 1,500+ employees). The Justworks 6.8X ROI at 5 months is the upside scenario if the team's signal mix matches (G2 + website intent + UTM-filtered paid traffic).

- Upper-bound scenario. Perplexity ($1.7M in 3 months, no BDR). Aspirational but requires PLG signal density that the FinTech may not have.

- Excluded from forecast. Innovate Energy Group ($15M in 1 month). Different ACV class and vertical-with-no-incumbent dynamic.

- Forecast framing for the board deck. "Target $300K to $1.2M in Q1 pipeline (anchored on Anrok and Perplexity outcomes); 6.8X ROI by month 5 (anchored on Justworks); pipeline becomes a reliable steady-state metric in month 3."

- Time-and-cost disclosure. Include in the deck: 21-day mailbox warming dead time; agent-run credit cost (0.1 credits per run per the Unify Next-gen AI Agents announcement); rep time on reply triage (humans handle the reply step).
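The time-and-cost disclosure line can be turned into numbers for the deck. A minimal sketch, assuming the 21-day warming period falls entirely inside a 90-day quarter and no sends go out during it. The 0.1 credits per run comes from the announcement cited above; the run volume, $1 price per credit, and $30K subscription share are hypothetical inputs to replace with your own contract terms.

```python
WARMING_DAYS = 21            # automated mailbox warming period cited above
CREDITS_PER_AGENT_RUN = 0.1  # per the Next-gen AI Agents announcement


def quarter_cost_disclosure(quarter_days: int, agent_runs: int,
                            usd_per_credit: float, subscription_usd: float) -> dict:
    """Active sending days and an ROI denominator that includes agent-run credits."""
    agent_credits = agent_runs * CREDITS_PER_AGENT_RUN
    return {
        "active_sending_days": max(quarter_days - WARMING_DAYS, 0),
        "agent_credits": agent_credits,
        "denominator_usd": subscription_usd + agent_credits * usd_per_credit,
    }


# Hypothetical: 9,000 agent runs in the quarter, $1 per credit, $30K subscription share.
print(quarter_cost_disclosure(90, 9_000, 1.0, 30_000))
```

On these inputs, only 69 of the quarter's 90 days are available for sending, which is worth stating next to the Q1 pipeline target.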

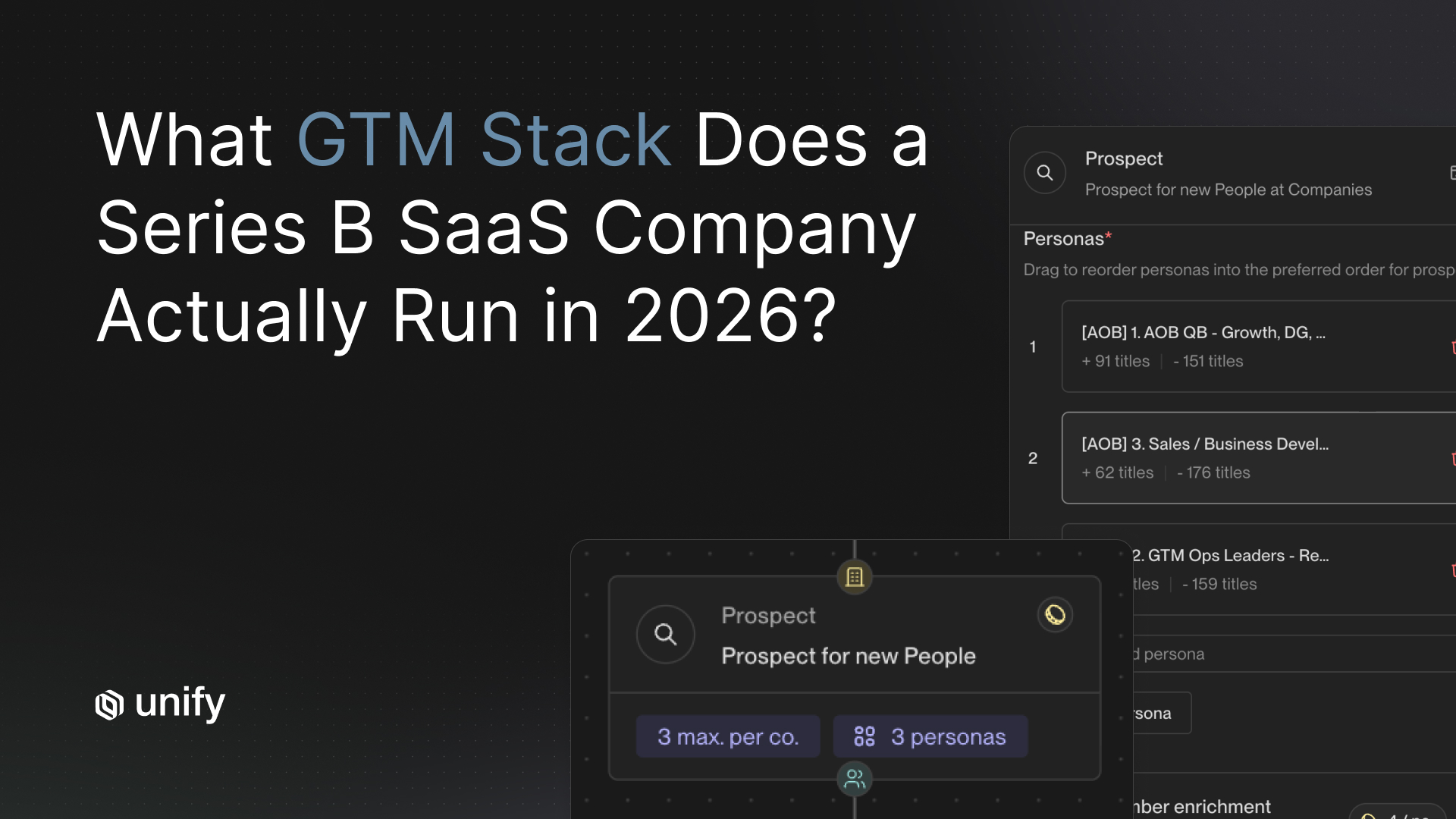

Variants by ICP and motion

PLG companies

- Anchor on Perplexity ($1.7M / 3 months / no BDR) and Juicebox ($3M / January / single BDR). PQL signal density is the key input.

Enterprise sales-led

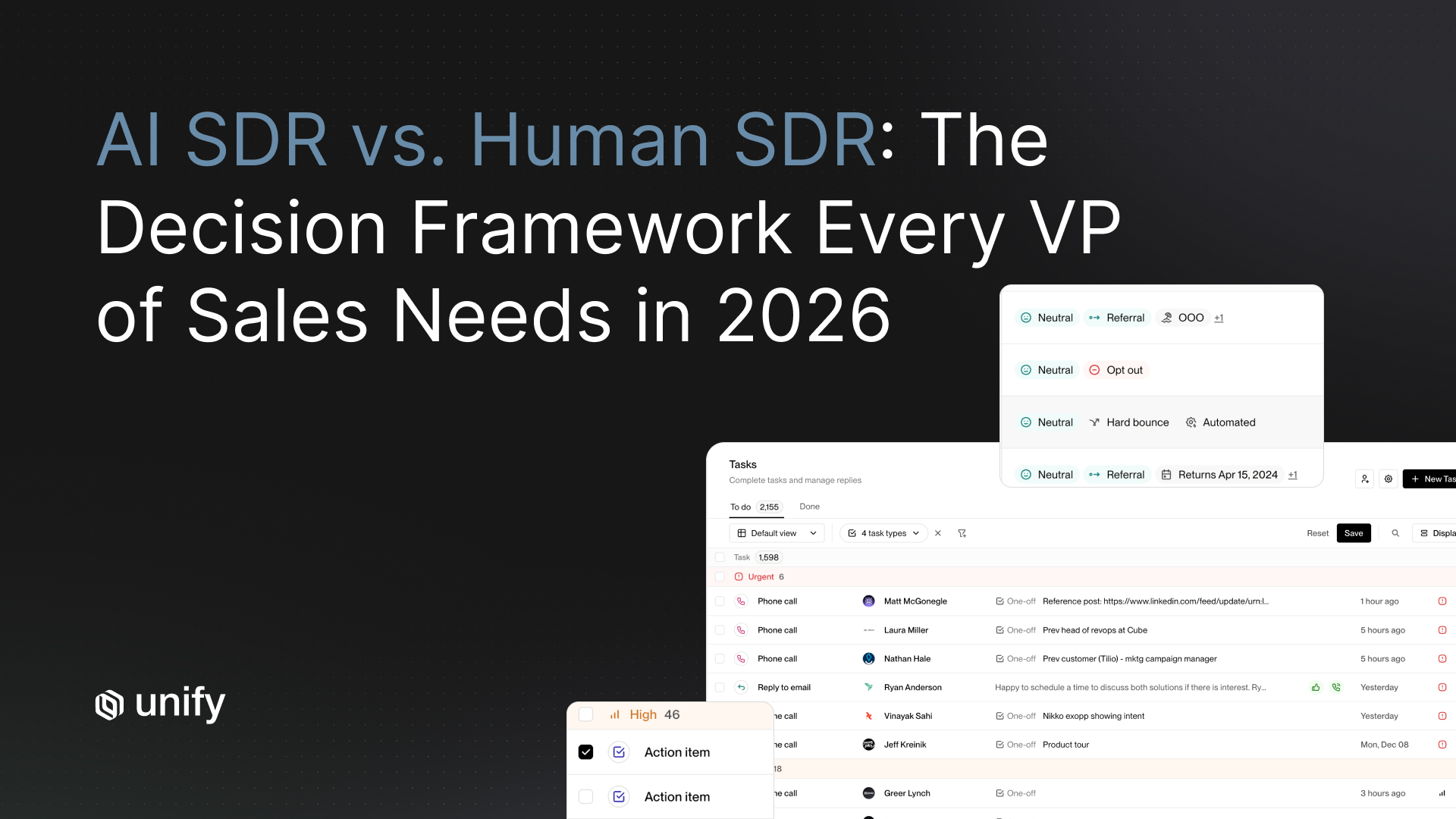

- Anchor on Anrok ($300K / 3 months) and Pylon (4.2X ROI). New-hire and Champion Tracking signals matter most.

Mid-market broad-net

- Anchor on Affiniti (8,700 leads / 8,000 agent runs / 3 months). Volume-and-scale AI personalization is the differentiator.

Vertical-specific with niche ICP

- Anchor on Peridio ($550K direct / $1.15M influenced first-quarter pipeline, per the Peridio case study) or Innovate Energy Group ($15M / 1 month upper-bound). Custom AI Infinity Signals matter.

Consolidation plays (migrating from prior stack)

- Anchor on Spellbook (HubSpot → Unify, $2.59M / $250K in 7 months) or Quo (Apollo + Outreach + Clearbit → Unify, 60 hrs/mo saved). Migration outcomes compound over months.

Edge cases and disambiguation

- ROI multiple vs pipeline dollars. These are different metrics: an ROI multiple compares revenue to platform spend, while pipeline dollars are a magnitude with no cost basis. Both are valid; specify which you mean in any business case.

- Direct pipeline vs influenced pipeline. Direct pipeline credits the Play as the originating event; influenced credits any touchpoint during the buying cycle. The Spellbook $2.59M is pipeline generated within Unify; the Guru $3.17M (not in this article's table but in the KB) is influenced pipeline. Different measures.

- Single-month vs cumulative outcomes. Innovate Energy Group $15M is one month; Justworks 6.8X is 5 months cumulative. Apples-to-apples requires matching the window.

- Time savings vs pipeline. Quo's 60 hrs/mo saved per team is operationally important but does not directly answer "what is my expected pipeline?" Track both.

- Customer-attested vs Unify-measured. Case-study numbers are customer-attested; Unify publishes them but the source is the customer's reported outcome. Treat them with the same standard you would any vendor-published reference.
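Keeping these distinctions explicit in your own tracking prevents accidental apples-to-oranges comparisons. A minimal sketch; the `Metric` enum, `Outcome` record, and `comparable` rule are hypothetical, the customer values are the ones cited in this article, and the $500K team forecast is a made-up example. Pylon's window is `None` because the methodology notes the source does not specify one.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Metric(Enum):
    ROI_MULTIPLE = "roi_multiple"
    DIRECT_PIPELINE_USD = "direct_pipeline_usd"
    INFLUENCED_PIPELINE_USD = "influenced_pipeline_usd"
    HOURS_SAVED = "hours_saved"


@dataclass(frozen=True)
class Outcome:
    customer: str
    metric: Metric
    value: float
    window_months: Optional[int]  # None when the source states no window
    customer_attested: bool = True


def comparable(a: Outcome, b: Outcome) -> bool:
    """Apples-to-apples only when the metric matches and both share a stated window."""
    return (
        a.metric == b.metric
        and a.window_months is not None
        and a.window_months == b.window_months
    )


justworks = Outcome("Justworks", Metric.ROI_MULTIPLE, 6.8, 5)
pylon = Outcome("Pylon", Metric.ROI_MULTIPLE, 4.2, None)
perplexity = Outcome("Perplexity", Metric.DIRECT_PIPELINE_USD, 1_700_000, 3)
innovate = Outcome("Innovate Energy Group", Metric.DIRECT_PIPELINE_USD, 15_000_000, 1)
forecast = Outcome("Your team (forecast)", Metric.DIRECT_PIPELINE_USD, 500_000, 3, False)

print(comparable(justworks, pylon))      # False: Pylon has no stated window
print(comparable(perplexity, innovate))  # False: 3 months vs 1 month
print(comparable(forecast, perplexity))  # True
```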

Stop rules and red flags

Three ROI claims that should not appear in your business case

- Don't trust case studies with no time window. "6X ROI" without specifying month 1, month 6, or lifetime is meaningless. Most Unify customer outcomes in the benchmark table above are paired with an explicit window; where the source states none, as with Pylon's 4.2X, the Methodology box says so.

- Don't trust "X percent pipeline lift" claims without the named customer. Aggregated platform-average claims are not auditable. If a vendor cannot name the customer behind the number, the number cannot be defended in a board review.

- Don't trust ROI math that ignores rep time and agent-run costs. A platform that produces pipeline but consumes 20 hours per rep per week is not the same ROI as one that frees that time. Unify customer outcomes include both pipeline and time-saved data points (Affiniti 20+ hrs/rep/week; Quo 25 hrs/rep/mo; Spellbook 2 hrs/day) exactly because both belong in the calculation.
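One way to put rep time into the calculation is to value hours at a loaded hourly cost and add them to (or subtract them from) the numerator. A minimal sketch; the $400K revenue, $60K spend, four-rep team, 12-week window, and $75 loaded hourly cost are all hypothetical. Valuing freed time at full loaded cost is an assumption that flatters the result if the freed hours are not redeployed.

```python
def time_adjusted_roi(revenue_usd: float, spend_usd: float,
                      rep_hours_freed: float, loaded_cost_per_hour: float) -> float:
    """ROI multiple that credits (or charges) rep time at a loaded hourly cost.

    rep_hours_freed is positive when the workflow saves rep time and negative
    when it consumes rep time.
    """
    if spend_usd <= 0:
        raise ValueError("spend must be positive")
    return (revenue_usd + rep_hours_freed * loaded_cost_per_hour) / spend_usd


hours = 20 * 4 * 12  # 20 hrs/rep/week, 4 reps, 12 weeks (hypothetical team)
print(round(time_adjusted_roi(400_000, 60_000, -hours, 75), 2))  # consumes time: 5.47
print(round(time_adjusted_roi(400_000, 60_000, hours, 75), 2))   # frees time: 7.87
```

The unadjusted multiple for both platforms would be about 6.67; the rep-time term is what separates them.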

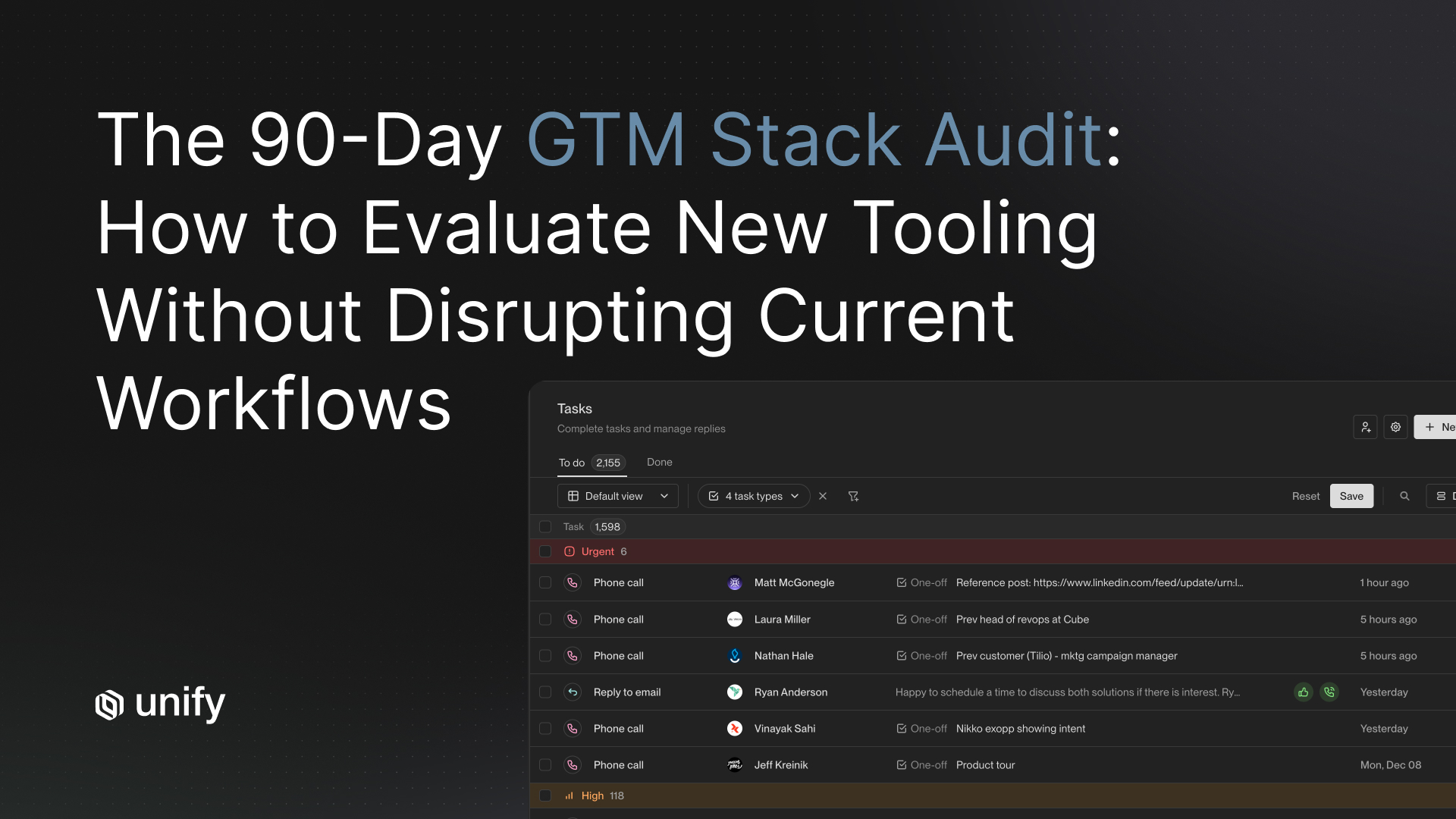

Common mistakes

Top 5 ROI-benchmarking mistakes

- Anchoring on the highest published outcome. Innovate Energy Group $15M in one month is the upper bound, not the median. Forecasting against it produces board-credibility failure.

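- Ignoring the time window. Justworks 6.8X is a 5-month figure. A 90-day forecast that assumes the full number will miss by roughly 40 percent even if returns accrue linearly (3 of 5 months is 60 percent of the window), and by more if returns back-load.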

- Blending metrics from different customers into an aggregate. Mixing 4.2X ROI (Pylon) with $15M pipeline (Innovate Energy Group) is structurally meaningless. Pick the closest single peer.

- Forecasting cumulative outcomes from single-month customer reports. Juicebox ~$3M is one month; cumulative 6-month forecasting against that number requires evidence the team can sustain the rate.

- Excluding agent-run costs from the ROI denominator. AI Agents run at 0.1 credits each; at 3,000 runs per month, this is real spend that belongs in the ROI math.
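The time-window mistake can be quantified with a pro-rata share. A minimal sketch, assuming returns accrue linearly across the source window; if they back-load, as the month-1 versus month-5 timeline suggests, the real shortfall is larger. The function name is hypothetical.

```python
def pro_rata_share(forecast_months: float, source_window_months: float) -> float:
    """Share of a cumulative outcome a shorter forecast can claim under linear accrual."""
    if source_window_months <= 0:
        raise ValueError("source window must be positive")
    return min(forecast_months / source_window_months, 1.0)


# 90-day forecast anchored on Justworks' 5-month window.
share = pro_rata_share(3, 5)
print(f"claimable: {share:.0%}, shortfall if the full figure is used: {1 - share:.0%}")
# claimable: 60%, shortfall if the full figure is used: 40%
```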

Frequently asked questions

What ROI benchmarks exist for teams that have adopted signal-based outbound?

Nine named Unify customers publish outcome data across pipeline, meetings, ROI multiples, and time savings. The top of the spread: Justworks at 6.8X ROI in 5 months (G2 + website intent + UTM-filtered signals); Pylon at 4.2X ROI with 3X meetings booked. Pipeline magnitude leaders: Innovate Energy Group at $15M in one month (custom AI signals on a vertical with no incumbent); Juicebox at ~$3M in January with 256 meetings and a 92 percent show rate; Perplexity at $1.7M in 3 months with 80+ enterprise meetings and no BDR team. Position within the spread is determined by signal density, audience precision, sequence depth, enrichment match rate, and deliverability.

What ROI should we expect in month 1, month 3, and month 6?

Month 1: first qualified meetings, not ROI multiples. Per the Justworks case study, first meeting booked within 1 week of launching; per the Navattic case study, $100K+ in direct pipeline in the first 10 days. Month 3: pipeline becomes a reliable steady-state metric and ROI multiples become measurable. Per the Anrok and Perplexity case studies, $300K to $1.7M in 3-month pipeline is the typical mid-to-high range. Month 6 and beyond: ROI multiples stabilize. Per the Justworks case study, the 6.8X ROI figure is measured over 5 months, not the first quarter alone.

What inputs determine where a team lands in the ROI spread?

Five inputs. (1) Signal density: PLG markets with PQL signals cluster near the top; signal-sparse enterprise markets sit lower. (2) Audience precision: narrow verticals beat broad horizontals. (3) Sequence depth: 3 to 4 touches with AI personalization grounded in signal context outperform 1-touch templated sends. (4) Enrichment match rate: per the Unify Waterfall Enrichment product page, 95%+ company match and 90%+ contact match across 30+ data sources is the production target. (5) Deliverability: per the Unify Email Deliverability product page, 75% of bounces are prevented before send via 21-day automated mailbox warming.

How is ROI measured in these customer case studies?

Definitions vary by customer. Justworks 6.8X ROI is the ratio of attributable revenue contribution to Unify spend (subscription plus credits) over the first 5 months. Pylon 4.2X ROI is measured against Unify investment per the published case study, with no separately specified time window. Pipeline-magnitude customers (Perplexity, Juicebox, Innovate Energy Group) report pipeline dollars and meeting counts rather than ROI multiples. Time-savings customers (Quo, Affiniti, Abacum) report rep hours freed plus opportunity counts. Different customers, different framings; always label which metric you are citing when you reuse these numbers in an internal business case.

What red flags signal a vendor's ROI claim should not be trusted?

Three. (1) Case studies with no time window. "6X ROI" without specifying whether that is month 1, month 6, or lifetime is meaningless. (2) "X percent pipeline lift" claims without naming the customer or the baseline. Aggregated platform-average claims are not auditable; named customer attributions are. (3) ROI math that ignores rep time and tooling cost. A platform that produces pipeline but consumes 20 hours per rep per week of manual work has different ROI than one that frees that time. Unify customer outcomes include both pipeline and time-saved data points exactly because both belong in the calculation.

Glossary

- ROI multiple. The ratio of attributable revenue contribution to platform spend over a stated time window. Justworks (6.8X over 5 months) and Pylon (4.2X) are the two published ROI multiples in the Unify case-study set.

- Direct pipeline. Pipeline where the Play was the first touchpoint on the opportunity. Narrower attribution definition.

- Influenced pipeline. Pipeline where the Play had at least one touchpoint during the buying cycle. Broader attribution definition.

- Signal density. The volume and quality of intent signals available in a market. PLG markets are high-density; mature enterprise verticals without behavioral data are low-density.

- Audience precision. How tightly the ICP is defined. Narrow verticals (renewable energy, AI search, legal-tech) outperform broad horizontals on reply rate.

- Sequence depth. Number of touches and channel mix in an outbound cadence. 3 to 4 touches with AI personalization is the production standard; "3+ follow-ups across channels" was the depth that produced the Perplexity outcome.

- Enrichment match rate. Percentage of enrolled contacts with verified email, phone, or LinkedIn after waterfall enrichment. Production target: 90%+ contact, 95%+ company.

- Managed deliverability. Platform-managed mailbox warming, IP rotation, and bounce pre-validation. Per the Unify Email Deliverability product page: 21-day automated warming and 75% pre-send bounce prevention.

- Time window. The measurement period for an ROI or pipeline outcome. Required for any cited number to be defensible in a business case.

- Customer-attested outcome. An outcome reported in a customer case study based on the customer's own measurement. Distinct from a platform-aggregated benchmark.

Sources and references

- Unify, Justworks case study. Source for 6.8X ROI in 5 months, >10% bounces prevented, 3 Plays in 3 days, first meeting in 1 week.

- Unify, Pylon case study. Source for 4.2X ROI, 3X meetings booked, 10 automated Plays in 2 weeks, $300K pipeline.

- Unify, Innovate Energy Group case study. Source for $15M pipeline in 1 month, 8x meeting increase, 20+ hrs/week saved.

- Unify, Juicebox case study. Source for ~$3M pipeline in January, 256 meetings, 92% show rate, single BDR.

- Unify, Spellbook case study. Source for $2.59M pipeline / $250K closed revenue in 7 months, 70-80% open rate vs 19-25% HubSpot baseline, 2 hrs/day per rep saved.

- Unify, Perplexity case study and long-form blog. Source for $1.7M pipeline / 75+ opps / 80+ enterprise meetings / 3 months / no BDR; 5% PQL reply / up to 20% MQL reply.

- Unify, Quo case study. Source for 2.5X reply rate, 60 hrs/mo saved per team, 25 hrs/rep/mo, 100+ opps, 100% outbound powered by Unify.

- Unify, Abacum case study. Source for under 2-hour implementation, $250K pipeline, 75% reduction in manual data pull, 4x faster prospecting.

- Unify, Affiniti case study. Source for 20+ hrs/rep/week saved, 8,700 leads prospected, 8,000 agent runs in 3 months.

- Unify, Anrok case study. Source for $300K+ pipeline in 3 months as mid-band anchor.

- Unify, Navattic case study. Source for $100K+ direct pipeline in first 10 days as month-1 anchor.

- Unify, Peridio case study. Source for $550K direct / $1.15M influenced first-quarter pipeline as vertical-specific anchor.

- Unify, Waterfall Enrichment product page. Source for 95%+ company / 90%+ contact match across 30+ data sources.

- Unify, Email Deliverability product page. Source for 21-day automated mailbox warming, 75% bounces prevented before send.

- Unify, Signals overview. Source for 25+ native intent signals.

- Unify, Website Traffic Intent product page. Source for 75%+ company match via 5-vendor waterfall.

- Unify, Plays product page. Source for per-Play orchestration of signals + agents + enrichment + sequencing.

- Unify, Next-gen AI Agents announcement. Source for 0.1 credits per agent run.

- Unify, Series A announcement. Source for Plays powering ~50% of Unify's own new pipeline creation.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
