TL;DR: A waterfall enrichment workflow queries B2B data providers in a defined priority sequence, only moving to the next source when the previous one returns a blank or low-confidence result. Setting one up involves five decisions: which providers to put in your stack and in what order, what cascade logic and confidence thresholds trigger the next call, how to handle conflicting data between sources, how to control costs by skipping unnecessary calls, and how to monitor match rates and cost-per-enriched-record over time. Teams that get all five right consistently see match rates above 85% and reduce their cost per enriched record by 30% to 50% compared to parallel multi-source enrichment. Unify operationalizes this entire workflow natively, without requiring custom engineering.

What Does a Waterfall Enrichment Workflow Actually Include?

A complete waterfall enrichment workflow has five functional layers: a provider stack, cascade trigger logic, conflict resolution rules, cost controls, and a monitoring system. Most teams that struggle with enrichment have the first layer roughly configured but have built little or nothing for the remaining four. That gap is where match rates stall, data quality degrades, and provider costs spiral.

A waterfall enrichment workflow is not just a vendor contract or a series of API integrations. It is an architecture where each layer depends on the ones before it. Cascade logic only works well if the provider stack is ordered correctly. Conflict resolution only produces reliable outputs if confidence thresholds are calibrated. Cost controls only fire when field-state checks are wired into the pre-call logic. This guide covers each layer in the order you need to build them.

If you already understand the fundamentals of waterfall enrichment and why it outperforms single-source enrichment, this article picks up where the concept ends and starts with implementation. If you want to understand the foundational "why" first, read What Is Waterfall Enrichment? Why It Beats Single-Source B2B Data before continuing here.

How Do You Choose Your Provider Stack and Priority Order?

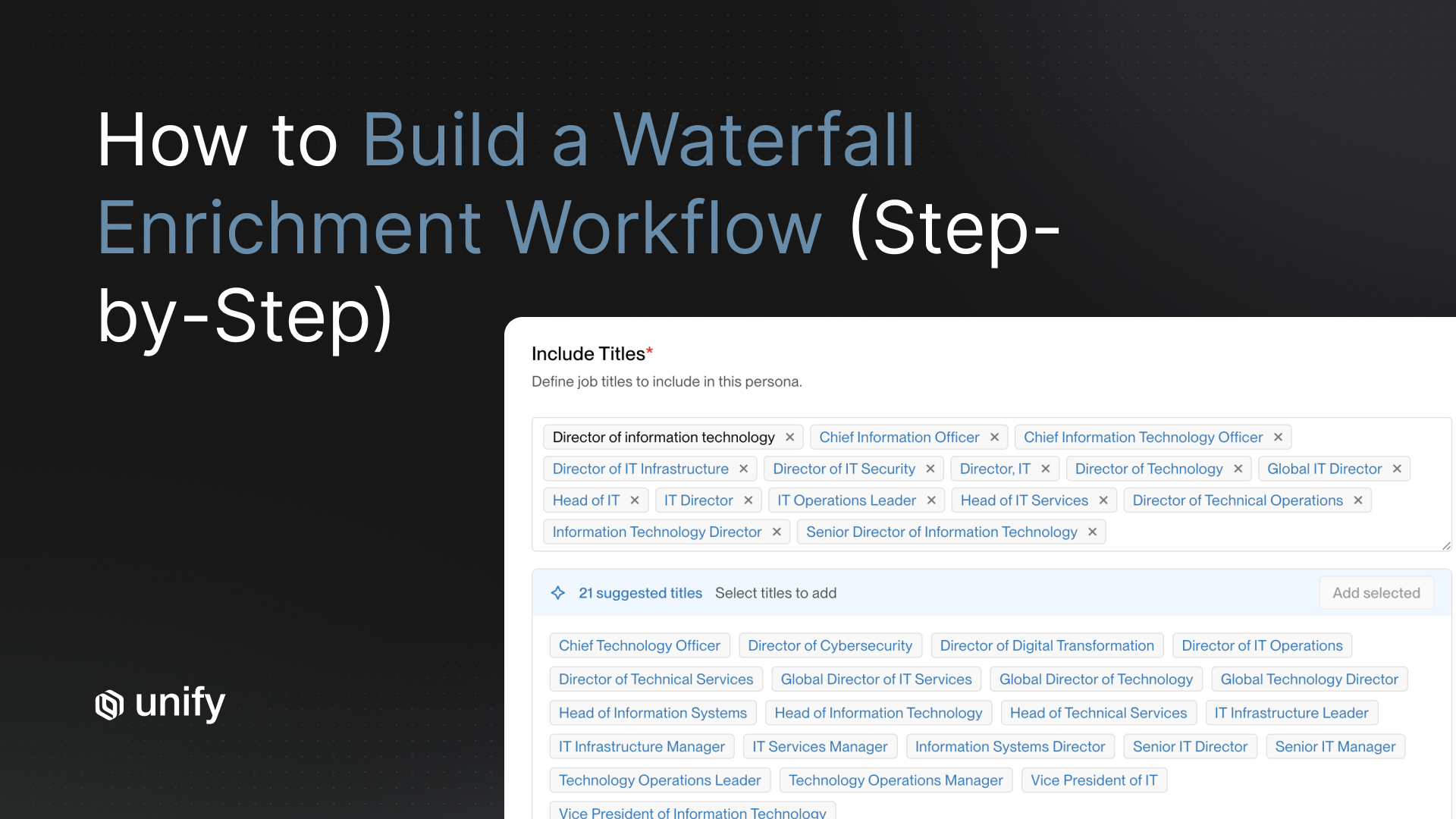

The right provider stack for a waterfall enrichment workflow depends on the data types you need, your target market geography, and the size of companies you are selling into. There is no single universal stack. The mistake most teams make is choosing providers based on brand recognition or pricing alone, rather than coverage benchmarks for their specific Ideal Customer Profile (ICP).

Match your provider choices to data type, not to brand

Different providers have meaningfully different strengths by data type. Work email addresses, direct-dial phone numbers, firmographic data, technographic data, and intent signals are not equally covered by any single vendor. A provider strong on North American enterprise emails may have weak direct-dial coverage and almost no international contact data. Your stack should reflect these differences rather than treating providers as interchangeable.

As a starting framework, B2B data providers can be evaluated across five data types. The table below maps common provider strengths to data type categories based on independent coverage analysis:

Run a coverage audit before finalizing your stack

The fastest way to validate a provider stack is to run a sample of 500 to 1,000 known target accounts through each vendor's API and measure match rate, field completeness, and email deliverability. Most enterprise providers offer trial API access for exactly this purpose. Do not skip this step. A provider that matches 80% of your exact ICP may only match 50% of another team's ICP with different firmographic characteristics.
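
As a rough sketch of how the audit results might be scored, assume each provider's sample responses have been collected into a list with one entry per record. The field names and the `audit_provider` helper are hypothetical and do not correspond to any vendor's API. Email deliverability is left out because it has to be measured separately from real send outcomes.

```python
from typing import Optional

REQUIRED_FIELDS = ["email", "phone", "headcount"]  # assumed fields of interest


def audit_provider(responses: list[Optional[dict]]) -> dict:
    """Summarize match rate and field completeness for one provider's sample.

    `responses` holds one entry per sampled record: a dict of returned
    fields, or None (or an empty dict) when the provider found no match.
    """
    total = len(responses)
    matched = [r for r in responses if r]
    match_rate = len(matched) / total if total else 0.0

    completeness = {}
    for field in REQUIRED_FIELDS:
        filled = sum(1 for r in matched if r.get(field))
        completeness[field] = filled / len(matched) if matched else 0.0

    return {"match_rate": match_rate, "completeness": completeness}


sample = [
    {"email": "a@example.com", "phone": "+1-555-0100", "headcount": 120},
    {"email": "b@example.com", "phone": None, "headcount": 45},
    None,  # no match for this record
    {"email": None, "phone": None, "headcount": 300},
]
report = audit_provider(sample)
```

Running the same function over each vendor's responses for the same sample gives you comparable numbers to rank the stack with.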

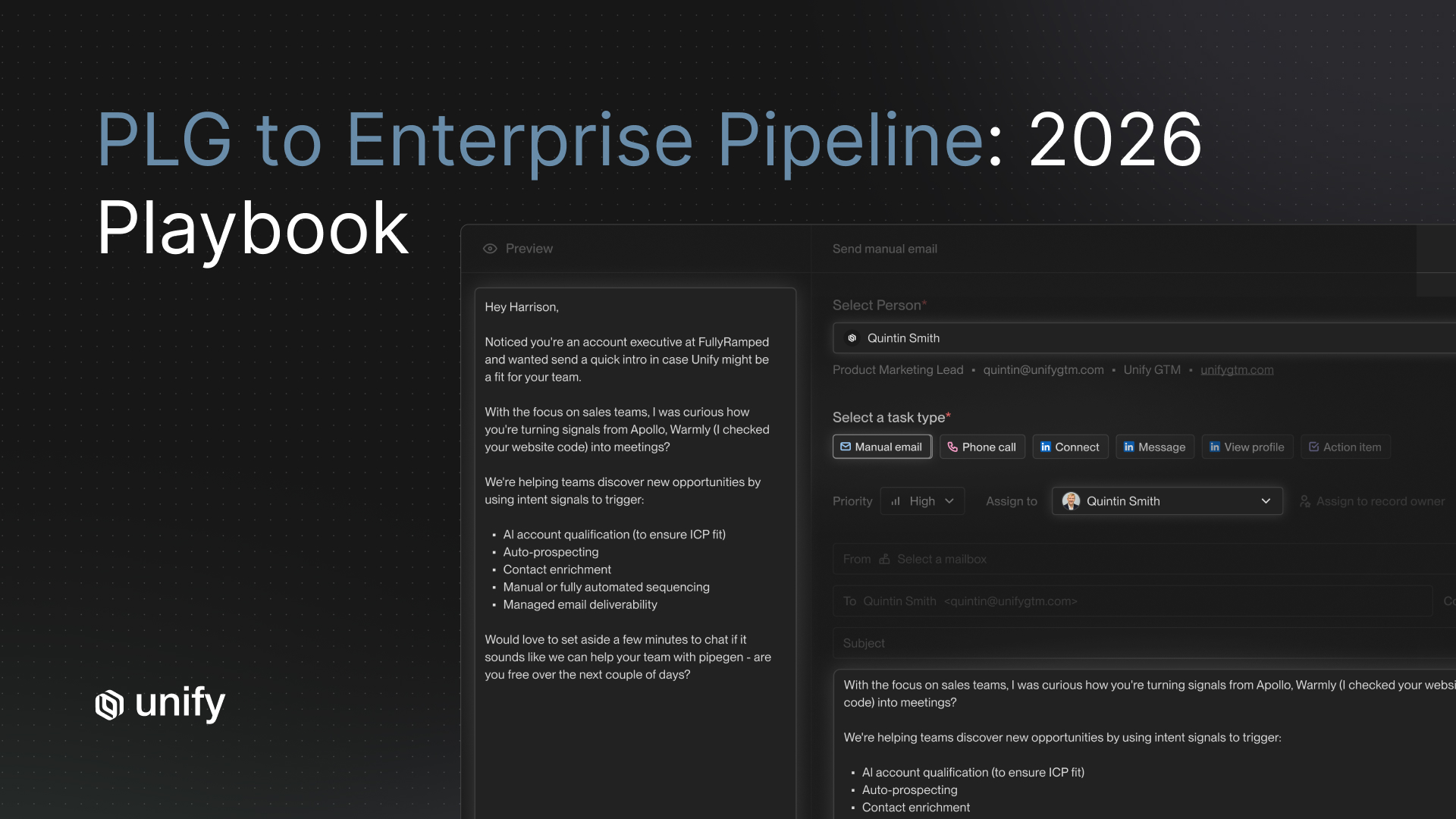

Within Unify's platform, this coverage benchmarking happens automatically. When you configure your waterfall, Unify runs sample queries against each provider in your stack and surfaces match rate estimates for your specific ICP before you commit to a full enrichment run. This typically saves teams two to three weeks of manual API testing and prevents expensive stack choices based on vendor-supplied statistics that may not reflect actual coverage for your target accounts.

Put your most complete and cost-effective provider first

The first provider in your waterfall handles the highest volume of queries, so it should be the one with the best match rate for your ICP at the lowest per-record cost. High-cost, high-precision providers (those with premium phone verification or GDPR-compliant European coverage) belong further down the cascade, called only for records the cheaper primary source could not cover. This single ordering decision drives the majority of cost savings in a waterfall enrichment architecture.
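
One way to turn this ordering rule into something repeatable is to rank providers by measured ICP match rate per dollar. The sketch below assumes you already have match rates from your own coverage audit. The provider names and numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    icp_match_rate: float   # measured on your own sample, 0.0 to 1.0
    cost_per_record: float  # USD per call, assumed to be greater than zero


def order_stack(providers: list[Provider]) -> list[Provider]:
    """Sort providers by match rate per dollar, best value first."""
    return sorted(
        providers,
        key=lambda p: p.icp_match_rate / p.cost_per_record,
        reverse=True,
    )


stack = order_stack([
    Provider("premium_mobile", icp_match_rate=0.40, cost_per_record=0.30),
    Provider("broad_primary", icp_match_rate=0.65, cost_per_record=0.05),
    Provider("eu_specialist", icp_match_rate=0.25, cost_per_record=0.15),
])
order = [p.name for p in stack]
```

Here the cheap, broad provider lands first and the premium source lands last, which matches the ordering described above. A single ratio is a starting point; you may still want to pin a specialist provider to a specific position by hand.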

How Do You Set Up Cascade Logic and Confidence Thresholds?

Cascade logic determines when your workflow stops querying one provider and moves to the next one. The most common mistake teams make is treating cascade triggers as binary: either the field is empty (move on) or it has a value (stop). Binary cascade logic leaves significant quality on the table. A provider might return an email address that is syntactically valid but flagged as likely invalid by its own confidence score, or a phone number formatted correctly but not verified against a live directory. Confidence thresholds fix this.

What is a confidence threshold and why does it matter?

A confidence threshold is a minimum quality score a returned value must meet before the waterfall stops querying for that field on that record. Instead of asking "did the provider return a value?", your cascade logic asks "did the provider return a value with confidence above X%?" If not, the cascade continues to the next provider even though a value was technically returned. This distinction is the difference between a match rate metric and a deliverability metric, and most teams only measure the former.
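
A minimal sketch of that stop condition for a single field might look like the following. It assumes each provider lookup returns either nothing or a value with a confidence score between 0 and 1. The `cascade_field` function and the two stub providers are hypothetical stand-ins for real API clients.

```python
from typing import Callable, Optional

# Assumed provider result shape: (value, confidence from 0.0 to 1.0), or None for a blank.
Result = Optional[tuple[str, float]]
Lookup = Callable[[dict, str], Result]


def cascade_field(record: dict, field: str,
                  providers: list[tuple[str, Lookup]],
                  threshold: float) -> Optional[dict]:
    """Query providers in priority order and stop at the first value
    whose confidence meets the threshold."""
    for name, lookup in providers:
        result = lookup(record, field)
        if result is None:
            continue  # blank result: move to the next provider
        value, confidence = result
        if confidence >= threshold:
            return {"value": value, "provider": name, "confidence": confidence}
        # A value came back but is below threshold, so the cascade continues.
    return None


def provider_a(record: dict, field: str) -> Result:
    return ("jane@acme.example", 0.60)  # returned, but low confidence


def provider_b(record: dict, field: str) -> Result:
    return ("jane.doe@acme.example", 0.93)


hit = cascade_field({"name": "Jane Doe"}, "email",
                    [("A", provider_a), ("B", provider_b)], threshold=0.85)
```

With binary logic, Provider A's value would have been accepted. With the threshold in place, the cascade skips it and accepts Provider B's value instead.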

For email addresses, a reasonable confidence threshold for stopping the cascade is 85% or above on the provider's internal validation score. Below that, the email is likely to bounce. For direct-dial phone numbers, the threshold depends on whether the provider performs live verification (a mobile number confirmed active in the last 90 days is a harder stop than an unverified office switchboard number). For firmographic fields like company headcount and revenue, threshold logic is simpler: accept the value if the provider's data source is less than 12 months old.
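
These per-field rules can be written as small acceptance checks. The sketch below is illustrative only: the function names are hypothetical, and treating "12 months" as 365 days is an assumption.

```python
from datetime import date, timedelta


def accept_email(validation_score: float) -> bool:
    """Stop the cascade when the provider's validation score is 85% or above."""
    return validation_score >= 0.85


def accept_phone(live_verified: bool) -> bool:
    """Stop the cascade only for numbers the provider has live-verified."""
    return live_verified


def accept_firmographic(source_date: date, today: date) -> bool:
    """Accept headcount or revenue when the source is under 12 months old
    (approximated here as 365 days)."""
    return (today - source_date) < timedelta(days=365)


today = date(2025, 6, 1)
checks = {
    "email": accept_email(0.91),
    "phone": accept_phone(False),
    "headcount": accept_firmographic(date(2024, 9, 1), today),
}
```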

Setting thresholds by data type

Confidence thresholds are not one-size-fits-all. The right threshold for a given field depends on the downstream cost of a bad value. A bad email address results in a bounce and potential domain reputation damage. A bad phone number wastes a sales rep's time on one bad dial. The financial and operational consequences differ substantially, so your thresholds should reflect those costs.

A practical starting point for threshold calibration: run your first enrichment batch without strict confidence thresholds, collect deliverability data on the outputs (bounce rates, connection rates), then work backward to identify the confidence score range above which delivered data was accurate and below which it was not. After the first batch, set your thresholds at the lower bound of the reliable range. Adjust quarterly as provider quality shifts.
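
The "work backward" step can be sketched as a bucketing exercise over first-batch outcomes. The version below assumes confidence scores are available as whole percentage points and that each enriched email has a known delivered or bounced outcome. The 5-point bucket width is an arbitrary assumption, and the 96% target is borrowed from the deliverability benchmark used elsewhere in this guide.

```python
from collections import defaultdict
from typing import Optional


def calibrate_threshold(outcomes: list[tuple[int, bool]],
                        target_rate: float = 0.96,
                        bucket_width: int = 5) -> Optional[int]:
    """Return the lower bound (in percentage points) of the reliable range.

    `outcomes` holds (confidence_pct, delivered) pairs. Buckets are scanned
    from the highest confidence downward, and the scan stops at the first
    bucket whose delivery rate falls below `target_rate`.
    """
    buckets: dict[int, list[bool]] = defaultdict(list)
    for confidence_pct, delivered in outcomes:
        lower_bound = (confidence_pct // bucket_width) * bucket_width
        buckets[lower_bound].append(delivered)

    threshold = None
    for lower_bound in sorted(buckets, reverse=True):
        results = buckets[lower_bound]
        if sum(results) / len(results) < target_rate:
            break
        threshold = lower_bound
    return threshold


first_batch = [
    (95, True), (92, True), (88, True), (86, True),
    (82, False), (81, True), (70, False),
]
suggested = calibrate_threshold(first_batch)
```

On a real batch you would want enough records per bucket for the delivery rates to be meaningful; a handful of outcomes per bucket, as in this toy input, is far too few.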

How Do You Handle Conflicting Data Between Providers?

Data conflicts in a waterfall enrichment workflow occur when two providers return different values for the same field on the same record. In a well-designed field-level waterfall, conflicts are minimized by design because each field stops at the first provider that meets the confidence threshold. But conflicts still arise during initial stack evaluation, during re-enrichment of existing records, and when you add a new provider to an existing stack mid-cycle.

Recency wins as the default conflict resolution rule

When two providers return different values for the same field and you cannot determine which is more reliable from confidence scores alone, recency is the most defensible tiebreaker. According to Salesforce research, B2B contact data decays at roughly 22% per year as people change jobs, get promoted, and update contact details. The provider whose data was sourced or last verified more recently is statistically more likely to be correct. Most enterprise providers include a data_sourced_at or last_verified timestamp in their API response. Build your conflict resolution logic to surface and compare these timestamps when confidence scores are tied.

Source reliability scoring for persistent conflicts

Recency handles most conflicts, but some providers are structurally more accurate for specific fields regardless of when the data was sourced. Direct-dial phone data from a provider that performs live mobile verification is more reliable than a number scraped from a web directory, even if the scraped number was updated more recently. Source reliability scoring lets you encode this domain knowledge into your conflict resolution logic.

A simple source reliability scoring system assigns each provider a field-level reliability score from 1 to 10, based on your observed deliverability and accuracy data from previous enrichment runs. When resolving a conflict, your system multiplies the provider's reliability score by a recency multiplier (sourced within 30 days = 1.5x, 30 to 90 days = 1.25x, 90 to 180 days = 1.0x, older than 180 days = 0.75x). The provider with the higher composite score wins. This two-factor model catches the cases where a highly reliable provider has slightly older data than a lower-reliability provider but is still the correct choice.
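
The two-factor model is small enough to sketch directly. The multipliers below come from the description above, but the handling of the exact boundary days (30, 90, 180) is an assumption, as are the candidate dict shape and the provider names.

```python
from datetime import date


def recency_multiplier(age_days: int) -> float:
    """Map data age to a multiplier. Boundary handling is an assumption."""
    if age_days < 30:
        return 1.5
    if age_days < 90:
        return 1.25
    if age_days <= 180:
        return 1.0
    return 0.75


def resolve_conflict(candidates: list[dict], today: date) -> dict:
    """Pick the candidate with the highest reliability x recency score.

    Each candidate is assumed to look like:
    {"provider": str, "value": str, "reliability": 1-10, "sourced_at": date}
    """
    def composite(candidate: dict) -> float:
        age_days = (today - candidate["sourced_at"]).days
        return candidate["reliability"] * recency_multiplier(age_days)

    return max(candidates, key=composite)


winner = resolve_conflict(
    [
        {"provider": "verified_mobile", "value": "+1-555-0100",
         "reliability": 9, "sourced_at": date(2025, 2, 1)},   # 120 days old
        {"provider": "web_directory", "value": "+1-555-0199",
         "reliability": 5, "sourced_at": date(2025, 5, 20)},  # 12 days old
    ],
    today=date(2025, 6, 1),
)
```

In this example the verified provider scores 9 x 1.0 = 9.0 and the fresher scraped source scores 5 x 1.5 = 7.5, so the older but more reliable value wins.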

Unify manages this conflict resolution layer automatically, maintaining a live accuracy model for each provider in your stack based on observed email delivery outcomes, call connection rates, and customer-reported data corrections. The reliability scores update continuously rather than requiring manual quarterly recalibration.

How Do You Optimize Cost in a Waterfall Enrichment Workflow?

Cost optimization in waterfall enrichment comes down to one principle: never pay a provider to return data you already have. This sounds obvious, but most teams violate it constantly because their enrichment workflow does not check existing field values before issuing API calls. Every unnecessary call is pure waste, and at scale, unnecessary calls add up fast.

Implement pre-call field checks

Before issuing any provider API call for a record, your workflow should check the current state of every field in that record in your CRM or data warehouse. If a field already has a value that was sourced within your acceptable staleness window (often 90 to 180 days, depending on the field type), skip the provider call for that field on that record entirely. This pre-call field check alone reduces unnecessary provider calls by 20% to 35% on typical re-enrichment runs, based on Unify platform data across customer enrichment workflows.
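
A pre-call check of this kind could be sketched as follows. It assumes each stored field carries its value together with a `sourced_at` date. The staleness windows per field are placeholder values inside the 90 to 180 day range mentioned above, not recommendations.

```python
from datetime import date, timedelta

# Assumed staleness windows per field; tune these to your own data.
STALENESS_WINDOW = {
    "email": timedelta(days=90),
    "phone": timedelta(days=90),
    "headcount": timedelta(days=180),
}


def fields_needing_enrichment(record: dict, today: date) -> list[str]:
    """Return only the fields that are missing or older than their window.

    Each stored field is assumed to look like {"value": ..., "sourced_at": date}.
    """
    needed = []
    for field, window in STALENESS_WINDOW.items():
        entry = record.get(field)
        if not entry or not entry.get("value"):
            needed.append(field)  # missing or blank
        elif today - entry["sourced_at"] > window:
            needed.append(field)  # present but stale
    return needed


crm_record = {
    "email": {"value": "jane.doe@acme.example", "sourced_at": date(2025, 5, 2)},
    "headcount": {"value": 120, "sourced_at": date(2024, 11, 1)},
}
to_enrich = fields_needing_enrichment(crm_record, today=date(2025, 6, 1))
```

Only the fields in `to_enrich` are passed to the cascade, so the fresh email in this record never triggers a provider call.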

Route records to providers based on known coverage gaps

If you know from historical data that Provider A has essentially zero coverage for companies with fewer than 50 employees, do not query Provider A for those records. Route small-company records directly to Provider B, which has better SMB coverage. This coverage-aware routing eliminates a full provider tier for a subset of your records, cutting both API costs and latency.
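
Outside of a platform, the same idea can be approximated with a per-provider coverage rule evaluated before the cascade starts. The sketch below uses a hypothetical `min_headcount` rule and assumes records with unknown headcount should still go through the full stack.

```python
def route_providers(record: dict, stack: list[dict]) -> list[str]:
    """Return the provider names worth querying for this record, in stack order.

    A provider is skipped when the record's headcount is known and falls
    below the provider's `min_headcount` coverage floor.
    """
    headcount = record.get("headcount")
    routed = []
    for provider in stack:
        min_size = provider.get("min_headcount", 0)
        if headcount is not None and headcount < min_size:
            continue  # known coverage gap: skip this tier entirely
        routed.append(provider["name"])
    return routed


stack = [
    {"name": "provider_a", "min_headcount": 50},  # weak coverage under 50 employees
    {"name": "provider_b"},                       # no known gap
]
small_company = route_providers({"headcount": 20}, stack)
large_company = route_providers({"headcount": 500}, stack)
```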

In Unify, this routing logic is configured as provider coverage rules: you define which firmographic segments each provider is likely to cover, and the workflow routes records accordingly before the cascade starts. Teams running coverage-aware routing in Unify see a 30% to 50% reduction in total provider API spend compared to running every record through the full cascade from the top.

Tier your enrichment cadence by account priority

Not every record in your CRM deserves the same enrichment investment. A Tier 1 named account in your highest-value ICP segment should be re-enriched every 30 days with the full provider stack. A Tier 3 account that has never engaged with any of your signals probably does not warrant re-enrichment more than once a quarter, and only with your cheapest primary provider. Tiering your enrichment cadence by account priority is one of the highest-leverage cost controls available, and it is a configuration decision rather than an engineering problem.
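
Because this is configuration rather than engineering, it can be expressed as a small lookup table plus a due-date check. The sketch below treats a quarter as 90 days and picks 60 days for Tier 2, both of which are assumptions. The `providers` labels are placeholders for however your workflow names its stacks.

```python
from datetime import date, timedelta

CADENCE = {
    1: {"interval_days": 30, "providers": "full_stack"},
    2: {"interval_days": 60, "providers": "full_stack"},    # assumed middle tier
    3: {"interval_days": 90, "providers": "primary_only"},  # quarter approximated as 90 days
}


def is_due(tier: int, last_enriched: date, today: date) -> bool:
    """True when the account's tier interval has elapsed since the last enrichment."""
    interval = timedelta(days=CADENCE[tier]["interval_days"])
    return today - last_enriched >= interval


today = date(2025, 6, 1)
last_run = date(2025, 4, 25)  # 37 days before `today`
tier1_due = is_due(1, last_run, today)
tier3_due = is_due(3, last_run, today)
```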

For more context on how account prioritization integrates with your broader GTM data strategy, see B2B Data Providers: Contact Accuracy Comparison.

Cost-per-enriched-record benchmarks

Understanding what you should be paying helps you evaluate whether your current workflow is optimized. Based on Unify platform data across B2B customer enrichment workflows:

- Unoptimized single-source enrichment: $0.12 to $0.25 per enriched record

- Unoptimized parallel multi-source enrichment: $0.30 to $0.60 per enriched record (paying for redundant calls)

- Optimized waterfall enrichment with pre-call checks and coverage routing: $0.08 to $0.18 per enriched record

- Unify waterfall enrichment with full optimization layer: $0.06 to $0.14 per enriched record

The cost gap between an unoptimized parallel approach and a well-configured waterfall is typically 40% to 60%. For a team enriching 50,000 records per month, that is $6,000 to $14,000 in avoidable provider spend every month.

How Do You Monitor and Optimize a Waterfall Workflow Over Time?

A waterfall enrichment workflow is not a set-it-and-forget-it system. Provider coverage changes. ICP characteristics shift. New vendors enter the market. Without an active monitoring layer, your carefully configured workflow will degrade quietly, and you will not know until you notice a drop in email deliverability or connect rates six months later.

The three metrics every enrichment workflow must track

Three metrics tell you whether your waterfall is performing correctly: match rate by provider and by field, email deliverability rate on enriched contacts, and cost-per-enriched-record. Match rate tells you whether each provider is doing its job in the cascade. Deliverability rate tells you whether the data quality meets the confidence thresholds you set. Cost-per-enriched-record tells you whether your pre-call checks and coverage routing are working as intended. If all three are healthy, your workflow is running well. If any one degrades, it points to a specific layer that needs attention.
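
Two of these metrics can be derived from a plain log of provider calls. The sketch below assumes every call is logged with the record it targeted, the provider, the cost, and whether the returned value was accepted. The log shape is hypothetical, and match rate is broken out by provider only, not by field.

```python
from collections import Counter


def summarize(call_log: list[dict], total_records: int) -> dict:
    """Compute match rate per provider, overall match rate, and cost per
    enriched record. `total_records` is assumed to be greater than zero."""
    calls = Counter(c["provider"] for c in call_log)
    hits = Counter(c["provider"] for c in call_log if c["accepted"])
    enriched = {c["record_id"] for c in call_log if c["accepted"]}
    total_cost = sum(c["cost"] for c in call_log)
    return {
        "match_rate_by_provider": {p: hits[p] / calls[p] for p in calls},
        "overall_match_rate": len(enriched) / total_records,
        "cost_per_enriched_record": total_cost / len(enriched) if enriched else None,
    }


log = [
    {"record_id": "r1", "provider": "A", "cost": 0.05, "accepted": True},
    {"record_id": "r2", "provider": "A", "cost": 0.05, "accepted": False},
    {"record_id": "r2", "provider": "B", "cost": 0.20, "accepted": True},
    {"record_id": "r3", "provider": "A", "cost": 0.05, "accepted": False},
    {"record_id": "r3", "provider": "B", "cost": 0.20, "accepted": False},
    {"record_id": "r4", "provider": "A", "cost": 0.05, "accepted": True},
]
summary = summarize(log, total_records=4)
```

Note that the cost figure divides total spend, including calls that returned nothing usable, by the number of records that ended up enriched. That is what makes it sensitive to wasted calls.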

What good match rate metrics look like

A well-configured waterfall enrichment workflow should achieve the following match rates across its provider stack:

- Overall match rate across the full cascade: 85% or above

- Primary provider match rate (before cascade): 55% to 70% (this is normal and expected)

- Match rate lift from secondary provider: 10% to 18% of total records

- Match rate lift from tertiary provider: 4% to 8% of total records

- Email deliverability rate on enriched contacts: 96% or above (bounce rate below 4%)

If your overall match rate falls below 80%, it usually means either your primary provider is a poor fit for your ICP (run a coverage audit), your confidence thresholds are too strict (you are cascading more than necessary), or your record population has shifted toward a segment where your stack has weaker coverage.
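
These benchmarks can double as a simple automated health check. The sketch below hard-codes the thresholds from the list above. The `diagnose` function and its message wording are illustrative, not a standard.

```python
def diagnose(overall: float, primary: float, deliverability: float) -> list[str]:
    """Compare observed rates (0.0 to 1.0) against the benchmarks in this guide
    and return a list of findings. An empty list means nothing was flagged."""
    findings = []
    if overall < 0.80:
        findings.append(
            "overall match rate below 80%: audit primary coverage, "
            "threshold strictness, and segment mix"
        )
    if primary < 0.55:
        findings.append("primary provider below the expected 55% to 70% range")
    if deliverability < 0.96:
        findings.append("email deliverability below 96%: review confidence thresholds")
    return findings


healthy = diagnose(overall=0.88, primary=0.62, deliverability=0.97)
degraded = diagnose(overall=0.75, primary=0.50, deliverability=0.93)
```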

Quarterly provider stack reviews

Set a calendar reminder to review your provider stack performance every quarter. The review should compare each provider's match rate, deliverability contribution, and cost against the quarter prior. Providers with declining match rates or deteriorating deliverability should be moved down the cascade or replaced. New providers worth evaluating should be run against a sample of your ICP records before being added to production. This quarterly cadence keeps your waterfall calibrated as the data landscape evolves.

Within Unify, match rate and deliverability dashboards update in real time rather than requiring manual report pulls. When a provider's match rate drops below a configurable threshold, the platform surfaces an alert and suggests a cascade reorder based on current performance data. Teams using Unify's enrichment monitoring report spending roughly 80% less time on manual enrichment QA compared to teams running custom-built waterfall systems.

How Unify makes this architecture turnkey

Building this architecture from scratch typically takes a two-to-three-person engineering team four to eight weeks, depending on how many providers are in the stack and how sophisticated the conflict resolution logic needs to be. The ongoing maintenance burden is substantial: provider APIs change, coverage drifts, and the confidence scoring model needs retraining as your ICP evolves.

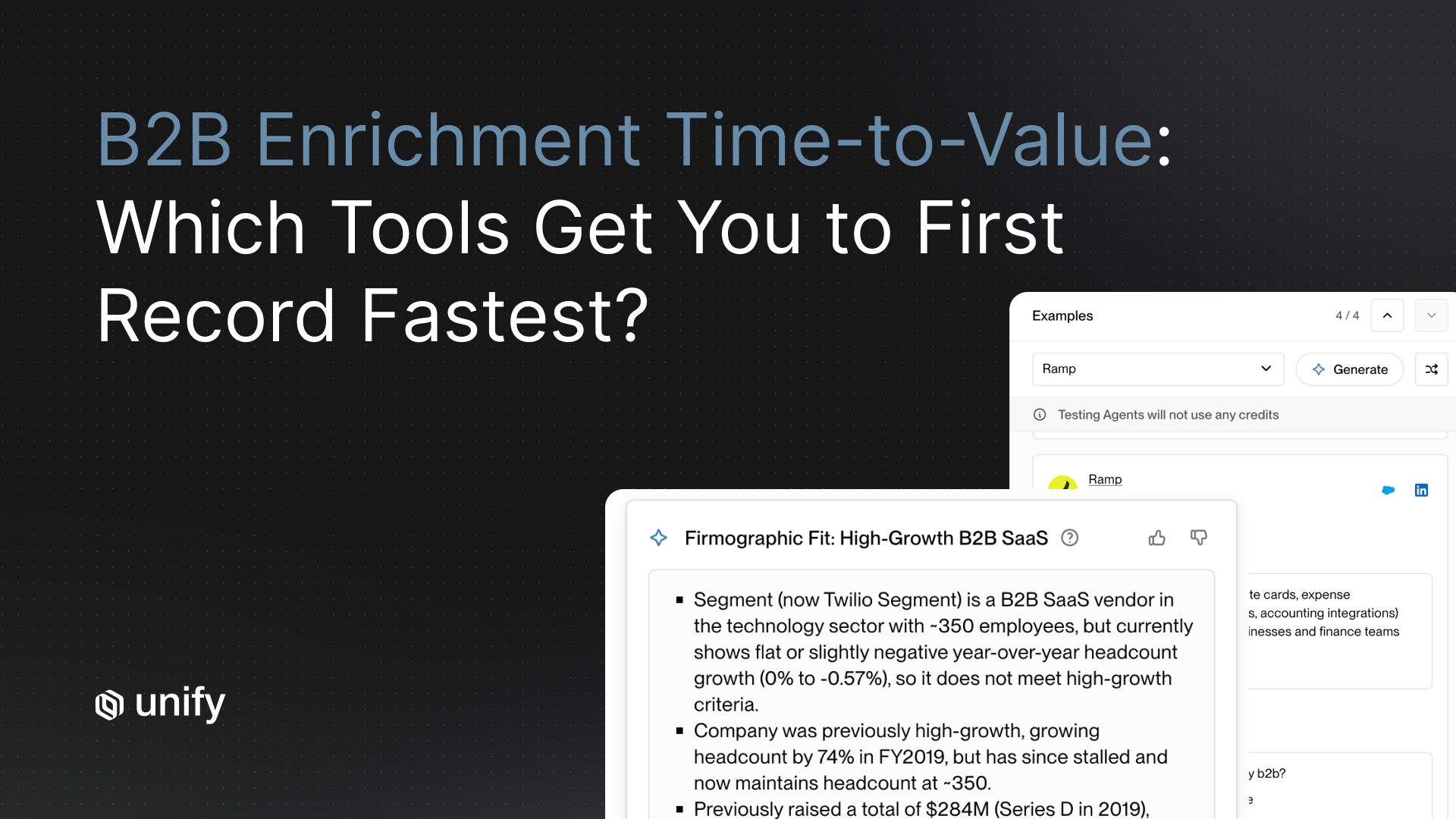

Unify operationalizes this entire architecture natively. The platform connects to all major B2B data providers through pre-built integrations, handles field-state checking and coverage routing automatically, and maintains the conflict resolution model using live outcome data from your enrichment runs. The result is that GTM teams using Unify, at companies like Ramp, get the match rate and cost efficiency benefits of a sophisticated waterfall workflow without dedicating engineering resources to build or maintain it.

For teams evaluating whether to build or buy their waterfall enrichment infrastructure, the relevant comparison is not just build cost but ongoing maintenance cost. A custom-built waterfall with three providers typically requires 20 to 40 hours of engineering attention per quarter to keep provider integrations current and the cascade logic tuned. At a fully-loaded engineering cost of $150 to $200 per hour, that is $3,000 to $8,000 per quarter in engineering overhead alone, before accounting for the opportunity cost of that engineering time.

For a broader view of how enrichment fits into your overall GTM data stack, see Best B2B Prospecting Tools and Outbound Automation With a Human-in-the-Loop CRM.

What Are the Most Common Waterfall Enrichment Setup Mistakes?

Even teams that understand waterfall enrichment architecturally tend to make a set of recurring implementation mistakes. Knowing these before you build saves weeks of debugging.

Mistake 1: Using record-level cascades instead of field-level cascades

A record-level cascade moves the entire record to the next provider when the primary provider cannot return all required fields. A field-level cascade evaluates each field independently. Record-level cascades are simpler to build but dramatically less efficient. If Provider A returns a valid email but no phone number, a record-level cascade will re-query Provider B for both fields, wasting a call for the email field you already have. Field-level cascades only call Provider B for the phone field. At scale, this difference accounts for 20% to 40% of avoidable provider spend.
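
The difference is easy to see in a toy model of which fields get requested from Provider B after Provider A has responded. The `fields_sent_to_next_provider` helper is hypothetical and ignores confidence thresholds for brevity.

```python
def fields_sent_to_next_provider(required: list[str],
                                 returned_by_a: set[str],
                                 field_level: bool) -> list[str]:
    """Return the fields requested from Provider B after Provider A responds."""
    missing = [f for f in required if f not in returned_by_a]
    if not missing:
        return []  # Provider A covered everything, so no further call is needed
    # Field-level: ask only for the gaps. Record-level: re-query every field.
    return missing if field_level else list(required)


required = ["email", "phone"]
provider_a_returned = {"email"}

field_level_calls = fields_sent_to_next_provider(required, provider_a_returned, field_level=True)
record_level_calls = fields_sent_to_next_provider(required, provider_a_returned, field_level=False)
```

The field-level variant requests one field where the record-level variant requests two, which is the redundant email lookup described above.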

Mistake 2: Not attributing enriched data back to its source provider

Every enriched field value should be stored with provider attribution and a sourced_at timestamp. Without attribution, you cannot diagnose which provider is contributing to email bounces, you cannot measure each provider's match rate accurately, and you cannot make data-driven decisions about stack reordering. Attribution metadata is also essential for GDPR and CCPA compliance documentation.
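
One possible shape for that attribution metadata is a small immutable record stored alongside each value. The class and field names below are assumptions, not a schema from any particular CRM or provider.

```python
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EnrichedValue:
    field_name: str
    value: str
    provider: str         # which provider supplied the value
    sourced_at: datetime  # when the provider sourced or verified it
    confidence: float


def bounces_by_provider(values: list[EnrichedValue], bounced: set[str]) -> Counter:
    """Count bounced email addresses per source provider."""
    return Counter(
        v.provider for v in values
        if v.field_name == "email" and v.value in bounced
    )


stamp = datetime(2025, 1, 15, tzinfo=timezone.utc)
values = [
    EnrichedValue("email", "jane@acme.example", "provider_a", stamp, 0.86),
    EnrichedValue("email", "raj@globex.example", "provider_b", stamp, 0.93),
]
row = asdict(values[0])  # plain dict, ready to write to a warehouse table
bounce_counts = bounces_by_provider(values, bounced={"jane@acme.example"})
```

With the provider stored next to each value, tracing a bounce back to its source becomes a simple count instead of guesswork.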

Mistake 3: Setting confidence thresholds too low to save on cascade calls

Some teams lower confidence thresholds to reduce the number of cascade calls (and thus reduce API costs) without realizing that accepting lower-confidence values drives up downstream costs through email bounces, domain reputation damage, and wasted sales rep time. A domain reputation incident from consistently bad email data affects every email your sales team sends, not just the ones that bounced. The cost of a domain reputation hit is almost always higher than the cost of running more thorough cascade queries. This is a case where apparent cost savings at the enrichment layer create larger costs downstream in deliverability and pipeline generation.

Mistake 4: Never re-enriching existing records

Many teams treat enrichment as a one-time onboarding step and never systematically re-enrich their existing CRM records. But B2B contact data decays at roughly 22% per year (Salesforce State of Sales research). A contact record that was accurate when enriched 18 months ago has roughly a 30% chance of being materially wrong today. High-performing outbound teams re-enrich their active target accounts on a rolling 90-day cycle, which keeps email deliverability consistently above 96% and connect rates stable over time.
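
The 18-month figure can be sanity-checked with a simple compounding model. This assumes the 22% annual decay rate applies uniformly and compounds over time, which is a modeling assumption and not something the cited research specifies.

```python
def stale_probability(months: float, annual_decay: float = 0.22) -> float:
    """Probability a record has gone stale after `months`, assuming a
    constant annual decay rate that compounds over time."""
    return 1 - (1 - annual_decay) ** (months / 12)


p_18_months = stale_probability(18)  # about 0.31
p_90_days = stale_probability(3)
```

Under the same assumption, a 90-day re-enrichment cycle keeps the expected stale share of active records in the single digits between refreshes.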

Frequently Asked Questions About Waterfall Enrichment

Quick answers to the questions that come up most often when teams are scoping, implementing, or troubleshooting a waterfall enrichment workflow.

What is a waterfall enrichment workflow?

A waterfall enrichment workflow queries B2B data providers in a defined priority order, only moving to the next provider when the previous one returns a blank or low-confidence value for a given field. It is the opposite of parallel multi-source enrichment, which queries every provider at once. Waterfall logic reduces API spend, because you only pay the expensive providers for the records your cheaper primary cannot cover.

How do you decide the order of providers in a waterfall?

Order providers by match rate for your specific ICP divided by per-record cost. Your cheapest, highest-coverage provider for your ICP goes first. Higher-cost or region-specific providers (GDPR-compliant European data, live-verified mobile numbers) belong in position two or three, reserved for records the primary could not cover. Run a 500 to 1,000 record sample through each vendor's API before locking in the order, since vendor-advertised coverage rarely matches actual ICP coverage.

What does waterfall enrichment cost per record?

An optimized waterfall with pre-call field checks and coverage-aware routing typically costs $0.08 to $0.18 per enriched record. Unoptimized parallel multi-source enrichment usually costs $0.30 to $0.60 per record, because you pay every provider for every call. Inside Unify, full-stack waterfall enrichment runs $0.06 to $0.14 per enriched record based on platform benchmark data, driven by pre-call checks, coverage routing, and field-level cascade logic.

What is a good match rate for a waterfall enrichment workflow?

Across the full cascade, a healthy waterfall should hit 85% or higher overall match rate, with email deliverability above 96%. Your primary provider alone should land between 55% and 70% match rate before cascading (that range is normal and expected). Your secondary provider should add 10% to 18% match-rate lift, and your tertiary another 4% to 8%. If overall match rate falls below 80%, it usually points to a primary-provider fit problem or thresholds set too strictly.

What is a confidence threshold and why does it matter?

A confidence threshold is the minimum quality score a returned value must meet before the cascade stops and accepts the value. Without thresholds, cascade logic is binary (field empty or field has any value), which lets through low-quality emails that bounce and unverified phone numbers that waste rep time. For email, set the threshold around 85% on the provider's own validation score. For phones, require live verification. For firmographic data, require the source to be less than 12 months old.

How do you handle conflicts when two providers return different values?

Default to recency: B2B contact data decays at roughly 22% per year, so the more recently sourced or verified value is statistically more likely to be correct. For persistent conflicts, layer on source reliability scoring: multiply each provider's field-level accuracy score (1 to 10, based on observed deliverability) by a recency multiplier (1.5x for under 30 days, down to 0.75x for over 180 days). Highest composite score wins.

How often should you re-enrich existing CRM records?

Tier by account priority. Tier 1 named accounts in your highest-value ICP segment: every 30 days with the full provider stack. Tier 2 engaged accounts: every 60 to 90 days. Tier 3 long-tail accounts: once per quarter with only your cheapest primary provider. Skipping re-enrichment entirely is the most common cause of email deliverability decline, because B2B contact data decays around 22% annually as people change jobs and update details.

What are the most common mistakes when setting up a waterfall?

Four mistakes account for most failed implementations: using record-level cascades instead of field-level cascades (wastes 20% to 40% of spend on redundant calls), not attributing enriched data to its source provider (breaks deliverability diagnostics), setting confidence thresholds too low to save on cascade calls (creates downstream bounce and domain reputation costs), and treating enrichment as a one-time onboarding step instead of a rolling 90-day re-enrichment cycle for active accounts.

Should you build a waterfall enrichment workflow in-house or use a platform?

A custom-built waterfall with three providers typically takes a two-to-three-person engineering team four to eight weeks to build and 20 to 40 hours per quarter to maintain (roughly $3,000 to $8,000 in quarterly engineering overhead). A platform like Unify handles provider integrations, field-state checking, coverage routing, conflict resolution, and monitoring natively. The decision usually comes down to whether enrichment is a differentiated capability for your team or a commodity infrastructure layer.

Sources

- Salesforce. "State of Sales Report." B2B contact data decay rates and impact on sales productivity. https://www.salesforce.com/sales/state-of-sales/

- Dun & Bradstreet. "Marketing Solutions: Why Clean Data Wins." https://www.dnb.com/en-us/solutions/grow/marketing.html

- G2. "Best Data Enrichment Software Reviews." https://www.g2.com/categories/data-enrichment

- Gartner. "Market Guide for Revenue Data Solutions." Available via Gartner subscription portal.

- Unify platform data. Match rate and cost-per-enriched-record benchmarks derived from aggregate customer enrichment workflow data across Unify's customer base, 2024-2025.

- LinkedIn. "State of Sales Report 2024." https://business.linkedin.com/sales-solutions/b2b-sales-strategy-guides/the-state-of-sales-report

- Bombora. "B2B Intent Data: Benchmarks and Best Practices." https://bombora.com/resources/

- HG Insights. "Technographic Data for B2B Sales and Marketing." https://hginsights.com/resources/

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
