TL;DR: Most teams measure AI SDRs the same way they measure human reps — total emails sent, total meetings booked. That comparison is broken. AI tools operate at 10x the volume, work around the clock, and cost a fraction of a human salary. The right measurement framework adjusts for all of this. This article covers five normalized metrics — per-touch reply rate, quality-adjusted pipeline, speed-to-lead, off-hours coverage, and cost per qualified meeting — plus a dashboard template and a 90-day evaluation timeline so you can make a fair, defensible call on your AI SDR investment.

Why Measuring AI SDRs With Human SDR Metrics Produces the Wrong Answer

The core problem with measuring AI SDR performance is a framework mismatch. Human SDR metrics were built for a world where one person sends 50-80 emails a day, prioritizes accounts manually, and operates within an eight-hour window. AI SDR tools operate by entirely different rules: they send at 10x or more the volume of a human rep, respond to signals in minutes rather than hours, run overnight and on weekends, and generate pipeline at a cost structure that looks nothing like a human headcount.

Applying human-rep metrics directly to an AI system produces a misleading result in both directions. On activity metrics (total emails, total calls), the AI looks artificially strong because volume inflates every absolute number. On quality metrics (reply rate, meeting-to-opportunity conversion), the AI often looks artificially weak because its denominator — touches sent — is so much larger. Neither reading tells you whether the AI is actually generating efficient, qualified pipeline.

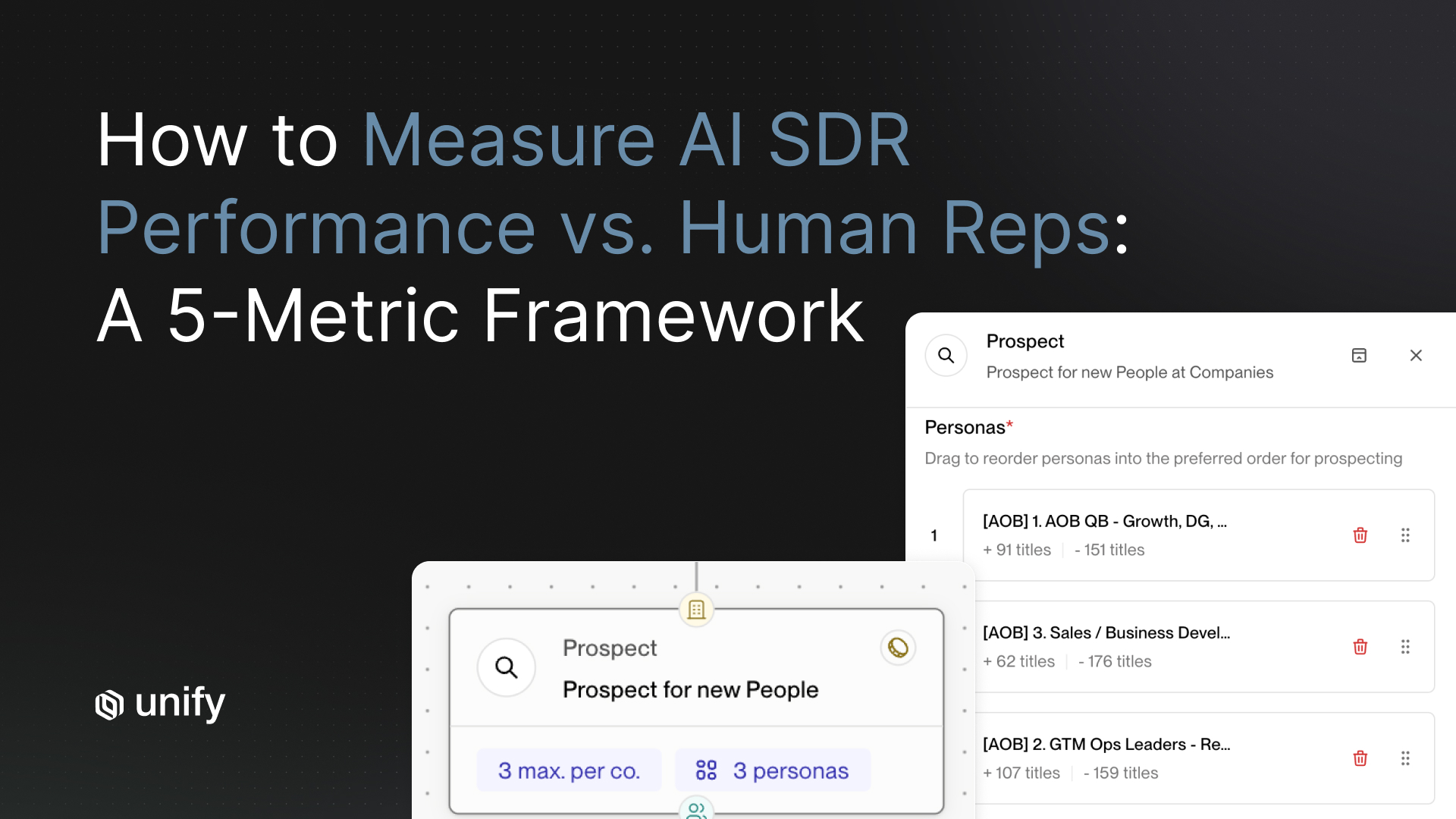

What you need is a normalized scorecard — five metrics that account for how AI and human SDRs differ, isolate what actually matters for pipeline health, and give you a defensible basis for the expand-or-cut decision at the end of a 90-day evaluation. Those five metrics are: (1) per-touch reply rate, normalized for volume; (2) quality-adjusted pipeline, measuring meeting-to-opportunity conversion; (3) speed-to-lead, tracking response time to inbound signals; (4) off-hours pipeline coverage, isolating incremental revenue from 24/7 operation; and (5) cost per qualified meeting, the efficiency bottom line. The following framework explains each one and how to measure it.

Why Should You Measure Per-Touch Reply Rate Instead of Total Replies?

Per-touch reply rate (total positive replies divided by total touches sent) is the most important normalization you can make when comparing AI and human SDR performance. Measuring total replies without adjusting for volume will always make the AI look better than it may actually be, and it disguises targeting and personalization problems that compound over time.

Consider a common scenario: a top human SDR sends 60 emails per day and receives 9 positive replies, a 15% per-touch reply rate. An AI SDR system running in parallel sends 600 emails per day and receives 36 positive replies, a 6% per-touch reply rate. In raw terms, the AI generated four times as many replies. But its per-touch efficiency is 40% of the human's, less than half. The AI is working harder to accomplish less per interaction, which signals a problem with targeting precision, personalization quality, or account selection.

Per-touch reply rate is a direct measure of how well your AI SDR's outreach is resonating with the people it reaches. A low per-touch rate relative to your human benchmark usually points to one of three root causes: sequences targeting accounts outside the in-market window, personalization that is too generic to drive a response, or a trigger logic problem sending outreach based on weak or stale signals. Each of these is fixable, but only if you are measuring at the touch level.

How to measure it: Track total positive replies divided by total touches sent for both your AI and human SDR cohorts over the same 30-day window. Set a minimum threshold of 1,000 AI touches before drawing conclusions — smaller samples produce noisy data that will drive incorrect decisions. Positive replies should be defined consistently: responses that indicate interest or request more information, excluding auto-replies and unsubscribes.
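The calculation described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the `Cohort` class and `relative_reply_rate` function are hypothetical names, and the 30-day totals are simply the daily figures from the earlier scenario multiplied by 30.

```python
from dataclasses import dataclass

MIN_AI_TOUCHES = 1_000  # minimum AI sample size named in the text


@dataclass
class Cohort:
    name: str
    touches_sent: int
    positive_replies: int  # should already exclude auto-replies and unsubscribes

    @property
    def per_touch_reply_rate(self) -> float:
        if self.touches_sent == 0:
            raise ValueError(f"{self.name}: no touches sent")
        return self.positive_replies / self.touches_sent


def relative_reply_rate(ai: Cohort, human: Cohort) -> float:
    """AI per-touch reply rate expressed as a fraction of the human benchmark."""
    if ai.touches_sent < MIN_AI_TOUCHES:
        raise ValueError("fewer than 1,000 AI touches; sample too small to judge")
    return ai.per_touch_reply_rate / human.per_touch_reply_rate


# Daily figures from the scenario in the text, scaled to a 30-day window (assumed scaling).
human = Cohort("human", touches_sent=60 * 30, positive_replies=9 * 30)
ai = Cohort("ai", touches_sent=600 * 30, positive_replies=36 * 30)

ratio = relative_reply_rate(ai, human)
print(f"human {human.per_touch_reply_rate:.1%}, ai {ai.per_touch_reply_rate:.1%}, ratio {ratio:.0%}")
```

With these inputs the AI cohort lands at 40% of the human per-touch rate, which is the number to hold against the benchmark thresholds rather than the raw reply count.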

Benchmark to aim for: AI SDR tools with well-calibrated targeting should reach 60-80% of a human SDR's per-touch reply rate. Below 50% is a red flag indicating a targeting or personalization problem that will degrade all downstream metrics. Unify customers using signal-based targeting — routing outreach only to accounts showing active buying signals such as job changes, intent data spikes, or pricing page visits — typically see per-touch reply rates 1.4x higher than teams running static list-based AI sequences, because every touch is contextualized to a real, observable in-market signal.

How Do You Measure the Quality of Pipeline Your AI SDR Generates?

Quality-adjusted pipeline measures the percentage of AI-booked meetings that convert to a qualified sales opportunity. It is the single most important metric for evaluating AI SDR performance, and the one most teams skip in favor of easier-to-track activity numbers.

Booking a meeting is not hard at scale. Any system sending enough volume at enough contacts will generate calendar accepts. The critical question is whether those meetings are with the right buyers, at the right companies, at the right stage of their buying cycle — or whether they are calendar clutter from contacts who accepted out of curiosity and have no real intent to buy. The meeting-to-opportunity conversion rate is how you answer that question with data.

Quality-adjusted pipeline matters more for AI SDRs than for human reps because the volume differential makes it easy for a poor-quality AI motion to generate a misleading pipeline number. If an AI books 100 meetings but only 12% convert to opportunities, versus a human rep who books 20 meetings with 40% converting, the AI produces 12 opportunities to the human's 8. That is only 1.5 times the qualified pipeline from five times the meeting volume, and each of the 88 meetings that did not convert still consumed AE time. The human converts at more than three times the AI's rate. The raw meeting number is almost useless without the conversion layer.

How to measure it: Pull every meeting booked by your AI SDR motion over a defined period — 90 days is the minimum for statistical validity. Track each meeting through your CRM to opportunity stage. Divide qualified opportunities created by total meetings held, excluding no-shows from the denominator (or track no-show rate as a separate signal of targeting quality). Compare this rate to your human SDR cohort over the same period.
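As a rough sketch of that calculation, the Python below divides qualified opportunities by meetings actually held and reports no-show rate separately. The function names are hypothetical, the first two calls reuse the 100-meeting and 20-meeting example from the text with an assumed zero no-shows, and the last call uses an invented no-show count.

```python
def meeting_to_opportunity_rate(meetings_booked: int, no_shows: int, qualified_opps: int) -> float:
    """Qualified opportunities divided by meetings held (no-shows excluded)."""
    held = meetings_booked - no_shows
    if held <= 0:
        raise ValueError("no meetings were held in this period")
    return qualified_opps / held


def no_show_rate(meetings_booked: int, no_shows: int) -> float:
    """Tracked separately as a signal of targeting quality."""
    if meetings_booked <= 0:
        raise ValueError("no meetings were booked in this period")
    return no_shows / meetings_booked


# Example from the text, assuming every booked meeting was held.
ai_rate = meeting_to_opportunity_rate(meetings_booked=100, no_shows=0, qualified_opps=12)
human_rate = meeting_to_opportunity_rate(meetings_booked=20, no_shows=0, qualified_opps=8)

# Invented variant: 20 of the AI's 100 booked meetings were no-shows.
ai_rate_held_only = meeting_to_opportunity_rate(meetings_booked=100, no_shows=20, qualified_opps=12)
ai_no_show = no_show_rate(meetings_booked=100, no_shows=20)

print(f"ai {ai_rate:.0%}, human {human_rate:.0%}, ai/human {ai_rate / human_rate:.0%}")
print(f"ai (held only) {ai_rate_held_only:.0%}, ai no-show rate {ai_no_show:.0%}")
```

Keeping the no-show rate as its own number avoids hiding a targeting problem inside the conversion rate: the same 12 opportunities read as 12% or 15% depending on whether no-shows stay in the denominator.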

What to watch for: AI SDR tools typically underperform human reps on this metric in the first 30 days of deployment, when calibration is still in progress. By Day 60-90, a well-configured AI motion running on signal-based triggers should reach 70-85% of a human rep's meeting-to-opportunity rate. Unify's internal data shows that customers using buying-signal triggers for AI outreach achieve meeting-to-opportunity conversion rates of 35-45%, compared to 18-25% for teams running high-volume, untargeted AI sequences. The signal layer is what separates a tool that generates activity from one that generates qualified pipeline.

For more on how signal-based triggers improve pipeline quality throughout the outbound funnel, see the Unify guide on signal-based selling and how it changes outbound prioritization.

How Does Speed-to-Lead Differ Between AI and Human SDRs?

Speed-to-lead measures how quickly your sales system responds when a buying signal fires — a target account visits your pricing page, a key contact changes jobs to an ICP-fit company, or a trial user crosses a usage threshold. AI SDRs can respond in minutes; human reps typically respond in hours. That gap is one of the clearest, most measurable performance advantages an AI system delivers.

The business impact of response time on inbound signals is well-documented. The foundational research on this, published in the Harvard Business Review and based on a study of 100,000 inbound leads across B2B companies, found that firms responding to inbound leads within one hour were seven times more likely to qualify the lead than those who waited even one hour longer. Subsequent analysis by sales researchers and organizations including InsideSales.com and Drift has consistently replicated this directional finding: faster first response produces significantly higher qualification rates, with the sharpest drop-off occurring in the first hour. Human SDRs, managing a full book of outreach and follow-up tasks across packed schedules, routinely respond to inbound signals two to four hours after they fire during business hours — and not at all during evenings and weekends.

AI SDR tools, when properly configured, eliminate this delay. A signal fires, the system evaluates it against ICP criteria and sequence rules, and outreach goes out within minutes. The prospect receives a relevant, personalized message at the moment their intent is highest, rather than hours later when their attention has moved elsewhere or a competitor has already responded.

How to measure it: Log the timestamp when each inbound signal is captured by your system and the timestamp when the first outreach touch is sent. Track this for both your AI motion and your human SDR motion. Calculate the median response time and the 90th percentile response time for each cohort — the 90th percentile reveals how often your system fails on slow-response outliers.
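One way to compute those two statistics from timestamp pairs is sketched below. It assumes each event is a `(signal_captured_at, first_touch_sent_at)` tuple, uses the nearest-rank definition of a percentile (other definitions interpolate and can give slightly different values), and runs on invented delays purely for illustration.

```python
from datetime import datetime, timedelta
from statistics import median


def response_minutes(events):
    """Minutes from signal capture to first outreach touch, one value per event."""
    return [(sent - captured).total_seconds() / 60 for captured, sent in events]


def percentile_nearest_rank(values, pct: int):
    """Nearest-rank percentile: smallest value with at least pct% of the data at or below it."""
    if not values:
        raise ValueError("no response times to summarize")
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct% of n
    return ordered[max(rank, 1) - 1]


# Hypothetical AI-cohort log: (signal_captured_at, first_touch_sent_at).
base = datetime(2025, 3, 3, 9, 0)
delays = [2, 3, 4, 5, 6, 7, 8, 9, 12, 45]  # minutes, invented for illustration
ai_events = [(base, base + timedelta(minutes=m)) for m in delays]

ai_minutes = response_minutes(ai_events)
ai_median = median(ai_minutes)
ai_p90 = percentile_nearest_rank(ai_minutes, 90)
print(f"median {ai_median} min, P90 {ai_p90} min")
```

In this sample the lone 45-minute response sits above the P90, so the P90 stays at 12 minutes. The P90 only climbs once slow responses make up more than a tenth of the events, which is why it works as a measure of how often the system is slow rather than how slow its worst case is.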

Benchmark: Human SDRs at high-performing B2B SaaS companies average 2-4 hours response time to inbound signals during business hours, with near-zero coverage outside those hours. AI SDR tools should achieve a median response time under 15 minutes. Any AI system consistently responding in more than 30 minutes indicates a trigger configuration problem or a queue backup in the sequence logic that needs to be addressed before the evaluation window closes.

The incremental opportunity this metric reveals: Calculating speed-to-lead by time of day surfaces how much of your inbound signal volume fires outside business hours — typically 25-35% of total daily signal volume for North American B2B SaaS companies with any international customer base. That signal volume, if captured only by an AI system, represents pipeline your human team structurally could not have generated. This sets up the next metric directly.

How Much Incremental Pipeline Does Your AI SDR Generate After Hours?

Off-hours pipeline is the clearest proof of incremental AI SDR value: the meetings booked and opportunities created from signals that fired while your human team was offline. This pipeline does not cannibalize human rep output — it is additional coverage that did not exist before the AI was deployed.

Human SDRs typically work 40-45 hours per week across five business days, covering roughly 8-9 hours per day in their local time zone. An AI SDR system operates across all 168 hours in a week — covering evenings, weekends, and every time zone your ICP spans. For B2B SaaS companies with buyers across North America and EMEA, this coverage gap is substantial. An account in London showing buying signals at 9 AM GMT is generating those signals at 4 AM Eastern — outside any human team's coverage window. Without an AI system active, that signal decays before anyone acts on it.

How to measure it: Tag every meeting booked and opportunity created by your AI SDR with the timestamp of the initiating outreach touch. Segment results into two categories: business hours (your primary team's local timezone, Monday through Friday) and off-hours (all other times). Calculate off-hours pipeline as a percentage of total AI-generated pipeline, tracked monthly. Run this calculation for at least 60 days before drawing conclusions, as weekly variation can be significant.
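The segmentation step can be sketched as follows. The 9:00 to 18:00 window, the record layout, and the pipeline amounts are all assumptions for illustration. The example uses a fixed UTC-5 offset to stay self-contained; a real deployment would more likely use `zoneinfo.ZoneInfo` with a named zone so daylight saving time is handled.

```python
from datetime import datetime, time, timedelta, timezone

BUSINESS_START = time(9, 0)   # assumed working window; adjust to your team
BUSINESS_END = time(18, 0)


def is_business_hours(touch_utc: datetime, team_tz) -> bool:
    """True if the initiating touch fell on a weekday inside the team's working window."""
    local = touch_utc.astimezone(team_tz)
    return local.weekday() < 5 and BUSINESS_START <= local.time() < BUSINESS_END


def off_hours_share(records, team_tz) -> float:
    """records: iterable of (initiating_touch_utc, pipeline_amount) pairs."""
    total = 0.0
    off_hours = 0.0
    for touch_utc, amount in records:
        total += amount
        if not is_business_hours(touch_utc, team_tz):
            off_hours += amount
    if total == 0:
        raise ValueError("no AI-generated pipeline in this period")
    return off_hours / total


eastern_fixed = timezone(timedelta(hours=-5))  # fixed offset, ignores daylight saving time

# Invented records: (initiating touch in UTC, pipeline amount in dollars).
records = [
    (datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc), 30_000),   # Monday 04:00 Eastern
    (datetime(2025, 3, 3, 15, 0, tzinfo=timezone.utc), 50_000),  # Monday 10:00 Eastern
    (datetime(2025, 3, 8, 16, 0, tzinfo=timezone.utc), 20_000),  # Saturday 11:00 Eastern
]

share = off_hours_share(records, eastern_fixed)
print(f"off-hours share of AI pipeline: {share:.0%}")
```

The first record mirrors the London example in the text: a touch sent at 9 AM GMT counts as off-hours for an Eastern-time team.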

What the data typically shows: Unify customers with global ICP coverage — accounts spanning North America, EMEA, and APAC — attribute 30-40% of AI-generated pipeline to off-hours outreach. For North America-only coverage, off-hours attribution drops to 15-25%, but still represents pipeline that a human team would have missed. This metric becomes particularly powerful in board-level ROI conversations: if your AI SDR generates $2M in qualified pipeline per quarter and 30% originated from off-hours coverage, that is $600,000 per quarter in pipeline your human organization structurally could not have created, regardless of headcount.

Important distinction: Off-hours pipeline should be tracked separately from the AI SDR's business-hours performance when evaluating overall ROI. Mixing them understates the AI's incremental contribution. If you shut down the AI tomorrow, your human reps would not capture that off-hours pipeline — they would simply miss it.

What Is the True Cost Per Qualified Meeting for an AI SDR vs. a Human Rep?

Cost per qualified meeting (CPQM) is the efficiency metric that rolls the scorecard up into a single comparable number: what does it cost, fully loaded, to generate one meeting with a qualified buyer? This metric cuts through the volume-versus-quality debate and gives you a defensible basis for investment decisions.

Calculating CPQM for human SDRs requires accounting for total compensation, benefits, recruiting and onboarding costs, tools, and management overhead. A fully-loaded SDR in a major market — base salary, variable, benefits, tools, and a share of management time — runs $90,000-$130,000 per year as of 2025, according to compensation data from Betts Recruiting and LinkedIn Salary benchmarks. At 15-20 qualified meetings per month, a strong human SDR generates CPQM in the range of $375-$720 per meeting, depending on their performance tier and total comp.

Calculating CPQM for an AI SDR motion includes platform fees, implementation and configuration time, and the human oversight required to monitor and tune the system — typically a fraction of a full-time role for a properly configured deployment. Unify customers running signal-based AI outbound at scale report cost per qualified meeting in the range of $80-$180, depending on ICP size, sequence complexity, and the volume of signal data being processed. That is a 3-5x efficiency improvement over a fully-loaded human SDR, before accounting for the off-hours and speed-to-lead advantages the AI adds on top.

How to measure it: Sum all costs attributable to your AI SDR motion for a quarter — platform fees, plus the estimated hourly cost of oversight and configuration time. Divide by the total number of qualified meetings generated by the AI during that quarter. Qualified meetings should use the same definition applied in Metric 2: meetings that converted to a sales opportunity, not just calendar accepts. Compare to the identical calculation for your human SDR team.
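The arithmetic is simple enough to sketch directly. The human figures below are the annual cost and monthly meeting ranges quoted earlier in this section. The AI inputs (platform fee, oversight hours, hourly cost, meeting count) are invented placeholders, not benchmarks, and the function name is hypothetical.

```python
def cpqm(total_cost: float, qualified_meetings: int) -> float:
    """Fully loaded cost divided by meetings that converted to a sales opportunity."""
    if qualified_meetings <= 0:
        raise ValueError("no qualified meetings in this period")
    return total_cost / qualified_meetings


# Human SDR, per month: figures from the text ($90k-$130k per year, 15-20 meetings per month).
human_cpqm_low = cpqm(90_000 / 12, 20)
human_cpqm_high = cpqm(130_000 / 12, 15)

# AI SDR, per quarter: every input here is a hypothetical placeholder.
platform_fees = 15_000
oversight_hours = 60
oversight_hourly_cost = 75
ai_qualified_meetings = 150

ai_cost = platform_fees + oversight_hours * oversight_hourly_cost
ai_cpqm = cpqm(ai_cost, ai_qualified_meetings)

print(f"human ${human_cpqm_low:,.0f} to ${human_cpqm_high:,.0f}, ai ${ai_cpqm:,.0f}")
```

The human range reproduces the roughly $375 to $720 figure from the text. Whatever inputs you use for the AI side, the denominator should count only meetings that met the same qualified definition as the human cohort.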

Critical caveat: CPQM must always be evaluated alongside quality-adjusted pipeline rate. A low CPQM from a motion that produces low-quality meetings is not a good deal — it is a cheap way to clog your AE calendar with unwinnable opportunities. The goal is low CPQM combined with a meeting-to-opportunity conversion rate that meets or approaches your human SDR benchmark. When both conditions are met, the economic case for AI SDR investment is essentially self-evident.

For a deeper look at calculating fully loaded AI outbound ROI and benchmarking it against your current GTM spend, see the Unify breakdown of automated outbound metrics and pipeline attribution.

What Should Your AI SDR Measurement Dashboard Track?

An effective AI SDR measurement dashboard tracks the five core metrics at two cadences: a weekly operational layer for early warning signals, and a monthly pipeline layer for investment decisions. Separating these cadences prevents you from overreacting to short-term noise while keeping you close enough to the data to catch real problems before they compound.

Where to build it: Most teams build this dashboard in Salesforce or HubSpot using custom fields that tag each touchpoint at the activity level — not the account or opportunity level — as AI-generated or human-generated. Account-level tagging loses the attribution detail you need to separate the AI and human contributions to a single deal. Unify's platform automatically tags all AI-generated touches and syncs them to your CRM with timestamps, making this dashboard buildable without additional manual attribution work or data engineering.
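To make the activity-level point concrete, here is a small sketch of what a tagged touch record might look like and how it preserves attribution on a single deal. The `Touch` class, its field names, and the sample records are hypothetical and do not reflect any particular CRM's schema.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Touch:
    opportunity_id: str
    sent_at: datetime
    source: str  # "ai" or "human"; hypothetical custom-field value


def touches_by_source(touches, opportunity_id: str) -> Counter:
    """Count AI and human touches on one opportunity."""
    return Counter(t.source for t in touches if t.opportunity_id == opportunity_id)


# Invented activity log for illustration.
touches = [
    Touch("opp-1", datetime(2025, 3, 3, 4, 5), "ai"),
    Touch("opp-1", datetime(2025, 3, 4, 10, 30), "ai"),
    Touch("opp-1", datetime(2025, 3, 6, 14, 0), "human"),
    Touch("opp-2", datetime(2025, 3, 5, 9, 15), "human"),
]

opp1_mix = touches_by_source(touches, "opp-1")
print(dict(opp1_mix))
```

A single account-level flag on the first opportunity would have to pick one label and discard the other. Keeping the tag on each touch leaves both contributions visible, along with the timestamps the speed-to-lead and off-hours metrics depend on.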

What Does a 90-Day AI SDR Evaluation Timeline Look Like?

A 90-day AI SDR evaluation runs in three phases: baseline and calibration (Days 1-30), first performance signal (Days 31-60), and full evaluation window (Days 61-90). This timeline provides enough pipeline data to make a defensible investment decision while staying short enough to course-correct if something is not working. Teams that try to evaluate AI SDR performance in 30 days or fewer almost always reach the wrong conclusion, because meeting-to-opportunity conversion — the most important quality metric — takes time to materialize in the CRM after meetings are booked.

Days 1-30: Baseline and Calibration

The first 30 days of an AI SDR deployment are not a performance window. Investment decisions made on Month 1 data are almost always wrong — the system is still calibrating to your ICP, your sequences have not been tuned based on early reply signals, and pipeline from early meetings has not had time to progress to opportunity stage in your CRM. Month 1 is a setup month, not an evaluation month.

The critical action for Days 1-30 is pulling 90 days of historical data for your human SDR team across all five metrics. This is your benchmark. Without a pre-established human baseline, you have no reference point for the comparison at Day 90. Set up CRM tagging at the activity level, configure your sequence triggers and signal rules, define your ICP tiers for targeting priority, and document every baseline number before the AI motion generates any data of its own.

Key milestone: Human SDR benchmarks documented. CRM tagging live. AI sequences and signal triggers configured and active.

Days 31-60: First Signal of Real Performance

By Day 31, your AI SDR motion has enough volume to generate statistically meaningful data on per-touch reply rate and speed-to-lead. These two metrics are your early-warning system. If per-touch reply rate is below 50% of your human benchmark at Day 45, adjust targeting or personalization before the 60-day review rather than waiting for the full evaluation window to close.

Meeting-to-opportunity conversion will also begin to become visible during this phase, as meetings booked in Days 1-20 progress through the sales pipeline. Early conversion data is noisy — sample sizes are small and pipeline cycles vary — but meaningful trends in the wrong direction (conversion rates below 15%) warrant immediate investigation into the quality of accounts being targeted.

Key milestone: Per-touch reply rate reaches 60% or more of human SDR benchmark. Speed-to-lead P90 is under 20 minutes for AI motion.

Days 61-90: Full Performance Window

The final 30 days of the evaluation window are when all five metrics become readable with statistical confidence. Meeting-to-opportunity conversion is now visible for most meetings booked during the evaluation period. Cost per qualified meeting can be calculated with a full quarter's cost data. Off-hours pipeline attribution is meaningful. This is the window for the investment decision.

The decision framework at Day 90 is not binary. The question is not "AI or humans" — it is "what is the optimal ratio of AI coverage to human relationship-building for our specific GTM motion?" For most B2B SaaS companies at growth stage, the answer is a blended model: AI SDRs handle high-volume signal-triggered outreach and full off-hours coverage, while human SDRs focus on Tier 1 enterprise accounts, referral-driven pipeline, and high-touch multi-threaded deals where judgment and relationship-building create differentiated value.

Key milestone: Meeting-to-opportunity rate reaches 70% or more of human SDR benchmark. AI CPQM is no more than half the human SDR equivalent (at least 2x better). Off-hours pipeline attribution is documented and included in board-level ROI calculation.
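The numeric milestones from the Day 31-60 and Day 61-90 phases can be collected into one check, sketched below. The `Scorecard` class and the sample values are hypothetical; the thresholds are the ones stated in the milestones. The off-hours attribution milestone is a documentation task rather than a threshold, so it is not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Scorecard:
    reply_rate_ratio: float      # AI per-touch reply rate / human benchmark
    p90_response_minutes: float  # AI speed-to-lead, 90th percentile
    m2o_ratio: float             # AI meeting-to-opportunity rate / human rate
    human_cpqm: float
    ai_cpqm: float


def milestone_checks(s: Scorecard) -> dict[str, bool]:
    """Evaluate each numeric milestone from the 90-day timeline."""
    if s.ai_cpqm <= 0:
        raise ValueError("AI CPQM must be positive")
    return {
        "reply rate >= 60% of human": s.reply_rate_ratio >= 0.60,
        "speed-to-lead P90 < 20 min": s.p90_response_minutes < 20,
        "meeting-to-opp >= 70% of human": s.m2o_ratio >= 0.70,
        "CPQM at least 2x better": s.human_cpqm / s.ai_cpqm >= 2.0,
    }


# Invented Day 90 readings for illustration.
day_90 = Scorecard(
    reply_rate_ratio=0.65,
    p90_response_minutes=14,
    m2o_ratio=0.75,
    human_cpqm=500,
    ai_cpqm=150,
)

results = milestone_checks(day_90)
for name, passed in results.items():
    print(f"{'PASS' if passed else 'MISS'}  {name}")
```

Reporting each milestone separately, rather than collapsing them into one score, fits the non-binary decision described above: a motion that passes on cost but misses on conversion calls for retuning, not an automatic expand or cut.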

Why Unify Outperforms Standalone AI SDR Tools on Every Scorecard Metric

Unify outperforms standalone AI SDR tools on every metric in this scorecard — per-touch reply rate, meeting-to-opportunity conversion, speed-to-lead, off-hours pipeline, and cost per qualified meeting — because of a structural difference: Unify starts with buying signals, not contact lists. Most AI SDR tools generate volume without context. They send sequences based on static lists or basic intent data, track surface-level engagement like opens and clicks, and optimize for activity rather than pipeline quality. The measurement problem this article describes — the inability to produce quality-adjusted pipeline at scale — is a direct consequence of AI SDR tools that lack a real buying-signal layer.

Unify is built around the signal layer first. Every outreach sequence is triggered by a real, observable buying signal: a job change at a target account, a product usage spike, a funding announcement, a pricing page visit cluster, or a technology change that reveals a new strategic initiative. This signal context does two things: it ensures outreach reaches accounts that are actually in-market, which drives per-touch reply rate and meeting-to-opportunity conversion; and it personalizes the message with specific, relevant context that makes a generic AI sequence feel like a well-researched human outreach.

The result is measurable across all five scorecard dimensions. Signal-based targeting drives per-touch reply rates 1.4x higher than static-list approaches. Buying-signal triggers produce meeting-to-opportunity conversion of 35-45%, versus 18-25% for volume-only AI SDR motions. Automated signal-to-sequence routing achieves median response times under five minutes. And the combination of higher quality and lower platform cost versus human headcount delivers CPQM of $80-$180 — a 3-5x improvement over a fully-loaded human SDR at any reasonable performance tier.

If you are running an AI SDR evaluation and want to benchmark your current motion against these five metrics, Unify offers a pipeline audit that surfaces where your current stack is losing efficiency. For a deeper look at how the AI-versus-human decision actually plays out across different deal sizes and GTM motions, see Unify's guide to AI SDR vs. human SDR.

Frequently Asked Questions

Why can't you measure AI SDRs with the same metrics as human SDRs?

Human SDR metrics were built for a world where one rep sends 50-80 emails a day within an eight-hour window. AI SDR tools operate at 10x the volume, respond to signals in minutes, and run 24/7. Applying human metrics directly makes the AI look artificially strong on total activity and artificially weak on per-touch quality. You need a normalized scorecard that accounts for volume, response time, off-hours coverage, and cost structure to get a fair comparison.

What are the five metrics for measuring AI SDR performance?

The five normalized metrics are: (1) per-touch reply rate, which divides positive replies by touches sent to control for volume; (2) quality-adjusted pipeline, measured as the meeting-to-opportunity conversion rate; (3) speed-to-lead, the response time to inbound buying signals; (4) off-hours pipeline coverage, isolating pipeline generated outside human work hours; and (5) cost per qualified meeting (CPQM), the fully-loaded efficiency bottom line.

What is a good per-touch reply rate benchmark for AI SDRs?

Well-calibrated AI SDR tools should reach 60-80% of a human SDR's per-touch reply rate. Below 50% is a red flag indicating a targeting, personalization, or signal-quality problem. Unify customers using signal-based targeting typically see per-touch reply rates 1.4x higher than teams running static list-based AI sequences.

How fast should an AI SDR respond to inbound signals?

A properly configured AI SDR should achieve a median response time under 15 minutes to inbound buying signals. Any AI system consistently responding in more than 30 minutes indicates a trigger configuration problem or queue backup. For comparison, human SDRs at high-performing B2B SaaS companies average 2-4 hours during business hours with near-zero coverage outside work hours.

What is a realistic meeting-to-opportunity conversion rate for AI SDRs?

By Day 60-90 of deployment, a well-configured AI SDR running on signal-based triggers should reach 70-85% of a human rep's meeting-to-opportunity conversion rate. Unify customers using buying-signal triggers achieve meeting-to-opportunity conversion rates of 35-45%, versus 18-25% for teams running high-volume, untargeted AI sequences.

What is the typical cost per qualified meeting (CPQM) for AI SDRs vs. human reps?

A fully-loaded human SDR generates CPQM in the range of $375-$720 per meeting, based on $90,000-$130,000 in total annual cost and 15-20 qualified meetings per month. Unify customers running signal-based AI outbound at scale report CPQM in the range of $80-$180 per meeting — a 3-5x efficiency improvement, before accounting for off-hours and speed-to-lead advantages.

How long should an AI SDR evaluation take?

A defensible AI SDR evaluation needs 90 days, structured in three phases: Days 1-30 for baseline setup and calibration, Days 31-60 for the first real performance signal on reply rate and speed-to-lead, and Days 61-90 for full evaluation across all five metrics including meeting-to-opportunity conversion. Evaluations of 30 days or fewer almost always reach the wrong conclusion because pipeline quality metrics take time to materialize in the CRM.

Sources

- Harvard Business Review: "The Short Life of Online Sales Leads" — hbr.org/2011/03/the-short-life-of-online-sales-leads. Foundational primary research on lead response time based on 100,000 inbound leads. The directional finding — that response within the first hour dramatically outperforms later response — has been replicated across multiple subsequent studies by sales research organizations including InsideSales.com and Drift.

- LinkedIn: "State of Sales 2025" — business.linkedin.com/sales-solutions/b2b-sales-strategy-guides/the-state-of-sales-report. Annual research report on B2B sales rep behaviors, including response time data and AI adoption benchmarks.

- Betts Recruiting: SDR Compensation Benchmarks 2025 — insights.bettsrecruiting.com/compensation-guide/. Annual SDR and sales compensation guide covering base salary, OTE, and benefits benchmarks by market and role level.

- Gartner: Sales AI and Revenue Intelligence Research (2024-2025) — gartner.com/en/sales/topics/sales-ai. Gartner's research hub covering AI adoption in sales development and revenue operations.

- G2: AI Sales Assistant Software Category Reviews — g2.com/categories/ai-sales-assistant. Buyer reviews and comparative ratings for AI SDR and sales assistant platforms.

- Unify: Platform customer data on signal-based outreach performance, CPQM benchmarks, and off-hours pipeline attribution — aggregated and anonymized across the Unify customer base. Internal data, referenced as proprietary benchmarks throughout this article — unifygtm.com.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
