TL;DR: To measure the success of an automated outbound program in 2026, track metrics across three tiers: (1) Tier 1 activity metrics like accounts contacted, deliverability rate, and signal-to-send latency that confirm the program runs at scale, (2) Tier 2 engagement metrics like reply rate (benchmark: 3.43% average, 5.5%+ top quartile), positive reply rate, and meeting held rate that show prospects are responding, and (3) Tier 3 revenue metrics like pipeline generated, cost per opportunity, pipeline-to-spend ratio, and outbound-attributed revenue that prove business impact. Most teams only track Tier 1. The teams that keep and grow their outbound budget track all three tiers and present them in a format executives can evaluate against ROI criteria. This guide includes 2026 benchmarks by company stage, an ROI formula with a worked example, and a 5-slide executive presentation template.

Most outbound metric dashboards are built for SDRs, not for CFOs.

They count emails sent, calls logged, and sequences enrolled. Those numbers look productive in a weekly standup. They fall apart the moment someone in finance asks what the program actually returned on the dollars invested.

This matters more in 2026 than ever before. Gartner predicted that large organizations would synthetically generate 30% of their outbound messages by 2025, and current data from SalesOS shows that AI-generated messages already account for 30% of all outbound, a 98% increase since 2022. That shift means the metrics themselves are changing. Signal-to-send latency, AI personalization quality, and multi-channel attribution accuracy are now just as important as reply rates and meetings booked.

This guide answers the question revenue leaders ask when building or defending an automated outbound program: what should we actually measure, and how do we present it in a way that secures budget? The answer is a three-tier metrics framework that works whether your outbound is run by human SDRs, AI SDRs, or a hybrid of both.

What Is the 3-Tier Automated Outbound Metrics Framework?

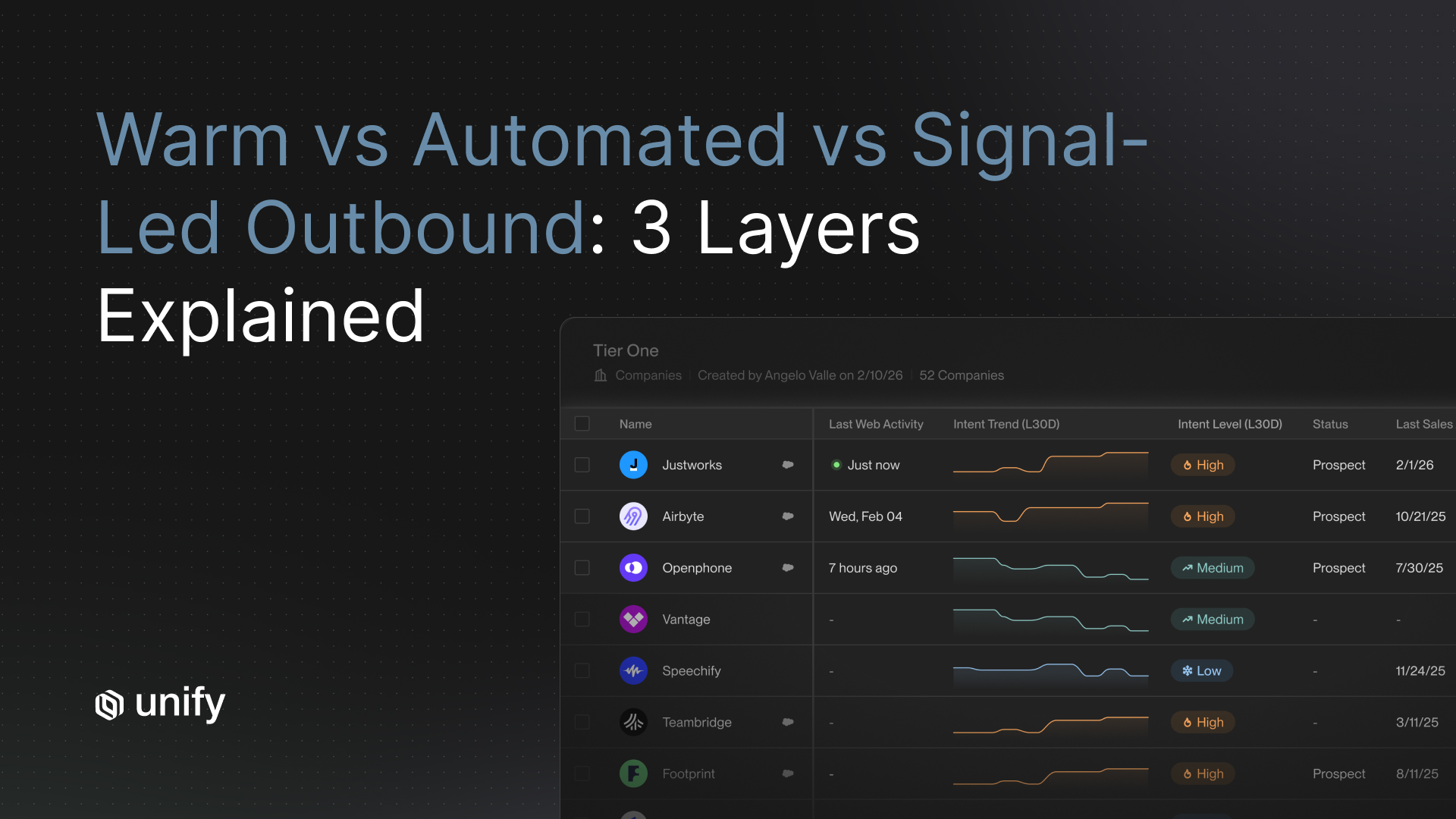

The 3-tier automated outbound metrics framework organizes every outbound metric into one of three tiers, each representing a different stage in the chain from outreach to revenue. Tier 1 covers activity (did the program run?), Tier 2 covers engagement (are prospects responding?), and Tier 3 covers revenue (is the program generating pipeline and closed deals?).

- Tier 1: Activity Metrics. Did the program run? Are we reaching the right number of accounts at the right cadence with acceptable deliverability?

- Tier 2: Engagement Metrics. Are prospects responding? Is the messaging resonating well enough to create a conversation and a booked meeting?

- Tier 3: Revenue Metrics. Is the outbound program converting those conversations into pipeline and closed revenue at a cost that justifies the investment?

Each tier answers a different question for a different stakeholder. Tier 1 belongs in your operational dashboard for SDR managers. Tier 2 belongs in your weekly revenue review for sales leadership. Tier 3 belongs in your board slide for executives and finance.

At Unify, our growth team uses this exact framework to manage the automated outbound plays that contributed to over $120M in annual pipeline. We track all three tiers in a single analytics view. That unified measurement is what allows teams to connect a buying signal detected on Monday to a meeting booked on Wednesday to a deal closed three months later, with full attribution at every step.

"The mistake most teams make is presenting Tier 1 activity data to executives and wondering why the budget conversation goes sideways. Executives don't fund activity. They fund outcomes. Show them Tier 3 first, then work backward."

What Are the Tier 1 Activity Metrics for Automated Outbound?

Tier 1 activity metrics confirm that your automated outbound program is actually running as designed. They are the foundation of the framework, not the headline. Think of them as vital signs for program health rather than performance grades.

The Core Tier 1 Metrics

- Accounts contacted per week. The total number of unique accounts that received at least one outbound touch during the period. This tells you whether your list coverage matches your ICP definition and whether your sending infrastructure is operating at planned capacity.

- Touches per account per sequence. The average number of steps (email, LinkedIn, call) delivered per enrolled account. Under-touching leaves deals on the table. Over-touching damages deliverability and brand reputation. According to Instantly's 2026 Cold Email Benchmark Report, the optimal sequence length is 4-7 touchpoints, with the first email capturing 58% of all replies.

- Sequence completion rate. What percentage of enrolled accounts received every planned touch in the sequence? A low completion rate often reveals deliverability problems or CRM data quality issues before they show up in reply rates.

- Email deliverability rate. The percentage of sent emails that landed in the inbox rather than spam or bouncing entirely. The global average inbox placement rate is approximately 84%, according to Validity's 2025 Email Deliverability Benchmark Report. High-performing outbound teams maintain inbox placement rates above 90%. For a deeper look at how to maintain strong deliverability, see our guide on cold email deliverability in 2026.

- Bounce rate. The percentage of sent emails that the receiving mail server rejects. Google, Yahoo, and Microsoft now enforce bulk sender rules requiring bounces under 2% and spam complaints below 0.3%. Hard bounces above 2% signal list quality problems. Soft bounces above 5% suggest sending volume is outpacing mailbox warm-up.

- Signal-to-send latency. The time between detecting a buying signal (website visit, job change, tech install) and sending the first outbound touch. This metric did not exist five years ago. In 2026, it is one of the most important Tier 1 metrics. Teams that act on signals within 24 hours see significantly higher conversion rates than teams that batch signal-based outreach weekly. Responding within 5 minutes makes a conversion 9x more likely, according to research from SalesOS.
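The Tier 1 definitions above reduce to a few ratios and one time difference. Here is a minimal Python sketch, assuming you can export send counts and signal/send timestamps from your sequencing tool. The `SendStats` fields and the function names are hypothetical and do not correspond to any specific platform's API, and the numbers are illustrative rather than benchmarks.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import List, Tuple


@dataclass
class SendStats:
    sent: int     # emails handed to the sending infrastructure
    inbox: int    # emails confirmed in the inbox (e.g. via seed-list tests)
    bounced: int  # hard and soft bounces combined


def deliverability_rate(stats: SendStats) -> float:
    """Percentage of sent emails that landed in the inbox."""
    return 100.0 * stats.inbox / stats.sent if stats.sent else 0.0


def bounce_rate(stats: SendStats) -> float:
    """Percentage of sent emails that bounced."""
    return 100.0 * stats.bounced / stats.sent if stats.sent else 0.0


def median_signal_to_send_hours(events: List[Tuple[datetime, datetime]]) -> float:
    """Median hours between signal detection and first outbound touch.

    Each event is a (signal_detected_at, first_touch_sent_at) pair.
    """
    lags = [(sent - detected).total_seconds() / 3600 for detected, sent in events]
    return median(lags)


# Illustrative numbers only, not benchmarks from this guide.
week = SendStats(sent=2000, inbox=1820, bounced=30)
events = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 30)),   # 0.5 hours
    (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 12, 0)),  # 2 hours
    (datetime(2026, 1, 6, 8, 0), datetime(2026, 1, 7, 14, 0)),   # 30 hours
]
print(deliverability_rate(week), bounce_rate(week), median_signal_to_send_hours(events))
```

Reporting the median rather than the mean keeps a single slow batch from hiding an otherwise fast signal response.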

Tier 1 Benchmarks by Company Stage (2026)

If your Tier 1 numbers are within range, your program is healthy at the infrastructure level. Now you need to know whether those touches are actually resonating.

What Are the Tier 2 Engagement Metrics That Show Outbound Is Working?

Tier 2 engagement metrics measure whether your messaging is resonating with the right prospects. A high open rate with a low reply rate means the subject line works but the message does not. A high reply rate with low meeting conversion means the ICP is curious but not in-market. Each engagement metric tells you something specific about where the program needs adjustment.

The Core Tier 2 Metrics

- Open rate. Open rate is a directional signal, not a definitive one. Apple Mail Privacy Protection and similar tools inflate this number by auto-loading tracking pixels. The average B2B cold email open rate is roughly 27.7% in 2026, down from 36% in 2023, according to Martal Group's B2B cold email statistics report. Track trends over time rather than absolutes, and do not use open rate as a primary performance indicator.

- Reply rate. According to Instantly's 2026 Cold Email Benchmark Report (analyzing billions of cold emails), the average cold email reply rate is 3.43%. Top-quartile campaigns achieve 5.5%, and elite performers exceed 10.7%. Below 1% signals a messaging or ICP targeting problem that more volume will not solve.

- Positive reply rate. The subset of replies that express genuine interest. This is the most important engagement metric because it strips out unsubscribe requests, out-of-office auto-replies, and negative responses. A positive reply rate of 1-2% on cold outbound is a reasonable baseline. Hyper-personalized emails generate 2-3x higher positive reply rates than generic templates, according to SalesOS SDR statistics.

- Meeting booked rate. The percentage of contacted accounts that convert to a first meeting. High-performing outbound programs convert 2-4% of contacted accounts to first meetings, with enterprise sequences targeting C-suite trending toward 1-2% and mid-market sequences targeting directors and VPs landing closer to 3-5%.

- Meeting held rate. The percentage of booked meetings that were actually held. A meeting held rate below 70% signals that pre-meeting qualification is too loose. The industry benchmark is 15 meetings booked per month with an 80% show rate, yielding 12 actual meetings held. Top-performing teams book 20-25 meetings monthly with show rates above 85%.

- Opt-out / unsubscribe rate. Should stay below 0.5% per campaign. Sustained unsubscribe rates above 1% are a deliverability warning sign and often precede domain reputation damage. Gmail enforces a spam complaint threshold of 0.3% for bulk senders.
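Each Tier 2 rate above uses a different denominator, which is where dashboards most often go wrong. The sketch below is one way to compute them in Python, assuming reply rates are measured against delivered emails, meeting booked rate against contacted accounts, and meeting held rate against booked meetings. The function and parameter names are hypothetical, and the sample counts are illustrative apart from the 15 booked and 12 held meetings quoted above.

```python
from typing import Dict


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to two decimals; 0.0 when the denominator is zero."""
    return round(100.0 * numerator / denominator, 2) if denominator else 0.0


def tier2_funnel(
    accounts_contacted: int,
    emails_delivered: int,
    replies: int,
    positive_replies: int,
    meetings_booked: int,
    meetings_held: int,
) -> Dict[str, float]:
    """Tier 2 engagement rates, each against its own denominator."""
    return {
        "reply_rate": pct(replies, emails_delivered),
        "positive_reply_rate": pct(positive_replies, emails_delivered),
        "meeting_booked_rate": pct(meetings_booked, accounts_contacted),
        "meeting_held_rate": pct(meetings_held, meetings_booked),
    }


# Illustrative month: 15 meetings booked, 12 held (an 80% show rate).
funnel = tier2_funnel(
    accounts_contacted=500,
    emails_delivered=1000,
    replies=34,
    positive_replies=12,
    meetings_booked=15,
    meetings_held=12,
)
print(funnel)
```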

Tier 2 Benchmarks by Company Stage (2026)

Reply rates often decrease as company stage increases because larger teams send higher volume to broader lists. Positive reply rate and meeting held rate tend to improve because larger teams have more refined ICP targeting and warmer signal inputs feeding their outbound.

Multi-channel sequences that combine email, LinkedIn, and phone produce 287% higher engagement than single-channel approaches, according to SalesOS outbound SDR statistics. This is why modern outbound platforms like Unify orchestrate touches across all three channels within a single sequence, rather than running email-only campaigns. The engagement lift from multi-channel is one of the most consistent findings in outbound benchmarking data.

What Are the Tier 3 Revenue Metrics Executives Care About?

Tier 3 revenue metrics are the only metrics that matter when executives decide whether to continue or expand an outbound investment. Every Tier 3 metric connects the outbound program directly to the revenue line. If you only present Tier 1 and Tier 2 data, you are asking for budget based on activity, not outcomes.

The Core Tier 3 Metrics

- Pipeline generated from outbound. The total dollar value of opportunities where the first meaningful touch came from your automated outbound program. SDRs typically generate 46-73% of total pipeline, with the median SDR-generated pipeline hitting $3 million annually, according to SalesOS SDR statistics. Track this as both a raw dollar number and as a percentage of total pipeline.

- Outbound-sourced win rate. The percentage of outbound-originated opportunities that close. This number is typically 5-15 percentage points lower than inbound win rates because outbound creates demand rather than capturing it. Signal-based outbound programs see significantly higher win rates than volume-based approaches because they target accounts already showing buying intent.

- Pipeline-to-spend ratio (P:S). The pipeline value generated for every dollar spent on the outbound program. A P:S ratio of 5:1 or higher is generally considered healthy for automated outbound. At 10:1 or above, you have a compelling case for program expansion. This is the single most useful leading indicator for weekly and monthly decision-making because it moves faster than closed revenue.

- Cost per opportunity (CPO). Total outbound program cost divided by qualified opportunities created. This normalizes performance across different team sizes and spending levels, making direct comparisons between channels possible. AI-assisted programs using signal-based targeting at Unify often achieve CPO reductions of 30-50% compared to volume-based approaches, because they generate higher-quality pipeline from fewer total touches.

- Outbound-attributed revenue. Closed-won revenue where outbound was the originating source. This is the number your CFO wants to see. Track it monthly and show the trend over at least three quarters.

- Payback period. How many months does it take for the revenue generated by outbound-originated deals to pay back the cost of the program? For most B2B SaaS companies with ACV above $20K, a payback period under 12 months is strong justification for sustained investment. The median B2B SaaS LTV:CAC ratio is 3.8x, with top performers reaching 5-7x.
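Three of the Tier 3 metrics above are direct calculations. The following Python sketch shows one possible way to compute P:S, CPO, and payback period, assuming you have monthly program cost and monthly outbound-attributed revenue available. The function names and all figures are hypothetical.

```python
from itertools import accumulate
from typing import List, Optional


def pipeline_to_spend(pipeline_value: float, program_cost: float) -> float:
    """Dollars of pipeline generated per dollar of program cost."""
    return pipeline_value / program_cost


def cost_per_opportunity(program_cost: float, qualified_opps: int) -> float:
    """Total program cost divided by qualified opportunities created."""
    return program_cost / qualified_opps


def payback_month(monthly_cost: List[float], monthly_revenue: List[float]) -> Optional[int]:
    """First month (1-indexed) in which cumulative revenue covers cumulative cost.

    Returns None if the program has not paid back within the data provided.
    """
    cumulative = zip(accumulate(monthly_cost), accumulate(monthly_revenue))
    for month, (cost, revenue) in enumerate(cumulative, start=1):
        if revenue >= cost:
            return month
    return None


# Hypothetical six-month program: $20K per month in cost, revenue ramping late.
costs = [20_000] * 6
revenue = [0, 0, 10_000, 30_000, 50_000, 60_000]

print(pipeline_to_spend(1_200_000, sum(costs)))  # pipeline dollars per dollar spent
print(cost_per_opportunity(sum(costs), 48))      # dollars per qualified opportunity
print(payback_month(costs, revenue))             # month number, or None
```

Because payback is computed on cumulative totals, the early zero-revenue months count against the program, which matches how finance will read the numbers.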

Tier 3 Benchmarks by Company Stage (2026)

For a deeper look at how top teams build pipeline through signal-based outbound plays, see our breakdown of the metrics that matter in modern outbound.

How Should You Measure AI SDR Performance in 2026?

AI SDRs should be measured on the same Tier 2 and Tier 3 outcome metrics as human SDRs: positive reply rate, meeting held rate, pipeline generated, and cost per opportunity. The difference is in Tier 1, where activity volume becomes less meaningful because AI operates at a fundamentally different scale. The real question is whether the AI's output quality matches or exceeds human-level engagement.

AI-generated messages now account for 30% of all outbound volume, according to SalesOS outbound SDR statistics, and multi-agent AI systems deliver 7x higher conversion than traditional models. That means AI is not just sending emails. It is deciding which accounts to contact, when to contact them, and what to say. Each of those decisions needs its own measurement layer.

AI SDR-Specific Metrics to Track

- AI vs. human positive reply rate. Compare the positive reply rate on AI-generated messages against human-written messages sent to the same ICP segments. This tells you whether AI personalization is actually working. If AI messages match or beat human-written messages, the AI is adding real value. If they consistently underperform by more than 30%, the personalization model needs tuning.

- Signal-to-send latency. AI SDRs should act on buying signals within minutes, not hours. Track the median time between signal detection and first outbound touch. This is often the single biggest advantage AI has over human SDRs. At Unify, our automated plays can trigger outreach within minutes of a signal, which is an advantage no human team can match at scale.

- Personalization quality score. Rate a sample of AI-generated messages weekly on relevance (does it reference the right signal?), accuracy (are company and role details correct?), and tone (does it read like a human wrote it?). A score below 70% on any dimension means the AI is hurting your brand more than helping it.

- Cost per opportunity: AI vs. human. Compare the fully loaded CPO for AI-sourced pipeline against human-sourced pipeline. Include platform costs for the AI tools and full compensation costs for human SDRs. This is the metric that justifies the investment in AI. AI-assisted outbound programs typically reduce CPO by 30-50% compared to volume-based human approaches.

- Escalation rate. What percentage of AI-initiated conversations require human intervention before converting to a meeting? A healthy escalation rate is 20-40%. Below 20% may mean the AI is handling everything well, or it may mean warm leads are falling through the cracks. Above 40% suggests the AI is creating conversations it cannot manage.
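The thresholds in the list above (a 30% underperformance gap, a 70% quality floor, and a 20-40% escalation band) can be checked mechanically once the inputs are collected. This is a rough Python sketch under those stated thresholds; the function names, the band labels, and the sample values are hypothetical.

```python
from typing import Dict, List


def ai_underperformance_pct(ai_rate: float, human_rate: float) -> float:
    """How far the AI positive reply rate trails the human rate, as a % of the human rate.

    Negative values mean the AI is outperforming the human baseline.
    """
    if human_rate <= 0:
        raise ValueError("human_rate must be positive")
    return 100.0 * (human_rate - ai_rate) / human_rate


def escalation_band(escalated: int, ai_conversations: int) -> str:
    """Classify the escalation rate against the 20-40% healthy range."""
    if ai_conversations <= 0:
        raise ValueError("ai_conversations must be positive")
    rate = 100.0 * escalated / ai_conversations
    if rate < 20:
        return "low"      # either the AI is coping well or warm leads are being missed
    if rate > 40:
        return "high"     # the AI is starting conversations it cannot manage
    return "healthy"


def weak_quality_dimensions(scores: Dict[str, float], floor: float = 70.0) -> List[str]:
    """Personalization dimensions scoring below the floor, sorted by name."""
    return sorted(dim for dim, score in scores.items() if score < floor)


# Illustrative weekly review.
gap = ai_underperformance_pct(ai_rate=1.0, human_rate=1.6)
needs_tuning = gap > 30
band = escalation_band(escalated=12, ai_conversations=40)
weak = weak_quality_dimensions({"relevance": 82, "accuracy": 64, "tone": 75})
print(round(gap, 1), needs_tuning, band, weak)
```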

For a broader view of how AI is reshaping the outbound playbook, see our guide on the 3 frameworks behind $120M in pipeline.

How Do You Calculate the ROI of an Outbound Program?

The outbound ROI formula is: (Outbound-Attributed Revenue - Total Program Cost) / Total Program Cost x 100. Run it every quarter and include it in every budget review. This single calculation is what separates teams that defend their outbound budget from teams that lose it.

Example calculation for a Series A team running a hybrid human + AI outbound program:

This calculation uses projected revenue based on win rate rather than only closed-won revenue, because outbound deals typically have a longer sales cycle than the measurement period. Show the CFO both the lagging indicator (closed-won from last quarter's outbound) and the leading indicator (pipeline-to-spend ratio). Present both numbers side by side so finance can evaluate the program on both realized and projected returns.
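To make the realized-versus-projected distinction concrete, here is a small Python sketch of the ROI formula applied both ways. The function names are hypothetical, and the figures are invented for illustration; they are not the worked example from this guide.

```python
def outbound_roi_pct(attributed_revenue: float, program_cost: float) -> float:
    """(Outbound-Attributed Revenue - Total Program Cost) / Total Program Cost x 100."""
    return (attributed_revenue - program_cost) / program_cost * 100


def projected_revenue(open_pipeline: float, historical_win_rate: float) -> float:
    """Expected revenue from open outbound pipeline at the historical win rate."""
    return open_pipeline * historical_win_rate


# Hypothetical quarter.
program_cost = 100_000   # SDR compensation, tooling, management overhead
closed_won = 150_000     # lagging indicator: revenue already closed
open_pipeline = 1_000_000
win_rate = 0.20

realized_roi = outbound_roi_pct(closed_won, program_cost)
projected_roi = outbound_roi_pct(
    closed_won + projected_revenue(open_pipeline, win_rate), program_cost
)
print(f"Realized ROI: {realized_roi:.0f}%  Projected ROI: {projected_roi:.0f}%")
```

Keeping the two figures as separate outputs, rather than blending them, lets finance see how much of the return is already realized and how much depends on the win-rate assumption.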

Customer acquisition cost across B2B SaaS has risen 40-60% between 2023 and 2025. That makes the efficiency story more important than ever. A program delivering a P:S ratio above 10:1 has a strong counterargument to cost-cutting pressure, because every dollar cut removes ten or more dollars from the pipeline.

Teams running signal-based outbound through Unify frequently see their P:S ratios improve over time as the platform learns which signals correlate with pipeline creation. That compounding efficiency is harder to achieve with manual outbound, where optimization depends entirely on human iteration speed.

How Do You Present Outbound Metrics to Executives for Budget Approval?

The most effective way to present outbound metrics for budget approval is a 5-slide sequence: headline number, funnel walkthrough, efficiency trend, investment ask, and risk of inaction. Finance teams and executives evaluate investments using a mental model of: did it work, can it scale, and what do we lose if we stop? Each slide maps to one of those questions.

Slide 1: The Headline Number

Lead with outbound-attributed revenue and P:S ratio. One number proves performance. The other proves efficiency. Example: "Last quarter, outbound sourced $210K in closed revenue at an 18.9:1 pipeline-to-spend ratio, with signal-based plays converting at 2.3x the rate of cold list plays."

Slide 2: The Funnel

Walk through the tier structure top to bottom: accounts contacted, reply rate, meetings held, opportunities created, closed-won. This shows executives that the program is a managed system, not a spray-and-pray operation. Include the benchmark comparison to show where you outperform and where you are improving. If you run both human and AI SDR motions, show the funnel for each side by side.

Slide 3: The Efficiency Trend

Show cost-per-opportunity and win rate trending over the last three quarters. If both are improving, the program is maturing. A CPO that drops from $3,500 to $2,100 over three quarters is a compelling argument for reinvestment. An improving win rate shows the ICP targeting is getting sharper over time.

Slide 4: The Investment Ask

Present the investment in terms of pipeline output, not headcount cost. Instead of "we need $200K for two more SDRs," say "a $200K incremental investment is projected to generate $2M in pipeline at our current 10:1 P:S ratio." That reframes the conversation from cost to return. If the incremental investment is in AI tooling rather than headcount, the efficiency argument is even stronger.

Slide 5: The Risk of Inaction

What pipeline goes ungenerated if the investment is not made? If outbound currently sources 40% of your pipeline and the program is cut or frozen, quantify the gap in dollars. Finance teams respond to downside risk as strongly as they respond to upside potential. SDRs generate 46-73% of total pipeline at most B2B SaaS companies. Cutting that source creates a hole no other channel can fill on the same timeline.

How Do You Attribute Revenue to Outbound When Buyers Touch Multiple Channels?

Multi-channel attribution is the single largest reason outbound programs are underfunded. When a prospect receives an outbound email, visits the website twice, then fills out a demo request form two weeks later, the CRM credits the inbound form. The outbound sequence that initiated the relationship gets no attribution, and the program's actual contribution becomes invisible.

Campaigns using 3+ channels drive 287% higher purchase rates than single-channel efforts, according to SalesOS outbound research. That multi-channel reality makes accurate attribution both more important and more difficult.

Fixing outbound attribution requires three things:

- First-touch attribution tracking at the account level, not the contact level. If any contact at an account received outbound before the opportunity was created, that opportunity should carry an outbound-source tag. Account-level attribution captures influence that contact-level attribution misses entirely.

- Signal-based engagement tracking. Knowing that an account visited your pricing page the day after receiving your outbound email is a critical attribution signal. Most teams lose this data because it lives in a separate tool from their sequencing platform. Unify's approach connects these signals in a single account timeline.

- A unified record of all outbound touches per account. If a prospect is reached via email, LinkedIn, and a cold call before converting, all three touches should be visible in the same account timeline to establish the outbound-source relationship clearly.

This is where Unify changes the measurement equation. Unify connects buying signal data directly to your outbound sequences and tracks engagement at the account level across every channel. When an account that received outbound later converts, Unify's unified account timeline makes the outbound attribution clear, even when the final conversion happened through a different channel. That clean attribution is what allows revenue teams to produce the Tier 3 data that wins budget conversations.

Instead of manually assembling data from your sequencing tool, CRM, and web analytics, Unify gives you a single account-level view that shows exactly which outbound touches preceded which pipeline events. That data is what separates teams that defend their outbound budget from teams that lose it.

Practical Attribution Rules for Outbound

Perfect multi-touch attribution is nearly impossible for outbound. Instead of chasing a perfect model, implement these practical rules:

- Outbound-sourced: Any opportunity where the first touch was an outbound sequence, regardless of what happened after.

- Outbound-influenced: Any opportunity where an account received outbound within 90 days before the opportunity was created, even if the final conversion was inbound.

- Shared attribution: When both inbound and outbound touched an account before conversion, credit both channels in your reporting. Use shared attribution for trend analysis, but use outbound-sourced for budget justification.
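The three rules above can be expressed as a simple tagging function. The sketch below is one possible encoding in Python, assuming each opportunity record carries its first-touch channel, the date of the most recent outbound touch on the account, and an inbound-touch flag. The `Opportunity` fields and tag names are hypothetical, not a CRM schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional, Set


@dataclass
class Opportunity:
    created_on: date
    first_touch_channel: str             # assumed labels: "outbound" or "inbound"
    last_outbound_touch: Optional[date]  # latest outbound touch on the account, if any
    had_inbound_touch: bool


def attribution_tags(opp: Opportunity, window_days: int = 90) -> Set[str]:
    """Apply the sourced / influenced / shared rules to one opportunity."""
    tags: Set[str] = set()
    outbound_before_creation = (
        opp.last_outbound_touch is not None
        and opp.last_outbound_touch <= opp.created_on
    )
    # Outbound-sourced: first touch was outbound, regardless of what happened after.
    if opp.first_touch_channel == "outbound":
        tags.add("outbound_sourced")
    # Outbound-influenced: outbound touch within the window before creation.
    if outbound_before_creation:
        age = opp.created_on - opp.last_outbound_touch
        if age <= timedelta(days=window_days):
            tags.add("outbound_influenced")
    # Shared: both inbound and outbound touched the account before conversion.
    if outbound_before_creation and opp.had_inbound_touch:
        tags.add("shared")
    return tags


recent = Opportunity(date(2026, 3, 15), "outbound", date(2026, 2, 1), True)
stale = Opportunity(date(2026, 3, 15), "inbound", date(2025, 10, 1), True)
pure_inbound = Opportunity(date(2026, 3, 15), "inbound", None, True)
print(attribution_tags(recent), attribution_tags(stale), attribution_tags(pure_inbound))
```

An opportunity can carry more than one tag, so the reporting layer decides which tag to count: outbound-sourced for budget justification, shared for trend analysis.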

What Are the Most Common Outbound Measurement Mistakes?

The six most common outbound measurement mistakes are: tracking only activity metrics, aggregating across different ICPs, measuring booked meetings instead of held meetings, ignoring signal-to-sequence lag, resetting attribution on re-engagement, and treating AI and human outbound as one program. Each of these mistakes systematically under-reports outbound's true contribution to revenue and makes it harder to justify continued investment.

Mistake 1: Measuring only what is easy to count

Emails sent and calls logged are easy to count. Pipeline generated from outbound is hard. Most teams default to easy metrics, which means they can never make a revenue-based argument for their investment. Build Tier 3 measurement into your program from day one, even if the initial numbers are small. The average SDR spends only 2 hours per day actively selling, according to SalesOS SDR statistics. Measuring activity alone tells you nothing about whether those 2 hours are producing revenue.

Mistake 2: Aggregating metrics across very different ICPs

A reply rate of 2.3% might look healthy until you segment it and realize that mid-market accounts are replying at 4.1% while enterprise accounts are at 0.8%. Aggregating across ICPs hides the signal that tells you where to focus. Segment every Tier 2 and Tier 3 metric by ICP segment, company size, and persona. This is especially critical for teams running AI SDRs, where a single aggregate number can mask the fact that the AI performs well on one segment and poorly on another.
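The effect is easy to reproduce. In the Python sketch below, the send volumes are hypothetical and were chosen only so that the segment and aggregate rates match the 4.1%, 0.8%, and 2.3% figures in the example above; the function name is also an assumption.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def reply_rate_by_segment(rows: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """Reply rate (%) per segment plus an "all" aggregate.

    Each row is (segment, emails_sent, replies).
    """
    sent: Dict[str, int] = defaultdict(int)
    replies: Dict[str, int] = defaultdict(int)
    for segment, n_sent, n_replies in rows:
        for key in (segment, "all"):
            sent[key] += n_sent
            replies[key] += n_replies
    return {key: round(100.0 * replies[key] / sent[key], 1) for key in sent if sent[key]}


# Hypothetical volumes that reproduce the rates in the example.
rates = reply_rate_by_segment([
    ("mid-market", 5000, 205),
    ("enterprise", 6000, 48),
])
print(rates)
```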

Mistake 3: Measuring meetings booked instead of meetings held

A meeting booked is not a qualified opportunity. A team that books 50 meetings per month with a 55% hold rate holds about 27 meetings, barely more than the roughly 26 held by a team that books 30 meetings with an 85% hold rate, and it burns about 22 calendar slots on no-shows to get there. The industry benchmark is 80% show rate on booked meetings. Always track held rate separately and use it as the input to your pipeline calculations.

Mistake 4: Ignoring the signal-to-sequence lag

If your program uses intent signals or buying triggers to initiate outbound, the time between signal detection and outreach matters enormously. Research consistently shows that responding to a new lead within 5 minutes makes conversion 9x more likely. Waiting 48-72 hours to act on a buying signal means reaching out after a competitor already has. Track your signal-to-send latency as an operational metric and include it in your Tier 1 dashboard.

Mistake 5: Resetting attribution on re-engagement

If a prospect who received outbound 90 days ago re-engages through inbound, that deal belongs in a shared attribution model, not purely inbound. Outbound often plants the seed that inbound harvests weeks or months later. Clean up your attribution logic so outbound gets credit for deals it influenced, even when a later touchpoint closed the loop.

Mistake 6: Treating AI and human outbound as a single program

If you run both human SDRs and AI SDRs, do not combine their metrics into a single dashboard view for performance analysis. AI outbound operates at different volumes, different speeds, and different cost structures. Combining the two masks where each motion is strong and where it needs work. Report them separately in Tier 1 and Tier 2, then combine them only at Tier 3 for the aggregate revenue picture.

How Do You Build an Outbound Metrics Dashboard?

A functional outbound metrics dashboard has three views: one for SDRs (Tier 1 daily), one for revenue leaders (Tier 2 weekly), and one for executives (Tier 3 monthly). Each view uses a different cadence and a different set of metrics because each audience is making different decisions with the data.

The SDR Daily View (Tier 1)

- Today: Accounts contacted, emails delivered, deliverability rate, signal-to-send latency

- This week: Sequence completion rate, bounce rate, opt-out rate

- Action threshold: Pause sequences if deliverability drops below 85% or bounce rate exceeds 2%. Escalate immediately if spam complaint rate exceeds 0.3%.

The Revenue Leader Weekly View (Tier 2)

- This week: Reply rate, positive reply rate, meetings booked, meetings held, AI vs. human performance split

- This month: Reply rate trend vs. prior period, meeting held rate, sequence variant performance, multi-channel engagement breakdown

- Action threshold: A/B test messaging if positive reply rate drops below 0.8% for two consecutive weeks. Review AI personalization quality if AI positive reply rate drops more than 30% below human baseline.

The Executive Monthly View (Tier 3)

- This month: Pipeline generated from outbound, CPO, P:S ratio, AI vs. human CPO comparison

- This quarter: Outbound-attributed revenue, win rate, payback period trend, outbound as percentage of total pipeline

- Action threshold: Flag for budget review if P:S ratio drops below 4:1 for two consecutive months. Present expansion case if P:S exceeds 10:1 for a full quarter.
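The action thresholds for the three views can be encoded as simple rule checks. The following Python sketch is one way to do that, assuming percentages are passed as plain numbers and trend metrics as lists ordered oldest to newest. The function names and alert labels are hypothetical.

```python
from typing import Callable, List


def all_recent(values: List[float], periods: int, predicate: Callable[[float], bool]) -> bool:
    """True if the latest `periods` values exist and all satisfy the predicate."""
    recent = values[-periods:]
    return len(recent) == periods and all(predicate(v) for v in recent)


def tier1_alerts(deliverability_pct: float, bounce_pct: float, spam_complaint_pct: float) -> List[str]:
    alerts = []
    if deliverability_pct < 85 or bounce_pct > 2:
        alerts.append("pause_sequences")
    if spam_complaint_pct > 0.3:
        alerts.append("escalate_spam_complaints")
    return alerts


def tier2_alerts(weekly_positive_reply_pct: List[float], ai_pct: float, human_pct: float) -> List[str]:
    alerts = []
    # Positive reply rate below 0.8% for two consecutive weeks.
    if all_recent(weekly_positive_reply_pct, 2, lambda v: v < 0.8):
        alerts.append("ab_test_messaging")
    # AI positive reply rate more than 30% below the human baseline.
    if human_pct > 0 and ai_pct < 0.7 * human_pct:
        alerts.append("review_ai_personalization")
    return alerts


def tier3_alerts(monthly_ps_ratio: List[float]) -> List[str]:
    alerts = []
    # P:S below 4:1 for two consecutive months.
    if all_recent(monthly_ps_ratio, 2, lambda v: v < 4):
        alerts.append("flag_budget_review")
    # P:S above 10:1 for a full quarter (three months).
    if all_recent(monthly_ps_ratio, 3, lambda v: v > 10):
        alerts.append("present_expansion_case")
    return alerts


print(tier1_alerts(83.0, 1.2, 0.1))
print(tier2_alerts([1.1, 0.7, 0.6], ai_pct=0.9, human_pct=1.0))
print(tier3_alerts([11.0, 12.5, 10.4]))
```

Requiring a full run of consecutive periods before firing keeps a single noisy week or month from triggering a budget conversation.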

Unify's built-in analytics dashboards are designed around exactly this three-view structure. The platform connects play-level activity metrics to account-level pipeline attribution, which means you can drill from an executive-level P:S ratio down to the specific play, sequence, and signal that generated each opportunity. Teams using Unify's dashboards typically spend less than 30 minutes per week on reporting, because the data flows automatically from sequence execution to revenue attribution without manual assembly across multiple tools.

Frequently Asked Questions About Automated Outbound Metrics

What is a good reply rate for automated outbound?

According to Instantly's 2026 Cold Email Benchmark Report (analyzing billions of cold emails), the average cold email reply rate is 3.43%. Top-quartile campaigns achieve 5.5%, and elite performers exceed 10.7%. A positive reply rate (interest-only replies) of 1-2% is a healthy baseline for cold outbound. If you are sending 1,000 emails per week and getting fewer than 10 positive replies, the issue is targeting or messaging, not volume.

How do I calculate the ROI of my outbound program?

Use the formula: Outbound ROI = (Outbound-Attributed Revenue - Total Program Cost) / Total Program Cost x 100. Total program cost should include SDR compensation, tooling (including AI platforms), and a management overhead estimate. Outbound-attributed revenue should use both closed-won from the current period and a projected figure based on current pipeline and historical win rate.

What metrics should I present to the CFO for outbound budget approval?

Lead with pipeline-to-spend ratio and outbound-attributed revenue. Support with CPO trend (declining CPO shows the program is maturing), win rate, and a projection of pipeline gap if the program is reduced. Executives approve investments that show evidence of performance, efficiency improvement, and quantified downside of inaction.

How do AI SDR metrics differ from human SDR metrics?

AI SDRs should be measured on the same Tier 2 and Tier 3 outcome metrics as human SDRs: positive reply rate, meeting held rate, pipeline generated, and cost per opportunity. The key difference is in Tier 1. AI SDRs operate at much higher volume, so activity metrics like accounts contacted per week become less meaningful as a performance indicator. Focus instead on signal-to-send latency (should be minutes, not hours), personalization quality score, and the ratio of AI-generated messages that receive positive replies versus human-written ones.

How many metrics is too many for an outbound dashboard?

Keep each dashboard view to five to seven metrics. More than that creates noise and prevents clear action signals. The three-tier structure naturally constrains dashboard complexity: Tier 1 gets five to six metrics, Tier 2 gets five to six, Tier 3 gets five to six. That is a total of 15-18 metrics across three views, which is sufficient to fully characterize any automated outbound program.

At what point should I start tracking Tier 3 revenue metrics?

Start tracking Tier 3 revenue metrics from day one, even if the numbers are zero for the first several weeks. The time lag between outbound activity and closed-won revenue means you need a long measurement runway. If you wait until month four to set up Tier 3 tracking, you will not have the baseline data to show a trend when budget review happens at month six. Set up tracking on day one, expect the first Tier 3 data to appear around weeks 6-10, and begin trend analysis at the 90-day mark.

What is the difference between pipeline-to-spend ratio and outbound ROI?

Pipeline-to-spend ratio (P:S) is a leading indicator. It measures the pipeline value generated for every dollar spent on the outbound program. Outbound ROI is a lagging indicator. It measures actual closed revenue against total program cost. Use P:S for weekly and monthly decision-making because it moves faster than closed revenue. Use ROI in quarterly and annual budget reviews because it is the definitive measure of return. A healthy program will show a P:S of 5:1 or higher and an ROI that grows as the program matures and win rates improve.

What is a good cost per opportunity for outbound in 2026?

Cost per opportunity varies significantly by company stage and deal size. Seed-stage companies typically see $800-$2,500 CPO. Series A/B companies range from $1,500-$4,000. Series C+ and enterprise teams range from $3,000-$8,000. Customer acquisition costs have risen 40-60% since 2023, making CPO efficiency more important than ever. AI-assisted outbound programs using signal-based targeting can reduce CPO by 30-50% compared to volume-based approaches.

The Bottom Line

Measuring automated outbound success is not complicated, but most teams do it wrong by stopping at Tier 1 activity metrics. The 3-Tier framework gives every stakeholder in your organization the data they need: operational health for SDR managers, engagement performance for revenue leaders, and business impact for executives and finance.

In 2026, two additional factors make measurement even more critical. First, AI SDRs require their own measurement layer. Tracking AI personalization quality, signal-to-send latency, and AI-vs-human CPO comparisons is now table stakes for any team running hybrid outbound. Second, multi-channel attribution is harder than ever, and the teams that solve it are the teams that get to keep their budget.

The teams that build and defend outbound programs year after year are the ones that track all three tiers from the start, maintain clean account-level attribution, and present their results in a language finance teams understand: return on investment, cost per outcome, and projected pipeline.

Unify is built to make exactly this measurement possible. By connecting buying signals to outbound sequences and tracking engagement at the unified account level, Unify gives revenue teams the attribution clarity they need to produce Tier 3 data without manually assembling it from five different tools. If you are building an automated outbound program and want the metrics infrastructure in place from day one, see how Unify works.

Sources

- Instantly, "Cold Email Benchmark Report 2026: Reply Rates, Deliverability and Trends"

- SalesOS, "Outbound SDR Statistics 2025: AI, Metrics & Performance Data"

- Martal Group, "B2B Cold Email Statistics 2026: Benchmarks & What Works Now"

- Gartner, "7 Technology Disruptions That Will Completely Change Sales By 2027"

- Apple, "Use Mail Privacy Protection on iPhone"

- Unify, "Pipeline as a Science: Metrics that Matter in Modern Outbound"

- Unify, "How Our Growth Team Operates: 3 Frameworks for $120M Pipeline"

- Unify, "Cold Email in 2026: Domains, Deliverability, Sequences"

- Validity, "2025 Email Deliverability Benchmark Report"

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
