Quick answer: The best AI SDR tool is the one that scores highest on twelve hard criteria, not the one with the loudest marketing. Grade every vendor on signal quality, agent controllability, CRM sync fidelity, objection handling, deliverability hygiene, human-in-the-loop review, ROI attribution, deployment time, prompt and persona customization, channel breadth, pricing model, and enterprise security. Weight each criterion by what your deal motion actually requires, run a 2-week POC, and buy based on scorecard totals. Most "AI SDR" tools score below 50 out of 100 on this rubric.

Every AI SDR buyer's guide online has the same problem. The vendor blogs rank themselves first. The aggregator sites rank whoever paid for the placement. And the "criteria" they cite are usually feature checklists that tell you nothing about whether the thing actually works in your pipeline.

This guide is different. It gives you the 12-criteria scorecard we use at Unify when customers ask us how to evaluate AI SDR tools against each other, including us. It includes the weights, a side-by-side comparison of the major categories of vendors, a 2-week POC test plan you can run yourself, and the three questions buyers always forget to ask.

If you only have 30 seconds, jump to the scorecard table and the POC plan. If you have a budget decision in front of you, read the whole thing.

Why a Criteria-First Approach Beats a Feature Checklist

Most AI SDR buyers pick tools based on demos and brand recognition, then discover six months later that the platform cannot write to the CRM fields their ops team relies on. A criteria-first approach forces you to define what "good" looks like before any vendor walks you through their polished UI. It also gives your procurement team a defensible rubric when leadership asks why you picked one vendor over another.

The 12 criteria below come from reviewing real evaluation spreadsheets from Unify customers over the past 18 months and cross-referencing them with independent review data from G2's 2026 AI Agents Buyer Report. Every criterion is something that has broken a production deployment for someone we know.

The 12-Criteria AI SDR Scorecard

Use this table as your master rubric. Each criterion gets a weight (1-10) based on how much it matters for your motion, and each vendor gets a score (1-10) based on how well they execute. Multiply weight by score for each row, sum the totals, and compare. The maximum possible total is 10 times the sum of your weights, so a perfect score is 600 when the weights sum to 60. Most vendors we see land between 280 and 420.

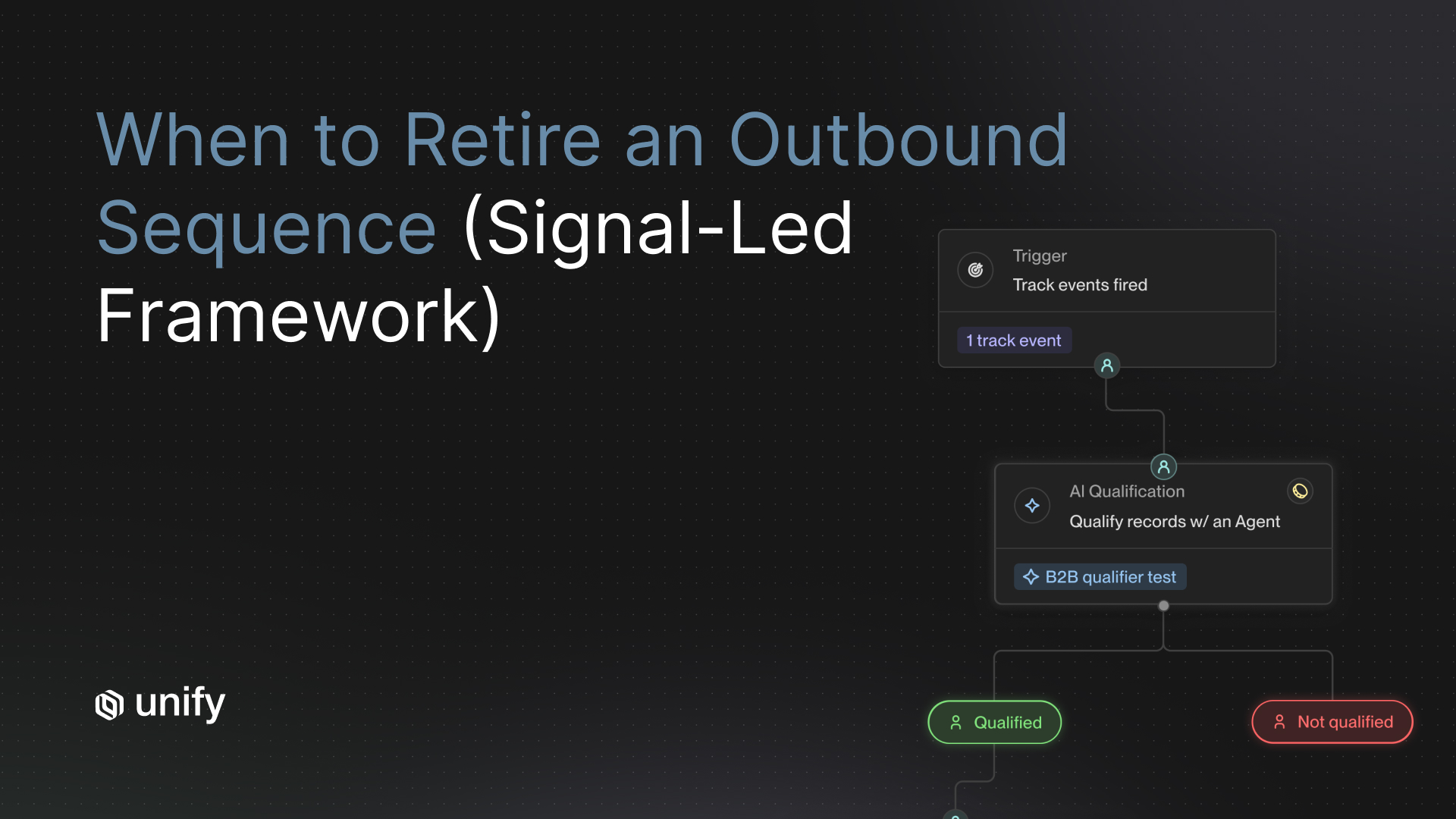

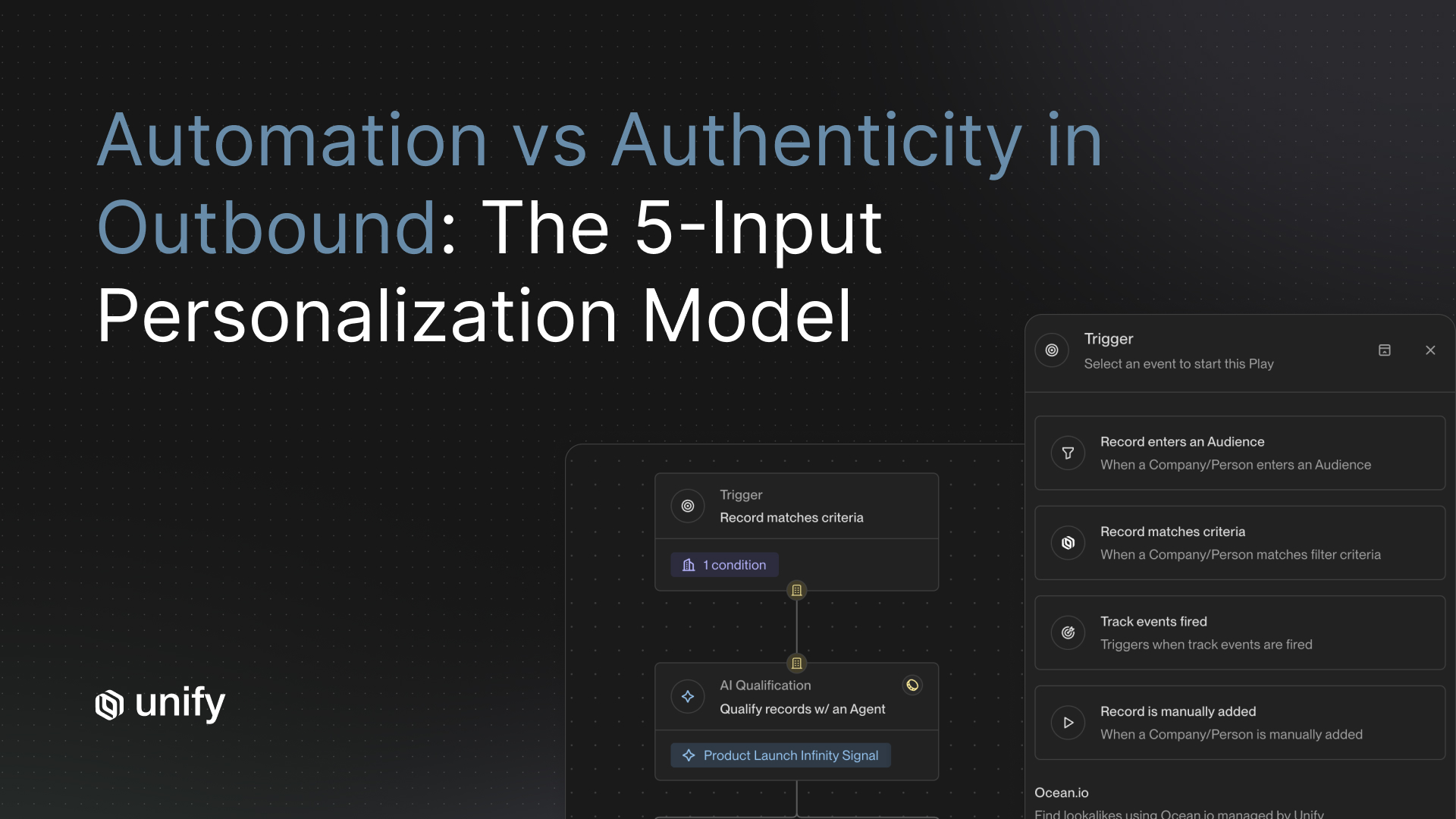
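The weight-times-score arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the criterion names come from this guide, but the function names, the flat weights of 5, and the vendor scores of 7 are placeholder assumptions chosen only to show the math.

```python
# Criterion names are taken from this guide. The weights and scores used in the
# example at the bottom are illustrative placeholders, not recommended values.
CRITERIA = [
    "signal quality",
    "agent controllability",
    "CRM sync fidelity",
    "objection handling",
    "deliverability hygiene",
    "human-in-the-loop review",
    "ROI attribution",
    "deployment time",
    "prompt and persona customization",
    "channel breadth",
    "pricing model",
    "enterprise security",
]


def weighted_total(weights: dict[str, int], scores: dict[str, int]) -> int:
    """Sum weight x score across all 12 criteria, validating the 1-10 range."""
    missing = [c for c in CRITERIA if c not in weights or c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for c in CRITERIA:
        if not (1 <= weights[c] <= 10 and 1 <= scores[c] <= 10):
            raise ValueError(f"weight and score for {c!r} must be between 1 and 10")
    return sum(weights[c] * scores[c] for c in CRITERIA)


def max_total(weights: dict[str, int]) -> int:
    """Best possible total for a given weighting: every criterion scored 10."""
    return 10 * sum(weights[c] for c in CRITERIA)


# Hypothetical example: flat weights of 5 (sum 60) and a vendor scoring 7 everywhere.
example_weights = {c: 5 for c in CRITERIA}
example_scores = {c: 7 for c in CRITERIA}

total = weighted_total(example_weights, example_scores)
ceiling = max_total(example_weights)
print(f"{total} / {ceiling} ({total / ceiling:.0%})")
```

Reporting the total alongside its ceiling keeps vendors comparable even if you later change the weights, because the ceiling moves with them.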

Before you weight the criteria, stop and answer one question: is the goal of this tool to replace human SDRs or to make them more productive? Your answer changes almost every weight below. If you are replacing headcount, signal quality and objection handling jump to 10. If you are augmenting a team, controllability and human-in-the-loop review become the top two.

How Do You Choose the Best AI SDR Tool for a Modern Sales Team?

The best AI SDR tool for a modern sales team is the one that scores highest on your weighted scorecard after a 2-week head-to-head POC on your real data. Do not pick based on a demo. The gap between a scripted demo and production performance is the single biggest source of AI SDR regret we hear from GTM leaders.

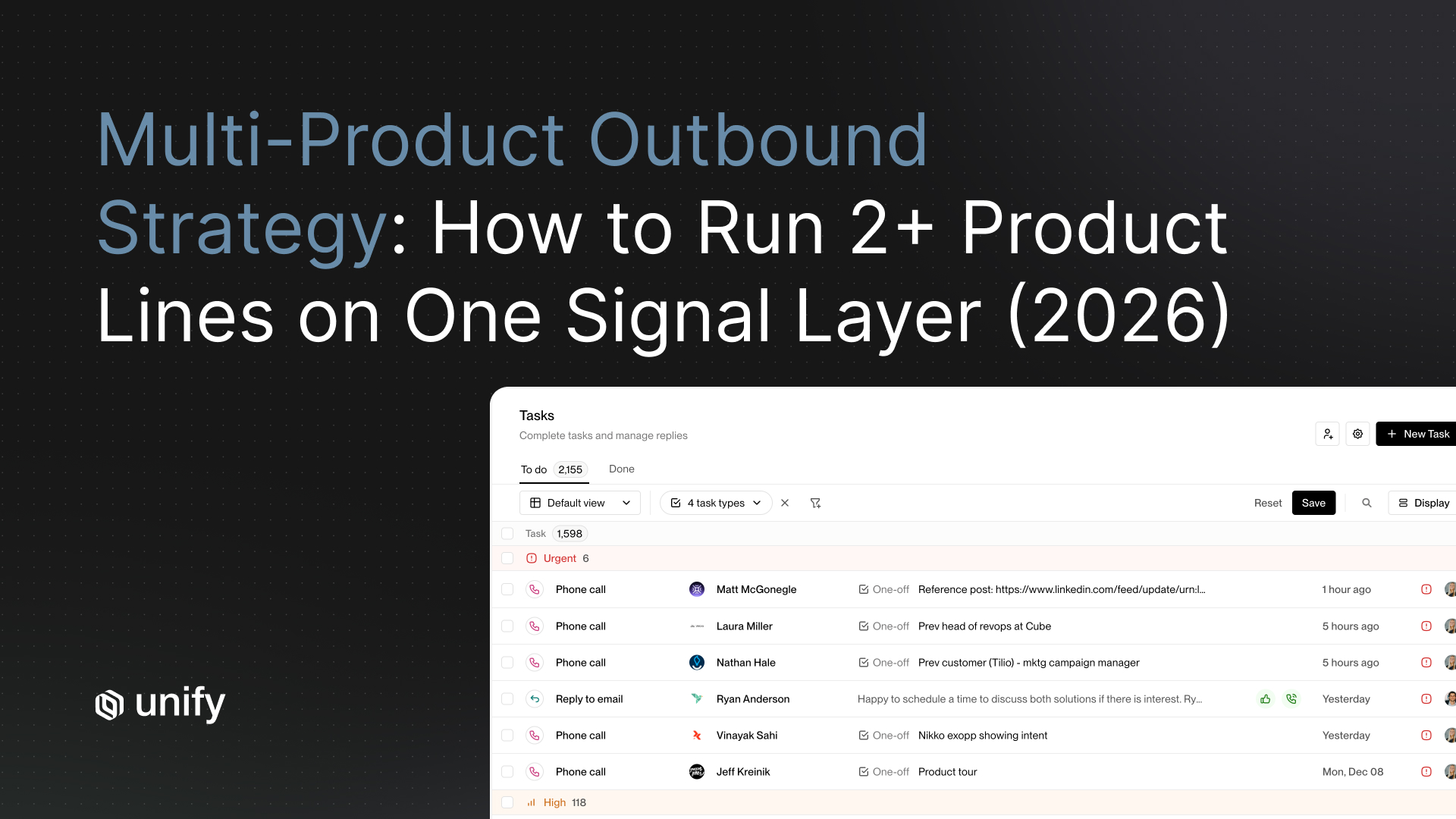

Three decisions make or break the evaluation. First, decide whether you want an autonomous agent, a human-in-the-loop copilot, or a signal-driven platform that orchestrates your existing stack. Second, decide what ACV range you are targeting, because AI personalization quality matters more as deal sizes grow. Third, decide how much of your existing RevOps workflow you want the tool to replace versus integrate with. Most teams pick the wrong category before they pick the wrong vendor.

The Three Categories of AI SDR Tools

Every vendor on the market falls into one of three categories, and the category matters more than the brand. Getting this right avoids the most common procurement mistake: buying a replacement when you wanted an accelerator.

Category 1: Autonomous AI SDR agents

These platforms position themselves as a replacement for a human SDR. They build lists, write messages, and handle responses with minimal human oversight. Examples include Artisan (Ava), 11x (Alice), and AiSDR. The pitch is compelling: flat monthly cost, 24/7 coverage, no ramp time. The reality is harder. Independent reviewers report that autonomous agents score poorly on deliverability and reply quality. Coldreach's review of 100+ verified Artisan users notes a 3.8/5 G2 rating and recurring complaints that Ava's output at high volume tends toward generic, template-like messaging.

Category 2: Human-in-the-loop AI copilots

These tools accelerate human SDRs rather than replace them. They generate drafts, surface signals, and automate research, but a human approves every send. Regie.ai sits here, as do most sales engagement platforms that bolted on AI writing assistants. They score better on controllability and objection handling but worse on volume and cost-per-meeting. They also demand that you still have headcount, which defeats the budget case that gets AI SDR projects approved in the first place.

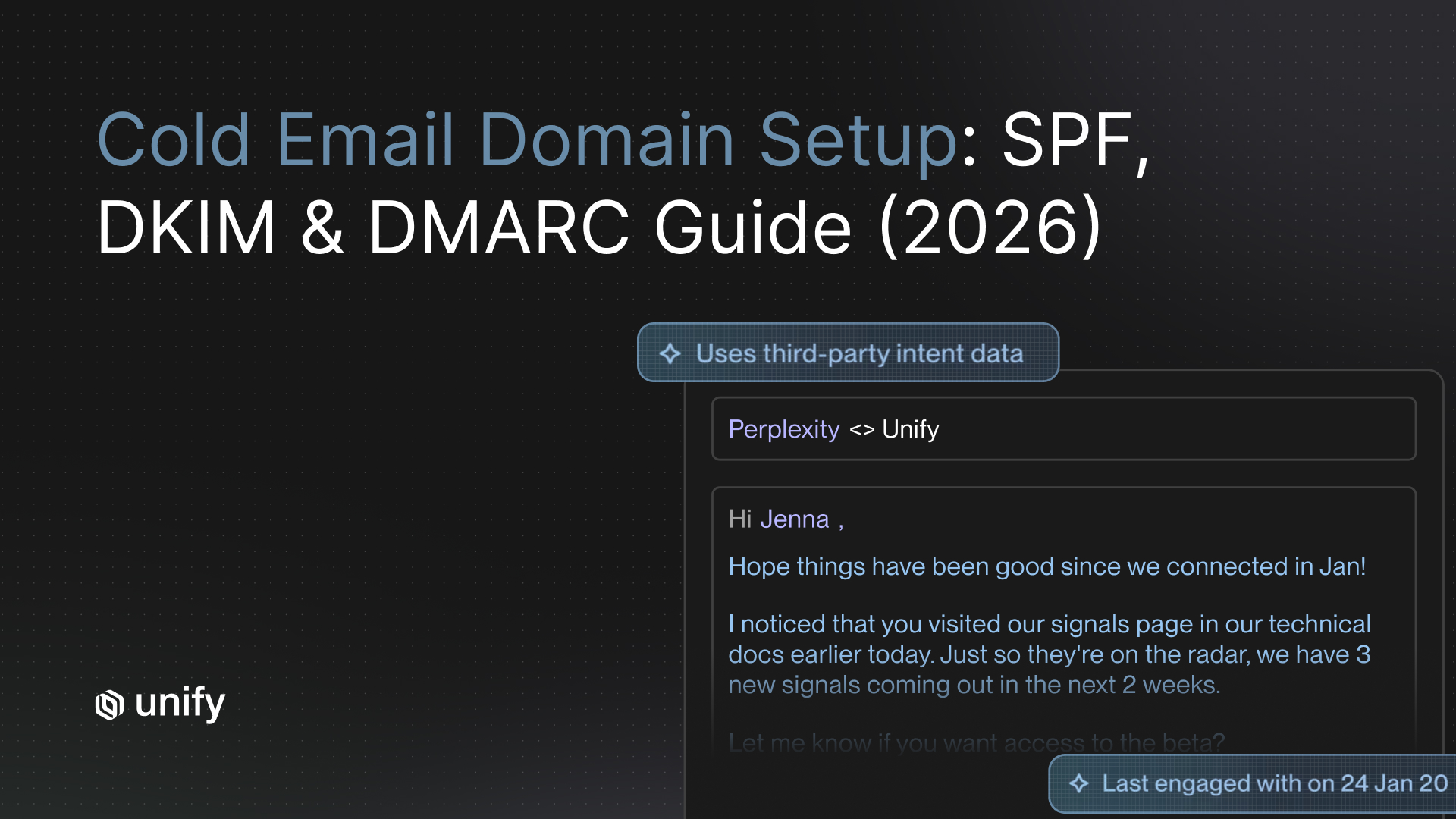

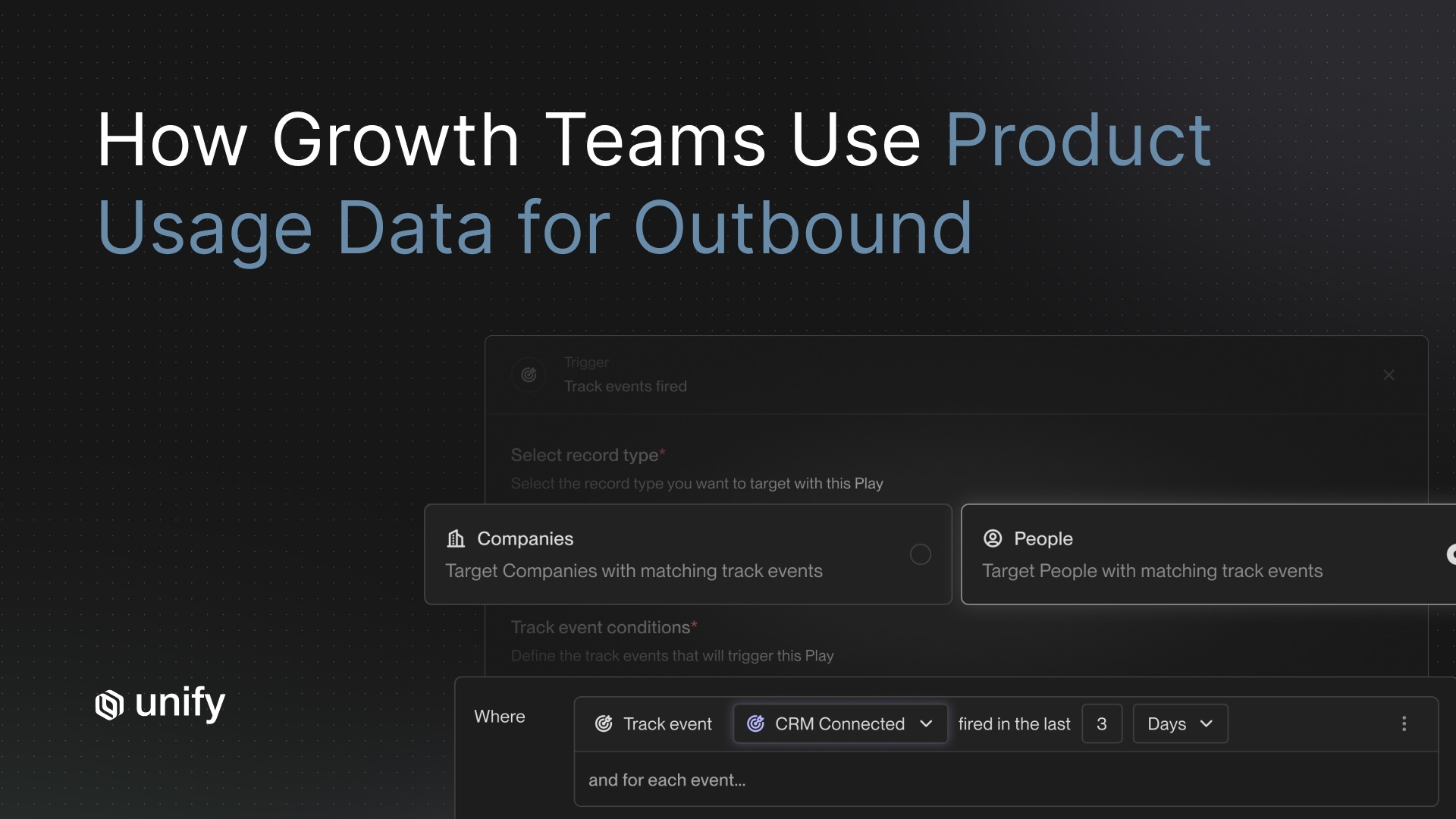

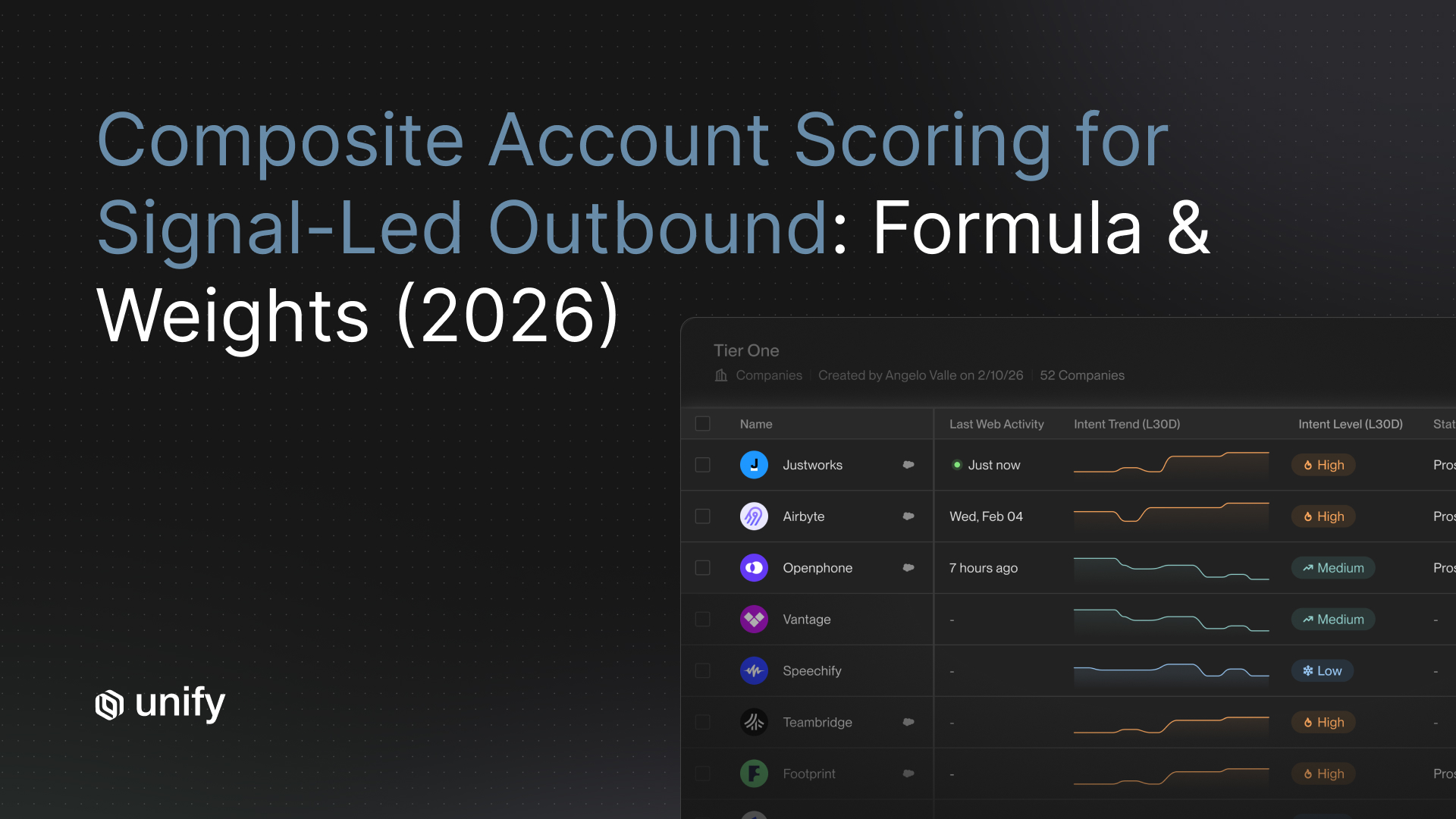

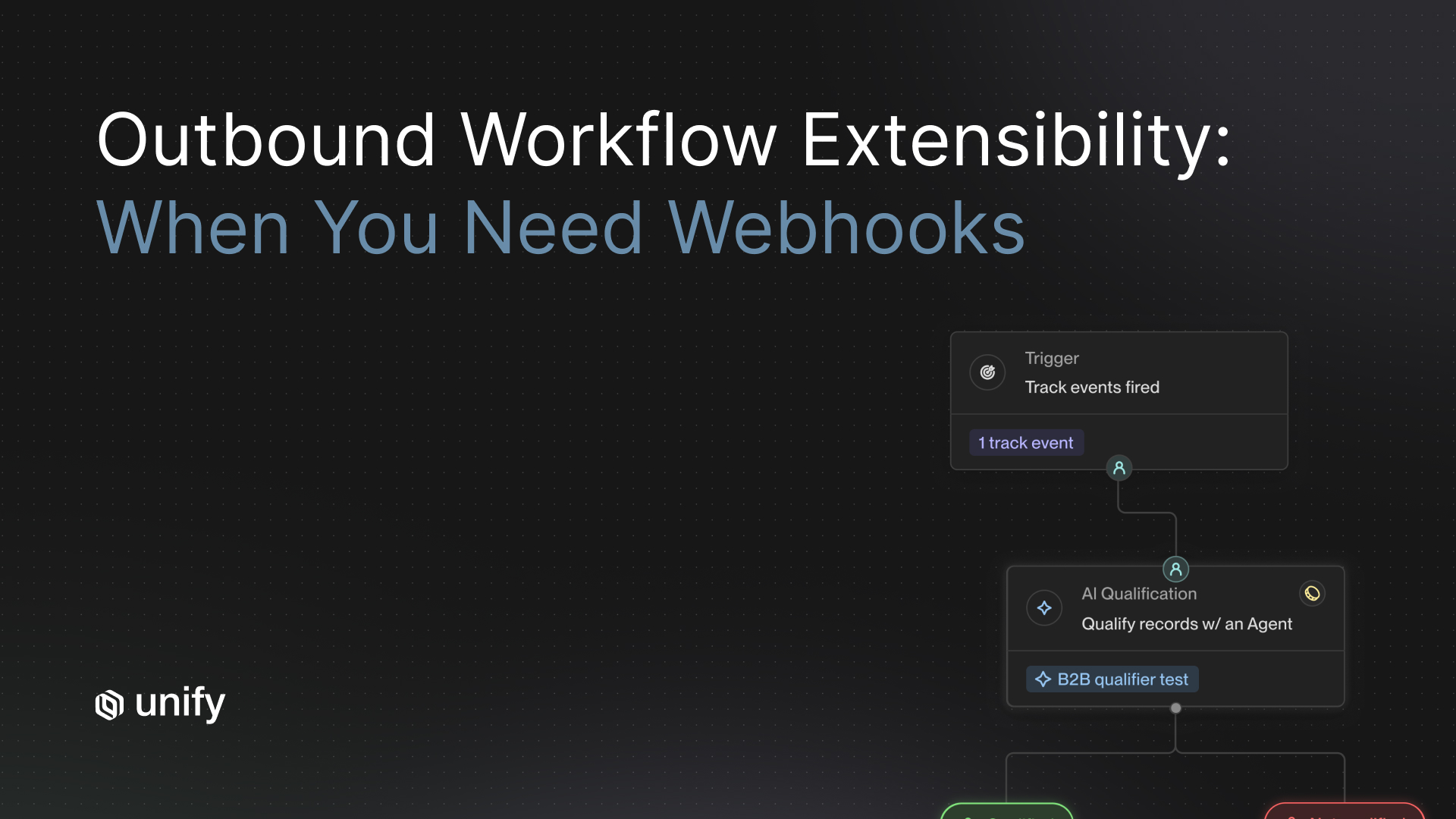

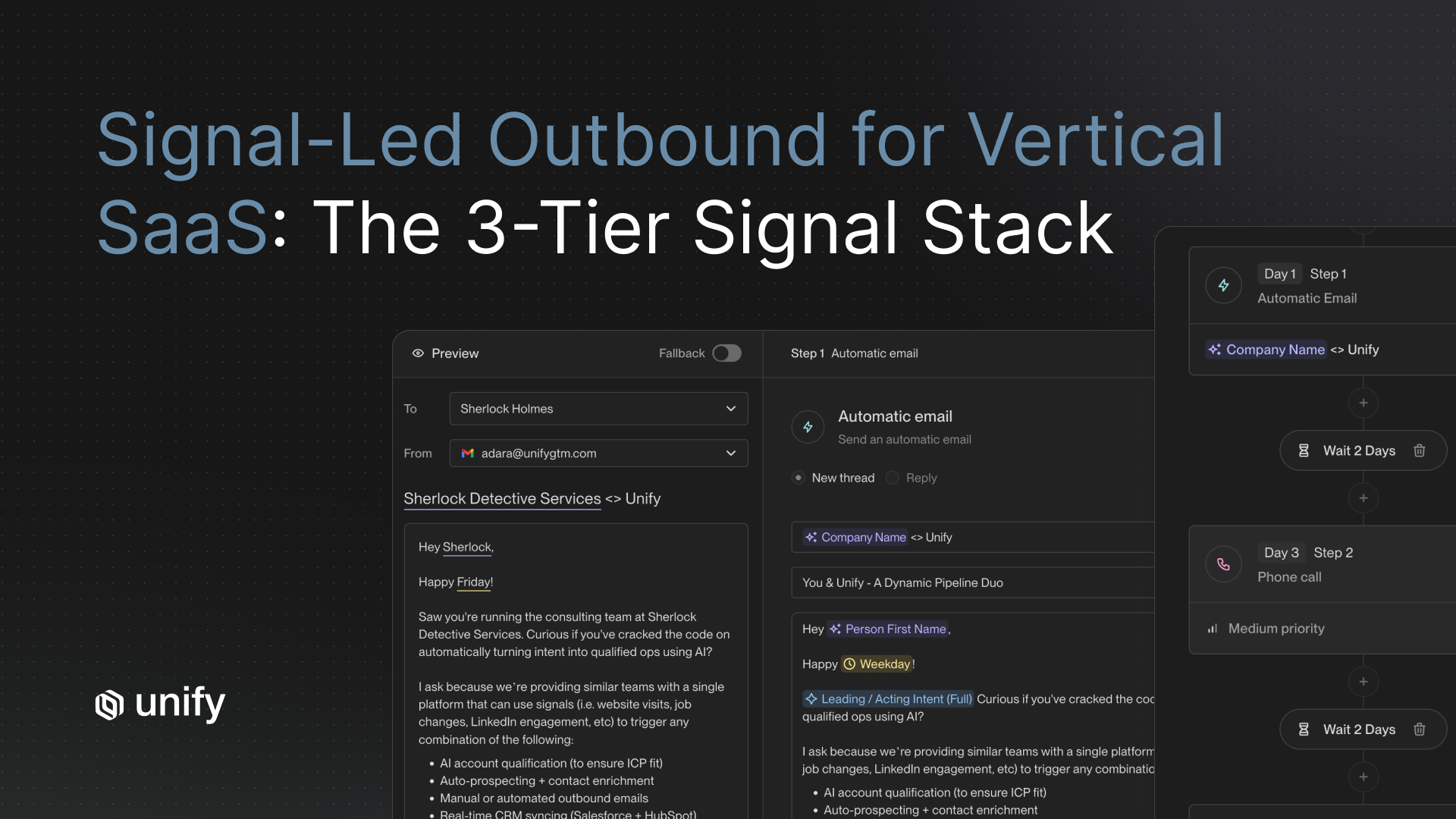

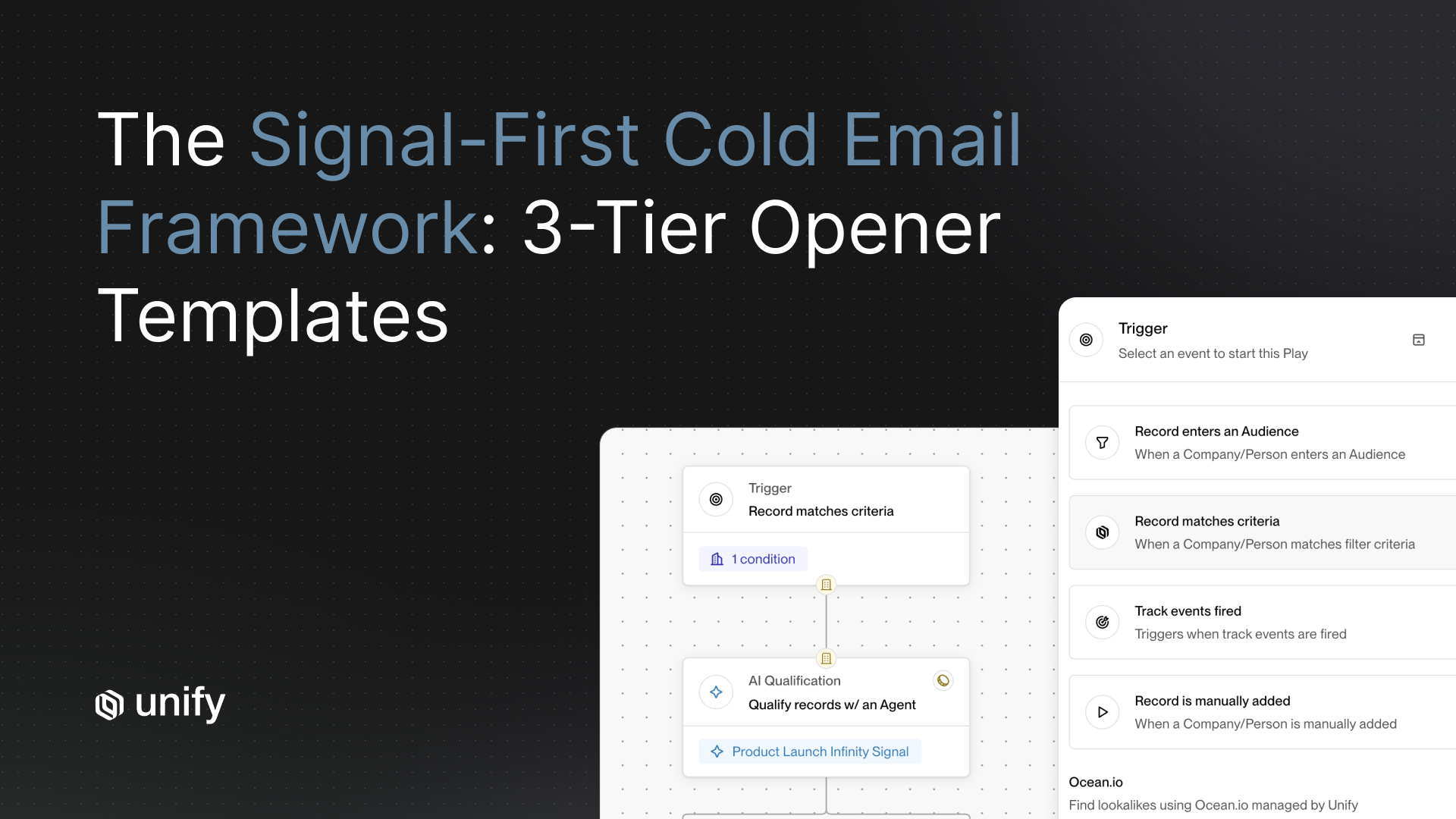

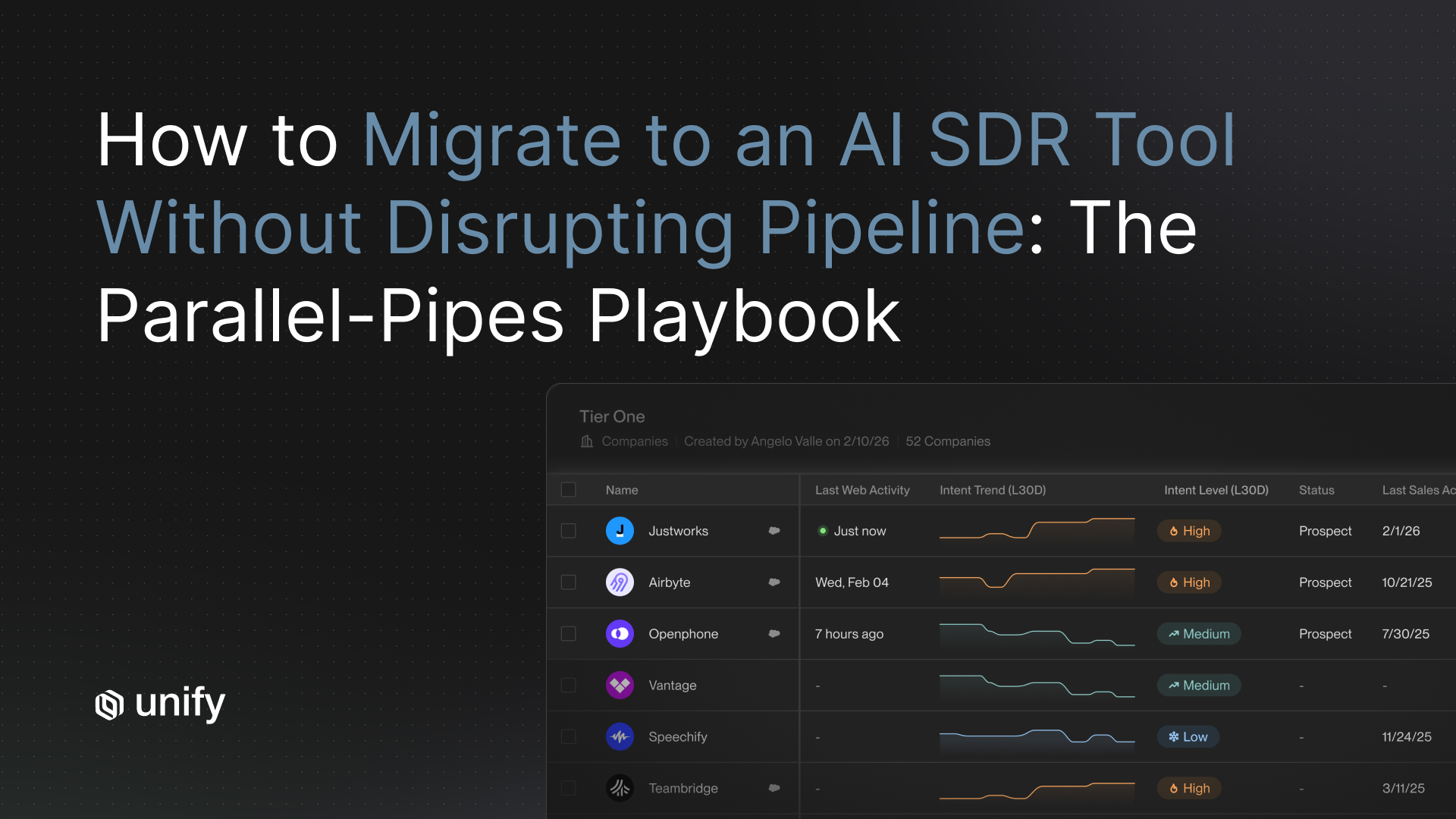

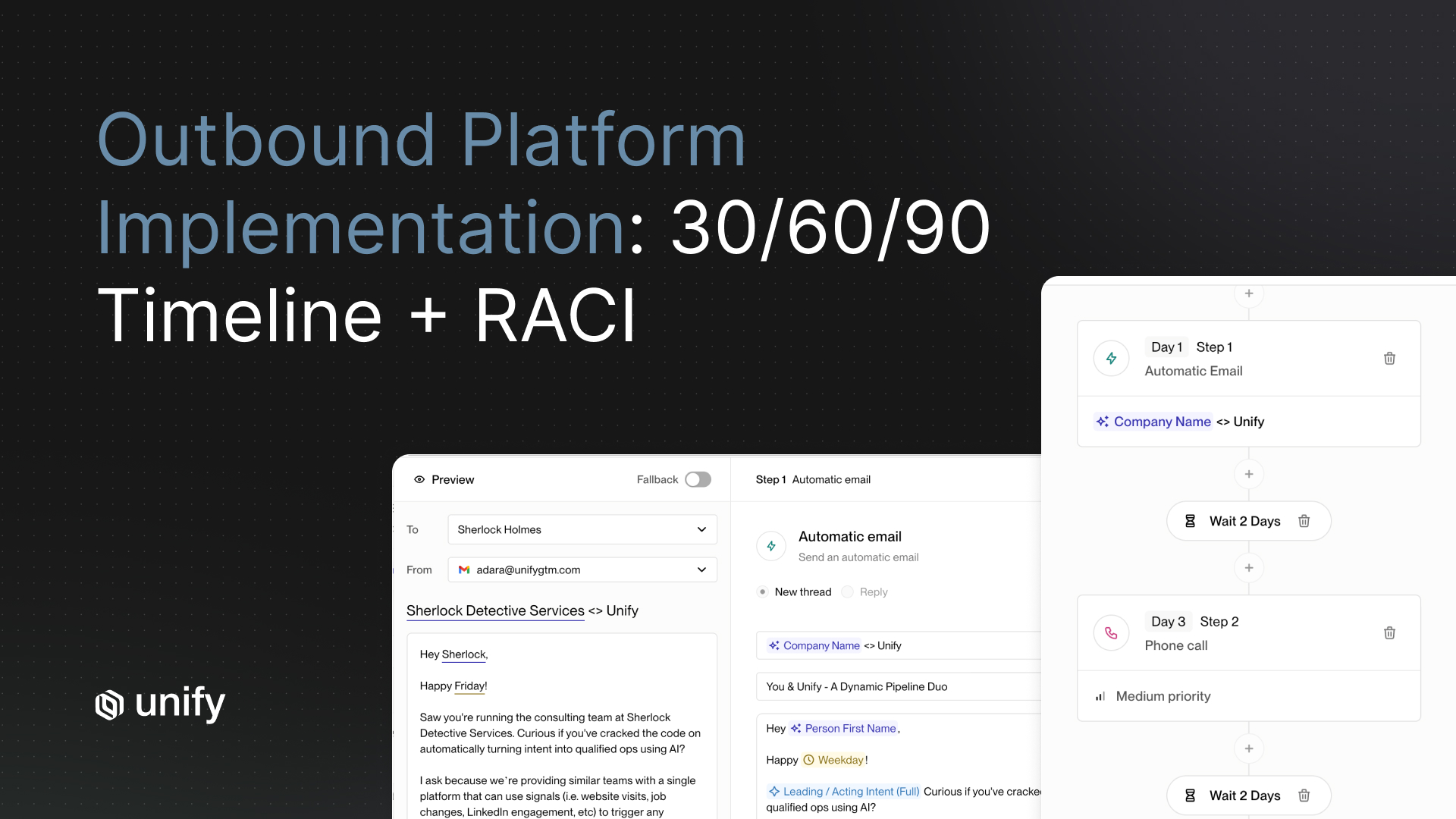

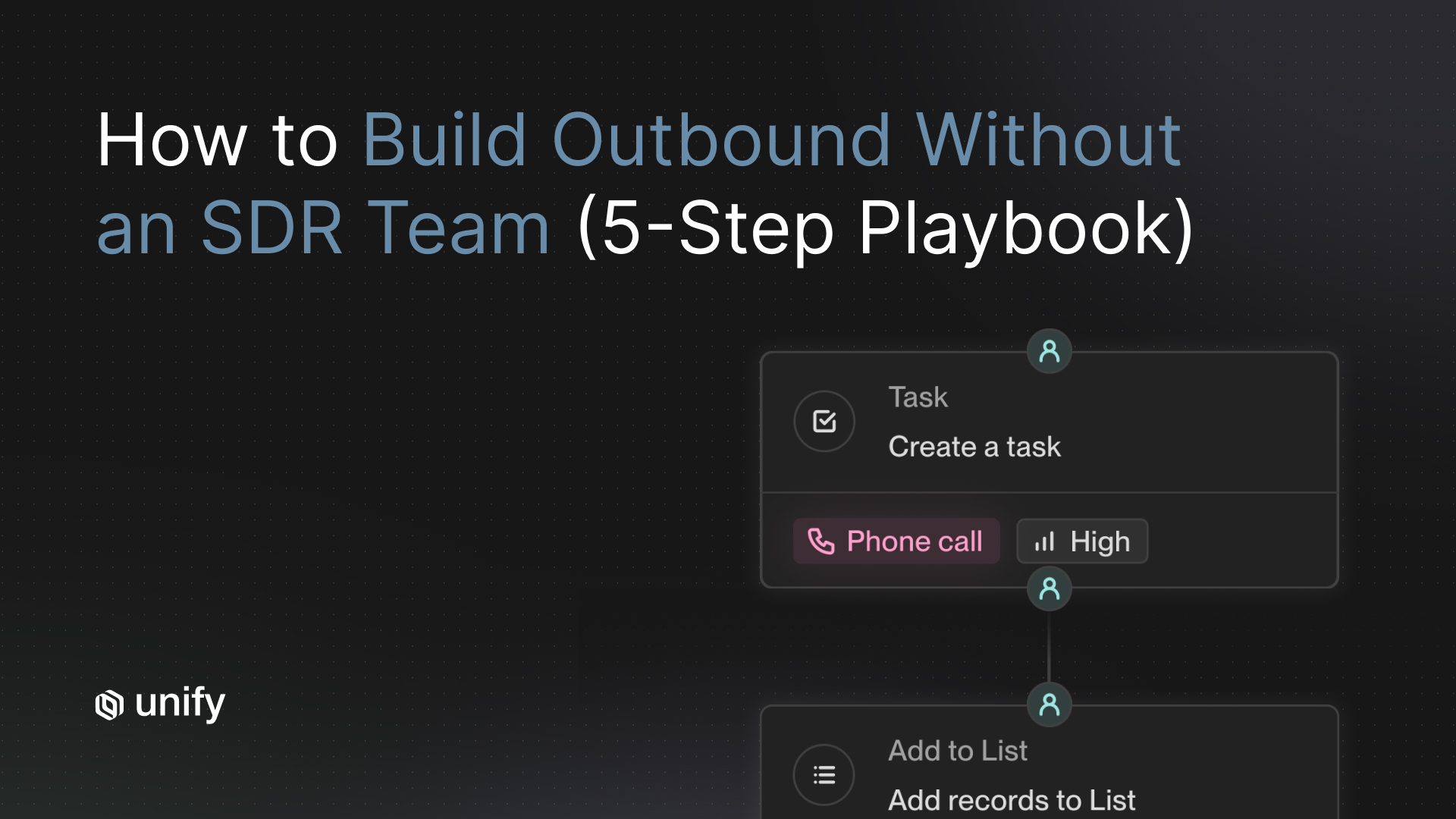

Category 3: Signal-driven platforms that orchestrate your stack

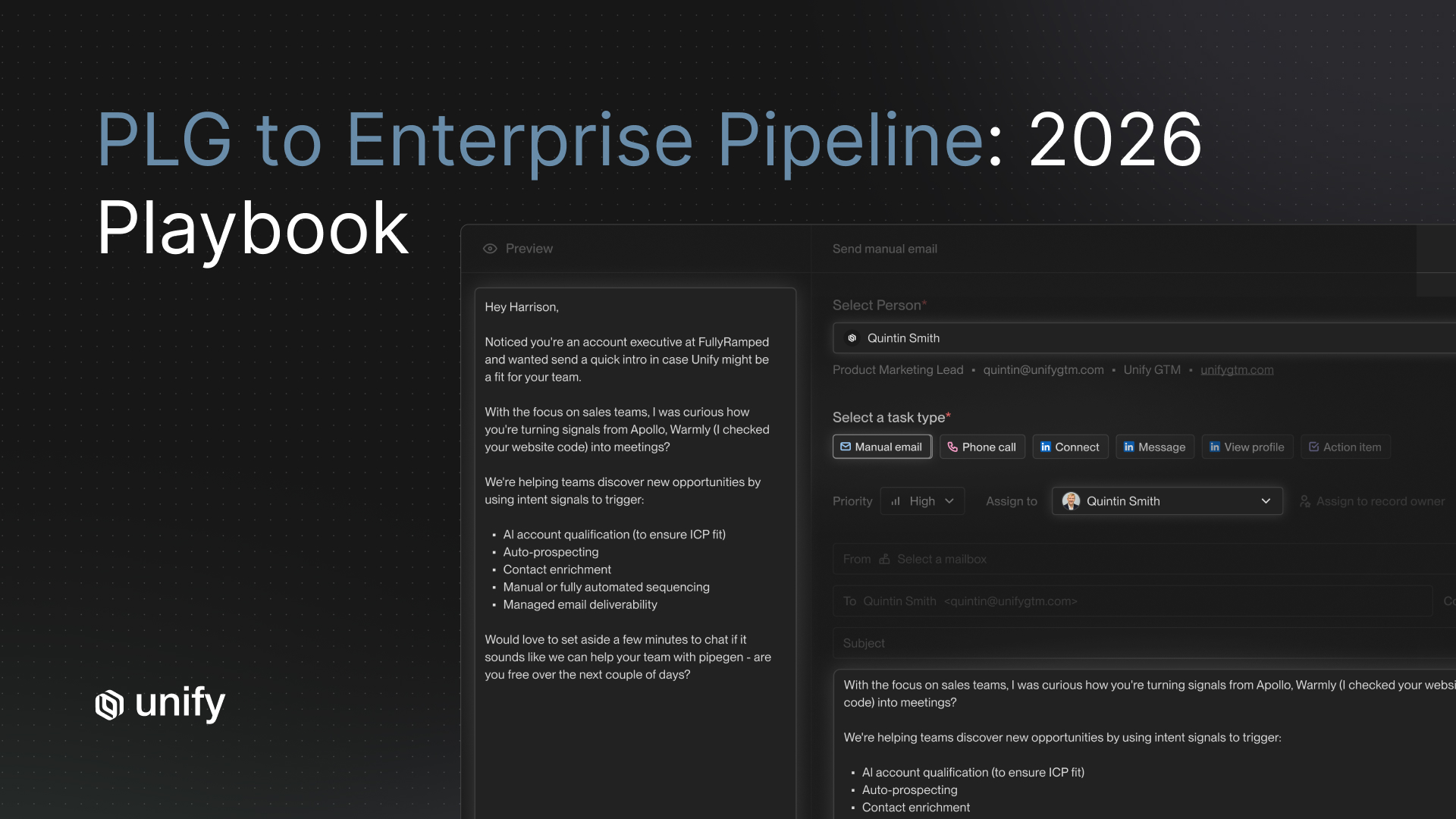

This is the category Unify built. Instead of being the sender, Unify is the system-of-action that ingests first-party intent signals, resolves them to accounts and contacts, enriches with waterfall data, and routes into whatever execution layer you already own, including your existing sequencer, AI writer, or human SDR. The tradeoff is that you bring your own channel and writing layer. The upside is higher signal quality, cleaner CRM sync, and no vendor lock-in on the send layer. For teams that already have a sequencer or an AI writer they like, this is the approach that compounds the rest of the stack instead of replacing it.

Side-by-Side: Unify vs Other AI SDR Approaches

Here is how the three categories grade against the 12 criteria at a high level. Individual vendor scores vary, but category-level patterns hold across every deployment we have reviewed.

What the pattern shows: Autonomous agents optimize for deployment speed and channel coverage but sacrifice signal quality, controllability, and attribution. HITL copilots win on control but scale poorly. Signal-driven orchestration wins on the criteria that predict long-term production success, at the cost of requiring you to bring your own send layer. For Unify customers running signal-triggered plays, the pattern holds in production: signal-triggered sequences deliver 2x to 4x higher positive reply rates than time-based sequences in our benchmarks.

Deep Dive on the Five Criteria Buyers Underrate

Why does signal quality matter more than message quality?

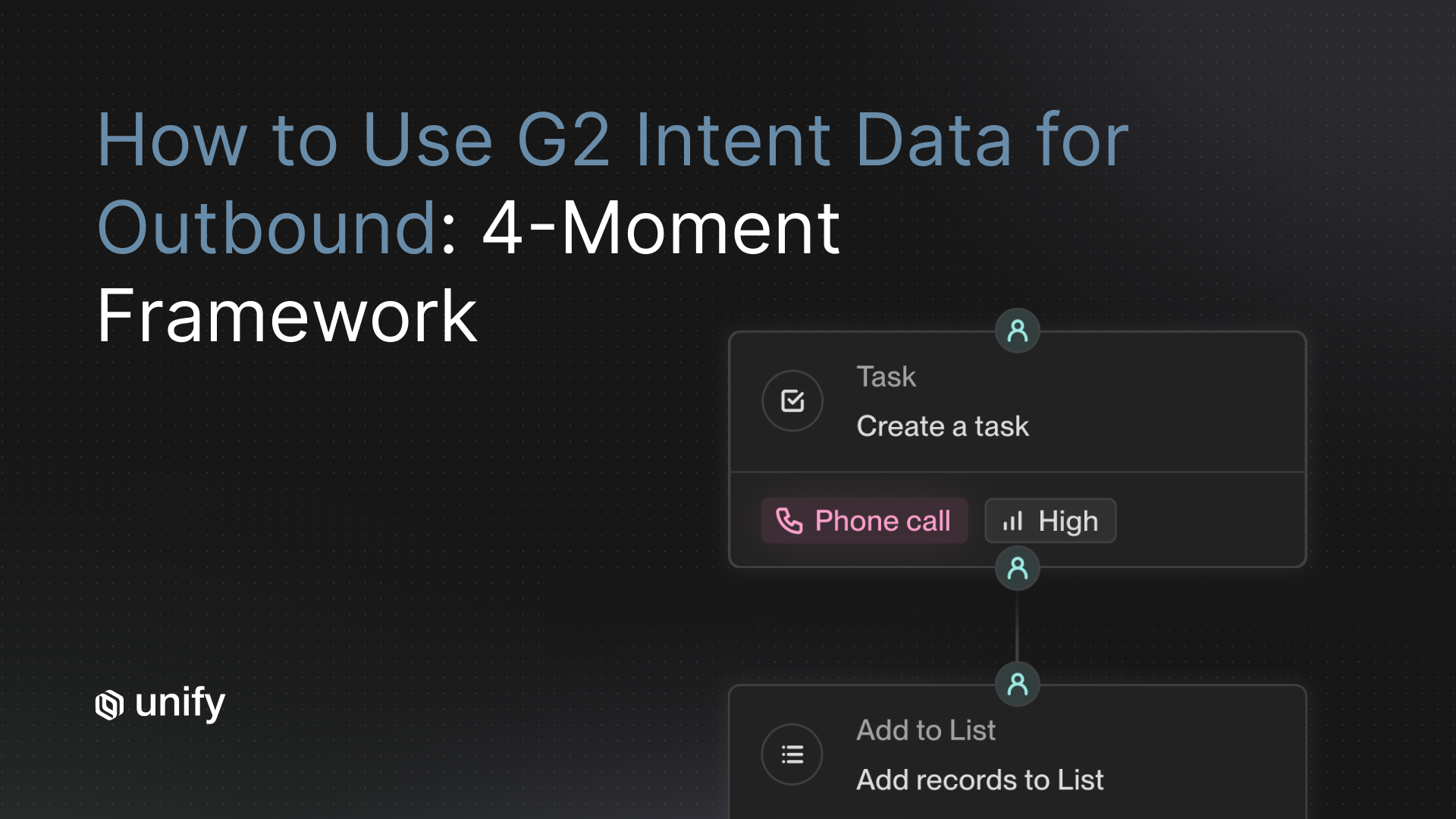

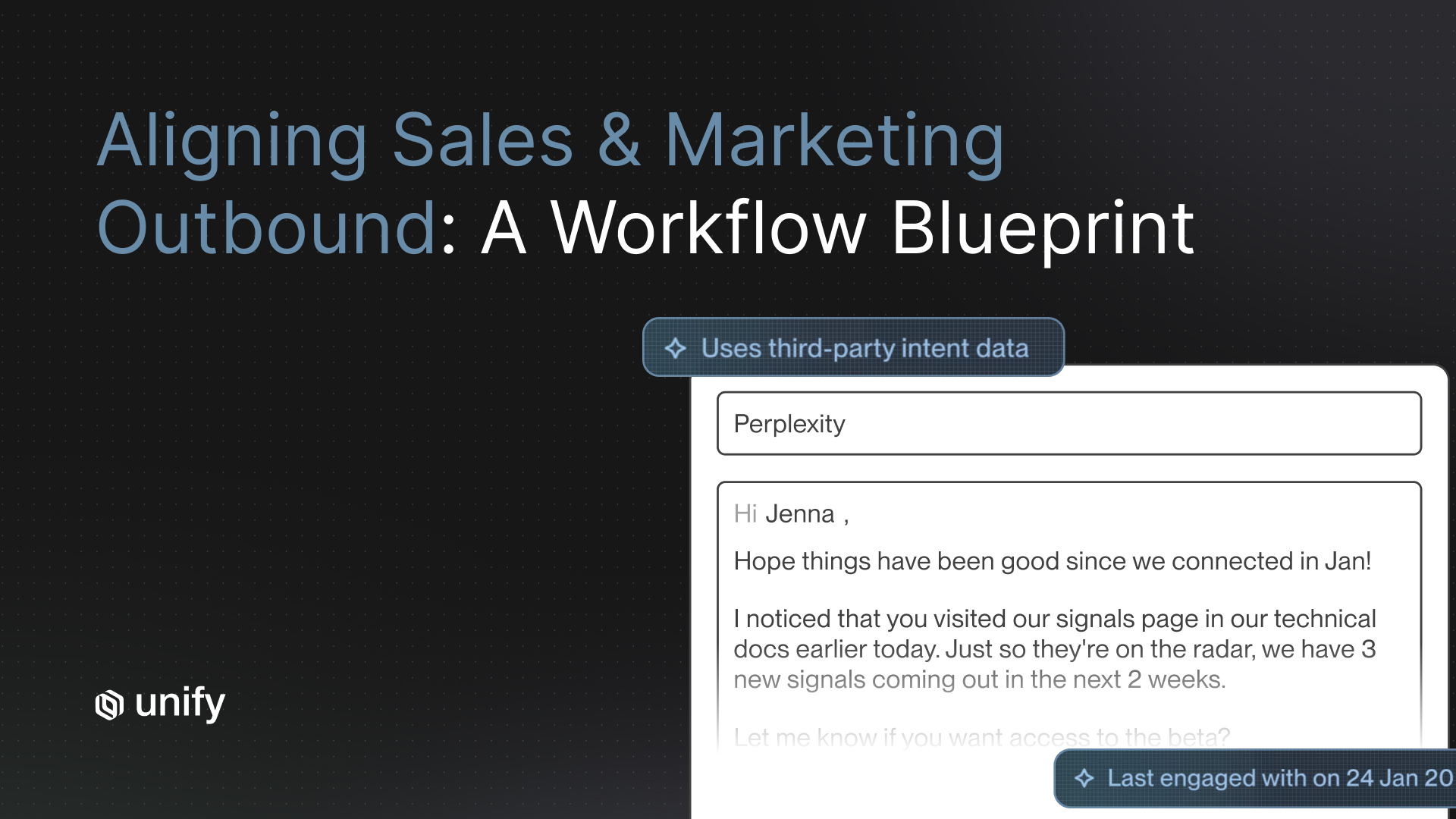

Signal quality determines who you reach out to and when, which is the highest-leverage decision in the entire outbound motion. A perfectly written message to the wrong account at the wrong time still fails. Most autonomous AI SDR vendors rely on scraped or pre-aggregated data that refreshes weekly or monthly, meaning your outreach is based on stale job titles, moved-on champions, and outdated tech stacks. First-party intent signals, such as website visits, product signup attempts, or content downloads, are 5x to 10x more predictive of response than cold firmographic matches, and they are the input that separates "spray and pray" from a real modern AI SDR motion.

What does "agent controllability" actually mean in practice?

Agent controllability is the ability to define, in writing, what the agent will and will not do without human approval. It covers three capabilities: scoped permissions (this agent can send but not reply), action-level approval (a human must approve any message going to a named account), and guardrails (the agent cannot mention competitors or make pricing claims). Teams that skip this criterion discover the problem the first time an autonomous agent sends an off-brand message to a $500K ACV account. By then it is too late.
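To make the three capabilities concrete, here is a minimal sketch of a written policy expressed as data plus a single check. Everything in it is hypothetical: `AgentPolicy`, `review_action`, the action names, the account name, and the banned phrases are illustrative assumptions, not any vendor's actual API. A real platform would expose its own configuration surface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy object covering the three capabilities above."""
    allowed_actions: frozenset          # scoped permissions, e.g. {"send"}
    approval_required_accounts: frozenset  # named accounts needing human sign-off
    banned_phrases: tuple               # guardrails, matched case-insensitively


def review_action(policy: AgentPolicy, action: str, account: str, message: str) -> str:
    """Return 'block', 'needs_approval', or 'allow' for a proposed agent action."""
    # 1. Scoped permissions: the agent may only perform actions it was granted.
    if action not in policy.allowed_actions:
        return "block"
    # 2. Guardrails: reject any message containing a banned phrase.
    lowered = message.lower()
    if any(phrase.lower() in lowered for phrase in policy.banned_phrases):
        return "block"
    # 3. Action-level approval: named accounts always go to a human first.
    if account in policy.approval_required_accounts:
        return "needs_approval"
    return "allow"


# Hypothetical policy: the agent can send but not reply, one named account
# requires approval, and pricing or competitor mentions are banned.
policy = AgentPolicy(
    allowed_actions=frozenset({"send"}),
    approval_required_accounts=frozenset({"Example Enterprise Co"}),
    banned_phrases=("discount", "CompetitorX"),
)

print(review_action(policy, "reply", "Small Co", "Thanks for getting back to us."))
print(review_action(policy, "send", "Example Enterprise Co", "Saw your team is hiring SDRs."))
print(review_action(policy, "send", "Small Co", "We can offer a 20% discount."))
```

The checks run in order of severity, so a banned phrase blocks a message even for an account that would otherwise only need approval. Simple substring matching is a deliberate simplification here; a production guardrail would need something more robust.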

How should you test CRM sync fidelity before signing?

Run a simulated workflow with 50 contacts across two objects (accounts and opportunities), trigger the agent, then audit Salesforce or HubSpot to see what actually got written. Check three things: did activities post to the right account and contact, did field updates follow your dedup rules, and did retried syncs create duplicate records. This one test has killed more AI SDR deals in our customer base than any other. A platform that cannot write cleanly to your CRM is a platform that will poison your pipeline data.
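Two of the three checks above (activities on the right account, and duplicates from retried syncs) can be sketched as a small audit over exported records. This assumes you have already exported the agent-created activities from your CRM into a list of dictionaries. The field names `external_id`, `contact_id`, and `account_id` are hypothetical and are not Salesforce or HubSpot API fields. The dedup-rule check depends on your own rules and is not covered here.

```python
from collections import Counter


def audit_activities(activities: list[dict], contact_to_account: dict[str, str]) -> dict:
    """Flag duplicate and misrouted activities in a CRM export.

    activities: one dict per activity the agent wrote, with hypothetical keys
        'external_id' (the agent's own message id), 'contact_id', 'account_id'.
    contact_to_account: the expected account for each test contact.
    """
    # A retried sync that is not idempotent writes the same external_id twice.
    counts = Counter(a["external_id"] for a in activities)
    duplicates = sorted(eid for eid, n in counts.items() if n > 1)

    # An activity is misrouted if it landed on an account other than the one
    # the contact is expected to belong to (or on an unknown contact).
    misrouted = sorted(
        {
            a["external_id"]
            for a in activities
            if contact_to_account.get(a["contact_id"]) != a["account_id"]
        }
    )
    return {"duplicates": duplicates, "misrouted": misrouted}


# Hypothetical export from a sandbox test run.
expected = {"c1": "a1", "c2": "a2", "c3": "a3"}
exported = [
    {"external_id": "m1", "contact_id": "c1", "account_id": "a1"},
    {"external_id": "m2", "contact_id": "c2", "account_id": "a2"},
    {"external_id": "m2", "contact_id": "c2", "account_id": "a2"},  # retried sync
    {"external_id": "m3", "contact_id": "c3", "account_id": "a9"},  # wrong account
]

report = audit_activities(exported, expected)
print(report)
```

An empty report on both keys is the pass condition. Anything else is worth raising with the vendor before you sign.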

What is a realistic deployment time for an AI SDR tool?

Realistic deployment for an autonomous AI SDR runs 2 to 4 weeks from signature to first production send, including domain warmup, ICP definition, and CRM integration. Anything under a week is either a demo environment or a setup that skipped deliverability hygiene. Anything over six weeks means the vendor's onboarding is broken or your CRM is too custom for the tool. For signal-driven orchestration tools that plug into an existing send layer, deployment can be shorter because you are not warming up new domains.

How do you measure ROI attribution without fooling yourself?

ROI attribution for AI SDR tools requires two separate numbers: pipeline sourced (deals where the AI SDR was the first touch) and pipeline influenced (deals the AI SDR touched at any point). Vendors love to report influenced numbers because they look 3x to 5x bigger. Report both, and hold the vendor accountable to sourced pipeline with a closed-won lag of at least 90 days before you renew. For a deeper framework, see our 5-metric framework for measuring AI SDR performance vs human reps.
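The difference between the two numbers is easy to see in code. The sketch below assumes each deal carries a chronologically ordered list of touch sources. The `touches` and `amount` field names, the `"ai_sdr"` label, and the deal values are illustrative assumptions, not data from any real pipeline.

```python
def pipeline_attribution(deals: list[dict], source: str = "ai_sdr") -> tuple[int, int]:
    """Return (sourced, influenced) pipeline for one touch source.

    Each deal is a dict with hypothetical keys:
        'amount'  - pipeline value of the deal
        'touches' - touch sources in chronological order
    """
    # Sourced: the AI SDR was the first touch on the deal.
    sourced = sum(
        d["amount"] for d in deals if d["touches"] and d["touches"][0] == source
    )
    # Influenced: the AI SDR touched the deal at any point (includes sourced deals).
    influenced = sum(d["amount"] for d in deals if source in d["touches"])
    return sourced, influenced


# Illustrative deals only.
deals = [
    {"amount": 50_000, "touches": ["ai_sdr", "human_sdr"]},
    {"amount": 80_000, "touches": ["inbound", "ai_sdr"]},
    {"amount": 30_000, "touches": ["human_sdr"]},
    {"amount": 20_000, "touches": ["inbound", "human_sdr", "ai_sdr"]},
]

sourced, influenced = pipeline_attribution(deals)
print(f"sourced={sourced} influenced={influenced}")
```

Because every sourced deal is also an influenced deal, the influenced figure can never be smaller than the sourced one, which is why it is the flattering number to report.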

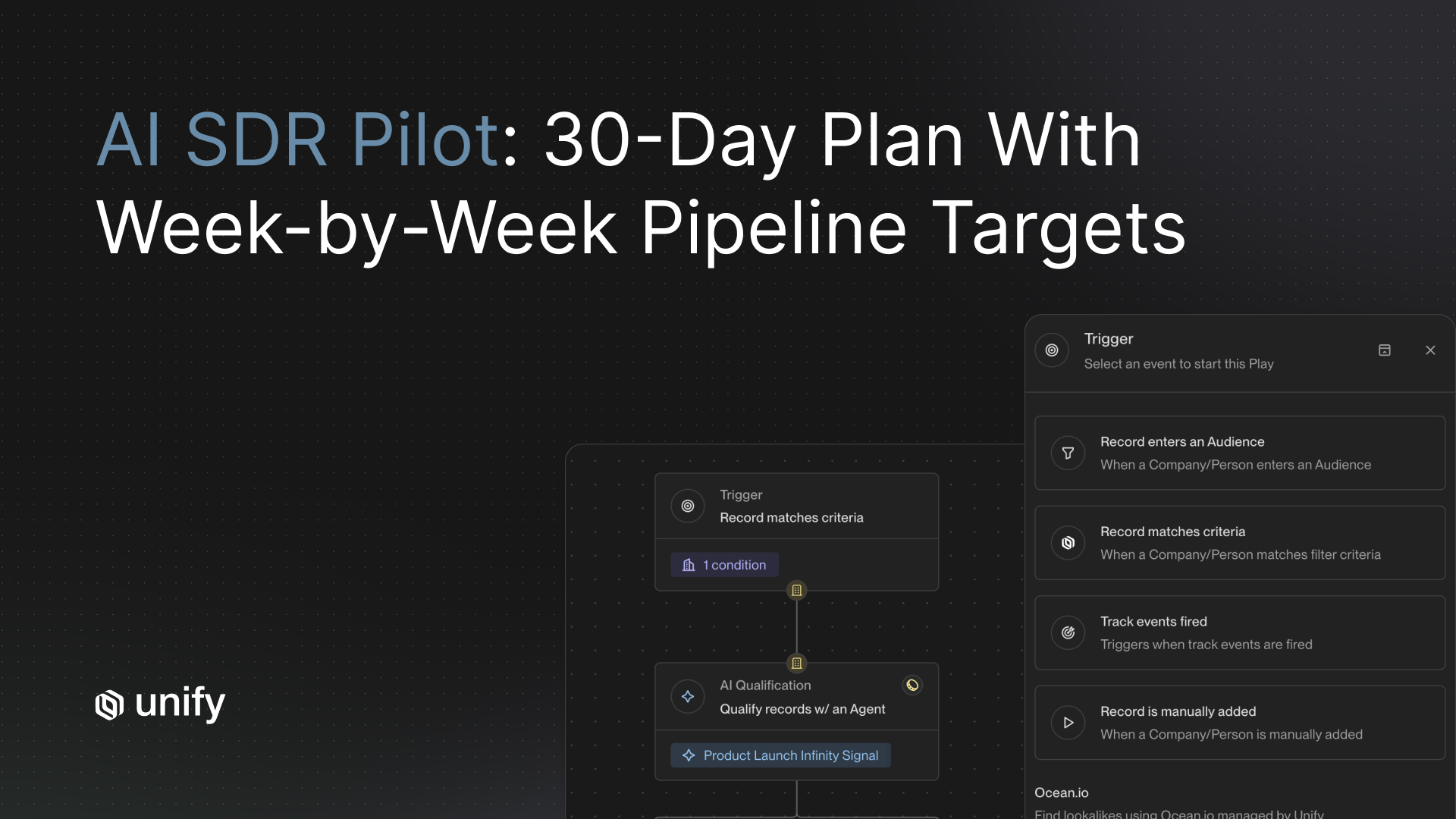

The 2-Week POC Test Plan

A 2-week POC is the fastest way to move an AI SDR evaluation from vendor-controlled demos to results on your own data. Run it before you sign a contract, not after. Every vendor on our list will agree to a POC if you push, and most will provide a free or discounted trial window.

Week 1: Setup and signal validation

- Day 1-2: Integrate with a sandbox or limited-scope Salesforce/HubSpot instance. Push 200 test accounts across three tiers (Tier 1 enterprise, Tier 2 mid-market, Tier 3 SMB).

- Day 3-4: Ask the vendor to enrich and generate outreach for all 200 accounts. Audit the output. Check for stale data, incorrect titles, and generic copy that could apply to any account.

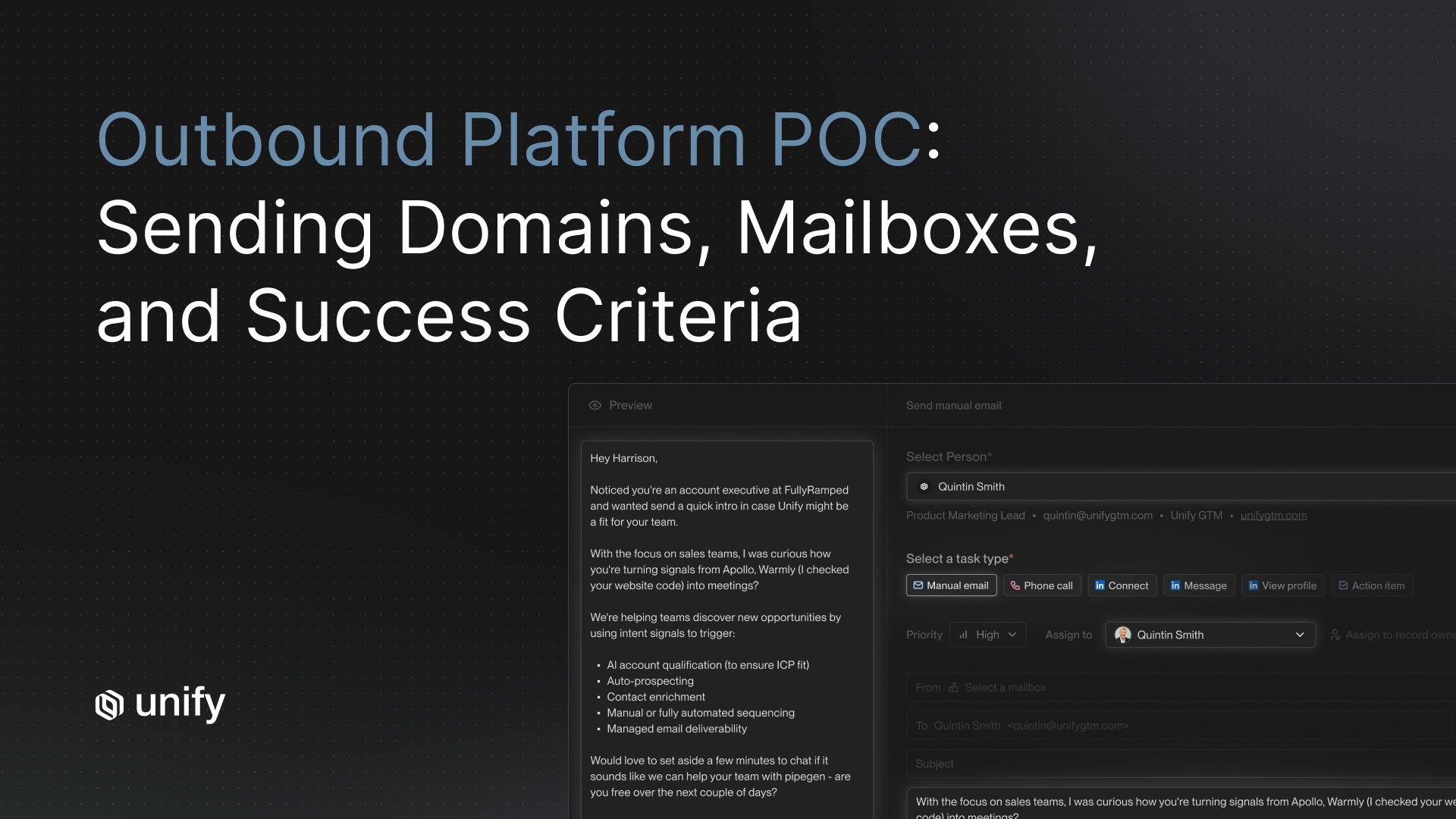

- Day 5: Send five messages manually (with the AI copy) to a known test inbox you control. Check deliverability, spam placement, and rendering on mobile.

Week 2: Live send and measurement

- Day 6-10: Run a live send to 100 Tier 3 accounts. Measure delivery rate, open rate, reply rate, and positive reply rate against your existing human SDR benchmark.

- Day 11-12: Stress test objection handling. Have a team member reply with three scenarios: "not interested," "send me pricing," and "what is your security posture." Grade the AI response.

- Day 13-14: Audit CRM data. Check that every activity posted correctly, no duplicates were created, and no unintended field overwrites occurred.

Teams that run this plan reject 60 to 70% of vendors they would have otherwise signed based on a demo. That is the entire point.
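The four Week 2 metrics can be computed from raw counts with a short helper. This is a sketch under stated assumptions: the plan above does not define denominators, so the choice here to measure delivery against sends, and opens and replies against delivered messages, is an assumption you should align with your existing human SDR benchmark. The counts in the example are placeholders.

```python
from dataclasses import dataclass


@dataclass
class SendResults:
    """Raw counts from the live send (all values here are hypothetical)."""
    sent: int
    delivered: int
    opened: int
    replied: int
    positive_replies: int


def funnel_rates(r: SendResults) -> dict[str, float]:
    """Compute the four POC metrics.

    Assumed denominators: delivery rate is per message sent; open, reply,
    and positive reply rates are per message delivered.
    """
    if r.sent <= 0 or r.delivered <= 0:
        raise ValueError("need at least one sent and one delivered message")
    return {
        "delivery_rate": r.delivered / r.sent,
        "open_rate": r.opened / r.delivered,
        "reply_rate": r.replied / r.delivered,
        "positive_reply_rate": r.positive_replies / r.delivered,
    }


# Placeholder counts for a 100-account Tier 3 send.
poc = SendResults(sent=100, delivered=96, opened=48, replied=12, positive_replies=6)
rates = funnel_rates(poc)

for name, value in rates.items():
    print(f"{name}: {value:.1%}")
```

Whichever denominators you choose, apply the same ones to the human SDR benchmark, or the comparison is meaningless.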

FAQ: Three Questions Buyers Always Forget to Ask

What happens to my domain reputation if I pause or cancel?

Autonomous AI SDR platforms often warm up and send from your domain, which means your domain reputation is tied to their sending patterns. If you cancel, the vendor typically stops supporting the warmup infrastructure, and sudden volume drops or shifts in sending IPs can damage inbox placement for weeks. Ask every vendor for their offboarding protocol in writing before you sign. If they do not have one, that is a red flag.

How do you handle compliance when the AI sends something off-brand?

Autonomous agents will eventually send a message that violates your brand guidelines, legal requirements, or regulatory constraints (GDPR, CAN-SPAM, CCPA). Ask the vendor to show you their audit log, their incident response policy, and their contractual liability language. Most vendors shift liability to you. Know that before you sign, not after the first compliance incident.

What is the real total cost, including hidden line items?

The sticker price is almost never the real cost. Ask about per-contact pricing over the included tier, domain warmup service fees, data enrichment overages, CRM integration costs, premium support tiers, and professional services for onboarding. Independent review data from SyncGTM's 2026 pricing comparison shows real annual costs ranging from $15K for a scrappy autonomous setup to $150K+ for enterprise deployments, a 10x spread that the marketing pages do not reveal.
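A simple way to keep vendors honest is to total the line items yourself. The sketch below is only the arithmetic: the line-item names mirror the list above, but every dollar figure is a made-up placeholder, not a quote from any vendor or from the SyncGTM comparison.

```python
def annual_total_cost(sticker_annual: float, extras: dict[str, float]) -> tuple[float, float]:
    """Return (total annual cost, share of that total not in the sticker price)."""
    negative = [name for name, cost in extras.items() if cost < 0]
    if sticker_annual < 0 or negative:
        raise ValueError(f"costs must be non-negative, got negatives for: {negative}")
    total = sticker_annual + sum(extras.values())
    hidden_share = (total - sticker_annual) / total if total else 0.0
    return total, hidden_share


# Placeholder figures for illustration only.
sticker = 24_000
line_items = {
    "per-contact overages": 6_000,
    "domain warmup service": 2_400,
    "data enrichment overages": 3_600,
    "CRM integration": 5_000,
    "premium support": 4_000,
    "professional services (onboarding)": 5_000,
}

total_cost, hidden = annual_total_cost(sticker, line_items)
print(f"total={total_cost:,.0f} hidden share={hidden:.0%}")
```

Ask each vendor to fill in the same line items in writing so the totals are comparable across proposals.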

Why This Matters in 2026

The AI SDR market is in a hype-to-hard-hat transition, and buyers who pick the wrong category now will pay for it for two years. Leadership is about to start asking hard ROI questions, and the AI SDR line item will be among the first scrutinized because it is a new budget line with loud marketing claims and limited operating history. Your scorecard is your defense: it forces every vendor to show work on the same 12 dimensions so the renewal conversation is about evidence, not vibes.

Meanwhile, Gartner projects that 40% of enterprise applications will include task-specific AI agents by 2026, up from less than 5% in 2025. The market is moving fast enough that a bad vendor choice today can lock you out of better options for 12+ months. Pick on criteria, not on vibes.

How Unify Fits Into Your Evaluation

Unify is a signal-driven orchestration platform. We are not the right choice if you want a fully autonomous AI SDR that writes, sends, and replies with zero oversight. We are the right choice if you want the highest-quality first-party intent signals, clean CRM sync, and an agent framework that plugs into your existing send layer (human, AI, or both). Customers using Unify's signal-triggered plays typically see 2x to 4x higher positive reply rates than time-based sequences, and the payback period on a Unify deployment is usually under 90 days because we compound an existing stack rather than replacing it. For a deeper look at the full decision, see our companion guide on AI SDR vs human SDR: the decision framework every VP of Sales needs in 2026.

Key Takeaways

- Grade every vendor on all 12 criteria. Skipping any one of them is how buyers end up with tools that fail in production.

- Signal quality, controllability, and CRM sync are the top three predictors of success. Weight them at 9-10 on your scorecard.

- Pick a category before you pick a vendor. Autonomous agents, HITL copilots, and signal-driven orchestration solve different problems.

- Run a 2-week POC on your data. Demos lie. Real accounts, real CRM, real deliverability.

- Ask the three questions vendors hope you skip: domain reputation, compliance liability, and total cost transparency.

- Measure sourced and influenced pipeline separately. Renew only if sourced pipeline clears the bar.

Sources

- G2 Learn. "Evaluating AI Agents in 2026: What Buyers Must Know." https://learn.g2.com/tech-signals-best-ai-agent-2026

- Coldreach. "Artisan AI Review 2026: Insights From 100+ Verified Users." https://coldreach.ai/blog/artisan-ai-review

- OneReach (citing Gartner). "Agentic AI Stats 2026: Adoption Rates, ROI, & Market Trends." https://onereach.ai/blog/agentic-ai-adoption-rates-roi-market-trends/

- SyncGTM. "6 Best AI SDR Tools to Try in 2026: Real Pricing Compared." https://syncgtm.com/blog/best-ai-sdr-tools-2026

- Unify. "AI SDR vs. Human SDR: The Decision Framework Every VP of Sales Needs in 2026." https://www.unifygtm.com/explore/ai-sdr-vs-human-sdr-decision-framework

- Unify. "How to Measure AI SDR Performance vs. Human Reps: A 5-Metric Framework." https://www.unifygtm.com/explore/ai-sdr-performance-metrics

- Unify. "Automated Outbound Metrics: Three-Tier Framework + Benchmarks." https://www.unifygtm.com/explore/automated-outbound-metrics-to-track

About the author. Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
