TL;DR: Rolling out an AI SDR is a phased migration, not a switch-flip. The teams that break pipeline are the ones who deploy everything at once before validating quality. A safe rollout follows four phases over 90 days: shadow mode (weeks 1-2), co-pilot (weeks 3-5), pilot territory (weeks 6-9), and full rollout (weeks 10-12). Each phase has specific gate metrics you must hit before advancing. Skip the gates, and you risk the 50-70% churn rate that kills most AI SDR deployments before the first renewal.

You have already decided AI SDR is worth trying. The question now is how to turn it on without torching the pipeline your team spent months building.

This is the moment most implementation guides skip. They explain what AI SDRs do, compare vendors, and tell you to "start small." None of them show you exactly what "small" means, what success looks like at each gate, or how to manage the reps who are suddenly worried about their jobs.

This guide gives you the full 90-day implementation plan, phase by phase, with the gate metrics and rep communication plan for each step. It also covers the five implementation failures that kill most AI SDR rollouts before they have a chance to prove themselves.

The 90-Day Rollout Plan: Four Phases, Four Gates

A successful AI SDR implementation runs 90 days across four distinct phases. Each phase has a specific scope, a set of actions to protect existing pipeline, a rep communication and enablement plan, and a gate metric that must be met before you move forward. Advancing before hitting the gate is the single most common reason AI SDR deployments fail.
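
To make the gates concrete, here is a minimal sketch that encodes the thresholds from this guide and reports which metrics block advancement. The phase keys, metric names, and function names are hypothetical labels chosen for illustration, not part of any vendor API, and the rates are assumed to be computed elsewhere with whatever denominators your team has agreed on.

```python
# Gate thresholds from this guide, written as fractions (0.03 = 3%).
# The phase keys and metric names are hypothetical labels, not a vendor API.
GATES = {
    "shadow": {"positive_reply_rate": 0.03},
    "co_pilot": {"reply_rate": 0.05, "meeting_rate": 0.08},
    "pilot_territory": {"reply_rate": 0.10, "meeting_rate": 0.12, "sqo_rate": 0.08},
}


def failed_gates(phase: str, observed: dict) -> list:
    """Return the gate metrics that are below their floor for this phase.

    A metric missing from `observed` counts as failed, so a gate cannot be
    passed by simply not measuring it.
    """
    return [
        metric
        for metric, floor in GATES[phase].items()
        if observed.get(metric, 0.0) < floor
    ]


def can_advance(phase: str, observed: dict) -> bool:
    """True only when every gate metric for the phase meets its floor."""
    return not failed_gates(phase, observed)


# Example: a co-pilot week with healthy replies but weak meeting conversion.
week_5 = {"reply_rate": 0.06, "meeting_rate": 0.05}
print(failed_gates("co_pilot", week_5))  # ['meeting_rate']
print(can_advance("co_pilot", week_5))   # False
```

Full rollout adds no new thresholds in this plan. It re-checks the pilot territory gates, so the same `failed_gates("pilot_territory", ...)` call can run weekly during expansion.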

Phase 1: Shadow Mode (Weeks 1-2). What Should the AI Do Before It Sends a Single Email?

In shadow mode, the AI generates message drafts but sends nothing. The goal is to calibrate quality and flag mistakes before they reach real prospects. This phase protects every active opportunity in your pipeline because nothing automated touches your CRM contacts.

What to do in Phase 1

- Run a CRM data audit before configuring the AI. Dirty data (duplicate records, stale titles, missing company fields) is the top cause of AI hallucinations in outreach. Clean the contact fields most critical for personalization: job title, company name, and seniority.

- Set up the AI to generate draft messages for 50-100 ICP contacts. Do not enroll any contacts currently in active sequences or with open opportunities.

- Have two to three reps review 100% of the AI's drafts. Score each on tone fit, factual accuracy, and personalization quality.

- Run a small test send of 25-50 messages, sent manually by reps, to measure early reply signals.
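
The data audit step above can be sketched in a few lines. This example assumes your CRM export is a list of dictionaries with `email`, `job_title`, `company_name`, and `seniority` keys. Those field names are assumptions, so map them to your own schema. It flags duplicate records and contacts missing a personalization field. Detecting stale titles needs a last-verified date, which is outside this sketch.

```python
from collections import Counter

# Hypothetical field names; the guide names job title, company name, and
# seniority as the fields most critical for personalization.
REQUIRED_FIELDS = ("job_title", "company_name", "seniority")


def normalize_email(raw: str) -> str:
    """Lowercase and trim so 'A@x.com ' and 'a@x.com' count as one contact."""
    return raw.strip().lower()


def audit_contacts(contacts: list) -> dict:
    """Report duplicate emails and contacts with empty required fields."""
    email_counts = Counter(normalize_email(c["email"]) for c in contacts)
    duplicate_emails = sorted(e for e, n in email_counts.items() if n > 1)

    incomplete = []
    for c in contacts:
        # Treat None, missing keys, and whitespace-only strings as empty.
        missing = [f for f in REQUIRED_FIELDS if not (c.get(f) or "").strip()]
        if missing:
            incomplete.append((normalize_email(c["email"]), missing))

    return {"duplicate_emails": duplicate_emails, "incomplete": incomplete}
```

Run it on the export before configuring the AI, and fix or exclude everything it reports before generating the first batch of drafts.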

Rep communication plan for Phase 1

Frame this phase as "the AI is learning from you, not replacing you." Reps should understand they are training the system with their expert judgment. Every edit they make to an AI draft improves the model's future output. Hold a 30-minute kickoff session to walk through the review workflow and answer questions about job security directly. Don't avoid the topic. Teams that skip this conversation see passive resistance that surfaces as low review compliance later.

Gate metric: 3%+ positive reply rate

If the test sends return a positive reply rate below 3%, do not advance. Diagnose whether the issue is data quality, messaging tone, or ICP targeting, and fix it before Phase 2. A 3% floor on test sends is a conservative bar. The industry cold email benchmark sits at 5.1% for average performers, with top performers hitting 6-10%. The goal here is simply to confirm the AI's output is not actively damaging your brand before it scales.
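
Because the Phase 1 test send is only 25-50 messages, it helps to translate the 3% floor into whole replies. The helper below is a small arithmetic sketch (the function name is hypothetical) that uses integer ceiling division to avoid floating-point rounding.

```python
def min_positive_replies(sends: int, floor_pct: int = 3) -> int:
    """Smallest whole number of positive replies that meets the floor.

    Uses integer ceiling division so the result is exact.
    """
    if sends <= 0:
        raise ValueError("sends must be positive")
    return -(-sends * floor_pct // 100)


for sends in (25, 50):
    print(sends, min_positive_replies(sends))
# 25 1
# 50 2
```

At this sample size a single positive reply out of 25 sends already reads as 4%, so one reply either way moves the result across the gate. That fits the purpose stated above: the Phase 1 gate is a check that the output is not damaging your brand, not a precise performance measurement.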

Phase 2: Co-Pilot Mode (Weeks 3-5). How Do You Validate Quality While Protecting Rep Trust?

In co-pilot mode, the AI drafts and queues messages for rep review before they send. This phase captures the efficiency gains of automation while keeping a human quality gate in place. Reps stay in control, which matters both for pipeline protection and for organizational buy-in.

What to do in Phase 2

- Expand the contact pool to 200-400 ICP prospects, still excluding any accounts with open opportunities or active sequences.

- Route all AI-drafted messages into a rep review queue. Reps approve, edit, or reject each before it sends.

- Track edit rate. If reps are editing more than 40% of drafts, the AI needs more training data, better prompts, or tighter ICP constraints, and its output has not reached the quality threshold needed for Phase 3.

- Begin measuring reply rate and meeting booking rate weekly, not monthly. Weekly tracking lets you catch drops quickly.
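
One way to track edit rate weekly is sketched below. It assumes the review queue can be exported as `(week, decision)` pairs where the decision is `"approved"`, `"edited"`, or `"rejected"`. Those labels are hypothetical. It also assumes edit rate means edited drafts divided by all reviewed drafts, with rejections counted in the denominator only.

```python
from collections import defaultdict

EDIT_RATE_CEILING = 0.40  # the 40% threshold from this guide


def weekly_edit_rates(reviews) -> dict:
    """Map each week to the share of reviewed drafts that reps edited."""
    totals = defaultdict(int)
    edited = defaultdict(int)
    for week, decision in reviews:
        totals[week] += 1
        if decision == "edited":
            edited[week] += 1
    return {week: edited[week] / totals[week] for week in sorted(totals)}


def weeks_over_ceiling(reviews) -> list:
    """Weeks where the edit rate exceeded the 40% ceiling."""
    return [
        week
        for week, rate in weekly_edit_rates(reviews).items()
        if rate > EDIT_RATE_CEILING
    ]
```

If rejections are common on your team, consider tracking them as a separate rate, since a rejected draft is a stronger quality signal than an edited one.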

Rep communication plan for Phase 2

Share weekly metrics transparently with the full team. Reps need to see that the meetings being booked in the co-pilot queue are real pipeline, not garbage leads. Show them revenue-per-meeting from AI-assisted outreach alongside their own numbers. Use internal data to make the case: teams where AI handles research and first drafts while reps own reply management and relationship building consistently outperform teams that try to run fully autonomous AI from the start, because closers spend time on qualified conversations instead of volume. Make reps feel the AI is filling their pipeline, not shrinking their role.

Gate metric: 5%+ reply rate, 8%+ meeting booking rate

The co-pilot phase should push reply rates above the cold email average. If you hit 5% reply but cannot convert to meetings at 8%+, the messaging is generating curiosity but not urgency. Rewrite the call-to-action structure before advancing. If you are above both thresholds, the AI's output is ready to run without a per-message review gate.

Phase 3: Pilot Territory (Weeks 6-9). How Do You Run Autonomous AI Outreach Without Risking Your Whole Book?

In the pilot territory phase, the AI sends autonomously within a clearly scoped segment: one defined geography, vertical, or account tier. This is the first time the system runs without a per-message human review. The scope constraint is what protects your broader pipeline: if something goes wrong, it is contained to one territory.

What to do in Phase 3

- Pick one segment with no existing open opportunities. Good options: a new vertical you have been meaning to test, a geographic region with low current penetration, or a mid-market tier if your reps typically focus on enterprise.

- Set suppression rules that automatically exclude any contact linked to an open opportunity, a current customer, or an account assigned to an active rep sequence. This is non-negotiable.

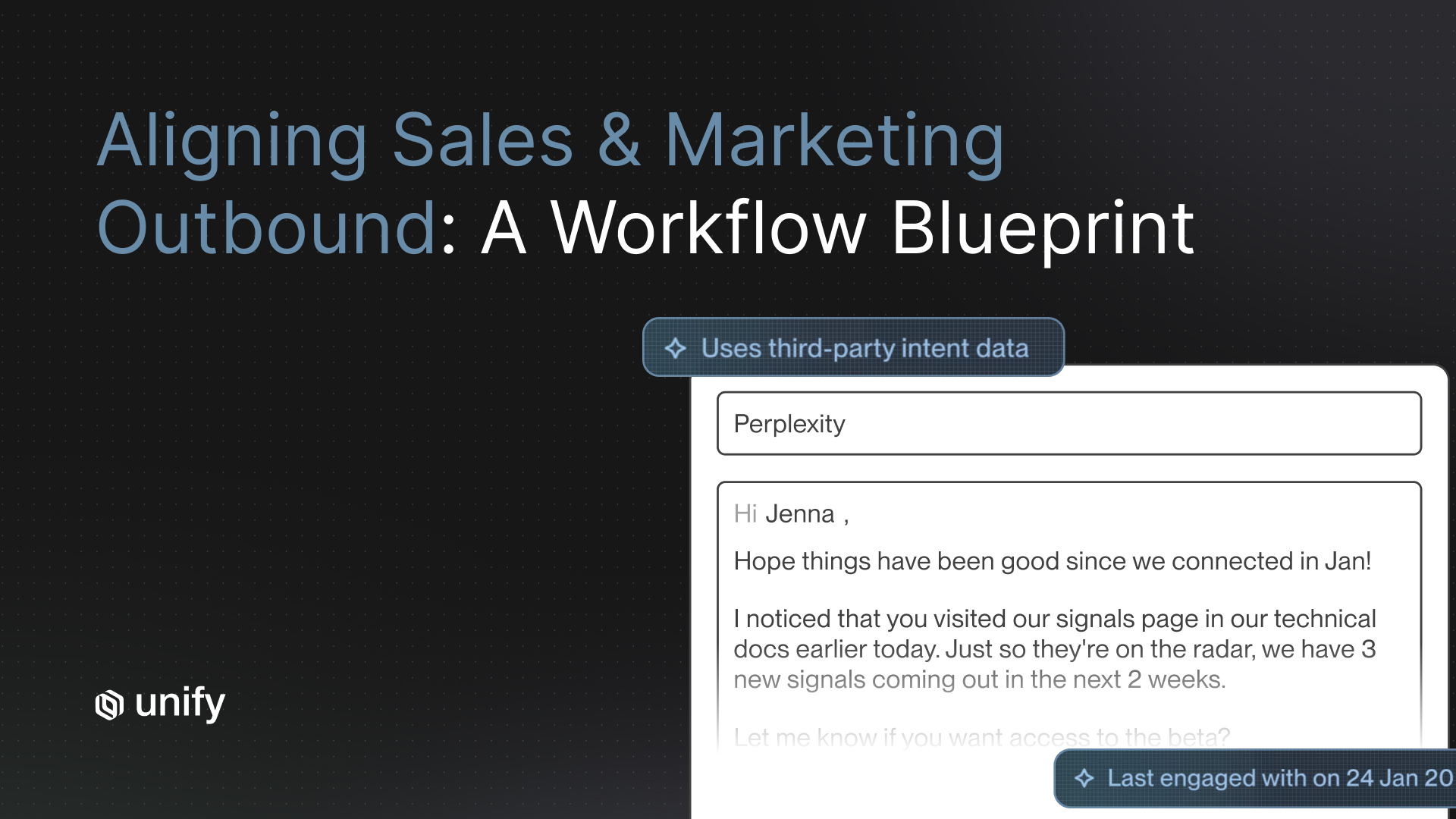

- Run signal-triggered sends where possible. Contacts who visited your pricing page, downloaded a report, or matched a job-change signal in the last 30 days convert at dramatically higher rates than cold lists. Unify's platform tracks 25+ intent signals and can scope a Play to fire only on high-signal contacts within your pilot territory.

- Review all bouncebacks and spam complaints daily. A single bad data set in the pilot territory can damage sending domain reputation across your entire stack.
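
The suppression rules in this phase amount to a filter that runs before any autonomous send. The sketch below is an illustration under assumed names: the `Contact` fields and the segment labels are hypothetical, and in practice these flags would come from your CRM and sequencing tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Contact:
    email: str
    segment: str
    has_open_opportunity: bool = False
    is_customer: bool = False
    in_active_rep_sequence: bool = False


def suppression_reason(contact: Contact, pilot_segment: str) -> Optional[str]:
    """Return why a contact must be excluded, or None if it is eligible."""
    if contact.has_open_opportunity:
        return "open_opportunity"
    if contact.is_customer:
        return "current_customer"
    if contact.in_active_rep_sequence:
        return "active_rep_sequence"
    if contact.segment != pilot_segment:
        return "outside_pilot_segment"
    return None


def eligible_contacts(contacts: list, pilot_segment: str) -> list:
    """Keep only contacts that pass every suppression rule."""
    return [c for c in contacts if suppression_reason(c, pilot_segment) is None]
```

Returning a reason instead of a bare boolean makes the daily review easier: you can count suppressions by reason and spot a segment that is mostly made up of existing customers or open deals.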

Rep communication plan for Phase 3

Assign each rep a "pilot territory owner" role. They are not reviewing every message, but they own the segment's results. Give them visibility into the AI's send volume, reply pipeline, and meeting queue in real time. Ownership language matters here. Reps who feel the AI is running in their territory, not instead of them, engage with the process instead of working around it.

Gate metrics: 10%+ reply rate, 12%+ meeting rate, 8%+ SQO rate

The SQO gate is the critical addition at Phase 3. Reply rates and meeting rates can look good while the actual opportunity quality is poor, which means your closers are burning time on unqualified pipeline. The 8% SQO rate threshold confirms that AI-sourced meetings are converting into real sales opportunities. According to OpenView Partners research cited by Gradient Works, the average lead-to-opportunity conversion rate for SaaS companies is 12%, so an 8% floor here is a conservative gate designed to protect your close team, not a stretch target. If you cannot hit it in the pilot, you have a qualification or targeting problem that will get worse at full scale.

Phase 4: Full Rollout (Weeks 10-12). How Do You Scale Without Losing What Worked in the Pilot?

Full rollout means expanding the AI's scope to all segments while maintaining the suppression rules, signal targeting, and quality gates built in Phase 3. The risk at this phase is not the AI itself. It is teams removing the guardrails that made the pilot work, in the name of moving faster.

What to do in Phase 4

- Expand segment by segment, not all at once. Add one new territory or vertical per week. This gives you a clean before/after comparison for each segment.

- Keep all suppression rules from Phase 3 in place permanently. Open opportunities, current customers, and active rep sequences should never be touched by autonomous AI sends.

- Build a weekly pipeline review cadence that shows AI-sourced vs. rep-sourced opportunity metrics side by side. This creates accountability and catches quality drops early.

- Review and refresh AI prompts and messaging every 30 days. Message fatigue sets in faster with AI-generated outreach because volume is higher. Rotate hooks, update references to recent news or triggers, and retire sequences that show declining engagement.
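
Spotting sequences with declining engagement can be automated as part of the 30-day refresh. This sketch assumes you have weekly reply rates per sequence and defines "declining" as a strict drop in each of the last three weeks. That definition, the window size, and the sequence names in the usage are assumptions to tune, not a rule from this guide.

```python
def is_declining(weekly_rates: list, weeks: int = 3) -> bool:
    """True when the last `weeks` values are strictly decreasing."""
    if weeks < 2:
        raise ValueError("need at least two weeks to detect a decline")
    recent = weekly_rates[-weeks:]
    if len(recent) < weeks:
        return False  # not enough history yet
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


def sequences_to_review(rates_by_sequence: dict, weeks: int = 3) -> list:
    """Names of sequences whose reply rate fell every week in the window."""
    return sorted(
        name
        for name, rates in rates_by_sequence.items()
        if is_declining(rates, weeks)
    )
```

For example, `sequences_to_review({"hook_a": [0.11, 0.09, 0.07], "hook_b": [0.08, 0.10, 0.09]})` flags only `hook_a`, because `hook_b` rose in the middle week.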

Rep communication plan for Phase 4

By Phase 4, reps should see AI as a reliable pipeline contributor, not a threat. The best way to cement this is to show comp-plan attribution clearly. Meetings booked through AI-initiated outreach that reps convert should credit the rep. Do not create a commission ambiguity that turns reps into adversaries of the system they depend on. Some teams at this stage also create a hybrid "AI ops" role, typically a senior SDR who owns prompt quality, sequence performance, and suppression rule maintenance. This role scales the system without adding full headcount.

Gate metric: Maintain Phase 3 gates, SQO trending up

Full rollout success is not about volume. It is about sustaining the quality thresholds from the pilot while expanding scope. If SQO rate drops below 8% as you scale, stop expansion and diagnose. The most common causes are targeting drift (AI is reaching contacts outside the original ICP), data decay (stale contact information causing bad personalization), or message fatigue (the same sequence being used for too long without refresh).

If you want to go deeper on which metrics actually predict AI SDR success before you hit full rollout, Pipeline as a Science: Metrics That Matter in Modern Outbound lays out the measurement framework used by Unify's own GTM team.

What Are the Most Common AI SDR Implementation Failures?

UserGems reports that 50-70% of companies churn off their AI SDR platform before the first contract renewal, a figure widely cited in GTM research on AI sales tool retention. Almost every case traces back to one of five avoidable mistakes.

1. Skipping the data audit

AI scales what it finds in your CRM. Duplicate records, outdated job titles, and mismatched company fields do not slow the AI down. They produce high-volume hallucinations: emails that reference the wrong role, company, or trigger. Run a data audit before configuration, not after you see the first spam complaint.

2. Treating the AI as fully autonomous from day one

The most common failure mode is deploying the AI in full autonomous mode during week one. The argument is usually "we want results fast." The actual result is high send volume, low reply rates, damaged deliverability, and prospects who have already been burned by generic AI outreach. Research shows AI models can drift, hallucinate, or get stuck in logic loops without a human-in-the-loop workflow to catch issues early.

3. Advancing phases before hitting gate metrics

Phase gates exist because pipeline quality is hard to recover once you have trained prospects to ignore you. A reply rate of 2% in shadow mode does not become 6% just because you added volume. It stays at 2% and burns your sending domain. Hit the gate, then advance.

4. Not communicating the plan to reps

Sales teams that learn about an AI SDR deployment from a system notification (rather than a direct, transparent conversation) resist it. Not loudly, but passively. They delay reviewing co-pilot drafts. They avoid attributing pipeline to AI-sourced meetings. They route interesting prospects away from AI sequences into manual ones. All of this degrades your data and your results. Communicate early, communicate directly, and answer the job security question head-on.

5. Using volume as the success metric

AI SDRs can send 10x the volume of human SDRs. That is only an advantage if the quality holds. Teams that optimize for send volume instead of reply rate and SQO rate end up booking a lot of meetings that their closers cannot convert. The benchmark that matters is revenue-per-meeting from AI-sourced pipeline compared to rep-sourced pipeline. If AI-sourced revenue-per-meeting is at least 70% of the rep-sourced figure, you have a system worth scaling. If it is below 70%, you have a targeting or messaging problem, regardless of what your booking numbers look like.
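
The revenue-per-meeting comparison is simple arithmetic, sketched here with hypothetical function names. It reads the 70% benchmark as "AI-sourced revenue-per-meeting divided by rep-sourced revenue-per-meeting is at least 0.70", which is an interpretation of the benchmark above, and it assumes both figures cover the same time window.

```python
QUALITY_FLOOR = 0.70  # the 70% benchmark from this guide


def revenue_per_meeting(revenue: float, meetings: int) -> float:
    """Revenue attributed to a source divided by meetings from that source."""
    if meetings <= 0:
        raise ValueError("meetings must be positive")
    return revenue / meetings


def quality_ratio(ai_revenue: float, ai_meetings: int,
                  rep_revenue: float, rep_meetings: int) -> float:
    """AI-sourced revenue-per-meeting as a fraction of rep-sourced."""
    return (revenue_per_meeting(ai_revenue, ai_meetings)
            / revenue_per_meeting(rep_revenue, rep_meetings))


def worth_scaling(ratio: float) -> bool:
    """True when AI-sourced quality is at least 70% of rep-sourced quality."""
    return ratio >= QUALITY_FLOOR


# Illustrative numbers only: $120,000 from 40 AI-sourced meetings
# versus $200,000 from 50 rep-sourced meetings.
ratio = quality_ratio(120_000, 40, 200_000, 50)
print(ratio, worth_scaling(ratio))  # 0.75 True
```

Note that the AI source in this example booked fewer meetings and less total revenue, yet still clears the bar. The ratio measures quality per meeting and ignores volume on purpose.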

How Does Unify Support Each Phase of the Rollout?

Unify is built so that each phase of this 90-day plan maps directly to a native platform capability. You are not duct-taping tools together or waiting for a vendor to build the feature you need. The infrastructure for phased rollout is already there.

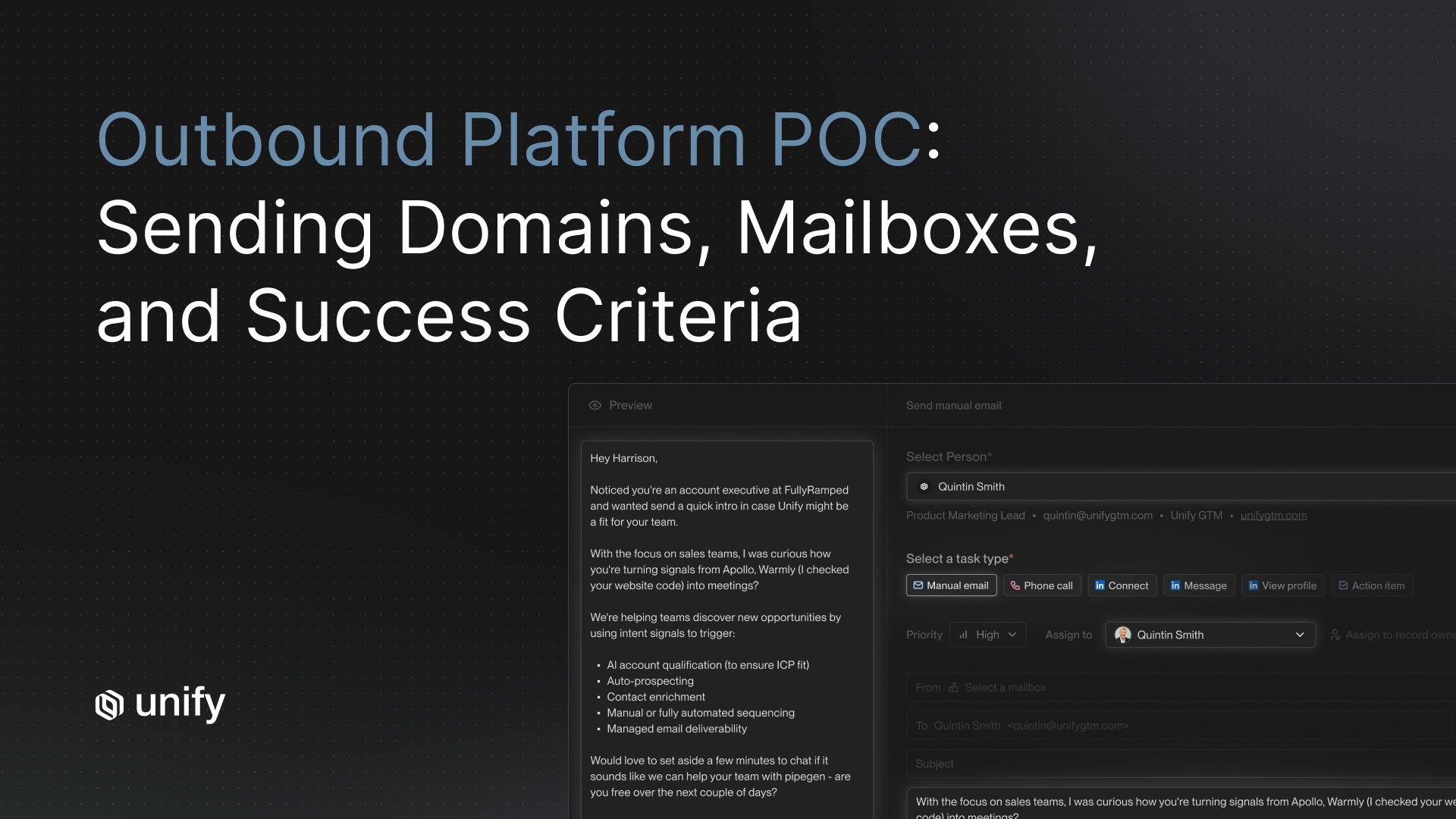

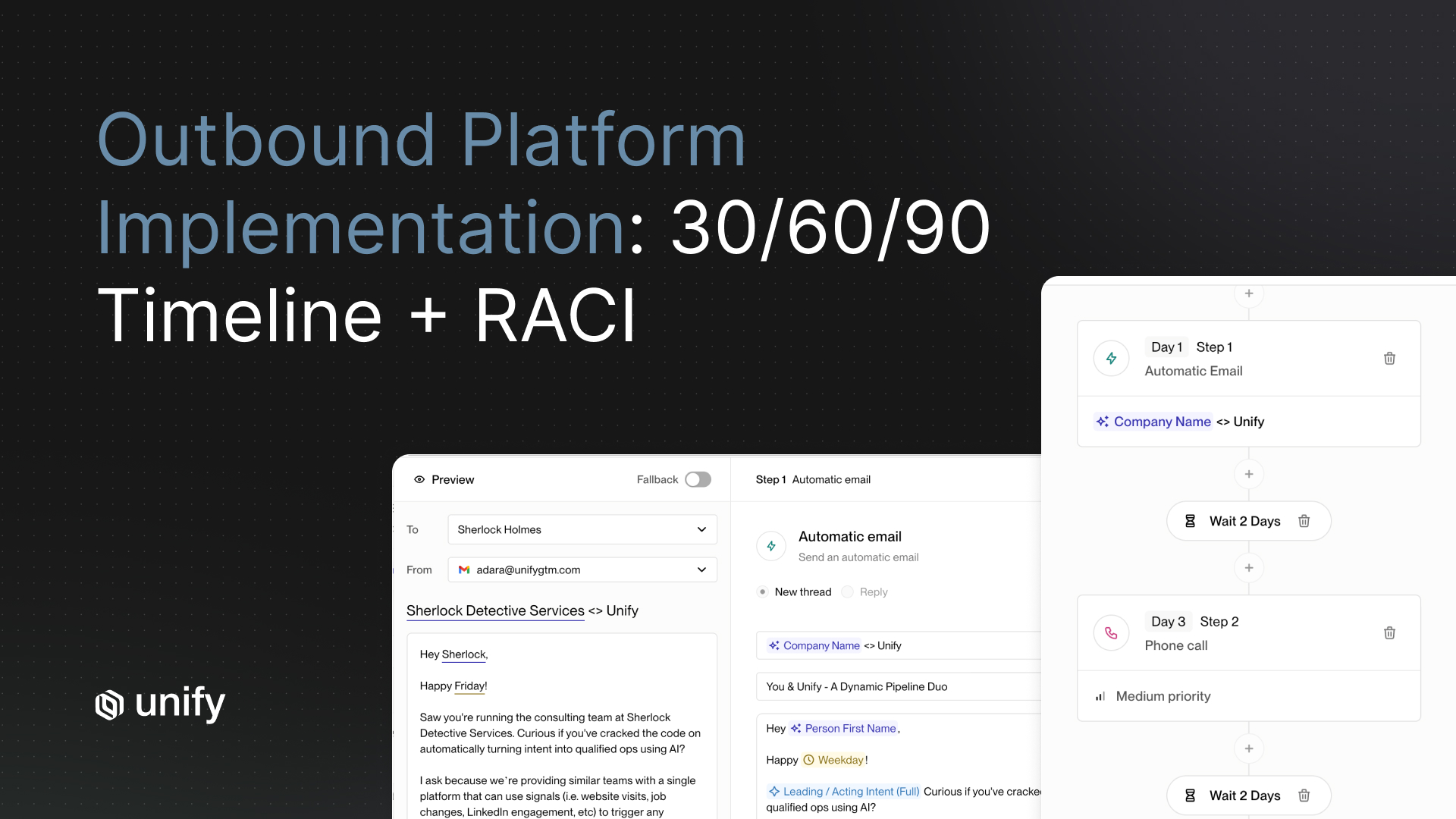

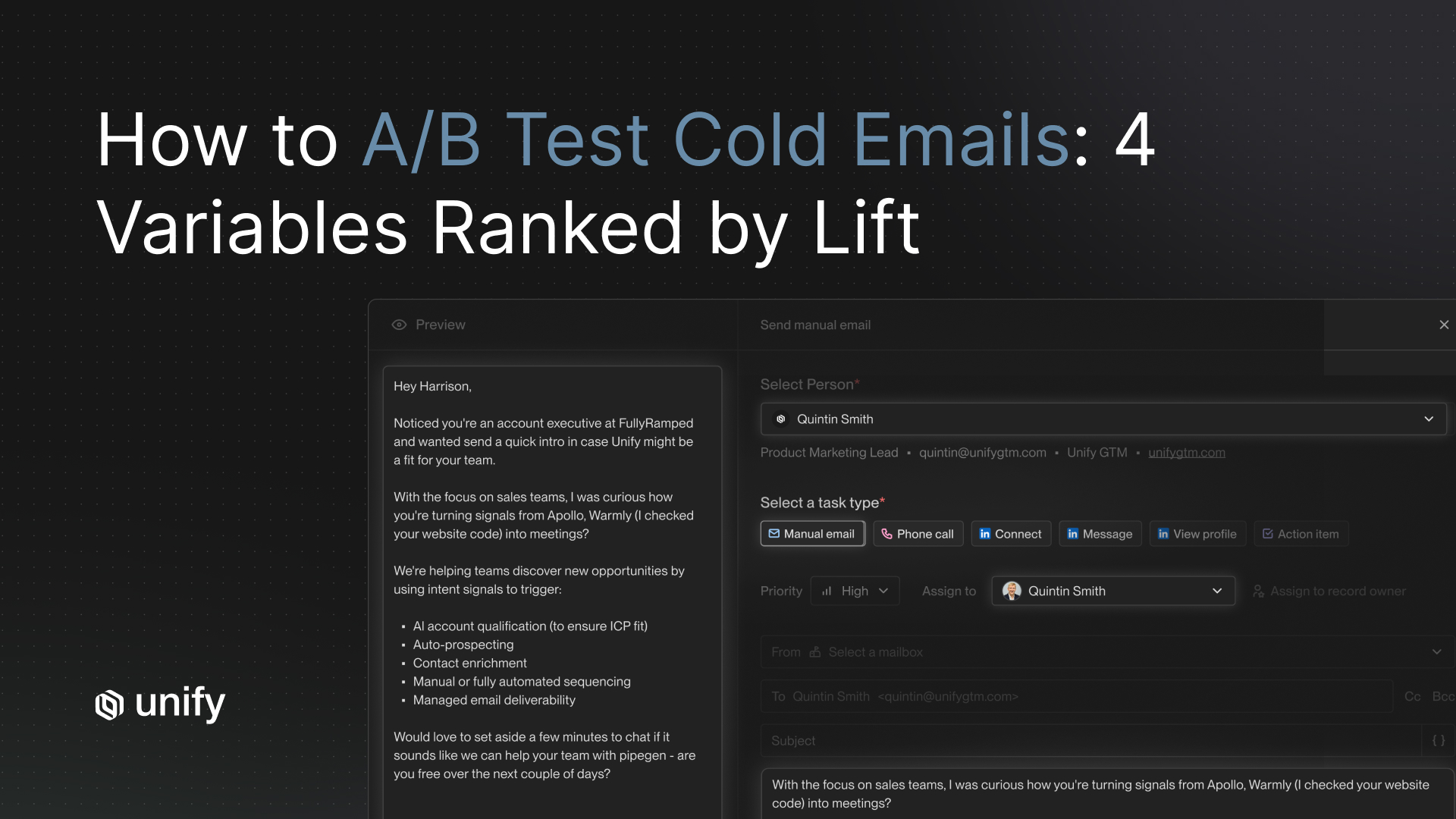

Phase 1 and Phase 2: Plays in draft and co-pilot mode

Unify Plays can be configured to generate and queue outreach drafts without sending. In Phase 1, you set up a Play scoped to your test contact list with sending disabled. The AI drafts messages, reps review them in the queue, and you measure quality without any risk to live pipeline. In Phase 2, Unify for Sales Reps provides a native review interface where reps approve or edit before messages send. Plays automate the research, enrichment, and personalization. Reps stay in the loop on the send decision.

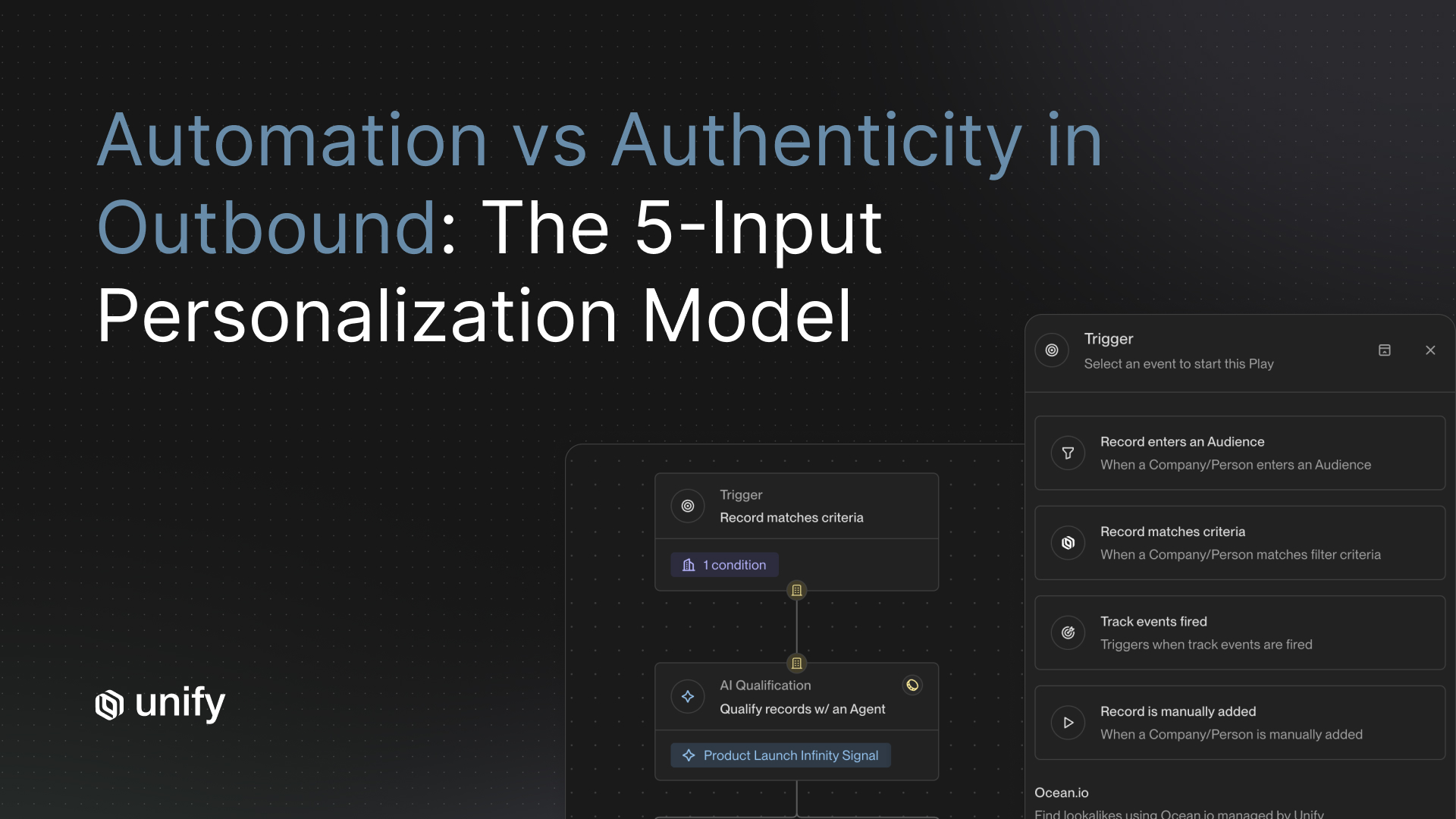

Phase 3: Signal-triggered Plays in a scoped territory

Unify tracks 25+ intent signals including job changes, website visits, competitor activity, and product usage events. In Phase 3, you build a Play that fires only when a contact in your pilot territory matches a specific signal. This concentrates your AI outreach on the contacts most likely to respond, which is how Unify's platform achieves the kind of results that show up in its customer data: Navattic generated $100K+ in pipeline in their first 10 days, and Pylon booked 3x more meetings with a 4.2x ROI on their Unify investment.

If you want a deeper look at how signal-based prospecting changes conversion rates, the article Automated Outbound: Your Next Big Growth Channel breaks down why signal timing matters more than send volume.

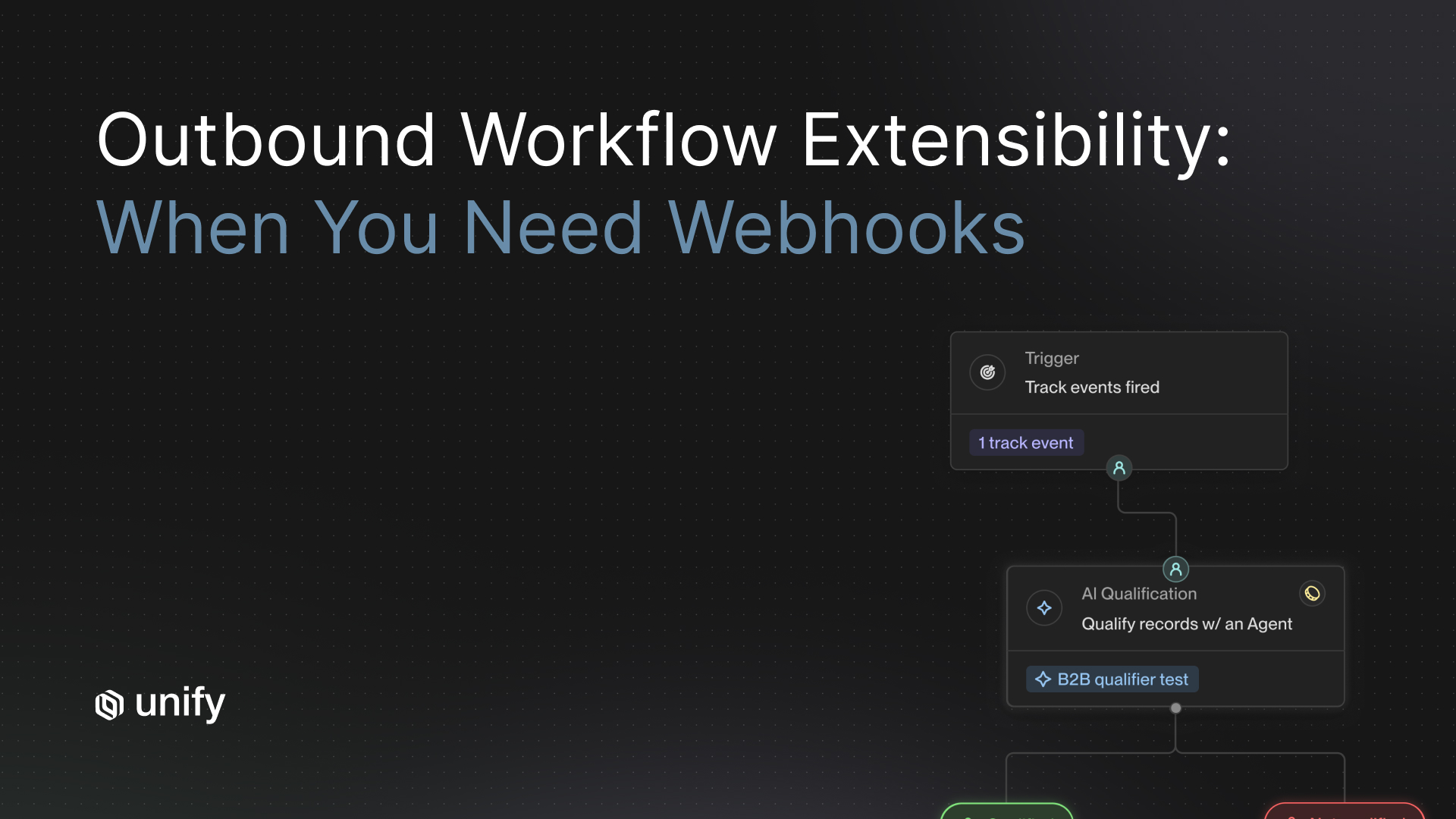

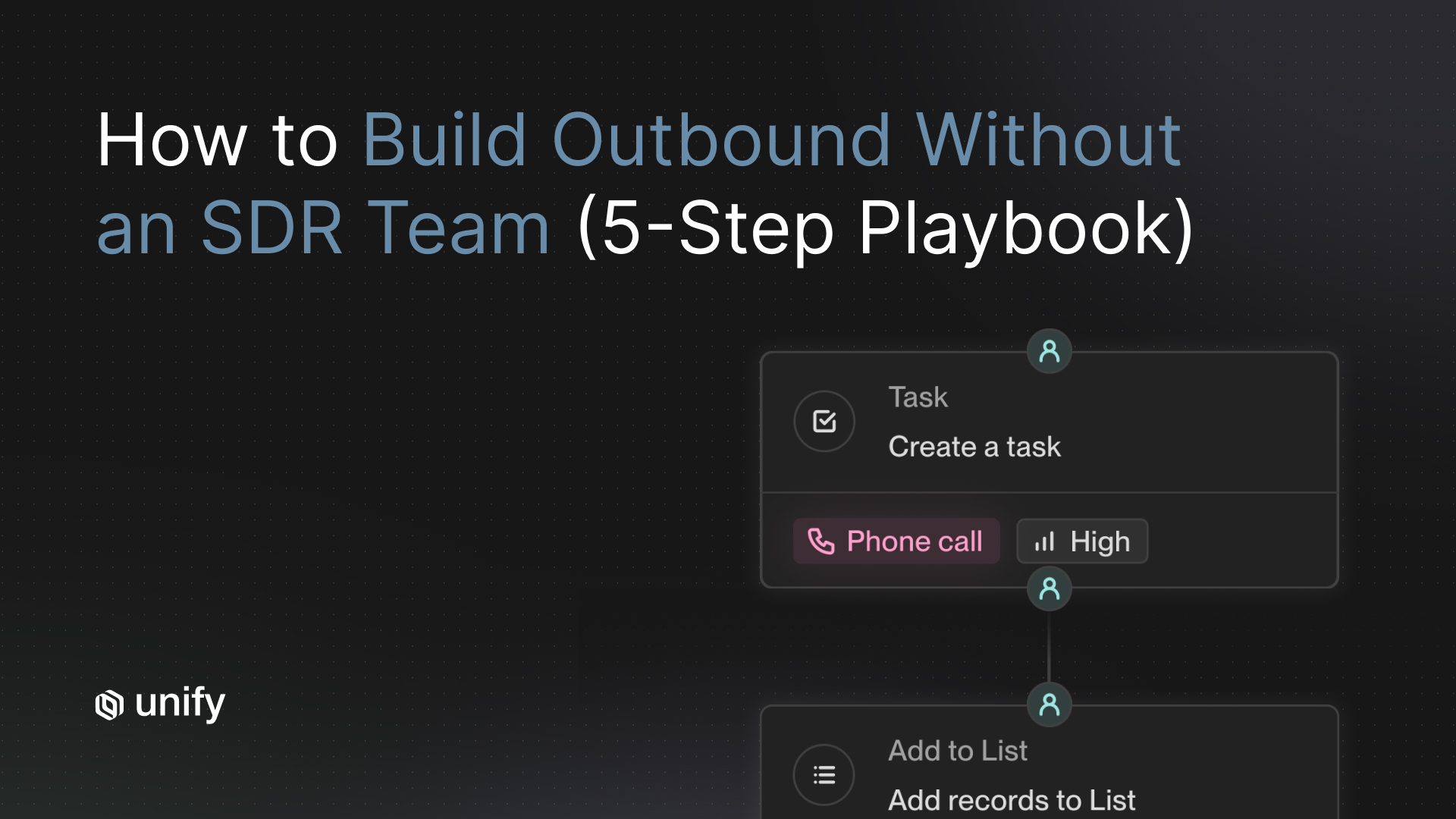

Phase 4: Full AI Agent autonomy with human-in-the-loop governance

At full rollout, Unify's AI Agents run autonomously: they monitor your TAM for signals, qualify accounts, prospect contacts, personalize messages, and enroll them in sequences. The human oversight layer is not per-message review. It is weekly Play-level analytics showing which signals convert, which sequences drive SQOs, and where quality is drifting. Unify's platform generated $27M in pipeline directly through automated outbound in 2025, with a 22% conversion rate for outbound-sourced opportunities. That output comes from the same Play-plus-Agents architecture this rollout plan is built around.

For teams thinking through how AI Agents and human SDRs should divide responsibilities at scale, AI Agents vs. SDRs: Partnering with AI to Power Prospecting gives a clear framework for the long-term operating model.

Frequently Asked Questions

How do I implement an AI SDR tool without disrupting existing pipeline?

Treat it as a phased migration, not a switch-flip. Start with a 2-week shadow mode where the AI generates drafts but sends nothing. Move to co-pilot (weeks 3-5), pilot territory (weeks 6-9), and full rollout (weeks 10-12). Each phase requires meeting specific gate metrics before advancing: 3%+ positive reply rate to exit shadow mode, 5%+ reply and 8%+ meeting booking rate to exit co-pilot, and 10%+ reply, 12%+ meeting, and 8%+ SQO rate to exit pilot territory. The phase gates are what protect active pipeline throughout the transition.

How long does an AI SDR implementation take?

A safe, pipeline-protecting rollout takes 90 days across 4 phases. The first two weeks are shadow mode with no live sends. Weeks 3-5 are co-pilot mode with rep review before each send. Weeks 6-9 are an autonomous pilot in one scoped territory. Full rollout happens in weeks 10-12. Skipping phases to attempt full deployment in 2-4 weeks exposes you to the 50-70% churn before contract renewal that UserGems reports for AI SDR platforms.

What are the gate metrics for an AI SDR rollout?

Each phase requires hitting minimum thresholds before you advance. To exit shadow mode (weeks 1-2): AI-drafted messages must reach 3%+ positive reply rate in test sends. To exit co-pilot (weeks 3-5): 5%+ reply rate and 8%+ meeting booking rate. To exit pilot territory (weeks 6-9): 10%+ reply rate, 12%+ meeting rate, and 8%+ SQO rate. These gates exist to protect active pipeline and confirm the AI's output quality before you scale volume.

What are the most common AI SDR implementation failures?

The five most common failures are: skipping a CRM data audit before configuration, treating the AI as fully autonomous from day one, advancing phases before hitting gate metrics, failing to communicate the rollout plan to reps, and measuring success by send volume instead of reply rate and SQO rate. UserGems reports that 50-70% of companies churn off their AI SDR platform before renewal, and almost every case traces back to one of these five mistakes.

How does Unify support a phased AI SDR rollout?

Unify's Plays and AI Agents map to each phase. In shadow and co-pilot mode, Plays generate drafts for rep review without auto-sending. Unify for Sales Reps provides a native review queue before messages go out. In pilot territory, you scope a Play to one segment and trigger sends only on high-intent signal contacts. At full rollout, AI Agents operate autonomously on signal-triggered workflows. Pylon achieved 4.2x ROI within weeks of onboarding, and Navattic generated $100K+ in pipeline in their first 10 days on the platform.

Sources

- UserGems: Are AI SDRs Really Worth It in 2026?

- Qualified: AI SDR Implementation: The Comprehensive Guide

- Unify Customer Story: Navattic generates $100K+ in pipeline in 10 days

- Unify Customer Story: Pylon achieves 4.2x ROI with Unify

- Unify: This Year in Performance (2025)

- Unify Explore: Pipeline as a Science: Metrics That Matter in Modern Outbound

- SalesSo: Outbound SDR Statistics 2025

- Gradient Works: Benchmarks for Metrics That Matter to an SDR or BDR Team

- Product Growth Blog: The AI SDR Playbook: What Actually Works in 2026

- AiSDR: State of AI SDR Industry 2026 Report

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
