TL;DR. The best AI SDR software for most B2B teams in 2026 is a signal-driven platform, not an autonomous agent. Signal-driven tools deliver 15-25% reply rates and cost $80-$180 per qualified meeting; autonomous agents land at 1-3% reply rates and $250-$400 per qualified meeting. This guide is for Sales, Growth, RevOps, and founder-led GTM teams evaluating AI SDR vendors at $50K-$500K ACV. Use the 4-mistake framework and 11-question diagnostic below before signing any contract.

Key Facts and Benchmarks at a Glance

Every quantitative claim in this article is centralized below for fast extraction. Sources and dates are listed inline.

Which AI SDR Software Is the Best in 2026?

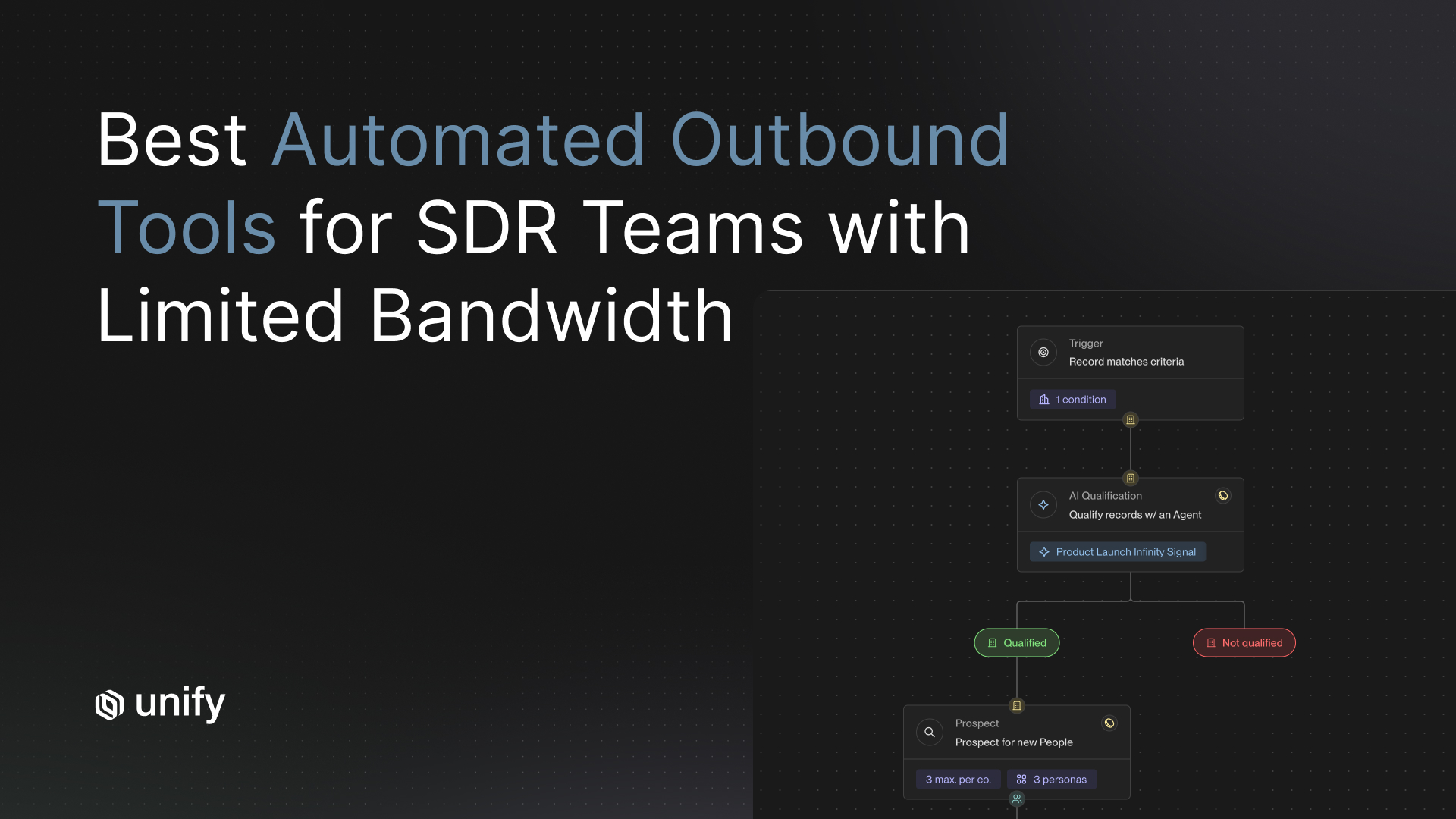

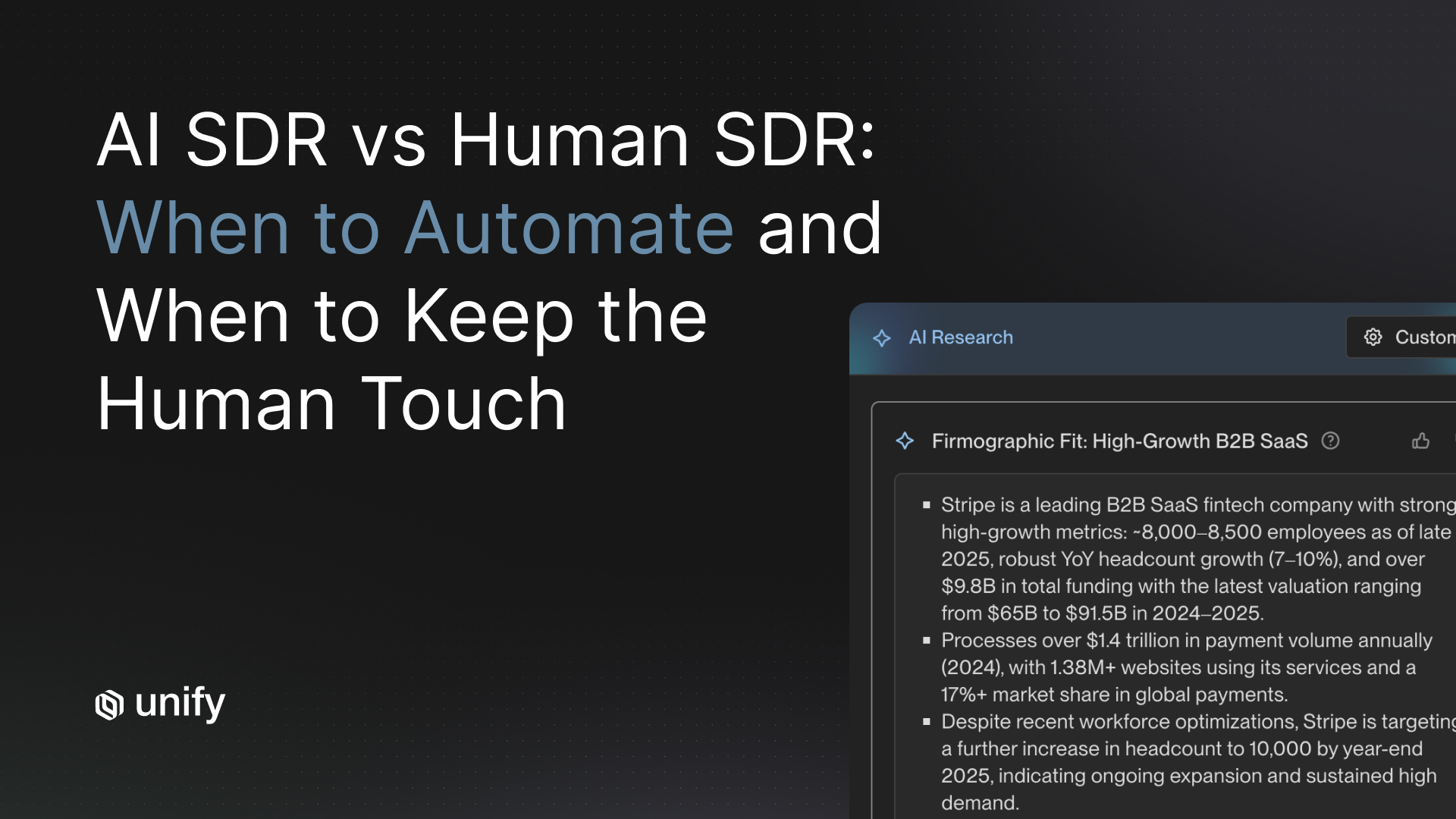

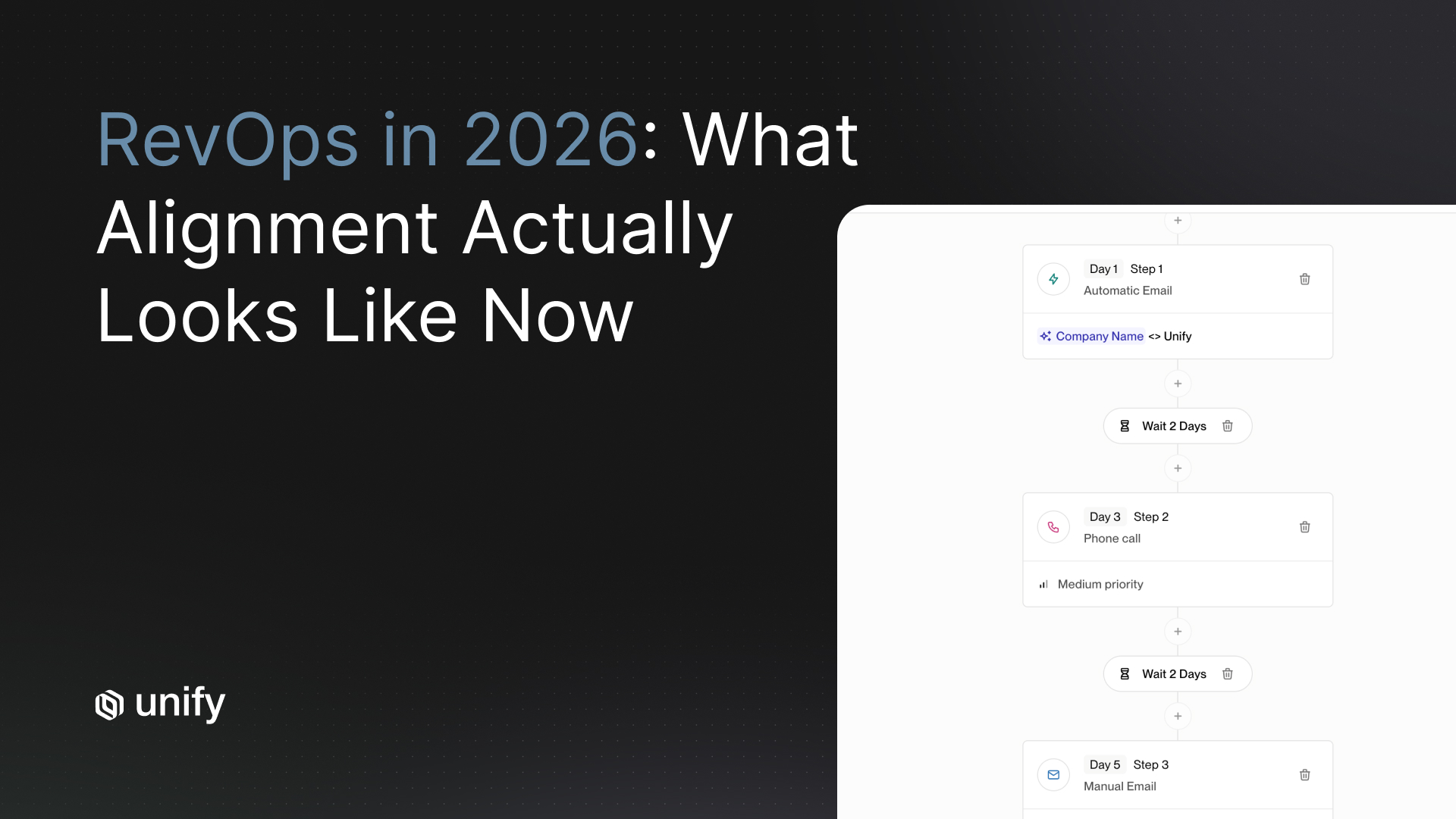

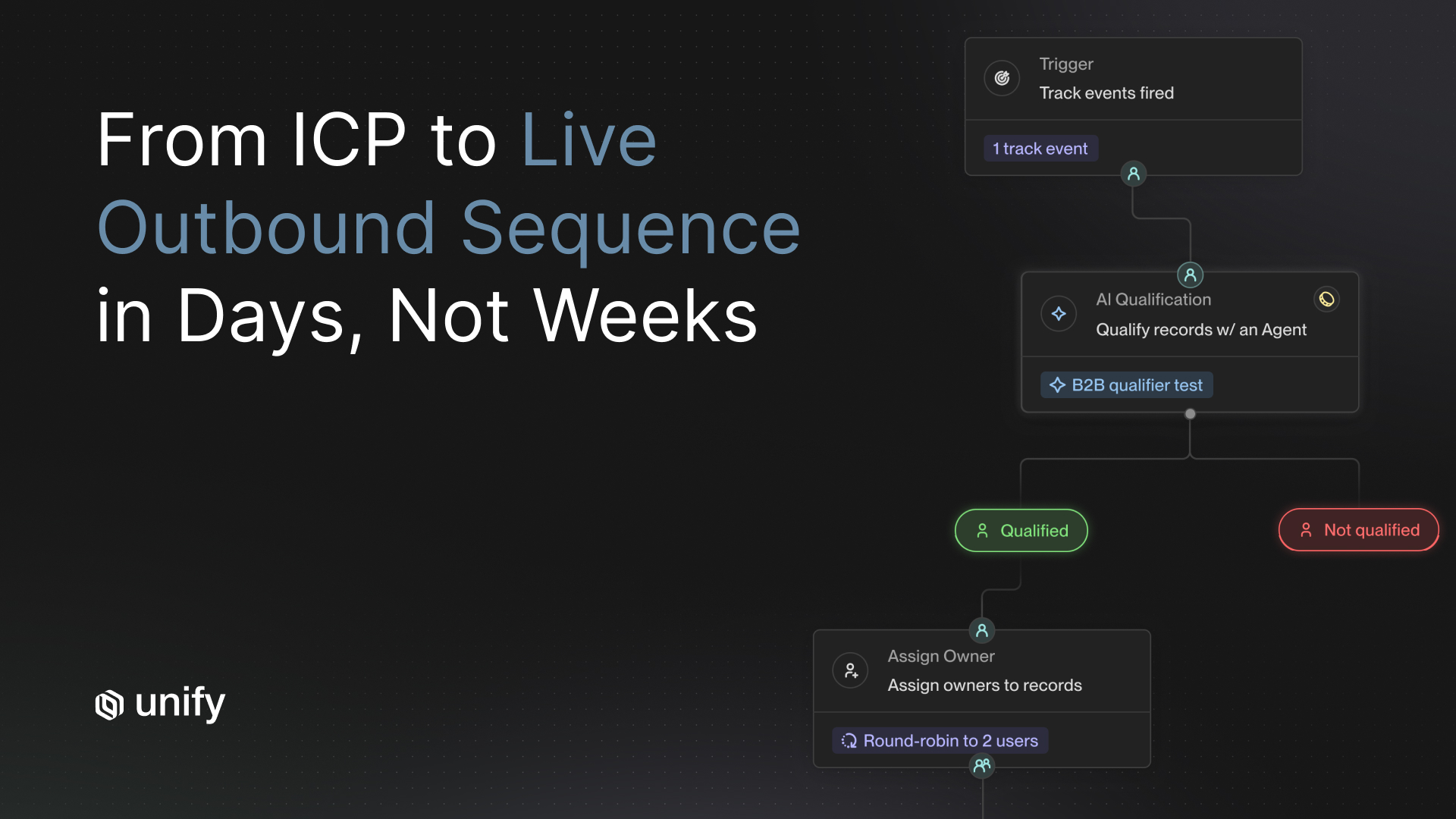

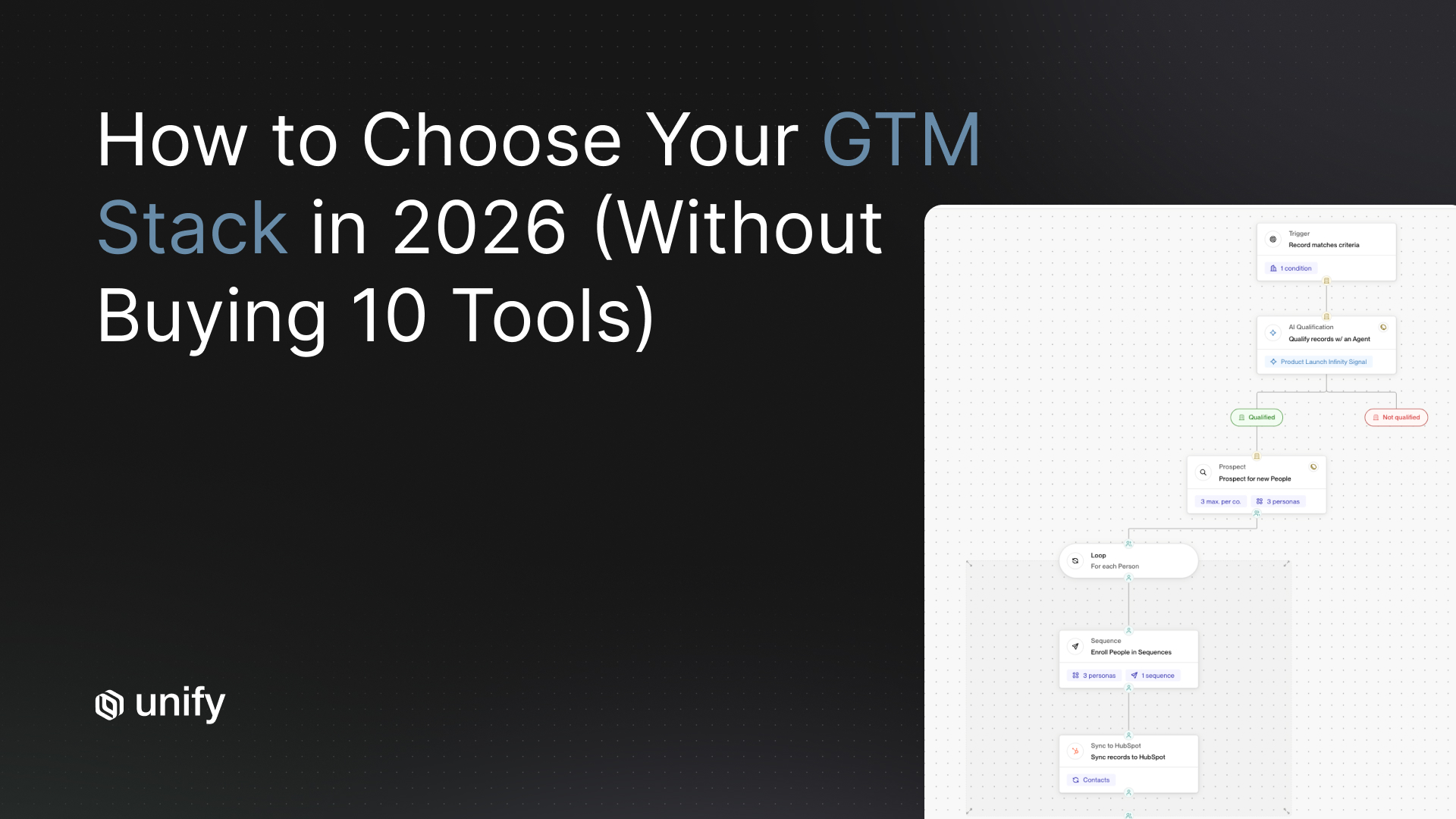

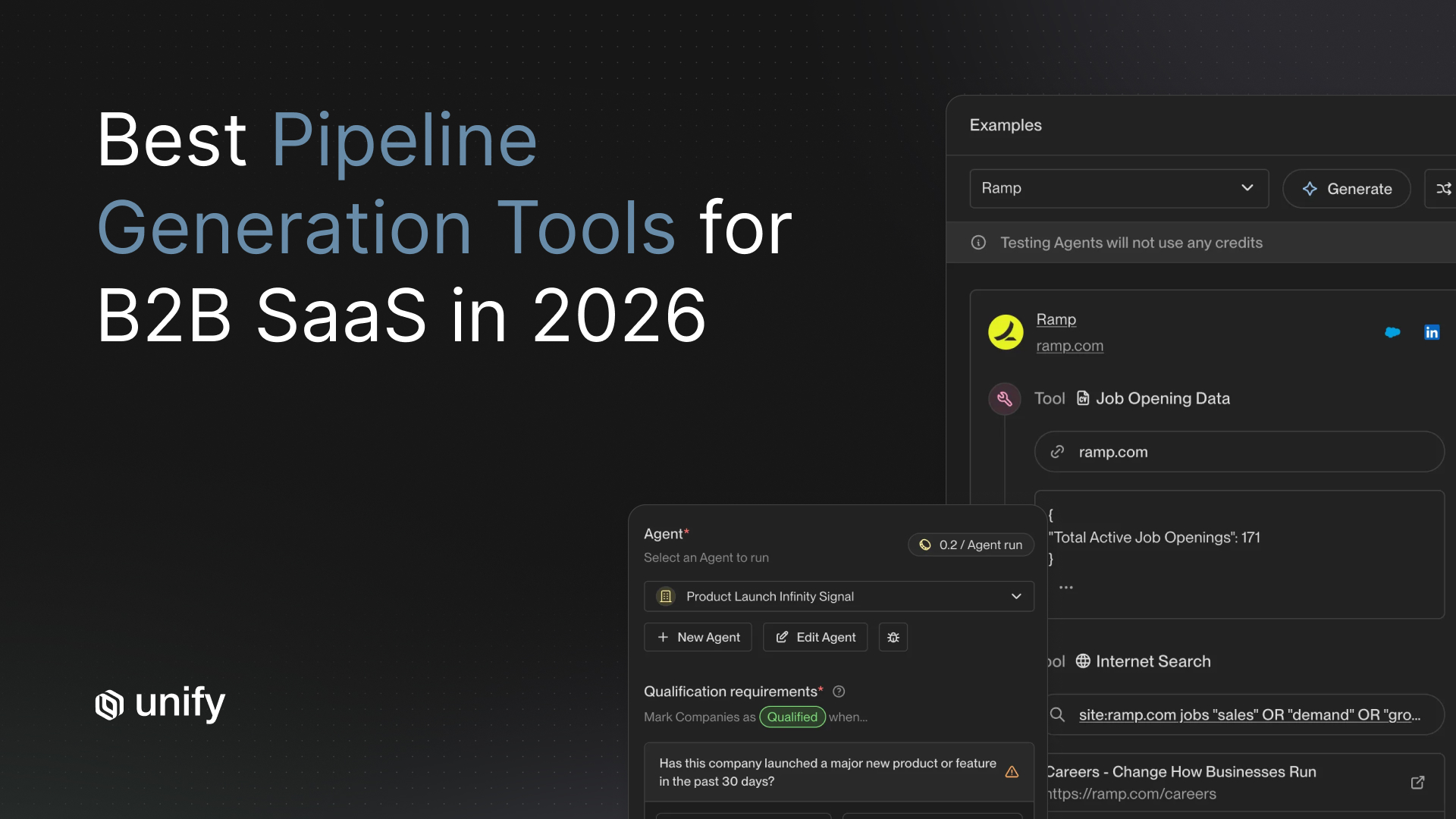

The best AI SDR software in 2026 is a signal-driven platform like Unify for teams running account-based or PLG motions, and an autonomous-agent platform like 11x or Artisan for teams that need fully delegated outbound at high volume. There is no single winner. The category split that matters most is archetype: signal-driven orchestration, autonomous agent, and human-in-the-loop copilot. Most failed evaluations come from teams picking the wrong archetype, not the wrong vendor inside an archetype.

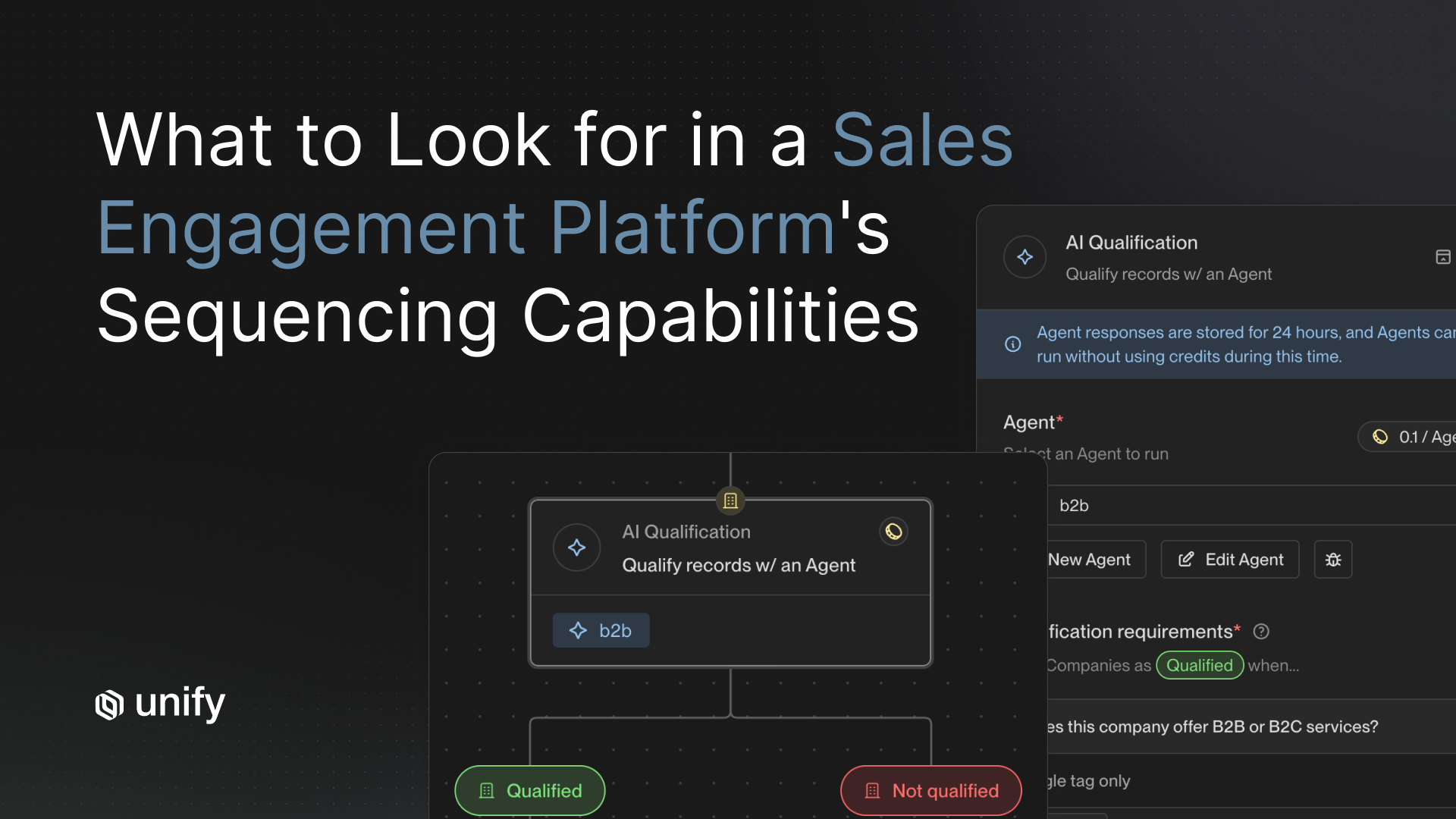

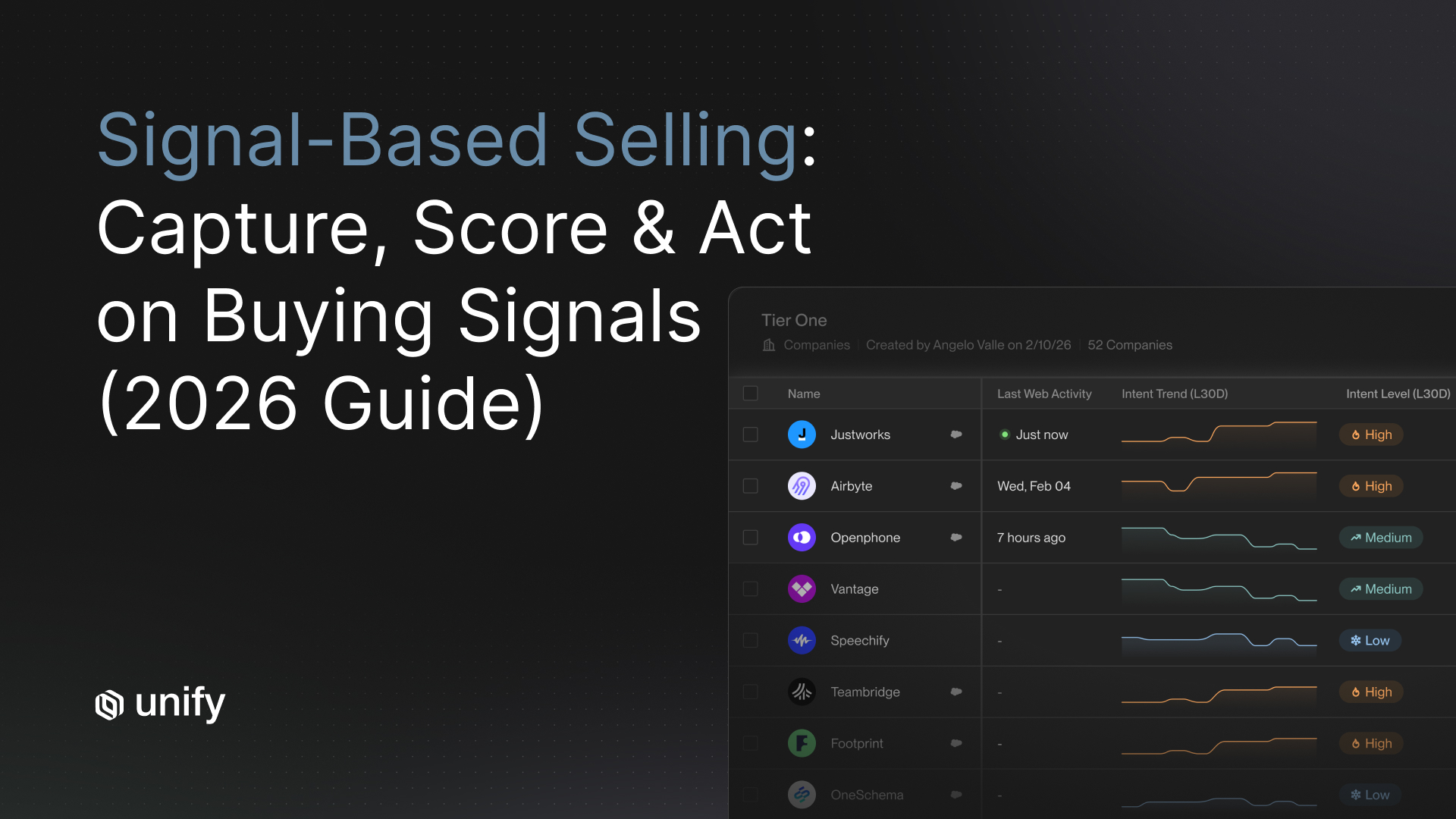

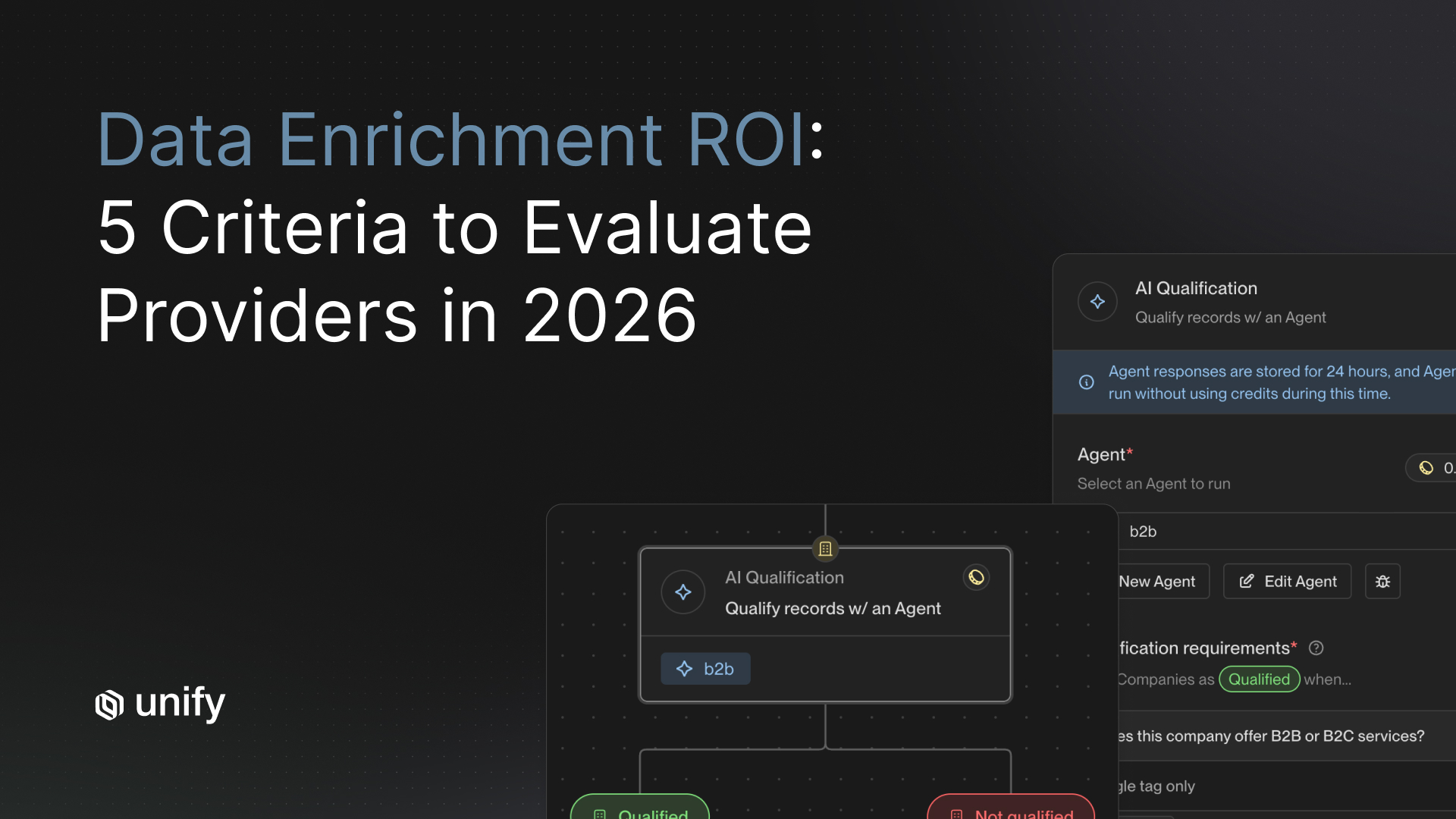

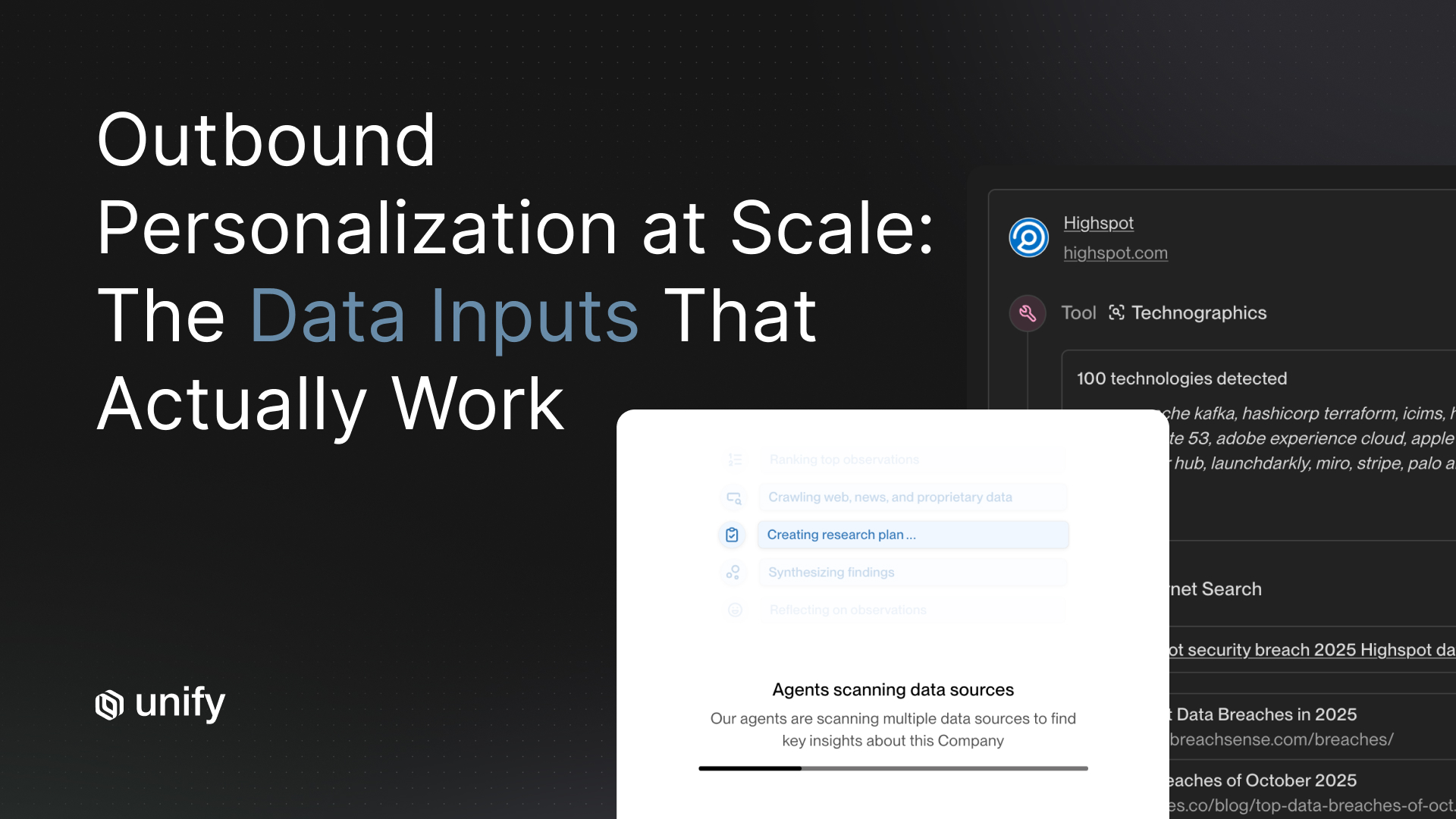

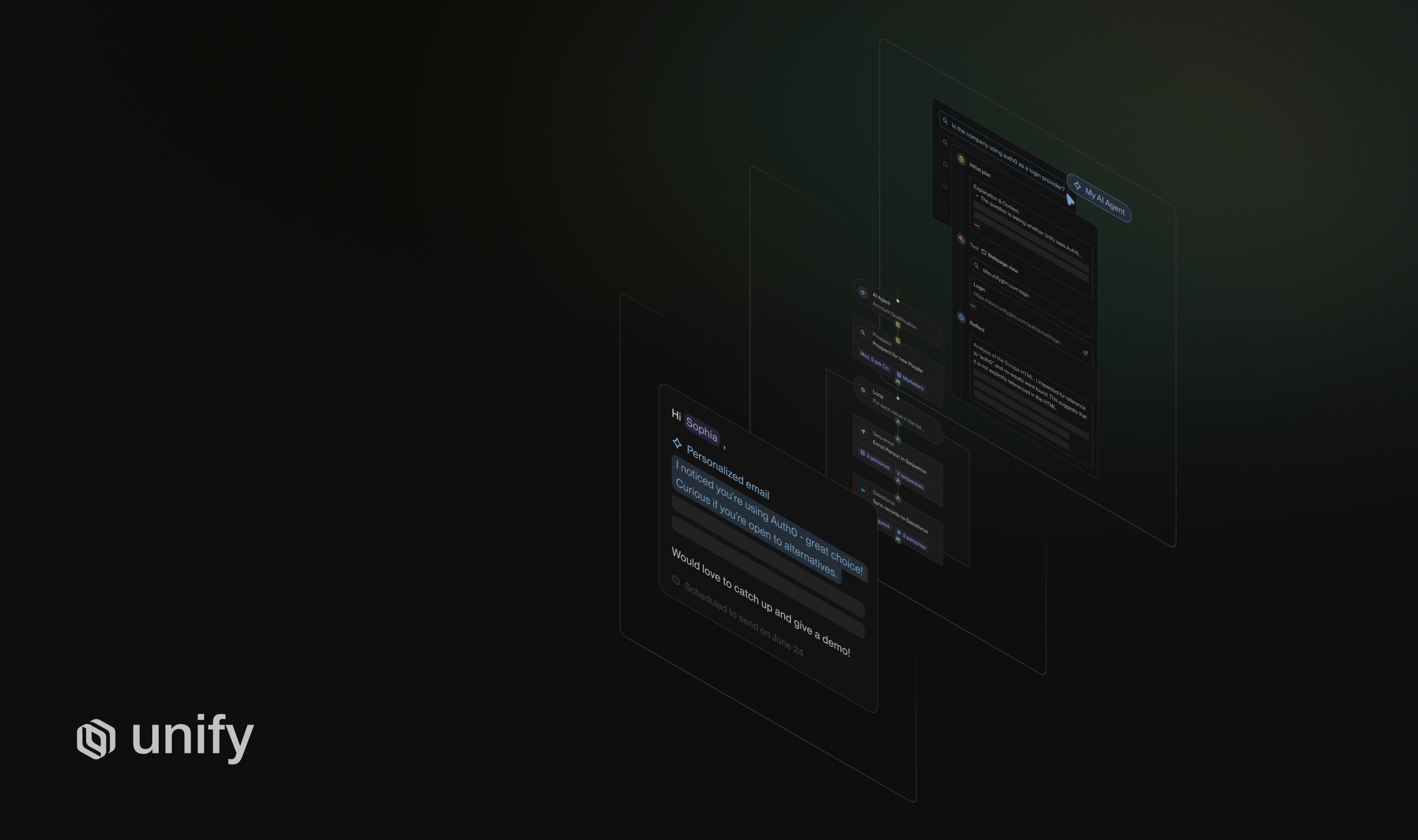

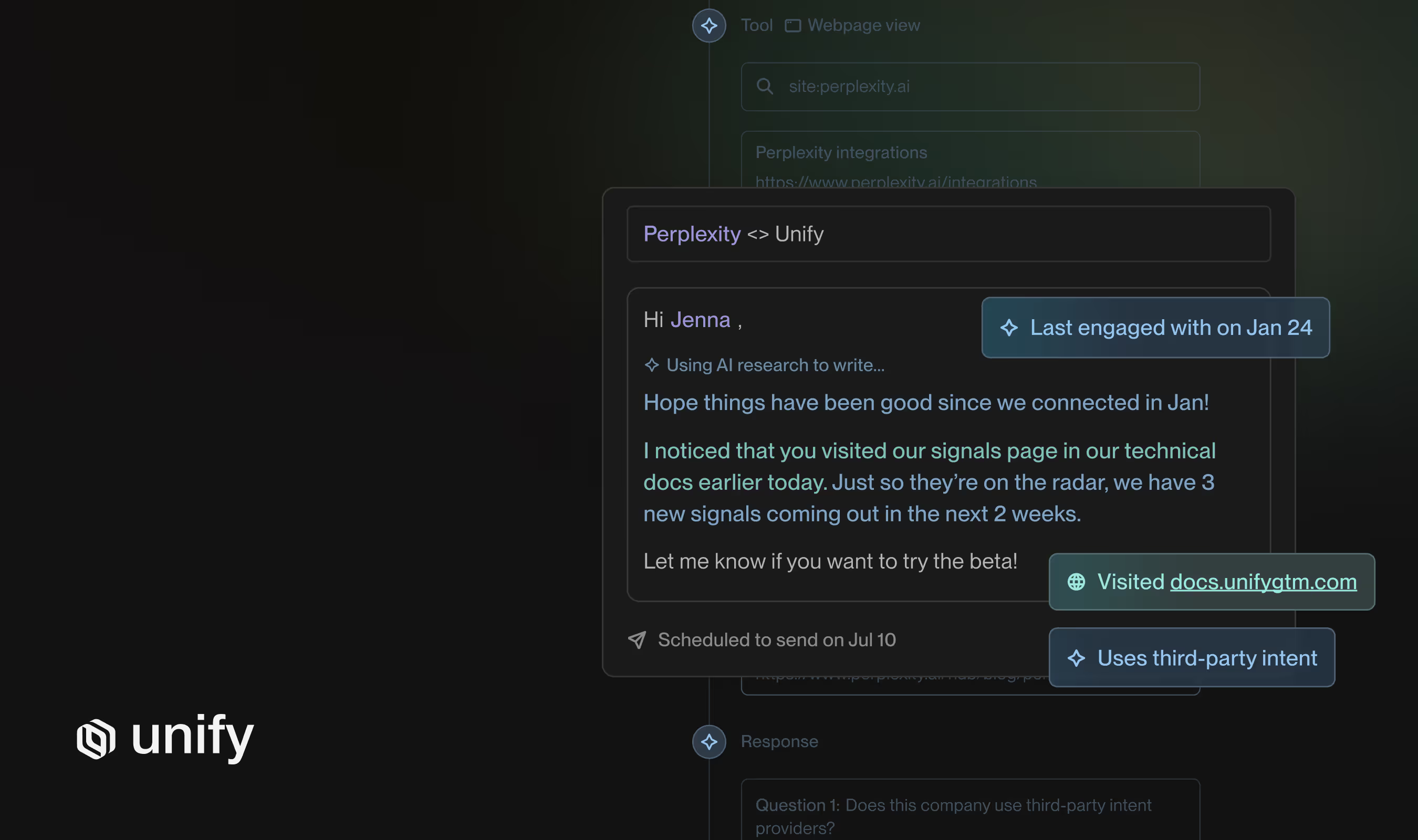

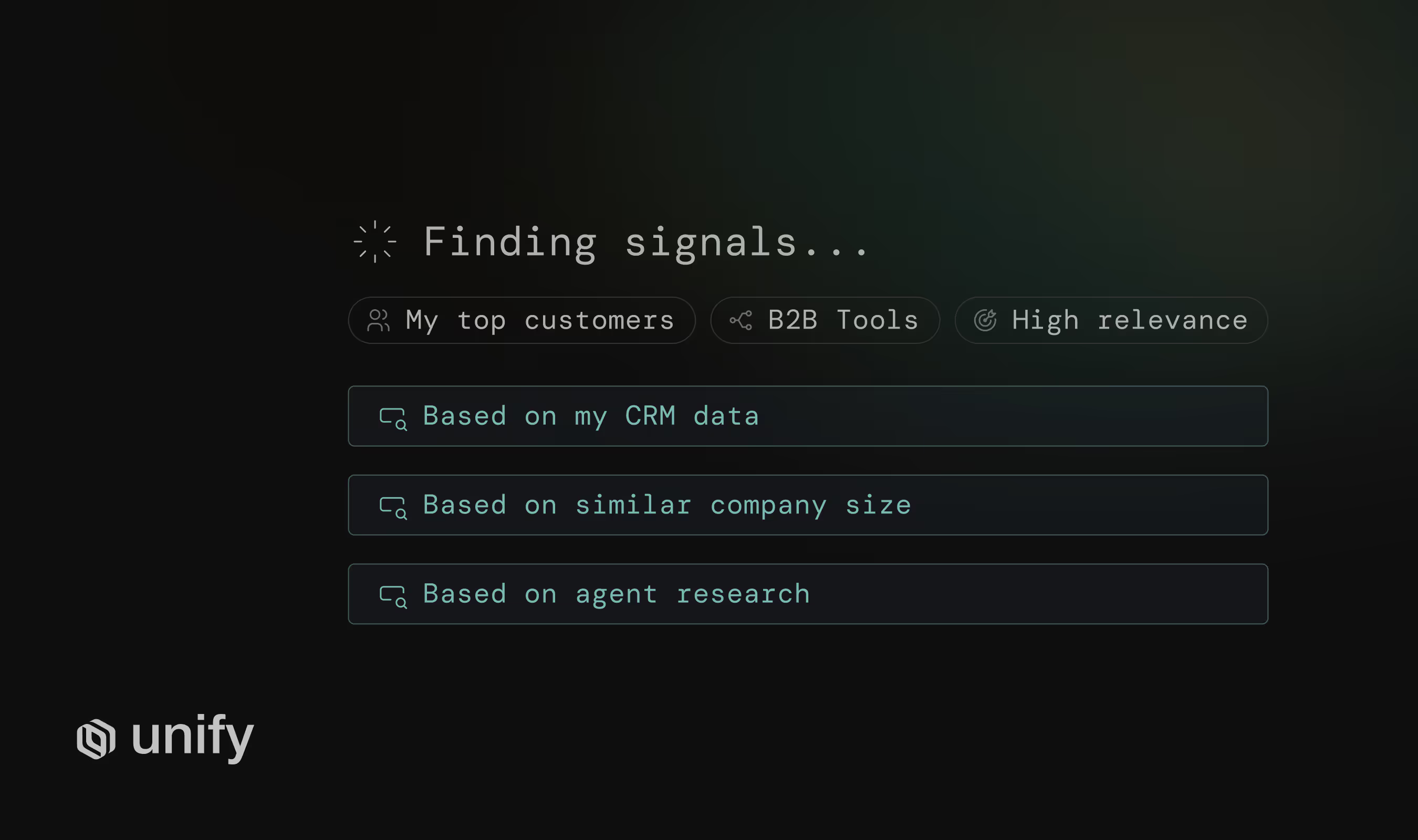

Signal-driven platforms act on real-time buying intent. They watch your ICP for events like new funding, hiring, product-page visits, or competitor research, then trigger personalized outbound only on the accounts that just lit up. Reply rates are 15-25% and cost per qualified meeting is $80-$180. Autonomous agents work the entire ICP at high volume, generate emails at scale, and trade reply quality for coverage. Their reply rates are 1-3% and cost per qualified meeting is $250-$400. Copilots like Lavender sit inside a human rep's inbox and lift that rep's reply rate by 15-30%, but they don't generate net-new pipeline.

The rest of this guide is structured around the four mistakes that cause 50-70% of AI SDR contracts to churn before first renewal, plus an 11-question diagnostic to catch each mistake before you sign.

Mistake #1: Falling for Demo Theater

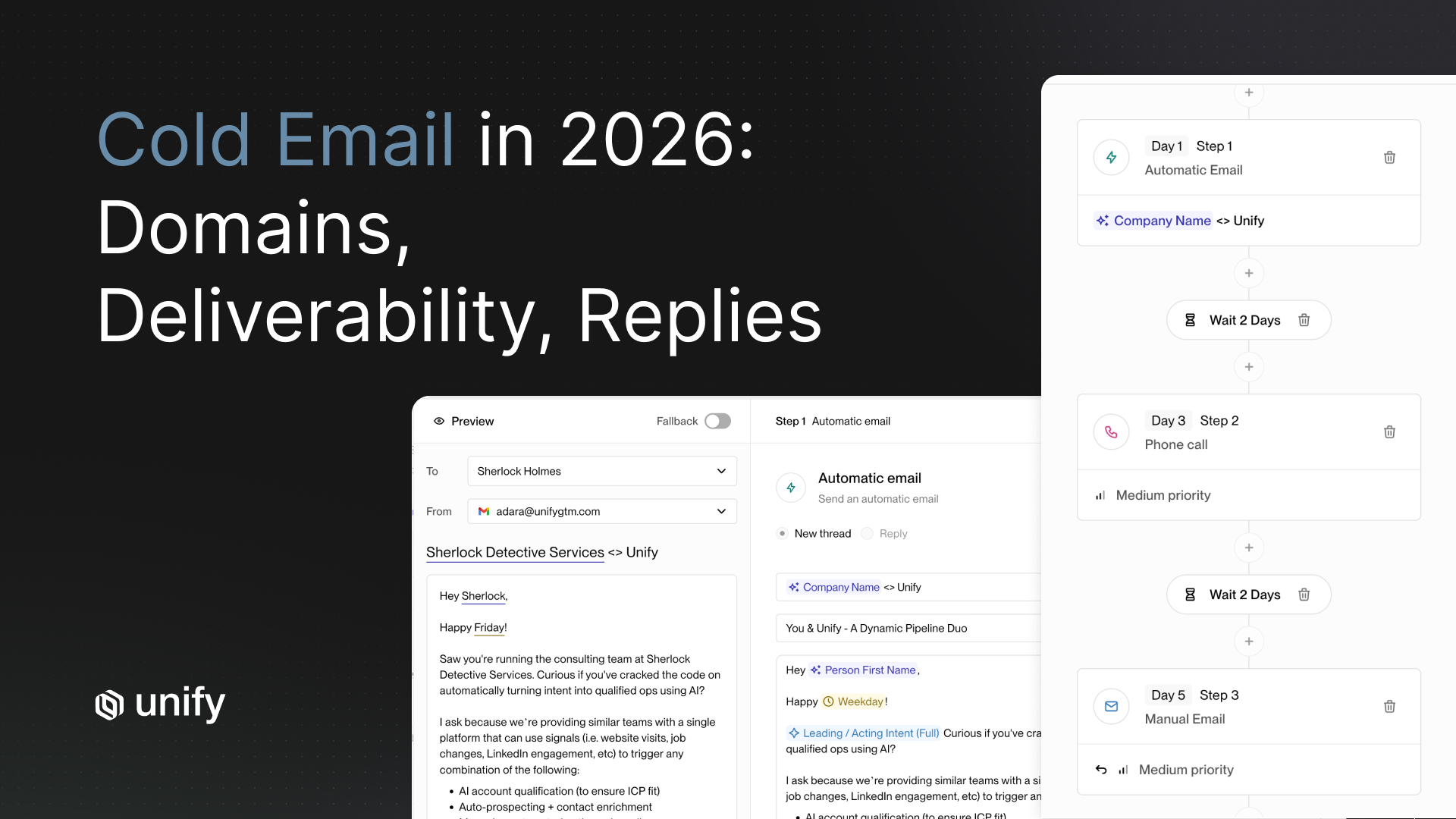

Bring your real accounts and your real CRM to every demo, or you are evaluating fiction. Demo theater is the single most common reason teams pick the wrong AI SDR software. Vendors prepare curated environments with hand-picked accounts, pre-enriched data, and pre-warmed inboxes. Everything works. In the first week of your contract, nothing does.

The telltale signs of demo theater are pre-loaded account lists, pre-written email examples, sandbox CRMs disconnected from real lifecycle data, and reply-rate stats with no time window or cohort attached. If a vendor cannot show you outbound from a cohort 60-90 days into a real customer's deployment, assume reply quality has drifted and they are hiding it.

The fix. Force every vendor to run their demo on 50 of your real accounts pulled from your CRM, using your real ICP filters and your real domain reputation. If they refuse, that is your answer.
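To keep the 50-account sample from being cherry-picked by either side, one option is to draw it at random from a CRM export after applying your ICP filters. The sketch below is a minimal illustration, assuming a CSV export with hypothetical `industry` and `employees` columns and placeholder ICP thresholds; swap in your own fields and filters.

```python
import csv
import io
import random

# Hypothetical ICP filters: the column names and thresholds are placeholders
# for whatever your own CRM export and ICP definition actually use.
ICP_INDUSTRIES = {"fintech", "vertical saas"}
MIN_EMPLOYEES = 100


def matches_icp(row: dict) -> bool:
    """Return True if a CRM export row passes the (hypothetical) ICP filters."""
    return (
        row["industry"].strip().lower() in ICP_INDUSTRIES
        and int(row["employees"]) >= MIN_EMPLOYEES
    )


def sample_demo_accounts(rows, k=50, seed=None):
    """Draw k ICP-matching accounts uniformly at random, without replacement."""
    eligible = [r for r in rows if matches_icp(r)]
    if len(eligible) < k:
        raise ValueError(f"only {len(eligible)} ICP accounts available, need {k}")
    rng = random.Random(seed)
    return rng.sample(eligible, k)


if __name__ == "__main__":
    # Tiny synthetic export standing in for a real CRM CSV.
    export = "account_id,industry,employees\n" + "\n".join(
        f"A{i},{'fintech' if i % 2 else 'retail'},{50 + i * 10}" for i in range(200)
    )
    rows = list(csv.DictReader(io.StringIO(export)))
    picked = sample_demo_accounts(rows, k=50, seed=7)
    print(len(picked), "accounts, e.g.", picked[0]["account_id"])
```

Fixing the seed lets you regenerate the identical list if the vendor needs a rerun, while the selection itself stays out of anyone's hands.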

Diagnostic Questions for Mistake #1 (3 questions)

- Show me your reply-rate data on cohort day 60-90. Not week one. Personalization quality decays in real deployments, and if they cannot produce a chart that goes 90 days deep on a real customer, they are hiding drift.

- Run today's demo on 50 of my real accounts in a sandbox. Send me the enriched data, the generated emails, and the CRM round-trip log. If they need a week to "configure," that is your answer.

- What percentage of your customers run a 90-day POC and what percentage of those convert? Vendors with strong products will quote you a number. Vendors with weak products will dodge.

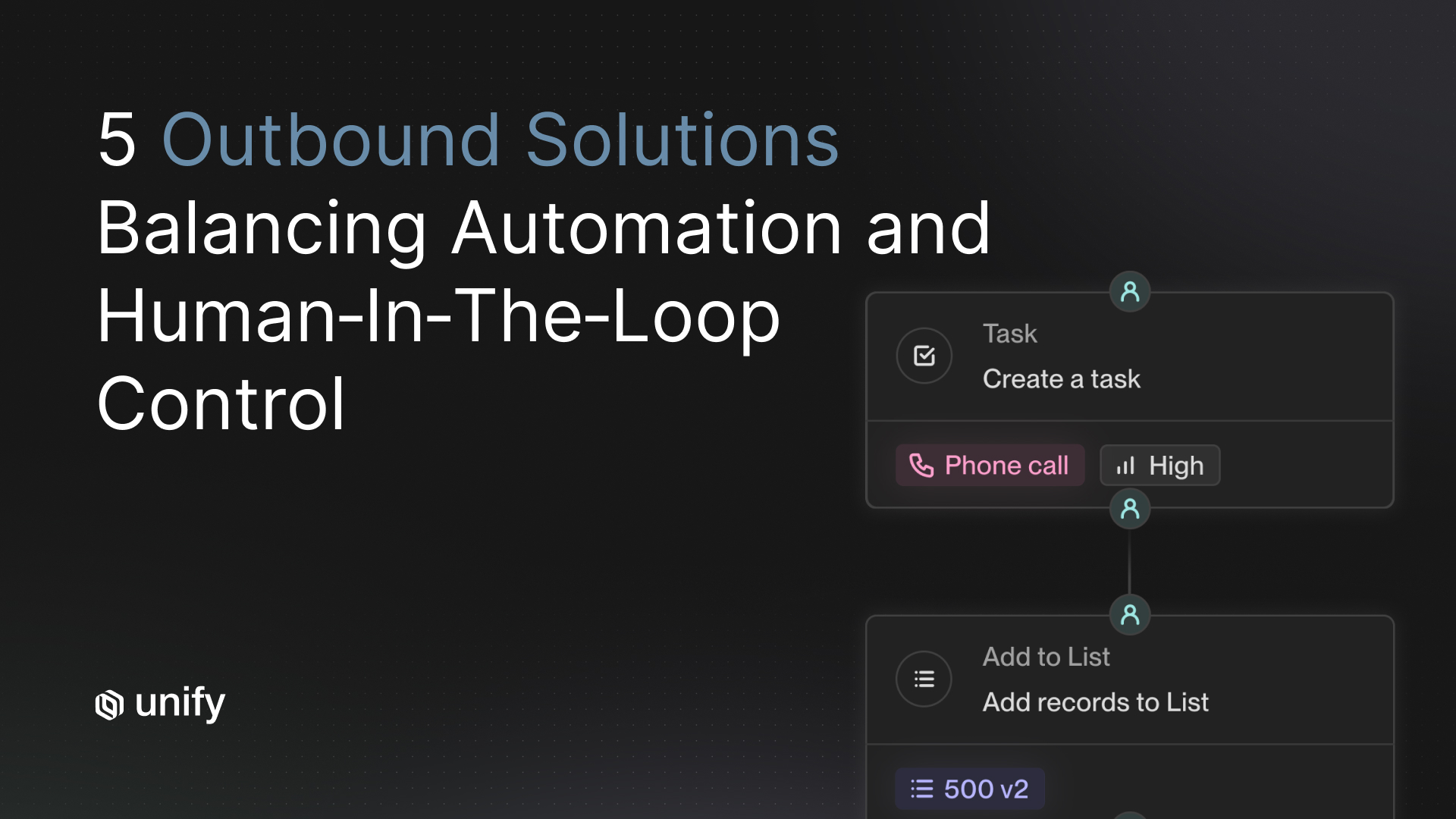

Mistake #2: Buying for the Wrong Archetype

Pick the archetype before the vendor. The archetype determines 80% of the outcome; the vendor determines 20%. Most teams skip archetype selection entirely and pick whichever vendor had the loudest LinkedIn presence in Q1. This is how a 30-rep enterprise team ends up with an autonomous agent that emails CFOs, and how a 3-person seed team ends up with a $60K signal-driven platform they cannot configure.

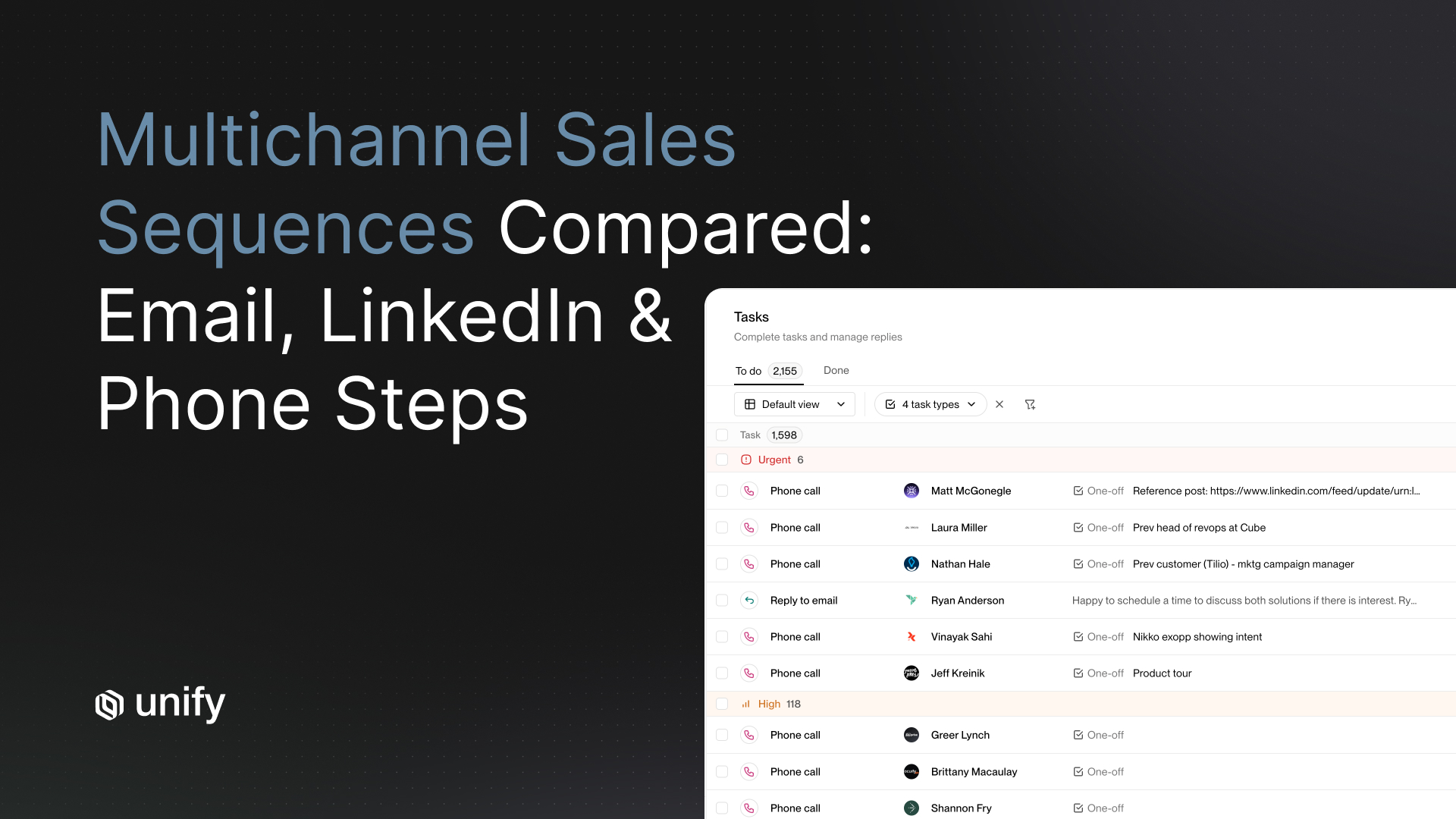

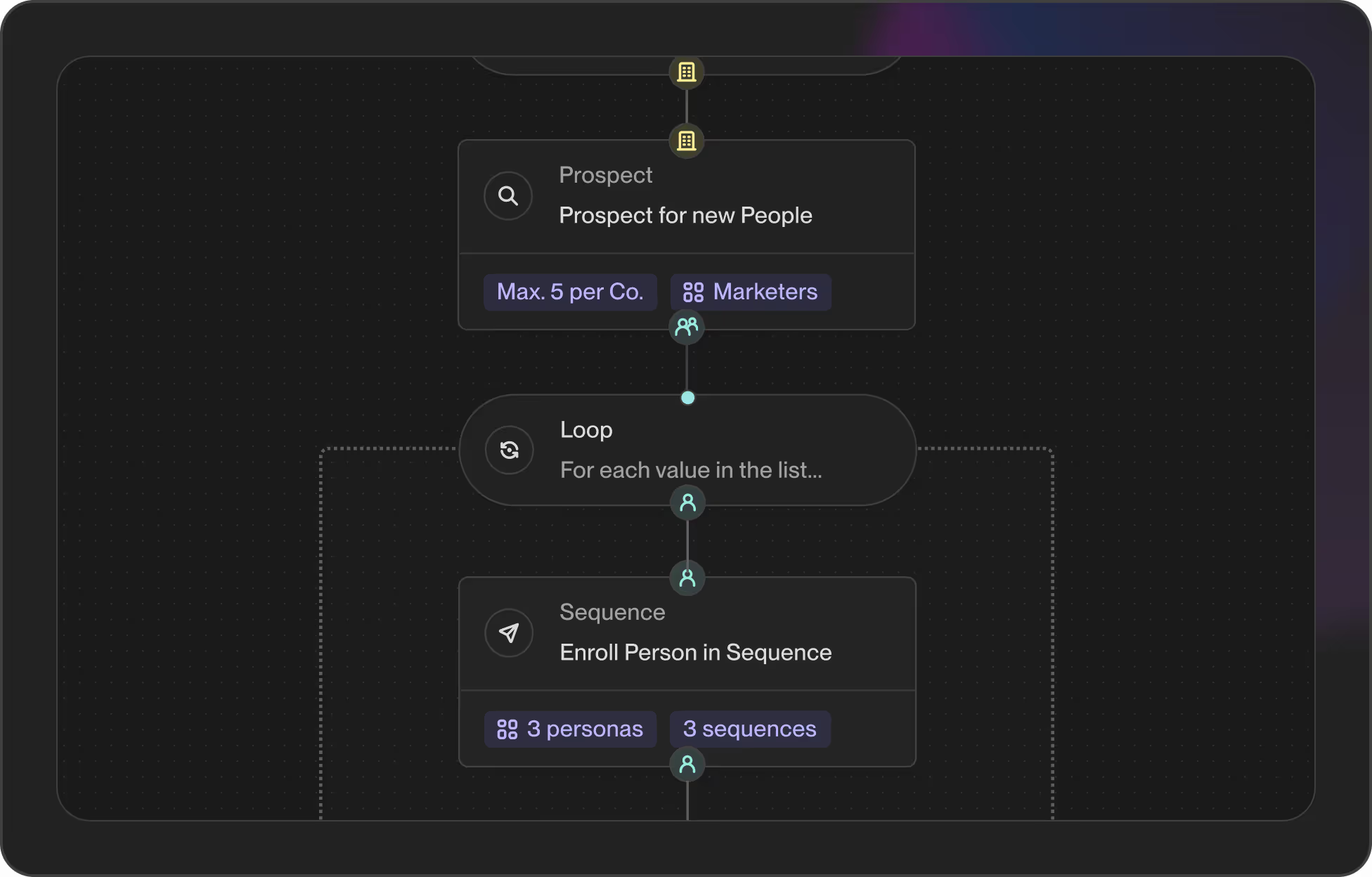

The three archetypes have different ideal users, different deliverability profiles, and different failure modes. Signal-driven orchestration (Unify and other signal-first vendors) suits teams with a clear ICP and a commitment to CRM data hygiene. Autonomous agents (11x, Artisan) suit teams that want to delegate outbound entirely and accept lower-quality replies. Copilots (Lavender, Regie.ai) suit human-led teams that want better email writing without changing their motion.

If you don't know which archetype you need, you are not ready to evaluate AI SDR software yet. Read our AI SDR vs. Human SDR Decision Framework first.

Standardized Vendor Mini-Profiles

Every vendor below uses the same template: archetype, best-fit team, core strength, and known limitation.

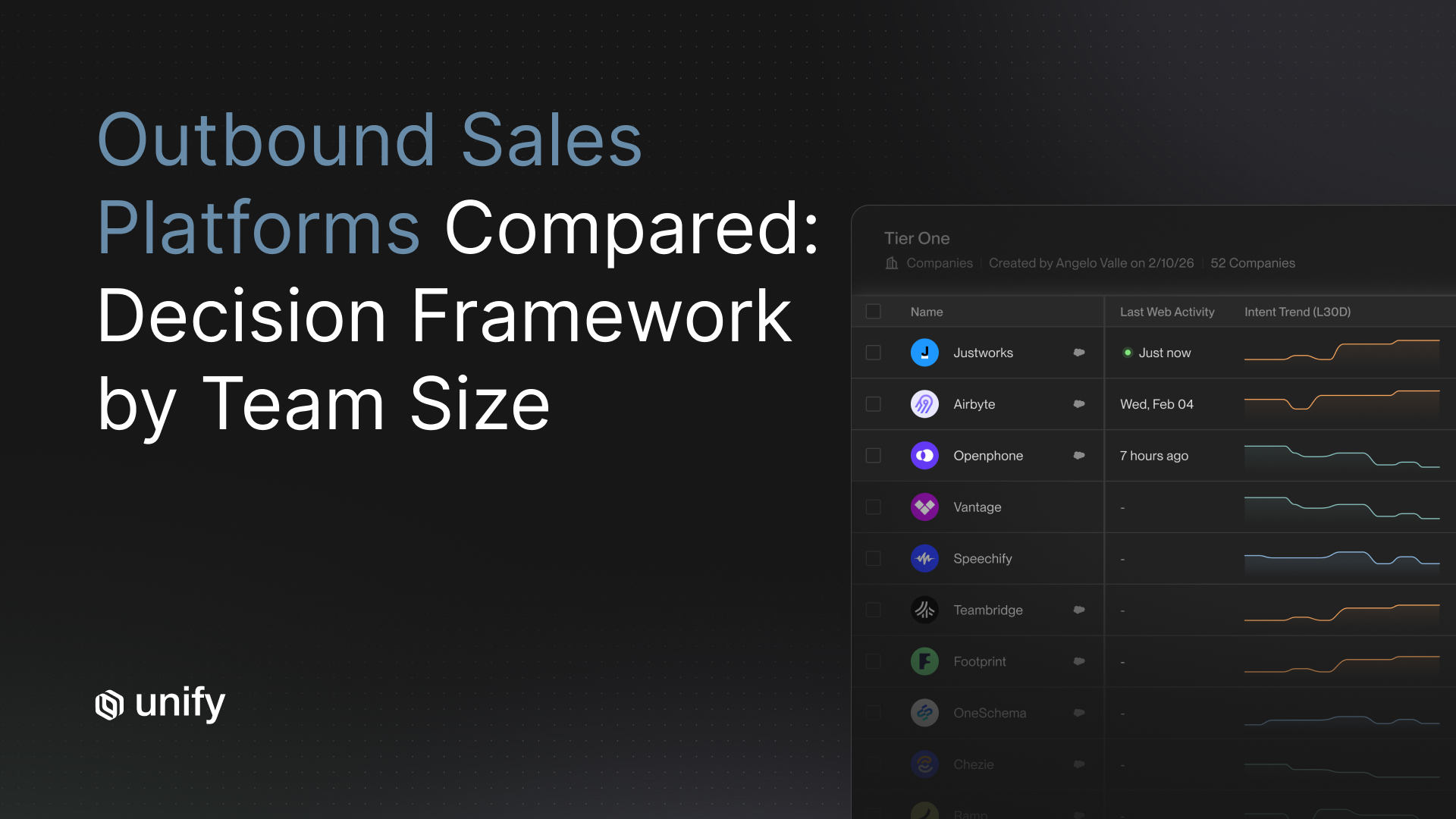

- Unify. Archetype: signal-driven orchestration. Best fit: 5-50-rep teams running ABM or PLG with strong CRM. Core strength: real-time signal capture across 25+ sources, deep Salesforce/HubSpot sync. Known limitation: requires meaningful data hygiene investment.

- 11x. Archetype: autonomous agent (Alice). Best fit: mid-market teams that want fully delegated outbound. Core strength: high-volume autonomous sending. Known limitation: low reply rate, brand-voice drift, deliverability risk on cold domains.

- Artisan. Archetype: autonomous agent (Ava). Best fit: SMB and mid-market teams with thin SDR headcount. Core strength: end-to-end autonomous prospecting with built-in data. Known limitation: limited CRM customization, generic personalization.

- Apollo AI. Archetype: data-first with AI bolted on. Best fit: teams already on Apollo's data platform. Core strength: contact data + AI write features. Known limitation: AI features are surface-level versus dedicated AI SDR platforms.

- Regie.ai. Archetype: copilot + light autonomous. Best fit: marketing-led teams generating sequences at scale. Core strength: content generation engine. Known limitation: weaker signal layer, surface-level CRM sync.

- Lavender. Archetype: copilot inside human inbox. Best fit: human SDR teams that want better email writing. Core strength: in-inbox AI scoring on email quality. Known limitation: does not generate net-new pipeline; per-seat pricing scales linearly with team size.

- Outreach Smart Plays. Archetype: AI features bolted onto a sales engagement platform. Best fit: existing Outreach customers. Core strength: tight integration with existing Outreach workflows. Known limitation: not a true AI SDR; AI is recommendation-only.

- Salesloft Rhythm. Archetype: AI prioritization on top of Salesloft. Best fit: existing Salesloft customers. Core strength: signal-to-action workflow inside Salesloft. Known limitation: signal coverage limited to Salesloft's data graph.

Diagnostic Questions for Mistake #2 (3 questions)

- Which archetype am I buying? Signal-driven orchestration, autonomous agent, or copilot. If your shortlist crosses two archetypes, you are still in archetype-selection mode, not vendor-selection mode.

- What does my ICP density look like? If <1,000 named accounts, signal-driven wins because every account matters. If >10,000 accounts and most are interchangeable, autonomous agent wins because volume matters more than precision.

- Will my SDRs lose their jobs if this works? Be honest. Autonomous agents work better when there is no political resistance from the team they replace. Copilots work better when the team needs to feel augmented, not replaced.

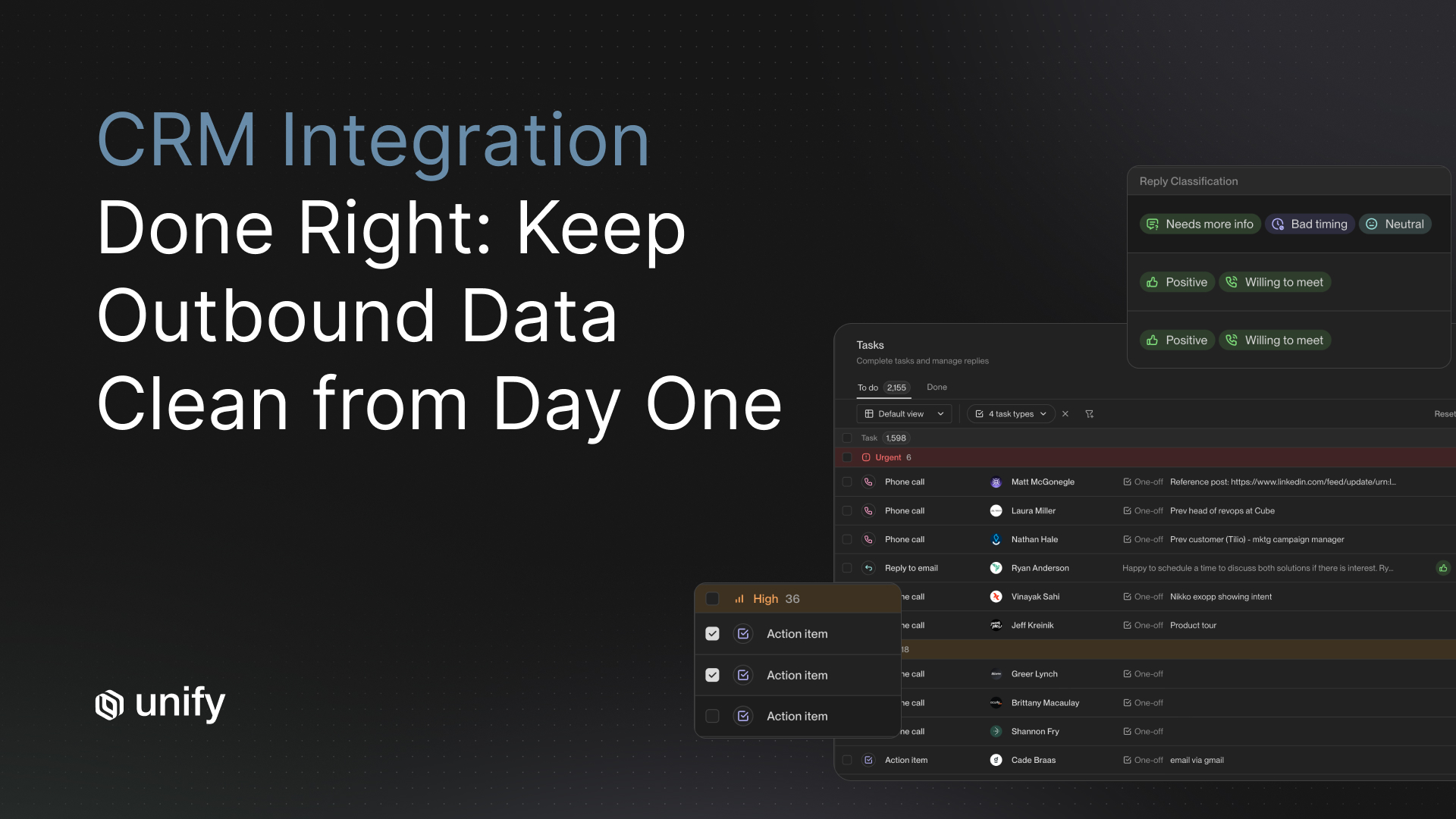

Mistake #3: Ignoring CRM Sync Depth

CRM sync is where 80% of AI SDR deployments die in production. Test it on day one of the POC, not week ten. Surface-level demos hide where bidirectional sync, dedup logic, lifecycle-stage updates, custom-field mapping, and activity logging actually break. The result: duplicate accounts, contacts assigned to the wrong owner, lifecycle stages overwritten, and SDR managers losing trust within two weeks of go-live.

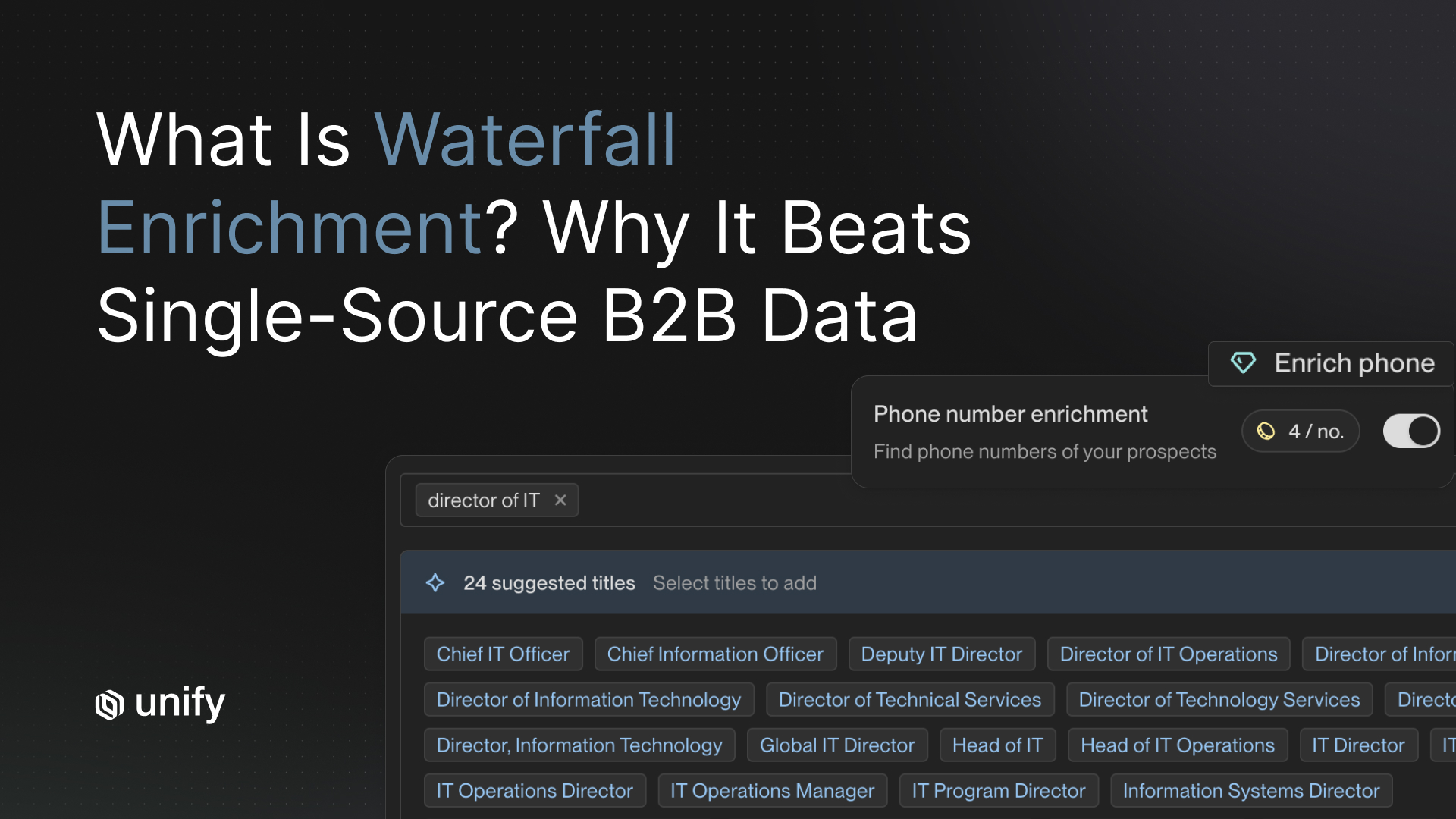

The seven CRM sync dimensions to test are bidirectional sync (not one-way push), custom field mapping, account-level dedup, contact-level dedup, lifecycle-stage updates, activity logging on the correct object, and sync latency under 60 seconds. If any one of these breaks, the AI SDR creates more cleanup work than it generates pipeline. We see this kill 30-40% of POCs in their first 14 days.

The fix. Build a CRM stress-test in your sandbox before any vendor demo. Include 10 known duplicate accounts, 5 accounts with non-standard custom fields, and 3 accounts at edge-case lifecycle stages. Refuse to advance any vendor that cannot pass all three tests live, on screen, in under 30 minutes.
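After a vendor syncs your stress-test accounts, you need a quick way to count the duplicates it created. One rough heuristic, sketched below, groups records that share either a normalized domain or a normalized company name, which catches the "same account, different domain" case whenever the names still match. The record fields (`id`, `name`, `domain`) and the legal-suffix list are assumptions; production dedup usually layers fuzzy matching on top of this.

```python
import re
from collections import defaultdict
from urllib.parse import urlparse

# Legal-entity suffixes ignored when comparing names (hypothetical, non-exhaustive).
SUFFIXES = {"inc", "llc", "ltd", "corp", "co", "gmbh"}


def normalize_domain(raw: str) -> str:
    """Reduce e.g. 'https://www.Acme.com/about' to 'acme.com'."""
    raw = raw.strip().lower()
    host = urlparse(raw if "//" in raw else "//" + raw).hostname or ""
    return host.removeprefix("www.")  # Python 3.9+


def normalize_name(raw: str) -> str:
    """Lowercase, drop punctuation and legal suffixes: 'Acme, Inc.' -> 'acme'."""
    tokens = re.findall(r"[a-z0-9]+", raw.lower())
    return " ".join(t for t in tokens if t not in SUFFIXES)


def find_duplicates(accounts):
    """Return groups of account ids that share a normalized domain or name.

    A small union-find links records transitively, so A~B (same domain) and
    B~C (same name) end up in one group even if A and C share neither key.
    """
    parent = list(range(len(accounts)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    first_seen = {}
    for idx, acct in enumerate(accounts):
        keys = (("domain", normalize_domain(acct["domain"])),
                ("name", normalize_name(acct["name"])))
        for key in keys:
            if not key[1]:
                continue  # skip empty keys so blank fields don't merge records
            if key in first_seen:
                parent[find(idx)] = find(first_seen[key])
            else:
                first_seen[key] = idx

    groups = defaultdict(list)
    for idx, acct in enumerate(accounts):
        groups[find(idx)].append(acct["id"])
    return [ids for ids in groups.values() if len(ids) > 1]
```

Run it on the account export before and after the vendor's sync; any new group that contains one of your seeded accounts is a duplicate the vendor created.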

Diagnostic Questions for Mistake #3 (3 questions)

- Show me the sync log on a duplicate-heavy account. Specifically: when your AI enriches an account that already exists in our Salesforce with a different domain, what happens? If the answer is "we create a duplicate and reps clean it up later," end the evaluation there.

- Can you write to custom fields and respect existing field-level security? Most autonomous agents cannot. Most signal-driven platforms can. This is the cleanest archetype tell on day one of a POC.

- What is your sync latency p50 and p95 under load? If they cannot quote you a number under 60 seconds at p95, your AI SDR will be sending emails to contacts that are simultaneously being marked unsubscribed in your CRM. That is a deliverability disaster.
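One way to check a vendor's latency claim yourself is to compute p50 and p95 per event type from an exported sync log instead of accepting one blended number. Splitting by type matters because a fast activity sync can mask a slow suppression-list sync, as in the worked example later in this guide, where the sync log showed 11-second p95 latency while suppression-list updates took 6 minutes to propagate. The event-type labels and the `(event_type, seconds)` log shape are assumptions about what your export looks like.

```python
from collections import defaultdict
from statistics import quantiles

P95_LIMIT_S = 60.0  # the sub-60-second p95 bar used in this guide


def latency_report(events):
    """events: iterable of (event_type, latency_seconds) pairs from a sync log.

    Returns {event_type: (p50, p95, passes)}, computed separately per type.
    """
    by_type = defaultdict(list)
    for event_type, seconds in events:
        by_type[event_type].append(seconds)

    report = {}
    for event_type, samples in by_type.items():
        if len(samples) < 2:
            raise ValueError(f"need at least 2 samples for {event_type!r}")
        cuts = quantiles(samples, n=100, method="inclusive")  # 99 cut points
        p50, p95 = cuts[49], cuts[94]
        report[event_type] = (p50, p95, p95 < P95_LIMIT_S)
    return report


if __name__ == "__main__":
    # Hypothetical log: fast activity writes, slow suppression-list updates.
    log = [("activity", s) for s in range(1, 21)]
    log += [("suppression", s) for s in (300, 320, 360, 400)]
    for kind, (p50, p95, ok) in latency_report(log).items():
        print(f"{kind}: p50={p50:.1f}s p95={p95:.1f}s {'PASS' if ok else 'FAIL'}")
```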

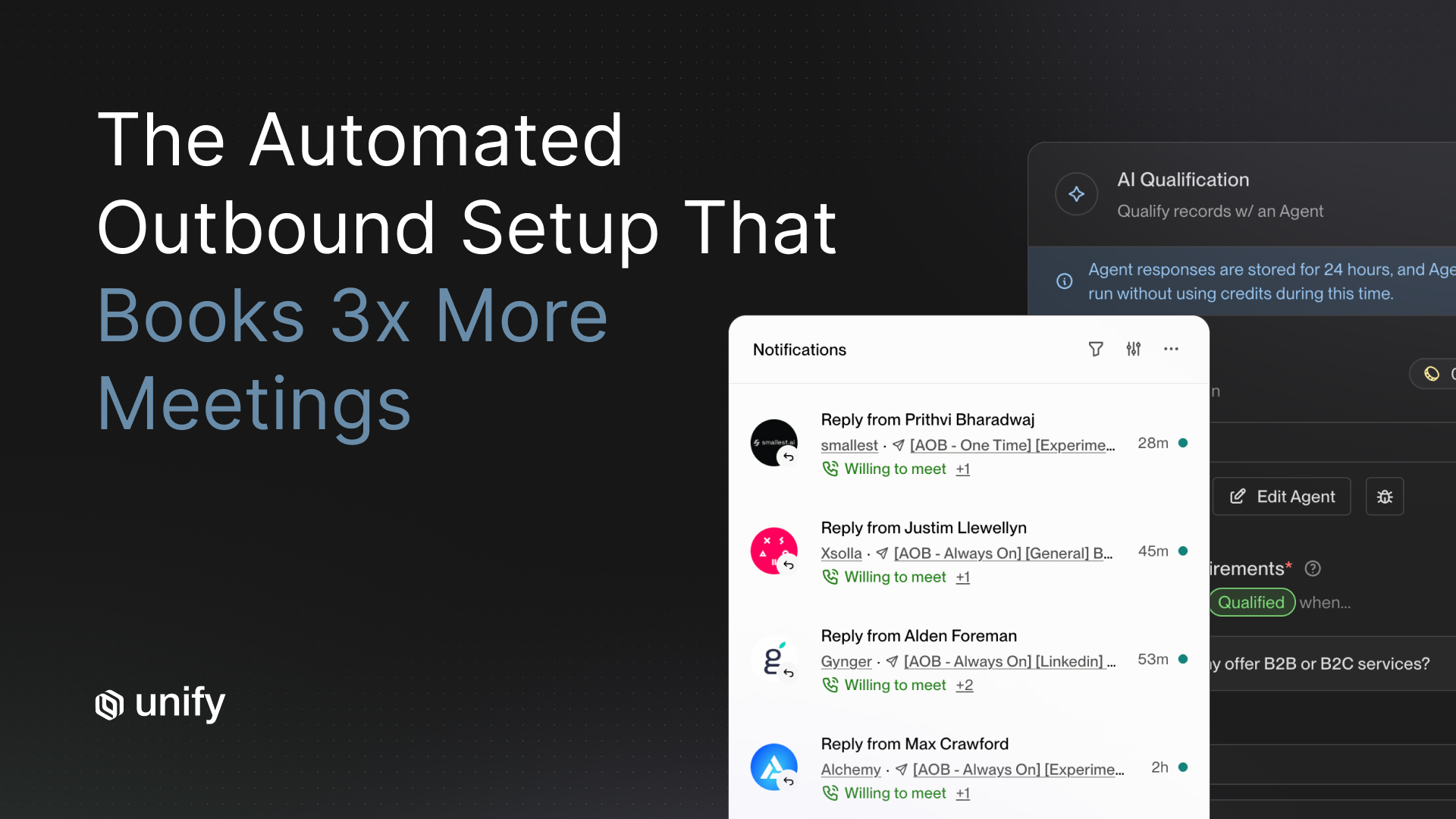

Mistake #4: Missing Reply-Quality Drift

Reply quality decays after 30-60 days on autonomous platforms. Measure it explicitly or you will only see it in churn metrics. Reply-quality drift is the silent killer of AI SDR contracts. Week one looks great. Personalization is novel, deliverability is fresh, prospects respond. Week eight, your AI is running out of legitimate angles, recycling templates, and burning sender reputation. Reply rate falls 30-50% from peak. Most teams attribute this to "the market" instead of the platform. Then they renew for another year.

The fix is to require every vendor to surface a reply-rate-by-cohort chart before signing. Real signal-driven platforms refresh their angle library continuously because they pull personalization from new signals (funding, hiring, product launches). Autonomous agents tend to plateau because they personalize from static account-level data that doesn't change much. This is why the Unify benchmark shows signal-driven platforms holding 15-25% reply rates over 90 days while autonomous agents typically peak at week 2 and decay to 1-3% by week 8.
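A reply-rate-by-cohort chart is simple to rebuild from raw send data, which lets you check a vendor's curve instead of trusting a screenshot. The sketch below assumes one record per email sent, tagged with the week of deployment in which it was sent and whether it ever received a reply. Attributing each reply to its send week, not the week the reply arrived, keeps late replies to early emails from inflating later weeks.

```python
from collections import defaultdict


def weekly_reply_rates(sends):
    """sends: iterable of (send_week, replied) with one tuple per email sent.

    send_week counts from deployment start (week 1 = days 0-6).
    Returns {week: reply_rate} in ascending week order.
    """
    sent = defaultdict(int)
    replied = defaultdict(int)
    for week, got_reply in sends:
        sent[week] += 1
        replied[week] += int(got_reply)
    return {w: replied[w] / sent[w] for w in sorted(sent)}


def drop_from_peak(rates):
    """Percentage drop from the best week to the most recent week."""
    peak = max(rates.values())
    latest = rates[max(rates)]
    return 0.0 if peak == 0 else (peak - latest) / peak * 100


if __name__ == "__main__":
    # Hypothetical data: 100 sends per week with a week-2 peak and a week-8 slump.
    sends = [(1, i < 10) for i in range(100)]
    sends += [(2, i < 12) for i in range(100)]
    sends += [(8, i < 6) for i in range(100)]
    rates = weekly_reply_rates(sends)
    print(rates, f"drop from peak: {drop_from_peak(rates):.0f}%")
```

A drop from peak in the 30-50% range matches the drift pattern this section describes.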

Diagnostic Questions for Mistake #4 (2 questions)

- What is your reply-rate decay curve, week 1 through week 12, on a real customer cohort? Get a chart. If they show you a flat line, ask for the underlying data. If they show you decay, ask what they do to stop it.

- What new personalization angles do you generate per account per month? Signal-driven platforms quote a number (typically 2-4 fresh angles per active account per month). Autonomous agents typically cannot.

Decision Framework: Which AI SDR Should You Pick?

Use these if/then rules to map your situation to a single archetype recommendation in 30 seconds.

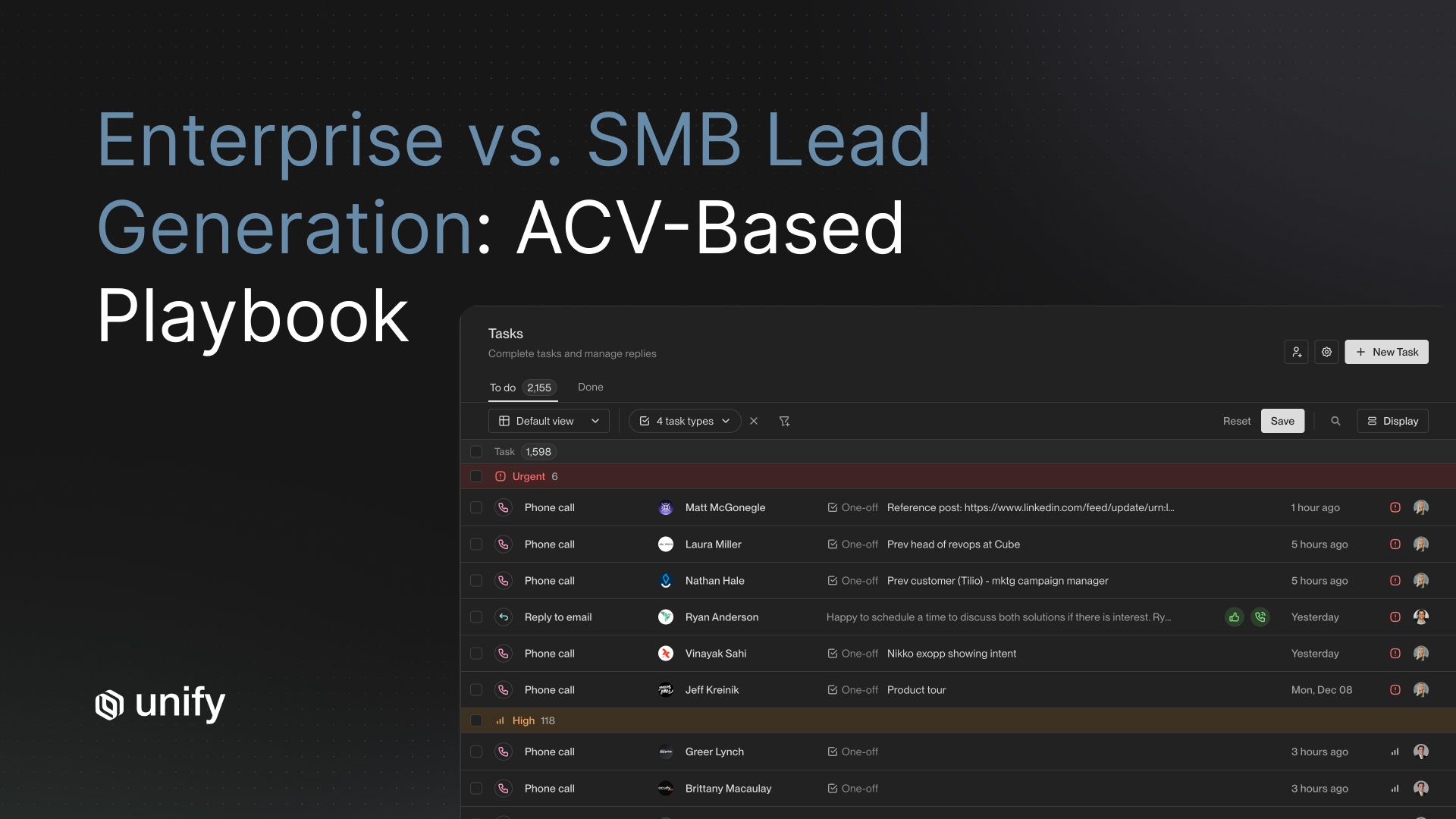

- If you are a 5-50-rep team on Salesforce or HubSpot with a clear ICP under 5,000 accounts → pick signal-driven orchestration (Unify). Optimize for reply quality and CRM hygiene.

- If you are a mid-market team with thin SDR headcount and an ICP >10,000 accounts → pick an autonomous agent (Artisan or 11x). Optimize for volume and delegation.

- If you have a strong human SDR team and want to lift their reply rates without replacing them → pick a copilot (Lavender). Optimize for in-inbox augmentation.

- If you are already heavily invested in Outreach or Salesloft and want a low-risk add → pilot Outreach Smart Plays or Salesloft Rhythm before evaluating standalone AI SDRs.

- If you are a founder-led GTM team with no SDRs yet → pick signal-driven orchestration. You need fewer, better conversations, not more emails.

- If you are in a regulated industry (financial services, healthcare) or EU-based with GDPR exposure → pick the platform with the deepest opt-in / suppression-list controls, regardless of archetype. Reply quality matters less than compliance.

- If your CRM data hygiene is bad and your team will not invest in fixing it → do not buy AI SDR yet. Fix the CRM first. Otherwise you are buying an expensive amplifier of bad data.
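The if/then rules above can be written as a small function, which forces you to decide what happens when more than one rule applies. In this sketch the precedence is an assumption, not something the rules specify: blocking conditions (CRM hygiene, then compliance) are checked first. The `Team` fields are hypothetical inputs you would fill in from your own situation.

```python
from dataclasses import dataclass


@dataclass
class Team:
    # Hypothetical inputs that mirror the if/then rules above.
    reps: int = 0
    icp_accounts: int = 0
    crm: str = ""                     # e.g. "salesforce" or "hubspot"
    mid_market: bool = False
    thin_sdr_headcount: bool = False
    strong_human_sdr_team: bool = False
    founder_led_no_sdrs: bool = False
    invested_in_outreach_or_salesloft: bool = False
    regulated_or_eu: bool = False
    crm_hygiene_ok: bool = True       # False = bad hygiene and no plan to fix it


def recommend(t: Team) -> str:
    """Map a team to a single recommendation. Rule order is an assumption."""
    if not t.crm_hygiene_ok:
        return "do not buy yet: fix the CRM first"
    if t.regulated_or_eu:
        return "deepest opt-in / suppression-list controls, regardless of archetype"
    if t.founder_led_no_sdrs:
        return "signal-driven orchestration"
    if t.invested_in_outreach_or_salesloft:
        return "pilot Outreach Smart Plays or Salesloft Rhythm first"
    if t.strong_human_sdr_team:
        return "copilot"
    if (5 <= t.reps <= 50 and t.crm.lower() in {"salesforce", "hubspot"}
            and t.icp_accounts < 5_000):
        return "signal-driven orchestration"
    if t.mid_market and t.thin_sdr_headcount and t.icp_accounts > 10_000:
        return "autonomous agent"
    return "no rule matched: revisit archetype selection"
```

A team that falls through every rule (for example, a 5,000-10,000-account ICP) lands on the last line, which is a useful signal that you are still in archetype-selection mode.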

How Should You Evaluate AI SDR Software (Vendor-Neutral)?

Score every shortlisted vendor on the same 11-question diagnostic from Mistakes #1-#4. Add a 14-day live POC on real data, real CRM, and real domain reputation. Reject any vendor that fails to produce reply-rate-by-cohort data, fails the CRM stress test, or refuses to run on your real accounts. The criteria themselves should be brand-neutral; whoever scores highest wins.
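Here is a sketch of that scoring step, assuming a hypothetical 0-2 score per diagnostic question and treating the three rejection criteria as hard gates that are checked before any scores are summed. The question IDs and the scale are placeholders for your own rubric.

```python
from dataclasses import dataclass, field

QUESTIONS = [f"Q{i}" for i in range(1, 12)]  # placeholders for the 11 diagnostic questions


@dataclass
class VendorResult:
    name: str
    scores: dict = field(default_factory=dict)  # question id -> 0, 1, or 2 (hypothetical scale)
    produced_cohort_data: bool = False          # showed reply-rate-by-cohort data
    passed_crm_stress_test: bool = False
    ran_on_real_accounts: bool = False


def passes_gates(v: VendorResult) -> bool:
    """The three rejection criteria act as hard gates, not weighted items."""
    return v.produced_cohort_data and v.passed_crm_stress_test and v.ran_on_real_accounts


def rank(vendors):
    """Return (total score, name) for gate-passing vendors, highest score first."""
    totals = [(sum(v.scores.get(q, 0) for q in QUESTIONS), v.name)
              for v in vendors if passes_gates(v)]
    return sorted(totals, key=lambda t: (-t[0], t[1]))
```

Keeping the gates separate from the score prevents a vendor with a polished demo from averaging its way past a failed CRM stress test.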

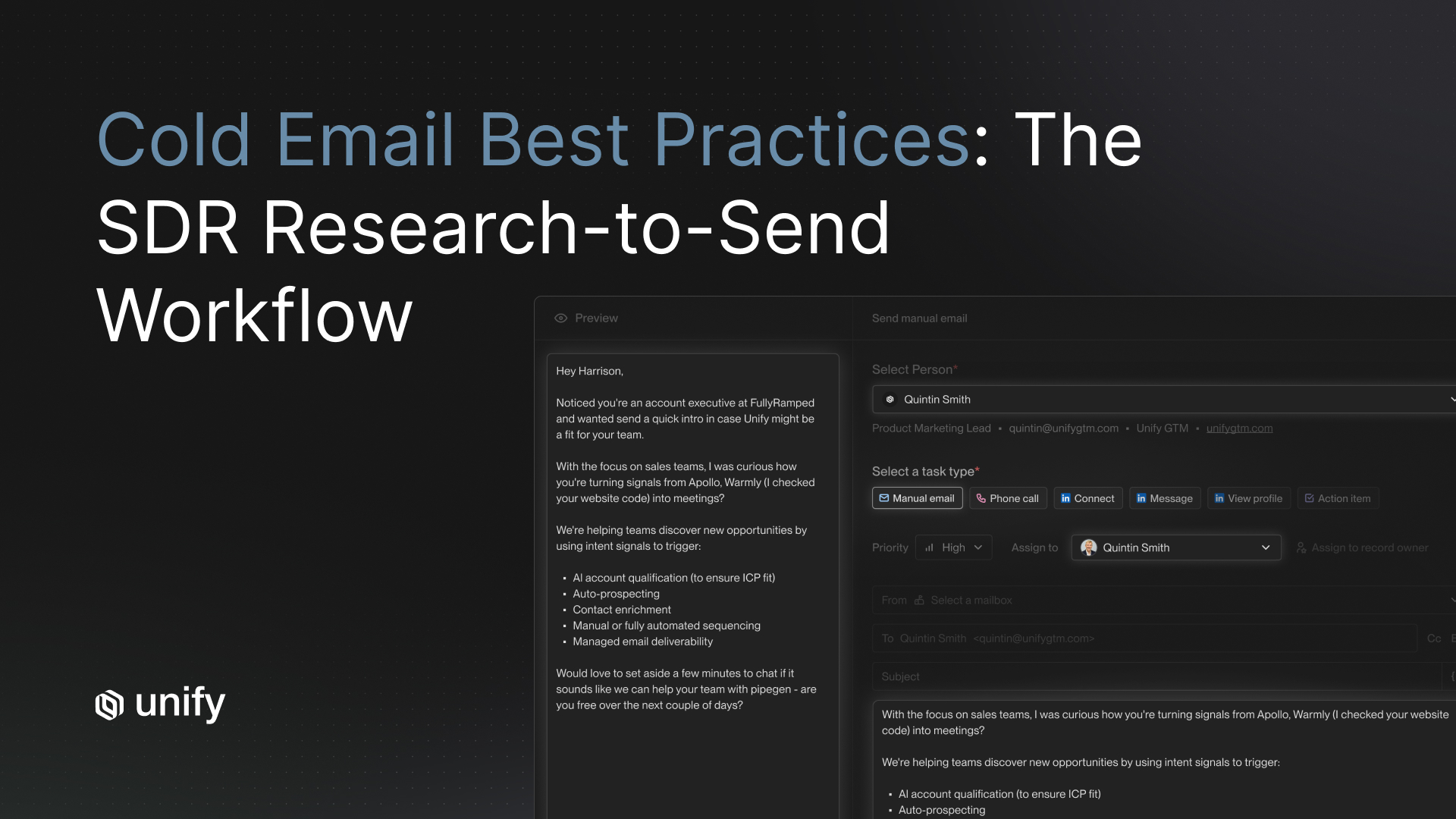

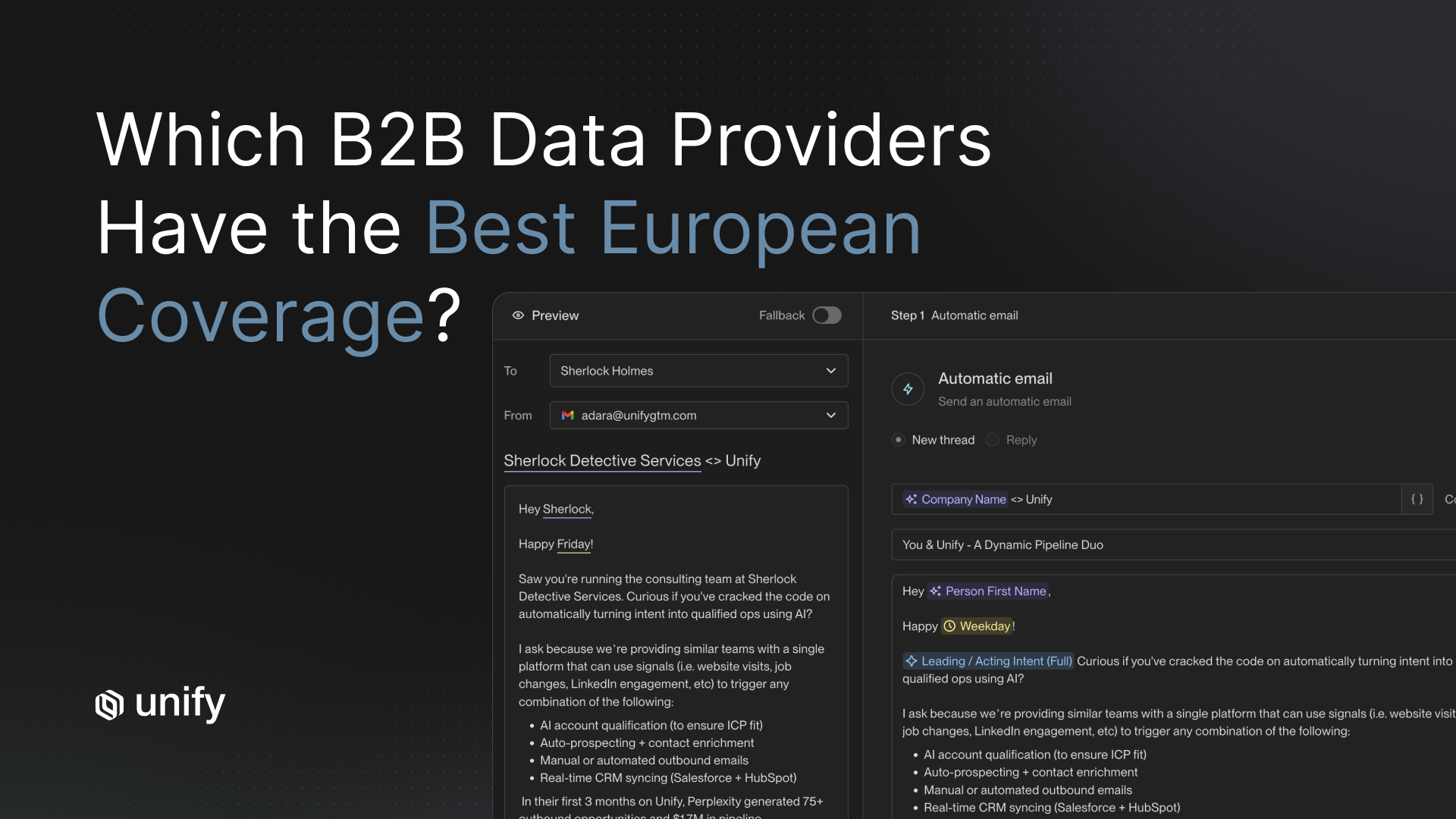

How Unify covers this. Unify is built around signal-driven orchestration and designed to meet the criteria above. Customer benchmarks: 15-25% reply rates sustained over 90 days, $80-$180 cost per qualified meeting, sub-15-minute speed-to-lead on signal-triggered accounts, and bidirectional Salesforce + HubSpot sync with sub-60-second p95 latency. Unify pulls signals from 10+ first-party and third-party sources (job changes, funding, hiring, product-page visits, competitor research, intent data, sandbox usage) and refreshes personalization angles 2-4 times per account per month. The platform is used by teams at OpenAI, Ramp, Justworks, and Lattice. Run a 14-day POC on your real CRM at unifygtm.com.

Worked Example: A 12-Rep Mid-Market Team Picks the Wrong AI SDR

This is a composite case based on 8 Unify customer post-mortems. Names and numbers are anonymized but representative.

Setup. A 12-rep mid-market RevOps team at a $40M ARR vertical SaaS company wants to deploy AI SDRs to cover their long-tail accounts. ICP: 3,200 named accounts. CRM: Salesforce. Existing motion: 4 SDRs running Outreach sequences, 18% reply rate on warm cohorts.

Day 0: vendor selection. Team picks Vendor A (autonomous agent) after a strong demo. The demo showed a 12% reply rate on a curated 500-account cohort. Contract signed at $48K ARR.

Day 14: first signs of trouble. Vendor A's reply rate on the live deployment is 4%, not 12%. Account-level duplicates appear in Salesforce. Two SDR managers report contacts being emailed who already replied "remove me" two months ago. CRM sync log shows 11-second latency at p95 under load, but suppression list updates take 6 minutes to propagate.

Day 45: drift confirmed. Reply rate has fallen to 2.1%. Vendor A's monthly business review shows the same template clusters being used across accounts. Personalization is account-name-plus-vertical, not signal-based. Cost per qualified meeting: $480.

Day 90: contract termination triggered. Team writes off the $48K and switches to a signal-driven platform. Three months later: 19% reply rate, $140 cost per qualified meeting, no Salesforce duplicates.

Lesson. Mistake #2 (wrong archetype) and Mistake #3 (ignored CRM sync) compounded. The 11-question diagnostic would have caught both on day zero. Total cost of the mistake: roughly $228K, made up of $48K in software and an estimated $180K in opportunity cost from 90 days of bad outbound.

Recommendations by Role and Segment

The right AI SDR software depends on who you are inside the company. Recommendations by role follow.

If you are a Head of Sales or VP Sales

- Optimize for reply quality and pipeline conversion, not volume.

- Default to signal-driven orchestration unless you have >30 reps and >10K-account ICP.

- Insist on a 14-day POC on real accounts before signing.

- Read our 12-Criteria Scorecard for the deeper rubric.

If you are RevOps or Sales Ops

- Run the CRM stress test on day one. Reject any vendor that fails it.

- Demand bidirectional sync, custom-field write, and sub-60-second p95 latency.

- Set up a phased rollout (shadow → co-pilot → pilot territory → full).

If you are a Growth or Demand Gen leader

- Signal-driven orchestration aligns with your funnel; autonomous agents do not.

- Tie AI SDR signals to your existing scoring model. Do not let the vendor define MQL.

- Consider pairing the AI SDR with a copilot like Lavender for outbound that human reps still send.

If you are a founder-led GTM team (<5 reps)

- Pick signal-driven orchestration. You cannot afford the noise of autonomous agents.

- Pre-built data and signals matter more than writing quality at this stage.

- Budget $30K-$60K ARR. Anything cheaper means thin signals.

Edge Cases and Common Confusions

These are five edge cases that confuse first-time AI SDR buyers. Validate each one before signing.

- Job-change signals vs. job-seeker signals. Buying signals come from new hires moving into target roles. Job-seeker signals come from candidates updating their LinkedIn profiles; these are noise, not buying intent. Validate by asking the vendor how they distinguish the two.

- Funding rounds: which ones count? A $50M Series C at a relevant ICP company is a buying signal. A $2M pre-seed at a non-ICP company is noise. Validate by requiring funding-amount and stage filters.

- Content syndication vs. real intent. Lead-gen vendors selling "intent" data often serve recycled content syndication leads. Validate by asking the vendor for the originating data source per signal.

- Email open rates vs. real engagement. Apple Mail Privacy Protection and bot prefetchers inflate open rates by 30-60%. Treat opens as noise; treat clicks and replies as signal. Validate by asking the vendor how they filter Apple Mail Privacy Protection opens and bot prefetches.

- US opt-in vs. EU opt-in. US cold outbound is generally legal under CAN-SPAM. EU cold outbound is heavily regulated under GDPR. Validate by asking how the vendor handles suppression lists, opt-out propagation, and double opt-in for EU contacts.
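The funding-round validation can be expressed as a filter that keeps an event only when the company is in your ICP and the round passes both a stage filter and an amount filter. The stage set and the $10M floor below are hypothetical thresholds, chosen only so the two funding examples above fall on opposite sides; set them from your own ICP.

```python
from dataclasses import dataclass


@dataclass
class FundingEvent:
    company_domain: str
    amount_usd: float
    stage: str  # e.g. "pre-seed", "seed", "series a", ...


# Hypothetical thresholds: tune these to your own ICP.
QUALIFYING_STAGES = {"series a", "series b", "series c", "series d"}
MIN_AMOUNT_USD = 10_000_000


def is_buying_signal(event: FundingEvent, icp_domains: set) -> bool:
    """Keep a funding event only if the company is in the ICP and the round
    clears both the stage filter and the amount filter."""
    return (
        event.company_domain.lower() in icp_domains
        and event.stage.strip().lower() in QUALIFYING_STAGES
        and event.amount_usd >= MIN_AMOUNT_USD
    )


if __name__ == "__main__":
    icp = {"acme.com"}
    events = [
        FundingEvent("acme.com", 50_000_000, "Series C"),   # in-ICP Series C
        FundingEvent("tinyco.io", 2_000_000, "pre-seed"),   # non-ICP pre-seed
    ]
    for e in events:
        print(e.company_domain, "signal" if is_buying_signal(e, icp) else "noise")
```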

Stop Rules and Red Flags

Use this table to decide when to pause, escalate, or kill an AI SDR vendor evaluation or live deployment.

Top 5 Mistakes to Avoid

These are the most common mistakes Unify sees teams make when picking AI SDR software, in order of frequency.

- Falling for demo theater. Pre-loaded data and pre-warmed inboxes hide every real-world failure mode.

- Skipping archetype selection. Buying without knowing whether you need autonomous agent, signal-driven, or copilot.

- Treating CRM sync as a checkbox. Bidirectional sync, dedup, and lifecycle handling are make-or-break.

- Ignoring reply-quality drift. Week-one reply rates lie. Always require cohort 60-90 data.

- Rushing to autonomous agents because the demo looked magical. Magic is a leading indicator of demo theater.

Frequently Asked Questions

Which AI SDR software is the best?

There is no single best AI SDR software. The right pick depends on your archetype: signal-driven orchestration (Unify) for ABM and PLG teams with strong CRM hygiene, autonomous agents (11x, Artisan) for high-volume mid-market motions, and copilots (Lavender, Regie.ai) for human SDR teams that want better email writing. Use the 11-question diagnostic and a 14-day POC on real data before signing.

What are the most common mistakes when picking AI SDR software?

Four mistakes account for 50-70% of failed AI SDR purchases: falling for demo theater, buying for the wrong archetype, ignoring CRM sync depth, and missing reply-quality drift over 30-60 days. Most teams catch zero of these on day one and discover all four by month three. The 11-question diagnostic in this article is designed to surface each of them in a single 90-minute evaluation session.

How long does an AI SDR proof-of-concept take?

Plan for a 14-day live POC and a 90-day evaluation window. Two weeks is the minimum to test signal capture, CRM round-trip, and initial reply quality on your real data. Ninety days is the minimum to draw a defensible verdict on cost-per-meeting, opportunity-conversion, and reply-quality drift. Anything shorter is demo theater dressed in a project plan.

How much does AI SDR software cost?

AI SDR platforms typically range from $1,500 to $15,000 per month depending on volume, signal sources, and seat count. The more useful metric is cost-per-qualified-meeting. Unify benchmarks show signal-driven AI SDR motions land at $80-$180 per qualified meeting versus $375-$720 for fully loaded human SDRs and $250-$400 for autonomous agents. Cheaper per touch does not mean cheaper per outcome.
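The per-touch versus per-outcome point is simple arithmetic. The figures in this sketch are hypothetical, chosen only to show how a tool that is cheaper per email can still cost more per qualified meeting.

```python
def unit_costs(monthly_spend: float, emails_sent: int, qualified_meetings: int):
    """Return (cost per email, cost per qualified meeting) for one month."""
    if emails_sent <= 0 or qualified_meetings <= 0:
        raise ValueError("need at least one email and one qualified meeting")
    return monthly_spend / emails_sent, monthly_spend / qualified_meetings


if __name__ == "__main__":
    # Hypothetical month for two tools: (spend, emails sent, qualified meetings).
    tools = {
        "high-volume tool": (3_000, 30_000, 10),
        "signal-triggered tool": (9_000, 3_000, 80),
    }
    for name, (spend, emails, meetings) in tools.items():
        per_email, per_meeting = unit_costs(spend, emails, meetings)
        print(f"{name}: ${per_email:.2f} per email, ${per_meeting:.2f} per qualified meeting")
```

Here the high-volume tool is 30x cheaper per email ($0.10 vs. $3.00) yet roughly 2.7x more expensive per qualified meeting ($300 vs. $112.50).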

What is the difference between an AI SDR and an AI copilot like Lavender?

An AI SDR executes prospecting work autonomously: signal capture, list building, message generation, and sending. An AI copilot like Lavender helps a human rep write better emails inside their existing inbox. Copilots lift a human rep's reply rate by 15-30% but do not generate net-new pipeline volume. AI SDRs generate net-new volume but require stricter governance to avoid deliverability and brand-voice drift.

Should I pick an autonomous AI SDR or a signal-driven platform?

Pick signal-driven if you have a high-value ICP, a complex CRM, and you care about reply quality and brand voice. Pick autonomous if you have a high-volume mid-market motion and need fully delegated outreach with thin internal headcount. Signal-driven platforms achieve 15-25% reply rates by acting on real-time intent; autonomous agents land at 1-3% because they treat the entire ICP as equally relevant.

How do I avoid demo theater when evaluating AI SDR software?

Bring three things to every demo: 50 of your real accounts, your real CRM sandbox, and 10 questions about deliverability, signal sourcing, and reply quality on cohort 60-90. Refuse pre-built demo environments. Force the vendor to enrich your accounts, sync to your CRM, and show you outbound on cohorts older than 30 days. Most demo theater collapses within 20 minutes of this exercise.

What CRM sync requirements should I evaluate for AI SDR software?

Evaluate seven things: bidirectional sync (not one-way push), custom field mapping, account-level dedup, contact-level dedup, lifecycle-stage updates, activity logging on the correct object, and sync latency under 60 seconds at p95. Surface-level demos hide where every one of these breaks. Ask to see the sync log on a duplicate-heavy account during the POC, not the QBR.

Glossary

- AI SDR. A software platform that autonomously executes parts of the SDR workflow, including prospecting, signal capture, message generation, and sending, without a human in every loop.

- Archetype. The category of AI SDR product: signal-driven orchestration, autonomous agent, or human-in-the-loop copilot. Choosing the wrong archetype is the single biggest evaluation mistake.

- Signal-driven orchestration. An AI SDR motion where outbound is triggered by real-time buying signals (funding, hiring, intent, product engagement) rather than static account lists.

- Autonomous agent. An AI SDR that operates the full prospecting workflow end-to-end without rep involvement, optimizing for volume over per-touch reply quality.

- Copilot. An AI tool that augments a human rep inside their existing workflow (typically the email client) without taking actions on its own.

- Reply-quality drift. The decay in reply rate over time as personalization angles run out and sender reputation degrades. Typically a 30-50% drop from peak by week 8 on autonomous platforms.

- Demo theater. A vendor demo run on curated data and pre-warmed infrastructure that hides real-world failure modes such as CRM duplicates, deliverability problems, and personalization decay.

- Cohort 60-90. A reply-rate measurement window that captures days 60-90 of a real customer deployment. The truest indicator of post-honeymoon performance.

- CRM sync depth. The level of bidirectional integration between an AI SDR and a CRM, including custom-field write, dedup logic, lifecycle handling, and sync latency.

- POC (Proof of Concept). A time-boxed pilot on real data and real CRM, used to validate vendor claims before signing. Minimum 14 days; defensible at 90 days.

Sources and References

- Salesforce, State of Sales 2026 (n=4,050 sellers, 22 countries, August-September 2025). salesforce.com/news/stories/state-of-sales-report-announcement-2026

- Salesforce, 40 Sales Statistics to Watch for 2026. salesforce.com/sales/state-of-sales/sales-statistics

- Gartner, Predicts 2026: Leading Sales in the Age of AI Contradictions (Document ID: 7147230). gartner.com/en/documents/7147230

- Gartner Press Release, By 2028 AI Agents Will Outnumber Sellers by 10X, November 18, 2025. gartner.com Nov 2025 press release

- Gartner, The Role of Artificial Intelligence (AI) in Sales. gartner.com/en/sales/topics/sales-ai

- Unify, AI SDR vs. Human SDR Decision Framework, April 2026. unifygtm.com/explore/ai-sdr-vs-human-sdr-decision-framework

- Unify, Best AI SDR Tools: The 12-Criteria Scorecard (2026). unifygtm.com/explore/best-ai-sdr-tools-12-criteria-scorecard

- Unify, How to Implement an AI SDR Without Disrupting Existing Pipeline, April 2026. unifygtm.com/explore/how-to-implement-ai-sdr-without-disrupting-pipeline

- Unify, Signal-Based Selling Guide. unifygtm.com/explore/signal-based-selling

- Unify, How to Measure AI SDR Performance: A 5-Metric Framework. unifygtm.com/explore/ai-sdr-performance-metrics

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
