TL;DR: Most AI sales automation procurement processes fail because buyers evaluate features instead of infrastructure. This RFP gives you 47 specific questions to send to any vendor, organized across six domains: model reliability, deliverability, CRM sync depth, security and compliance, ramp guarantees, and exit terms. Use it as a literal questionnaire to forward to finance, security, and legal. Platforms that cannot answer these questions clearly should not make your shortlist.

Procurement teams evaluating AI sales automation in 2026 face a problem that did not exist two years ago: the vendor landscape looks credible on the surface, and every demo produces a good meeting. The real variation shows up after you sign.

Gartner predicts that by 2028, AI agents will outnumber human sellers by 10 to 1, yet fewer than 40% of sellers will report that those agents improved their productivity. In practice, that gap between deployment and value often traces back to evaluation failures at the procurement stage rather than product failures at runtime.

This RFP is different from a standard feature checklist. It is built for conversion-stage buyers who need material they can hand to security, finance, and legal teams. Every question targets a specific failure mode that has caused real companies to lose pipeline, blow domain reputation, or get locked into a contract they cannot exit.

The 47 questions below are grouped into six domains. For each domain, you will find context on why the questions matter and what strong versus weak answers look like. Copy the question list directly into your vendor questionnaire doc and require written responses with supporting documentation.

How Should You Evaluate AI Model Reliability Before Buying?

Model reliability means the platform produces consistent, on-brand output at high volume and across model updates, without requiring you to reconfigure prompts every quarter. This is the domain with the largest gap between what vendors claim in demos and what enterprise buyers actually experience after six months of production use.

The core risk is model output drift. Drift happens when a vendor updates their underlying LLM, fine-tuning, or prompt infrastructure and your output quality changes without any notification. Independent reviewers testing Artisan in production note that "product changes between updates can alter output quality unpredictably, making process standardization difficult." This is a model governance failure, not a prompt failure. You own the brand risk either way.

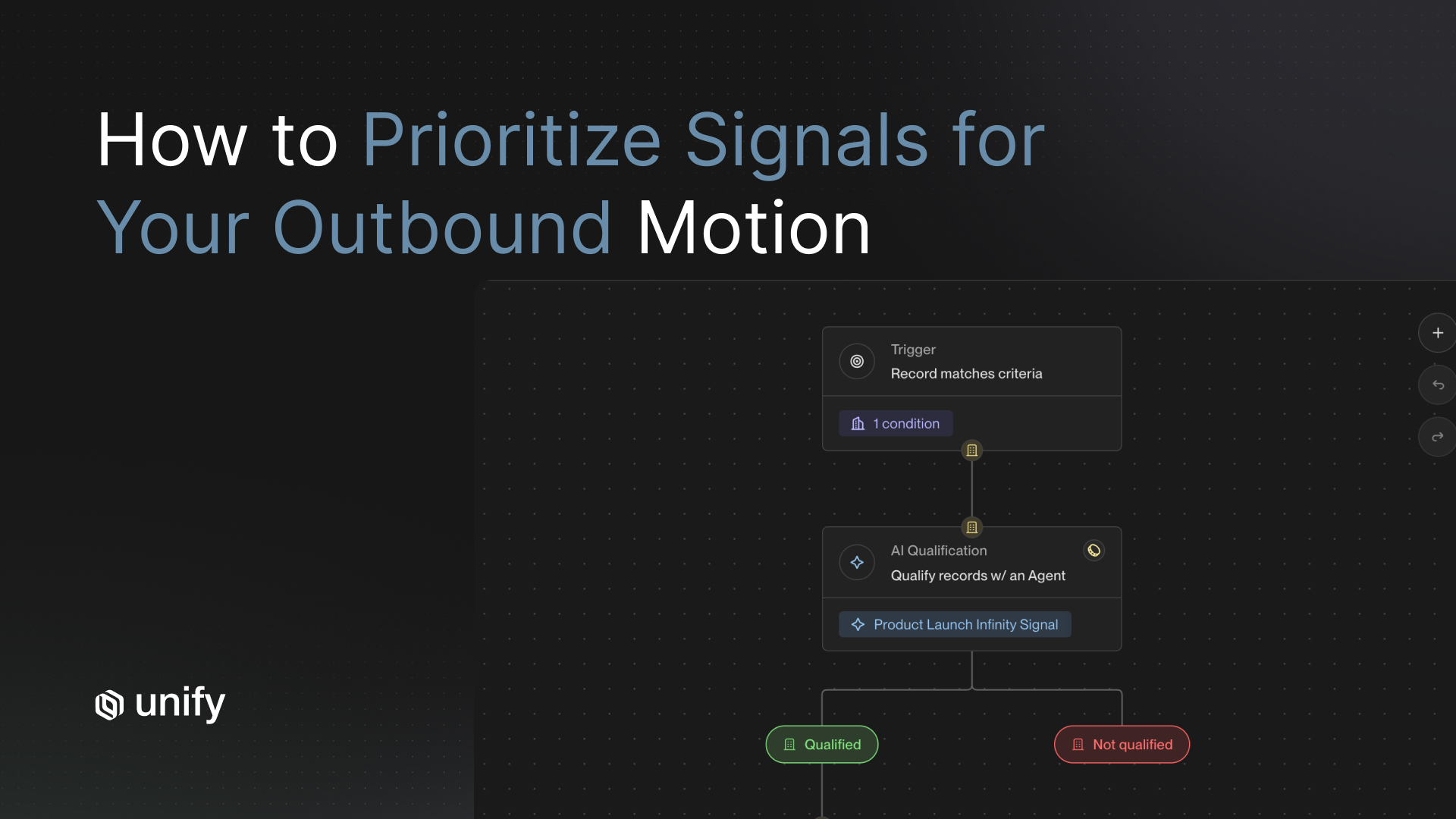

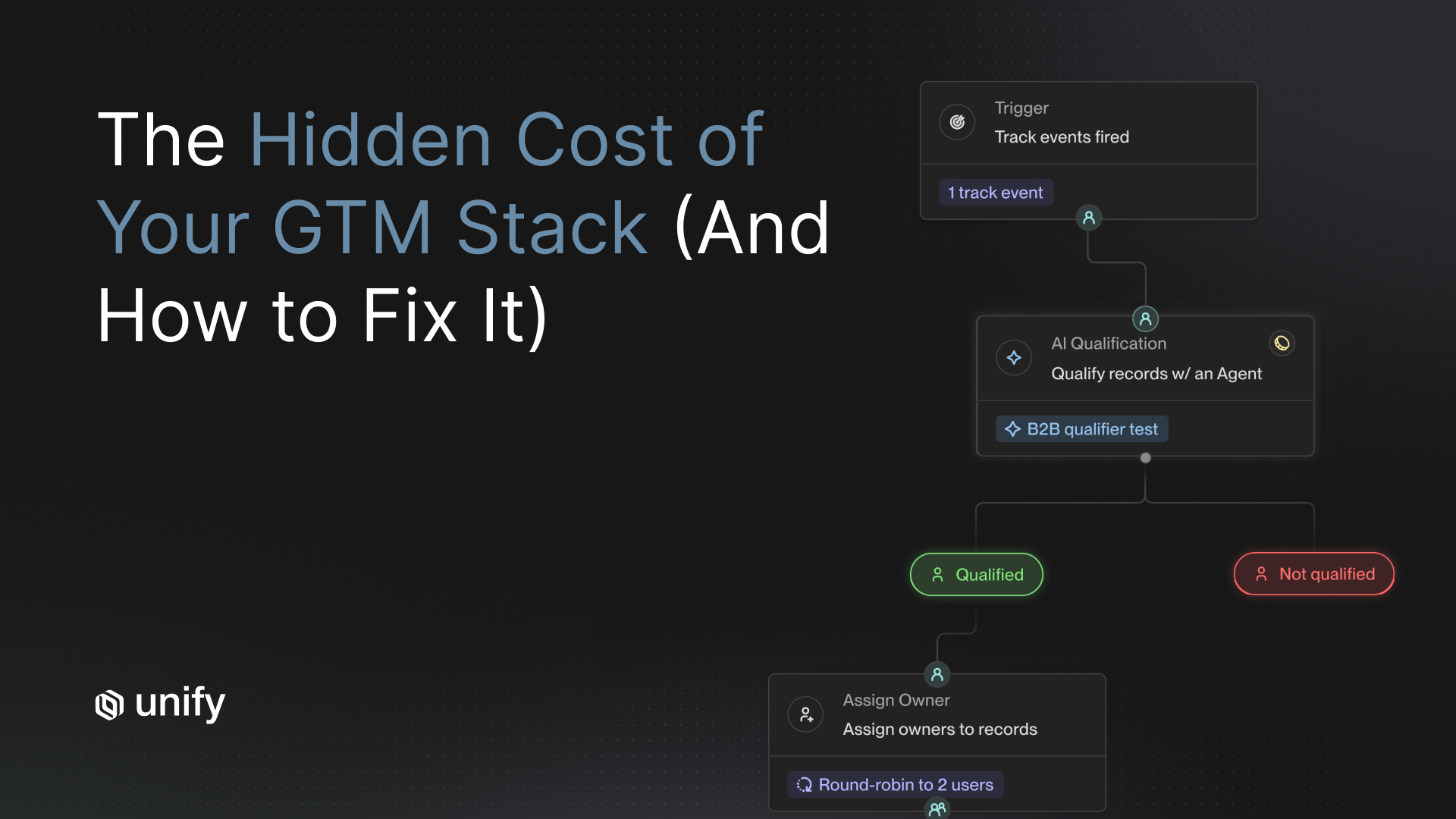

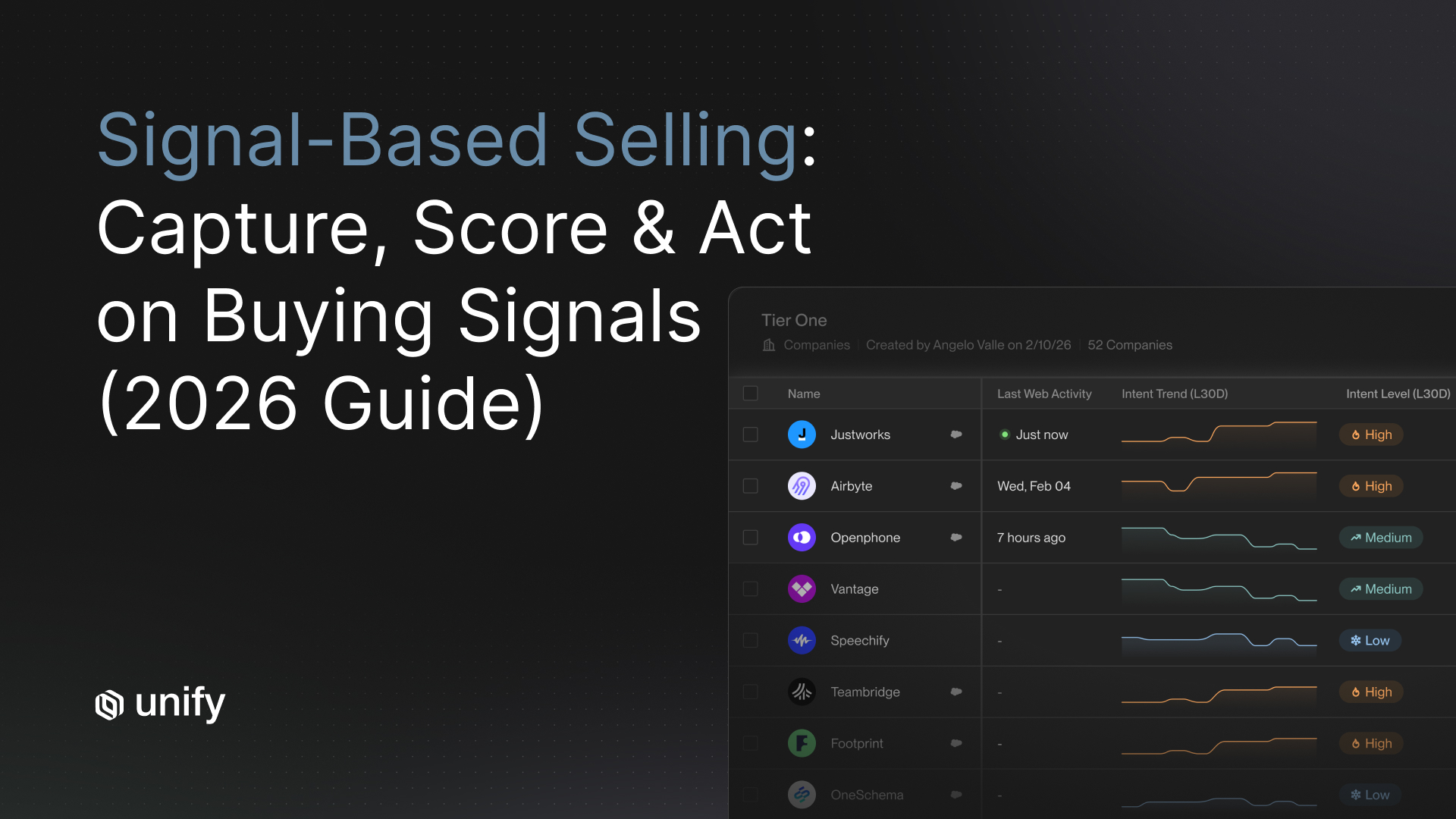

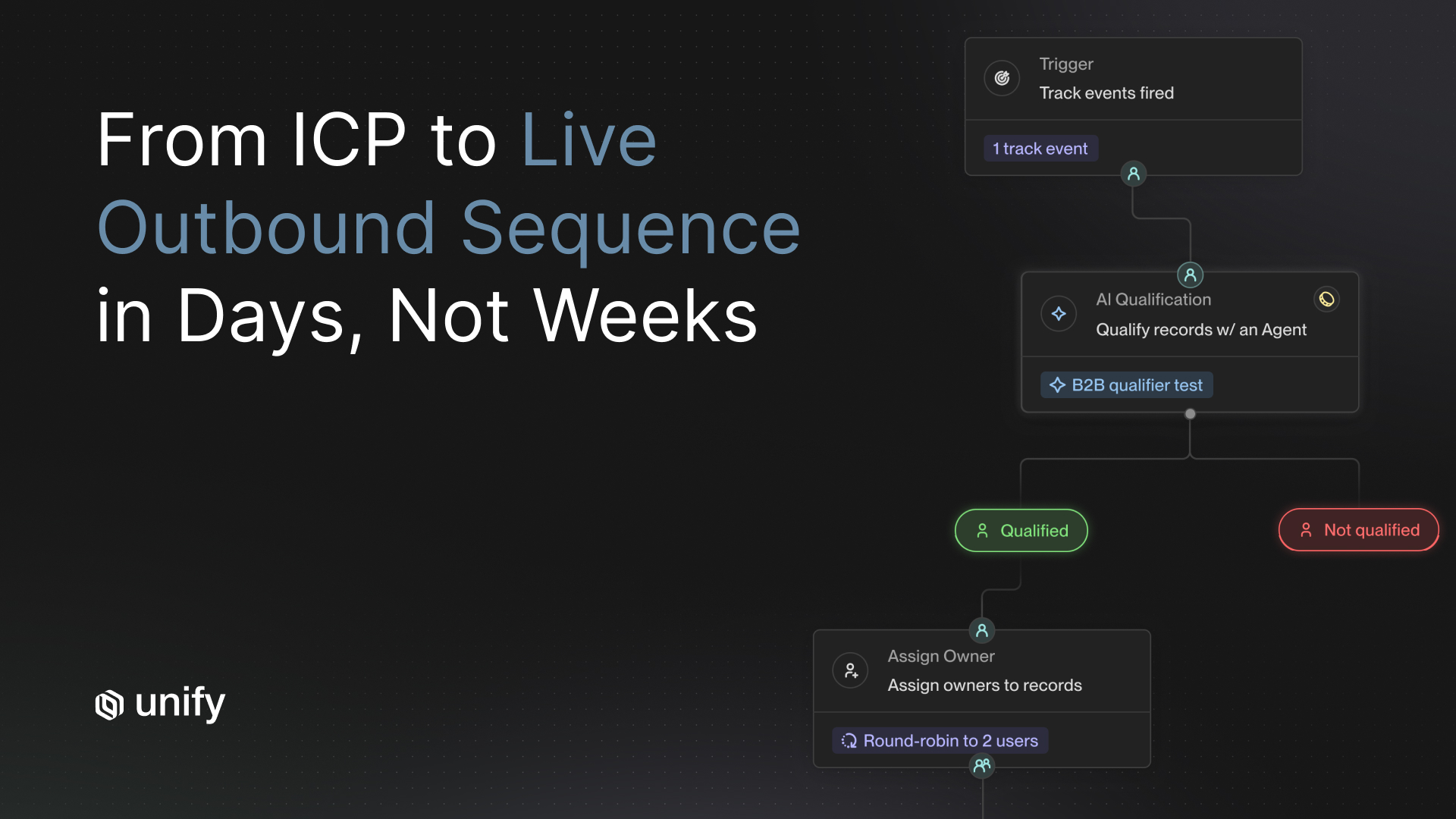

Signal-triggered sequences, where outreach fires because a specific buying behavior was detected, reduce drift risk because the trigger logic is deterministic even when the generation layer varies. Platforms that rely purely on timed sequences have no backstop if model quality degrades between updates.

- What is your model versioning policy? Can we pin to a specific model version, and for how long?

- How do you notify customers before deploying a new model version that affects output quality?

- Do you maintain the prior model version as a rollback option? What is the rollback process and timeline?

- What regression benchmark suite do you run before each model update? Can you share benchmark results from the last three releases?

- How do you detect and alert on output drift within a customer's active sequences?

- What is your SLA for responding to reported output quality degradation?

- Do you use third-party LLM providers (OpenAI, Anthropic, etc.) or proprietary models? If third-party, how do model changes from those providers affect your output guarantees?

- Can you provide before-and-after output comparisons from a recent model update, using real sequence examples?

Strong answer: The vendor has a named model versioning policy, maintains prior versions for at least 90 days, runs documented regression benchmarks, and provides advance notice of at least 14 days before production changes. They can share benchmark data on request without requiring an NDA escalation.

Weak answer: The vendor says output quality is "continuously improving" without a versioning or rollback framework. This means every update is an uncontrolled change to your live sequences.
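To make the drift-detection question concrete, here is a minimal sketch of the kind of check a vendor (or your own team) could run. It assumes every generated email receives a numeric quality score from some rubric. The scores, the `drift_alert` name, and the threshold of 2.0 are all hypothetical, and this is not any vendor's actual method.

```python
from statistics import mean, stdev

def drift_alert(baseline_scores, recent_scores, z_threshold=2.0):
    """Flag drift when the recent mean score falls well below the baseline mean.

    Scores are hypothetical per-email quality ratings (for example 0-10 from a rubric).
    Returns (alert, z), where z is None if the baseline has no spread.
    """
    base_mean = mean(baseline_scores)
    base_sd = stdev(baseline_scores)  # needs at least two baseline scores
    recent_mean = mean(recent_scores)
    if base_sd == 0:
        # No variation in the baseline: any drop counts as drift.
        return recent_mean < base_mean, None
    # Standard error of the recent-window mean, using the baseline spread.
    std_err = base_sd / len(recent_scores) ** 0.5
    z = (recent_mean - base_mean) / std_err
    return z < -z_threshold, round(z, 2)

baseline = [8, 7, 9, 8, 8, 7, 9, 8]   # scores before a model update
after_update = [6, 7, 6, 7]           # scores after a model update
print(drift_alert(baseline, after_update))
```

A vendor with a real regression suite will use something richer than a single score, but the shape of the answer should be similar: a pinned baseline, a comparison window, and an explicit alert threshold.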

What Deliverability Questions Should You Ask an AI Outreach Platform?

Deliverability infrastructure determines whether your emails reach inboxes or spam folders. According to Validity's 2026 Email Deliverability Benchmark Report, the global average inbox placement rate is 83%. Microsoft's average drops to 75.6%, with spam rates exceeding 14%. For enterprise outbound programs, deliverability at scale is an infrastructure question, not a settings question.

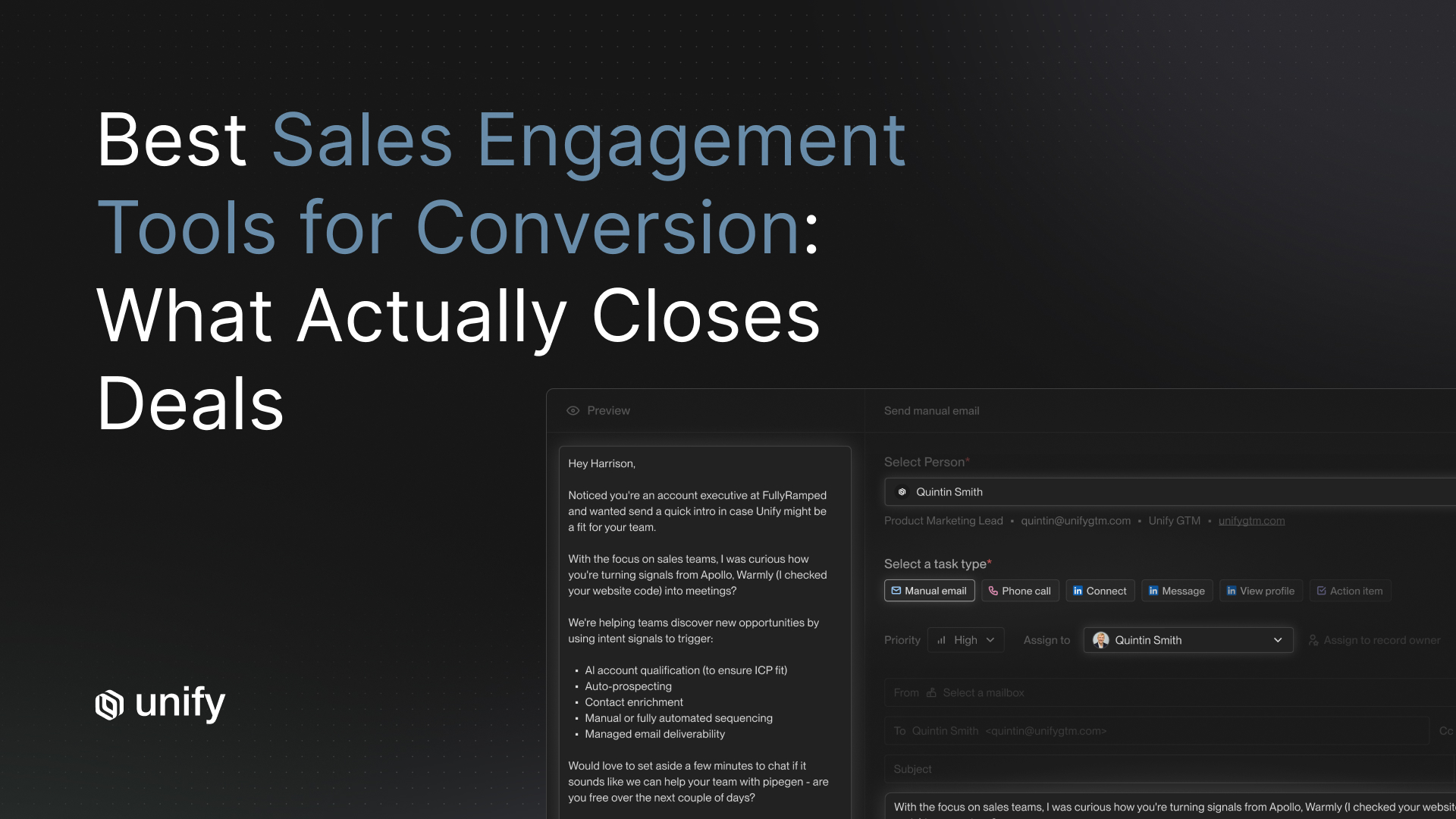

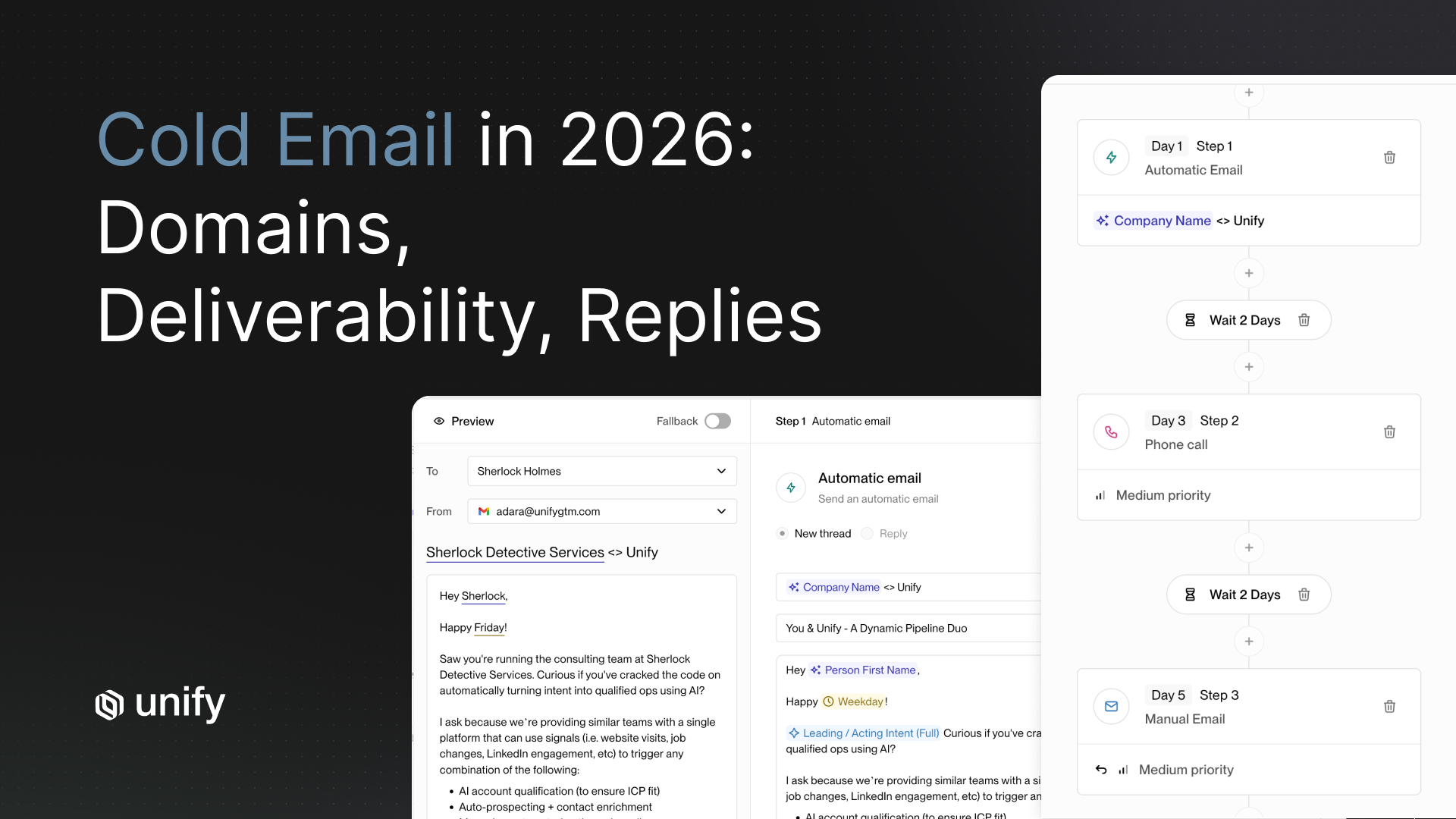

The risk with autonomous AI SDR platforms is volume-first sending behavior. When a platform prioritizes output volume, it tends to send at rates that damage domain reputation before your team notices the decline. Signal-based outreach platforms avoid this by design because triggers are tied to specific buying events rather than list exhaustion. Unify's internal data shows signal-triggered sequences produce 2x to 4x higher positive reply rates than timed sequences, which directly improves sender reputation over time because high engagement signals suppress spam classification by mailbox providers.
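The "before your team notices" risk is easier to reason about with a guardrail in front of you. The sketch below assumes a warning level at a 0.1% complaint rate and an automatic pause at 0.3%. Those thresholds are illustrative, loosely modeled on published Gmail bulk-sender guidance, and the function name is hypothetical. Ask each vendor for their actual values.

```python
def sequence_action(delivered, complaints, warn_rate=0.001, pause_rate=0.003):
    """Return 'ok', 'warn', or 'pause' based on the spam complaint rate.

    Thresholds are illustrative assumptions, not a vendor's documented policy.
    """
    if delivered <= 0:
        return "ok"  # nothing delivered yet, so nothing to evaluate
    rate = complaints / delivered
    if rate >= pause_rate:
        return "pause"
    if rate >= warn_rate:
        return "warn"
    return "ok"

for complaints in (5, 15, 40):
    print(complaints, sequence_action(10_000, complaints))
```

The design point to probe is whether the pause is automatic or depends on someone reading a dashboard.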

For a detailed comparison of how specific platforms handle inbox placement, see the email deliverability comparison of sales engagement platforms.

- Do you manage domain warmup internally, or is the customer responsible for their own warmup infrastructure?

- What is your standard inbox placement rate across active customer domains? Can you provide audited placement data broken down by mailbox provider?

- How do you monitor and respond to spam complaint rate spikes? What is your threshold for automatically pausing a sequence?

- Do you support SPF, DKIM, and DMARC authentication natively, or do these require third-party configuration?

- What is your shared versus dedicated sending infrastructure model? Are customer domains isolated from each other's sender reputation?

- How do you handle bounce management, including hard bounces, soft bounces, and role-address filtering before send?

- What is your policy on sending to unverified addresses? Do you pre-validate contact data before sequence enrollment?

- Can you show inbox placement rate data broken down by mailbox provider (Google, Microsoft, others) from the last 90 days?

- How does your platform comply with the one-click unsubscribe rules in the Gmail and Yahoo bulk sender requirements introduced in 2024?

Strong answer: The vendor manages warmup infrastructure, provides audited inbox placement rates by mailbox provider, maintains dedicated sending infrastructure per customer, and runs pre-send address validation. They can show historical complaint rate data from real customer accounts, not aggregated marketing figures.

Weak answer: The vendor uses a shared send pool and points you to a third-party tool for domain warmup. Shared infrastructure means another customer's volume behavior directly affects your sender reputation, with no isolation guarantee.
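For the one-click unsubscribe question, it helps to know what a compliant message looks like at the header level. The sketch below uses Python's standard `email` library to set the two headers defined by RFC 8058. The addresses, the unsubscribe URL, and the `build_message` helper are placeholders for illustration.

```python
from email.message import EmailMessage

def build_message(sender, recipient, subject, body, unsubscribe_url):
    """Build an email carrying RFC 8058 one-click unsubscribe headers."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    # RFC 8058: an HTTPS URI in List-Unsubscribe plus this fixed Post header value.
    msg["List-Unsubscribe"] = f"<{unsubscribe_url}>"
    msg["List-Unsubscribe-Post"] = "List-Unsubscribe=One-Click"
    msg.set_content(body)
    return msg

msg = build_message(
    "rep@example.com",
    "buyer@example.org",
    "Quick question",
    "Hello from the sequence.",
    "https://example.com/unsub?id=123",  # placeholder URL
)
print(msg["List-Unsubscribe"], msg["List-Unsubscribe-Post"])
```

Headers alone are not enough: RFC 8058 also expects a valid DKIM signature covering both headers, which is one reason the authentication question above belongs in the same conversation.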

How Deep Should CRM Sync Be for an AI Sales Automation Platform?

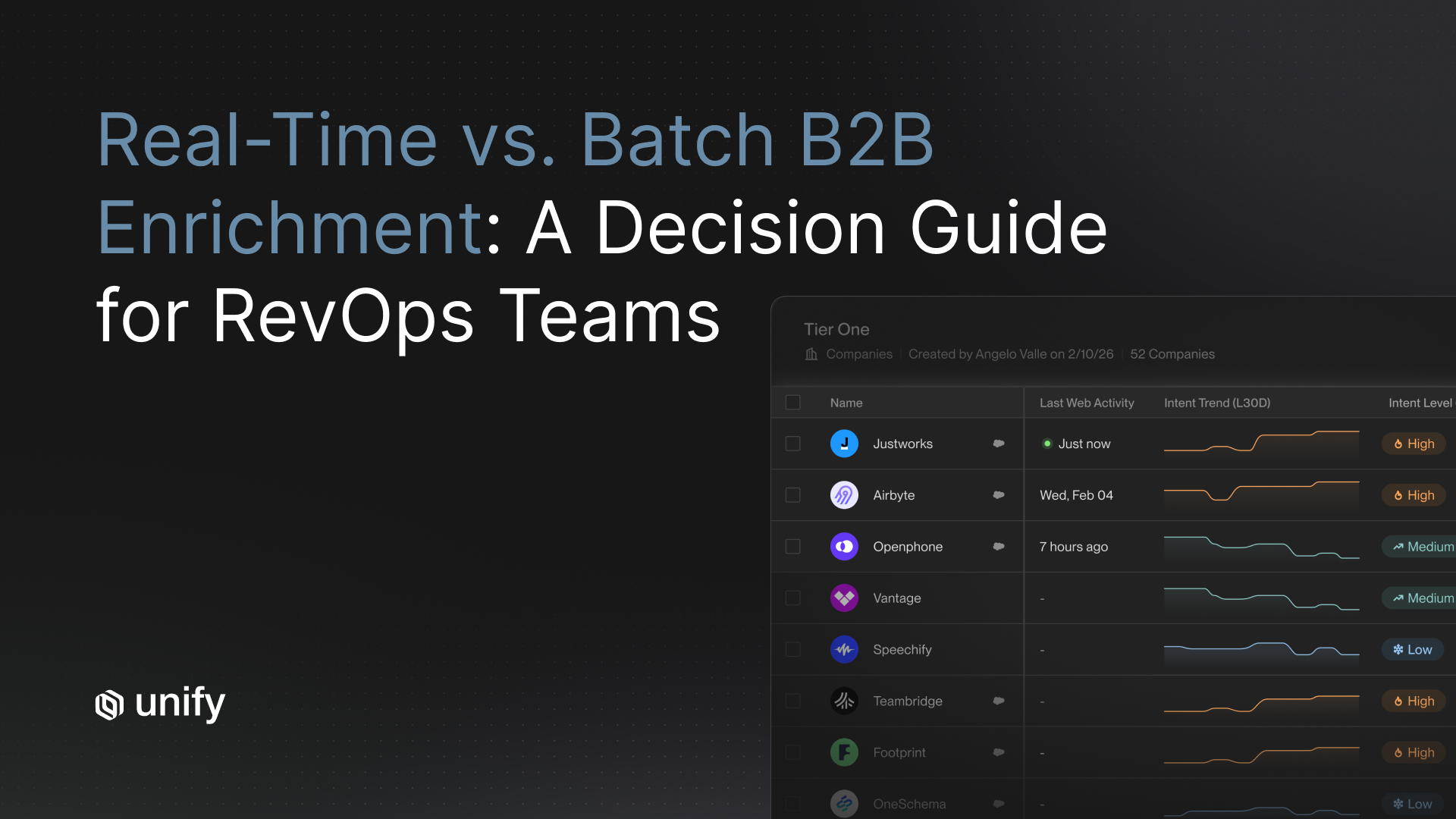

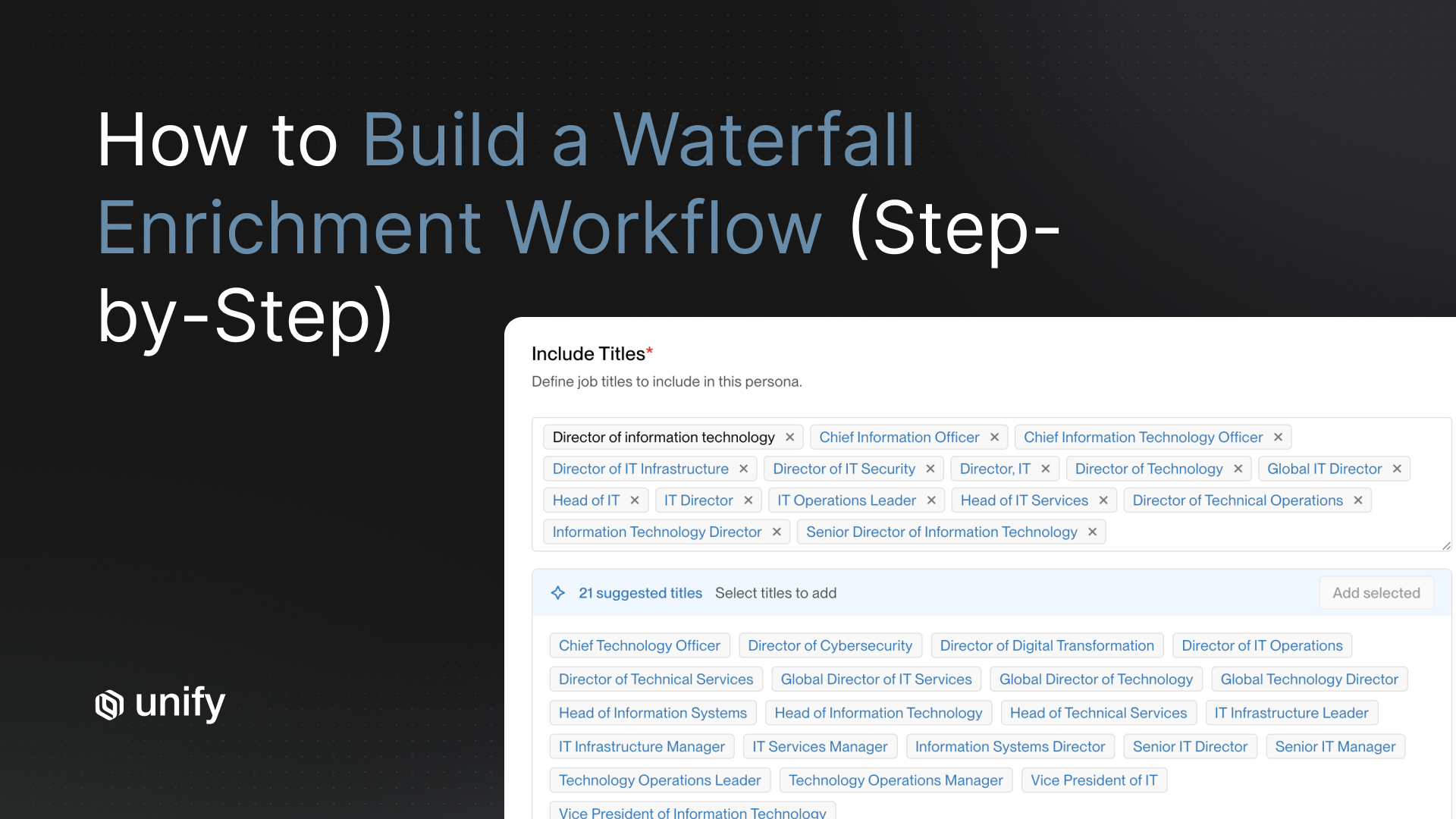

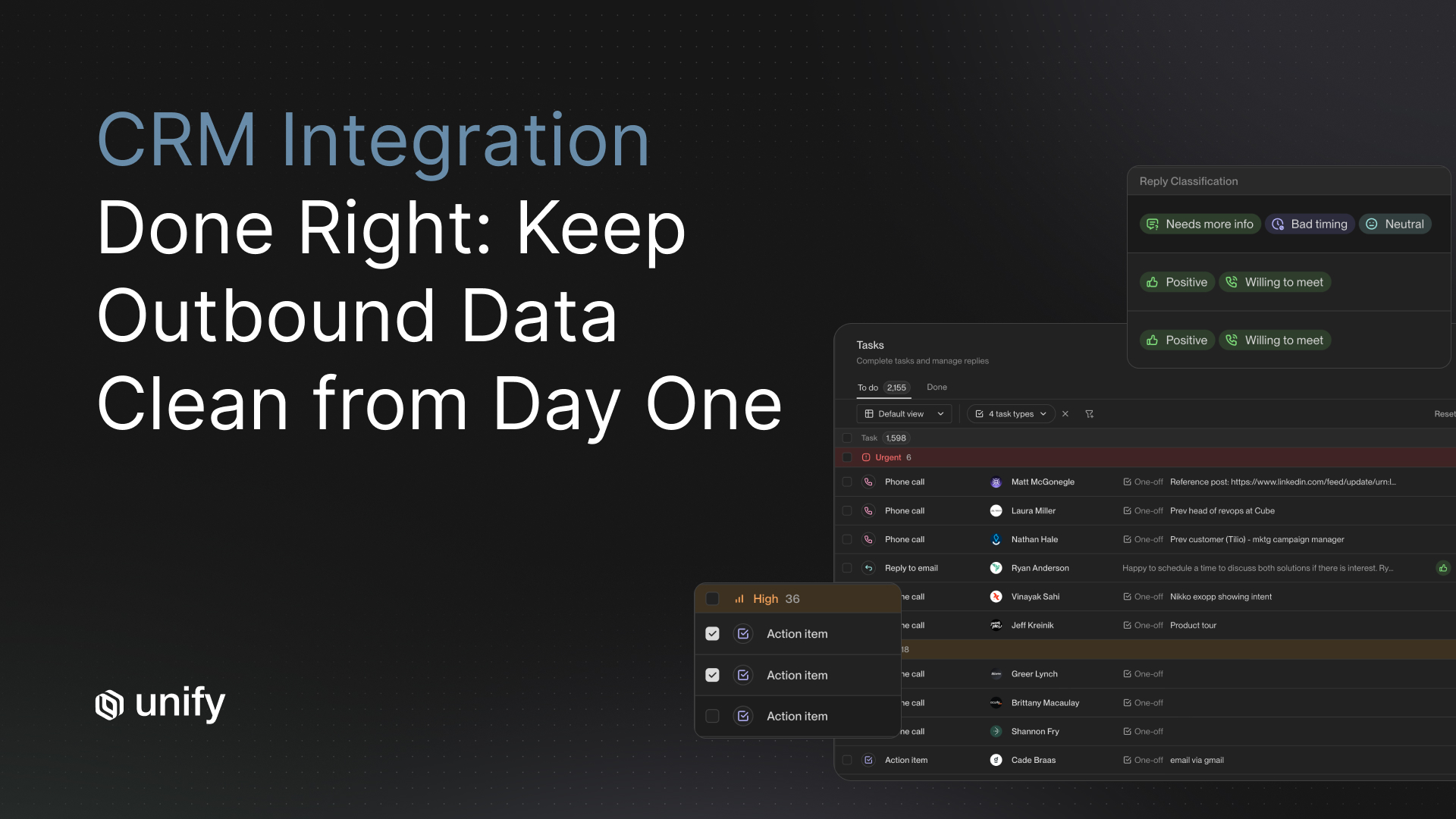

CRM sync depth is the second most common post-contract complaint from enterprise buyers, after output quality. Gartner research shows poor data quality costs the average organization $12.9 million per year. Research from Plauti found 45% of records are duplicates across organizations, a rate that jumps to 80% for API-based integrations specifically. Every AI sales automation platform creates CRM records. The question is whether those records are clean, de-duplicated, and bidirectionally synced, or whether they create a data cleanup project you did not budget for.

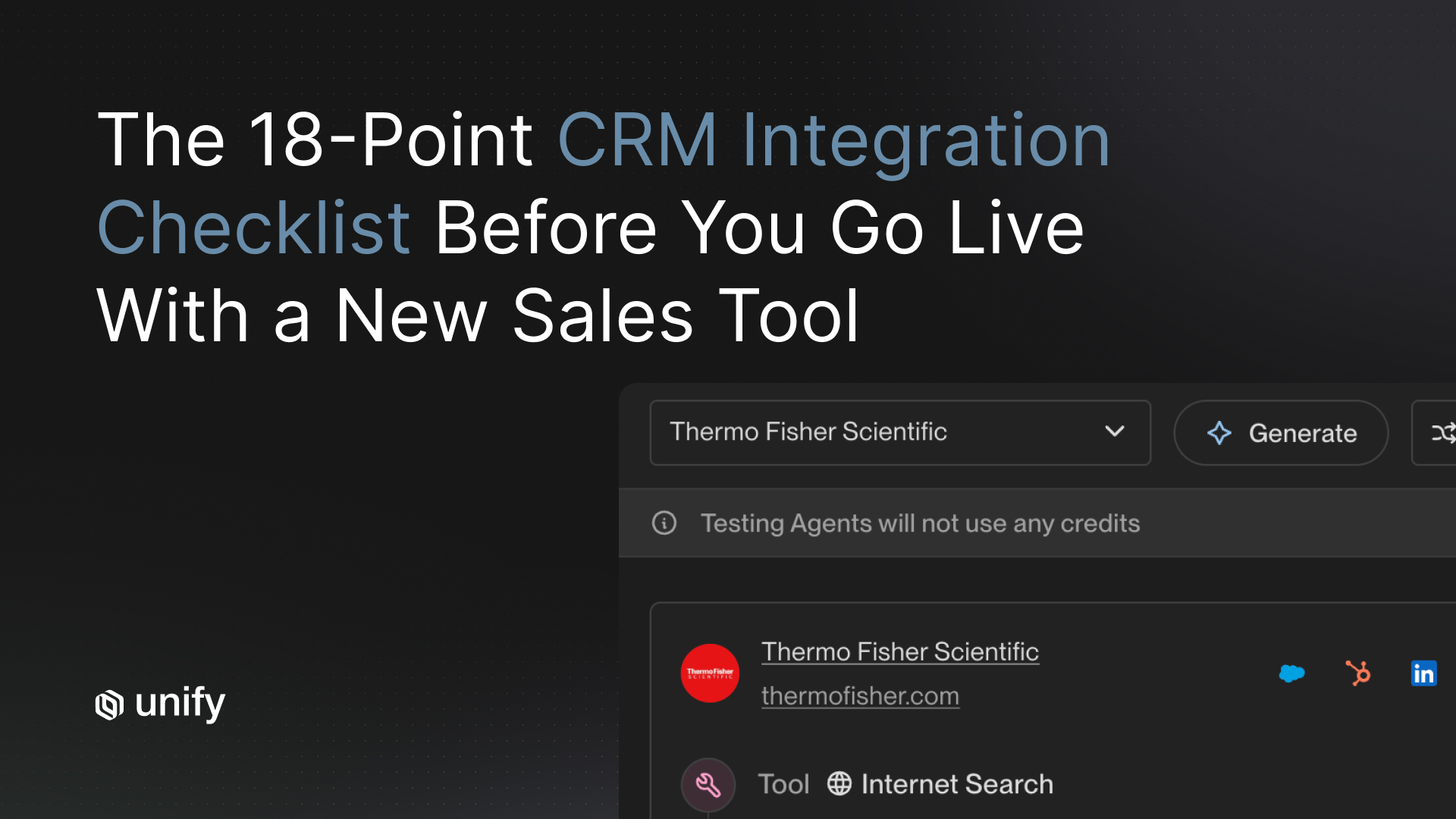

Unify's native CRM integration uses 15-minute sync intervals, bidirectional sync without Zapier dependency, and built-in duplicate prevention with configurable match logic. That is the benchmark to hold other vendors to. If a vendor's integration requires Zapier or a third-party middleware layer, you are accepting batch-sync delays and a dependency that breaks silently when either platform updates its API.

For the complete framework on what good CRM sync looks like in practice, see the CRM sync evaluation checklist.

- Is your CRM sync bidirectional natively, or does it require Zapier, Make, or another middleware tool?

- What is the sync interval? Real-time, 15-minute, hourly, or batch? What is the maximum delay in normal operating conditions?

- How do you handle duplicate records when a contact already exists in the CRM? Describe your match logic and who controls the deduplication rules.

- Can you map custom fields from your platform to our CRM's custom objects and fields, including picklist values?

- What CRM activity gets logged automatically? Provide a complete list of every activity type your platform writes back to the CRM natively.

- How does your platform handle CRM record ownership? Can sequences respect existing rep assignments without overriding them?

- What happens to CRM records if we cancel our subscription? Are records retained, deleted, or exported back to us?

- Do you support Salesforce custom objects in addition to standard Lead, Contact, and Opportunity objects?

- Can you provide a sync health dashboard showing error rates, failed syncs, and record counts in real time, not just an API status page?

Strong answer: Native bidirectional sync with intervals under 30 minutes, configurable duplicate handling with match logic your team controls, custom field mapping to your CRM's full schema including custom objects, and a real-time sync health dashboard. No Zapier or middleware dependency.

Weak answer: The vendor describes sync as "integration with Salesforce" without specifying directionality, interval, or match logic. Middleware dependencies and hourly or daily batch syncs are both red flags for a production outbound program at scale.
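When a vendor describes their match logic, it should reduce to something you can read as plainly as the sketch below. This example assumes the simplest possible rule, an exact match on a normalized email address, and fills only empty fields on an existing record so that rep ownership is not overwritten. The record shape and function names are hypothetical. Production match logic usually adds domain, name, and fuzzy rules on top.

```python
def normalize_email(email):
    """Lowercase and trim so 'Ana@Example.com ' and 'ana@example.com' match."""
    return email.strip().lower()

def find_match(incoming, crm_records):
    """Return the existing CRM record matching `incoming`, or None.

    Match rule (an assumption for illustration): exact match on normalized email.
    """
    key = normalize_email(incoming["email"])
    for record in crm_records:
        if normalize_email(record["email"]) == key:
            return record
    return None

def upsert(incoming, crm_records):
    """Create a record only when no match exists; otherwise fill empty fields."""
    existing = find_match(incoming, crm_records)
    if existing is None:
        crm_records.append(dict(incoming))
        return "created"
    # Fill only empty fields so existing values (such as rep ownership) survive.
    for field, value in incoming.items():
        if not existing.get(field):
            existing[field] = value
    return "updated"

crm = [{"email": "Ana@Example.com", "owner": "rep_1", "title": ""}]
print(upsert({"email": " ana@example.com ", "owner": "bot", "title": "VP Sales"}, crm))
print(crm)
```

The question "who controls the deduplication rules" amounts to asking who is allowed to edit the equivalent of `find_match` in the vendor's platform.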

What Security and Compliance Questions Are Non-Negotiable for AI Outreach Platforms?

Security and compliance are gating criteria at most enterprise companies, not evaluation criteria. According to Secureframe's 2026 Cybersecurity and Compliance Benchmark Report, 61% of companies say achieving compliance certification is required to win or renew contracts, and 38% have lost revenue or competitive bids specifically for lacking a required certification. When finance and security review your vendor shortlist, a missing SOC 2 Type II report will end the evaluation before procurement even starts.

Beyond SOC 2, AI sales automation platforms handle contact data that falls under GDPR, CCPA, and 20 US state privacy laws as of 2026. GDPR fines have reached EUR 7.1 billion cumulatively since 2018. CCPA penalties, enforced by the California Privacy Protection Agency (CPPA), run up to $7,988 per intentional violation. Platforms that cannot answer data residency, data processing agreement, and subprocessor questions do not belong in an enterprise stack. Vendors who make you negotiate just to receive a DPA are signaling that compliance is not a product priority.

- Do you hold SOC 2 Type II certification? Can you provide the full audit report under NDA before we sign a contract?

- What is the coverage period and scope of your most recent SOC 2 Type II audit? Which trust service criteria are in scope?

- Do you support SSO via SAML 2.0 or OIDC? Is SSO available on all pricing tiers or only enterprise plans?

- Do you support SCIM provisioning for automated user lifecycle management, including deprovisioning?

- What role-based access controls (RBAC) are available? Can we restrict sequence creation, sending authority, and data export rights by role?

- Do you maintain immutable audit logs? What is the retention period, and can logs be exported to our SIEM?

- Where is customer data stored geographically? Do you support data residency in the EU or specific US regions?

- Can you provide a signed Data Processing Agreement meeting GDPR Article 28 requirements, available on request without a separate negotiation process?

- Do you use customer data to train your AI models? If yes, is there an opt-out and is it the default setting?

- What is your current subprocessor list, and what is your policy for notifying customers of subprocessor changes?

- What is your committed maximum time to notify customers of a data breach, and what has your actual notification time been in past incidents? What does your incident response SLA include?

- Do you hold any additional certifications: ISO 27001, HIPAA, FedRAMP, or PCI DSS?

Strong answer: SOC 2 Type II with a current audit (within 12 months), SSO available on all plans, SCIM provisioning supported, immutable audit logs with export to standard SIEM formats, EU data residency option, a signed DPA available on request without a separate legal negotiation, no model training on customer data by default, and a published subprocessor list with a notification SLA for changes.

Weak answer: SOC 2 Type I only, SSO locked behind an enterprise add-on tier, no SCIM, no data residency options, and a DPA that requires weeks of legal negotiation to obtain. These are contractual delays dressed as process.

What Ramp Guarantees and Performance SLAs Should You Require?

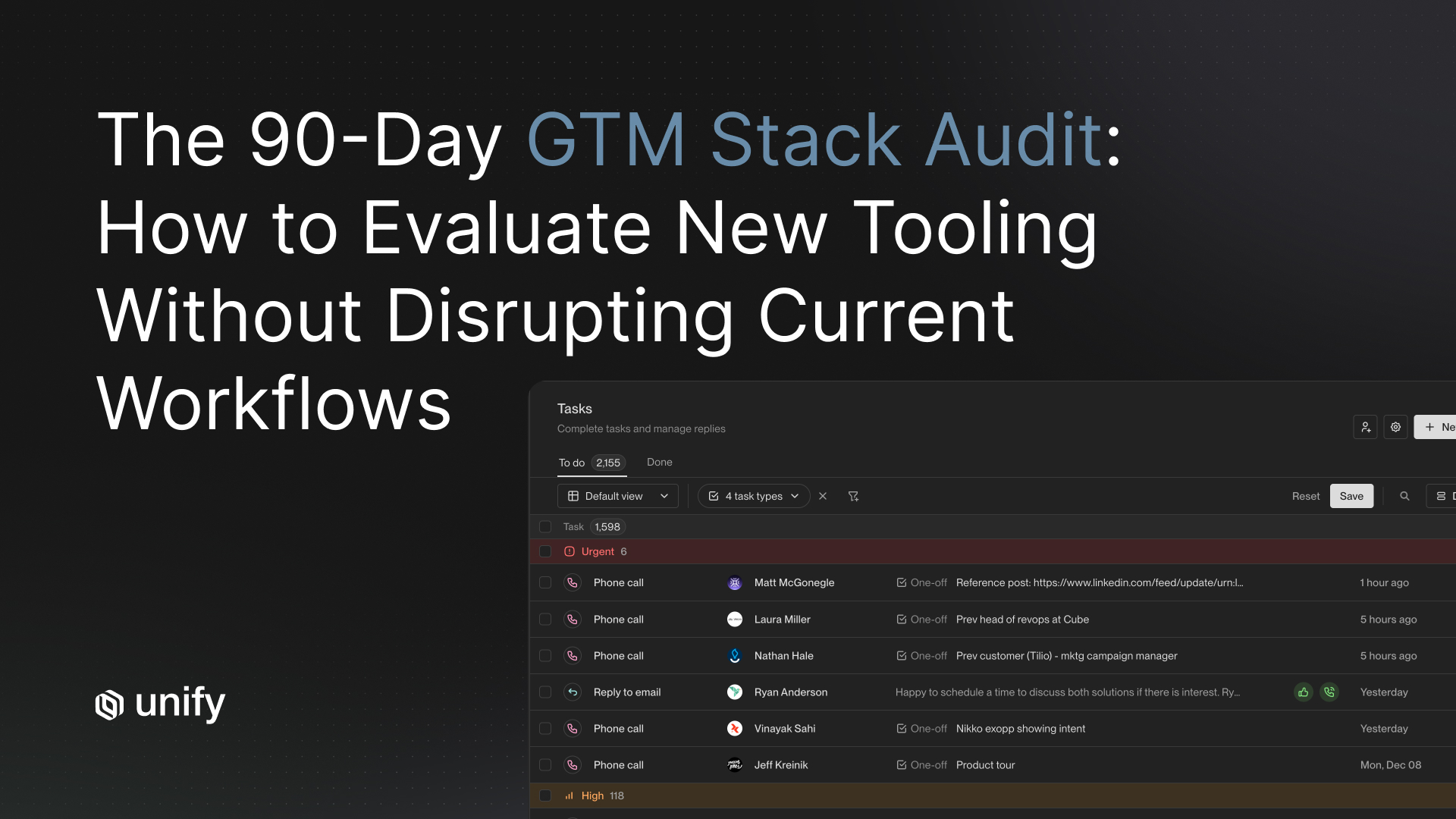

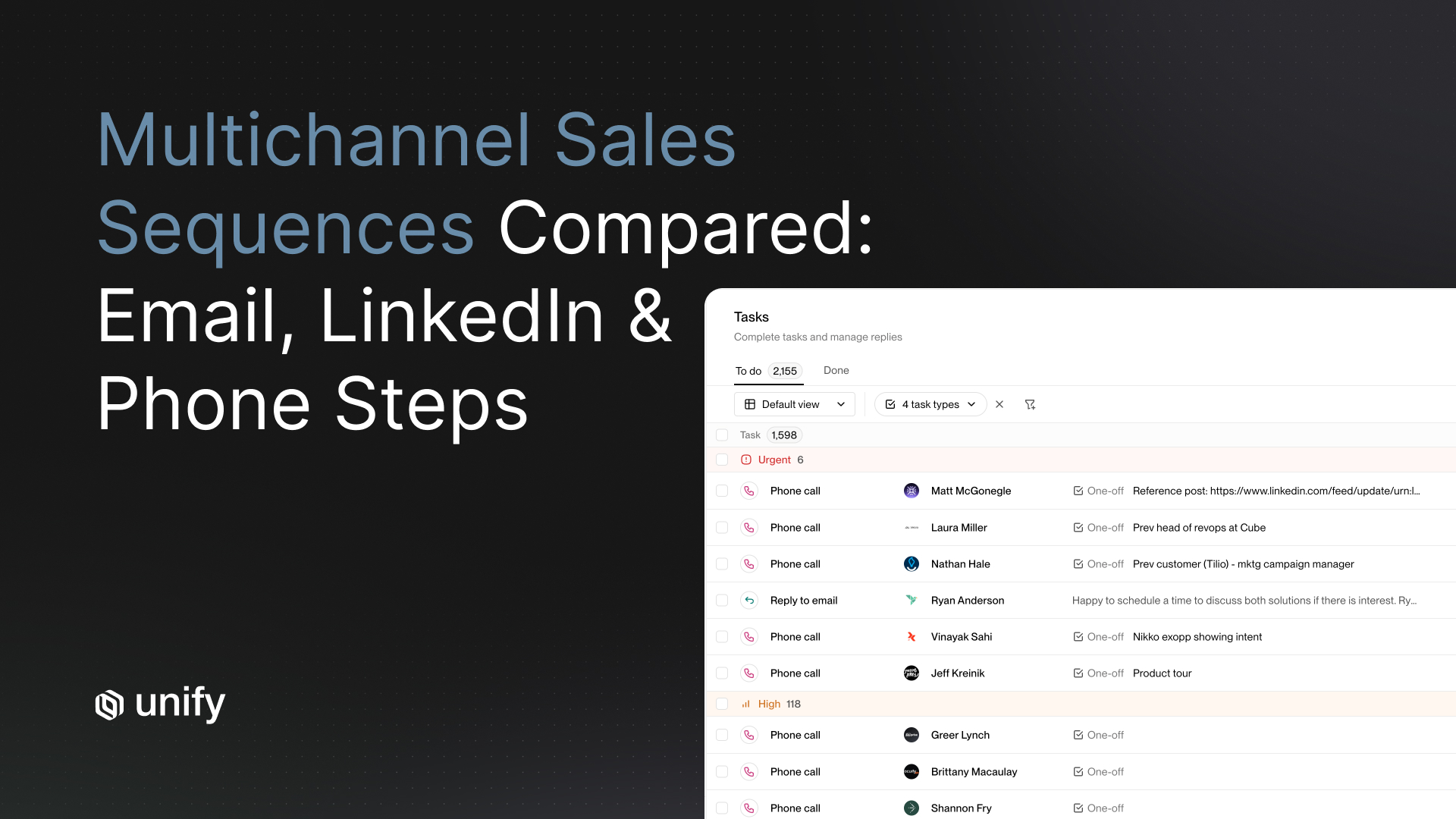

Ramp guarantees are the single most negotiated contract term in AI SDR deals, and the one where buyers leave the most value on the table. Most vendors define "ramp" as "technical setup complete," which satisfies the contract language without generating any pipeline. A 30-day onboarding window is not enough to reach statistical significance on reply rate, let alone measure pipeline quality with confidence.
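The statistical significance point can be checked with a standard sample-size calculation for comparing two proportions (normal approximation). The sketch below is a rough planning tool, not a substitute for a proper experiment design. The 2% and 4% reply rates are hypothetical inputs chosen only for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist

def emails_per_arm(p_base, p_target, alpha=0.05, power=0.8):
    """Emails needed in each group to detect a change from p_base to p_target.

    Uses the textbook two-proportion formula with a two-sided test.
    """
    z_a = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_b = NormalDist().inv_cdf(power)          # critical value for power
    p_bar = (p_base + p_target) / 2
    numerator = (
        z_a * sqrt(2 * p_bar * (1 - p_bar))
        + z_b * sqrt(p_base * (1 - p_base) + p_target * (1 - p_target))
    ) ** 2
    return ceil(numerator / (p_target - p_base) ** 2)

# Hypothetical reply rates: 2% baseline versus 4% with the new platform.
print(emails_per_arm(0.02, 0.04))
```

With those assumed rates the answer lands above a thousand sends per group, and smaller lifts need far more. A low-volume, signal-triggered program may not reach that in 30 days, which is why the ramp period in the contract should be sized to expected send volume rather than to a calendar default.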

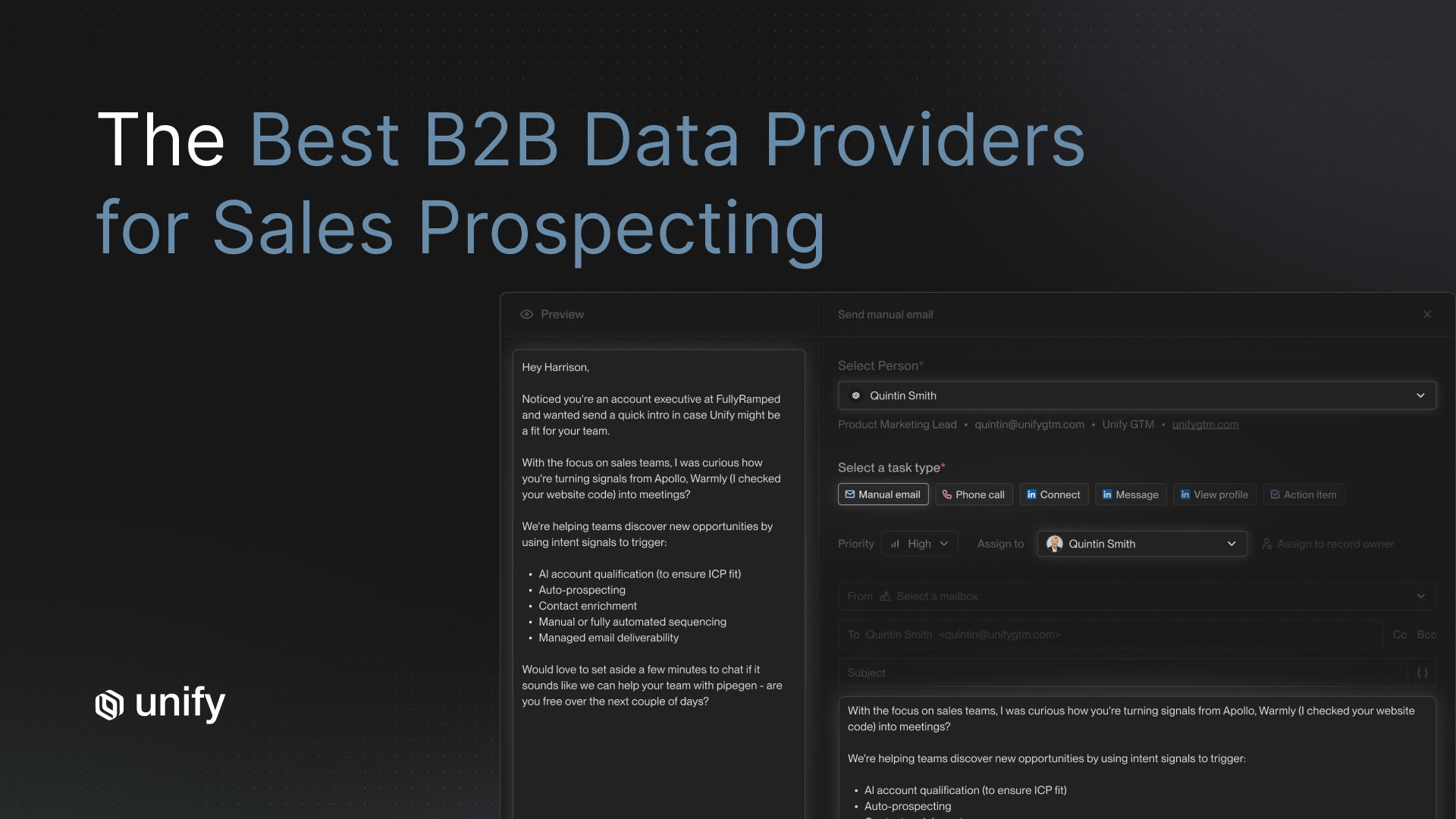

Signal-based AI outreach platforms reach measurable ROI faster than autonomous agents because the trigger logic is deterministic and the signal-to-outreach pipeline compresses time-to-relevance. Unify customers have reported $1.7M in pipeline in the first 3 months (Perplexity), $100K+ in direct pipeline within the first 10 days (Navattic), and 6.8x ROI in the first 5 months (Justworks). These are specific outcomes tied to the signal-based architecture, not average results across all deployment types.

Unify's internal cost-per-qualified-meeting data, built from platform-level benchmarks across active customers, shows signal-based AI outreach running at $80 to $180 per meeting versus $375 to $720 for fully loaded human SDR programs. Comparing like ends of each range (375/80 and 720/180), that is roughly a 4 to 5 times cost difference. Any vendor claiming comparable economics should be able to produce similar underlying data.
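A quick check on the arithmetic behind those cost ranges, using only the figures quoted above. Comparing low end to low end, high end to high end, and midpoint to midpoint is an assumption about how the ranges line up. The source data may pair them differently.

```python
ai_cost = (80, 180)     # $ per qualified meeting, signal-based AI outreach
sdr_cost = (375, 720)   # $ per qualified meeting, human SDR fully loaded

def ratio_range(cheaper, pricier):
    """Return (low-vs-low, high-vs-high, midpoint) cost ratios, one decimal each."""
    low_vs_low = pricier[0] / cheaper[0]
    high_vs_high = pricier[1] / cheaper[1]
    midpoint = (sum(pricier) / 2) / (sum(cheaper) / 2)
    return round(low_vs_low, 1), round(high_vs_high, 1), round(midpoint, 1)

print(ratio_range(ai_cost, sdr_cost))  # (4.7, 4.0, 4.2)
```

Run the same comparison on any competing vendor's numbers, and ask which costs are included in "fully loaded" before accepting the ratio.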

For a weighted framework on how to evaluate AI SDR platforms across the criteria that predict production performance rather than demo quality, see the AI SDR tools 12-criteria scorecard.

- What is your defined onboarding timeline? What specific milestones define "fully deployed" versus "technically live"?

- What performance benchmarks do you commit to in writing? Specify exact metrics: reply rate targets, meetings booked per month, qualified pipeline volume.

- If performance benchmarks are not met within the agreed ramp period, what is the contractual remedy? Credit, contract pause, or exit without penalty?

- Do you offer a structured pilot period before full contract commitment? What are the terms of the pilot, and what are the conditions for converting or exiting?

- What dedicated implementation support is included in the contract versus billed separately? Is there a named implementation engineer, not just a CSM?

- What does your 30-60-90 day success plan look like? Can you provide a real example from a comparable customer in our segment?

Strong answer: The vendor defines specific pipeline milestones, commits to them in writing with a clear remedy clause, offers a structured pilot option with real exit rights, provides a named implementation engineer distinct from the CSM, and can share an actual 30-60-90 plan from a comparable customer without a separate NDA.

Weak answer: The vendor defines "ramp" as sequences launched and measures success by activity volume rather than pipeline generated. This is a contract term engineered to satisfy benchmarks without producing business outcomes your finance team will recognize.

What Exit Terms Protect You If the Platform Underperforms?

Exit terms are the procurement questions buyers most consistently skip because they feel awkward to raise before a contract is signed. They are also the questions that matter most six months later when performance has not materialized. Vendor lock-in in AI sales automation is typically achieved through three mechanisms: data custody restrictions, auto-renewal clauses with short notice windows, and exit fees that make switching prohibitively expensive relative to continuing.

The total cost of a forced platform migration extends beyond the switching fee. It includes re-warming sending domains from scratch, rebuilding sequence logic in the new platform, re-mapping CRM fields, and absorbing a pipeline gap during the transition period. Buyers who negotiate data portability, 90-day notice periods, and performance-linked exit rights before signing avoid most of that cost. The vendors who refuse these terms are signaling that lock-in is part of their retention strategy.

- What is the data export process at contract end? In what format is data exported (CSV, JSON, other), and what is the guaranteed delivery timeline?

- How long after contract termination does our data remain accessible within your platform? When is it permanently deleted?

- Are there early termination fees? Under what specific conditions can we exit the contract without incurring a penalty?

- What is your auto-renewal policy? How much advance notice do you require to cancel before the auto-renewal date triggers?

- If you are acquired, materially change your pricing model, or deprecate a core feature we rely on, do we have the contractual right to exit without penalty?

Strong answer: Full data export in a standard format (CSV or JSON) within 30 days of contract end, 60-day minimum post-termination data retention before deletion, no early termination fee if documented performance SLAs are missed, 90-day advance auto-renewal notice requirement, and a change-of-control clause that grants exit rights. These terms should be standard and not require escalation to legal.

Weak answer: Data export described as "available upon request" with no defined format or delivery timeline. Thirty-day auto-renewal notice window. No performance-linked exit right. These terms structurally favor the vendor in every scenario where you need to leave but are not in material breach.
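Notice windows are easy to miss because the deadline sits months before the renewal date. The sketch below works out the calendar date a given notice window implies. It assumes the contract counts calendar days and that notice is effective on the day it is sent, and the dates are hypothetical. Check the actual contract language on both points.

```python
from datetime import date, timedelta

def cancel_by(renewal_date, notice_days):
    """Date that falls `notice_days` calendar days before the renewal date."""
    return renewal_date - timedelta(days=notice_days)

def days_left(today, renewal_date, notice_days):
    """Calendar days remaining until the notice deadline (negative if missed)."""
    return (cancel_by(renewal_date, notice_days) - today).days

renewal = date(2027, 1, 1)  # hypothetical auto-renewal date
for window in (30, 90):
    print(window, cancel_by(renewal, window))
print(days_left(date(2026, 9, 1), renewal, 90))
```

Put the resulting date in your procurement calendar on the day you sign, along with whichever party the contract obliges to send the notice.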

How Should You Score Vendor Responses to This RFP?

Vendor responses to a procurement RFP are only useful if you evaluate them consistently across all candidates. The scoring template below organizes the 47 questions into six weighted domains. Score each domain on a 0 to 10 scale based on how completely and verifiably the vendor answers the questions. A vendor who provides documentation (SOC 2 report, benchmark data, sample 30-60-90 plans) on first request scores higher than one who answers verbally and promises to follow up.

Multiply each domain score by its weight, with the six weights summing to 100%, and add the results to get a weighted total out of 10. A total weighted score below 6.5 should be a hard disqualifier regardless of feature set or pricing. A vendor who cannot answer infrastructure questions clearly enough to score above 6.5 will not be able to support your program when issues arise in production, and they will always arise.
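The scoring arithmetic can be sketched in a few lines. The domain names and the 6.5 threshold come from the text. The weights below are placeholder assumptions chosen only so the example runs, and you should substitute your own. The sample scores are likewise invented.

```python
DISQUALIFY_BELOW = 6.5  # threshold from the text

# Hypothetical weights for illustration; they must sum to 1.0.
weights = {
    "model_reliability": 0.20,
    "deliverability": 0.20,
    "crm_sync": 0.15,
    "security_compliance": 0.20,
    "ramp_guarantees": 0.15,
    "exit_terms": 0.10,
}

def weighted_total(scores, weights):
    """Sum of (0-10 domain score x weight), giving a total out of 10."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same domains")
    return sum(scores[d] * weights[d] for d in weights)

def verdict(scores, weights):
    """Return (rounded total, 'advance' or 'disqualify')."""
    total = weighted_total(scores, weights)
    return round(total, 2), ("disqualify" if total < DISQUALIFY_BELOW else "advance")

vendor_a = {  # invented sample scores
    "model_reliability": 8, "deliverability": 7, "crm_sync": 6,
    "security_compliance": 9, "ramp_guarantees": 5, "exit_terms": 4,
}
print(verdict(vendor_a, weights))
```

Fix the weights before you read any vendor response. Adjusting them afterward is the fastest way to turn a scorecard into a justification for a decision already made.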

Why Does Platform Architecture Determine Procurement Outcomes?

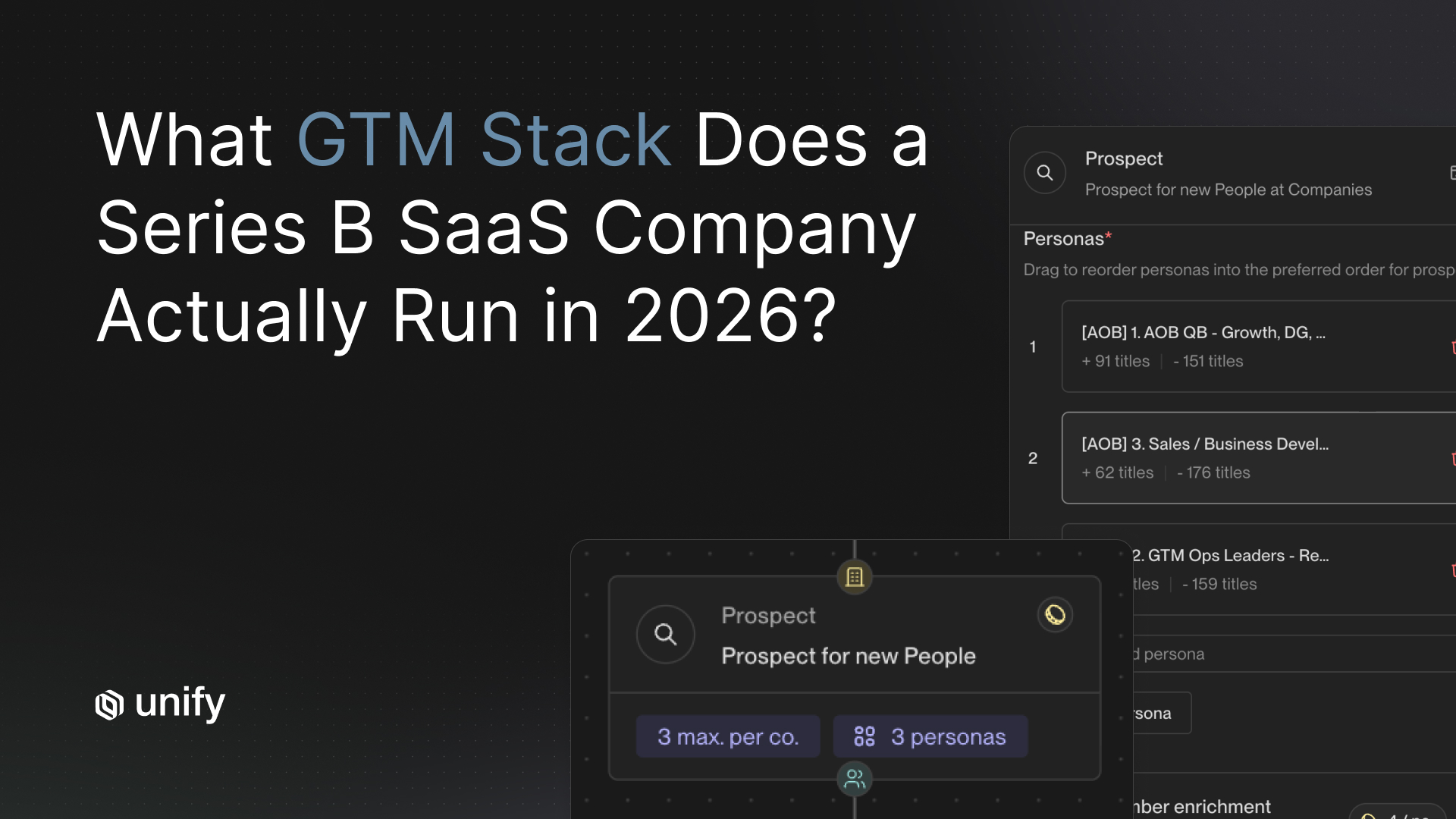

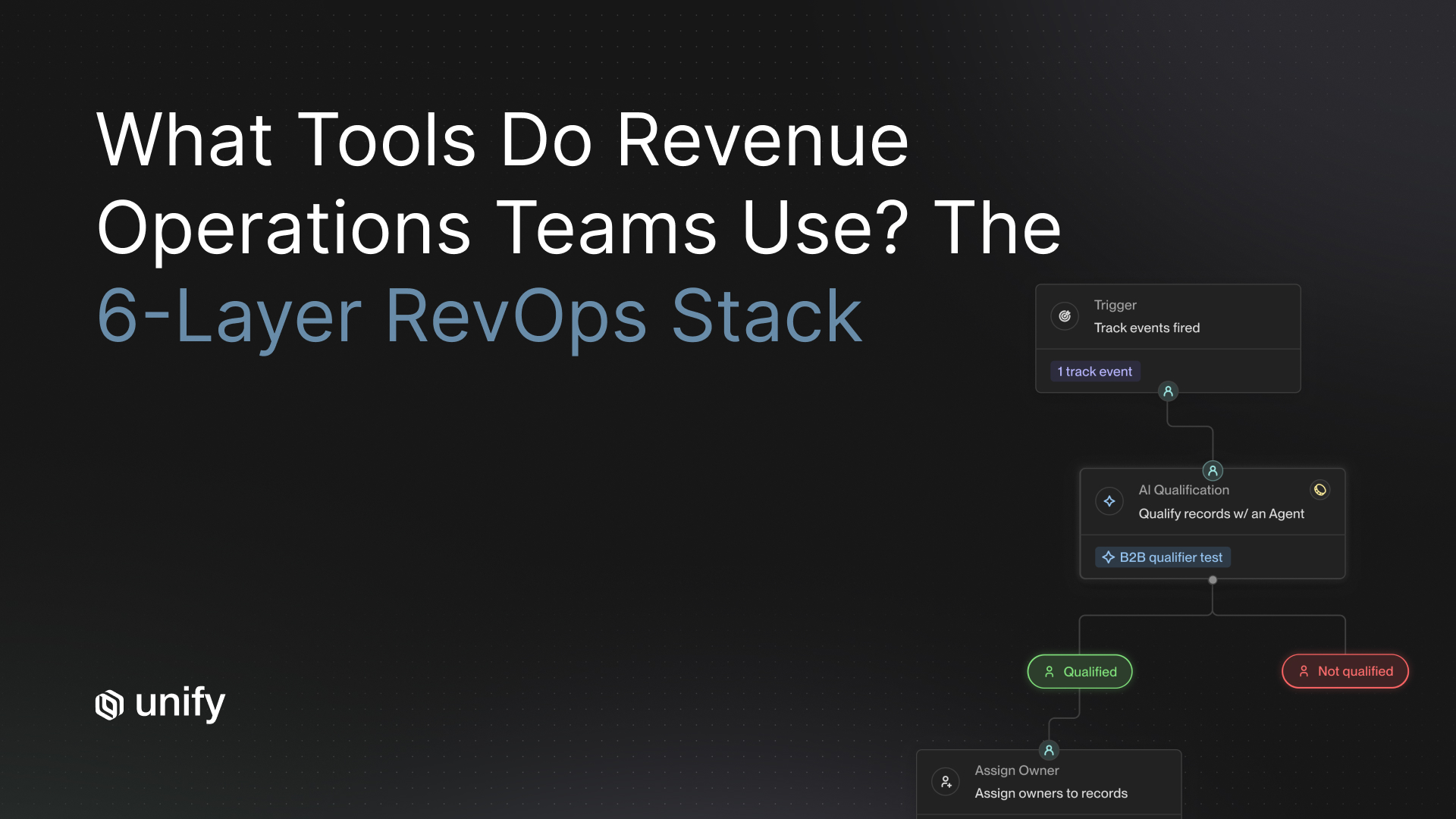

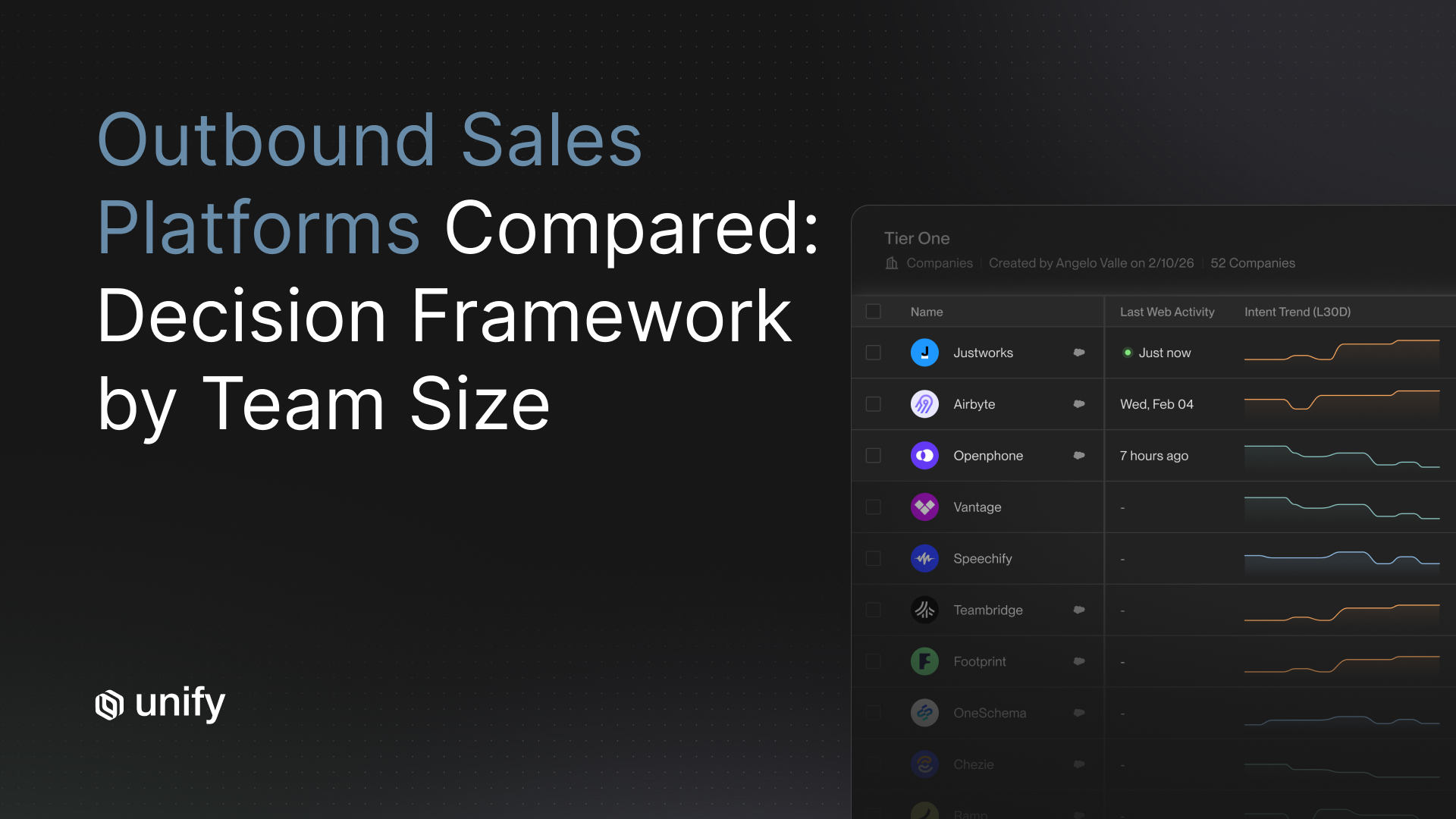

The core question behind all 47 items above is architectural: is this platform built for controlled, signal-triggered outreach, or for autonomous volume output? Those two architectures produce systematically different answers across every domain in this RFP, and the gap compounds over time.

Autonomous agent platforms are optimized for fast deployment and high send volume. They score well on ease of onboarding and tend to fail on model versioning, deliverability isolation, and CRM sync depth because those capabilities require infrastructure investment that does not serve a volume-first product strategy. Gartner's prediction that fewer than 40% of sellers will report AI agent productivity improvements is consistent with this architecture gap. More sending does not produce more pipeline when outreach quality, deliverability, and CRM data hygiene are not maintained at scale.

Signal-driven orchestration platforms start from a different premise: outreach should fire when a specific buying signal is detected, not on a calendar schedule. That design produces better reply rates (Unify's internal benchmarks show 2x to 4x lift over timed sequences), better domain reputation over time because high engagement suppresses spam classification, and cleaner CRM data because signal context is logged alongside every activity. It also produces smaller send volumes at higher signal-to-noise ratios, which is exactly the architecture that survives the procurement questions above.

The procurement RFP above exists because category marketing has converged. Every platform now claims signal-based personalization, SOC 2 compliance, and CRM integration. The 47 questions separate platforms that have built that infrastructure from platforms that have built that messaging. That distinction is what you are buying, and it determines whether this investment produces pipeline or a post-mortem.

Frequently Asked Questions

Which AI sales automation platforms are most reliable for outreach?

Reliability in AI sales automation depends on model versioning stability, deliverability infrastructure, and CRM sync fidelity. Signal-driven orchestration platforms that trigger outreach from verified buying signals consistently outperform fully autonomous agents on reply rate and pipeline quality. Unify, which uses a signal-based approach, reports a cost of $80 to $180 per qualified meeting versus $375 to $720 for human SDRs. Any platform you consider should be able to answer all 47 questions in this RFP before you sign.

What security certifications should an AI sales platform have for enterprise procurement?

At minimum, any AI sales automation platform entering an enterprise procurement process should hold SOC 2 Type II certification. According to Secureframe's 2026 Cybersecurity and Compliance Benchmark Report, 61% of companies say compliance certification is required to win or renew contracts, and 38% have lost revenue or competitive bids for lacking a required certification. You should also ask about SSO (SAML/OIDC), SCIM provisioning, role-based access controls, audit logs, and data residency options.

What is model output drift and why does it matter for AI SDR platforms?

Model output drift refers to degradation in the quality, tone, or accuracy of AI-generated content after a model update or over time, without any change to your prompts or settings. In AI SDR platforms, drift means sequences that once produced personalized, on-brand emails silently shift toward generic templates. Vendors that practice model versioning, maintain prior model versions as fallback options, and run regression benchmarks before every update protect you from drift. Ask vendors to show their benchmark suite and explain their rollback process before you sign.

How long does it take to see ROI from an AI sales automation platform?

Based on outcomes from signal-driven platforms, you should see early pipeline signals within the first 30 days and measurable ROI by day 60 to 90 if your data is clean. Customers using Unify's signal-based approach have reported results like $1.7M in pipeline in the first 3 months (Perplexity) and $100K+ in direct pipeline within the first 10 days (Navattic). Autonomous AI SDR tools without signal targeting typically require 6 to 9 months to show meaningful ROI, and many buyers report ongoing generic output as a persistent problem throughout the contract.

What exit terms should I negotiate before signing an AI sales automation contract?

Before signing, negotiate: full data portability in a standard format within 30 days of contract end, no data deletion for at least 60 days post-contract, performance-linked exit clauses tied to agreed pipeline benchmarks, and a 90-day auto-renewal notice requirement. Avoid vendors who cannot provide a complete data export in CSV or JSON format, as that is the clearest indicator of intentional lock-in by contract design.

Sources

- Gartner. "Gartner Predicts By 2028 AI Agents Will Outnumber Sellers by 10X." November 2025. gartner.com

- Gartner. "Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026." August 2025. gartner.com

- Validity. "2026 Email Deliverability Benchmark Report." 2026. validity.com

- Secureframe. "2026 Cybersecurity and Compliance Benchmark Report." Referenced via Secureframe blog. secureframe.com

- Plauti. "Duplicate Record Research." Referenced via Unify CRM Sync Evaluation Checklist. unifygtm.com

- Gartner. "Poor Data Quality Cost Estimate ($12.9M/year)." Referenced via Unify CRM Sync Evaluation Checklist. unifygtm.com

- Unify. "AI SDR Performance Metrics." 2026. unifygtm.com

- Unify. "Email Deliverability Comparison: Sales Engagement Platforms." 2026. unifygtm.com

- Unify. "Best AI SDR Tools: 12-Criteria Scorecard." 2026. unifygtm.com

- Unify. "Customer Results: Perplexity, Navattic, Justworks, and others." 2026. unifygtm.com

- The RevOps Report. "Artisan AI SDR Review 2026: Ava, Deliverability and GTM Automation." 2026. therevopsreport.com

- Secureframe. "SOC 2 vs Security Questionnaires: What's the Difference?" 2026. secureframe.com

- Unify. "B2B Data Compliance: GDPR, CCPA, and US State Privacy Laws." 2026. unifygtm.com

- SPOTIO. "2026 State of Field Sales Survey." 2026. spotio.com

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
