TL;DR: Most sales engagement platform POCs fail because buyers ask marketing-brochure questions and get marketing-brochure answers. The 15 questions below are organized across five operational buckets — data integrity, automation behavior, rep workflow, analytics fidelity, and scale stress tests — with what a good answer sounds like and what the red flags are. Use the four-week POC timeline and the scorecard at the end to run a structured evaluation that surfaces real differences between vendors before you sign anything.

Why Do Most Sales Engagement Platform POCs Fail?

Most sales engagement platform POCs fail because buyers enter them without defined success criteria and exit them based on demo impressions rather than operational results. According to Dock's sales POC playbook, the top predictor of POC success is not technical metrics — it is stakeholder engagement. Buyer teams that define SMART success criteria before the POC starts and maintain active participation throughout convert at dramatically higher rates than those who treat the evaluation as a passive demo extension.

The second reason POCs fail is question quality. Buyers ask "does it integrate with Salesforce?" instead of "show me exactly what happens when a CRM field is updated mid-sequence and there is a conflict between the platform and the CRM record." The first question gets a yes from every vendor. The second question reveals which vendors have actually solved the problem.

The third failure mode is timeline compression. A four-week POC with defined weekly milestones is the minimum viable evaluation for a sales engagement platform. Anything shorter skips the stress-test phase — week three — where real production conditions expose sync lags, automation misfires, and analytics gaps that never appeared in the demo environment.

What Are the 5 Buckets Every POC Evaluation Must Cover?

Every sales engagement platform POC should evaluate five operational buckets: data integrity, automation behavior, rep workflow, analytics fidelity, and scale readiness. Each bucket maps to a failure mode that costs revenue after purchase. Missing even one means you are buying on incomplete information.

- Data integrity — How the platform handles sync fidelity, deduplication, and field mapping between itself and your CRM

- Automation behavior — Pause logic, safety rails, and human review gates that prevent automation from firing on the wrong contacts

- Rep workflow — The inbox experience, task management interface, and mobile functionality reps will live in daily

- Analytics fidelity — Attribution accuracy, cohort reporting, and sequence-level performance data that drives decisions

- Scale stress tests — How the platform performs under production volume, rapid seat ramp, and multi-tenant configurations

The 15 questions below are numbered so you can reference them individually and drop them directly into a vendor scorecard.

Bucket 1: Data Integrity — 3 Questions That Separate Real Integration from Demo Integration

Data integrity questions are the most important in the POC and the most frequently skipped. Poor data quality costs the average organization $12.9 million per year, according to a Gartner estimate cited in Unify's CRM sync evaluation research. Every answer in this bucket should be demonstrated with your actual CRM data, not a sandbox environment.

Question 1: What is the sync interval, and what happens to a sequence when a CRM field is updated mid-sequence?

Good answer: The platform syncs in near real-time (under five minutes), detects the field change, evaluates whether the sequence should pause or continue based on configurable rules, and logs the decision with a timestamp in both the platform and the CRM record.

Red flag: Sync intervals longer than 15 minutes, or a vendor who says "the sequence continues and the CRM is updated at the next scheduled sync." That answer means reps are sending emails to contacts whose status has already changed.
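To make Question 1 testable during the POC, it helps to write down the rule you expect the vendor to apply. The sketch below is a minimal Python illustration, not any vendor's implementation. `grade_sync_interval` encodes the under-5-minute and over-15-minute thresholds from this question, and `on_crm_field_change` applies a hypothetical `PAUSE_RULES` table and logs each pause-or-continue decision with a UTC timestamp. The field names and values are assumptions; substitute your own CRM schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical rule set: CRM field -> values that should pause an active sequence.
PAUSE_RULES = {
    "lifecycle_stage": {"Opportunity", "Customer"},
    "do_not_contact": {"true"},
}


def grade_sync_interval(minutes):
    """Grade a vendor's stated sync interval against the Question 1 thresholds."""
    if minutes < 5:
        return "good"
    if minutes > 15:
        return "red flag"
    return "probe further"


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def record(self, contact_id, field_name, value, action):
        # Timestamped entry; a real platform would also mirror this to the CRM record.
        self.entries.append({
            "contact_id": contact_id,
            "field": field_name,
            "value": value,
            "action": action,
            "at": datetime.now(timezone.utc).isoformat(),
        })


def on_crm_field_change(contact_id, field_name, value, log):
    """Evaluate a mid-sequence CRM field change and return 'pause' or 'continue'."""
    action = "pause" if value in PAUSE_RULES.get(field_name, set()) else "continue"
    log.record(contact_id, field_name, value, action)
    return action
```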

Question 2: Show me your deduplication logic running against our actual CRM data.

Good answer: The vendor imports a sample of your real CRM data, runs dedupe, shows you the match criteria (email, domain, LinkedIn URL, or fuzzy name matching), and demonstrates what happens when a duplicate is found — which record wins, which is suppressed, and how the decision is logged.

Red flag: The vendor deduplicates only within the platform's own database and does not check against your CRM in real time. This creates phantom duplicates and missed suppression events.
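The following sketch shows the shape of answer Question 2 is looking for: match criteria checked against CRM records, a clear winner, and a logged decision. It is a minimal Python illustration under stated assumptions, not a vendor's algorithm. The record fields (`id`, `email`, `name`, `linkedin_url`), the 0.85 similarity threshold, and the use of the standard library's `difflib.SequenceMatcher` as a stand-in for fuzzy name matching are all choices made for this example.

```python
from difflib import SequenceMatcher


def _email(value):
    return (value or "").strip().lower()


def _linkedin(value):
    # Reduce LinkedIn URLs to a comparable form, e.g. "linkedin.com/in/analopez".
    url = (value or "").strip().lower().rstrip("/")
    for prefix in ("https://", "http://", "www."):
        if url.startswith(prefix):
            url = url[len(prefix):]
    return url


def _domain(email):
    return email.rsplit("@", 1)[1] if "@" in email else ""


def find_crm_match(incoming, crm_records, fuzzy_threshold=0.85):
    """Return (crm_record, reason) for the first match, or (None, None)."""
    email = _email(incoming.get("email"))
    linkedin = _linkedin(incoming.get("linkedin_url"))
    # Pass 1: exact identifiers.
    for rec in crm_records:
        if email and email == _email(rec.get("email")):
            return rec, "email"
        if linkedin and linkedin == _linkedin(rec.get("linkedin_url")):
            return rec, "linkedin_url"
    # Pass 2: fuzzy name match, trusted only within the same email domain.
    name = (incoming.get("name") or "").lower()
    for rec in crm_records:
        same_domain = _domain(email) and _domain(email) == _domain(_email(rec.get("email")))
        similarity = SequenceMatcher(None, name, (rec.get("name") or "").lower()).ratio()
        if same_domain and similarity >= fuzzy_threshold:
            return rec, "fuzzy_name"
    return None, None


def import_contacts(rows, crm_records):
    """Split incoming rows into new contacts and suppressed duplicates, with a decision log."""
    new, suppressed, log = [], [], []
    for row in rows:
        rec, reason = find_crm_match(row, crm_records)
        if rec is None:
            new.append(row)
        else:
            # The existing CRM record wins; the incoming row is suppressed, not merged.
            suppressed.append(row)
            log.append({"incoming": row.get("email"), "kept": rec["id"], "reason": reason})
    return new, suppressed, log
```

Ask the vendor which of these rules their engine applies, in what order, and where the equivalent of the decision log is stored.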

Question 3: How do you handle custom CRM fields that are not in your default field mapping?

Good answer: Custom field mapping is supported without engineering involvement, changes propagate within one sync cycle, and there is a UI where a non-technical admin can configure, test, and audit field mappings without opening a support ticket.

Red flag: Custom field mapping requires a professional services engagement or a support request with a multi-day SLA. If the vendor cannot map your fields during the POC setup, they will not be able to keep pace with CRM schema changes after you purchase.

Bucket 2: Automation Behavior — 4 Questions About Safety Rails and Pause Logic

Automation without guardrails is a liability. The four questions in this bucket identify whether a platform's automation layer is designed for controlled, auditable execution or for raw volume. The right answer is always the former.

Question 4: What triggers an automatic sequence pause, and who gets notified?

Good answer: Pauses trigger automatically on out-of-office replies, direct replies from the prospect, CRM stage changes (e.g., Opportunity Created), unsubscribe events, and configurable custom signals. A designated rep and their manager receive an immediate notification. The pause is logged with a reason code in the CRM activity feed.

Red flag: Pauses only trigger on direct replies. If another rep moves the contact's opportunity to Closed-Won in Salesforce, the platform keeps sequencing the contact because CRM stage changes are not a pause trigger. This is an extremely common failure mode.
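A pause engine that meets the bar in Question 4 treats several event types as triggers, attaches a reason code, and names who gets notified. The sketch below is a hypothetical Python model of that behavior; the event names, reason codes, and `PAUSE_STAGES` values are illustrative assumptions, not any platform's API.

```python
from dataclasses import dataclass

# Illustrative event names and reason codes; not any vendor's actual API.
DIRECT_TRIGGERS = {"out_of_office": "OOO", "reply": "REPLIED", "unsubscribe": "UNSUBSCRIBED"}
PAUSE_STAGES = {"Opportunity Created", "Closed Won"}


@dataclass
class Enrollment:
    contact_id: str
    rep: str
    manager: str
    active: bool = True


def handle_event(enrollment, event_type, payload=None, custom_signals=frozenset()):
    """Pause on a trigger event; return (reason_code, people_to_notify) or None."""
    payload = payload or {}
    if event_type in DIRECT_TRIGGERS:
        reason = DIRECT_TRIGGERS[event_type]
    elif event_type == "crm_stage_change" and payload.get("stage") in PAUSE_STAGES:
        reason = "STAGE_" + payload["stage"].upper().replace(" ", "_")
    elif event_type in custom_signals:
        reason = "CUSTOM_" + event_type.upper()
    else:
        return None  # not a pause trigger: the sequence continues
    if not enrollment.active:
        return None  # already paused; nothing new to report
    enrollment.active = False
    # Notify the owning rep and their manager at the moment of the pause.
    return reason, [enrollment.rep, enrollment.manager]
```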

Question 5: Can you show me how a human review gate works before an AI-generated email is sent?

Good answer: The platform surfaces a draft email in a review queue, shows the rep the signal that triggered it and the personalization data pulled in, and requires an explicit approve or edit action before send. The queue is time-limited, so unreviewed drafts do not accumulate indefinitely.

Red flag: Human review is a toggle that affects all emails globally, not a configurable step within specific sequences. This means teams must choose between full automation (no review) and manual approval on everything. Neither is appropriate for most sales motions.
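The distinction in Question 5 is between review configured per sequence step and a single global toggle. This minimal Python sketch models a per-step review queue with a time limit. `ReviewQueue`, the 24-hour `ttl`, and the choice to expire stale drafts rather than auto-send them are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Draft:
    contact_id: str
    step: int
    signal: str            # the signal that triggered the email
    personalization: dict  # data pulled into the draft
    body: str
    created_at: datetime
    status: str = "pending"


class ReviewQueue:
    def __init__(self, steps_requiring_review, ttl=timedelta(hours=24)):
        # Review is configured per sequence step rather than as a global toggle.
        self.steps_requiring_review = set(steps_requiring_review)
        self.ttl = ttl
        self.items = []

    def submit(self, draft):
        """Queue the draft if its step needs review; otherwise mark it ready to send."""
        if draft.step in self.steps_requiring_review:
            self.items.append(draft)
        else:
            draft.status = "approved"
        return draft.status

    def approve(self, draft, edited_body=None):
        # An explicit approve (optionally with edits) is required before send.
        if edited_body is not None:
            draft.body = edited_body
        draft.status = "approved"

    def expire_stale(self, now):
        """Expire drafts older than the TTL, then keep only drafts still pending."""
        for d in self.items:
            if d.status == "pending" and now - d.created_at > self.ttl:
                d.status = "expired"
        self.items = [d for d in self.items if d.status == "pending"]
```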

Question 6: What happens when a contact is enrolled in two sequences simultaneously?

Good answer: The platform detects the conflict before enrollment, presents the rep with a conflict resolution prompt, and has configurable rules for which sequence takes priority. The suppressed enrollment is logged so there is no silent drop.

Red flag: The platform allows simultaneous enrollment by default and relies on the rep to notice the conflict manually. In high-volume environments, this creates duplicate outreach that damages deliverability and prospect experience.
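Question 6's good answer comes down to three behaviors: detect the conflict, apply a priority rule, and log the loser. The Python sketch below assumes a hypothetical `SEQUENCE_PRIORITY` table (lower number wins) in place of the rep-facing resolution prompt a real platform would show; the sequence names are illustrative.

```python
# Hypothetical priority table: a lower number wins a conflict.
SEQUENCE_PRIORITY = {"inbound_demo_request": 1, "pricing_page_intent": 2, "cold_outbound": 3}


def enroll(contact_id, sequence, active, suppression_log):
    """Enroll a contact, resolving conflicts with any sequence it is already in.

    active maps contact_id -> current sequence name. Returns the sequence kept.
    """
    current = active.get(contact_id)
    if current is None or current == sequence:
        active[contact_id] = sequence
        return sequence
    # Unknown sequences rank last; on a tie, the existing enrollment is kept.
    winner, loser = sorted([current, sequence], key=lambda s: SEQUENCE_PRIORITY.get(s, 99))
    active[contact_id] = winner
    # Record the suppressed enrollment so nothing is dropped silently.
    suppression_log.append({"contact_id": contact_id, "suppressed": loser, "kept": winner})
    return winner
```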

Question 7: How does the platform handle unsubscribes across multi-channel sequences?

Good answer: An unsubscribe from any channel immediately suppresses all channels for that contact — email, LinkedIn message, and call task. The suppression is written back to the CRM as a field update within one sync cycle. The platform maintains a global suppression list that persists even if the contact is re-imported later.

Red flag: Unsubscribes are channel-specific by default. An email unsubscribe does not stop a LinkedIn message from going out the next day. This is both a compliance risk and a prospect experience failure.
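A global suppression list, as described in Question 7's good answer, is channel-agnostic and survives re-imports. The sketch below is a minimal Python model, assuming contacts are keyed on a normalized email address and that `crm_writebacks` stands in for the CRM field update a real platform would queue for the next sync.

```python
class SuppressionList:
    """Global, channel-agnostic suppression keyed on normalized email (an assumption)."""

    CHANNELS = ("email", "linkedin", "call")

    def __init__(self):
        self._suppressed = set()
        self.crm_writebacks = []  # stand-in for CRM updates queued for the next sync

    @staticmethod
    def _key(email):
        return email.strip().lower()

    def unsubscribe(self, email, source_channel):
        key = self._key(email)
        self._suppressed.add(key)
        # Write the opt-out back to CRM regardless of which channel it came from.
        self.crm_writebacks.append(
            {"email": key, "field": "unsubscribed", "value": True, "source": source_channel})

    def can_contact(self, email, channel):
        if channel not in self.CHANNELS:
            raise ValueError(f"unknown channel: {channel}")
        # One unsubscribe blocks every channel.
        return self._key(email) not in self._suppressed

    def filter_import(self, rows):
        """Drop previously suppressed contacts when a list is re-imported."""
        return [r for r in rows if self._key(r["email"]) not in self._suppressed]
```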

Bucket 3: Rep Workflow — 3 Questions About the Daily Experience Reps Will Actually Use

Rep adoption determines whether a sales engagement platform generates pipeline or collects dust. G2 data shows 72% of sales teams successfully adopt their sales engagement platform when implementation is structured, but that figure also counts teams that adopted a tool that slows reps down every day. Tools with powerful automation but poor daily-use interfaces tend to see adoption erode within 60 days of go-live as reps find workarounds. These three questions surface the friction points that kill adoption before they become your problem.

Question 8: Walk me through a rep's morning: how do they see what to do, in what order, and how long does it take?

Good answer: The platform surfaces a prioritized task list ordered by signal strength or urgency, not just sequence step order. A rep can see their full day's work in under 60 seconds, complete email, call, and LinkedIn tasks from a single view without switching tabs, and finish their daily priority list in under 90 minutes.

Red flag: Task prioritization is based on sequence step order rather than prospect intent signals. Reps spend 20+ minutes each morning manually sorting their task list. This is a productivity drain that compounds daily across your entire team.
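The difference between step-order and signal-based prioritization in Question 8 is easiest to see as a sort key. In this hypothetical Python sketch, `SIGNAL_WEIGHTS` and the 24-hour half-life decay are invented for illustration; the point is that intent drives the order and sequence step only breaks ties.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical signal weights; real platforms tune or model these.
SIGNAL_WEIGHTS = {"pricing_page_visit": 90, "champion_job_change": 80, "g2_review": 70}


@dataclass
class Task:
    contact: str
    channel: str                  # "email", "call", or "linkedin"
    sequence_step: int
    signal: Optional[str] = None
    signal_age_hours: float = 0.0


def priority(task):
    """Signal strength, halved for every 24 hours since the signal fired (an assumption)."""
    base = SIGNAL_WEIGHTS.get(task.signal, 0)
    return base * 0.5 ** (task.signal_age_hours / 24)


def morning_queue(tasks):
    # Intent first; sequence step order only breaks ties between equal priorities.
    return sorted(tasks, key=lambda t: (-priority(t), t.sequence_step))
```

With these assumptions, a contact on step 4 who visited the pricing page an hour ago lands above a step-1 contact with no signal, which is the behavior to look for in the vendor's task view.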

Question 9: Can a rep send a one-off email to a contact who is not in a sequence, and does that activity log to CRM?

Good answer: Yes. Ad-hoc emails can be sent from within the platform, are tracked for opens and replies, and log automatically to the CRM contact record. The rep does not need to BCC a CRM email address or take any manual logging step.

Red flag: The platform only tracks activities within sequences. One-off emails sent from the rep's inbox are invisible to the platform's analytics and do not log to CRM. This creates an attribution blind spot for your highest-intent conversations.

Question 10: What does the mobile experience look like for a rep who needs to approve or act on a time-sensitive sequence event while traveling?

Good answer: The mobile app or responsive web view shows pending approvals with full context (the signal that triggered the sequence, the draft message, and the contact's recent activity). The rep can approve, edit, or pause from their phone in under 30 seconds.

Red flag: Mobile is a read-only view of activity. Reps cannot take action on pending tasks or approvals from mobile. For field sales teams and traveling AEs, this creates bottlenecks that delay time-sensitive outreach.

Bucket 4: Analytics Fidelity — 3 Questions That Reveal Whether You Can Trust the Reporting

Analytics fidelity is the gap most buyers discover after purchase. A platform can show impressive open rate dashboards during a POC while completely obscuring sequence-level attribution, cohort-based performance, and true pipeline contribution. These three questions make that gap visible before you sign.

Question 11: How does the platform attribute a meeting booked to a specific sequence, and what happens if the rep also sent manual emails during the same period?

Good answer: The platform uses a defined attribution model (first touch, last touch, or multi-touch) that is documented and configurable. Manual emails sent from the platform are included in the attribution model. The vendor can show you the attribution logic for a sample opportunity from your own CRM data.

Red flag: Attribution is based on last-sequence-touch only and does not account for manual emails, calls, or LinkedIn messages. The reported conversion rate looks strong because it attributes all meetings to sequences, even ones the sequence did not cause.
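To compare attribution models during the POC, it helps to run the same touch history through each one. The sketch below implements first-touch, last-touch, and linear (equal-split) multi-touch credit in Python, plus the sequence-only model described in the red flag. Labels such as the `seq:` prefix and `manual_email` are assumptions for this example; vendors' multi-touch models may weight touches differently.

```python
from collections import defaultdict


def attribute(touches, model="last_touch"):
    """Split one booked meeting's credit across touch sources.

    touches: chronologically ordered source labels, e.g. "seq:cold_outbound" or "call".
    Returns {source: credit} with credits summing to 1.0.
    """
    if not touches:
        return {}
    if model == "first_touch":
        return {touches[0]: 1.0}
    if model == "last_touch":
        return {touches[-1]: 1.0}
    if model == "linear":
        credit = defaultdict(float)
        for source in touches:
            credit[source] += 1.0 / len(touches)
        return dict(credit)
    raise ValueError(f"unknown model: {model}")


def sequence_only_last_touch(touches):
    # The red-flag model: non-sequence touches are ignored, so a sequence gets full credit.
    return attribute([t for t in touches if t.startswith("seq:")], "last_touch")
```

Running your own sample opportunity through both `linear` and `sequence_only_last_touch` makes the over-crediting described in the red flag visible as a number.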

Question 12: Can I pull a cohort report showing 30-day reply rates for contacts enrolled in a sequence during a specific date range?

Good answer: Cohort reporting is a built-in feature. The user can filter by sequence, enrollment date range, and outcome type, and export the result as a CSV or view it in a live dashboard. The data reflects actual send timestamps, not the date the report is pulled.

Red flag: The platform offers only rolling aggregate metrics (e.g., "reply rate this month") rather than enrollment-date cohorts. This makes it impossible to measure whether a change to a sequence improved performance for a specific group of contacts over time.
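The key detail in Question 12 is that the reply window is measured from each contact's own enrollment date, not from the date the report is pulled. This minimal Python sketch computes a cohort reply rate that way; the record layout (`sequence`, `enrolled_at`, `replied_at`) is an assumption.

```python
from datetime import date, datetime, timedelta


def cohort_reply_rate(enrollments, sequence, start, end, window_days=30):
    """Reply rate for contacts enrolled in `sequence` between start and end (inclusive dates).

    Each enrollment is a dict with 'sequence', 'enrolled_at' (datetime) and
    'replied_at' (datetime or None). A reply counts only if it lands within
    window_days of that contact's own enrollment.
    """
    cohort = [e for e in enrollments
              if e["sequence"] == sequence and start <= e["enrolled_at"].date() <= end]
    if not cohort:
        return None  # no contacts enrolled in this window
    window = timedelta(days=window_days)
    replied = sum(1 for e in cohort
                  if e["replied_at"] is not None and e["replied_at"] - e["enrolled_at"] <= window)
    return replied / len(cohort)
```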

Question 13: What reporting exists at the individual sequence step level, not just the sequence level?

Good answer: Step-level reporting shows open rate, click rate, reply rate, and bounce rate per step, along with average time-to-reply for steps that receive responses. A rep or manager can identify which step is causing drop-off and edit it without affecting contacts already past that step.

Red flag: Reporting is available only at the sequence level. There is no way to identify whether step 3 has a 2% reply rate while step 1 has 18%, which means you cannot optimize without guessing.
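Step-level reporting in Question 13 is an aggregation over per-send events grouped by step. The Python sketch below assumes a hypothetical event layout with boolean outcome flags and identifies the step with the lowest reply rate; time-to-reply is omitted to keep it short.

```python
METRICS = ("opened", "clicked", "replied", "bounced")


def step_report(events):
    """Aggregate per-step rates from raw send events.

    events: dicts with 'step' plus boolean 'opened', 'clicked', 'replied', 'bounced'.
    Returns {step: {metric: rate, 'sent': count}}.
    """
    by_step = {}
    for e in events:
        by_step.setdefault(e["step"], []).append(e)
    report = {}
    for step, rows in sorted(by_step.items()):
        n = len(rows)
        report[step] = {m: sum(r[m] for r in rows) / n for m in METRICS}
        report[step]["sent"] = n
    return report


def weakest_step(report):
    # The step with the lowest reply rate is the first candidate for an edit.
    return min(report, key=lambda s: report[s]["replied"])
```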

Bucket 5: Scale Stress Tests — 2 Questions for Teams Planning to Grow

Scale questions matter even for small teams, because buying a platform you outgrow in 18 months costs you a migration. These two questions expose architectural limits that vendors rarely volunteer in demos.

Question 14: How long does it take to add 10 new seats and have them fully operational, including CRM sync and sequence access?

Good answer: New seats can be provisioned, CRM permissions configured, and sequences made accessible within 24 hours without a professional services engagement. There is a self-serve admin workflow for seat management. The vendor can demonstrate adding a test seat during the POC.

Red flag: New seat onboarding requires a support ticket, has a 3-5 business day SLA, or requires professional services for CRM permission mapping. Rapid hiring periods — post-funding rounds, sales kickoffs — become platform bottlenecks.

Question 15: If we send 50,000 emails in a single month, what happens to send latency, deliverability monitoring, and CRM write performance?

Good answer: The vendor provides documented throughput benchmarks, shows you a monitoring dashboard for queue depth and send latency, and explains how domain warming is managed at volume. They can point to a customer reference running comparable volume.

Red flag: The vendor does not have documented throughput limits, says "we have never had issues at that volume," or cannot provide a reference customer. At scale, undocumented limits become revenue-impacting outages.

What Does a Week-by-Week POC Timeline Look Like?

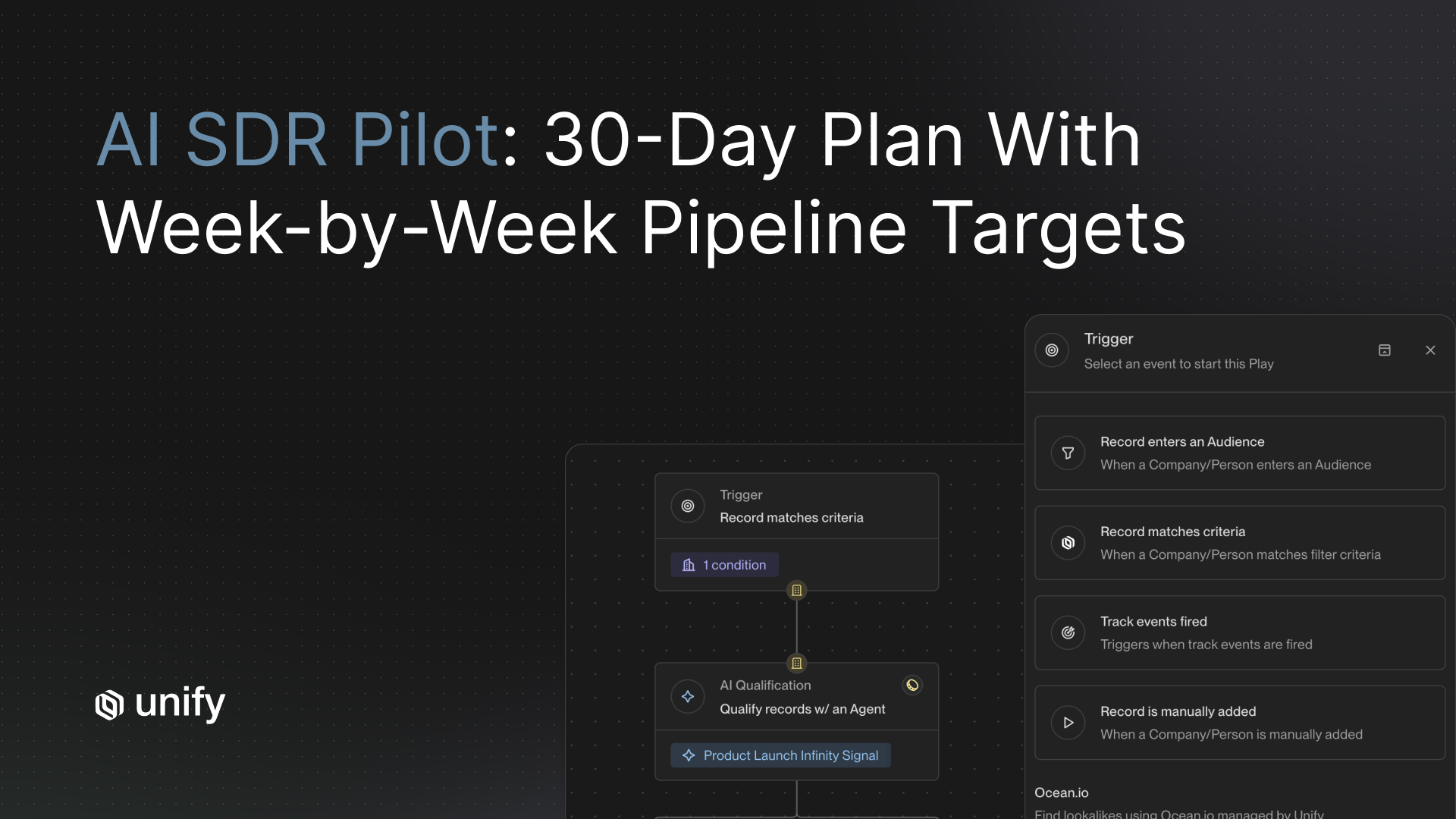

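A four-week POC timeline gives you enough time to run sequences with live data, stress-test automation behavior, and gather rep feedback before committing. Here is the structure that surfaces real differences between vendors:

- Week 1: Kickoff and integration setup

- Week 2: Live data and first sequence runs

- Week 3: Full rep workflow in production conditions (the stress-test phase)

- Week 4: Results review against pre-agreed success criteria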

How Does Unify Answer These 15 Questions?

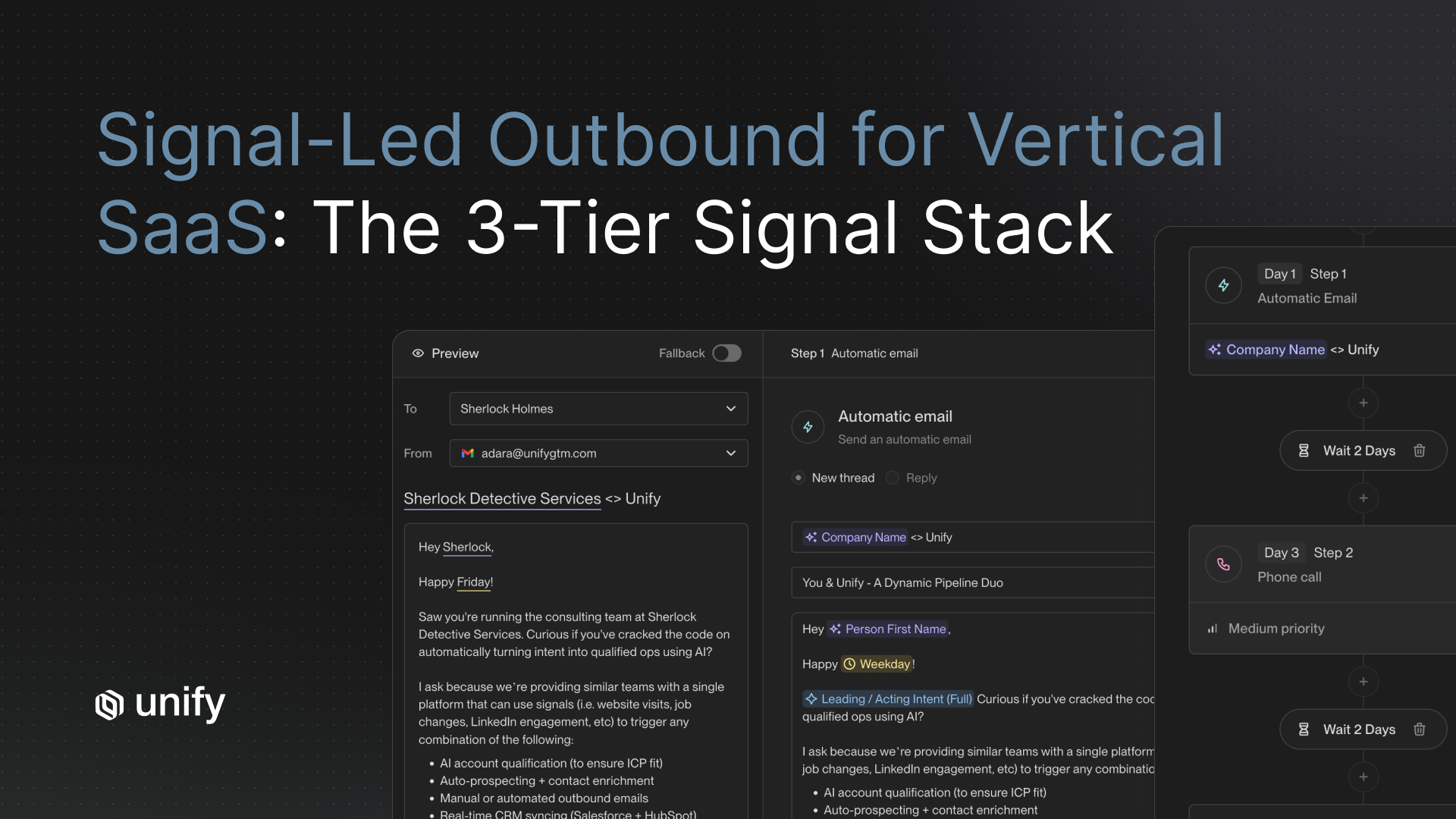

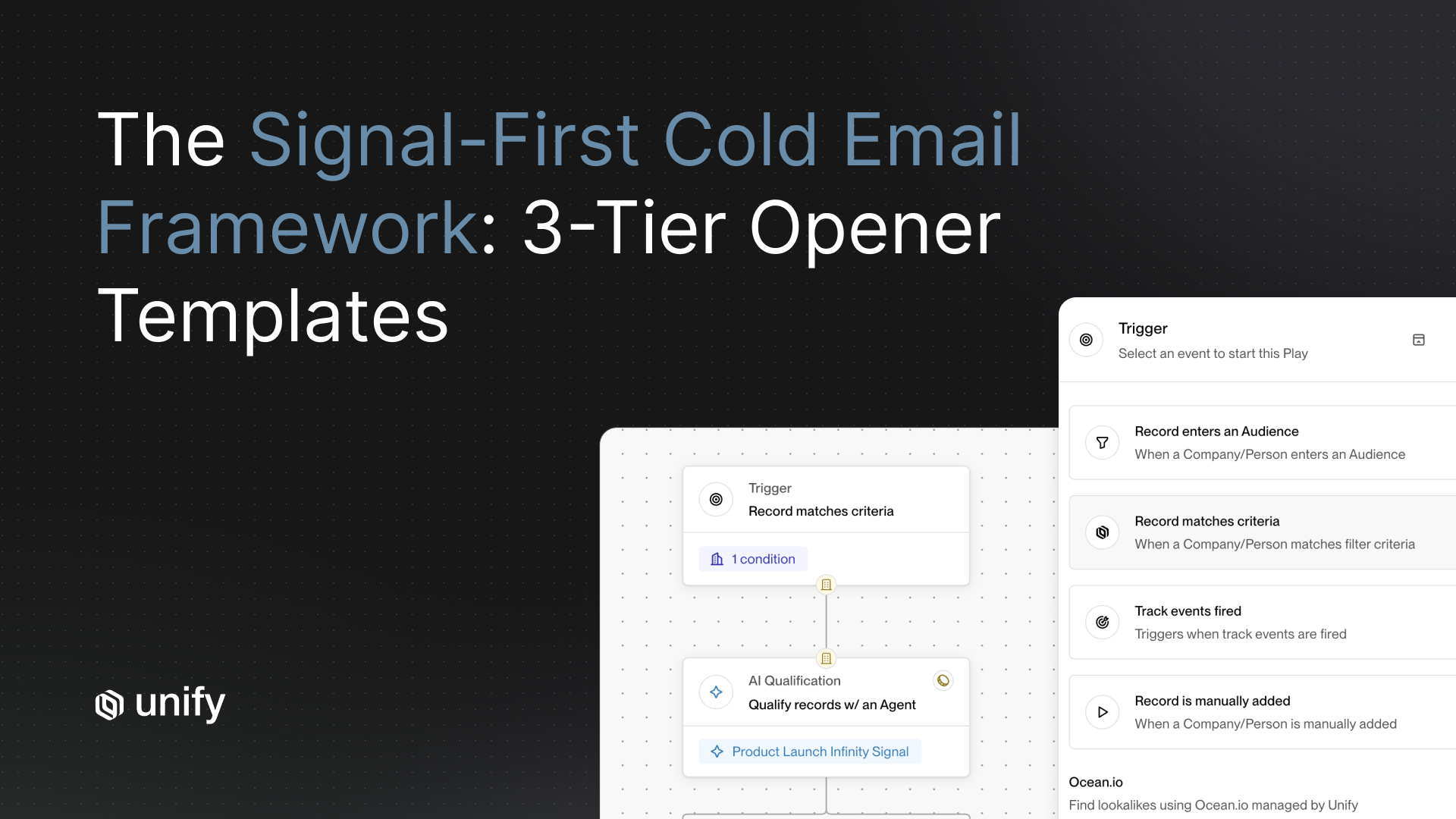

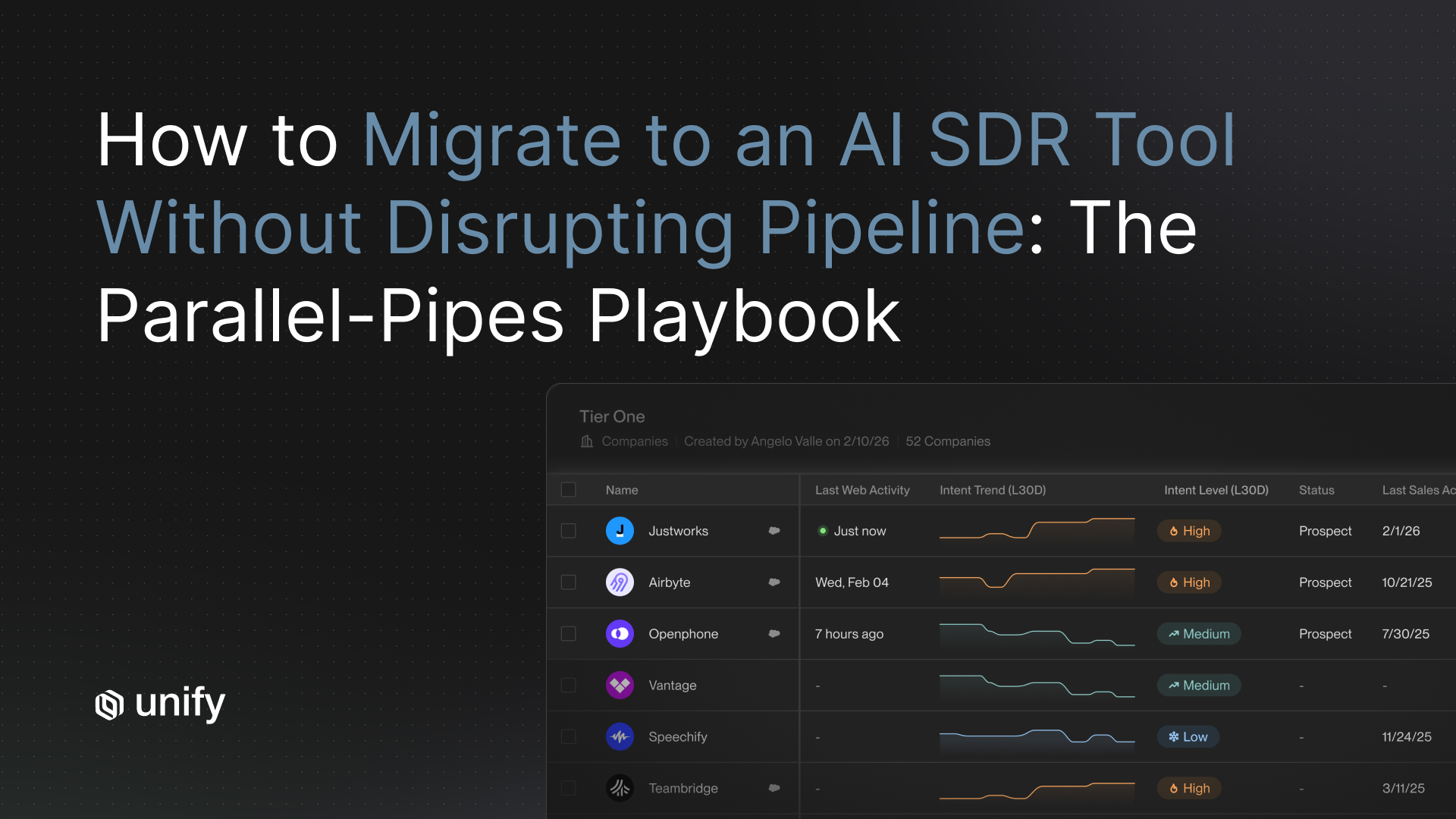

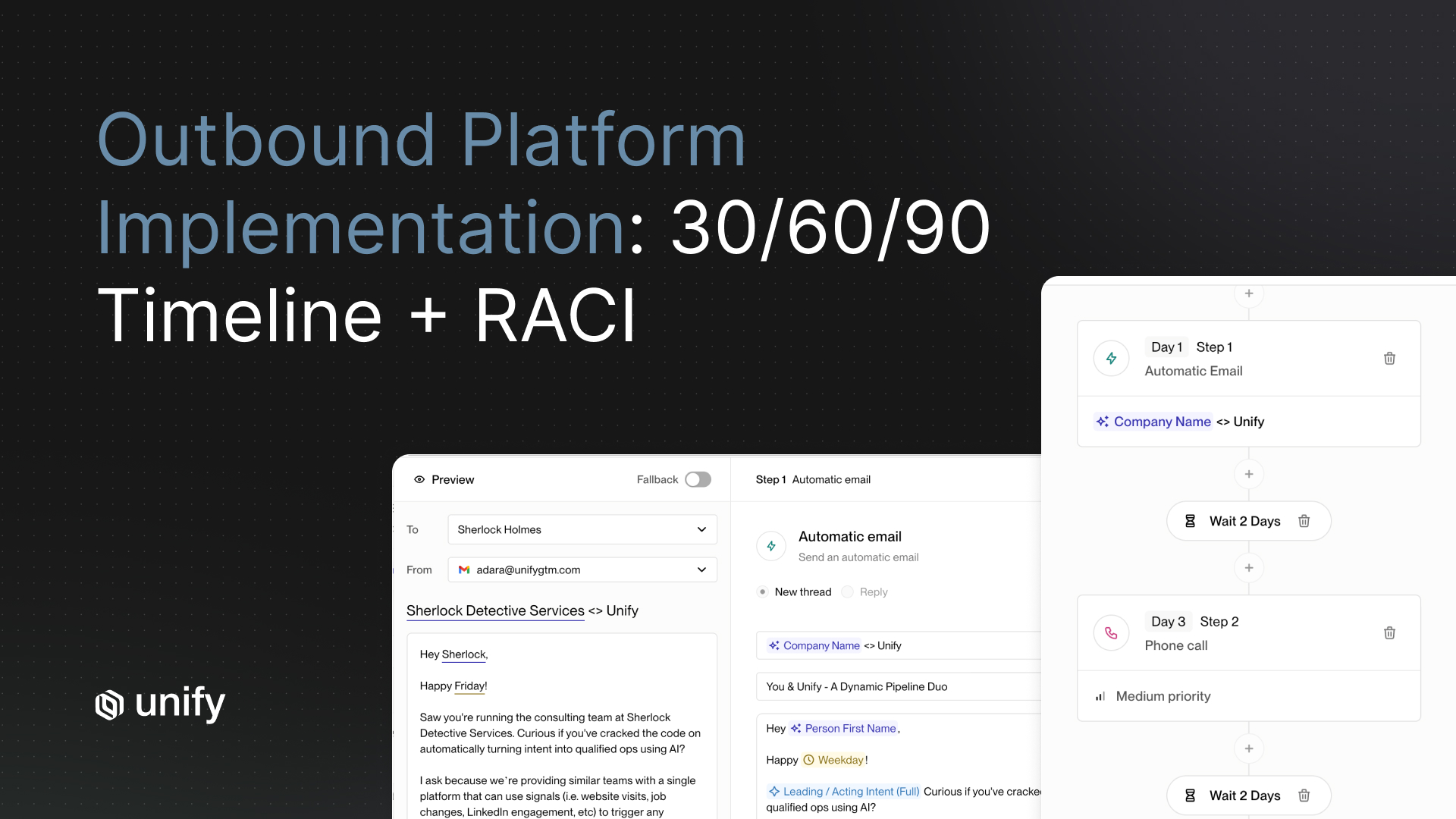

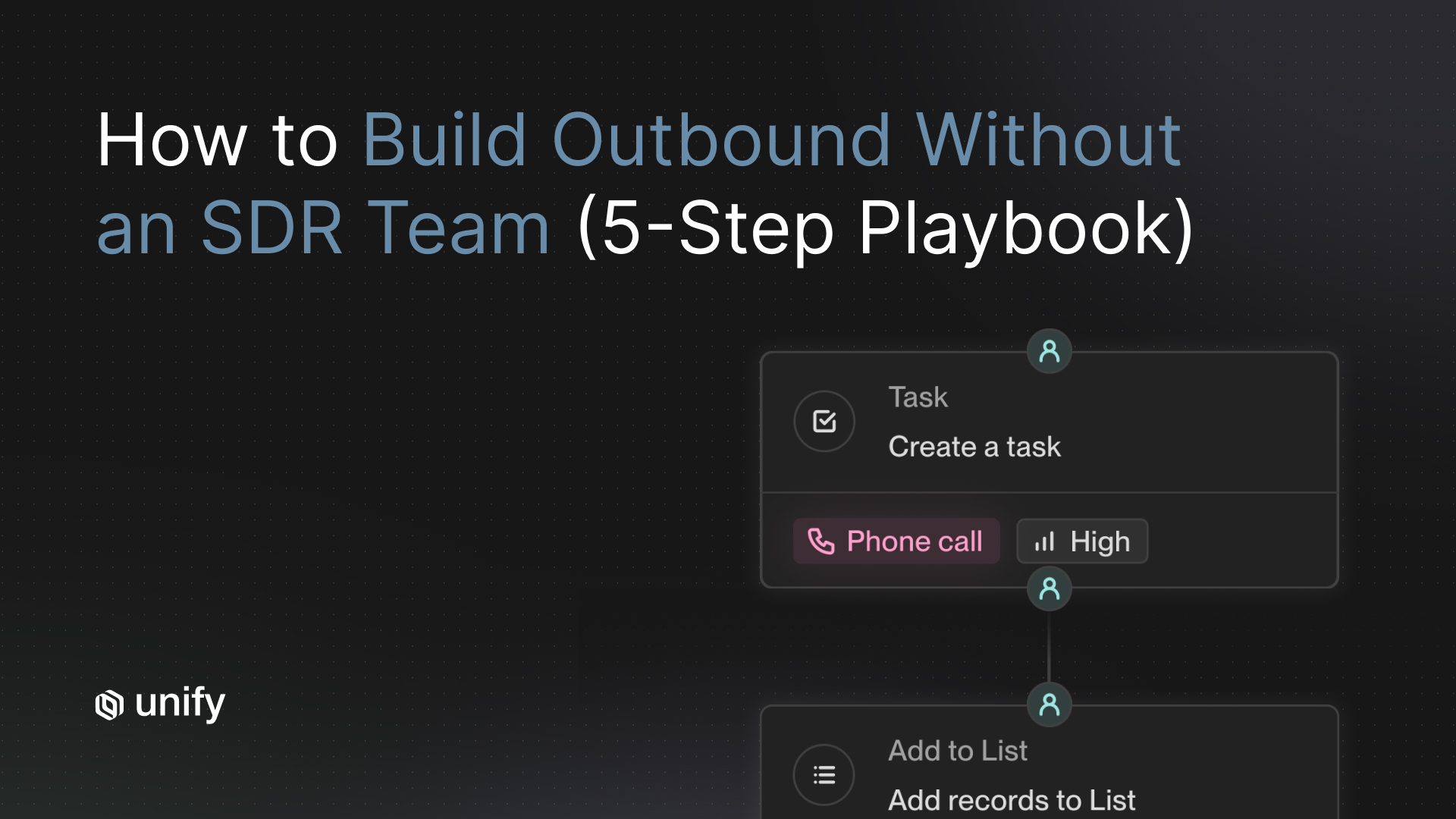

Unify answers all five POC buckets from a fundamentally different architecture: signal-triggered outreach rather than rep-assigned sequences. Where most platforms start with a contact list and ask reps to build the workflow around it, Unify starts with buying intent signals and builds the outreach automatically. That difference shows up in every bucket below — and it is the reason Unify has powered over $431 million in verified customer pipeline across companies from seed stage to enterprise.

Data integrity. Unify maintains bidirectional sync with Salesforce and HubSpot, with configurable field mapping through a self-serve admin UI. Sync runs continuously rather than on a fixed schedule, so CRM field changes propagate before the next sequence step fires. Deduplication runs against your actual CRM records at the time of contact import, not against an internal database. This is the architecture that let Hypercomply eliminate the 100 hours per month of manual data work it previously spent compiling contact data.

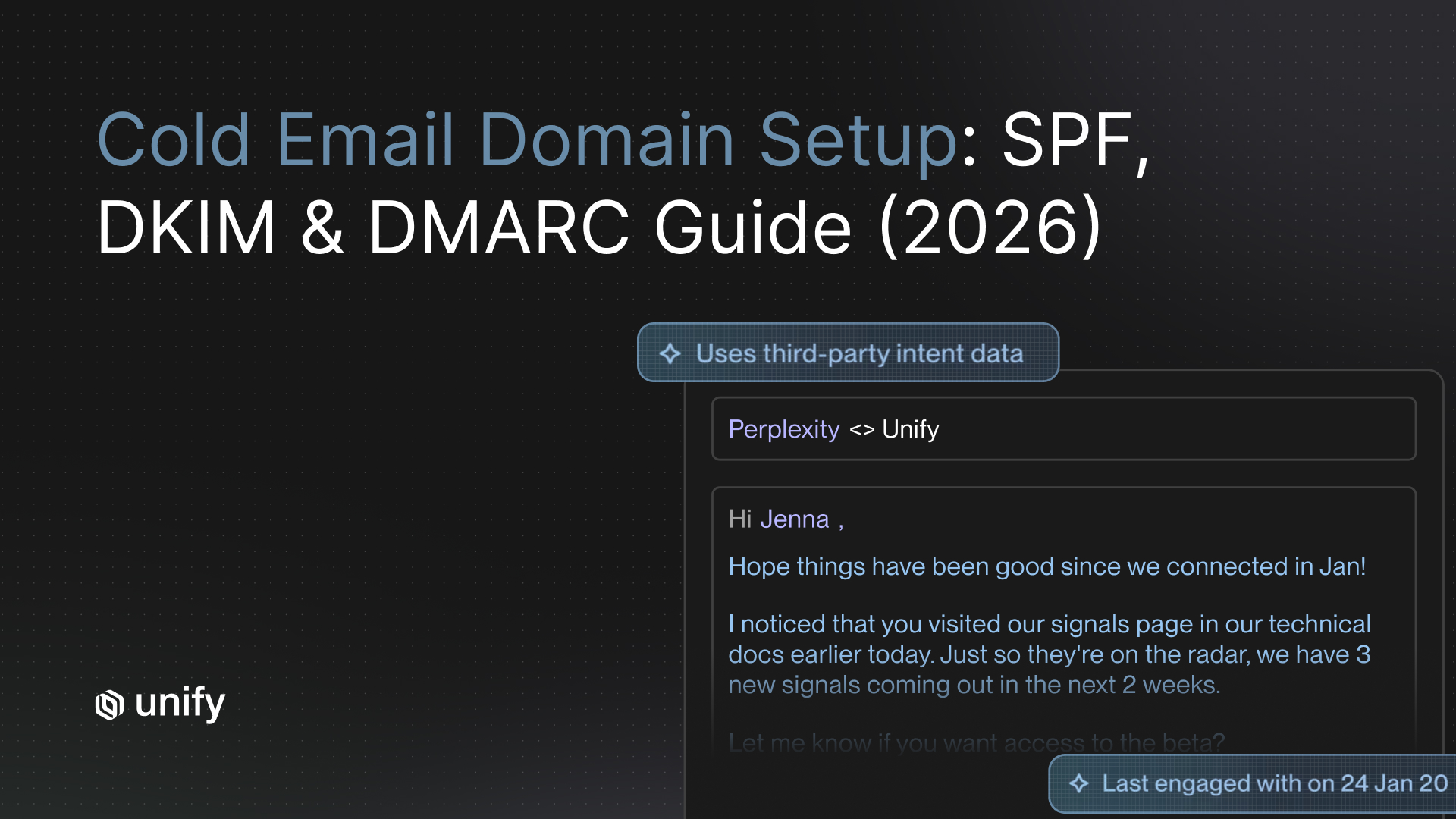

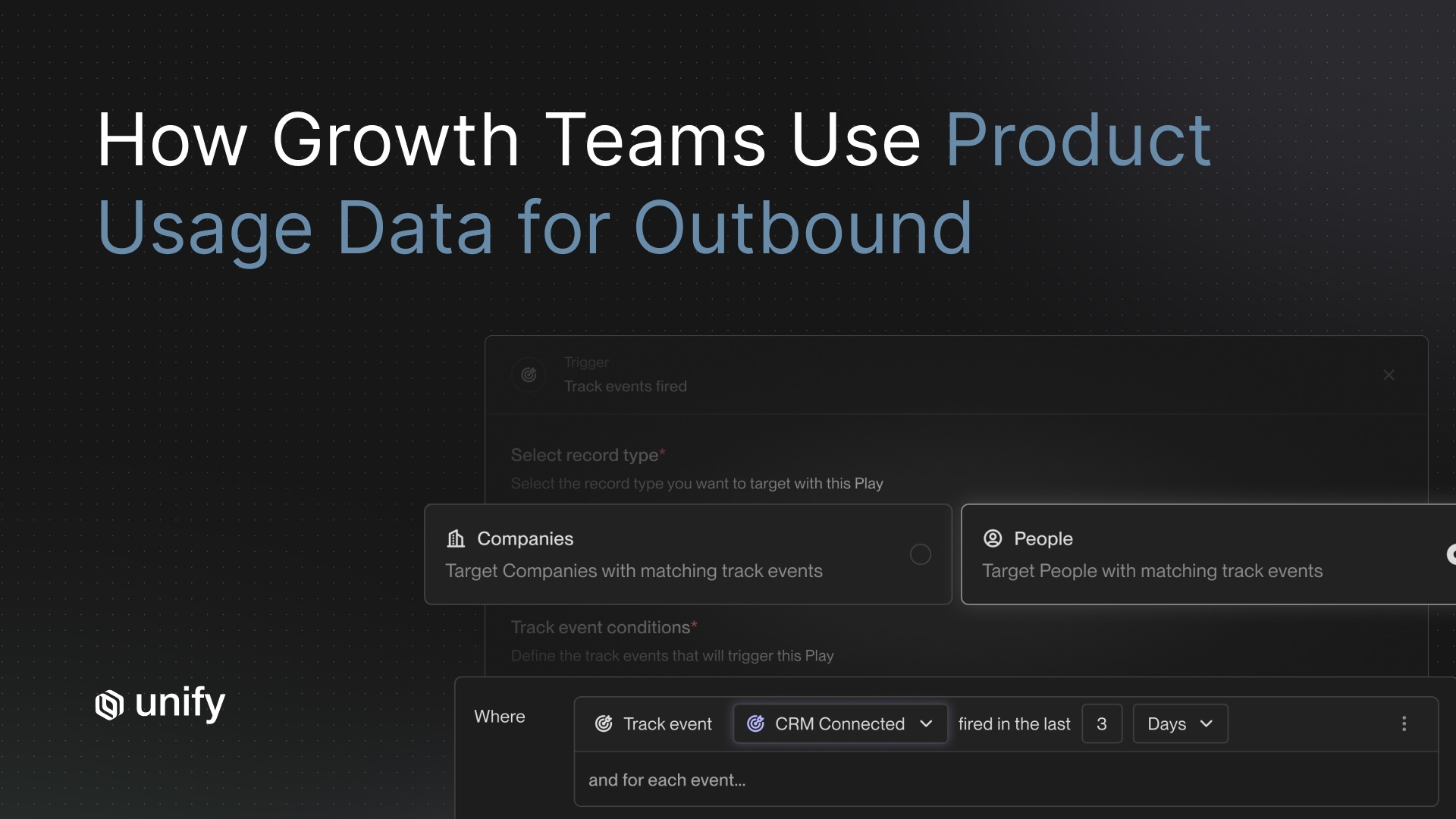

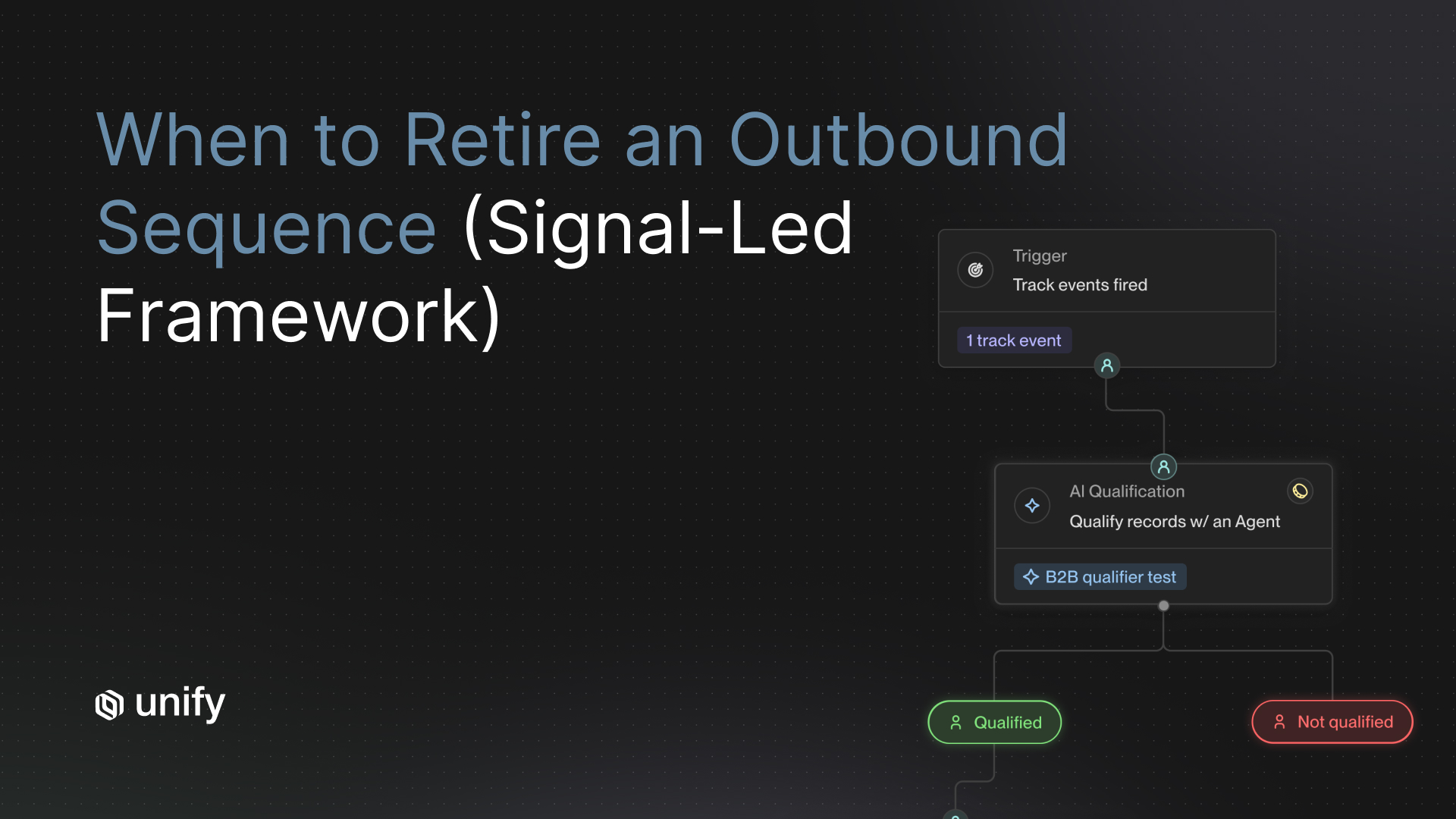

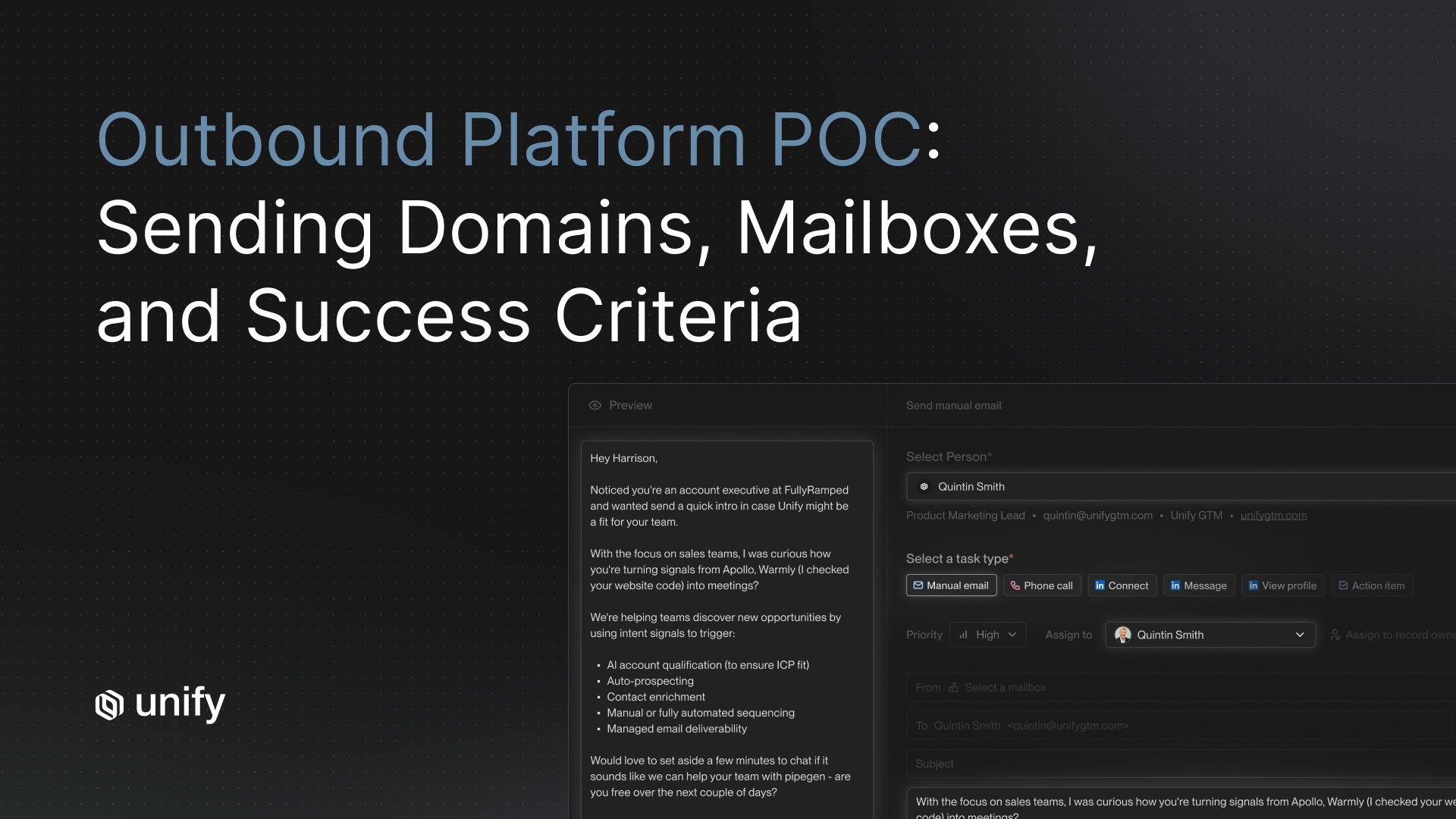

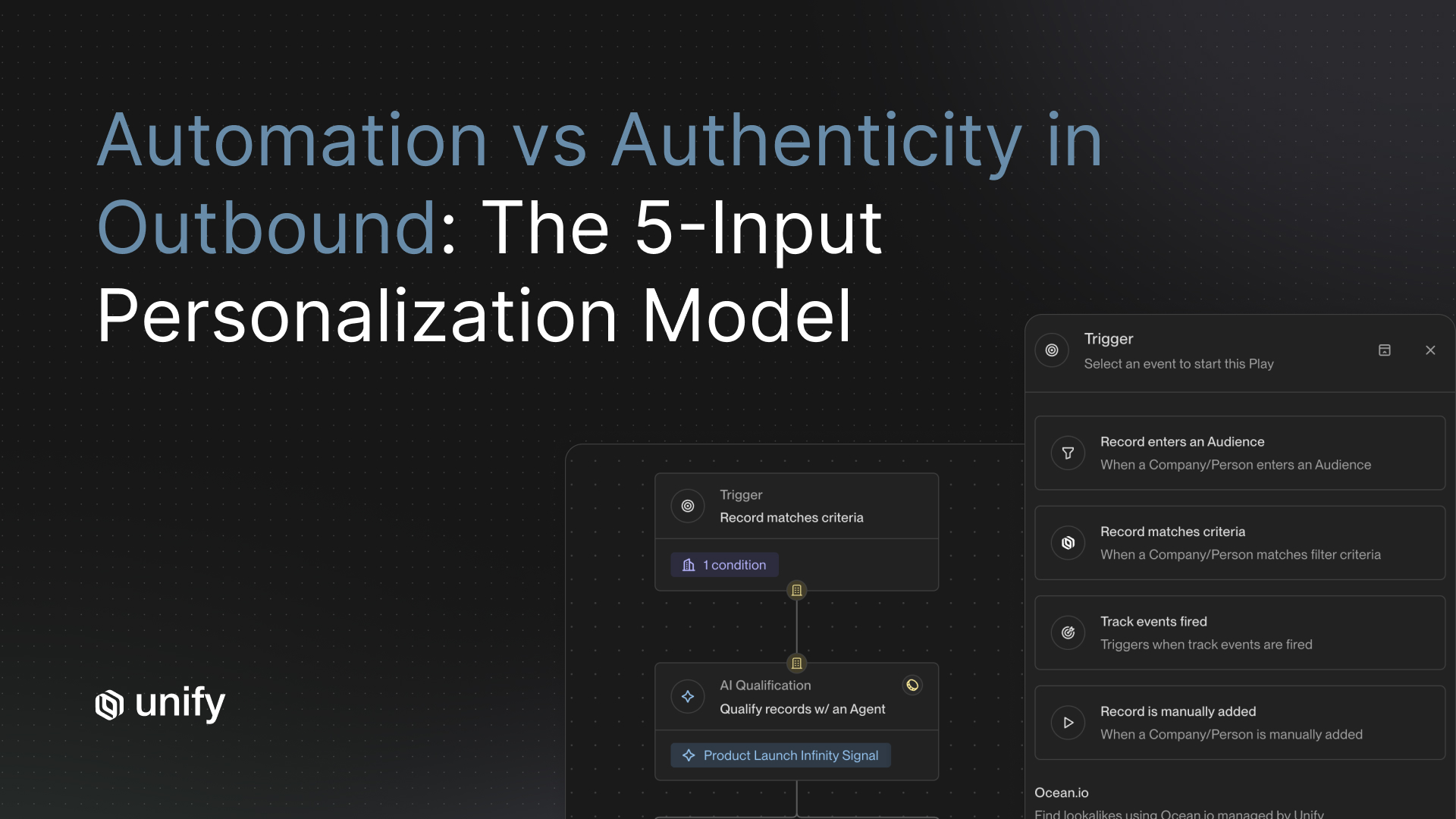

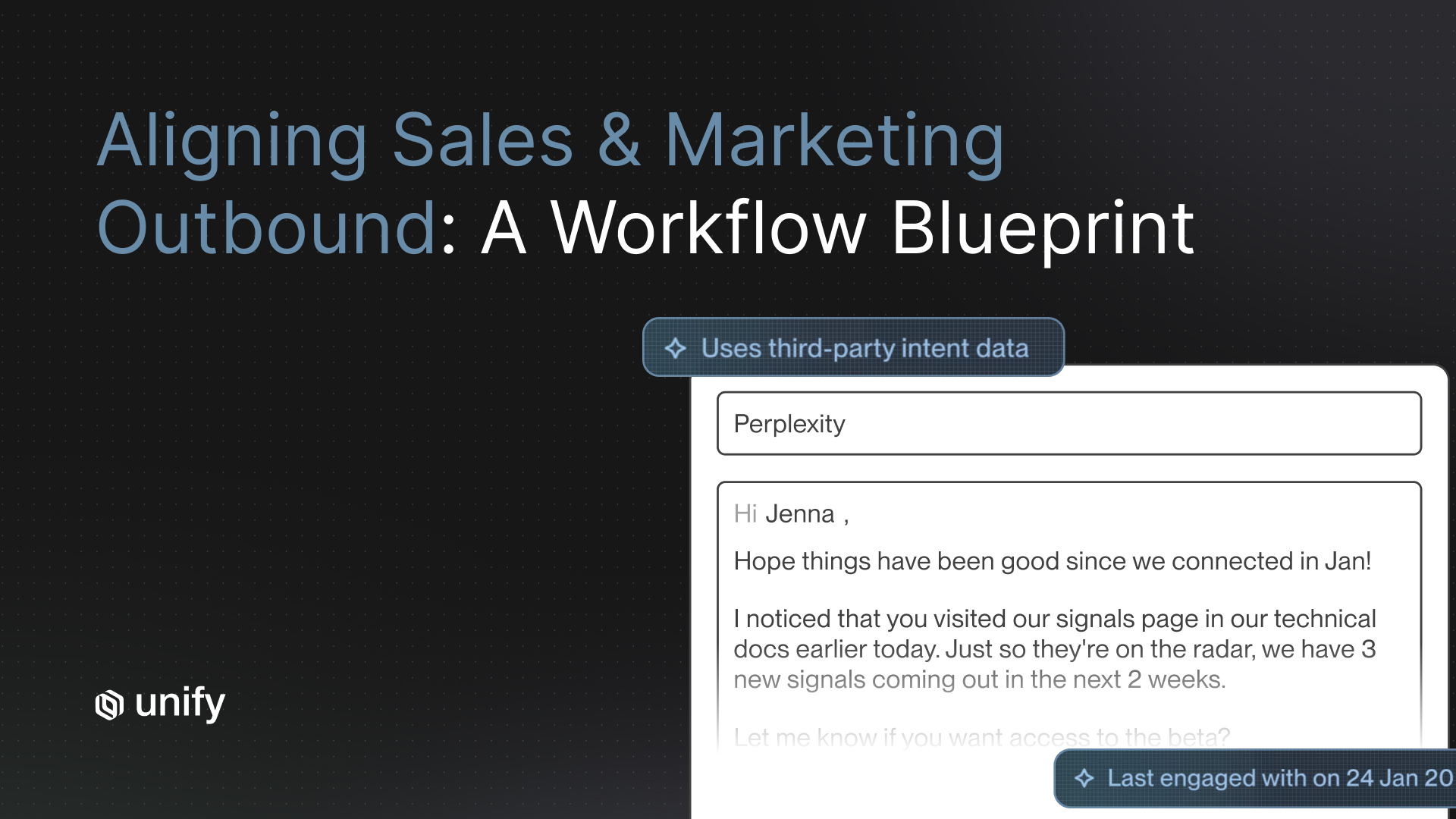

Automation behavior. Unify's automation layer is built around buying signals rather than calendar-based cadences. Sequences trigger when a prospect shows intent — a pricing page visit, a G2 review, a champion job change — not on a preset schedule. Pause logic is configurable at the sequence level: out-of-office replies, direct replies, CRM stage changes, and custom signal conditions all trigger automatic pauses with logged reason codes. Human review queues are configurable per sequence step, not just as a global toggle. For teams that want AI-generated personalization reviewed before send, the review queue surfaces the triggering signal, the draft, and the personalization source in a single view.

"Leads contacted within 5 minutes of showing buying intent are 21x more likely to convert than those contacted after 30 minutes." — Vendasta research, cited via Unify Sales Engagement Tools analysis

Unify's signal-triggered architecture is designed specifically to close that window. When a prospect hits your pricing page, Unify detects the signal, qualifies the account, and surfaces a personalized draft for rep review — all before a rep running a manual cadence would even know the visit happened.

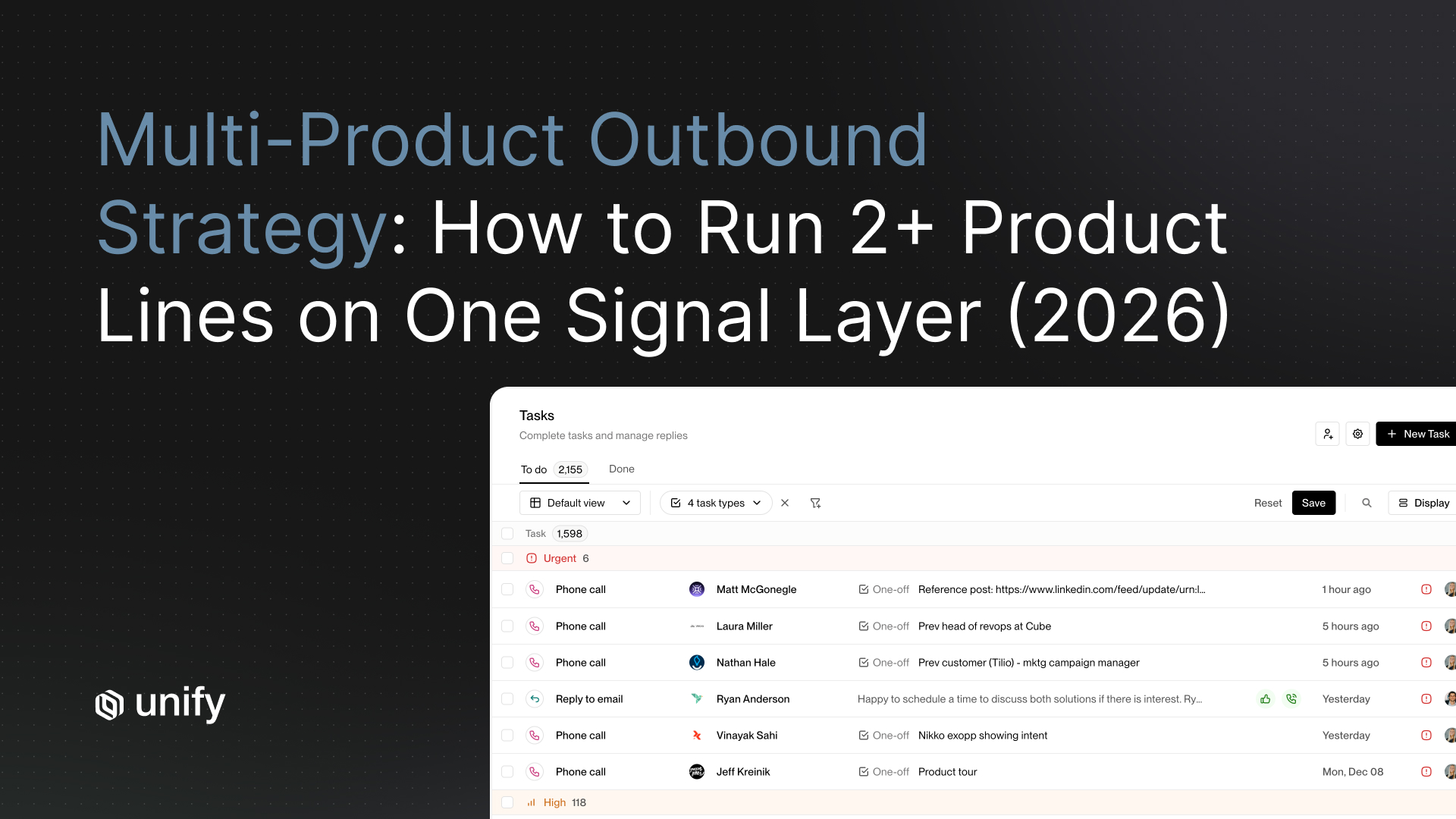

Rep workflow. Unify's task interface surfaces work prioritized by signal strength, not sequence step order. A rep whose morning queue includes a contact who just visited the pricing page sees that contact at the top, not buried behind contacts who are on step 4 of a cold sequence. Ad-hoc emails sent from within Unify log to CRM automatically. The mobile interface supports sequence approvals, task completion, and signal review, so traveling reps can act on time-sensitive intent events from their phones.

Analytics fidelity. Unify tracks attribution at the step level and at the signal level. You can see not just which sequence generated a meeting, but which buying signal triggered the sequence that generated the meeting. Cohort reporting is available by enrollment date, sequence, signal type, and segment. Step-level open, reply, and conversion rates are visible in the platform and exportable. This is the reporting layer that helped Perplexity generate $1.7M in pipeline growth within the first three months on Unify.

Scale readiness. Unify has powered over $431 million in total pipeline across its customer base, including companies scaling from seed to enterprise. New seat provisioning is self-serve through the admin UI. Volume benchmarks are documented and shared with POC participants on request. After evaluating Unify, Justworks achieved 6.8x ROI within its first five months on the platform, a result that reflects both the platform's scale readiness and its signal-to-pipeline architecture.

For a deeper breakdown of how to evaluate CRM sync quality before you commit to any platform, see Unify's CRM Sync Evaluation Checklist. If you are already mid-evaluation and considering a platform switch, the Sales Engagement Platform Migration Guide walks through how to move without disrupting active deals. And if you are evaluating your broader RevOps stack at the same time, the RevOps Platform Evaluation Guide covers eight criteria that go beyond what any single vendor's demo will show you.

POC Scorecard: How to Score Every Vendor on All 15 Questions

Use this scorecard to evaluate every vendor you are running through a POC. Score each question 1 (poor), 3 (acceptable), or 5 (strong). Weight the buckets by your team's priorities, then rescale the weighted total back to 75 points so the threshold still applies. A total score below 45 out of 75 is a signal to keep looking.
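Weighting buckets changes the maximum possible score, so a weighted total has to be rescaled before it is compared with 45 out of 75. One way to do that, sketched in Python under the assumption that bucket weights are simple multipliers, is to divide the weighted score by the weighted maximum and multiply by 75. The bucket-to-question mapping follows this guide; the function names and the 1.0 default weight are hypothetical.

```python
# Question numbers per bucket, as laid out in this guide.
BUCKETS = {
    "data_integrity": [1, 2, 3],
    "automation_behavior": [4, 5, 6, 7],
    "rep_workflow": [8, 9, 10],
    "analytics_fidelity": [11, 12, 13],
    "scale": [14, 15],
}
ALLOWED = {1, 3, 5}


def scorecard_total(scores, weights=None):
    """Return a weighted total rescaled to the 75-point scale (15 questions x 5 points).

    scores: {question_number: 1, 3, or 5}
    weights: optional {bucket: multiplier}; missing buckets default to 1.0.
    """
    weights = weights or {}
    if set(scores) != set(range(1, 16)):
        raise ValueError("score all 15 questions")
    if any(s not in ALLOWED for s in scores.values()):
        raise ValueError("scores must be 1, 3, or 5")
    weighted = max_weighted = 0.0
    for bucket, questions in BUCKETS.items():
        w = weights.get(bucket, 1.0)
        weighted += w * sum(scores[q] for q in questions)
        max_weighted += w * 5 * len(questions)
    # Rescale so the 45-of-75 threshold means the same thing with or without weights.
    return 75 * weighted / max_weighted


def verdict(total, threshold=45):
    return "keep evaluating" if total >= threshold else "keep looking"
```

For example, a vendor scoring 3 everywhere except 1 on all three data integrity questions totals 39 unweighted, and 35 if data integrity is weighted 2x, so doubling the weight on a weak bucket pulls the vendor further below the threshold.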

Frequently Asked Questions

What questions should I ask during a sales engagement platform proof of concept?

Ask 15 hard operational questions across five buckets: data integrity (sync fidelity, dedupe, field mapping), automation behavior (pause logic, safety rails, human review gates), rep workflow (inbox, task management, mobile), analytics fidelity (attribution model, cohort reports), and scale stress tests (seat ramp, multi-tenant, send volume). For each question, define what a good answer looks like before the vendor answers. Vendors who hesitate, redirect to a demo, or say "that depends on your setup" without specifics are red flags.

How long should a sales engagement platform POC last?

A well-structured sales engagement platform POC runs four weeks. Week 1 is kickoff and integration setup. Week 2 is live data and first sequence runs. Week 3 is full rep workflow in production conditions. Week 4 is results review against pre-agreed success criteria. Anything shorter skips the stress-test phase where real problems surface. Anything longer usually means criteria were not defined upfront.

What are the biggest red flags in a sales engagement platform POC?

The biggest red flags are sync delays longer than 15 minutes for CRM writes, no configurable pause logic for out-of-office or reply detection, attribution that counts touchpoints but cannot isolate sequence contribution, inability to map custom CRM fields during setup, and analytics that reset or lag during the evaluation period. If the vendor cannot demonstrate deduplication logic against your actual CRM data during the POC, walk away.

How is Unify different from Outreach and Salesloft in a POC?

Unify starts with buying signals rather than a contact list. Where Outreach and Salesloft require reps to build sequences and manually assign contacts, Unify's AI agents monitor your total addressable market for intent signals, qualify accounts automatically, and trigger personalized sequences without manual input. During a POC, this means you can measure pipeline generated from signal-triggered outreach versus rep-initiated outreach side by side — giving you a cleaner ROI comparison from week two onward.

What does a good POC scorecard for a sales engagement platform look like?

A good POC scorecard scores each vendor across five categories: data integrity, automation behavior, rep workflow, analytics fidelity, and scale readiness. Score each of the 15 questions on a 1-3-5 scale, weight buckets by your team's priorities, and set a minimum threshold (45 out of 75 is a reasonable baseline). If a vendor backs more than two answers with verbal commitments instead of demonstrable proof, score each of those questions a 1 regardless of the vendor's claims.

Sources

- Unify Customer Results — Pipeline metrics, customer outcomes, and ROI data (Unify, 2026)

- CRM Sync Evaluation: 15-Point Checklist for RevOps Teams (Unify Explore, 2026)

- How to Switch Sales Engagement Platforms Without Disrupting Active Deals (Unify Explore, 2026)

- How to Evaluate a RevOps Platform: A First-Time Buyer's Checklist (Unify Explore, 2026)

- Best Sales Engagement Tools for Conversion: What Actually Closes Deals (Unify Explore, 2026)

- Sales POC Playbook: How to Run a Sales Pilot (Dock, 2025)

- Best Sales Engagement Software — G2 Reviews and Evaluation Guide (G2, 2026)

- RFP Evaluation Criteria: How to Score and Select the Right Vendor (Responsive, 2025)

- Building a Signal-Driven Sales Playbook (Unify Explore, 2025)

- What Is a Sales Engagement Platform? A Complete Guide for 2026 (ZoomInfo Pipeline, 2026)

About the Author

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
