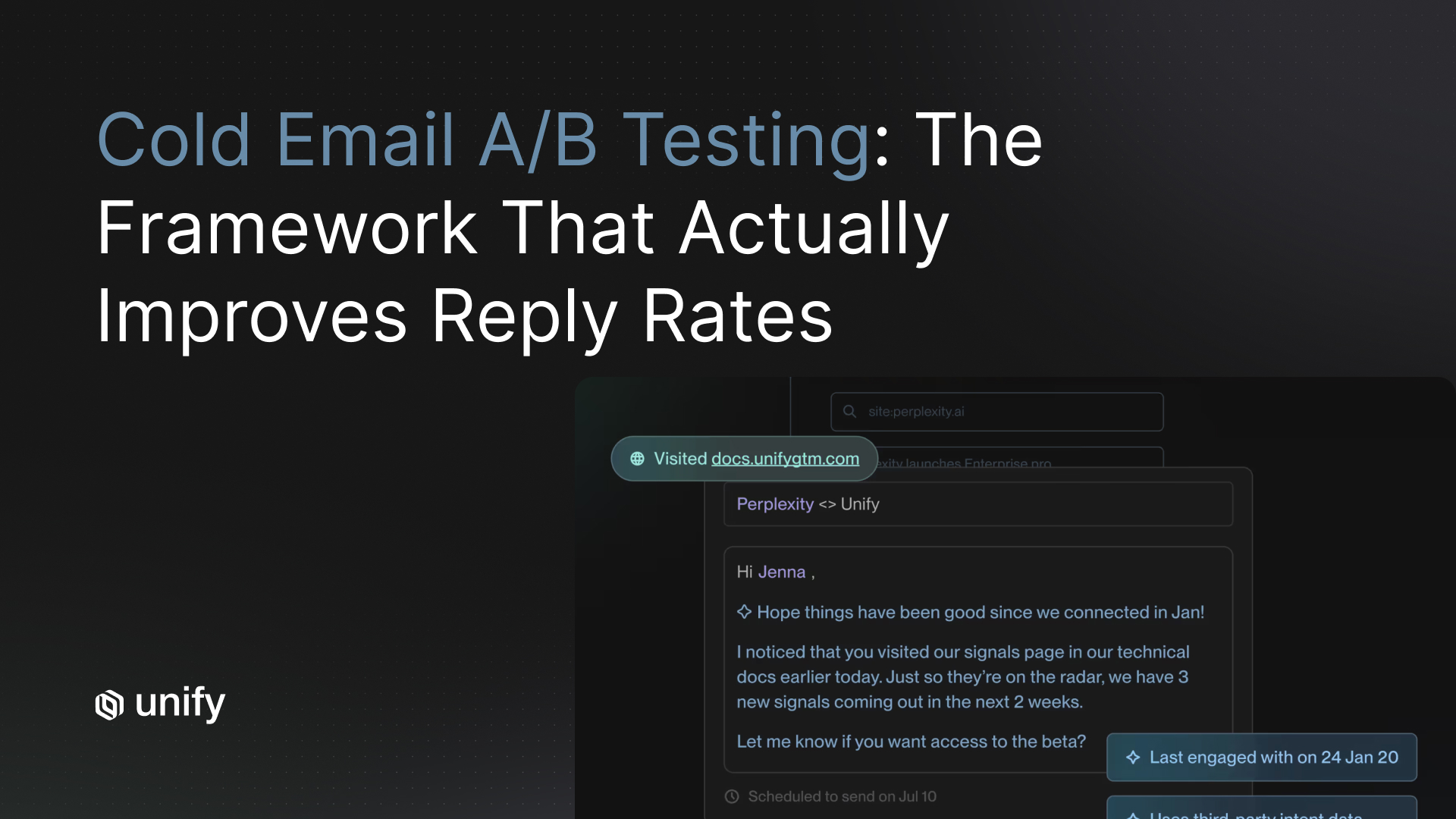

Cold email A/B testing is the practice of sending two or more variants of a single email element (subject line, opener, or CTA) to equal, randomized segments of a prospect list and measuring which variant produces a higher reply rate. Done correctly, it is the single most reliable way to improve cold email reply rates over time.

Most sales teams think they're running real tests. In reality, they're sending two versions of a mediocre email to a random list and hoping one sticks. The average B2B cold email reply rate in 2026 is just 3.43%, according to Instantly's 2026 Benchmark Report. Teams that run disciplined, sequential A/B tests routinely push past 8%.

Key Takeaways

- Test one variable at a time: subject line first, then opener, then CTA, then sequence length.

- Send a minimum of 200 emails per variant. Fewer than that and results are not statistically meaningful.

- Segment by intent level before testing so you compare like-for-like audiences.

- Wait 5 to 7 business days before picking a winner. Cold email reply cycles are slower than those of marketing email.

- Four sequential wins of 20% improvement each can roughly double your baseline reply rate.

This guide covers the testing methodology, priority order, benchmarks, and tools you need to run cold email copy testing that compounds into real pipeline gains. If you followed our previous article on domain setup and deliverability, you already have the sending infrastructure in place. Now it's time to optimize what you send.

Why Most Cold Email A/B Tests Fail

Cold email optimization sounds simple: write two subject lines, split a list, pick the winner. But three common mistakes make most tests useless.

Testing too many variables at once. When you change the subject line, the opener, and the CTA in the same test, you have no idea which change caused the result. Isolate one variable per test, every time.

Sample sizes that are too small. Sending 50 emails per variant is not a test. It's a coin flip. You need a minimum of 200 prospects per variant to approach statistical significance for cold email reply rates. Anything less and your "winner" is likely noise.

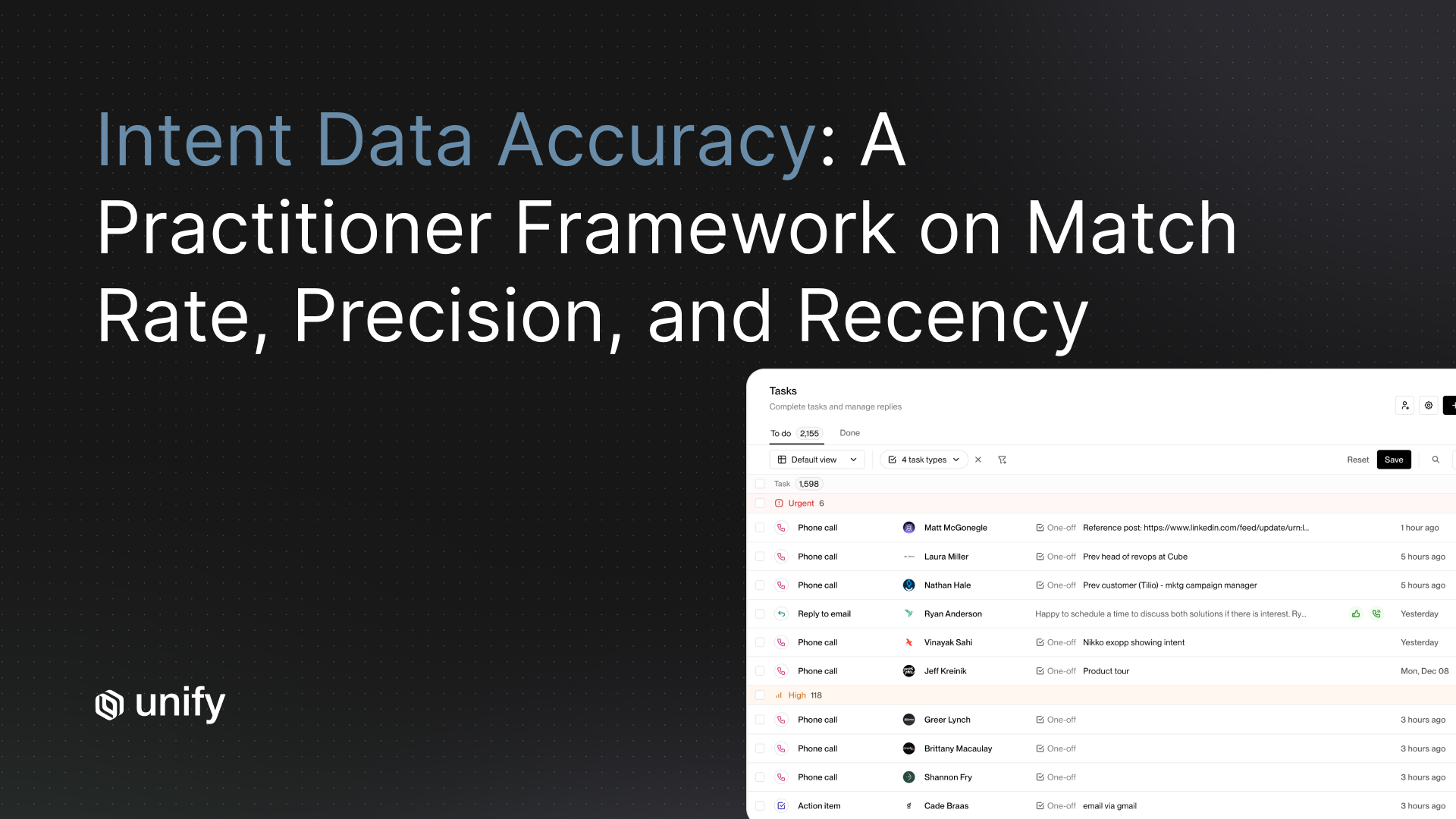

No control for audience quality. This is the mistake almost nobody talks about. If Variant A goes to a list of prospects showing active buying intent and Variant B goes to a cold list, the test results are meaningless. You're measuring list quality, not copy quality. Effective email sequence testing requires audience parity across variants.
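One way to get audience parity is to randomize within each audience segment rather than across the whole list. The sketch below is a minimal illustration, not a prescribed method: the `intent` and `email` fields, the example addresses, and the `stratified_split` helper are all hypothetical names chosen for the example.

```python
import random
from collections import defaultdict

def stratified_split(prospects, key, variants=("A", "B"), seed=42):
    """Randomly assign prospects to variants, balanced within each stratum.

    `prospects` is a list of dicts; `key` names the field used to group them
    (here a hypothetical "intent" field).
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    strata = defaultdict(list)
    for p in prospects:
        strata[p[key]].append(p)

    assignment = {v: [] for v in variants}
    offset = 0
    for group in strata.values():
        rng.shuffle(group)
        # Deal shuffled prospects round-robin so every variant gets an
        # equal share of this stratum; the offset keeps overall sizes even
        # when a stratum does not divide evenly.
        for i, p in enumerate(group):
            assignment[variants[(i + offset) % len(variants)]].append(p)
        offset += len(group)
    return assignment

# Hypothetical list: 300 high-intent and 500 low-intent prospects
prospects = (
    [{"email": f"hi{i}@example.com", "intent": "high"} for i in range(300)]
    + [{"email": f"lo{i}@example.com", "intent": "low"} for i in range(500)]
)
groups = stratified_split(prospects, key="intent")
for name, members in groups.items():
    high = sum(1 for p in members if p["intent"] == "high")
    print(name, len(members), "prospects,", high, "high-intent")
```

With this kind of split, each variant receives the same mix of high-intent and low-intent prospects, so a difference in reply rate is more likely to reflect the copy than the list.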

The A/B Testing Framework for Cold Email

A reliable cold email A/B testing framework follows four rules.

One variable at a time. Test in this order: subject line, then opening line, then CTA, then sequence length. Subject lines are highest-leverage because they determine whether your email gets opened at all. According to a widely cited HubSpot analysis, 33% of email recipients open messages based on the subject line alone.

Minimum sample sizes. Run each variant against at least 200 prospects. For detecting smaller lifts (under 15%), you'll need 500 or more per variant.

Hold-out control groups. Always keep a control. Your "Variant A" should be your current best-performing copy. Never test two brand-new variants against each other without a known baseline.

Defined time windows. Wait 5 to 7 business days before declaring a winner. Cold email reply cycles are longer than those of marketing email. Prospects need time to see your message, consider it, and respond. Calling a test early is a common way to pick a false winner.
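To sanity-check a sample size against your own baseline, you can estimate how many prospects per variant a test would need. The sketch below uses the standard normal-approximation formula for comparing two proportions. The 5% significance level and 80% power defaults are conventional assumptions, not figures from this guide, and `sample_size_per_variant` is a hypothetical helper name.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.8):
    """Approximate prospects needed per variant to detect a relative lift.

    baseline      : control reply rate, e.g. 0.04 for 4%
    relative_lift : lift to detect, e.g. 0.30 for a 30% relative improvement
    alpha, power  : assumed two-sided significance level and statistical power
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

# Compare how the requirement changes with the size of the lift
for lift in (0.15, 0.30, 1.00):
    n = sample_size_per_variant(baseline=0.04, relative_lift=lift)
    print(f"{lift:.0%} relative lift: about {n} prospects per variant")
```

The required size grows quickly as the baseline reply rate or the target lift shrinks, so it is worth running the numbers for your own baseline and treating 200 per variant as a practical floor rather than a guarantee.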

What to Test (Priority Order)

Not all variables are created equal. Here's where to focus your cold email copy testing, ranked by impact on reply rates.

Subject lines. Test length (under 50 characters vs. over 50), personalization tokens (first name, company name), question format vs. statement format, and curiosity gaps. According to an Outreach study cited by SmartLead, including a company name in the subject line can boost open rates by 22%.

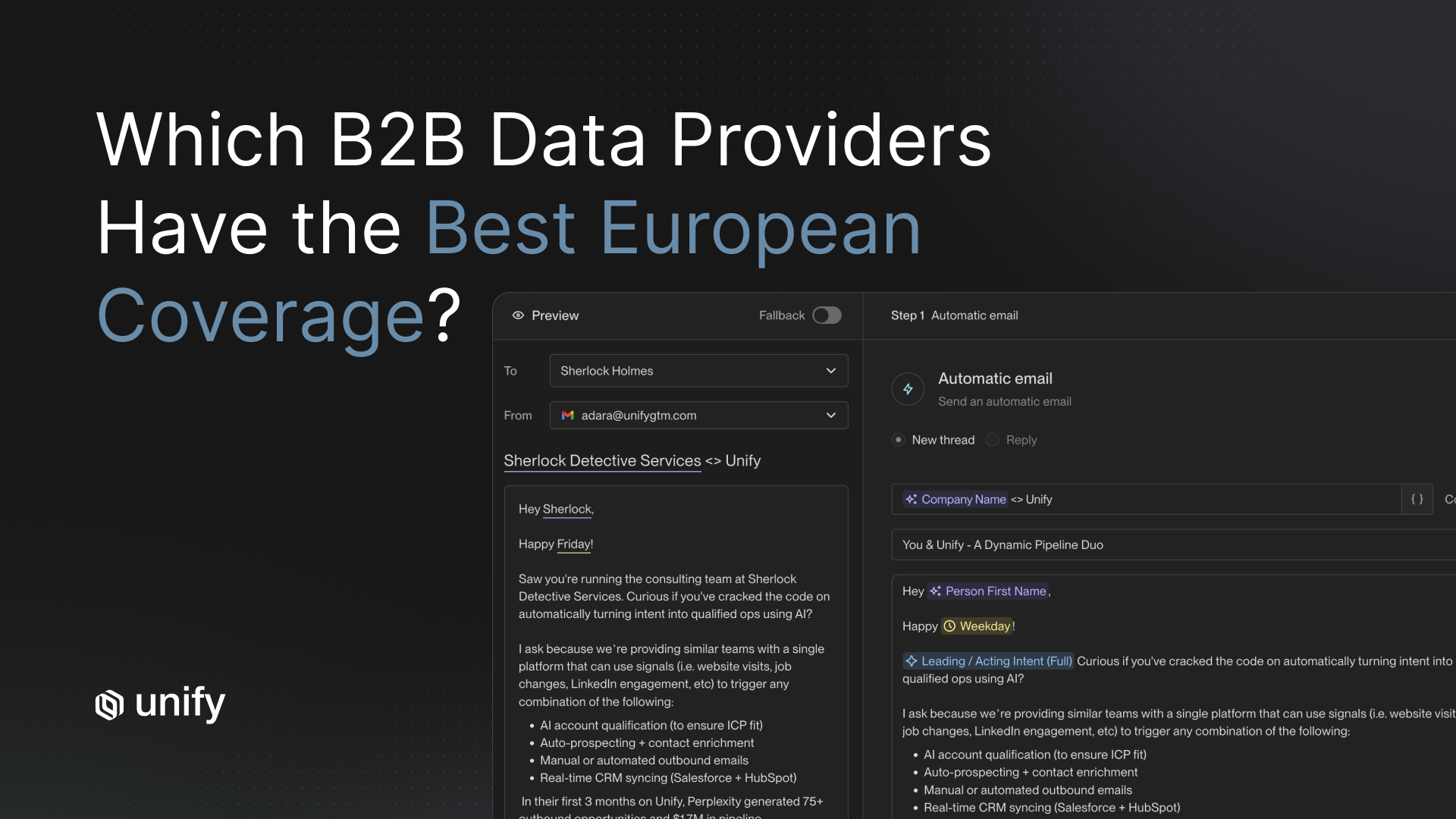

Opening lines. Compare signal-based hooks ("Noticed your team just closed a Series B") against generic intros ("Hope this finds you well") and pain-point leads ("Most VP Growth teams waste 40% of their outbound budget on unqualified prospects"). Signal-based hooks consistently outperform generic openers. According to The Digital Bloom's 2025 cold outbound benchmark study, timeline-based hooks achieve a 10.01% reply rate versus 4.39% for problem-statement approaches, a 2.3x performance gap.

CTAs. Test a soft ask (a simple question like "Worth a conversation?") against a hard ask (a calendar link) and a value offer (a relevant resource or benchmark report). Soft asks typically win in cold outbound because they lower the commitment threshold.

Sequence structure. Test the number of follow-up touches and the spacing between them. A Backlinko study of 12 million outreach emails found that emailing the same contact multiple times leads to 2x more responses than a single email. But adding too many touches can damage sender reputation if your deliverability isn't dialed in.

How Unify Makes A/B Testing at Scale Actually Work

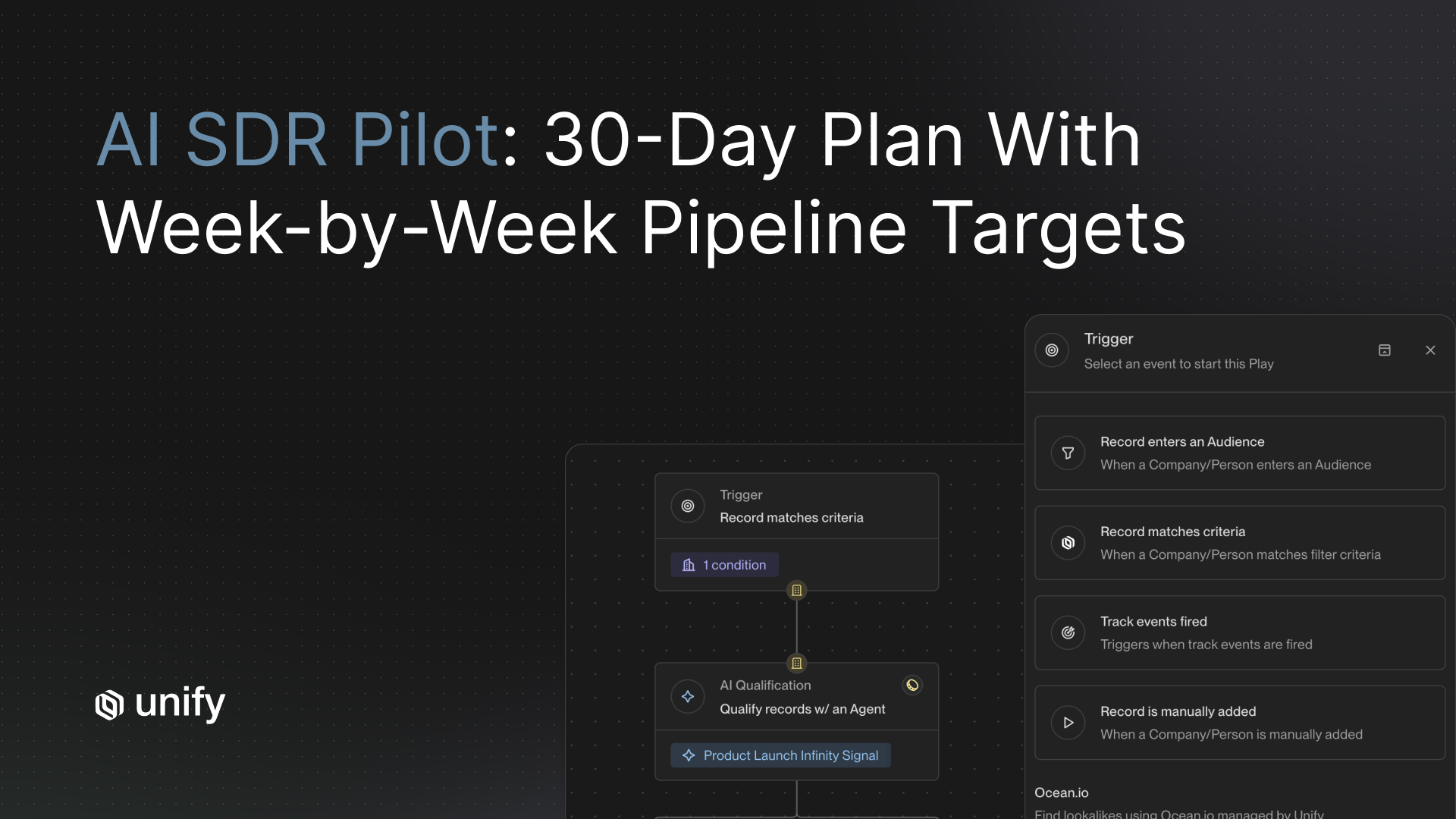

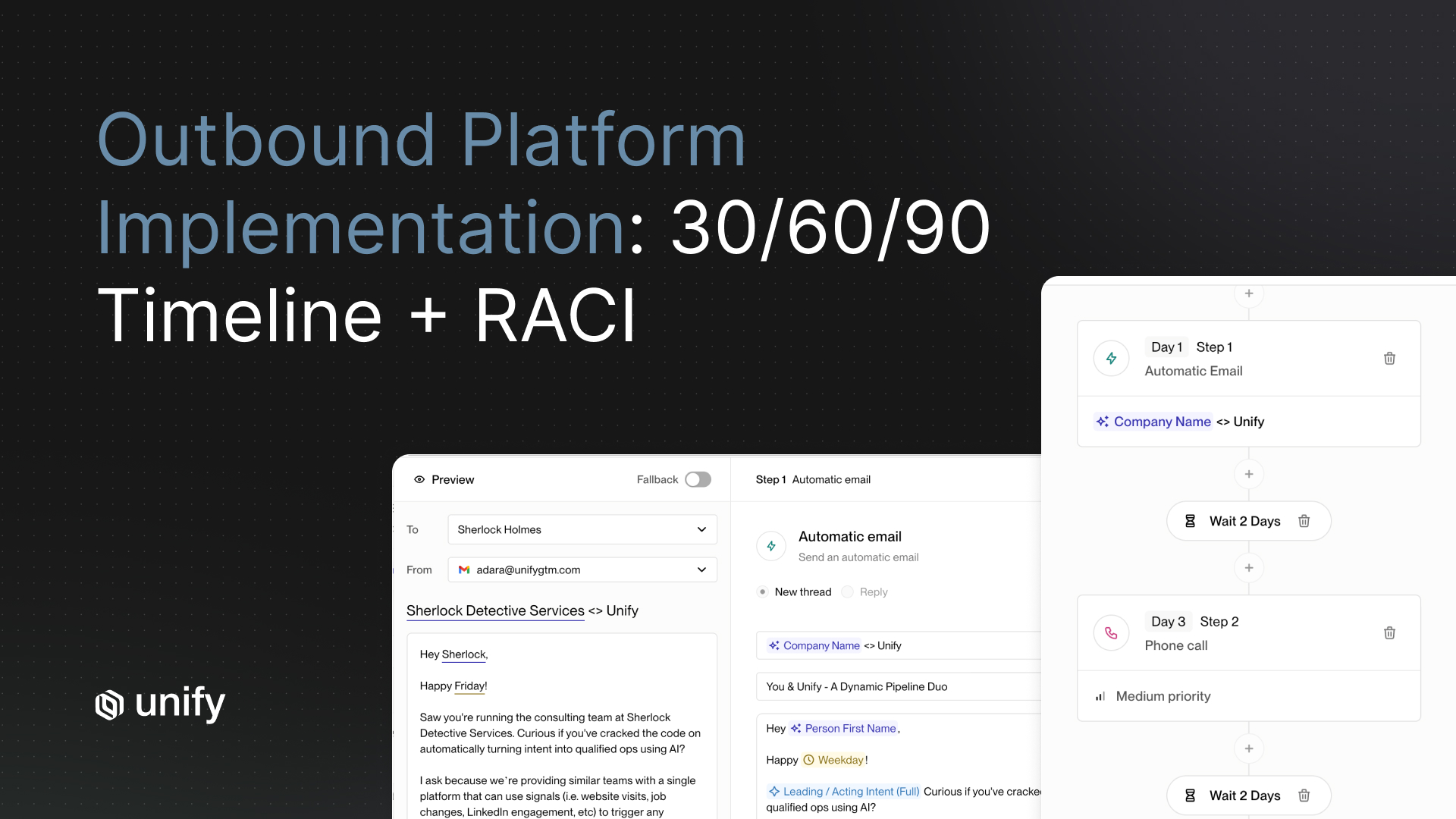

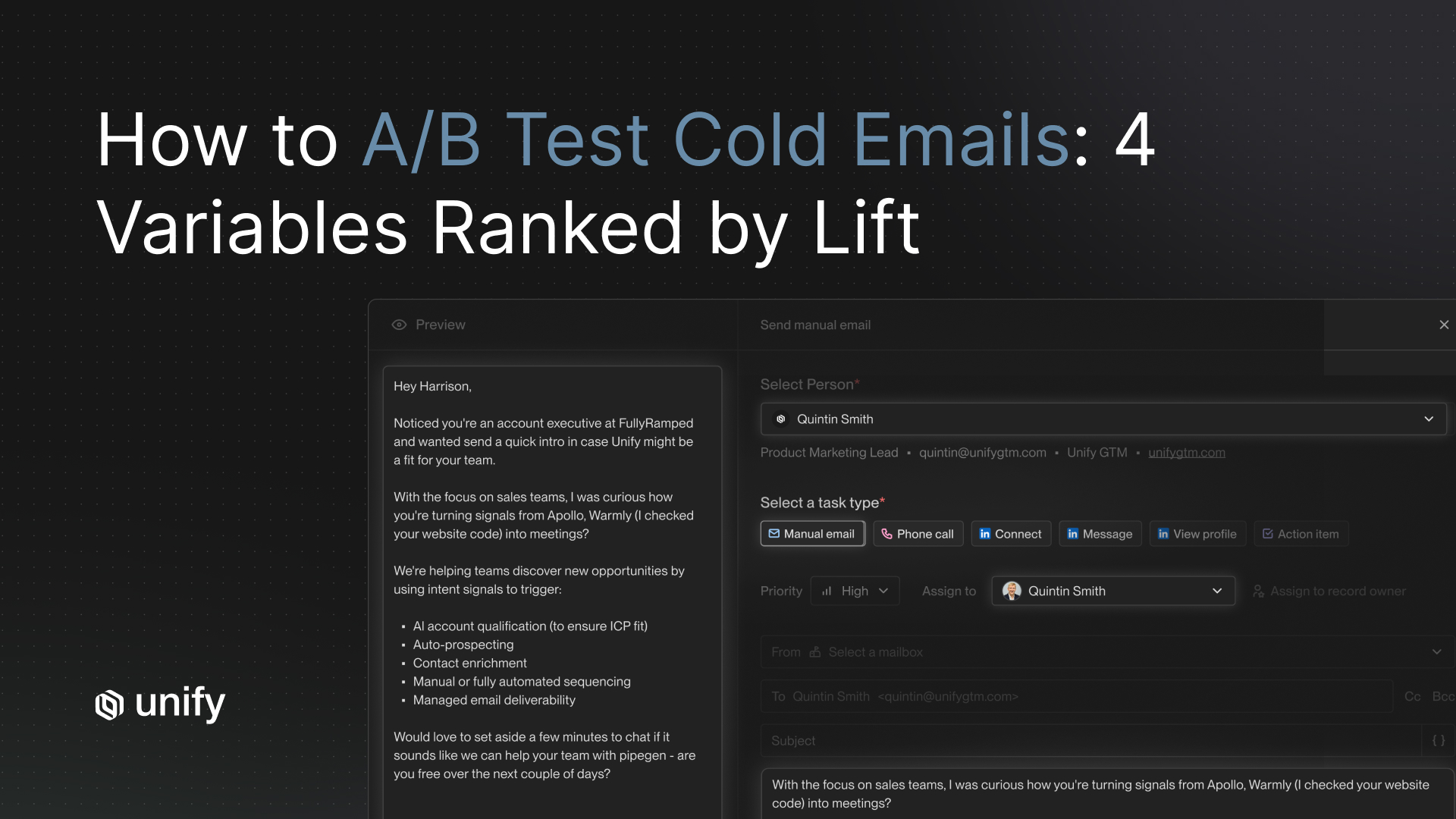

Running cold email A/B testing manually across thousands of prospects is painful. You're juggling spreadsheets, sending tools, and dashboards that don't talk to each other. Unify solves this by building variant testing directly into the sequence workflow.

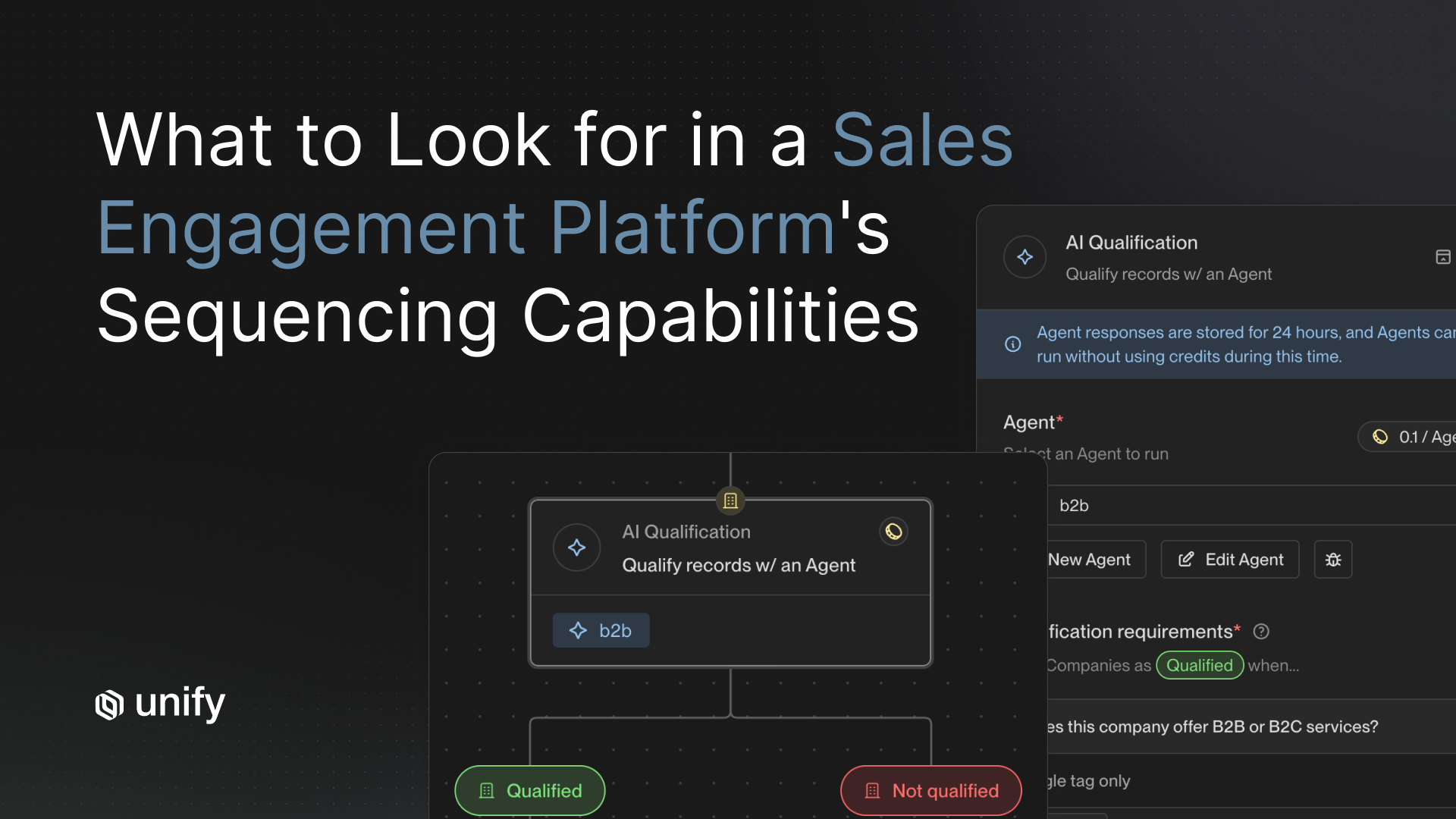

Built-in variant testing within sequences. Unify's sequence builder lets you create multiple copy variants inside a single play. No need to duplicate campaigns or manage separate lists. You set the variants, and the platform handles the random split and tracking.

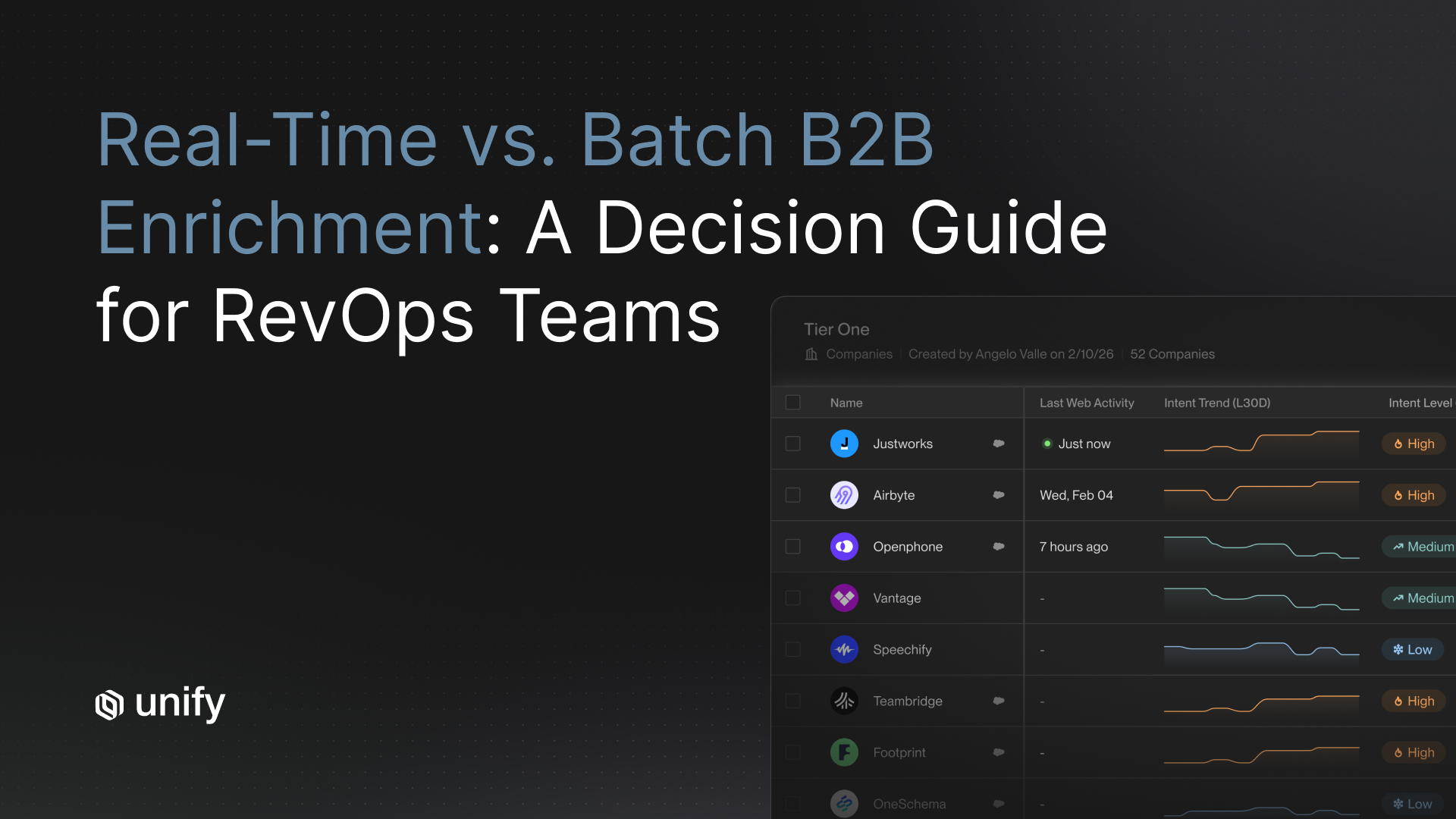

Signal-driven segmentation. This is the biggest differentiator. Unify uses 25+ intent signals to segment your prospects by buying stage before you test. That means your A/B test compares copy performance across prospects with the same intent level, not a random mix of hot leads and cold contacts. The result is cleaner data and faster decisions.

Automatic winner selection. Set a reply rate threshold, and Unify automatically shifts send volume to the winning variant once statistical significance is reached. No manual monitoring required.

Real-time analytics. Unify's reporting dashboard shows variant performance across every active sequence, so you can spot trends across campaigns and roll winning patterns into your next test.

A Step-by-Step Guide to Your First A/B Test

If you've never run a structured cold email A/B test before, start here.

Step 1: Pick one variable. Start with subject lines. They're the fastest to test and easiest to isolate.

Step 2: Write 2 to 3 variants with a clear hypothesis. For example: "A question-format subject line will generate a higher open rate than a statement format because it creates curiosity." Don't test randomly. Each variant needs a reason.

Step 3: Split your prospect list evenly. Minimum 200 per variant. Make sure the segments share the same characteristics: same industry, same role level, same intent signals if possible.

Step 4: Launch simultaneously. Same time of day, same day of the week. Timing differences will pollute your results. Monday sends and Friday sends perform very differently.

Step 5: Wait 5 to 7 business days. Measure reply rate and positive reply rate. Open rate matters for subject line tests, but replies are the metric that ties to pipeline.

Step 6: Roll the winner into production and start the next test. Take the winning subject line, lock it in, and move on to testing the opening line. This sequential approach is how you compound gains.
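When the waiting window in Step 5 closes, a quick significance check can help you decide whether the gap between variants is real. The sketch below uses a two-proportion z-test, which is one common choice and not necessarily what any particular sending tool uses. The reply counts are hypothetical, and `two_proportion_z_test` is an illustrative helper name.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(replies_a, sent_a, replies_b, sent_b):
    """Compare reply rates of control (A) and challenger (B).

    Returns (relative_lift, z, p_value), where p_value is two-sided.
    Assumes the control has at least one reply and the pooled rate
    is strictly between 0 and 1.
    """
    p_a = replies_a / sent_a
    p_b = replies_b / sent_b
    pooled = (replies_a + replies_b) / (sent_a + sent_b)
    se = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    relative_lift = (p_b - p_a) / p_a
    return relative_lift, z, p_value

# Hypothetical results: 500 sends per variant, 20 vs. 35 replies
lift, z, p_value = two_proportion_z_test(20, 500, 35, 500)
print(f"relative lift: {lift:.0%}")
print(f"z = {z:.2f}, p = {p_value:.3f}")
if p_value < 0.05:
    print("Difference is unlikely to be noise; roll out the challenger.")
else:
    print("Not conclusive yet; keep the control or extend the test.")
```

The same function can be run on positive replies instead of total replies if that is the metric you report on.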

Benchmarks and What Good Looks Like

You need reference points to know if your cold email optimization is working. Here's what the data says for 2026.

Average cold email reply rates:

- Average: 3.43% platform-wide (Instantly 2026 Benchmark Report)

- Top quartile: 5.5%+ reply rate

- Elite campaigns: 10%+ reply rate

What counts as a meaningful improvement. A winning variant should show a relative lift of at least 15%, and ideally closer to 30%, over your control. For example, if your baseline reply rate is 4%, a winning variant should push you to at least 4.6%. Anything smaller could be statistical noise.

The compounding effect. This is where disciplined testing pays off. Four sequential wins of 20% relative improvement each will roughly double your baseline performance (1.2 × 1.2 × 1.2 × 1.2 ≈ 2.07). If you start at a 3% reply rate and run one winning test per month, you could reach 6%+ in about four months. That's the difference between a pipeline that trickles and one that flows.
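The compounding arithmetic is easy to verify. This short sketch assumes every test produces a win of exactly the same relative size, which real campaigns will not, so treat it as an illustration of the math rather than a forecast. `compound_reply_rate` is a hypothetical helper name.

```python
def compound_reply_rate(baseline, relative_lift, wins):
    """Reply rate after `wins` sequential wins of the same relative lift."""
    return baseline * (1 + relative_lift) ** wins

baseline = 0.03  # 3% starting reply rate, as in the example above
for wins in range(5):
    rate = compound_reply_rate(baseline, 0.20, wins)
    print(f"after {wins} wins: {rate:.2%}")

# after 0 wins: 3.00%
# after 1 wins: 3.60%
# after 2 wins: 4.32%
# after 3 wins: 5.18%
# after 4 wins: 6.22%
```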

According to a 2026 analysis by Martal Group, campaigns using advanced personalization and systematic A/B testing achieved reply rates up to 18%, compared to roughly 9% for campaigns using generic templates. The primary drivers were micro-segmentation, problem-focused messaging, and frequent iteration.

Teams using Unify's signal-based segmentation typically see faster compounding because each test produces cleaner data. When you remove audience-quality noise from your tests, your winning copy actually generalizes to the broader list.

Frequently Asked Questions

How many emails do I need to send per variant for a valid A/B test?

A minimum of 200 emails per variant is the standard for cold email A/B testing. For detecting smaller improvements (under 15% relative lift), aim for 500 or more per variant. Fewer than 200 and your results are not statistically reliable.

Should I test subject lines or email body copy first?

Start with subject lines. They determine whether your email gets opened at all, and they're the easiest variable to isolate. Once you have a winning subject line, move on to the opening line, then the CTA. Testing in this order lets each win build on the last.

How long should I wait before picking a winner?

Wait 5 to 7 business days for cold email tests. Cold outreach has longer response cycles than marketing email, and many replies come on days 3 through 5. Calling a test before the full cycle completes often leads to picking a false winner.

Can I A/B test follow-up emails in a sequence, or just the first touch?

You should test both, but start with the first email in your sequence. It carries the most weight because it determines whether prospects engage with your sequence at all. Once the first touch is optimized, test follow-up timing and copy separately.

What reply rate should I aim for with cold email in 2026?

The industry average cold email reply rate in 2026 is 3.43% according to Instantly's benchmark data. A well-targeted, well-tested campaign should aim for 5 to 8%. Top performers using intent-based segmentation and systematic A/B testing consistently reach 10%+ reply rates. For context, elite campaigns in the top 10% exceed 10.7% reply rates.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
