"Can AI really handle it when a prospect pushes back?"

If you're evaluating AI SDR tools, this is probably the first question on your mind. And it should be. According to Gartner, AI adoption among sales reps jumped from 24% to 43% between 2023 and 2024, and sellers who effectively partner with AI are 3.7x more likely to meet quota. But objection handling remains the capability that separates useful AI SDRs from expensive email blasters.

AI SDR objection handling refers to how an AI sales development representative classifies, responds to, and escalates prospect pushback during automated outbound sequences. The honest answer is that AI handles some objections brilliantly, while others need human escalation. The key is knowing which is which and building the right handoff system. Here is how to evaluate whether an AI SDR tool actually gets this right.

Which Objections AI Handles Well (and Which It Should Not Touch)

Not all objections are created equal. Some follow predictable patterns that AI can match with context-aware responses. Others require judgment, empathy, or authority that only a human rep can provide.

Objections AI handles well

- Timing objections ("Not right now"): AI schedules a follow-up and re-engages when intent signals change. This is a pattern-matching task, and good AI SDRs excel at it.

- Information requests ("Send me more details"): AI provides relevant case studies, product pages, or resources based on the prospect's industry and role.

- Routing requests ("Talk to someone else on my team"): AI identifies the right contact and re-routes the conversation without losing context.

- Soft nos ("We're happy with our current solution"): AI nurtures with value-based follow-ups over time, referencing new data or relevant triggers.

These work because they follow predictable patterns that can be templated with context. The AI does not need to improvise. It needs to pick the right template and personalize it correctly.

Objections that need human escalation

- Competitive comparisons ("Why should I switch from [competitor]?"): Requires nuanced positioning and knowledge of deal context that shifts conversation by conversation.

- Budget negotiations ("This is too expensive"): Requires authority and flexibility that AI cannot and should not have.

- Technical deep-dives ("How does your API handle X?"): Requires product expertise and the ability to go off-script.

- Emotional responses ("Stop emailing me"): Requires immediate suppression and human empathy. Getting this wrong is a brand risk.

The goal is for AI to handle 60-70% of follow-ups while humans handle the 30-40% that require judgment. According to SaaStr, companies using this hybrid model see 30% higher conversion rates and 2.8x more pipeline than those attempting full AI replacement.
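The two lists above amount to a routing table: each reply category maps either to an automated workflow or to a human. Below is a minimal sketch in Python, assuming replies have already been classified into one of the hypothetical categories shown. The category names, workflow strings, and `route_reply` function are illustrative, not any vendor's API.

```python
from enum import Enum


class ReplyType(Enum):
    """Hypothetical reply categories mirroring the two lists above."""
    TIMING = "timing"              # "Not right now"
    INFO_REQUEST = "info_request"  # "Send me more details"
    ROUTING = "routing"            # "Talk to someone else on my team"
    SOFT_NO = "soft_no"            # "We're happy with our current solution"
    COMPETITIVE = "competitive"    # "Why should I switch from [competitor]?"
    BUDGET = "budget"              # "This is too expensive"
    TECHNICAL = "technical"        # "How does your API handle X?"
    OPT_OUT = "opt_out"            # "Stop emailing me"


# Pattern-based objections: the AI picks a template and personalizes it.
AI_HANDLED = {
    ReplyType.TIMING: "schedule_follow_up",
    ReplyType.INFO_REQUEST: "send_relevant_resources",
    ReplyType.ROUTING: "reroute_to_contact",
    ReplyType.SOFT_NO: "move_to_nurture",
}


def route_reply(reply_type: ReplyType) -> str:
    """Return the workflow for a classified reply (illustrative names only)."""
    if reply_type is ReplyType.OPT_OUT:
        # Suppress first; a human can follow up with empathy afterward.
        return "suppress_and_notify_human"
    # Anything not explicitly AI-handled defaults to a human (fail safe).
    return AI_HANDLED.get(reply_type, "escalate_to_human")


print(route_reply(ReplyType.TIMING))       # schedule_follow_up
print(route_reply(ReplyType.COMPETITIVE))  # escalate_to_human
```

Defaulting every category that is not explicitly listed to a human means a novel or misclassified objection gets judgment rather than an automated pitch.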

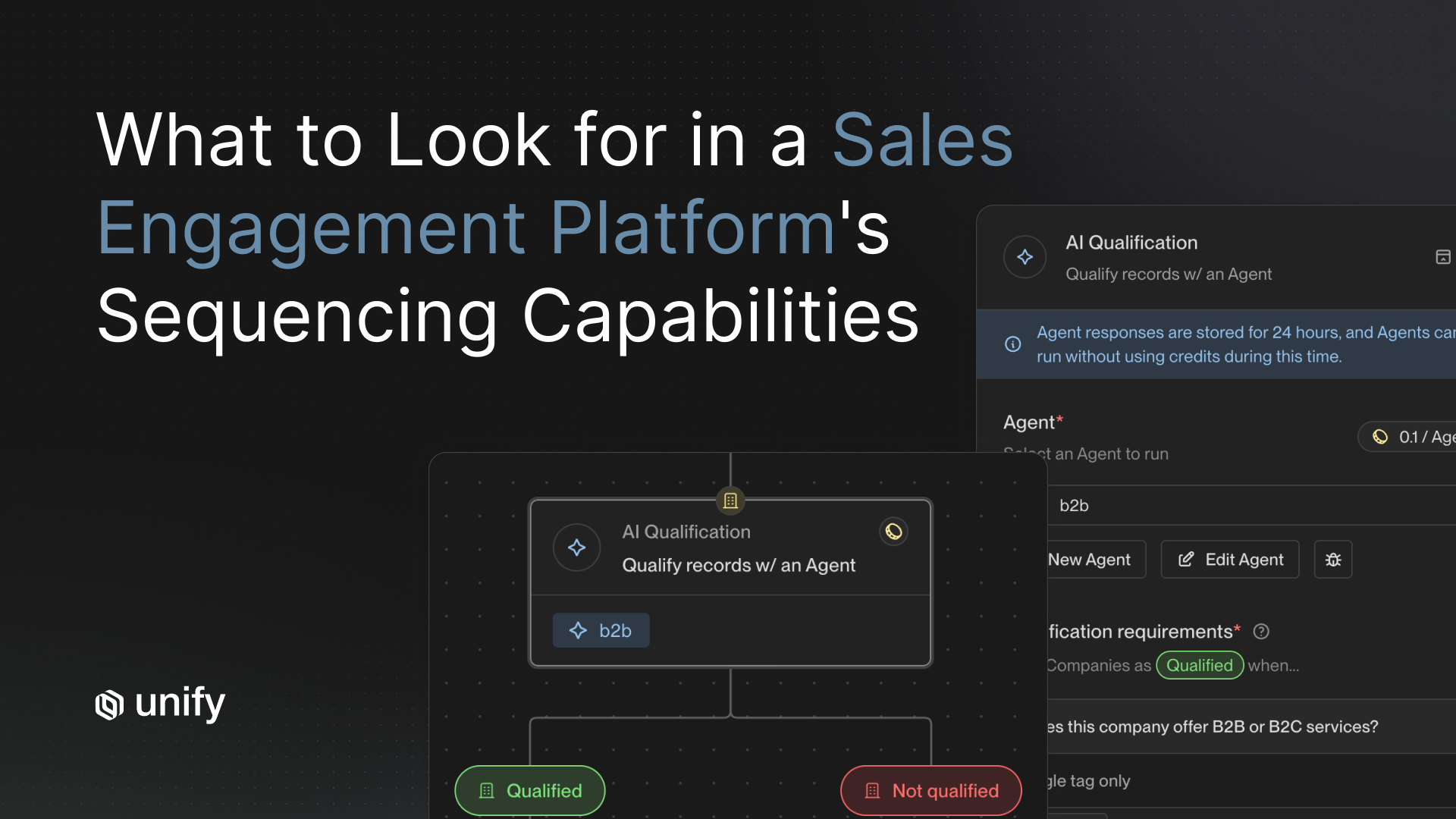

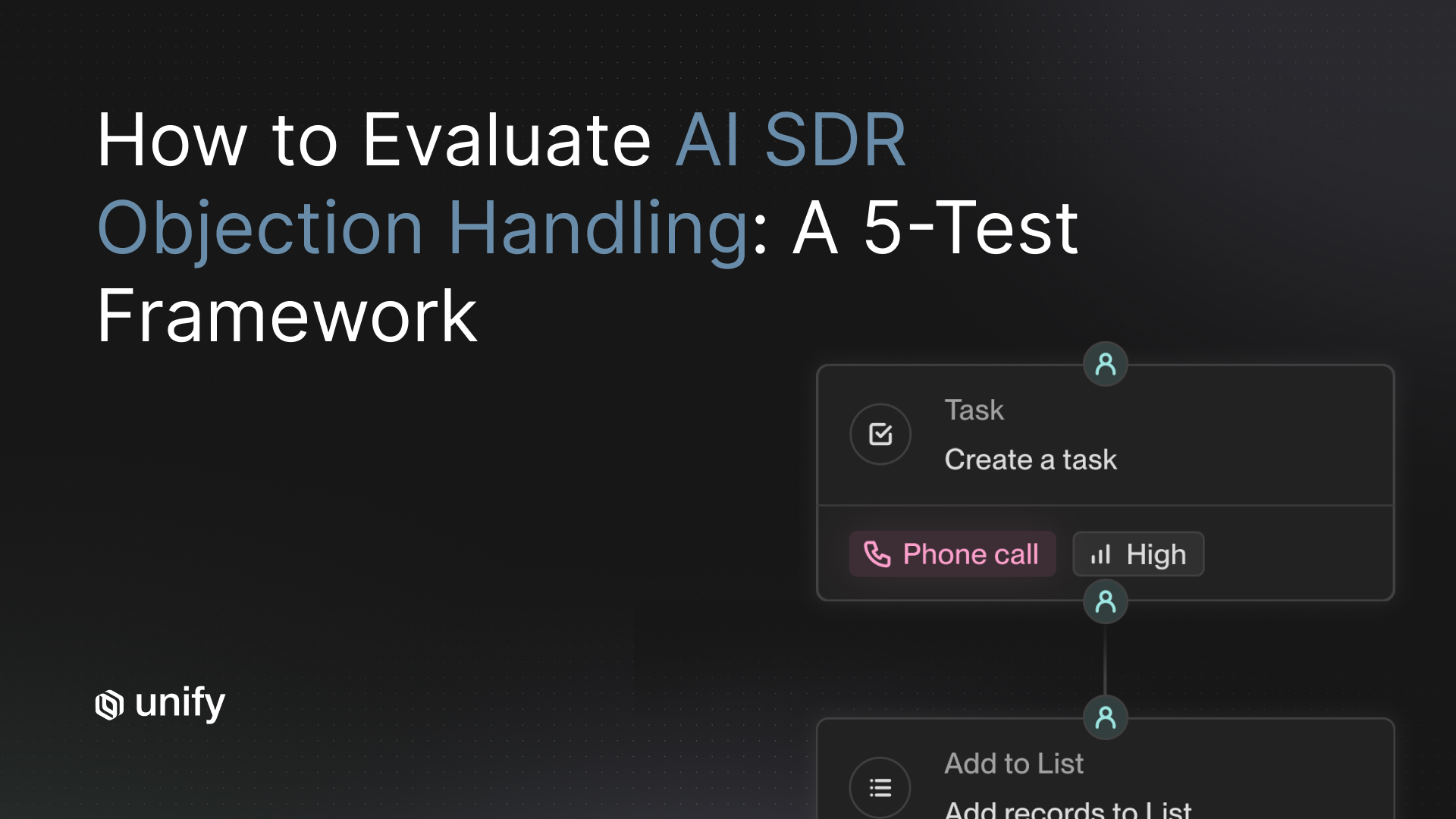

The 5-Test Evaluation Framework for AI Objection Handling

When you are evaluating an AI SDR tool in a demo or pilot, run these five tests. They will reveal more about the product's objection handling than any feature deck.

Test 1: The "not interested" reply

Send the AI a flat "not interested" response. Does it respect the no, or does it blindly follow up with another pitch? Good AI SDRs will acknowledge the response, pause the sequence, and either move the prospect to a nurture track or ask a soft clarifying question. Bad ones ignore the signal and keep pushing.

Test 2: The "tell me more" reply

Send a "tell me more about how this works for [specific use case]" reply. Does the AI provide relevant, contextual information tied to the prospect's industry and role? Or does it send a generic product overview? This test reveals whether the tool is actually using account research in its responses or just running a static sequence.

Test 3: The complex objection

Send something the AI should not try to answer, like "We evaluated three vendors last quarter and chose [competitor] because of X." Does the AI escalate to a human rep, or does it attempt a clumsy competitive response? The best AI SDRs know their limits and route complex scenarios to humans quickly.

Test 4: Follow-up timing

Check the follow-up cadence. Does the AI respect business hours? Does it space follow-ups at reasonable intervals? Research shows that 80% of deals require five or more touchpoints, but 44% of reps give up after one. An AI SDR should be persistent without being aggressive.
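To make the cadence check concrete, here is a minimal sketch of a scheduler that spaces follow-ups and pushes each send into business hours. The 9:00-17:00 window, the gap lengths, and the function names are assumptions for illustration. It ignores time zones and holidays.

```python
from datetime import datetime, time, timedelta

# Assumptions for illustration: a 9:00-17:00 send window in the prospect's
# local time and these gaps (in days) between touches. No holidays or time zones.
BUSINESS_START = time(9, 0)
BUSINESS_END = time(17, 0)
GAPS_DAYS = [2, 3, 4, 7]


def next_business_slot(candidate: datetime) -> datetime:
    """Move a candidate send time forward into the next business-hours window."""
    if candidate.time() >= BUSINESS_END:
        candidate = datetime.combine(candidate.date() + timedelta(days=1), BUSINESS_START)
    elif candidate.time() < BUSINESS_START:
        candidate = datetime.combine(candidate.date(), BUSINESS_START)
    # Saturday is weekday 5 and Sunday is 6: roll forward to Monday morning.
    while candidate.weekday() >= 5:
        candidate = datetime.combine(candidate.date() + timedelta(days=1), BUSINESS_START)
    return candidate


def follow_up_schedule(first_touch: datetime) -> list[datetime]:
    """Return five send times: the first touch plus one per gap in GAPS_DAYS."""
    schedule = [next_business_slot(first_touch)]
    for gap in GAPS_DAYS:
        schedule.append(next_business_slot(schedule[-1] + timedelta(days=gap)))
    return schedule


# Starting late on Friday, 7 March 2025.
for send_at in follow_up_schedule(datetime(2025, 3, 7, 16, 30)):
    print(send_at.strftime("%a %d %b %H:%M"))
```

Five touches matches the "five or more touchpoints" figure, and in this example the second touch, which would land on a Sunday, rolls to Monday at 09:00 instead.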

Test 5: Bulk quality review

Pull 20 AI-generated follow-up responses and score each one for relevance, tone, and accuracy. This is the most revealing test. McKinsey's 2025 State of AI report found that 78% of companies now use AI in at least one business function, but quality assurance remains the biggest gap. If you cannot read 20 responses without cringing, the tool is not ready.
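A simple scoring sheet keeps the bulk review consistent across reviewers. The sketch below assumes 1-5 scores per dimension and treats a response as "appropriate" only if relevance, tone, and accuracy all score 4 or higher. That threshold and the class and function names are illustrative choices, not a standard rubric.

```python
from dataclasses import dataclass


@dataclass
class ReviewScore:
    """A reviewer's 1-5 scores for one AI-generated follow-up."""
    relevance: int
    tone: int
    accuracy: int

    def is_appropriate(self, min_score: int = 4) -> bool:
        # Assumed rule: every dimension must clear the bar, so a friendly,
        # relevant reply with a wrong fact still fails.
        return min(self.relevance, self.tone, self.accuracy) >= min_score


def appropriate_rate(scores: list[ReviewScore]) -> float:
    """Share of the reviewed responses rated appropriate."""
    if not scores:
        raise ValueError("score at least one response")
    return sum(score.is_appropriate() for score in scores) / len(scores)


# 20 reviewed replies: 18 solid, 2 with an off tone.
batch = [ReviewScore(5, 4, 5)] * 18 + [ReviewScore(5, 2, 4)] * 2
print(f"{appropriate_rate(batch):.0%} rated appropriate")
```

Requiring every dimension to pass, rather than averaging, stops a strong tone score from hiding an inaccurate claim.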

The Human-in-the-Loop Model That Actually Works

The best AI SDR implementations are not all-AI or all-human. They use an AI-drafted, human-approved model for anything beyond pattern-based responses.

Here is what that looks like in practice:

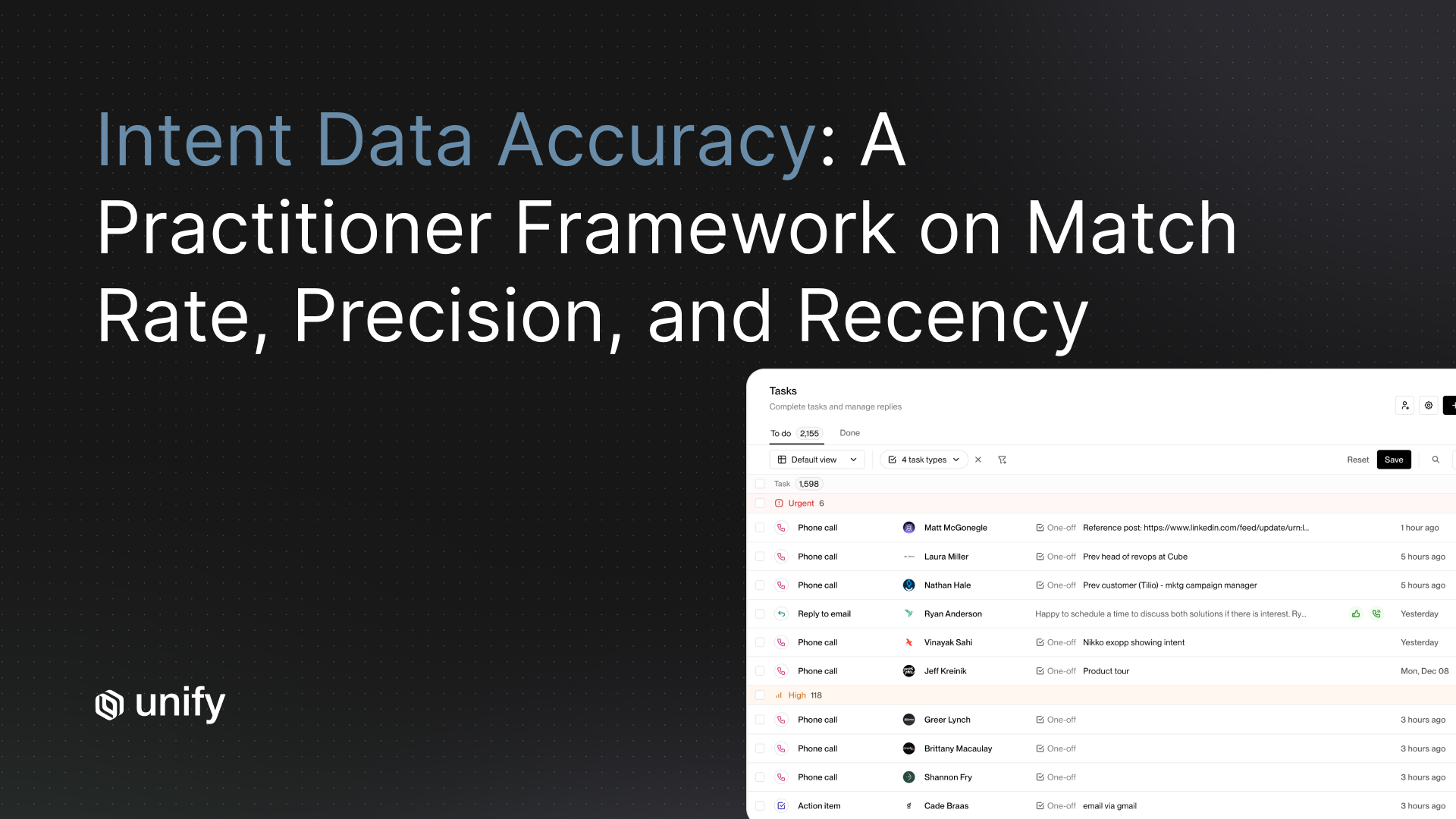

- Escalation triggers: Define which reply types automatically route to a human rep. At minimum, competitive mentions, pricing questions, and opt-out requests should escalate immediately.

- Response quality monitoring: Sample 10% of AI responses weekly for quality control. Score them for relevance, tone, and accuracy. This is how you catch drift before it becomes a problem.

- Continuous improvement loop: Feed human edits back to the AI to improve future responses. Without this feedback loop, your AI objection handling will not get smarter over time.

- Clear ownership: Assign a human owner for AI response quality. Someone on the team needs to be accountable for what the AI is saying on your behalf.
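The 10% weekly sample works best when it is random rather than hand-picked, so reviewers see typical output instead of the replies they happen to notice. Here is a minimal sketch, assuming you can export the week's AI response IDs as a list. The function name and ID format are hypothetical.

```python
import random
from typing import Optional


def weekly_qa_sample(response_ids: list[str], percent: int = 10,
                     seed: Optional[int] = None) -> list[str]:
    """Randomly pick about `percent`% of the week's AI responses for review.

    Rounds up and returns at least one response whenever any exist,
    so low-volume weeks still get a human look.
    """
    if not response_ids:
        return []
    k = max(1, (len(response_ids) * percent + 99) // 100)  # integer ceiling
    rng = random.Random(seed)  # a fixed seed makes the audit reproducible
    return rng.sample(response_ids, k)


week = [f"reply-{n}" for n in range(1, 251)]  # hypothetical IDs
to_review = weekly_qa_sample(week, seed=42)
print(len(to_review), "responses to review")
```

Integer arithmetic for the ceiling avoids floating-point rounding surprises, and logging the seed lets the assigned quality owner re-pull the exact same sample later.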

How Unify Handles Objections and Follow-Ups

Unify takes a structured approach to AI objection handling that reflects how the best sales teams actually operate.

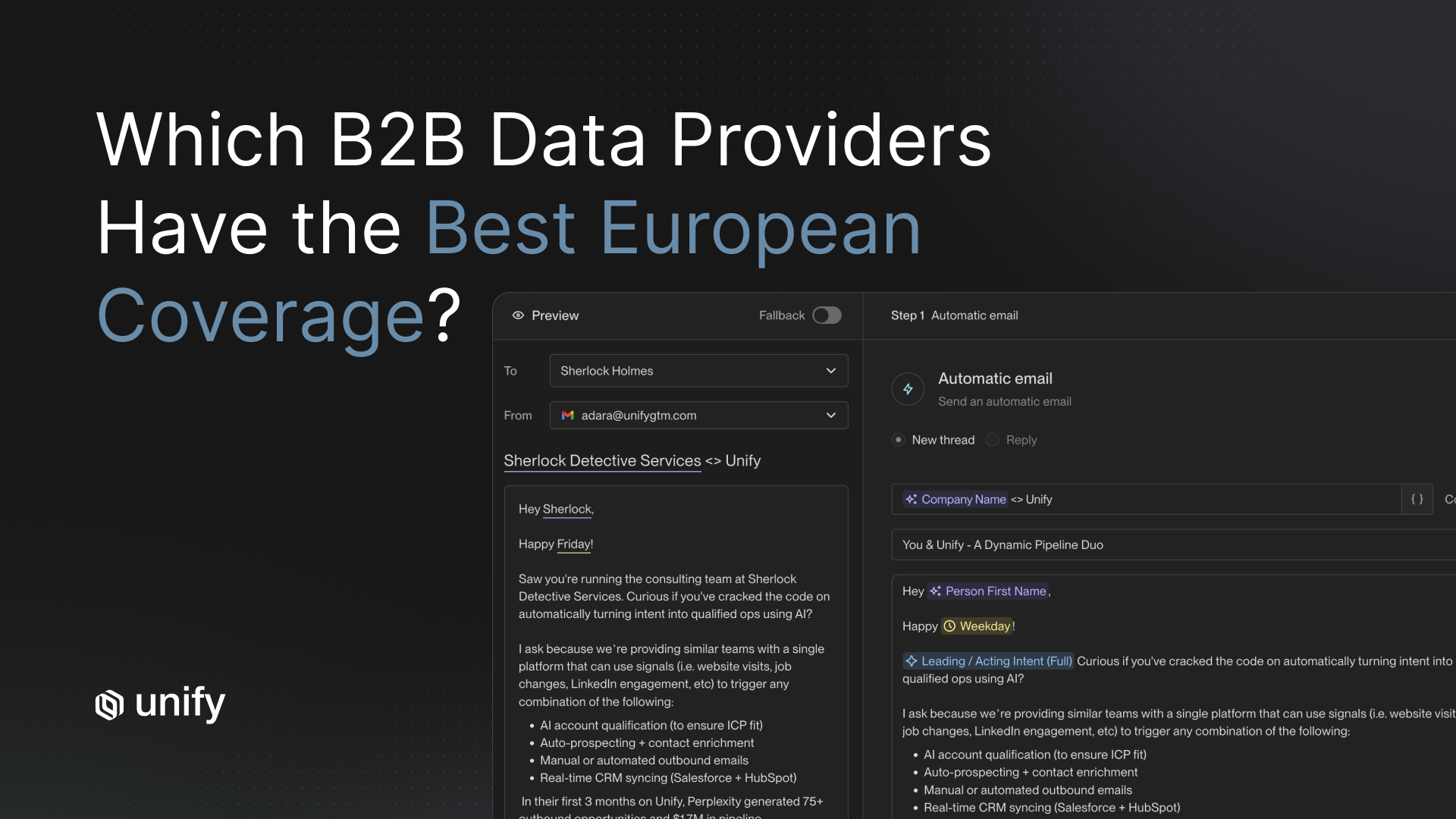

- Reply classification: Unify's AI agents classify incoming replies by type, including positive, objection, question, out-of-office, and unsubscribe, so every response gets routed to the right workflow automatically.

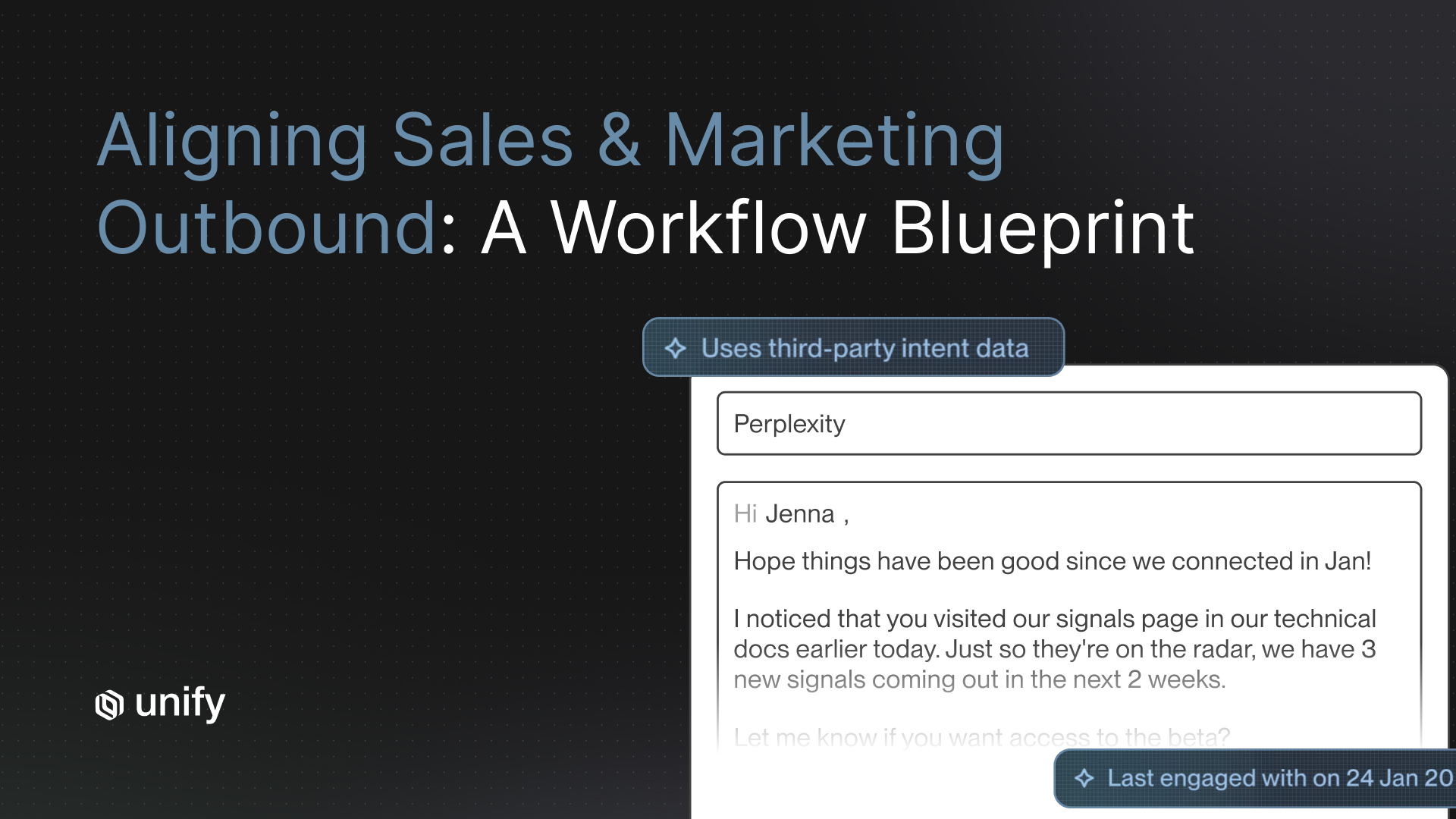

- Context-aware follow-ups: When AI does respond, it references previous touchpoints and account research in every message. This is the difference between a follow-up that feels like a cold email and one that continues a conversation.

- Automatic escalation: Complex objections route to human reps without the prospect noticing a handoff. The rep gets full context on the conversation history, so they are not starting from scratch.

- Built-in suppression: Opt-out and unsubscribe handling is automatic. There is zero risk of a "stop emailing me" response being ignored, which is a real problem with less sophisticated tools.

Unify brings together email, social, and calling so reps never miss a follow-up. AI handles the high-volume, pattern-based responses, while humans focus on the conversations that require judgment.

Benchmarks: What Good AI Follow-Up Actually Looks Like

When evaluating AI SDR tools, you need concrete numbers to separate marketing claims from reality. Here are the benchmarks that matter.

- Response accuracy: More than 85% of AI follow-ups should be rated "appropriate" by a human reviewer. If you are below this, the AI is doing more harm than good.

- Escalation rate: Aim to escalate 30-40% of replies to humans. Below 30% probably means the AI is over-aggressive and attempting responses it should not. Above 40% means the AI is not providing enough value.

- Time-to-response: AI responses should go out in under 4 hours. Escalated human responses should land within 24 hours. Speed matters in sales, and this is one area where AI consistently outperforms humans.

- Meeting-to-opportunity conversion: AI SDRs currently convert meetings to opportunities at roughly 15%, compared to 25% for human SDRs, according to industry benchmarks tracked by SaaStr. The gap narrows significantly with clean data and well-configured escalation rules.

- Sequence completion rate: 40-60% of prospects should complete the full follow-up sequence. Below 40% suggests your messaging is too aggressive or irrelevant.
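These benchmarks are easy to turn into a weekly check. The sketch below encodes the thresholds listed above. The metric field names are hypothetical and assume you can pull each number from your tool's reporting. The meeting-to-opportunity figures are a reference point rather than a pass/fail line, so they are left out.

```python
from dataclasses import dataclass


@dataclass
class FollowUpMetrics:
    """A week of AI follow-up numbers (field names are hypothetical)."""
    appropriate_rate: float      # share of reviewed AI replies rated appropriate
    escalation_rate: float       # share of incoming replies routed to humans
    ai_response_hours: float     # time to an AI response
    human_response_hours: float  # time to an escalated human response
    completion_rate: float       # share of prospects completing the sequence


def benchmark_flags(m: FollowUpMetrics) -> list[str]:
    """Return a warning for each benchmark above that the metrics miss."""
    flags = []
    if m.appropriate_rate <= 0.85:
        flags.append("85% or fewer replies rated appropriate: AI may be doing harm")
    if m.escalation_rate < 0.30:
        flags.append("escalation below 30%: AI may be attempting replies it should not")
    elif m.escalation_rate > 0.40:
        flags.append("escalation above 40%: AI may not be providing enough value")
    if m.ai_response_hours >= 4:
        flags.append("AI responses not going out in under 4 hours")
    if m.human_response_hours > 24:
        flags.append("escalated replies taking longer than 24 hours")
    if m.completion_rate < 0.40:
        flags.append("completion below 40%: messaging may be too aggressive or irrelevant")
    return flags


week = FollowUpMetrics(appropriate_rate=0.88, escalation_rate=0.22,
                       ai_response_hours=2.5, human_response_hours=30.0,
                       completion_rate=0.52)
for flag in benchmark_flags(week):
    print(flag)
```

In this example week, two flags fire: escalation is below 30% and escalated replies take longer than 24 hours.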

"If you have not gotten outbound to work with humans, buying an AI to do it will not fix that." - Jason Lemkin, SaaStr

The tools matter, but they only amplify what is already working. Start by getting your ICP, messaging, and escalation rules right. Then let AI scale what humans have proven works.

Frequently Asked Questions

What percentage of sales objections can AI SDRs handle without human help?

Most well-configured AI SDRs handle 60-70% of follow-up responses autonomously. These are pattern-based objections like timing delays, information requests, and routing questions. The remaining 30-40%, including competitive comparisons, pricing negotiations, and technical questions, should escalate to human reps.

How do you test an AI SDR's objection handling before buying?

Run a structured evaluation during your demo or pilot. Send the AI a "not interested" reply, a "tell me more" reply, and a complex competitive objection. Check whether it respects boundaries, provides contextual responses, and escalates appropriately. Then review 20 AI-generated follow-ups and score each for relevance, tone, and accuracy.

What is a good meeting-to-opportunity conversion rate for AI SDRs?

AI SDRs currently convert meetings to opportunities at around 15%, compared to 25% for human SDRs. This gap narrows with clean data, well-defined escalation rules, and a hybrid model where AI handles initial qualification and humans handle complex conversations. Companies using hybrid models see 2.8x more pipeline than those relying on AI alone.

How should AI SDRs handle "stop emailing me" responses?

Opt-out requests should trigger immediate, automatic suppression with zero human intervention required. This is non-negotiable. Any AI SDR tool that does not have built-in suppression and opt-out handling is a compliance and brand risk. Look for tools like Unify that classify unsubscribe responses automatically and remove prospects from all active sequences instantly.
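In practice, "immediate suppression" means two things: pull the prospect from every active sequence at once, and check a suppression list before every send. The sketch below uses a hypothetical in-memory model to show both. It is not Unify's or any vendor's implementation. A production system would persist the list and apply it across every channel.

```python
class OutreachState:
    """Toy in-memory model of active sequences plus a global suppression list."""

    def __init__(self) -> None:
        self.suppressed: set[str] = set()
        # sequence name -> set of enrolled prospect emails (lowercased)
        self.sequences: dict[str, set[str]] = {}

    def enroll(self, sequence: str, email: str) -> None:
        if email.lower() in self.suppressed:
            return  # never re-enroll someone who opted out
        self.sequences.setdefault(sequence, set()).add(email.lower())

    def handle_opt_out(self, email: str) -> None:
        """Suppress immediately and remove the prospect from every sequence."""
        email = email.lower()
        self.suppressed.add(email)
        for members in self.sequences.values():
            members.discard(email)

    def can_send(self, email: str) -> bool:
        # Checked right before every send, as a second line of defense.
        return email.lower() not in self.suppressed


state = OutreachState()
state.enroll("q2-outbound", "pat@example.com")
state.enroll("webinar-follow-up", "pat@example.com")
state.handle_opt_out("Pat@Example.com")
print(state.can_send("pat@example.com"))  # False
```

Checking the list at send time, not just at enrollment, catches messages that were already queued when the opt-out arrived.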

What is the ideal escalation rate for AI SDR follow-ups?

Aim for 30-40% of incoming replies to be escalated to human reps. An escalation rate below 30% suggests the AI is attempting responses it should not, risking poor prospect experiences. Above 40% means the AI is not handling enough volume to justify its cost. Monitor this metric weekly and adjust your escalation triggers based on response quality audits.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
