AI sales development representatives (AI SDRs) are one of the fastest-growing categories in B2B sales tech. The global AI SDR market was valued at $4.12 billion in 2025 and is projected to reach $15.01 billion by 2030, a CAGR of 29.5%, according to MarketsandMarkets. But that does not mean you should sign an annual contract next week.

What you need is a structured pilot. Thirty days, one ideal customer profile (ICP) segment, clear metrics, and a go/no-go decision framework at the end. This guide gives you the week-by-week plan to run an AI SDR pilot that produces real data, not just a gut feeling.

Why You Should Pilot Before You Commit

AI SDRs are still a new category. According to McKinsey's 2025 State of AI report, 23% of organizations are scaling agentic AI in at least one function, but fewer than 10% have achieved tangible results at scale. Most teams are still figuring out what works.

A 30-day pilot de-risks the investment in three specific ways:

- You get real performance data. Not vendor demos, not case studies from companies with different ICPs. Your data, your segment, your messaging.

- You build internal buy-in. When account executives (AEs) see meetings landing on their calendars from an AI SDR, skepticism drops fast.

- You avoid the sunk cost trap. A pilot costs a fraction of a full rollout. If it does not work, you walk away with learnings instead of regret.

SaaStr documented this approach publicly. After piloting AI SDRs across inbound and outbound, they scaled to 20+ AI agents and generated $1M+ in closed revenue within 90 days from a single inbound agent. Their outbound agents hit 6.7% response rates, roughly double the industry average. But they did not start there. They started with a focused pilot on a narrow segment.

Pre-Pilot: Setting Up for Success (Days 1-3)

Before you launch anything, spend three days getting the foundation right. Rushed onboarding is one of the most common reasons AI SDR pilots fail.

Define Success Criteria Upfront

Decide what metrics will determine your go/no-go decision before a single email goes out. This prevents moving the goalposts later. The metrics that matter most:

- Meetings booked per week compared to your human SDR baseline for the same segment

- Positive reply rate (not just opens or total replies, but replies that indicate genuine interest)

- Cost per meeting for the AI SDR versus the fully loaded cost of a human SDR

- AE satisfaction score (are the meetings being booked actually qualified?)

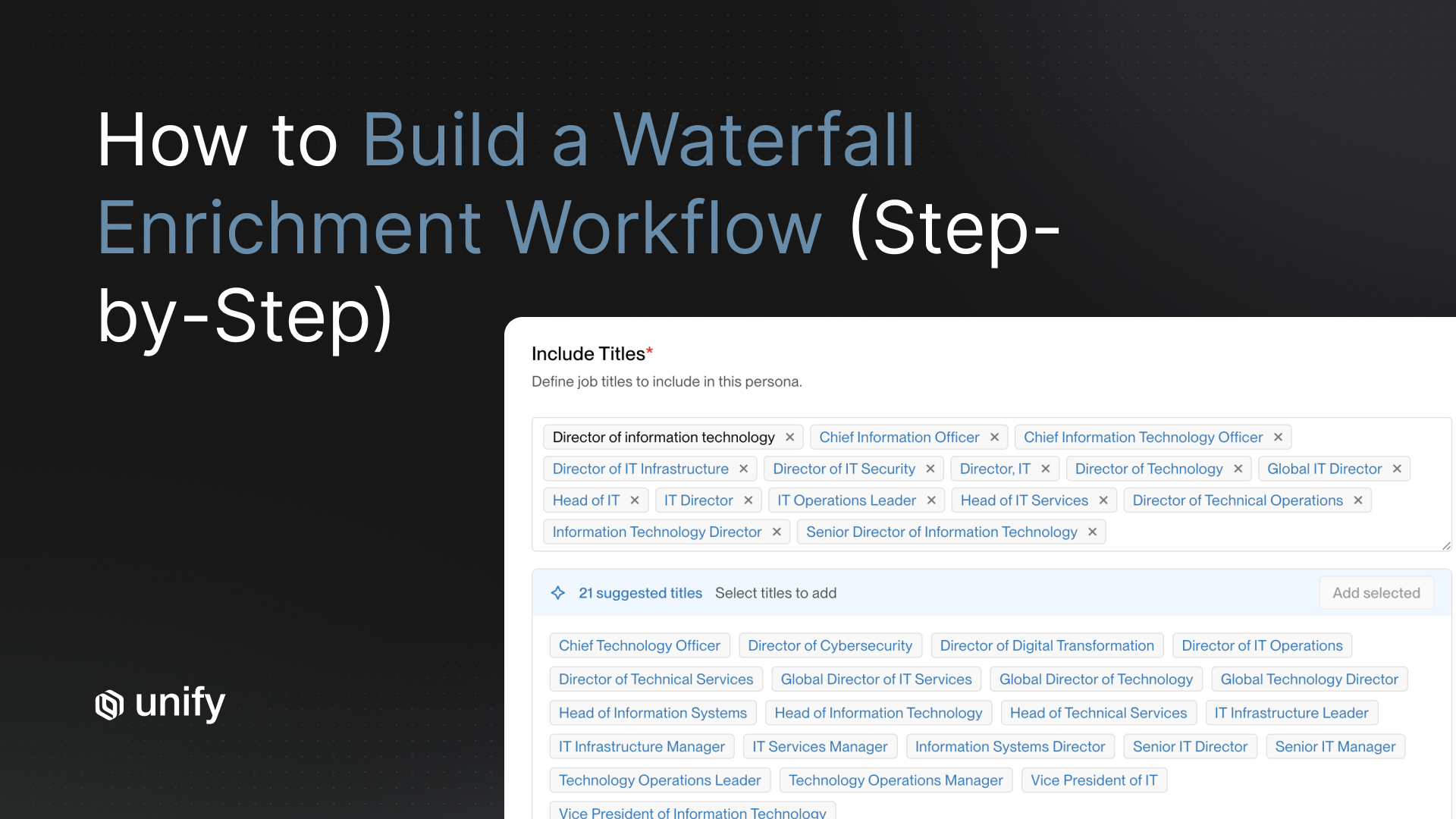

Select Your Pilot Scope

Pick one ICP segment and one territory. Target 500 to 1,000 prospects total. This is enough volume to draw meaningful conclusions without creating noise across your entire pipeline.

Establish Your Baseline

Pull your current human SDR metrics for the same segment you are piloting. If you do not have a baseline, the pilot results will be meaningless. You need to know your existing reply rate, meetings per week, cost per meeting, and average time from first touch to meeting booked.

Technical Setup

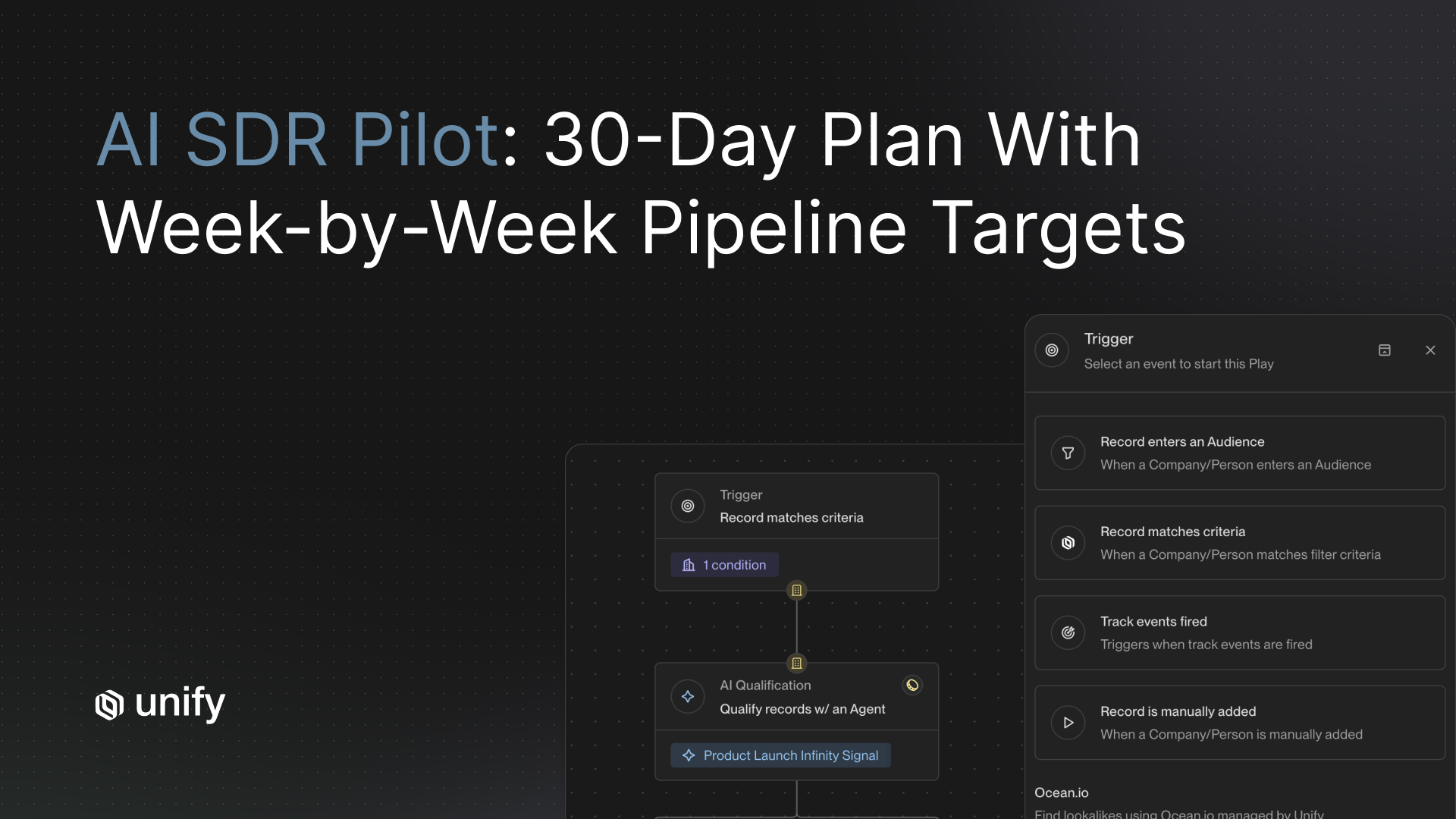

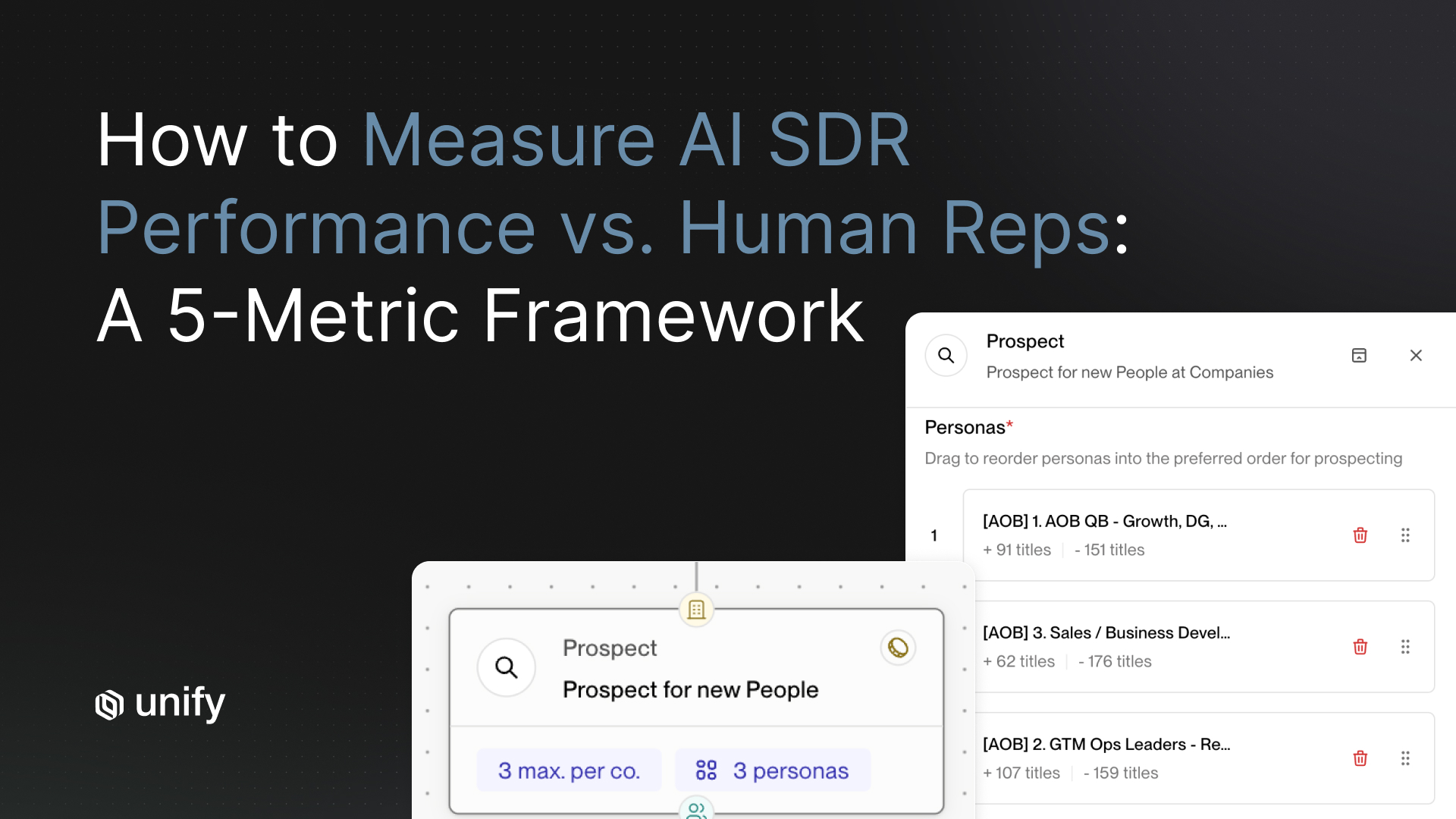

Get your CRM integration live, configure mailboxes, and start domain warming if you are using new sending domains. With a platform like Unify, this typically takes days rather than weeks because intent signals, AI agents, and sequencing are all built into one platform. There is no need to stitch together a separate data provider, enrichment tool, and sequencing tool before you can start.

Week 1: Launch and Learn (Days 4-10)

This is your crawl phase. You are not optimizing yet. You are validating that the system works and the output quality meets your standards.

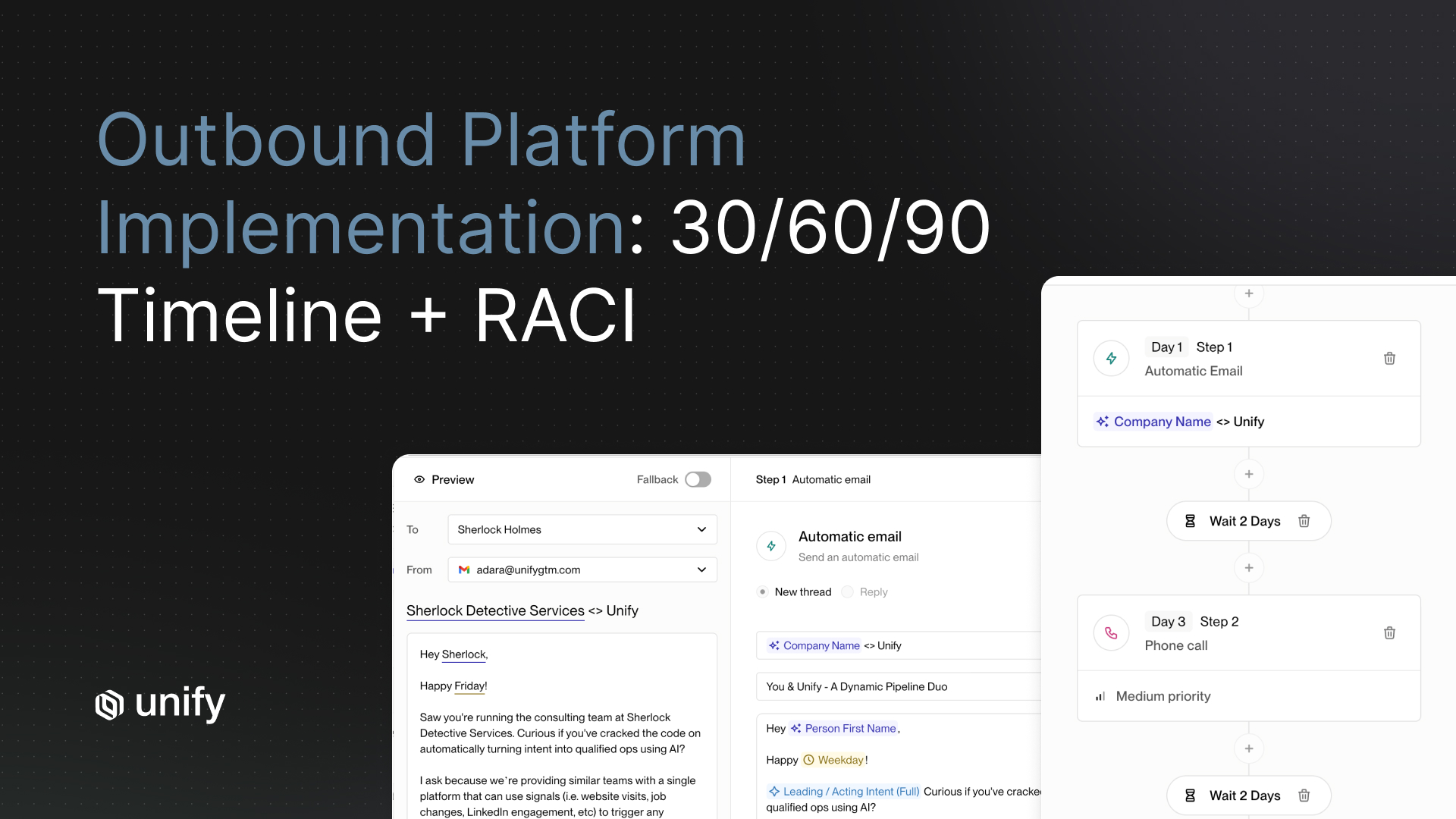

- Launch your first sequences on 200-300 prospects. Keep the volume low enough that you can review everything manually.

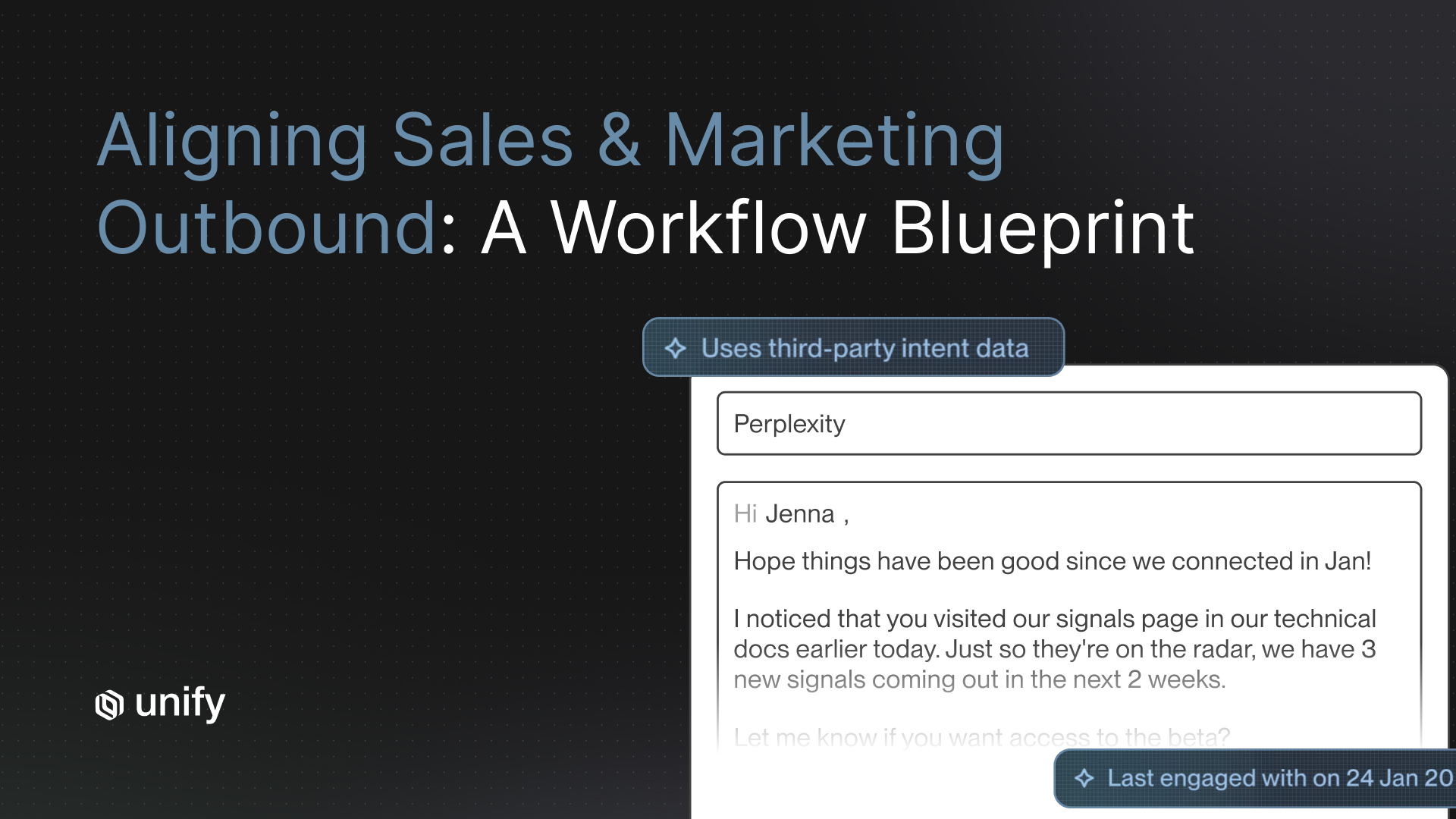

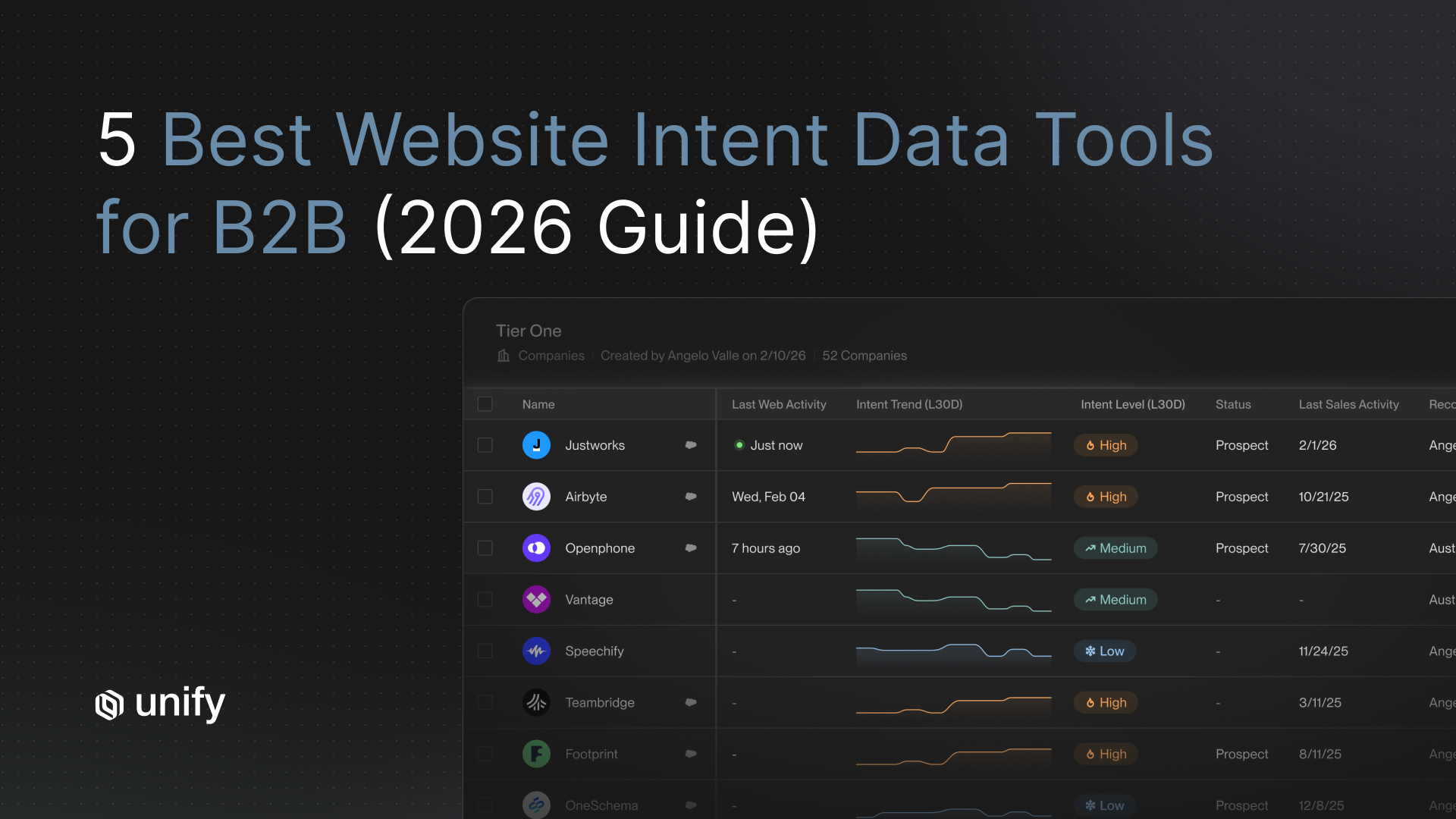

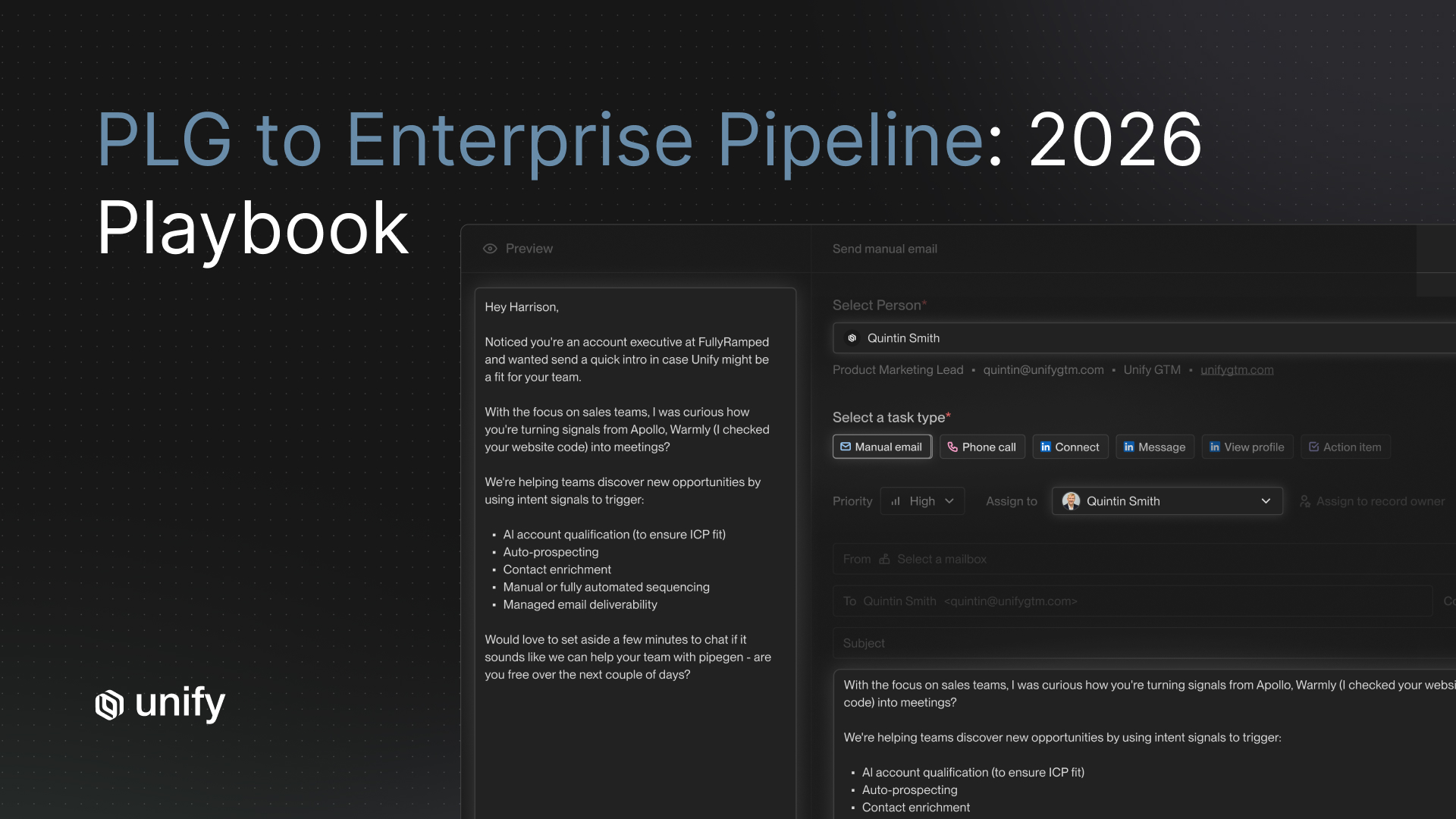

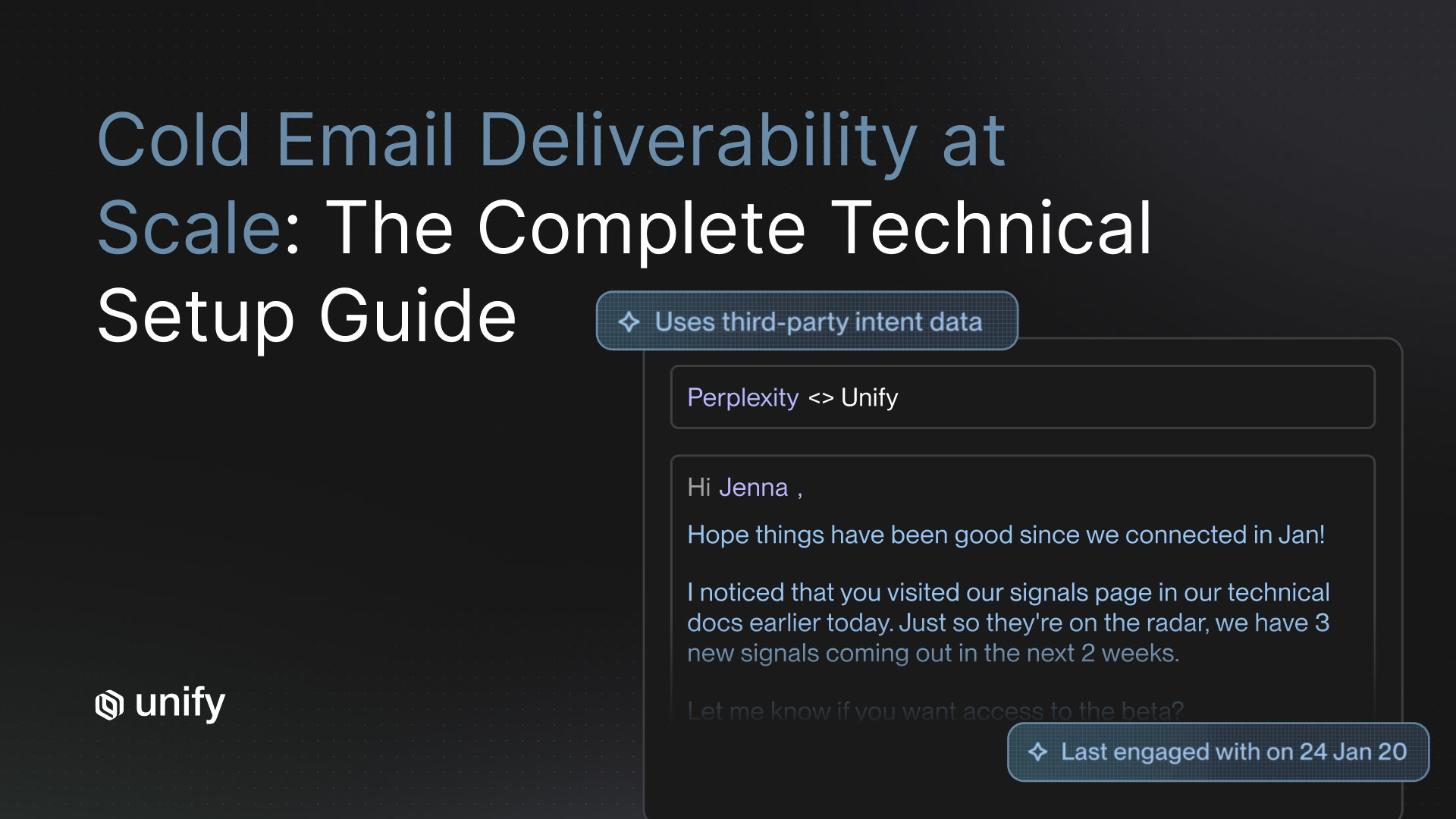

- Let the AI research each account and draft personalized outreach. This is where platforms differ significantly. Unify's AI agents pull from intent signals (website visits, job changes, funding events, technographic data) to write genuinely relevant emails, not just "I saw your LinkedIn" filler.

- Human-review the first 50 emails before approving auto-send. Check for accuracy, tone, relevance, and anything that would embarrass your brand. Flag patterns, not just individual emails.

- Track these Week 1 metrics: email quality score (your subjective rating), send rate, open rate, and bounce rate.

Do not panic if you see zero replies in Week 1. According to Prospeo's deployment data, the median time to first positive response is around 22 days. You are planting seeds this week.

Week 2: Optimize and Scale (Days 11-17)

Now you have a week of engagement data. Use it.

- Review opens, replies, and positive replies. Look for patterns. Which subject lines got opened? Which personalization angles got responses? Which segments are engaging more than others?

- Adjust your AI personalization parameters. If the AI is over-indexing on one type of research (say, recent news mentions) but under-using another (like tech stack data), tune it. On Unify, you can adjust agent instructions and the intent signals feeding your sequences without rebuilding anything from scratch.

- Expand to full pilot volume. Scale up to your full 500-1,000 prospect list.

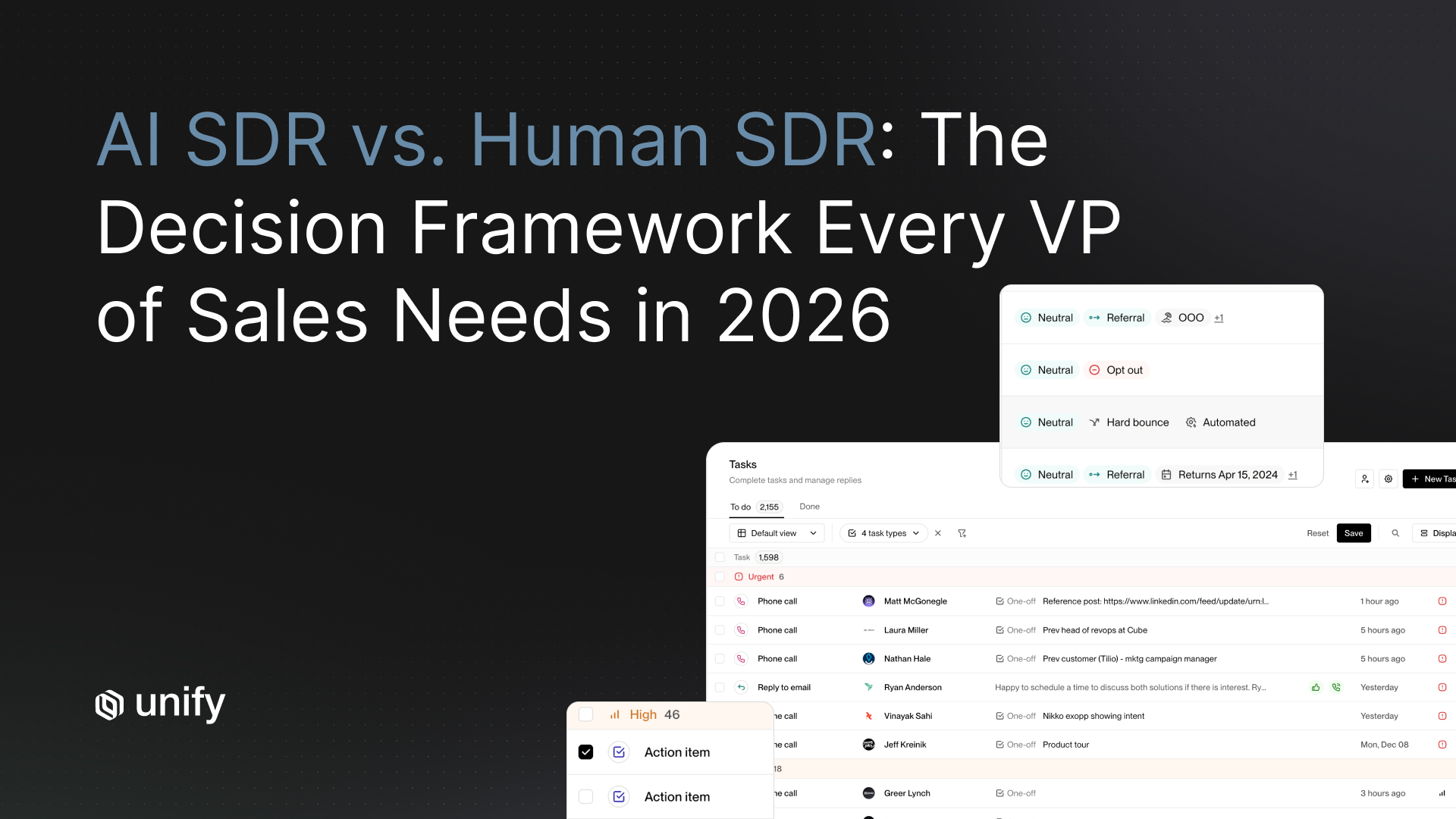

- Start tracking conversion metrics: meetings booked, response sentiment (positive vs. negative vs. neutral), and CRM activity logging accuracy. Make sure every touchpoint is syncing correctly to your CRM so you have clean data for the Week 3 comparison.

Week 3: Measure and Compare (Days 18-24)

This is the most important week of the pilot. You are running a direct comparison between AI SDR performance and your human SDR baseline.

The Head-to-Head Comparison

Pull your numbers across these five dimensions:

- Reply rate: AI SDR vs. human SDR

- Positive reply rate: AI SDR vs. human SDR

- Meetings booked: AI SDR vs. human SDR (normalized for volume)

- Cost per meeting: AI SDR total cost vs. human SDR fully loaded cost (salary, benefits, tools, management overhead)

- Time invested: total hours spent on setup, oversight, and optimization vs. the equivalent full-time SDR hours
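Normalizing for volume matters because the AI SDR and the human baseline rarely touch the same number of prospects. The sketch below is one way to do it, assuming you measure everything per 100 prospects contacted. The class name, field names, and sample numbers are hypothetical and for illustration only; use whichever denominator your baseline already uses.

```python
from dataclasses import dataclass


@dataclass
class PilotCounts:
    """Raw counts for one side of the comparison (hypothetical field names)."""
    prospects_contacted: int
    replies: int
    positive_replies: int
    meetings_booked: int


def per_100(count: int, prospects: int) -> float:
    """Express a raw count as a rate per 100 prospects contacted."""
    if prospects <= 0:
        raise ValueError("prospects must be positive")
    return 100.0 * count / prospects


def normalize(counts: PilotCounts) -> dict:
    """Return volume-normalized metrics for one side of the comparison."""
    n = counts.prospects_contacted
    return {
        "replies_per_100": per_100(counts.replies, n),
        "positive_replies_per_100": per_100(counts.positive_replies, n),
        "meetings_per_100": per_100(counts.meetings_booked, n),
    }


# Made-up numbers for illustration only, not benchmark data.
ai = normalize(PilotCounts(800, 48, 16, 8))
human = normalize(PilotCounts(400, 20, 8, 4))
for metric in ai:
    print(f"{metric}: AI {ai[metric]:.1f} vs human {human[metric]:.1f}")
```

In this made-up case the AI SDR books twice as many raw meetings, but per 100 prospects the two are equal, which is the comparison that matters.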

What AI Typically Does Better

- Volume and consistency. An AI SDR sends personalized outreach at a scale no human team can match, and it never has an off day.

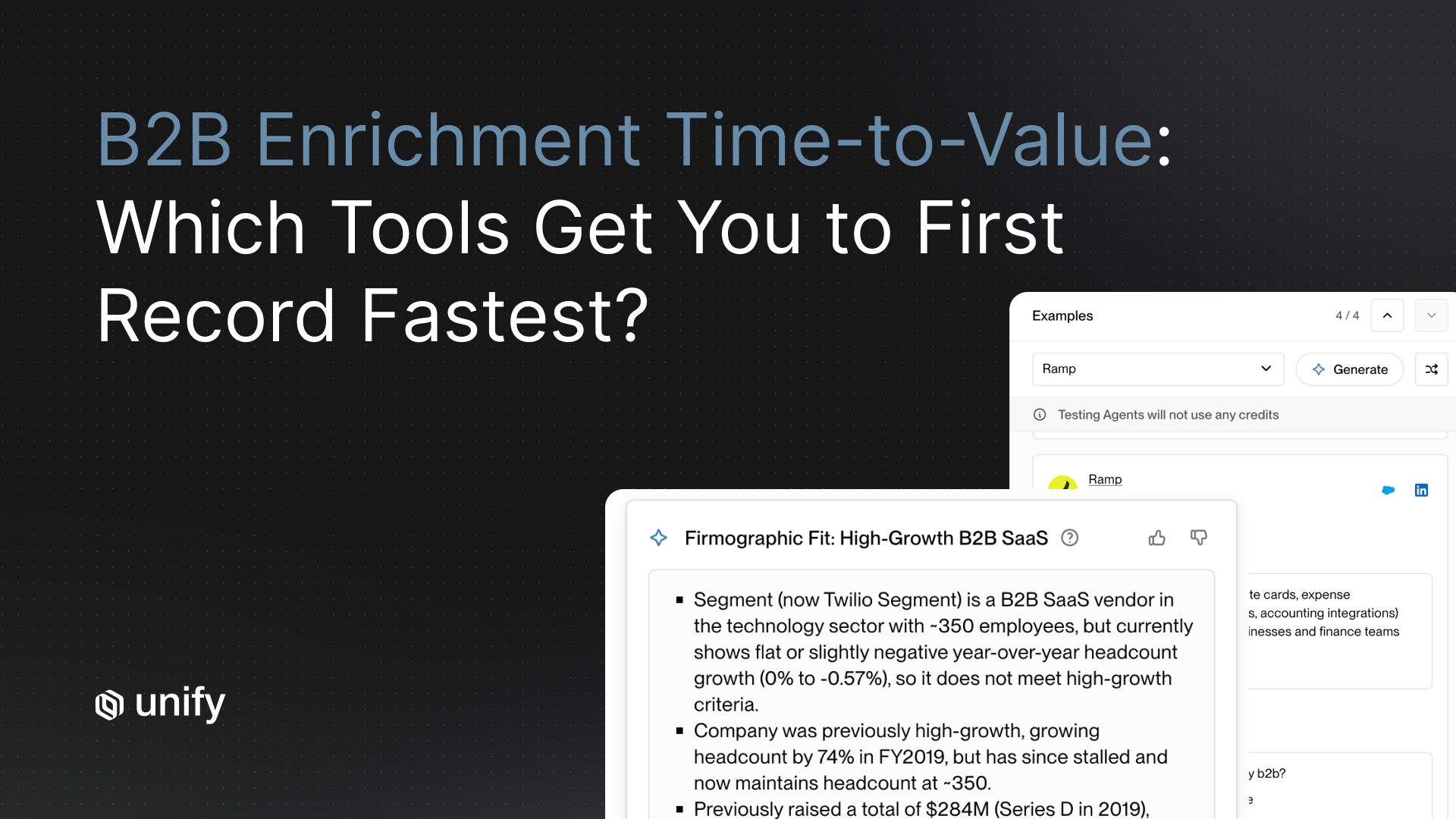

- Research depth. Platforms like Unify pull from 25+ intent signals per prospect, synthesizing data that would take a human SDR 15-20 minutes per account to gather manually.

- Speed to lead. AI responds to intent signals in real time. Industry benchmarks show AI SDRs cut initial response times from several hours to under 60 seconds, regardless of when a lead comes in.

What AI Typically Does Worse

- Nuanced objection handling. When a prospect raises a complex, multi-layered objection, AI still struggles compared to experienced reps.

- Relationship depth. AI cannot build rapport at a dinner or read the room during a conference hallway conversation.

- Edge cases. Unusual situations (non-standard buying processes, highly political deals) still need human judgment.

Week 4: Decision Time (Days 25-30)

By now you have three weeks of real data. Time to make the call.

Go/No-Go Scoring Rubric

Score each dimension on a 1-5 scale, where 3 means "matches human SDR baseline" and 5 means "significantly exceeds baseline."

- Meetings booked (weight: 30%) - Did the AI SDR book meetings at or above your baseline rate?

- Meeting quality (weight: 25%) - Are AEs satisfied with the meetings? Are they converting to pipeline?

- Cost efficiency (weight: 20%) - Is the cost per meeting lower than your human SDR cost?

- Reply rate (weight: 15%) - How does engagement compare to your baseline?

- Operational effort (weight: 10%) - How much human oversight did the pilot require?

Three Possible Outcomes

- Go (weighted score 3.5+): AI meets or exceeds your baseline. Build a rollout plan. Start with 1-2 additional ICP segments, then expand quarterly.

- Iterate (weighted score from 2.5 up to, but not including, 3.5): AI shows promise but needs tuning. Extend the pilot two more weeks with specific adjustments. Common fixes include tightening ICP targeting, refining personalization prompts, or adjusting follow-up cadence.

- No-go (weighted score below 2.5): AI significantly underperforms. Document what you learned, share findings with the team, and re-evaluate in six months as the technology matures.
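The rubric and thresholds above can be sketched as a small calculation. This is a minimal illustration, not a prescribed tool: the dictionary keys and function names are hypothetical, the sample scores are made up, and any score between 2.5 and 3.5 is treated as "iterate".

```python
# Weights from the rubric; they sum to 1.0.
WEIGHTS = {
    "meetings_booked": 0.30,
    "meeting_quality": 0.25,
    "cost_efficiency": 0.20,
    "reply_rate": 0.15,
    "operational_effort": 0.10,
}


def weighted_score(scores: dict) -> float:
    """Combine 1-5 scores per dimension into a single weighted score."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the rubric dimensions")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    # Round to avoid floating-point noise right at a threshold.
    return round(total, 2)


def decide(score: float) -> str:
    """Map a weighted score to go / iterate / no-go."""
    if score >= 3.5:
        return "go"
    if score >= 2.5:
        return "iterate"
    return "no-go"


# Made-up scores for illustration only.
example = {
    "meetings_booked": 4,
    "meeting_quality": 3,
    "cost_efficiency": 4,
    "reply_rate": 3,
    "operational_effort": 2,
}
score = weighted_score(example)
print(score, decide(score))
```

With these sample scores the weighted total is 3.4, which lands in the "iterate" band even though two dimensions beat the baseline.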

Why Unify Is the Fastest Way to Pilot

Most AI SDR pilots fail not because the technology does not work, but because the setup takes too long and involves too many tools. You end up spending your entire pilot window just getting integrations to talk to each other.

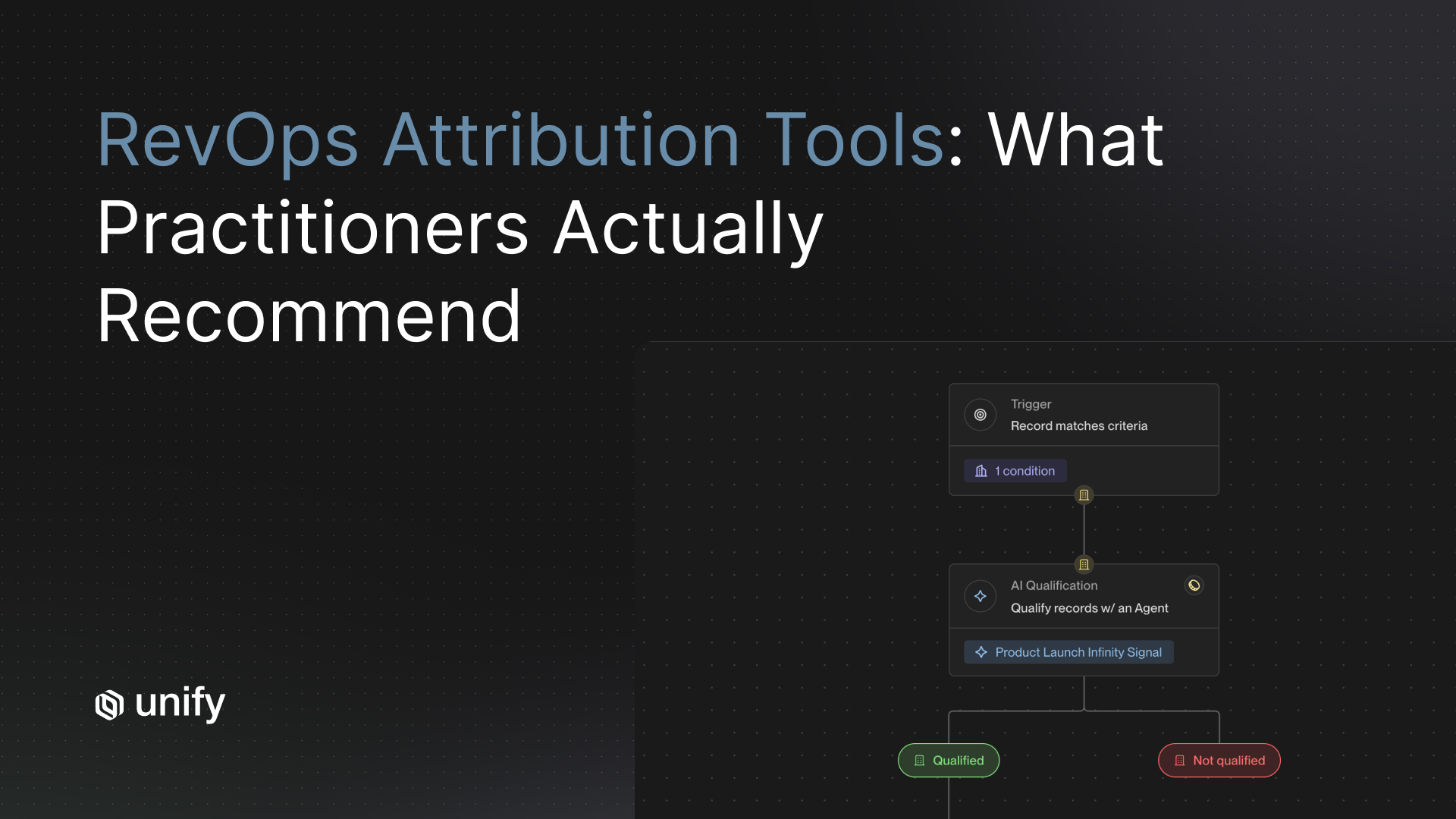

Unify solves this by consolidating intent signals, AI research agents, and outbound sequences into a single platform. Here is what that means for your pilot:

- Launch in days, not weeks. No separate data provider, enrichment tool, or sequencing platform to configure. One platform, one setup.

- Built-in intent signals from day one. Your pilot targets are not cold. Unify surfaces accounts showing buying signals (website visits, job changes, funding rounds, tech installs) so you are reaching prospects who are already in-market.

- AI agents that do the research for you. Unify's agents analyze each prospect across multiple data points and draft personalized outreach. Your team reviews and approves rather than writing from scratch.

- Native CRM sync. Every touchpoint logs directly to Salesforce or HubSpot. Your pilot data is immediately available for the Week 3 comparison without manual data entry or CSV exports.

- One bill, one vendor. No juggling credits across three or four different tools. Your pilot cost is predictable and easy to calculate for the cost-per-meeting comparison.

Pilot Metrics Dashboard Template

Track these numbers throughout your pilot, some daily and some weekly. You can build this in a simple spreadsheet or your CRM dashboard.

Daily Tracking

- Emails sent: total outbound messages per day

- Open rate: percentage of emails opened

- Reply rate: percentage of emails that received any reply

- Positive reply rate: percentage of replies expressing interest

- Meetings booked: confirmed meetings on AE calendars
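If you keep the daily log as raw counts, the rates can be derived rather than typed in by hand. The sketch below assumes open and reply rates are measured against emails sent, and positive reply rate against total replies. The field names and sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DailyLog:
    """One day of raw counts (hypothetical field names)."""
    emails_sent: int
    emails_opened: int
    replies: int
    positive_replies: int
    meetings_booked: int


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is nothing to divide by."""
    if denominator == 0:
        return 0.0
    return round(100.0 * numerator / denominator, 1)


def daily_rates(log: DailyLog) -> dict:
    """Derive the daily dashboard rates from raw counts."""
    return {
        "open_rate": pct(log.emails_opened, log.emails_sent),
        "reply_rate": pct(log.replies, log.emails_sent),
        "positive_reply_rate": pct(log.positive_replies, log.replies),
    }


# Made-up numbers for illustration only.
rates = daily_rates(DailyLog(200, 90, 10, 4, 1))
print(rates)
```

Storing counts instead of percentages also lets you recompute cumulative rates for the weekly view without averaging averages.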

Weekly Tracking

- Total meetings booked (cumulative)

- Pipeline created (dollar value of opportunities from pilot meetings)

- AE feedback score (1-5 rating on meeting quality)

- Oversight hours (time your team spent reviewing, adjusting, and managing the AI)

Cost-Per-Meeting Calculation

AI SDR cost per meeting: (monthly platform cost + oversight hours × hourly rate) / meetings booked in the same month

Human SDR cost per meeting: (monthly salary + benefits + tools + management overhead) / meetings booked in the same month

Compare these two numbers at the end of Week 3. If the AI SDR cost per meeting is lower and meeting quality is comparable, you have a strong economic case for scaling.
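As a worked example, the two formulas can be expressed as a short script. The function and parameter names are hypothetical and every dollar figure is made up for illustration; plug in your own monthly numbers.

```python
def ai_cost_per_meeting(platform_cost: float, oversight_hours: float,
                        hourly_rate: float, meetings: int) -> float:
    """(monthly platform cost + oversight hours x hourly rate) / meetings booked."""
    if meetings <= 0:
        raise ValueError("meetings must be positive")
    return (platform_cost + oversight_hours * hourly_rate) / meetings


def human_cost_per_meeting(salary: float, benefits: float, tools: float,
                           management_overhead: float, meetings: int) -> float:
    """(monthly salary + benefits + tools + management overhead) / meetings booked."""
    if meetings <= 0:
        raise ValueError("meetings must be positive")
    return (salary + benefits + tools + management_overhead) / meetings


# Made-up monthly figures for illustration only.
ai_cost = ai_cost_per_meeting(platform_cost=3000, oversight_hours=20,
                              hourly_rate=50, meetings=10)
human_cost = human_cost_per_meeting(salary=6000, benefits=1500, tools=500,
                                    management_overhead=1000, meetings=15)
print(f"AI SDR: ${ai_cost:.2f} per meeting")
print(f"Human SDR: ${human_cost:.2f} per meeting")
```

Both functions reject a meeting count of zero, because a cost per meeting is undefined until at least one meeting has been booked.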

Frequently Asked Questions

How many prospects should I include in an AI SDR pilot?

Target 500 to 1,000 prospects from a single ICP segment. This volume gives you enough data to draw meaningful conclusions while keeping the scope manageable for manual oversight during the first week. Start with 200-300 in Week 1 and expand to the full list in Week 2.

What is a realistic timeline for an AI SDR pilot to show results?

Expect your first positive replies around day 18-22, with meetings following shortly after. A full 30-day pilot gives you enough data for a confident go/no-go decision. Some organizations extend to 45 days if they need more volume. Do not judge the pilot based on Week 1 data alone.

How do I calculate the ROI of an AI SDR pilot?

Compare the cost per meeting between your AI SDR and your human SDR baseline. Factor in platform costs, setup time, and ongoing oversight hours for the AI SDR. For the human SDR, include fully loaded costs: salary, benefits, tools, training, and management overhead. The AI SDR typically wins on volume and cost efficiency, while the comparison on meeting quality varies by segment and use case.

What are the most common reasons AI SDR pilots fail?

The top three reasons are poor data quality (bad contact data in, bad outreach out), undefined success criteria (no baseline means no way to evaluate results), and too much setup complexity from stitching together multiple tools. Choosing a consolidated platform like Unify that bundles intent data, AI agents, and sequencing eliminates the third problem entirely.

Can I run an AI SDR pilot alongside my existing human SDR team?

Yes, and you should. The best pilots run the AI SDR on an ICP segment or territory that your human SDRs are not actively working during the pilot, so results do not overlap with human SDR activity. Comparing against the historical human baseline for that segment gives you a clean comparison. Make sure your CRM is configured to attribute meetings correctly to the AI SDR versus human reps to avoid double-counting.

Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
