An SDR AI training plan is a structured program that helps sales development representatives adopt AI personalization tools as part of their daily outbound workflow, covering everything from initial change management to long-term habit formation. Most rollouts skip this and fail. Leadership buys the tool, sends a Slack message, and wonders six weeks later why usage has flatlined. Adoption fails not because reps are resistant to technology but because nobody treated the rollout as a change management project.

Getting your team to genuinely use AI personalization tools, in ways that move the needle on reply rates and booked meetings, takes more than a one-hour training session. This guide walks through a practical plan built around how reps actually think and work.

"We believe that human-to-human interactions aren't going away. We're leveraging AI to automate 90% of the busywork and give reps the ability to move with velocity and intelligence that wasn't previously possible." — Austin Hughes, Co-Founder and CEO, Unify

Key takeaways

- Only 23% of cold callers use AI tools extensively, with another 49% using them occasionally. Most rollouts stall in that "occasional" bucket. (HubSpot)

- The three root causes of AI adoption failure are trust gaps, workflow friction, and no visible feedback loop.

- Train on one workflow at a time. Depth before breadth is the fastest path to habitual use.

- Signal context shown alongside the email draft is the single biggest driver of rep trust in AI output.

- Spellbook increased email open rates from 19-25% to 70-80% after rolling out AI-assisted outbound with Unify. (Unify customer story)

Why AI personalization adoption breaks down

Before you build a training plan, it helps to understand the real blockers. In our work with fast-growing sales teams, resistance usually comes from one of three places:

- Trust gaps: Reps don't believe the AI output is good enough to send. They've seen enough generic AI slop that they assume every draft will need full rewrites, so they skip the tool entirely.

- Workflow friction: If using the AI tool requires jumping between three different tabs, pulling up context manually, and then copy-pasting into a sequence, most reps will default to their existing workflow just because it's faster.

- No visible feedback loop: Reps can't tell whether AI-assisted emails perform better than their manual ones, so the tool never builds credibility with them over time.

According to HubSpot's research on sales technology adoption, only 23% of cold callers use AI tools extensively, with another 49% using them occasionally. That "occasional" bucket is where most rollouts stall. Reps try the tool once or twice, it doesn't feel worth the extra steps, and usage drops off.

The goal of a good SDR AI training plan is to get reps past that initial friction fast, before bad habits form.

Step 1: Start with outcomes, not features

The first mistake most sales enablement teams make is leading with the product demo. Don't. Lead with the outcome your reps care about: booking more meetings without spending more hours on research.

Frame the training around a specific, concrete improvement. Something like: "Reps who use AI personalization consistently are averaging X more replies per hundred emails sent than those relying on templates." You want a number your team can anchor to.

If you don't have your own data yet, you can build the case from what's already published. HubSpot's sales statistics report found that sales professionals incorporating sales enablement content in their approach are 58% more likely to exceed their quota targets. AI personalization tools, when used well, sit at the center of that.

Only after you've made the case should you walk through the mechanics of how the tool works.

Step 2: Pick one workflow and go deep on it

Don't try to train your team on every feature at once. AI personalization platforms can do a lot: generating subject lines, writing opening hooks, pulling in company news, summarizing CRM history. If you demo all of it on day one, nobody remembers anything.

Pick one workflow that maps to your highest-volume play and build the training around that. For most SDR teams, that's the cold outbound first touch. The goal is to get reps to a point where they can go from signal to sent email in under three minutes, using AI research and AI-generated snippets to do the personalization work.

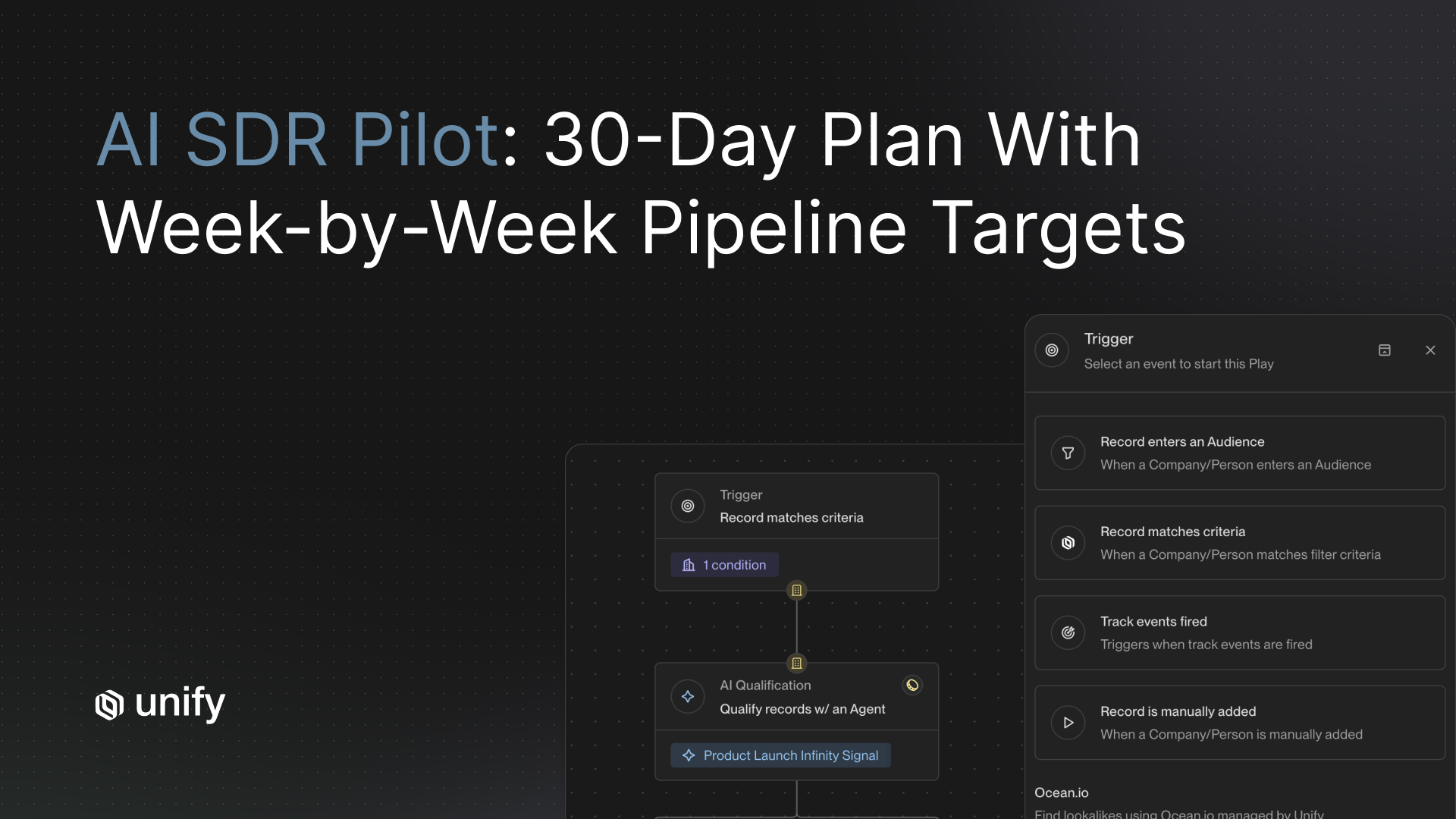

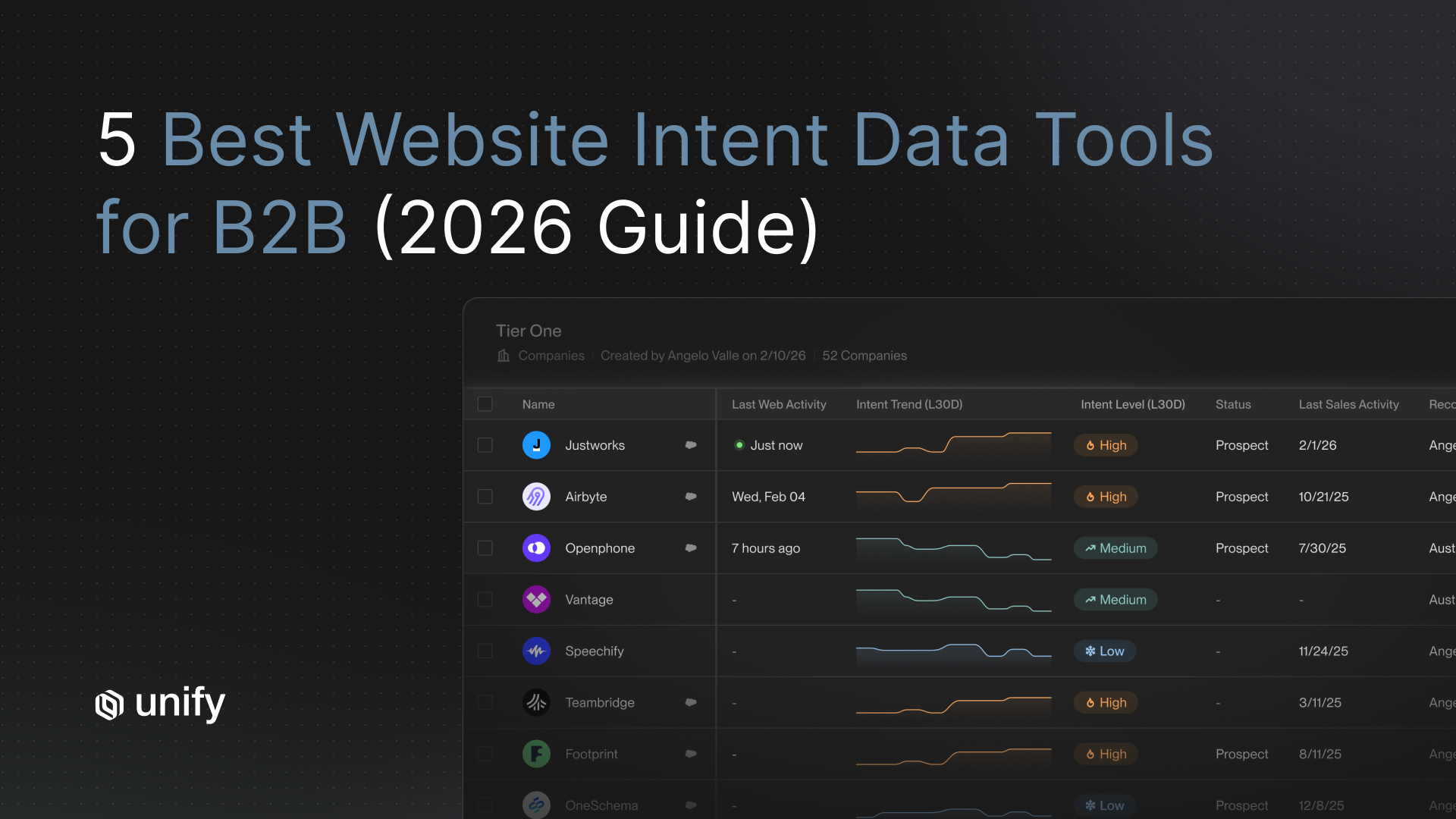

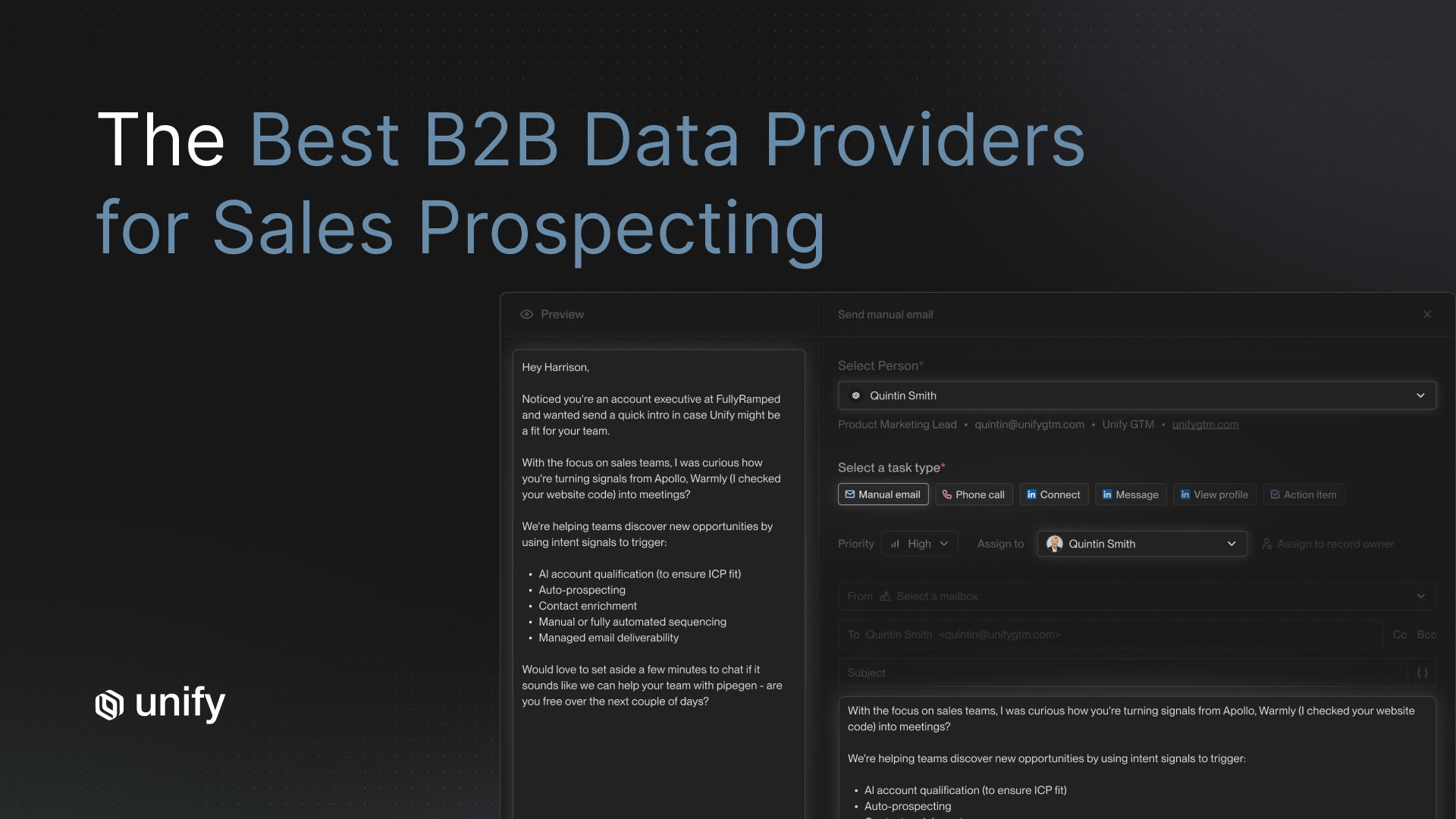

For teams using Unify, this workflow is built into the product. Signal context sits next to the draft, so reps don't have to go find it. The AI Research assistant gathers prospect context from news, social, and CRM history and puts it directly in the task view. Reps see why they're reaching out and what to say, without switching tabs.

Train to mastery on one workflow. Add the next once that one is habitual.

Step 3: Build in a review loop, not an approval bottleneck

One of the best things you can do to accelerate AI adoption is give reps a fast, low-friction way to review AI output before it sends. This sounds obvious, but a lot of teams either skip the review step entirely (which kills trust when a bad email goes out) or make it so cumbersome that reps abandon the AI-generated draft anyway.

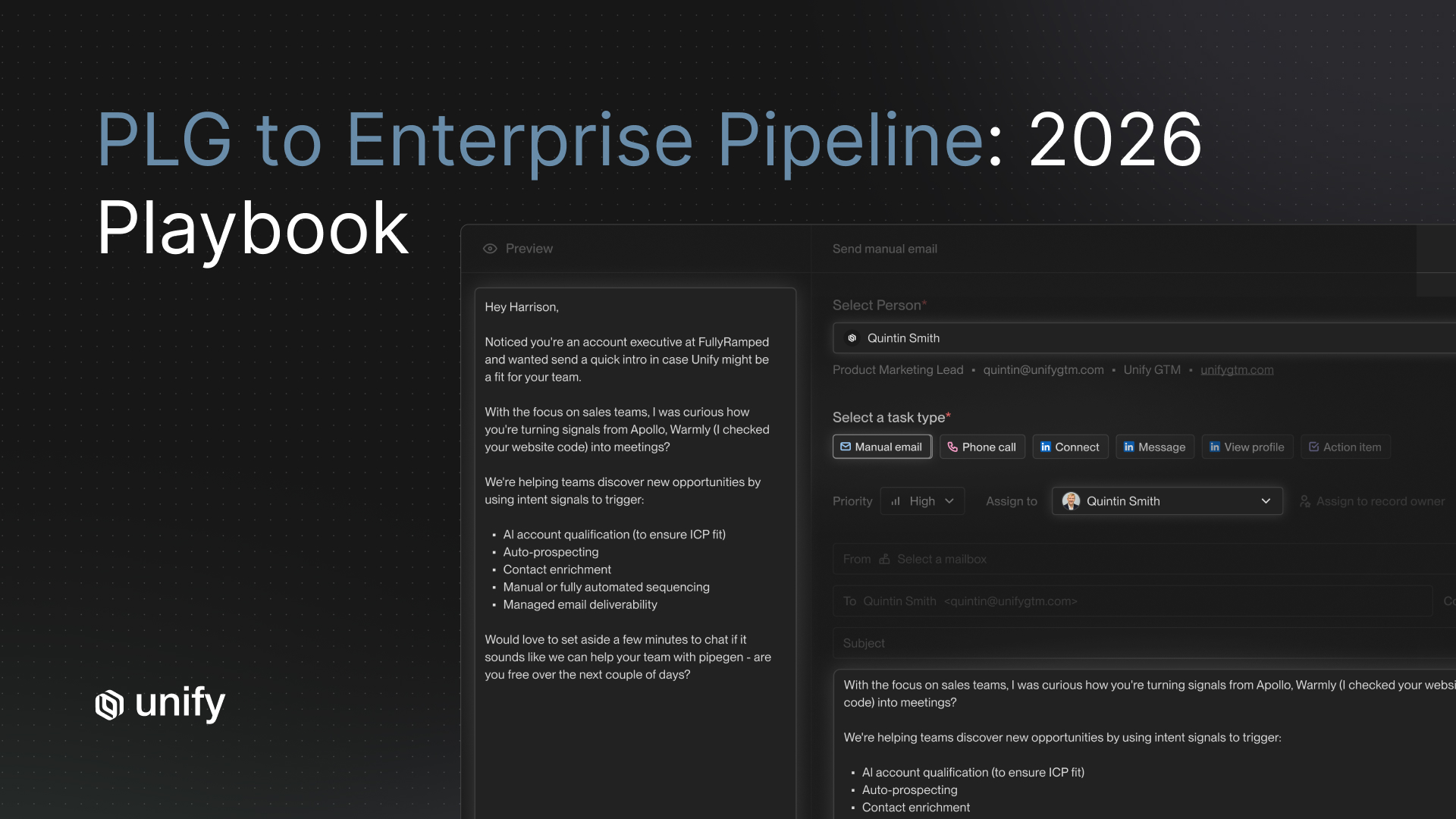

The goal is a review interface that actually speeds reps up. That means the AI-drafted content, the signal context that informed it, and the prospect's background are all visible in one place. Reps should be able to read the draft, make a quick edit if needed, and approve in seconds.

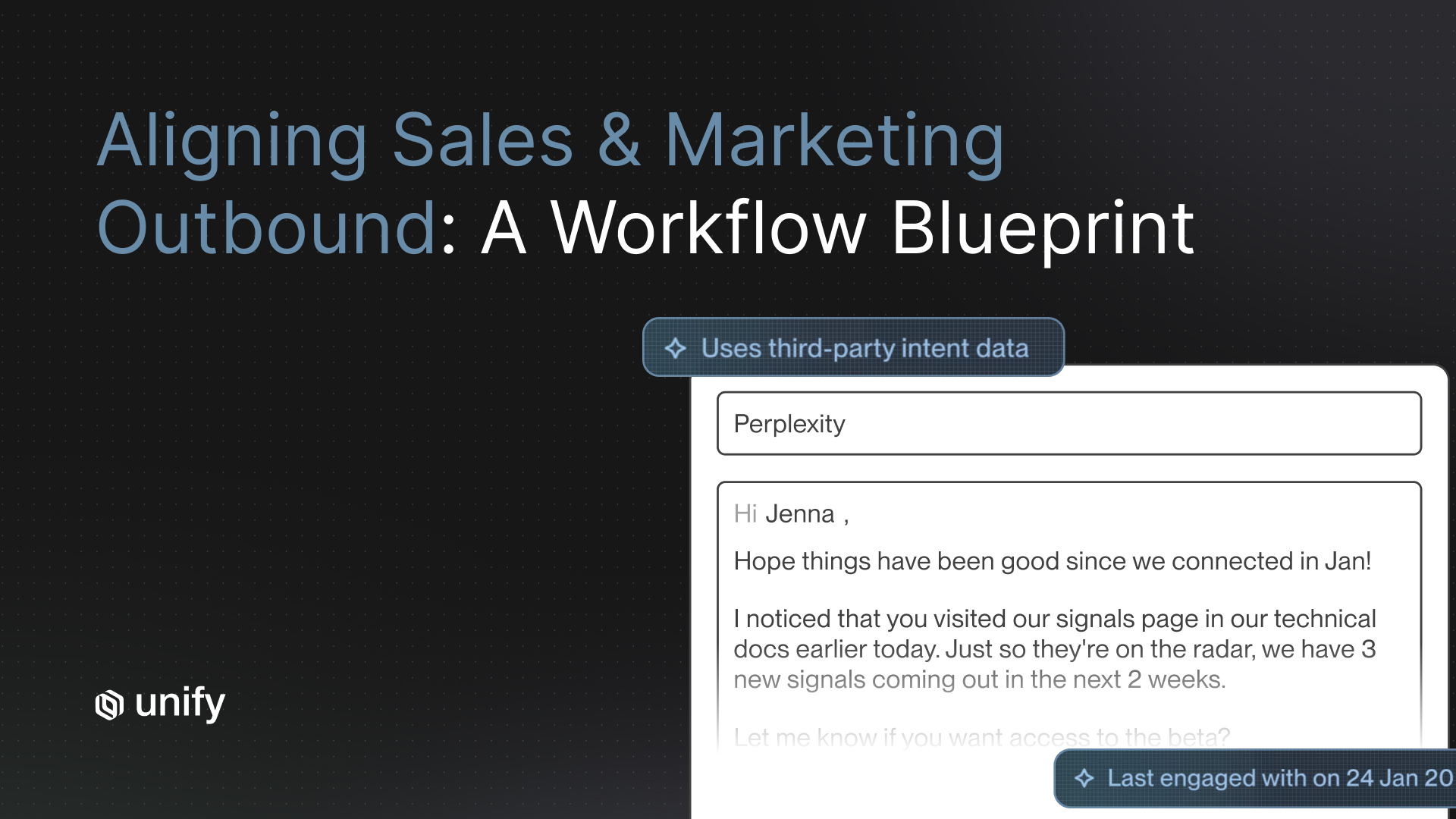

Unify's AI Personalization is built for exactly this. Reps can preview AI snippets inline, see the research the AI used to generate the message, and make edits without leaving the sequence workflow. The AI also learns from those edits over time, so output quality improves the more reps use it. That feedback loop is what turns initial skepticism into a tool reps actually want to use.

The key design principle: human oversight should feel like a quality check, not a second job.

Step 4: Show reps how context drives quality

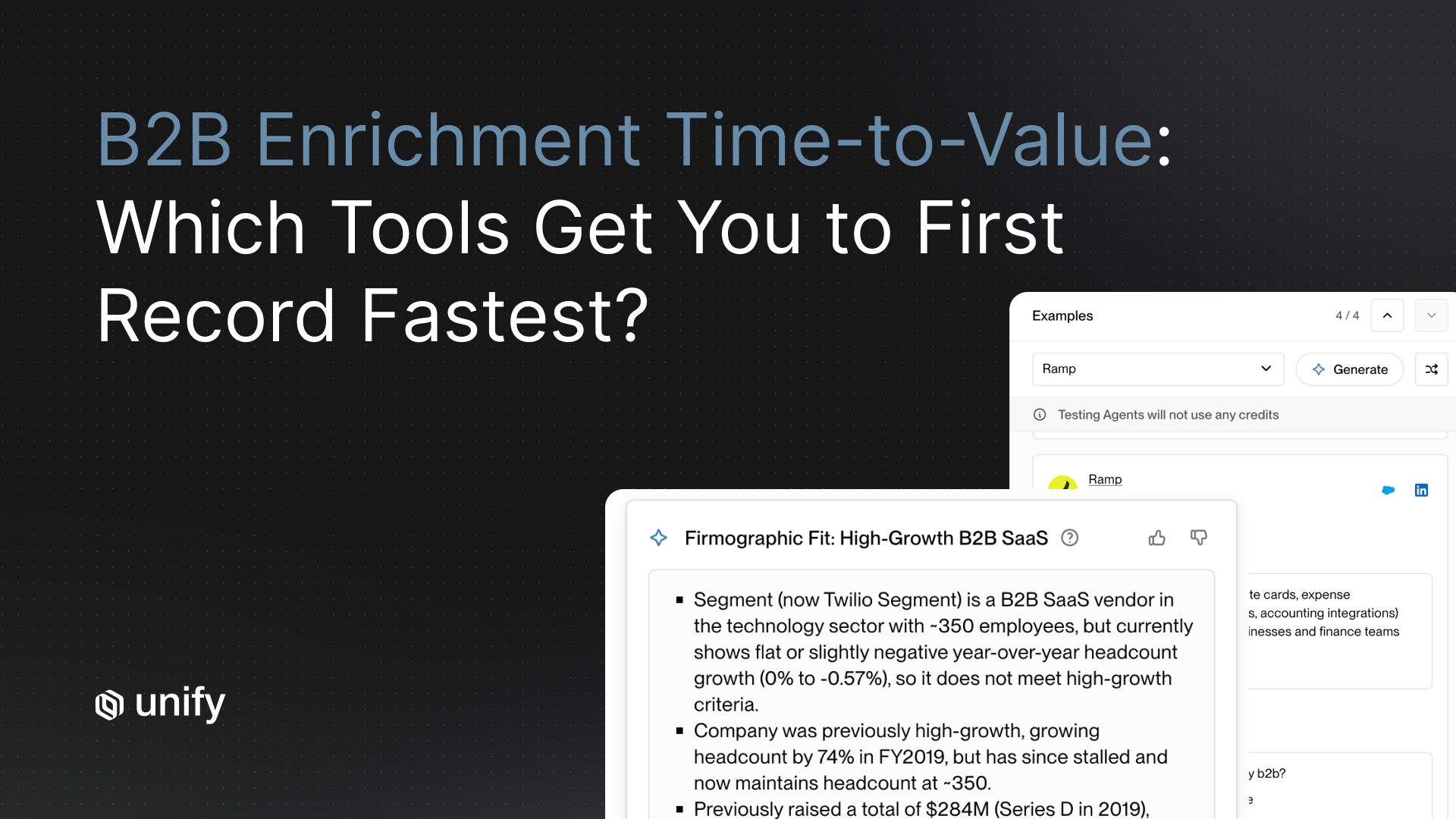

Most reps who distrust AI-generated email haven't seen what good AI output actually looks like. The difference is almost always the quality of the input signal.

A training session that shows side-by-side examples is worth more than any documentation. Build two examples: one email generated without signal context (generic, forgettable), and one generated with strong signal context (recent funding round, new hire in the buying persona, pricing page visit). The second email almost always looks like something a top rep would write, because it has the same information a top rep would have.

This matters because it shifts the mental model. Reps stop thinking of AI as "the thing that writes generic emails" and start thinking of it as "the thing that turns research into words faster than I can." That's the mental model that drives real adoption.

With Unify, signal context is shown directly alongside every email draft. If a prospect visited the pricing page twice last week, that's visible to the rep. If there was a leadership hire at the account, that's in the research summary. Reps can see exactly what the AI knew when it wrote the draft, which makes them confident enough to approve it or informed enough to improve it.

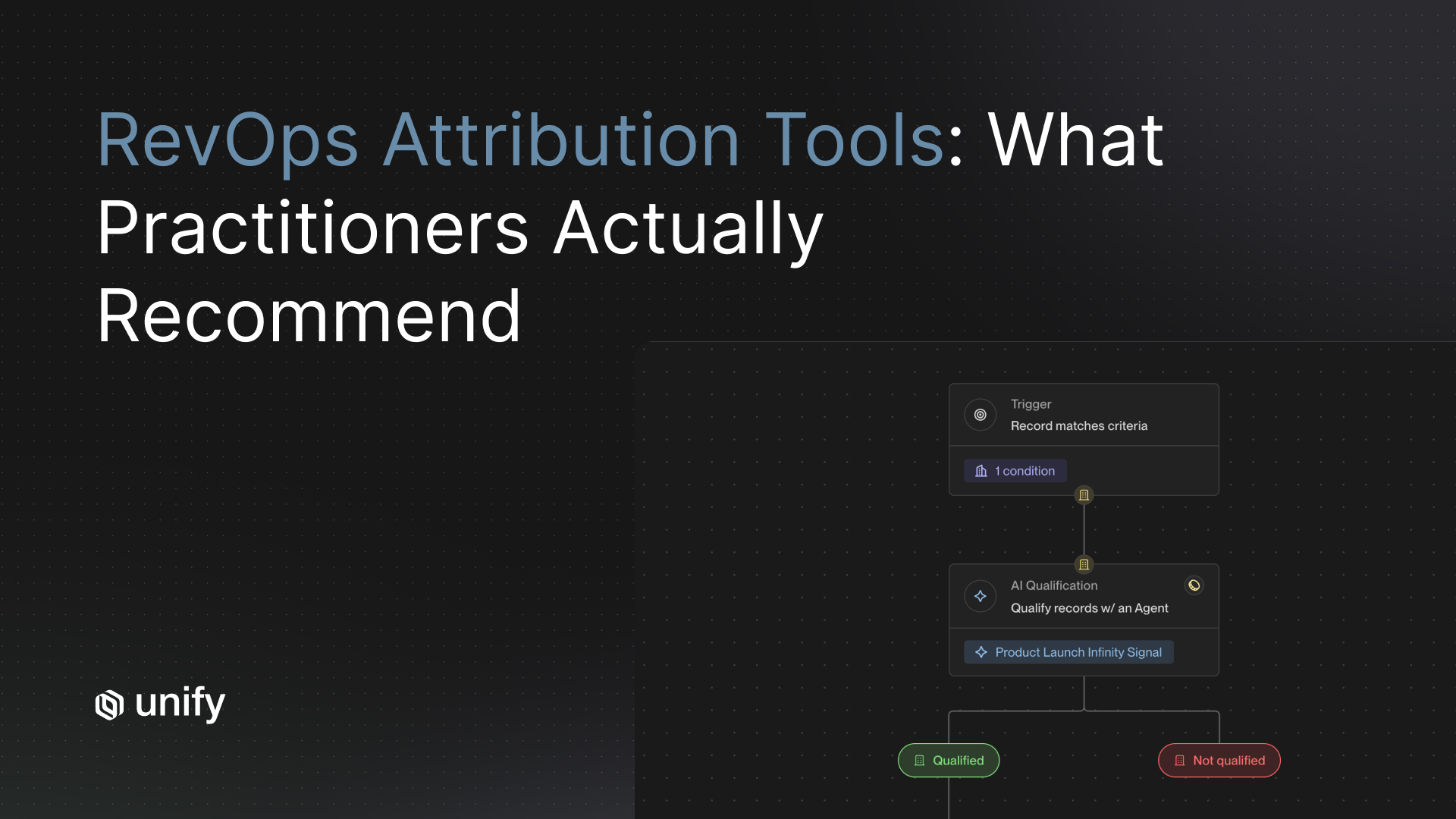

Step 5: Track the right metrics during ramp

The metrics you track during the first 30 days send a signal to your team about what you actually care about. If you track activity volume (emails sent), reps will optimize for volume. They'll approve AI drafts without reading them, which tanks deliverability and reply rates.

Track outcomes instead: reply rate, positive reply rate, and meetings booked per rep per week. Compare reps using AI personalization consistently against those who aren't. When those numbers diverge, share them in the team standup. Real performance data builds credibility faster than any manager endorsement.
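One way to run that comparison is a short script over weekly per-rep numbers. The sketch below is a minimal illustration in Python, not a Unify feature: the `RepWeek` record, the `uses_ai` flag, and the sample figures are all hypothetical. It assumes you can export emails sent, replies, positive replies, and meetings booked per rep per week from your sequencing tool or CRM.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RepWeek:
    """One rep's outbound numbers for one week (hypothetical export format)."""
    rep: str
    uses_ai: bool            # assumption: you tag reps who use AI personalization consistently
    emails_sent: int
    replies: int
    positive_replies: int
    meetings_booked: int


def summarize(rows: list[RepWeek]) -> dict[str, float]:
    """Aggregate outcome metrics for a cohort of rep-weeks."""
    sent = sum(r.emails_sent for r in rows)
    if not rows or sent == 0:
        # Empty cohort or no sends: report zeros rather than dividing by zero.
        return {
            "reply_rate_pct": 0.0,
            "positive_reply_rate_pct": 0.0,
            "meetings_per_rep_week": 0.0,
        }
    return {
        # Replies per hundred emails sent.
        "reply_rate_pct": 100 * sum(r.replies for r in rows) / sent,
        "positive_reply_rate_pct": 100 * sum(r.positive_replies for r in rows) / sent,
        # Each row is one rep for one week, so divide by the row count.
        "meetings_per_rep_week": sum(r.meetings_booked for r in rows) / len(rows),
    }


def compare_cohorts(rows: list[RepWeek]) -> tuple[dict[str, float], dict[str, float], float]:
    """Return (AI cohort, template cohort, extra replies per hundred emails)."""
    ai = summarize([r for r in rows if r.uses_ai])
    template = summarize([r for r in rows if not r.uses_ai])
    extra_replies_per_100 = ai["reply_rate_pct"] - template["reply_rate_pct"]
    return ai, template, extra_replies_per_100


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    sample = [
        RepWeek("rep_a", True, 120, 11, 5, 3),
        RepWeek("rep_b", True, 100, 9, 4, 2),
        RepWeek("rep_c", False, 150, 6, 2, 1),
        RepWeek("rep_d", False, 130, 5, 1, 1),
    ]
    ai, template, extra = compare_cohorts(sample)
    print("AI-assisted:", ai)
    print("Template:   ", template)
    print(f"Extra replies per 100 emails: {extra:.1f}")
```

With only a handful of reps, a single week of data is noisy, so it is probably worth pooling several weeks before sharing the gap in standup.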

HubSpot's sales data (citing Mailshake) also found that only 5% of cold email senders currently personalize every email individually, while 51% rely on segment-based templates. That gap is the competitive advantage for teams that can do what most won't.

Step 6: Handle the "it doesn't sound like me" objection

This is the most common piece of feedback in any AI personalization rollout, and it's a fair one. AI output can sound generic if the prompts and snippets aren't customized well.

The right response isn't to defend the AI. It's to make the customization easy. Most platforms let reps build their own snippet variations, adjust tone settings, or add personal phrases to the prompt. Walk through this in training, and have each rep build one customized snippet during the session so they leave with something that already sounds like them.

For teams on Unify, custom AI research prompts let reps specify exactly what context they want surfaced. A rep who always mentions recent company news can set that as a default. One who focuses on tech stack can pull that in automatically. The AI adapts to rep preferences, which makes the output feel more theirs over time.

A 30-day SDR AI training plan

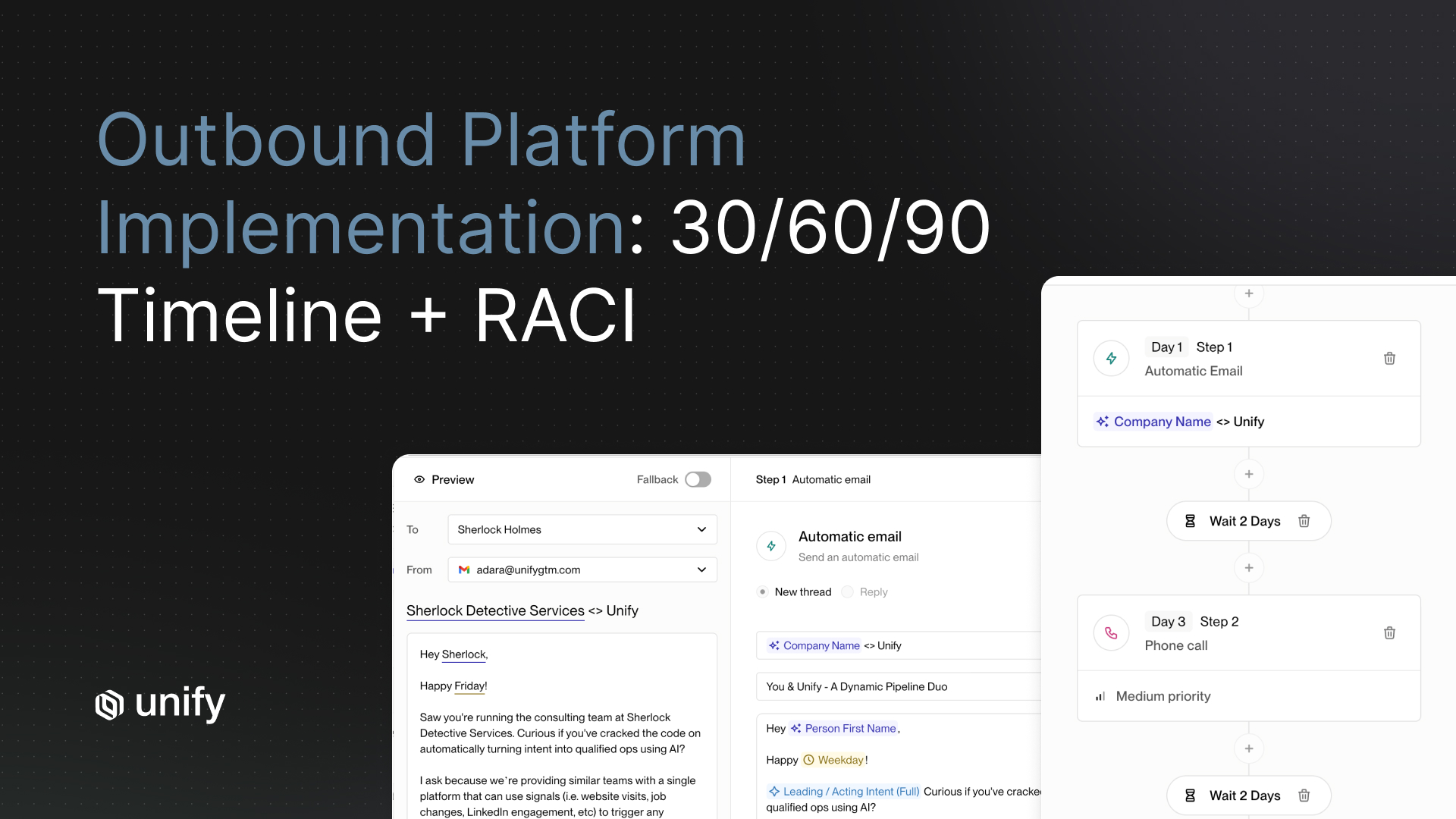

- Week 1 - Outcome-based kickoff: Why this matters, what the data shows, one live demo on your highest-volume play. No feature overload.

- Week 2 - Supervised ramp: Every rep sends at least 20 AI-assisted emails. Manager reviews output with each rep. Focus on the review workflow and snippet quality.

- Week 3 - Data review: Share reply rate comparisons between AI-assisted and template emails. Identify top performers. Have them share one thing they're doing that others aren't.

- Week 4 - Expansion: Introduce a second workflow or signal type. By now, the first workflow should be habitual. Build from there.

What good AI adoption actually looks like

Spellbook, a legal software company with 120+ employees, used Unify to roll out AI-assisted outbound for their seven-person BDR team. After adopting the platform, email open rates climbed to 70-80%, compared to 19-25% with their previous tool. The team generated $2.59M in pipeline and closed $250K in revenue directly attributed to Unify within seven months. Each rep also saved roughly two hours per day on manual prospecting work.

"Unify for Sales Reps now truly matches a BDR's role. Rather than jumping through three different tools just to get people sequenced, everything happens in one place." — Jay Meyers, Business Development Manager, Spellbook

That kind of adoption happens when the tool is fast, the output is good, and reps can see the results in their own numbers. The training plan gets you there by removing friction early and building the feedback loops that make quality compound over time.

If your team is evaluating AI personalization tools or trying to improve adoption with an existing stack, Unify is built to solve all three of the core failure modes: the tool brings signal context into the workflow, the review interface is designed for rep speed, and the AI improves from rep edits over time. Book a demo to see it in action.

Frequently asked questions

How long does it take for SDRs to get comfortable using AI personalization tools?

Most reps reach a comfortable baseline within two to three weeks, assuming the training plan focuses on one workflow at a time and includes regular feedback on output quality. The fastest path pairs a supervised ramp with visible performance data, so reps can see the tool working in their own metrics.

What's the biggest reason SDR teams stop using AI tools after rollout?

Workflow friction is the most common culprit. If the tool requires switching between multiple tabs, manually pulling in context, or doing significant editing on every draft, reps will default to their old habits within a few weeks. Tools that put signal context and AI drafts in the same view tend to hold adoption better over the long term.

How do I get reps to trust AI-generated email output?

Show them examples side by side. An AI email written without signal context looks generic. The same tool with strong signal context (a pricing page visit, a recent funding round, a new hire) produces output that looks like what a top rep would write. Once reps see the quality difference, the mental model shifts from "AI writes bad emails" to "AI translates good signals into good emails."

Should managers review AI-assisted emails before they send?

Not as a bottleneck, but as a coaching tool. In the first week or two, reviewing a sample of AI-assisted emails with each rep helps identify quality issues and personalization gaps early. After that, the review process should live with the rep, not the manager. The goal is a review interface that takes seconds, not a separate approval workflow.

Which metrics should I track to measure AI personalization adoption?

Reply rate and positive reply rate are the clearest signals. Compare reps using AI personalization consistently against those on templates. Meetings booked per week is the lagging indicator that eventually makes the case for any holdouts. Avoid measuring email volume alone as a proxy for adoption quality.

About the author: Austin Hughes is Co-Founder and CEO of Unify, the system-of-action for revenue that helps high-growth teams turn buying signals into pipeline. Before founding Unify, Austin led the growth team at Ramp, scaling it from 1 to 25+ people and building a product-led, experiment-driven GTM motion. Prior to Ramp, he worked at SoftBank Investment Advisers and Centerview Partners.
